Mysterious FrontCache

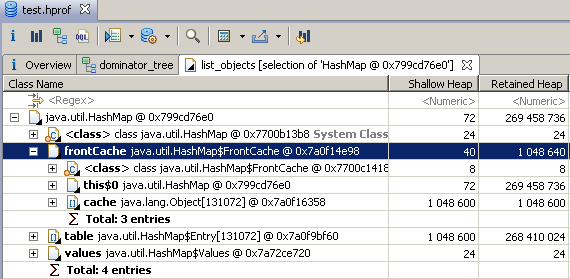

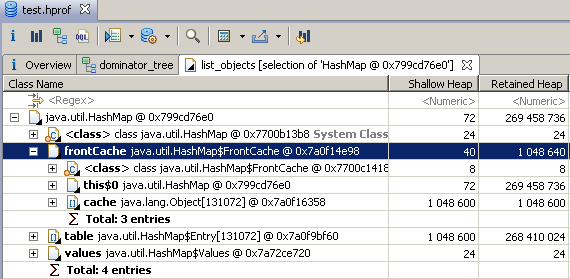

It all started with the fact that I once again picked a Java application memory dump in Eclipse Memory Analyzer and saw such an interesting thing:

I am familiar with the HashMap code quite well, but I never saw the nested FrontCache class there. Maybe with the latest JDK update they sent me an updated HashMap? I looked at the source, but the word "front" was not found there. It became interesting where this class comes from and what it does.

Having rummaged in the JRE (I have 1.7u10, but in the last 1.6 it is also there), I found a curious jarik: alt-rt.jar, in which HashMap $ FrontCache.class was found, as well as several other classes (LinkedHashMap, TreeMap, BigDecimal, BigInteger, MutableBigInteger and their nested classes). Usually these classes are connected from rt.jar. Why did they start loading from this mysterious jarik?

I recalled that I had recently experimented with options on a Java machine and, in particular, included -XX: + AggressiveOpts. On the website of Oracle about this key it is written sparingly:

On Habré there was an attempt to explain this option in more detail, supposedly, it is a combination of other keys. Having rummaged in OpenJDK source codes of the 7th version, I realized that business is not limited to keys. Here is what we see in hotspot / src / share / vm / runtime / arguments.cpp:

Yeah! With this option, alt-rt.jar is indeed added, among other things. You can verify this from your application using

But what is the difference in implementing a HashMap? I began to look for a modified source, but then I failed. It turned out that this jar is being built using jdk / make / altclasses / Makefile, and the source directory is designated as

This smelled not very good, and the jdk / make / common / Defs.gmk file confirmed my concerns:

Of course, the specified directory is not included. Just in case, I deflated JDK 8, but there the situation was no better. Oracle hides an alternative HashMap.

Having rummaged on the Internet, I ran into a project that encouraged me, but in vain. The main one says that there are source codes of classes from jre \ lib \ alt-rt.jar, in fact there is a standard implementation of HashMap and other classes. Apparently, the author did not understand that there are two options.

There is one way left - to disassemble the bytecode (javap -c -private) and read it like this. To make it easier, I disassembled the usual and alternative HashMap, a couple of regexpov threw out irrelevant things and compared diff'om. At first, everything looked pretty scary, but then I guessed that the code of the ordinary and alternative HashMap evolved independently, so you need to compare the alternative HashMap with a common ancestor, which turned out to be the HashMap from the latest updates of the 6th JDK. Here the picture became much clearer, it did not even require special tools for decompilation into Java code. HashMap really has a HashMap.FrontCache frontCache, which is initialized in the constructor. The FrontCache constructor accepts capacity - the number of elements in the main hash table. The following code has been added to the get (Object key) method:

The following has been added to the put (K key, V value) method:

There are technical changes in other methods, but the point is here. It is clear that frontCache is some kind of alternative (apparently faster) data storage. When an element is requested, it is first searched in frontCache, and when a new one is entered, it is entered in frontCache and in a regular hash table. What makes FrontCache class faster? Here's what the most important methods from it look like:

If the keys are not integers, then FrontCache is simply useless. However, the array is still allocated and checks are made during each operation with the HashMap.

In fact, even more memory is lost, since HashMap.Entry has a setValue method, which should now update the value in FrontCache if necessary. Therefore, a link to the HashMap itself has been added to each Entry, which can add up to 8 extra bytes per record.

The discovery somewhat shocked me. Recently, to the glory of Trove , we almost never use keys like Integer, so it turned out that optimization only eats time and memory in vain. In general, I decided that AggressiveOpts should be turned off. Of course, I did not understand what had changed in TreeMap and math classes (in LinkedHashMap, the changes were cosmetic, just related to the changes in HashMap.Entry to support FrontCache). Be careful and do not use options whose meaning you do not fully understand.

I am familiar with the HashMap code quite well, but I never saw the nested FrontCache class there. Maybe with the latest JDK update they sent me an updated HashMap? I looked at the source, but the word "front" was not found there. It became interesting where this class comes from and what it does.

Having rummaged in the JRE (I have 1.7u10, but in the last 1.6 it is also there), I found a curious jarik: alt-rt.jar, in which HashMap $ FrontCache.class was found, as well as several other classes (LinkedHashMap, TreeMap, BigDecimal, BigInteger, MutableBigInteger and their nested classes). Usually these classes are connected from rt.jar. Why did they start loading from this mysterious jarik?

I recalled that I had recently experimented with options on a Java machine and, in particular, included -XX: + AggressiveOpts. On the website of Oracle about this key it is written sparingly:

Turn on point performance compiler optimizations that are expected to be default in upcoming releases.

On Habré there was an attempt to explain this option in more detail, supposedly, it is a combination of other keys. Having rummaged in OpenJDK source codes of the 7th version, I realized that business is not limited to keys. Here is what we see in hotspot / src / share / vm / runtime / arguments.cpp:

jint Arguments::parse_vm_init_args(const JavaVMInitArgs* args) {

...

if (AggressiveOpts) {

// Insert alt-rt.jar between user-specified bootclasspath

// prefix and the default bootclasspath. os::set_boot_path()

// uses meta_index_dir as the default bootclasspath directory.

const char* altclasses_jar = "alt-rt.jar";

size_t altclasses_path_len = strlen(get_meta_index_dir()) + 1 +

strlen(altclasses_jar);

char* altclasses_path = NEW_C_HEAP_ARRAY(char, altclasses_path_len);

strcpy(altclasses_path, get_meta_index_dir());

strcat(altclasses_path, altclasses_jar);

scp.add_suffix_to_prefix(altclasses_path);

scp_assembly_required = true;

FREE_C_HEAP_ARRAY(char, altclasses_path);

}

Yeah! With this option, alt-rt.jar is indeed added, among other things. You can verify this from your application using

System.getProperty("sun.boot.class.path"). Thus, for the mentioned classes, when AggressiveOpts is enabled, the implementation changes. But what is the difference in implementing a HashMap? I began to look for a modified source, but then I failed. It turned out that this jar is being built using jdk / make / altclasses / Makefile, and the source directory is designated as

ALTCLASSES_SRCDIR = $(CLOSED_SRC)/share/altclassesThis smelled not very good, and the jdk / make / common / Defs.gmk file confirmed my concerns:

# Files that cannot be included in the OpenJDK distribution are

# collected under a parent directory which contains just those files.

ifndef CLOSED_SRC

CLOSED_SRC = $(BUILDDIR)/../src/closed

endifOf course, the specified directory is not included. Just in case, I deflated JDK 8, but there the situation was no better. Oracle hides an alternative HashMap.

Having rummaged on the Internet, I ran into a project that encouraged me, but in vain. The main one says that there are source codes of classes from jre \ lib \ alt-rt.jar, in fact there is a standard implementation of HashMap and other classes. Apparently, the author did not understand that there are two options.

There is one way left - to disassemble the bytecode (javap -c -private) and read it like this. To make it easier, I disassembled the usual and alternative HashMap, a couple of regexpov threw out irrelevant things and compared diff'om. At first, everything looked pretty scary, but then I guessed that the code of the ordinary and alternative HashMap evolved independently, so you need to compare the alternative HashMap with a common ancestor, which turned out to be the HashMap from the latest updates of the 6th JDK. Here the picture became much clearer, it did not even require special tools for decompilation into Java code. HashMap really has a HashMap.FrontCache frontCache, which is initialized in the constructor. The FrontCache constructor accepts capacity - the number of elements in the main hash table. The following code has been added to the get (Object key) method:

if(frontCache != null) {

V value = frontCache.get(key);

if(value != null) return value;

}The following has been added to the put (K key, V value) method:

if(frontCache != null) {

frontCache.put(key, value);

}There are technical changes in other methods, but the point is here. It is clear that frontCache is some kind of alternative (apparently faster) data storage. When an element is requested, it is first searched in frontCache, and when a new one is entered, it is entered in frontCache and in a regular hash table. What makes FrontCache class faster? Here's what the most important methods from it look like:

private class FrontCache {

private Object[] cache;

private int bitMask;

public FrontCache(int capacity) {

this.cache = new Object[capacity];

this.bitMask = makeBitMask(capacity);

}

public int makeBitMask(int capacity) {

return -1 << (32 - Integer.numberOfLeadingZeros(capacity - 1));

}

public boolean inRange(int value) {

return (value & bitMask) == 0;

}

public V get(Object key) {

if(key instanceof Integer) {

int intKey = ((Integer)key).intValue();

if(inRange(intKey)) {

return (V) cache[intKey];

}

}

return null;

}

private void put(K key, V value) {

if(key instanceof Integer) {

int intKey = ((Integer)key).intValue();

if(inRange(intKey)) {

cache[intKey] = value;

}

}

}

}If the keys are not integers, then FrontCache is simply useless. However, the array is still allocated and checks are made during each operation with the HashMap.

In fact, even more memory is lost, since HashMap.Entry has a setValue method, which should now update the value in FrontCache if necessary. Therefore, a link to the HashMap itself has been added to each Entry, which can add up to 8 extra bytes per record.

The discovery somewhat shocked me. Recently, to the glory of Trove , we almost never use keys like Integer, so it turned out that optimization only eats time and memory in vain. In general, I decided that AggressiveOpts should be turned off. Of course, I did not understand what had changed in TreeMap and math classes (in LinkedHashMap, the changes were cosmetic, just related to the changes in HashMap.Entry to support FrontCache). Be careful and do not use options whose meaning you do not fully understand.