Mobile OAuth 2.0 Security

Hello! I am Nikita Stupin, information security specialist at Mail.Ru Mail. Not so long ago, I conducted a study on mobile OAuth 2.0 vulnerabilities. To create a secure mobile OAuth 2.0 scheme, it’s not enough to implement the standard in its pure form and check redirect_uri. It is necessary to take into account the specifics of mobile applications and apply additional security mechanisms.

In this article I want to share what I learned about attacks on mobile OAuth 2.0, the ways to protect against them, and a secure implementation of this protocol. All the protection components that I discuss below are implemented in the latest SDK for Mail.Ru mobile clients.

The nature and function of OAuth 2.0

OAuth 2.0 is an authorization protocol that describes how a client service can securely access user resources on a service provider. In this case, OAuth 2.0 saves the user from having to enter a password outside the service provider: the whole process is reduced to clicking the "I agree to provide access to ..." button.

A provider in terms of OAuth 2.0 is a service that owns user data and, with the user's permission, provides third-party services (clients) with secure access to this data. The client is an application that wants to receive user data from the provider.

Some time after the release of the OAuth 2.0 protocol, ordinary developers adapted it for authentication, although initially it was not intended for this. Authentication shifts the attack vector from user data stored by the service provider to user accounts of the service client.

It did not stop at authentication. In the era of mobile applications and the pursuit of conversion, logging in to an application with a single button became very attractive, so developers moved OAuth 2.0 to mobile. Naturally, few people thought about security and the specifics of mobile applications: a quick implementation, and straight to production. However, OAuth 2.0 generally works poorly outside of web applications: the same problems are observed in both mobile and desktop applications.

Let's see how to make a secure mobile OAuth 2.0.

How does it work?

Remember that on mobile devices, the client may not be a browser, but a mobile application without a backend. Therefore, we face two major security issues of mobile OAuth 2.0:

- The client is not trusted.

- The behavior of the redirect from the browser to the mobile application depends on the settings and applications that the user has installed.

Mobile application is a public client

To understand the roots of the first problem, let's look at how OAuth 2.0 works in a server-to-server interaction, and then compare it with OAuth 2.0 in a client-to-server interaction.

In both cases, it all starts with the service client registering with the service provider and receiving client_id and, in some cases, client_secret. The value of client_id is public and is needed to identify the service client, as opposed to client_secret, whose value is private. The registration process is described in more detail in RFC 7591. The diagram below shows how OAuth 2.0 works in a server-to-server interaction.

The picture is taken from https://tools.ietf.org/html/rfc6749#section-1.2

There are 3 main steps of the OAuth 2.0 protocol:

- [Steps A-C] Get the Authorization Code (hereafter simply code).

- [Steps D-E] Exchange code for access_token.

- Get access to the resource with access_token.

Let's look at obtaining code in more detail:

- [Step A] The service client redirects the user to the service provider.

- [Step B] The service provider asks the user for permission to provide data to the service client (arrow B up). The user grants access to the data (arrow B to the right).

- [Step C] The service provider returns code to the user's browser, which redirects code to the service client.

Now let's look at obtaining access_token in more detail:

- [Step D] The client's server sends a request for access_token. The request includes code, client_secret and redirect_uri.

- [Step E] If code, client_secret and redirect_uri are valid, access_token is issued.

The request for access_token is performed server-to-server, so in general, to steal client_secret an attacker must compromise the server of the service client or the server of the service provider.

Now let's see how the OAuth 2.0 scheme looks on a mobile device without a backend (client-to-server interaction).

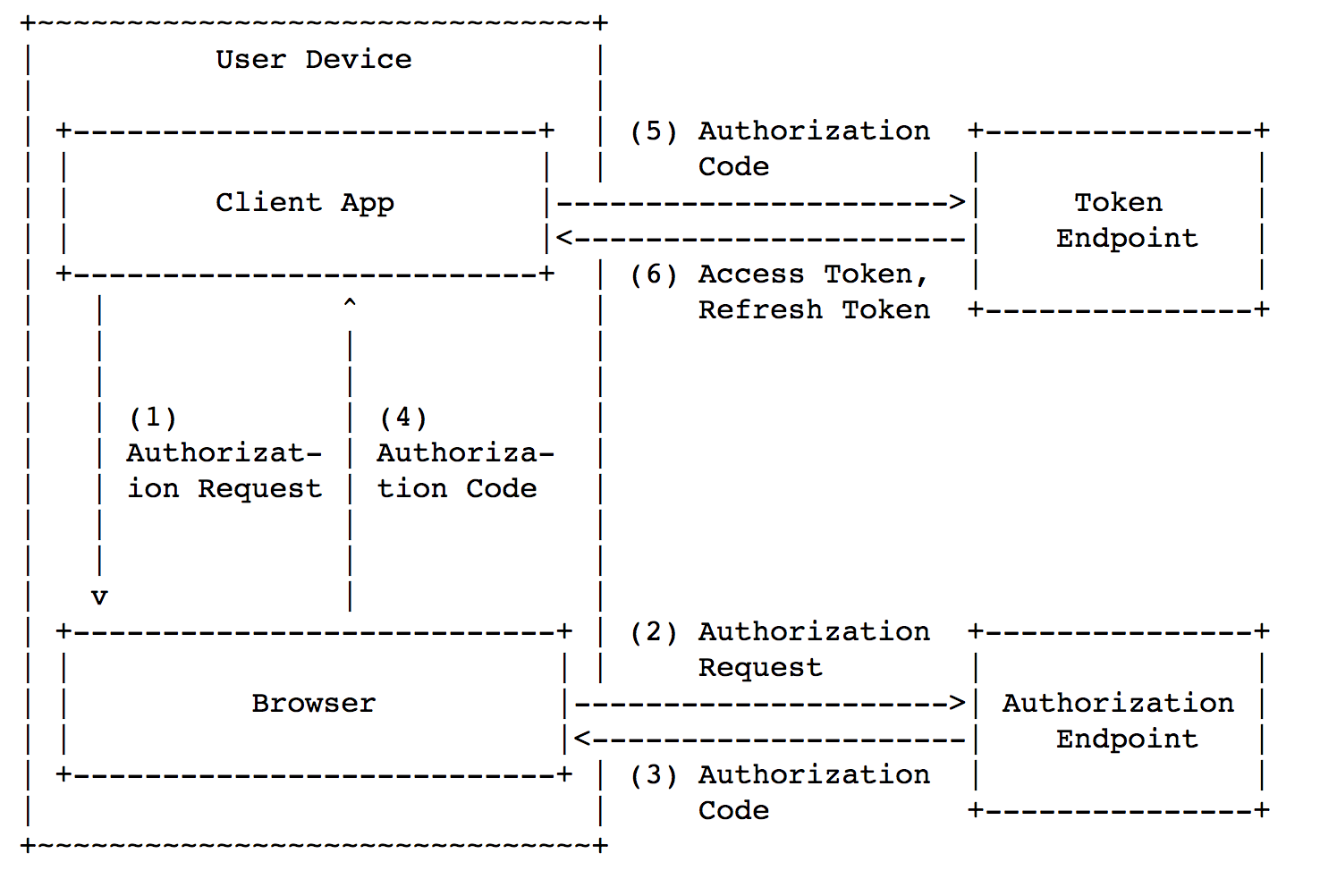

The picture is taken from https://tools.ietf.org/html/rfc8252#section-4.1

The general scheme is divided into the same 3 basic steps:

- [Steps 1-4 in the picture] Get code.

- [Steps 5-6 in the picture] Exchange code for access_token.

- Get access to the resource with access_token.

However, in this case the mobile application also plays the role of the server, which means that client_secret will be embedded in the application. As a result, on mobile devices it is impossible to keep client_secret secret from an attacker. There are two ways to extract a client_secret embedded in the application: sniff the traffic from the application to the server, or reverse-engineer the application. Both methods are easy to carry out, so client_secret is useless on mobile devices.

Regarding the client-to-server scheme, you might ask: “Why not get access_token right away?” It would seem that the extra step is unnecessary. Moreover, there is the Implicit Grant scheme, in which the client receives access_token immediately. Although it can be used in some cases, we will see below that the Implicit Grant scheme is not suitable for secure mobile OAuth 2.0.

Redirect on mobile devices

In general, the Custom URI Scheme and AppLink mechanisms are used to redirect from the browser to an application on mobile devices. Neither of these mechanisms in its pure form is as reliable as a browser redirect.

Custom URI Scheme (or deep link) is used as follows: the developer defines the application's scheme before the build. The scheme can be arbitrary, and several applications with the same scheme can be installed on one device. Everything is simple when each scheme on the device corresponds to exactly one application. But what if two applications on the same device have registered the same scheme? How does the operating system decide which of the two applications to open when the Custom URI Scheme is accessed? Android will show a dialog asking the user to choose the application in which to open the link. On iOS, the behavior is undefined, which means that either of the two applications can be opened. In both cases, the attacker has the opportunity to intercept code or access_token.

AppLink, in contrast to the Custom URI Scheme, allows you to open the desired application, but this mechanism has several disadvantages:

- Each service client must pass the verification procedure on its own.

- Android users can turn off AppLink for a specific application in the settings.

- Android below 6.0 and iOS below 9.0 do not support AppLink.

The above disadvantages of AppLink, firstly, raise the entry threshold for potential client services, and secondly, can lead to the fact that, under certain circumstances, the user will not have OAuth 2.0 working. This makes the AppLink mechanism unsuitable for replacing browser redirects in the OAuth 2.0 protocol.

Okay, what to attack?

Mobile OAuth 2.0 issues have generated specific attacks. Let's see what they are and how they work.

Authorization Code Interception Attack

Initial data: a legitimate application (OAuth 2.0 client) and a malicious application that registered the same scheme as the legitimate one are installed on the user's device. The figure below shows the attack pattern.

The picture is taken from https://tools.ietf.org/html/rfc7636#section-1

The problem is this: at step 4, the browser returns code to the application through the Custom URI Scheme, so code can be intercepted by the malware (because it registered the same scheme as the legitimate application). After that, the malware exchanges code for access_token and gets access to user data.

How to protect yourself? In some cases, you can use inter-process communication mechanisms; we will talk about them below. In the general case, you need to apply a scheme called Proof Key for Code Exchange. Its essence is shown in the diagram below.

Picture taken from https://tools.ietf.org/html/rfc7636#section-1.1

The request from the client contains several additional parameters: code_verifier, code_challenge (t(code_verifier) in the diagram), and code_challenge_method (t_m in the diagram).

code_verifier is a random number at least 256 bits long that is used only once. That is, for each request for code the client must generate a new code_verifier.

code_challenge_method is the name of the transformation function, most often SHA-256.

code_challenge is code_verifier with the code_challenge_method transformation applied, encoded as URL-safe Base64.

The transformation of code_verifier into code_challenge is needed to protect against attack vectors based on intercepting code_verifier (for example, from the device's system logs) when code is requested.

If the user's device does not support SHA-256, a downgrade to no transformation of code_verifier is allowed. In all other cases, you must use SHA-256.

The scheme works as follows:

- The client generates code_verifier and remembers it.

- The client chooses code_challenge_method and derives code_challenge from code_verifier.

- [Step A] The client requests code, adding code_challenge and code_challenge_method to the request.

- [Step B] The provider stores code_challenge and code_challenge_method on the server and returns code to the client.

- [Step C] The client requests access_token, adding code_verifier to the request.

- The provider computes code_challenge from the received code_verifier and compares it with the code_challenge it stored.

- [Step D] If the values match, the provider issues access_token to the client.
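The client-side part of these steps can be sketched in a few lines of Python. This is a minimal illustration rather than the implementation used in any particular SDK: the function names are made up for the example, and the S256 transformation is written as RFC 7636 defines it (URL-safe Base64 of the SHA-256 digest, with the trailing padding removed).

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    # 32 random bytes = 256 bits of entropy, as URL-safe Base64 without padding.
    raw = secrets.token_bytes(32)
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def make_code_challenge(code_verifier: str) -> str:
    # S256: BASE64URL(SHA256(ASCII(code_verifier))), padding stripped.
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verifier_matches(code_verifier: str, stored_challenge: str) -> bool:
    # Provider side: recompute the challenge and compare in constant time.
    return secrets.compare_digest(make_code_challenge(code_verifier), stored_challenge)
```

A new code_verifier should be generated for every request for code; only code_challenge and code_challenge_method travel with that first request.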

Let's see why code_challenge protects against the code interception attack. To do this, walk through the stages of obtaining access_token.

- First, the legitimate application requests code (code_challenge and code_challenge_method are sent with the request).

- The malware intercepts code (but not code_challenge, because code_challenge is not present in the response).

- The malware requests access_token (with a valid code, but without a valid code_verifier).

- The server notices the code_challenge mismatch and returns an error.
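This walk-through can be reproduced with a toy, in-memory provider. The sketch below is only a simulation under stated assumptions: ToyProvider, issue_code and redeem are hypothetical names, nothing here talks to a real server, and a failed attempt is deliberately left non-fatal so that the legitimate application can still redeem its code.

```python
import base64
import hashlib
import secrets


def s256(code_verifier: str) -> str:
    # Same transformation the client applies: BASE64URL(SHA256(verifier)), no padding.
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


class ToyProvider:
    """In-memory stand-in for the provider; not a real server."""

    def __init__(self) -> None:
        self._challenges: dict[str, str] = {}

    def issue_code(self, code_challenge: str) -> str:
        # The provider remembers code_challenge next to the code it hands out.
        code = secrets.token_urlsafe(32)
        self._challenges[code] = code_challenge
        return code

    def redeem(self, code: str, code_verifier: str) -> str:
        expected = self._challenges.get(code)
        if expected is None or not secrets.compare_digest(s256(code_verifier), expected):
            raise PermissionError("code_verifier does not match code_challenge")
        del self._challenges[code]  # the code is single-use
        return secrets.token_urlsafe(32)


provider = ToyProvider()
verifier = secrets.token_urlsafe(32)        # known only to the legitimate app
code = provider.issue_code(s256(verifier))  # the code may leak via the URI scheme

try:
    provider.redeem(code, secrets.token_urlsafe(32))  # malware guesses a verifier
    malware_succeeded = True
except PermissionError:
    malware_succeeded = False

access_token = provider.redeem(code, verifier)  # the legitimate app succeeds
```

The malware holds a valid code but cannot produce a code_verifier whose hash equals the stored code_challenge, so its request is rejected.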

Note that the attacker cannot guess code_verifier (256 random bits!) or find it somewhere in the logs (code_verifier is transmitted only once).

To put it in one sentence, code_challenge allows the service provider to answer the question: “Is access_token being requested by the same client application that requested code, or by a different one?”

OAuth 2.0 CSRF

On mobile devices, OAuth 2.0 is often used as an authentication mechanism. As we remember, authentication through OAuth 2.0 differs from authorization in that OAuth 2.0 vulnerabilities affect user data on the service client side rather than on the service provider side. As a result, a CSRF attack on OAuth 2.0 makes it possible to steal someone else's account.

Consider a CSRF attack on OAuth 2.0 using the example of the taxi client application and the provider.com provider. First, the attacker logs in to the attacker@provider.com account on his own device and obtains a code for taxi. Then the attacker interrupts the OAuth 2.0 process and generates a link:

com.taxi.app://oauth?
code=b57b236c9bcd2a61fcd627b69ae2d7a6eb5bc13f2dc25311348ee08df43bc0c4

Then the attacker sends the link to the victim, for example disguised as an email or SMS from the taxi administration. The victim follows the link, the taxi application opens on the victim's phone and receives access_token, and as a result the victim ends up in the attacker's taxi account. Unaware of the trick, the victim uses this account: takes rides, enters personal data, and so on.

Now the attacker can log in to the victim's taxi account at any time, because it is tied to attacker@provider.com. The CSRF attack on login made it possible to steal the account.

CSRF attacks are usually prevented with a CSRF token (also called state), and OAuth 2.0 is no exception. How to use the CSRF token:

- The client application generates a CSRF token and stores it on the user's mobile device.

- The client application includes the CSRF token in the request for code.

- The server returns the same CSRF token in the response along with code.

- The client application compares the received CSRF token with the saved one. If the values match, the process continues.

Requirements for the CSRF token: a nonce at least 256 bits long, obtained from a good source of pseudo-random sequences.
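The generate-and-compare steps can be sketched as follows. This is an illustration under assumptions: the parameter name anti_csrf is borrowed from the request examples later in this article (the standard OAuth 2.0 name is state), the function names are invented for the example, and where the token is stored on the device is left out.

```python
import secrets
from urllib.parse import parse_qs, urlsplit


def new_csrf_token() -> str:
    # 32 bytes = 256 bits from the OS CSPRNG, hex-encoded.
    return secrets.token_hex(32)


def redirect_is_ours(redirect_url: str, saved_token: str) -> bool:
    # Accept the redirect only if it echoes exactly the token we stored.
    params = parse_qs(urlsplit(redirect_url).query)
    returned = params.get("anti_csrf", [""])[0]
    return secrets.compare_digest(returned.encode("utf-8"), saved_token.encode("utf-8"))
```

With this check in place, the attacker's link from the taxi example is rejected: it either carries no token at all or carries a token that the victim's application never generated.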

In short, the CSRF token allows the client application to answer the question: “Was it me who started obtaining access_token, or is someone trying to deceive me?”

Malware pretending to be a legitimate client

Some malware can mimic legitimate applications and raise the consent screen on their behalf (the consent screen is the screen on which the user sees: “I agree to give access to ...”). An inattentive user can click “allow”, and as a result, the malware gets access to user data.

Android and iOS provide mechanisms for mutual verification of applications. The provider application can verify the legitimacy of the client application, and vice versa.

Unfortunately, if OAuth 2.0 uses a flow through the browser, you cannot defend against this attack.

Other attacks

We looked at attacks that are specific to mobile OAuth 2.0. But do not forget about attacks on regular OAuth 2.0: redirect_uri substitution, interception of traffic over an unprotected connection, and so on. You can read more about them in the sources listed in the “What to read” section.

What to do?

We learned how the OAuth 2.0 protocol works, and figured out what vulnerabilities exist in the implementations of this protocol on mobile devices. Let's now assemble the secure OAuth 2.0 mobile schema out of individual pieces.

Good, bad OAuth 2.0

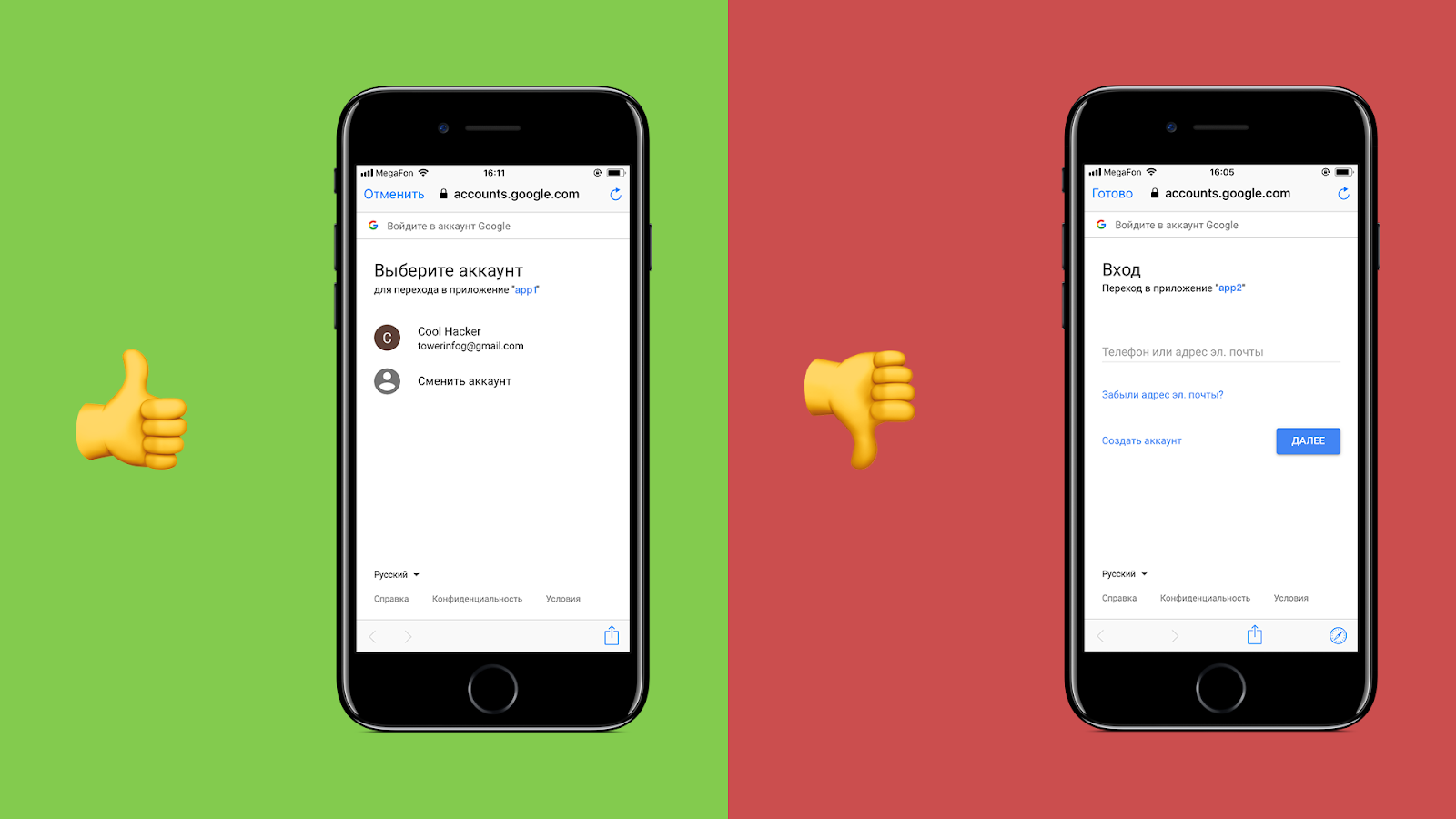

Let's start with how to open the consent screen. On mobile devices, there are two ways to open a web page from a native application (examples of native applications: Mail.Ru Mail, VK, Facebook).

The first method is called Browser Custom Tab (on the left in the picture). Note: Browser Custom Tab is called Chrome Custom Tab on Android and SFSafariViewController on iOS. In essence, it is an ordinary browser tab displayed directly inside the application, i.e. there is no visual switching between applications.

The second method is to open a WebView (on the right in the picture). As applied to mobile OAuth 2.0, I consider it bad.

WebView is a standalone browser for the native application.

“Standalone browser” means that WebView has no access to the cookies, storage, cache, history and other data of the Safari and Chrome browsers. The converse is also true: Safari and Chrome cannot access WebView data.

“Browser for a native application” means that the native application that opened the WebView has full access to its cookies, storage, cache, history and other WebView data.

Now imagine: the user presses the “log in using ...” button, and the WebView of a malicious application asks for the login and password of the service provider account.

This is a failure on all fronts at once:

- The user enters the username and password of the service provider account in an application that can easily steal this data.

- OAuth 2.0 was originally designed so that the user does not have to enter the service provider login and password.

- The user gets used to entering the username and password anywhere, which increases the likelihood of phishing.

Given all these arguments against WebView, the conclusion suggests itself: use the Browser Custom Tab for the consent screen.

If any of you have arguments in favor of WebView instead of Browser Custom Tab, write about it in the comments, I will be very grateful.

Secure Mobile OAuth 2.0 Schema

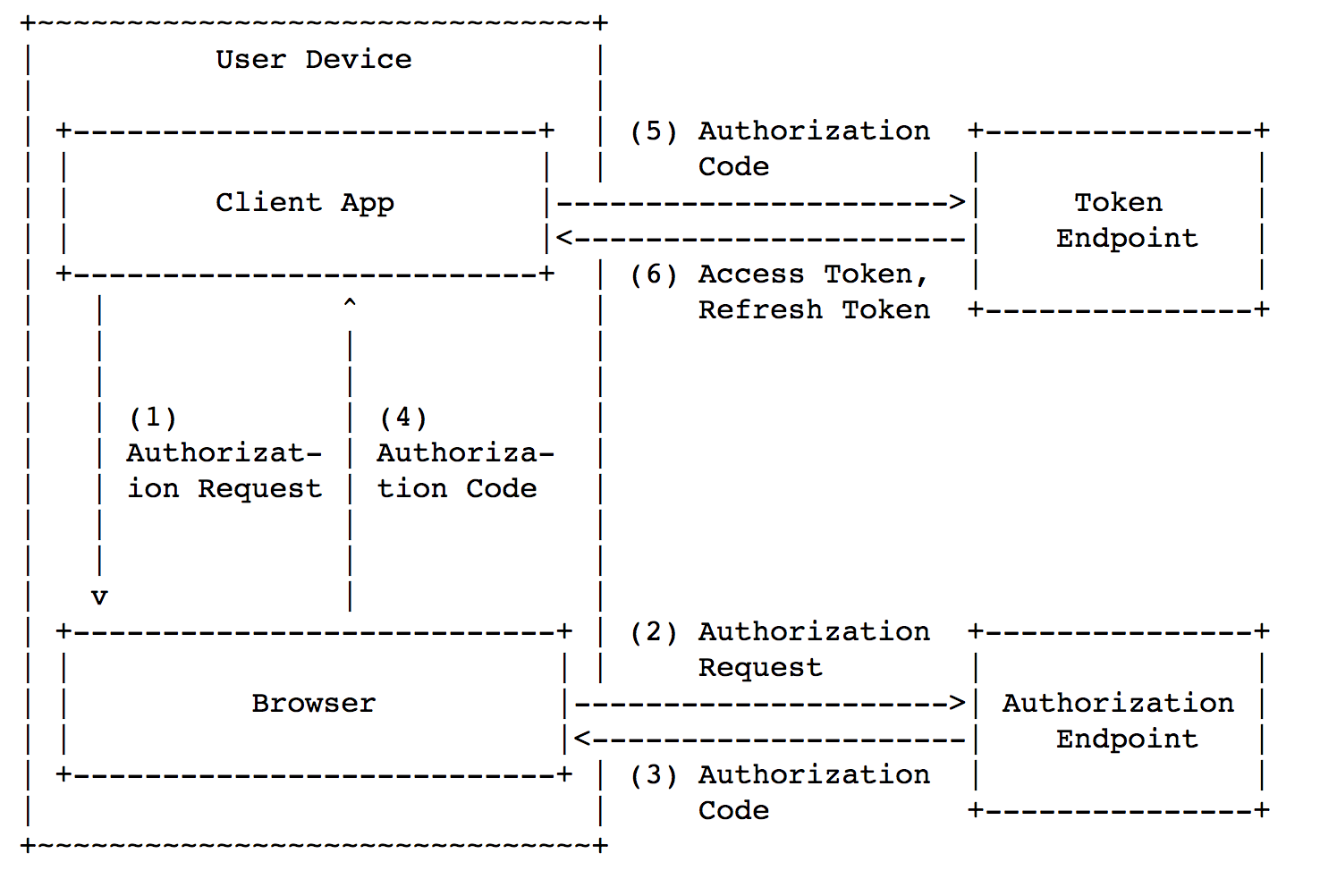

We will use the Authorization Code Grant scheme, because it allows us to add code_challenge and protect against code interception attacks.

The picture is taken from https://tools.ietf.org/html/rfc8252#section-4.1

The request for code (steps 1-2) will look like this:

https://o2.mail.ru/code?
redirect_uri=com.mail.cloud.app%3A%2F%2Foauth&
anti_csrf=927489cb2fcdb32e302713f6a720397868b71dd2128c734181983f367d622c24&
code_challenge=ZjYxNzQ4ZjI4YjdkNWRmZjg4MWQ1N2FkZjQzNGVkODE1YTRhNjViNjJjMGY5MGJjNzdiOGEzMDU2ZjE3NGFiYw%3D%3D&
code_challenge_method=S256&
scope=email%2Cid&
response_type=code&
client_id=984a644ec3b56d32b0404777e1eb73390c

In step 3, the browser receives a response with the redirect:

com.mail.cloud.app://oauth?
code=b57b236c9bcd2a61fcd627b69ae2d7a6eb5bc13f2dc25311348ee08df43bc0c4&
anti_csrf=927489cb2fcdb32e302713f6a720397868b71dd2128c734181983f367d622c24

In step 4, the browser opens the Custom URI Scheme and passes code and the CSRF token to the client application.

The request for access_token (step 5):

https://o2.mail.ru/token?
code_verifier=e61748f28b7d5daf881d571df434ed815a4a65b62c0f90bc77b8a3056f174abc&
code=b57b236c9bcd2a61fcd627b69ae2d7a6eb5bc13f2dc25311348ee08df43bc0c4&
client_id=984a644ec3b56d32b0404777e1eb73390c

At the last step, the response is returned with access_token.

In general, the above scheme is safe, but there are also special cases in which OAuth 2.0 can be made simpler and a bit safer.
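For illustration, the request for code and the handling of the redirect shown in this section could be assembled on the client roughly like this. It is a sketch under assumptions: the endpoint and parameter names are copied from the examples in this section, while build_code_request and parse_redirect are hypothetical helper names and not part of any SDK.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

AUTHORIZE_ENDPOINT = "https://o2.mail.ru/code"  # taken from the example in this section


def build_code_request(client_id: str, redirect_uri: str, scope: str,
                       anti_csrf: str, code_challenge: str) -> str:
    # urlencode percent-encodes every value, e.g. "://" becomes "%3A%2F%2F".
    query = urlencode({
        "redirect_uri": redirect_uri,
        "anti_csrf": anti_csrf,
        "code_challenge": code_challenge,
        "code_challenge_method": "S256",
        "scope": scope,
        "response_type": "code",
        "client_id": client_id,
    })
    return f"{AUTHORIZE_ENDPOINT}?{query}"


def parse_redirect(redirect_url: str) -> dict[str, str]:
    # Flatten the single-valued query parameters of the custom-scheme redirect.
    query = urlsplit(redirect_url).query
    return {name: values[0] for name, values in parse_qs(query).items()}
```

The application would then compare the returned anti_csrf value with the stored one before sending code and code_verifier to the token endpoint.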

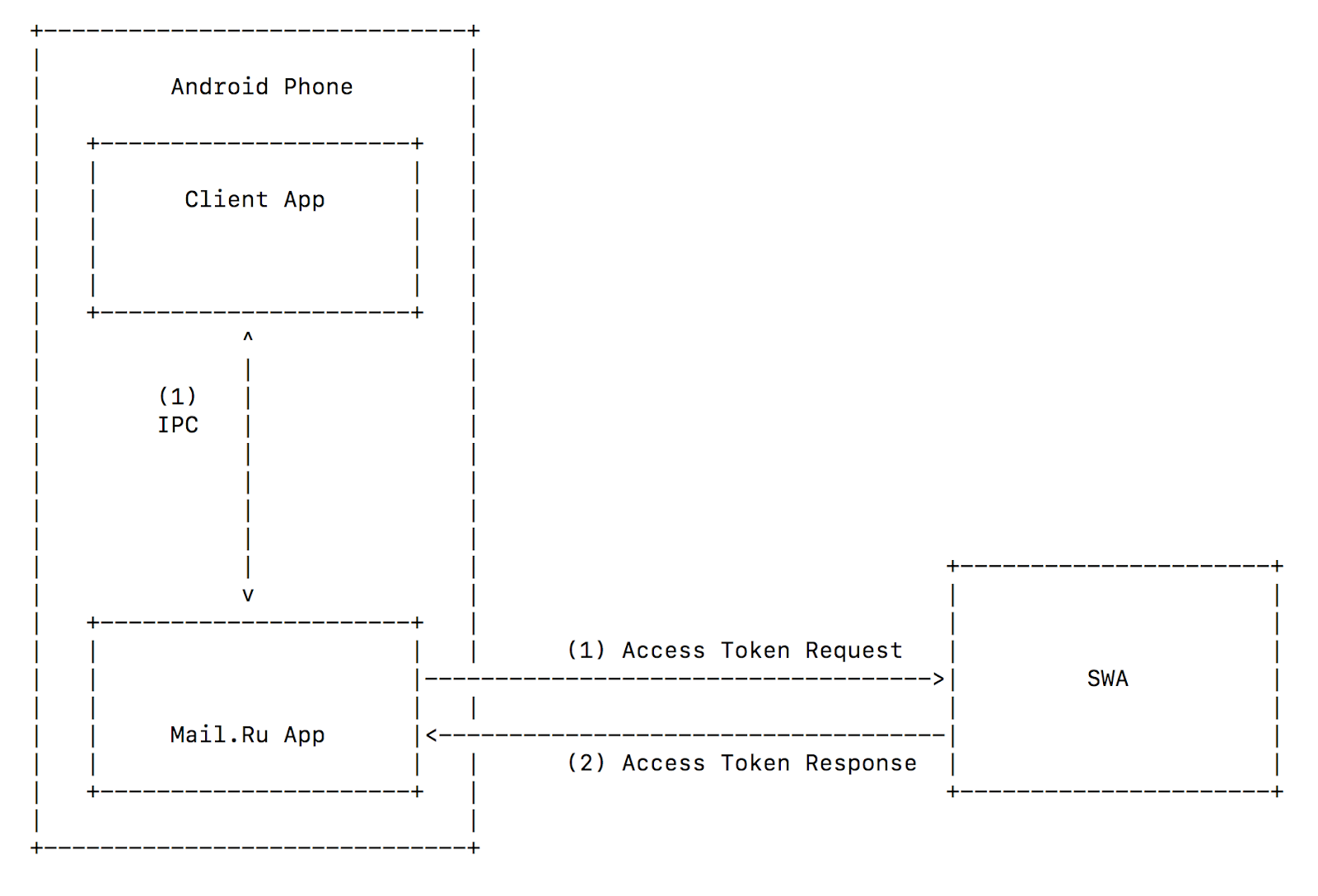

Android IPC

In Android, there is a mechanism for two-way communication between processes: IPC (inter-process communication). IPC is preferable to Custom URI Scheme for two reasons:

- An application that opens an IPC channel can verify the authenticity of the application it opens by its certificate. The reverse is also true: the opened application can verify the authenticity of the application that opened it.

- By sending a request via the IPC channel, the sender can receive the response through the same channel. Together with mutual verification (item 1), this means that no third-party process can intercept access_token.

Thus, we can use Implicit Grant and significantly simplify the mobile OAuth 2.0 scheme: no code_challenge and no CSRF tokens. Moreover, we can protect against malware that imitates legitimate clients in order to steal user accounts.

Client SDK

In addition to implementing the secure mobile OAuth 2.0 scheme outlined above, the provider should develop an SDK for its clients. This will facilitate client-side OAuth 2.0 implementation and at the same time reduce the number of errors and vulnerabilities.

Draw conclusions

For OAuth 2.0 providers, I have compiled a checklist for secure mobile OAuth 2.0:

- A solid foundation is vital. In the case of mobile OAuth 2.0, the foundation is the scheme or protocol we choose to implement. When implementing your own OAuth 2.0 scheme, it is easy to make a mistake. Others have already learned the hard way and drawn conclusions; there is nothing wrong with learning from their mistakes and making a secure implementation right away. In general, the most secure mobile OAuth 2.0 scheme is the one in the “What to do?” section.

- Store access_token and other sensitive data in Keychain on iOS and in Internal Storage on Android. These stores are designed specifically for such purposes. If necessary, you can use an Android Content Provider, but it must be configured securely.

- code must be single-use, with a short lifetime.

- To protect against code interception, use code_challenge.

- To protect against a CSRF attack on login, use CSRF tokens.

- Do not use WebView for the consent screen; use the Browser Custom Tab.

- client_secret is useless if it is not stored on a backend. Do not issue it to public clients.

- Use HTTPS everywhere, with downgrade to HTTP prohibited.

- Follow the cryptographic recommendations (cipher selection, token length, etc.) from the standards. You may copy them and figure out why they were chosen that way, but do not invent your own cryptography.

- On the client side, check whom you are opening for OAuth 2.0, and on the provider side, check who is opening you for OAuth 2.0.

- Remember the usual OAuth 2.0 vulnerabilities. Mobile OAuth 2.0 extends and complements regular OAuth 2.0, so the exact-match check of redirect_uri and the other recommendations for regular OAuth 2.0 still apply.

- Be sure to provide clients with an SDK. The client will have fewer errors and vulnerabilities in its code, and it will be easier for it to integrate your OAuth 2.0.
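As an illustration of the checklist item about single-use, short-lived code, a provider-side store could look like the sketch below. It is a toy, in-memory example under assumptions: CodeStore is a hypothetical name, the 60-second lifetime is an arbitrary choice, and a real provider would need the removal to be atomic across all of its processes, since otherwise two simultaneous requests could redeem the same code.

```python
import secrets
import time


class CodeStore:
    """Toy in-memory store for single-use, short-lived authorization codes."""

    def __init__(self, ttl_seconds: float = 60.0) -> None:
        self._ttl = ttl_seconds
        self._expires_at: dict[str, float] = {}

    def issue(self) -> str:
        # 256 random bits, hex-encoded, with an expiry timestamp attached.
        code = secrets.token_hex(32)
        self._expires_at[code] = time.monotonic() + self._ttl
        return code

    def consume(self, code: str) -> bool:
        # pop() removes the code on first use, so a replay finds nothing.
        expires_at = self._expires_at.pop(code, None)
        return expires_at is not None and time.monotonic() < expires_at
```

The first call to consume returns True for a fresh code; any repeated or late attempt returns False.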

What to read

- [RFC] OAuth 2.0 for Native Apps https://tools.ietf.org/html/rfc8252

- Google OAuth 2.0 for Mobile & Desktop Apps https://developers.google.com/identity/protocols/OAuth2InstalledApp

- [RFC] Proof Key for Code Exchange by OAuth Public Clients https://tools.ietf.org/html/rfc7636

- OAuth 2.0 Race Condition https://hackerone.com/reports/55140

- [RFC] OAuth 2.0 Threat Model and Security Considerations https://tools.ietf.org/html/rfc6819

- Attacks on regular OAuth 2.0 https://sakurity.com/oauth

- [RFC] OAuth 2.0 Dynamic Client Registration Protocol https://tools.ietf.org/html/rfc7591

Thanks

Thanks to everyone who helped write this article, especially Sergey Belov, Andrey Sumin, Andrey Labunts ( @isciurus ) and Darya Yakovleva.