Automatic Photo Studio, Part 1

A year and a half ago, I was browsing the blog of a successful Russian portrait photographer with a recognizable style, and a thought crept into my head: why not put a camera on a tripod, set up the studio lights once, fix all the camera settings, and process the photos automatically with a given profile? The photos in the blog were great, but very similar to one another.

Since I am one of those people who cannot take pictures with a phone and who like cameras, I really liked the idea. Yes, I had seen all sorts of photo booths and photo stands, but their developers have not even managed to get decent colors. I decided that this was because the developers did not understand photography.

To keep this idea from ending up like my others (which either never got off the ground or stalled at an early stage), I decided that the most important thing was to make everything work as a whole, rather than polish any single component to a shine. And since I have very little development time (1-2 hours of energy at most after my full-time job, and a little more on weekends), I tried not to learn anything new and to make the most of what I already knew.

In this article I want to describe the problems I ran into along the way and how I solved them.

A short note on the shooting conditions and equipment: I considered only cameras with at least an APS-C sensor and professional studio flashes. This is the only way to guarantee high image quality at any time of day or night.

People come in different heights

The first thing I was surprised to discover once I put the camera on a tripod is that it is not so easy to fit a person into the frame, let alone get a good composition. If the framing is set up for a specific person standing at a specific point, it falls apart as soon as someone moves toward or away from the camera. Yes, you can put a chair there and tell people to sit on it, but that is not very interesting. You can also crop the photos, but then the quality suffers badly. The last option, the one I chose, is to make the camera aim automatically.

There are two options here as well. The correct one keeps the optical axis horizontal and moves the camera up and down. The one that is easier to implement adjusts the camera position by tilting it. In that case there will be perspective distortion, but it can be corrected quite well during processing if you record the camera angle.

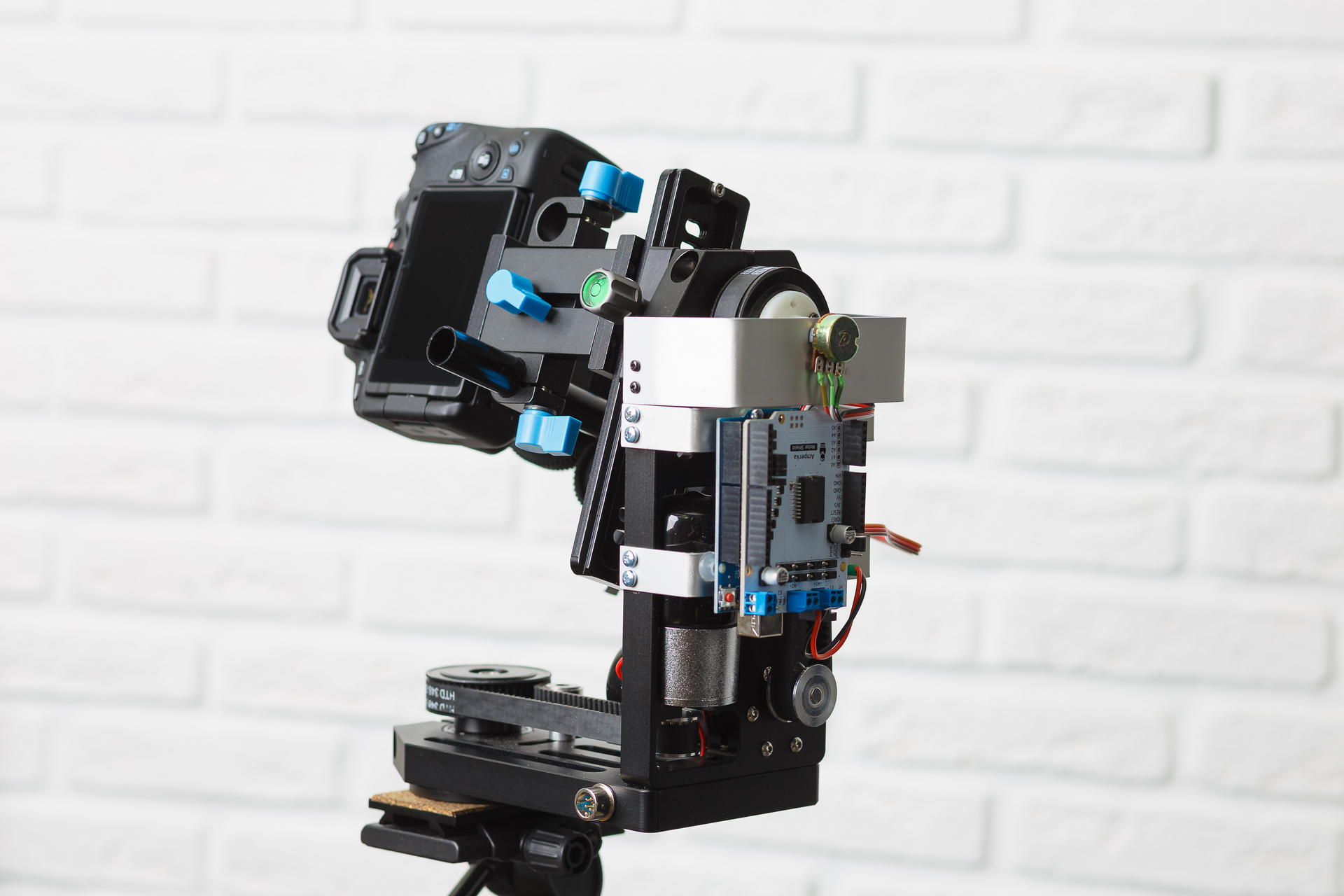
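The tilt correction described above can be sketched as a pure-rotation homography. This is only an illustration under assumptions that are not in the original text: a pinhole camera with no lens distortion, a known focal length in pixels, image rows growing downward, and a positive angle meaning the camera is pitched up. The function names are hypothetical.

```python
import math


def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def tilt_homography(focal_px, cx, cy, tilt_deg):
    """Homography mapping pixels of a tilted image to a level-camera image.

    Assumes a pinhole model: H = K * Rx(tilt) * K^-1, with image y pointing
    down and a positive tilt_deg meaning the camera is pitched up.
    """
    t = math.radians(tilt_deg)
    c, s = math.cos(t), math.sin(t)
    k = [[focal_px, 0.0, cx], [0.0, focal_px, cy], [0.0, 0.0, 1.0]]
    k_inv = [[1.0 / focal_px, 0.0, -cx / focal_px],
             [0.0, 1.0 / focal_px, -cy / focal_px],
             [0.0, 0.0, 1.0]]
    rot_x = [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
    return matmul3(matmul3(k, rot_x), k_inv)


def apply_homography(h, x, y):
    """Map one pixel (x, y) through the homography h."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)
```

The resulting matrix can be converted to a NumPy array and passed to OpenCV's `cv2.warpPerspective` to resample the whole image.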

Since I had practically no experience building hardware of any kind, I tried to find something as close to ready-made as possible. I found several panoramic heads for around $1000; all of them allowed manual control of tilt and pan, as well as automatic panorama shooting, but none could be controlled from a computer. There are also quite a few devices for controlling video cameras, for example when shooting from camera cranes. Good devices that hint at digital control are very expensive, and it is completely unclear whether any API is available. In the end, I found this device on a popular site:

It has no electronics at all. A year ago, only the version with brushed DC motors (with integrated gearboxes) was available, and that is the one I bought. I needed to control this thing from a computer somehow. On my institute's forum, people suggested that the most affordable way was to use an Arduino, so that is what I did. I also bought a motor shield, since the motors run on 12 volts. When I first tried to switch it on, I felt all the pain that brushed motors can inflict on a person: not only is it impossible to rotate them to a given angle, even just "turning a little" is not easy.

My first thought was to put a stepper motor in. I spent a very long time looking for a stepper motor that would fit into this platform in place of the original, but could not find one. Then I started thinking about how to attach a servo; I even bought one, but could not come up with anything reliable. The next idea was to mount an accelerometer on the platform and gradually rotate the platform to a predetermined angle. I attached an accelerometer with a gyroscope and a compass, but it was very glitchy, and I abandoned this idea too (a month later I realized that the glitches were caused by the Chinese power supply for the camera, which produced considerable interference).

And then I happened to read about how a servo works inside. I liked the idea of attaching a variable resistor to measure the angle, but I had to connect it to a pulley somehow. I had to learn FreeCAD and use 3D printing for the first time in my life. In short, after some work with a file, everything fit together.

I had to struggle with the Arduino program that sets a given angle, because the camera on the platform has a large moment of inertia and does not stop immediately. But in the end I managed to set the angle with an accuracy of about 1 degree.
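One common way to tame a geared DC motor that overshoots is to slow down near the target and stop inside a small deadband. The sketch below shows that idea in Python for readability. It is not the Arduino program from this project, and the function name, thresholds, and PWM values are made-up assumptions; only the 1 degree deadband echoes the accuracy mentioned above.

```python
import math


def motor_command(target_deg, measured_deg, deadband_deg=1.0,
                  slow_zone_deg=15.0, min_pwm=60, max_pwm=255):
    """Return a signed PWM value for a geared DC motor with angle feedback.

    measured_deg would come from the variable resistor on the pulley.
    All thresholds are illustrative assumptions, not measured values.
    """
    error = target_deg - measured_deg
    if abs(error) <= deadband_deg:
        return 0  # close enough: stop and let the platform settle
    # Ramp the speed down linearly inside the slow zone to limit overshoot.
    scale = min(abs(error) / slow_zone_deg, 1.0)
    pwm = min_pwm + (max_pwm - min_pwm) * scale
    return int(math.copysign(round(pwm), error))
```

Calling this in a loop with fresh angle readings drives the platform quickly when it is far from the target and gently when it is close, which leaves less momentum to carry it past the setpoint.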

Now, about automatic aiming. The idea is simple: keep the face at the top of the frame. So you just need to find the face in every liveview frame and adjust the platform. I knew nothing about face detection, so I followed a tutorial that used Haar features (Haar cascades). I found that this method does not work well for faces: on each frame it finds a lot of garbage besides what is needed, and it eats a lot of CPU time. Then I found another example showing how to use neural networks through OpenCV. Neural networks work just great! But I was happy only until I started processing photos in parallel, at which point Linux began dividing CPU time unpredictably between the platform control thread and the photo processing processes. I took the path of least resistance and moved face detection to the video card. Everything started working perfectly.
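Once a face has been found, turning its position into a platform adjustment is plain geometry. Here is a minimal sketch, assuming a pinhole camera, a known vertical field of view, and a target position for the top of the face expressed as a fraction of the frame height. The function name and both of those parameters are hypothetical, not taken from the actual program.

```python
import math


def tilt_correction_deg(face_top_y, frame_height, vertical_fov_deg,
                        desired_top_fraction=0.1):
    """Angle to tilt the camera so the top of the face lands at the desired row.

    A positive result means "tilt up". Pixel rows grow downward. Assumes a
    pinhole camera; vertical_fov_deg and desired_top_fraction are assumptions.
    """
    cy = frame_height / 2.0
    focal_px = cy / math.tan(math.radians(vertical_fov_deg) / 2.0)
    desired_y = desired_top_fraction * frame_height

    def elevation(y):
        # Angle of a pixel row above the optical axis, in radians.
        return math.atan((cy - y) / focal_px)

    return math.degrees(elevation(face_top_y) - elevation(desired_y))
```

If the face is too high in the frame the result is positive (tilt up so the face moves down); if it is too low, the result is negative.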

Although I did not want to dig into the details, I did run a small test. I also bought an Intel Neural Compute Stick 2 and tried running the network on it instead of the video card. My results were roughly as follows (the numbers are the processing time for one 800x533 image):

- Core i5 9400F - 59

- Core i7 7500U - 108

- Core i7 3770 - 110

- GeForce GTX 1060 6Gb - 154

- GeForce GTX 1050 2Gb - 199

- Core i7 3770, Ubuntu 18.04 with OpenCV from OpenVINO - 67

- Intel Neural Compute Stick 2, Ubuntu 18.04 with OpenCV from OpenVINO - 349

It turned out that images with a smaller side of 300 pixels are enough to reliably find the face of a person standing at full height in the frame, and such images are processed faster. I currently use the GeForce GTX 1050. I am sure this can be improved a lot, but for now there is a much more serious problem.
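Shrinking a frame so that its smaller side is 300 pixels, and mapping a detected box back to the original frame, can be sketched like this. The helper names are hypothetical, and the box format `(x, y, w, h)` is an assumption.

```python
def detector_input_size(width, height, short_side=300):
    """Scale (width, height) so that the smaller side equals short_side."""
    scale = short_side / min(width, height)
    return round(width * scale), round(height * scale)


def box_to_full_res(box, small_size, full_size):
    """Map an (x, y, w, h) box found on the small image back to full size."""
    sx = full_size[0] / small_size[0]
    sy = full_size[1] / small_size[1]
    x, y, w, h = box
    return round(x * sx), round(y * sy), round(w * sx), round(h * sy)
```

For the 800x533 test image used in the benchmark, `detector_input_size(800, 533)` gives a 450x300 input for the detector.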

Exposure

It is no secret that a photograph must be correctly exposed. In my case this is even more important, since there is no retouching. To make skin imperfections less noticeable, the photo should be as bright as possible: on the verge of overexposure, but not over it.

The brightness of the final picture when shooting with a flash depends on the following parameters:

- Flash power

- The distance from the flash to the subject

- Aperture

- ISO value

- RAW conversion settings

Once the frame has been taken, we can change only the last parameter. But changing it over a wide range is not a good idea: a large positive exposure correction of a dark frame brings out noise, and in the opposite case bright areas may already be clipped.
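To see how the first four parameters trade off against each other, here is a small sketch of the textbook relation for an idealized point light source with no reflections (inverse-square falloff with distance). That idealization is an assumption; a real studio with walls, a ceiling, and a softbox will deviate from it, especially in the distance term. The function name is hypothetical.

```python
import math


def ev_delta(power_ratio=1.0, distance_ratio=1.0,
             f_number_ratio=1.0, iso_ratio=1.0):
    """Change in exposure, in EV (stops), when parameters change by given ratios.

    Each ratio is new_value / old_value. Idealized point-source model:
    exposure ~ power * ISO / (f_number**2 * distance**2).
    """
    return (math.log2(power_ratio) + math.log2(iso_ratio)
            - 2.0 * math.log2(f_number_ratio)
            - 2.0 * math.log2(distance_ratio))
```

In this model, doubling the flash power adds one stop, while doubling the distance from the flash to the subject costs two stops.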

The TTL (Through The Lens) system is used to automatically determine exposure during flash shooting. It works as follows:

- The flash makes a series of small flashes.

- At this time, the camera measures the exposure, focuses and measures the distance to the focus subject.

- Based on these data, it calculates the required flash output.

- The flash fires again while the shutter is open, and the picture is taken.

This system works great when you can manually adjust the pictures after shooting. But for producing a finished result directly, it works unsatisfactorily. For the record, I tried it with a Profoto flash that costs more than 100,000 rubles.

My conditions are well known: the flashes always stand in the same place. So the exposure can simply be calculated from the person's position in space. That raises the question: how do you determine the person's position?

The first idea was simply to take the distance to the focused subject from EXIF, apply a large exposure correction to the first frame in the RAW converter, and adjust the flash power or aperture for the next one. It is very likely that a person will take many shots while standing in one place. But it turned out that the distance in EXIF is recorded very coarsely: the farther the subject, the larger the step. Moreover, the distance takes different sets of values for different lenses, and some lenses do not measure it at all.

The next idea was to use an ultrasonic rangefinder. This device measures distance quite accurately, but only up to one meter, and only if the person is not wearing something that absorbs sound waves. If you mount the rangefinder on a servo and sweep it like a radar, things get a little better: it measures up to 1.5 meters, which is still far too little (people look best when photographed from about 2 meters away).

Of course, I knew that even inexpensive phones already build depth maps and blur the background selectively. But I did not want to get into that. Unfortunately, there was no choice. At first I wanted to buy two webcams, combine them, and compute a disparity map with OpenCV. Fortunately, I found plenty of depth cameras that already do this internally. I settled on the Intel D435. (If you want to buy one: it is not supported by the mainline Linux kernel. There are patches for Debian and Ubuntu in the librealsense repository; I had to adapt them for Fedora.)

As soon as I had connected everything, I wrote a test program that measures the distance to a small square in the center of the frame. That code is still in use, and it works pretty well. Of course, I should really look for the face in the picture from the RGB camera and calculate the distance from the flash to that face. But those are plans for the future.
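Measuring the distance to a small central square can be sketched as follows. The assumptions here are not from the original program: the depth frame is a 2D grid of raw integer readings, zero marks a pixel with no depth data, one raw unit equals 1 mm (`depth_scale=0.001`), and the median is used to ignore outliers. The function name is hypothetical.

```python
from statistics import median


def center_distance_m(depth, square=3, depth_scale=0.001):
    """Median distance (in meters) over a square patch at the frame center.

    depth is a 2D grid (list of rows, or any array indexable as depth[r][c])
    of raw depth readings; zeros are treated as "no data" and skipped.
    Returns None when the whole patch has no valid readings.
    """
    rows, cols = len(depth), len(depth[0])
    r0 = (rows - square) // 2
    c0 = (cols - square) // 2
    samples = [depth[r][c]
               for r in range(r0, r0 + square)
               for c in range(c0, c0 + square)
               if depth[r][c] > 0]
    if not samples:
        return None
    return median(samples) * depth_scale
```

Using a median rather than a mean is a design choice: a few stray background pixels inside the square then do not pull the estimate away from the person.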

The exposure correction has to be calculated from the person's position in space. At first I came up with a formula that worked only for a point light source in a vacuum (in practice, what mattered was the absence of reflecting walls and ceiling). Then I simply took a series of shots at constant flash power and evened out the exposure by eye, and it turned out that the correction depends almost linearly on the distance. I use a Rembrandt lighting setup; the flash with a softbox is in the plane of the camera.
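A near-linear dependence like this can be captured with an ordinary least-squares line fitted to a handful of calibration shots. The sketch below shows the idea; the calibration points in it are placeholder values chosen for illustration, not measurements from this studio, and the function names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Placeholder calibration points (distance in m, correction in EV).
# These are NOT real measurements, only example values.
distances_m = [1.0, 1.5, 2.0, 2.5, 3.0]
corrections_ev = [-1.0, -0.5, 0.0, 0.5, 1.0]

slope, intercept = fit_line(distances_m, corrections_ev)


def correction_for(distance_m):
    """Exposure correction in EV predicted by the fitted line."""
    return slope * distance_m + intercept
```

With real calibration data, `correction_for` would take the distance from the depth camera and return the correction to apply for that shot.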

But the exposure correction still has to be applied somehow. Ideally you would change the flash power, but for now I change the aperture and apply the remainder (less than 1/6 EV) in the RAW converter. My flash trigger can be controlled over Bluetooth from a phone app, so in the future I plan to figure out how its protocol works and change the flash power instead.
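Splitting a correction between the aperture and the RAW converter can be sketched as below. It assumes the aperture moves in 1/3-stop steps, which is common but is an assumption here; rounding to the nearest step then leaves a remainder of at most about 1/6 EV for the converter. The function names are hypothetical.

```python
def split_correction(ev, aperture_step_ev=1.0 / 3.0):
    """Split an EV correction into whole aperture steps plus a RAW remainder.

    Rounding to the nearest step keeps the remainder within half a step,
    i.e. about 1/6 EV for 1/3-stop aperture steps (an assumed step size).
    """
    steps = round(ev / aperture_step_ev)
    remainder_ev = ev - steps * aperture_step_ev
    return steps, remainder_ev


def adjusted_f_number(f_number, steps, aperture_step_ev=1.0 / 3.0):
    """F-number after opening up by `steps` steps (positive = brighter)."""
    return f_number * 2.0 ** (-steps * aperture_step_ev / 2.0)
```

For example, a correction of +0.4 EV becomes one 1/3-stop step on the aperture, with the small remainder left to the RAW converter.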

Here is a comparison of constant flash power, TTL, and my method. Mine works much more consistently and accurately than TTL:

Variety

When a girl (or even a guy) comes to a photographer for a photo shoot, she (or he) usually wants photos with different framing: close-ups showing only the face, and wider shots at full length or from the waist up. Not everyone knows this, but the best way to change the framing is to change the focal length of the lens. That is, the person always stands at a distance of, say, 2 meters; if we need a full-length shot, we put on a 35mm lens, if only the face, a 135mm, and if waist-up, a 50mm or 85mm. Or we do not swap lenses at all and use a zoom lens. Asking the user to reach through a bundle of wires and turn the zoom ring by hand on a camera that sits on a moving platform does not sound great. So I bought a pack of parts on AliExpress, took the servo that had turned out to be useless for controlling the platform, and did this:

And this is how it works:

Here are the results of the first test in a photo studio. First of all, I wanted to see how varied the shots could be; nothing was moved or reconfigured during the shoot:

Video of the process:

Result

Here are some of the best shots we managed to get:

I believe we asked everyone for permission to publish; if you recognize yourself and want a photo removed, write to me.

Why did I do this? Nothing like it exists yet; at least, I have not found anything similar. It could be useful: there are now many professionals (psychologists, business coaches, sports trainers, hairdressers) who sell their services through blogs. They need a lot of photos, and exactly the way they want them, not the way the photographer does. Some people simply do not like a stranger (the photographer) looking at them during a shoot. And, most simply, it is great entertainment for corporate parties, exhibitions, and other events.

I have not described the software side (how the photos are processed and how the user interacts with the system), since there is already so much text; I will write a second part later. These parts are already worked out well enough that people unfamiliar with programming can use the system.