How we did autopilot for a service station

Hello, Habr! I work in a small startup in Berlin that develops autopilots for cars. We are completing a project for service stations of a major German automaker, and I would like to talk about it: how we did it, what difficulties we encountered and what new things we discovered. In this part I will talk about the perception module and a little about the architecture of the solution as a whole. About the rest of the modules, we will probably tell in the following parts. I will be very glad to receive feedback and a look from the outside on our approach.

The press release of the project from the customer can be found here .

To begin with, I’ll tell you why the automaker turned to us, and did not do the project on his own. It is difficult for large German concerns to change processes, and the car development format is rarely suitable for software - iterations are long and require good planning. It seems to me that German automakers understand this, and therefore you can meet startups that are founded by them, but work as an independent company (for example, AID from Audi and Zenuity from Volvo). Other automakers are organizing events like Startup Autobahn, where they are looking for potential contractors for tasks and new ideas. They can order a product or prototype and after a short period of time get the finished result. This may turn out to be faster than trying to do the same thing yourself, and it costs no more than its own development in terms of costs.

In the current project, the customer wants to understand whether it is possible to drive cars in service centers using “AI”. The user script is:

Features: not all cars have cameras. On the machines on which they are, we do not have access to them. The only data on the machine that we have access to is sonars and odometry

Thus, the car must be controlled by external sensors installed in the service area.

The architecture of the final product is as follows:

For infrastructure level, ROS is used .

Here's what happens after a technician chooses a car and clicks “drive in”:

Departure takes place in the same way as arrival.

One of the main and, in my opinion, the most interesting module is perception. This module describes the data from the sensors in such a way that you can accurately make a decision about the movement. In our project, it gives the coordinates, orientation and sizes of all objects that fall on the camera. When designing this module, we decided to start with algorithms that would allow us to analyze the image in one pass. We tried:

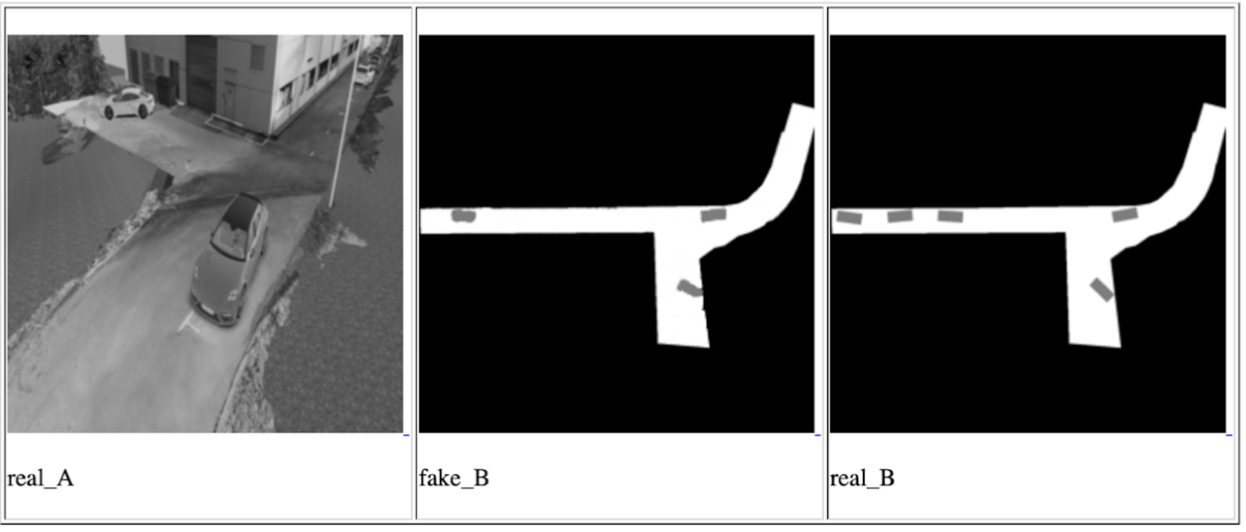

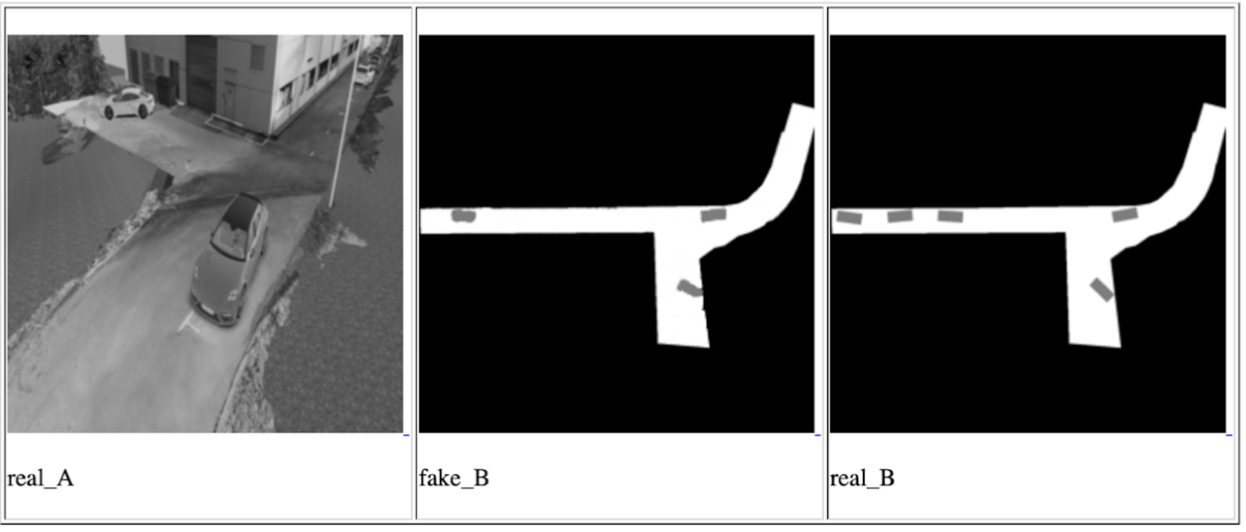

One of the Conditional GAN iterations for one camera, from left to right: input image, network prediction, expected result

Actually, the idea of these approaches is to ensure that the final network can understand the location and orientation of all cars and other moving objects that fell on the camera by looking at the input photo once. Data on objects in this case will be stored in latent vectors. The training of the network took place on the data from the simulator, which is an exact copy of the point where the demonstration will take place. And we managed to achieve certain results, but we decided not to use these methods for several reasons:

Nevertheless, we were interested in understanding the possibilities of these approaches, and we will keep them in mind for future tasks.

After that, we approached the task on the other hand, through a regular search for objects + a network to determine the spatial position of the found objects (for example, this or that ). This option seemed to us the most accurate. The only negative is that it is slower than the approaches proposed before, but it fits into our possible delay framework, since the speed of the car in the service area is no more than 5 km / h. The most interesting work in the field of predicting the 3D position of the object seemed to us to be this one , which shows pretty good results on KITTI. We built a similar network with some changes and wrote our own algorithm for determining the surrounding box, and to be more precise, an algorithm for estimating the coordinates of the center of the projection of the object onto the ground - to make decisions about the direction of movement, we do not need data on the height of the objects. The image of the object and its type (car, pedestrian, ..) are fed to the network input, and its dimensions and spatial orientation are output. Next, the module evaluates the projection center and gives data for all objects: the coordinates of the center, orientation and dimensions (width and length).

In the final product, each picture is first run through the network to search for objects, then all objects are sent to the 3D network to predict orientation and size, after which we estimate the projection center of each and send it and the orientation and size data further. A feature of this method is that it is very strongly tied to the accuracy of the boundary box boundary from the object search network. For this reason, networks like YOLO did not suit us. We found the optimal balance of performance and accuracy of the boundary box on the RetinaNet network.

It is worth noting one thing that we were lucky with on this project: the land is flat. Well, that is, not as flat as a well-known community, but there are no bends in our territory. This allows the use of fixed monocular cameras to project objects into the coordinates of the earth plane without information about the distance to the object. Future plans include the introduction of monocular depth prediction. There are many works on this subject, here, for example, one of the last and very interesting that we are trying for future projects. Depth prediction will allow you to work not only on flat ground, it should potentially increase the accuracy of determining obstacles, simplify the process of configuring new cameras and eliminate the need to label each object - we don’t care what kind of object it is if it is some kind of obstacle.

That's all, thanks for reading, and I’ll be happy to answer questions. As a bonus, I want to talk about an unexpected negative effect: the autopilot does not care about the orientation of the car, for him it does not matter how to go - front or back. The main thing is to drive optimally and not crash into anyone. Therefore, there is a high probability that the car will travel part of the way in reverse, especially in small areas where high maneuverability is required. However, people are used to the fact that the car is mostly moving forward, and often expect the same behavior from the autopilot. If a business person sees a car that, instead of riding in front, rides backwards, then he may consider that the product is not ready and contains errors.

PS I apologize that there are no images and videos with real testing, but I can not publish them for legal reasons.

The press release of the project from the customer can be found here .

To begin with, I’ll tell you why the automaker turned to us, and did not do the project on his own. It is difficult for large German concerns to change processes, and the car development format is rarely suitable for software - iterations are long and require good planning. It seems to me that German automakers understand this, and therefore you can meet startups that are founded by them, but work as an independent company (for example, AID from Audi and Zenuity from Volvo). Other automakers are organizing events like Startup Autobahn, where they are looking for potential contractors for tasks and new ideas. They can order a product or prototype and after a short period of time get the finished result. This may turn out to be faster than trying to do the same thing yourself, and it costs no more than its own development in terms of costs.

Task

In the current project, the customer wants to understand whether it is possible to drive cars in service centers using “AI”. The user script is:

- The technician wants to start working with a machine that is somewhere in the parking lot outside the test area.

- He selects the car on the tablet, selects the service box and clicks “Drive in”.

- The car drives inside and stops at the end point (elevator, ramp, or something else).

- When the technician finishes work on the car, he presses a button on the tablet, the car drives out and parks in some empty space outside.

Features: not all cars have cameras. On the machines on which they are, we do not have access to them. The only data on the machine that we have access to is sonars and odometry

Sonars and odometry

Sonars are distance sensors that are installed in a circle on a car and often look like round dots, they allow you to estimate the distance to the object, but only close and with low accuracy. Odometry - data on the actual speed and direction of the car. Knowing this data and the initial position, you can pretty accurately determine the current position of the machine.

Thus, the car must be controlled by external sensors installed in the service area.

Decision

The architecture of the final product is as follows:

- In the service area we install external cameras, lidars and other things (hello Tesla).

- The data from the cameras goes to Jetson TX2 (three cameras each), which are engaged in the task of finding the machine and pre-processing images from the cameras.

- Further, the camera data comes to the central server, which is proudly called Control Tower and on which they fall into the perception, tracking and path-planning modules. As a result of the analysis, a decision is made on the further direction of movement of the car and it is sent to the car.

- At this stage of the project, another Jetson TX2 is put in the car, which, using our driver, connects to Vector, which decrypts the vehicle data and sends commands. TX2 receives control commands from a central server and broadcasts them to the car.

For infrastructure level, ROS is used .

Here's what happens after a technician chooses a car and clicks “drive in”:

- The system is looking for a car: we send a command to the car to blink alarms, after which we can determine which of the cars in the parking lot is selected by the technician. At the initial stage of development, we also considered the option of determining the machine by the number plate, but in some areas of the parked car the number may not be visible. In addition, if we made the determination of the car by registration number, then the resolution of the photos would have to be greatly increased, which would negatively affect the performance, and we use the same image for searching and driving. This stage occurs once and is repeated only if for some reason we lost the car in tracking.

- As soon as the car is found, we drop the pictures from the cameras that the car hits into the perception module, which segmentes the space and gives the coordinates of all objects, their orientation and sizes. This process is ongoing, running at about 30 frames per second. Subsequent processes are also constant and run until the machine arrives at the end point.

- The tracking module receives input from perception, sonars and odometry, keeps in memory all the objects found, combines them, refines the location, predicts the position and speed of the objects.

- Next, the path planner, which is divided into two parts: global path planner for the global route and local path planner for the local (responsible for avoiding obstacles), builds a path and decides where to go to our car, sends a command.

- Jetson takes the command by car and broadcasts it to the car.

Departure takes place in the same way as arrival.

Perception

One of the main and, in my opinion, the most interesting module is perception. This module describes the data from the sensors in such a way that you can accurately make a decision about the movement. In our project, it gives the coordinates, orientation and sizes of all objects that fall on the camera. When designing this module, we decided to start with algorithms that would allow us to analyze the image in one pass. We tried:

- Disentangled VAE . A small modification made to β-VAE allowed us to train the network so that latent vectors stored image information in a schematic top-down view.

- Conditional GAN (the most famous implementation is pix2pix ). This network can be used to build maps. We also used it to build a schematic view from above, putting data from one or all cameras into it at the same time and waiting for a schematic view from above at the output.

One of the Conditional GAN iterations for one camera, from left to right: input image, network prediction, expected result

Actually, the idea of these approaches is to ensure that the final network can understand the location and orientation of all cars and other moving objects that fell on the camera by looking at the input photo once. Data on objects in this case will be stored in latent vectors. The training of the network took place on the data from the simulator, which is an exact copy of the point where the demonstration will take place. And we managed to achieve certain results, but we decided not to use these methods for several reasons:

- In the allotted time, we could not learn how to use data from latent vectors to describe the image. The result of the network has always been a picture - a top view with a schematic layout of objects. This is less accurate and we feared that such accuracy would not be enough to drive a car.

- The solution is not scalable: for all subsequent installations and for cases when you need to change the direction of some cameras, reconfiguration of the simulator and repeated full training are required.

Nevertheless, we were interested in understanding the possibilities of these approaches, and we will keep them in mind for future tasks.

After that, we approached the task on the other hand, through a regular search for objects + a network to determine the spatial position of the found objects (for example, this or that ). This option seemed to us the most accurate. The only negative is that it is slower than the approaches proposed before, but it fits into our possible delay framework, since the speed of the car in the service area is no more than 5 km / h. The most interesting work in the field of predicting the 3D position of the object seemed to us to be this one , which shows pretty good results on KITTI. We built a similar network with some changes and wrote our own algorithm for determining the surrounding box, and to be more precise, an algorithm for estimating the coordinates of the center of the projection of the object onto the ground - to make decisions about the direction of movement, we do not need data on the height of the objects. The image of the object and its type (car, pedestrian, ..) are fed to the network input, and its dimensions and spatial orientation are output. Next, the module evaluates the projection center and gives data for all objects: the coordinates of the center, orientation and dimensions (width and length).

In the final product, each picture is first run through the network to search for objects, then all objects are sent to the 3D network to predict orientation and size, after which we estimate the projection center of each and send it and the orientation and size data further. A feature of this method is that it is very strongly tied to the accuracy of the boundary box boundary from the object search network. For this reason, networks like YOLO did not suit us. We found the optimal balance of performance and accuracy of the boundary box on the RetinaNet network.

It is worth noting one thing that we were lucky with on this project: the land is flat. Well, that is, not as flat as a well-known community, but there are no bends in our territory. This allows the use of fixed monocular cameras to project objects into the coordinates of the earth plane without information about the distance to the object. Future plans include the introduction of monocular depth prediction. There are many works on this subject, here, for example, one of the last and very interesting that we are trying for future projects. Depth prediction will allow you to work not only on flat ground, it should potentially increase the accuracy of determining obstacles, simplify the process of configuring new cameras and eliminate the need to label each object - we don’t care what kind of object it is if it is some kind of obstacle.

That's all, thanks for reading, and I’ll be happy to answer questions. As a bonus, I want to talk about an unexpected negative effect: the autopilot does not care about the orientation of the car, for him it does not matter how to go - front or back. The main thing is to drive optimally and not crash into anyone. Therefore, there is a high probability that the car will travel part of the way in reverse, especially in small areas where high maneuverability is required. However, people are used to the fact that the car is mostly moving forward, and often expect the same behavior from the autopilot. If a business person sees a car that, instead of riding in front, rides backwards, then he may consider that the product is not ready and contains errors.

PS I apologize that there are no images and videos with real testing, but I can not publish them for legal reasons.