What is information?

The 2009 “How Much Information?” study showed that the amount of information a person consumes per week has increased fivefold since 1986: from 250 thousand words a week to 1.25 million! Since then, the figure has grown significantly. Some staggering indicators: in 2018, the number of Internet users reached 4.021 billion and the number of social network users 3.196 billion. A modern person analyzes an incredible amount of information every day, using various schemes and strategies for processing it in order to make profitable decisions. The human species has generated 90% of the world's information over the past two years. Rounded off, we now produce about 2.5 quintillion bytes (2.5 × 10^18 bytes) of new information per day. If we divide this number by the number of people living now, it turns out that on average one person creates 0 per day,

How much information does Homo sapiens (hereinafter Homo) deal with? For simplicity, computer science coined the term bit: the smallest unit of information. A file containing this text takes up several kilobytes. Fifty years ago, such a document would have occupied the entire memory of the most powerful computer. The average book in digital form takes up a thousand times more space, and that is already megabytes. A high-quality photo from a powerful camera is 20 megabytes. One digital disc is 40 times larger. Interesting proportions start with gigabytes. Human DNA, all the information about you and me, is about 1.5 gigabytes. Multiply this by seven billion and we get 1.05 × 10^19 bytes. Under modern conditions we can produce such a volume of information in less than 10 days. This number of bytes would describe all the people living now. And this is only data about the people themselves, without the interactions between them, without interactions with nature and with the culture that man has created for himself. How much will this figure grow if we add the variables and uncertainties of the future? Chaos would be a good word.
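The proportions above are easy to check. The sketch below uses only the figures quoted in the text (2.5 × 10^18 bytes per day, seven billion people, 1.5 gigabytes per genome); the variable names are just illustrative.

```python
# Back-of-the-envelope arithmetic using only the figures quoted in the text.
BYTES_PER_DAY = 2.5e18   # new information produced per day
POPULATION = 7e9         # "seven billion" people
DNA_BYTES = 1.5e9        # about 1.5 gigabytes per human genome

per_person_per_day = BYTES_PER_DAY / POPULATION
all_dna_bytes = DNA_BYTES * POPULATION
days_to_match_dna = all_dna_bytes / BYTES_PER_DAY

print(f"bytes per person per day: {per_person_per_day:.3g}")
print(f"DNA of everyone alive:    {all_dna_bytes:.3g} bytes")
print(f"days to produce as much:  {days_to_match_dna:.1f}")
```

With these inputs the script reports roughly 0.36 gigabytes per person per day, and a little over four days to match the combined DNA figure.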

Information has an amazing property. Even when it is not there, it is there. An example is needed here. Behavioral biology has a famous experiment. Two cages stand opposite each other. In the first is a high-ranking monkey, an alpha male. In the second is a monkey with a lower status, a beta male. Both monkeys can watch each other. Now add an influence factor to the experiment: put a banana between the two cages. The beta male will not dare to take the banana if he knows that the alpha male has also seen it, because he immediately feels the full aggression of the alpha male. Next, the initial conditions of the experiment are slightly changed. The alpha male's cage is covered with an opaque cloth to block his view. When everything is repeated as before, the picture is completely different: the beta male comes up and takes the banana without any remorse.

The point is his ability to analyze: he knows that the alpha male did not see the banana being placed, so for the alpha male the banana simply does not exist. The beta male analyzed the absence of a signal, namely that information about the banana never reached the alpha male, and took advantage of the situation. Medicine gives another example. A patient is often diagnosed on the basis of the symptoms he has, but the huge number of diseases, viruses and bacteria can confuse even an experienced doctor. How can he reach an accurate diagnosis without losing time that may be vital for the patient? It is simple. He analyzes not only the symptoms the patient has, but also those he does not have, which cuts the search time by a factor of tens. If something does not give a particular signal, that also carries information: as a rule negative, but not always. Analyze not only the information signals that exist, but also those that do not.
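The doctor's reasoning can be sketched in a few lines. The condition names and symptom sets below are invented toy data, not medical knowledge; the point is only that the absent symptom narrows the list as much as the present one.

```python
# Hypothetical toy data: which symptoms each condition typically shows.
CONDITIONS = {
    "condition_a": {"fever", "cough", "rash"},
    "condition_b": {"fever", "cough"},
    "condition_c": {"fever", "headache"},
    "condition_d": {"cough", "rash"},
}

def candidates(present, absent):
    """Keep conditions that explain every present symptom
    and predict none of the symptoms known to be absent."""
    return sorted(
        name
        for name, symptoms in CONDITIONS.items()
        if present <= symptoms and not (absent & symptoms)
    )

# Using only what the patient has leaves three candidates.
only_present = candidates(present={"fever"}, absent=set())
# Adding what the patient does NOT have leaves one.
with_absent = candidates(present={"fever"}, absent={"cough"})

print(only_present)
print(with_absent)
```

Here the single missing signal ("no cough") removes two of the three remaining candidates.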

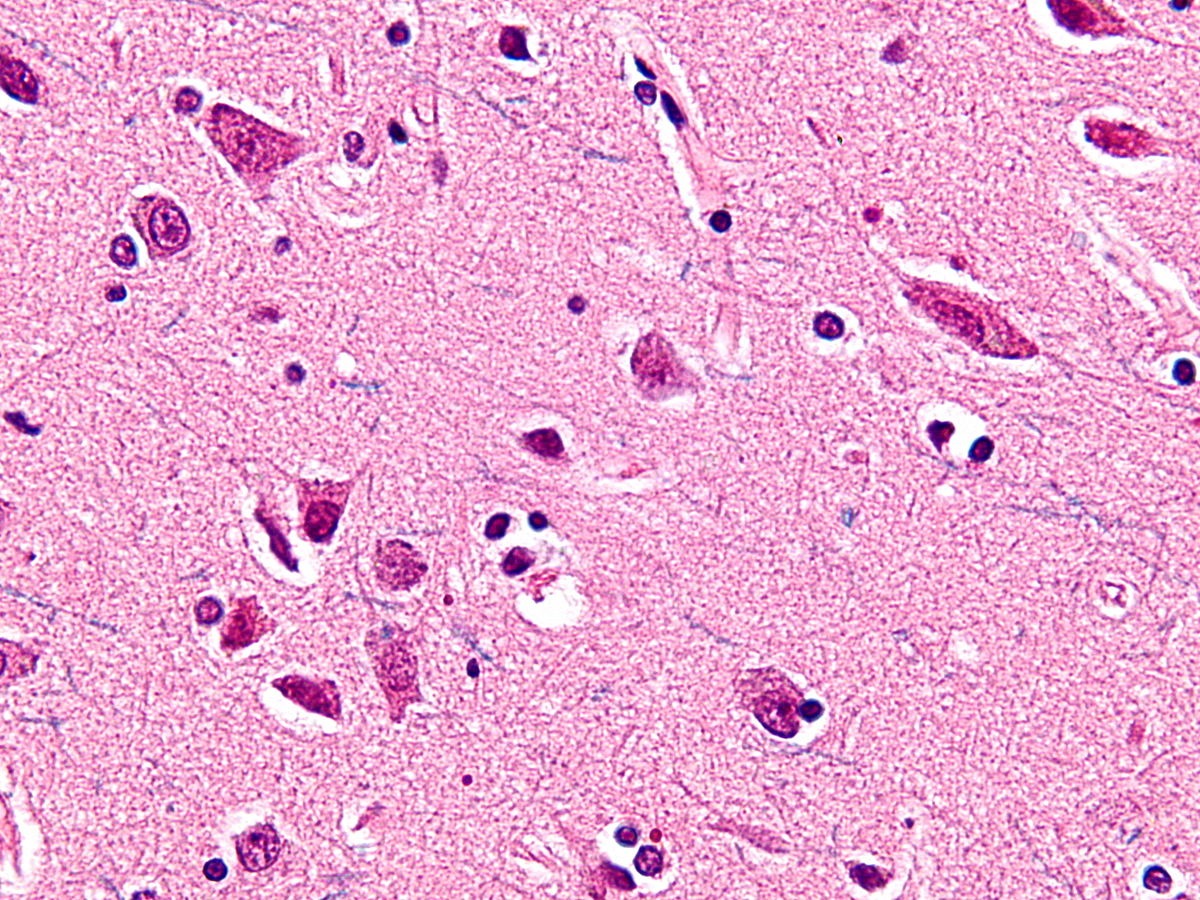

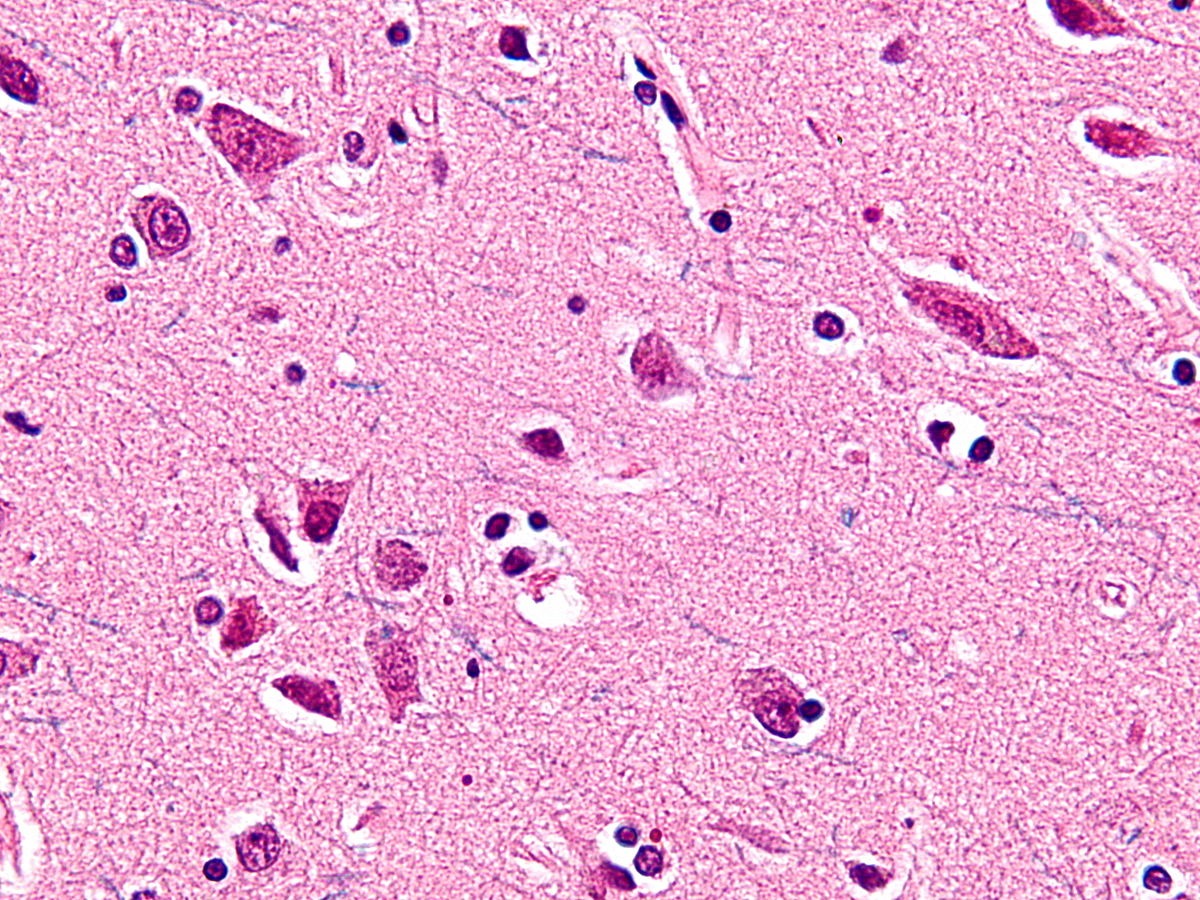

These examples add zeros and ones to the numbers above. The figures and problems above raise a number of questions. How? How did we manage to achieve this? Is the body or society able to function normally in such conditions? How does information affect biological, economic and other types of systems? The amount of information we perceive in 2019 will seem scanty to our descendants in 2050. The species is already creating new schemes and patterns of working with information, studying its properties and impact. The phrase “I have lived a million years in a year” is no longer a joke or an absurdity, but reality. The amount of information a person creates affects social, economic, cultural and even biological life. In 1980, people dreamed of creating a quantum computer to increase computing power. It was the dream of a species. The discoveries this invention promised were supposed to usher in a new era. In 2018, IBM launched the first commercial quantum computer, but no one noticed. The news was discussed by an incredibly small number of people. It simply drowned in the abundance of information in which we now exist. The main areas of research in recent years have been neuroscience, algorithms, mathematical models and artificial intelligence, which in general points to a search for ways of functioning normally in an information-rich environment. In 1929, von Economo neurons were discovered, which are found only in highly social groups of animals. There is a direct correlation between group size and brain size: the larger the group of animals, the larger their brain relative to the body. It is not surprising that von Economo neurons are found only in cetaceans, elephants and primates.

while A.G. Lukashenko did not forbid them to communicate, look at them

This type of neuron is a neural adaptation in very large brains that allows information to be processed and transmitted quickly along very specific projections, and it evolved in connection with new social behaviors. The apparent presence of these specialized neurons only in highly intelligent mammals may be an example of convergent evolution. New information always generates new, qualitatively different patterns and relationships. Patterns are established only on the basis of information. For example, a primate strikes the bone of a dead buffalo with a stone. One hit and the bone breaks into two parts. Another blow and another break. A third blow and a few more fragments. The pattern is clear: strike the bone and you get at least one new splinter. But are primates really that good at recognizing patterns? Consider frequent intercourse and childbirth delayed by nine months.

How long did it take to connect these two events? For a long time, childbirth was not associated at all with sexual acts between a man and a woman. In most cultures and religions, the gods were responsible for the birth of a new life. The exact date of the discovery of this pattern, unfortunately, has not yet been established. It is worth noting, however, that there are still isolated hunter-gatherer societies that do not connect these processes, and birth there is accompanied by special rituals performed by a shaman. The main cause of infant mortality in childbirth before 1920 was dirty hands. Clean hands and a living child are another example of a non-obvious pattern. Here is one more pattern that remained implicit until 1930. What is it about? Blood groups. In 1930, Landsteiner received the Nobel Prize for this discovery. Until then, it was not understood that a patient in need can safely receive only blood whose group matches the donor's. There are thousands of similar examples. It is worth noting that searching for patterns is what the species does all the time. A businessman finds a pattern in the behavior or needs of people and then earns on it for many years. Serious scientific research lets us predict climate change, human migration, the location of mineral deposits, the return of periodic comets, the development of the embryo, the evolution of viruses and, as the pinnacle, the behavior of neurons in the brain. Of course, everything can be explained by the structure of the universe in which we live and by the second law of thermodynamics, according to which entropy constantly increases, but that level is not suitable for practical purposes. We should choose one closer to life: the level of biology and computer science.

What is information? According to popular belief, information is any data regardless of the form of its presentation, or the resolution of uncertainty. In physics, information is a measure of the order of a system. In information theory, the term is defined as follows: information is data, bits, facts or concepts, a set of values. All of these definitions are vague and imprecise; moreover, I believe they are slightly wrong.

To prove this, we put forward a thesis: information by itself is meaningless. What is the number “3”? Or the letter “A”? A symbol without an assigned meaning. But what is the number “3” in the blood group column? It is a value that will save a life. It already affects the strategy of behavior. Here is an example taken to the point of absurdity, yet not losing its significance. Douglas Adams wrote The Hitchhiker's Guide to the Galaxy. In this book, a specially built computer was supposed to answer the main question of life and the universe. What is the meaning of life and the universe? The answer was received after seven and a half million years of continuous computing. Having repeatedly checked the value for correctness, the computer concluded that the answer was “42”. These examples make it clear that information without the external environment in which it is located (the context) does not mean anything. The number “2” can mean a number of monetary units, patients with Ebola, happy children, or be an indicator of a person's erudition in some matter. For further proof, let's move on to the world of biology: the leaves of plants often have the shape of a semicircle. At first they rise upward, widening, but after a certain inflection point they stretch downward, narrowing. In DNA, the main carrier of information or values, there is no gene that encodes this downward pull after a certain point. The fact that the leaf of the plant stretches down is a trick of gravity.
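The same point can be shown on a computer, where a value has no meaning until a context says how to read it. In this small sketch the four bytes never change; only the interpretation (the `struct` format codes, chosen here purely for illustration) does.

```python
import struct

raw = bytes([0x33, 0x00, 0x00, 0x00])  # the same four bytes every time

as_text = raw[:1].decode("ascii")        # first byte read as an ASCII character
as_int = struct.unpack("<I", raw)[0]     # read as a little-endian unsigned integer
as_float = struct.unpack("<f", raw)[0]   # read as a 32-bit floating point number

print(repr(as_text), as_int, as_float)
```

Read as text, the data is the character "3"; read as an integer it is 51; read as a float it is a number vanishingly close to zero. Nothing in the bytes themselves says which reading is the right one.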

DNA itself, whether that of plants, of mammals or of the already mentioned Homo sapiens, carries little information, if any. DNA is a set of values in a particular environment. DNA mostly holds something that must be activated, via transcription factors, by a particular external environment. Place plant or human DNA in an environment with a different atmosphere or gravity, and the output will be a different product. Therefore, handing our DNA over to alien life forms for research purposes is a rather foolish idea. Quite possibly, in their environment human DNA would grow into something more terrifying than an upright bipedal primate with an opposable thumb and ideas about equality. Information is values / data / bits / matter in any form, continuously connected to an environment, system or context. Information does not exist without environmental factors, a system or a context. Only when inextricably linked to these conditions is information able to convey meaning. In the language of mathematics or biology, information does not exist without an external environment or a system whose variables it affects. Information is always an appendage of the circumstances in which it moves.

This article discusses the main ideas of information theory, drawing on the work of Claude Shannon and Richard Feynman.

A distinctive feature of the species is the ability to create abstractions and build patterns, to represent some phenomena through others. We encode. Photons on the retina create pictures; air vibrations are converted into sounds. We associate a certain sound with a certain picture. A chemical compound in the air, caught by receptors in the nose, we interpret as a smell. Through drawings, pictures, hieroglyphs and sounds, we can connect events and transmit information.

so he actually encodes your reality

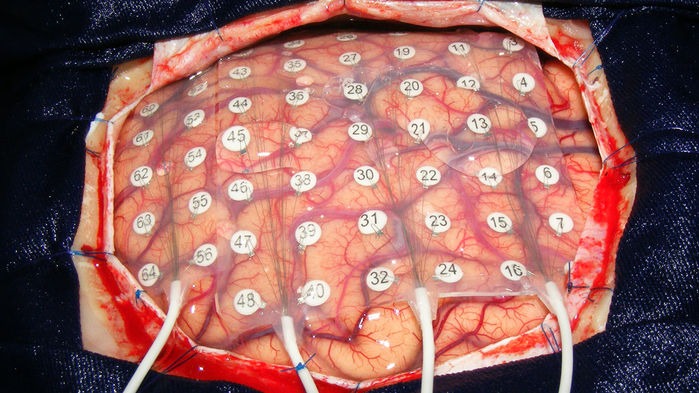

Such coding and abstractions should not be underestimated; just remember how strongly they affect people. Encodings can override biological programs: for the sake of an idea (a picture in the head that determines a strategy of behavior), a person refuses to pass copies of his genes on. Or recall the power of the physical formulas that allowed us to send a representative of the species into space, the chemical equations that help treat people, and so on. Moreover, we can encode what is already encoded. The simplest example is translation from one language to another: one code is presented in the form of another. The ease of transformation, the main factor in the success of this process, lets us continue it indefinitely. You can translate an expression from Japanese to Russian, from Russian to Spanish, from Spanish to binary, from binary to Morse code, then present it in Braille, then as computer code, and then feed it as electrical impulses directly into the brain, which decodes the message. Recently the reverse was done as well: brain activity was decoded into speech.
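A shortened version of this chain fits in a few lines. The sketch below re-encodes a phrase as UTF-8 bytes, then as binary digits, then as Morse code for the digits 0 and 1, and finally walks all the way back. The function names are made up for the illustration; only the two standard Morse digit codes are used.

```python
MORSE = {"0": "-----", "1": ".----"}            # standard Morse for the digits 0 and 1
FROM_MORSE = {code: digit for digit, code in MORSE.items()}

def to_bits(text):
    """Text -> UTF-8 bytes -> string of binary digits."""
    return "".join(f"{byte:08b}" for byte in text.encode("utf-8"))

def bits_to_morse(bits):
    """Binary digits -> Morse symbols separated by spaces."""
    return " ".join(MORSE[bit] for bit in bits)

def morse_to_bits(morse):
    """Morse symbols -> binary digits."""
    return "".join(FROM_MORSE[symbol] for symbol in morse.split(" "))

def from_bits(bits):
    """Binary digits -> bytes -> text."""
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8")

message = "情報 = información"
morse = bits_to_morse(to_bits(message))
restored = from_bits(morse_to_bits(morse))

print(morse[:47], "...")
print(restored)
```

Every layer is a different code for the same message, and because both sides agree on each convention, nothing is lost on the way back.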

put electrodes in the picture above and considered all your uniqueness

Between forty and twenty thousand years ago, primitive people began to actively encode information in the form of speech, gestures and rock paintings. Modern people, looking at the first cave paintings, try to determine (decode) their meaning; the search for meaning is another distinguishing feature of the species. By reconstructing the context from markers or remnants of information, modern anthropologists try to understand the life of primitive people. The quintessence of the coding process is writing. Writing solved the problem of information loss during transmission not only in space but also in time. Hieroglyphs and numerals let us encode calculations, words, objects and so on. However, while the problem of accuracy was solved more or less effectively (provided, of course, that both participants in the communication use the same conventions for interpreting and decoding the same characters), printed writing failed when it came to time and transmission speed. To solve the speed problem, radio and telecommunication systems were invented. Two ideas can be considered the key stage in the development of information transfer. The first is digital communication channels, and the second is the development of the mathematical apparatus. Digital communication channels solved the problem of transmission speed, and the mathematical apparatus the problem of accuracy.

Any channel has a certain level of noise and interference, because of which information arrives distorted (the set of values and characters is corrupted, the context is lost) or does not arrive at all. As technology developed, the amount of noise in digital communication channels decreased, but it was never reduced to zero, and it generally grew with distance. The key problem of information loss in digital communication channels was identified and solved by Claude Shannon in 1948, in the same paper that brought the term bit into print. It reads as follows: “Let a message source have entropy H per second, and let the channel have capacity C. If H ≤ C, then there exists a coding of the information such that the source data will be transmitted through the channel with an arbitrarily small number of errors.”
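To make H and C less abstract, here is a minimal sketch that computes both for a toy case. The source distribution and the 10% bit-flip probability are assumptions chosen for the example, and the channel model (a binary symmetric channel) is just the simplest textbook one, not something the theorem is limited to.

```python
from math import log2

def entropy(probs):
    """Shannon entropy, in bits per symbol, of a discrete distribution."""
    return -sum(p * log2(p) for p in probs if p > 0)

def bsc_capacity(flip_prob):
    """Capacity, in bits per use, of a binary symmetric channel
    that flips each transmitted bit with probability flip_prob."""
    return 1 - entropy([flip_prob, 1 - flip_prob])

source_h = entropy([0.5, 0.25, 0.25])   # a three-symbol source
capacity = bsc_capacity(0.1)            # a channel that corrupts 10% of bits

# Minimum channel uses per source symbol for reliable transmission to be possible.
uses_per_symbol = source_h / capacity

print(f"H = {source_h} bits/symbol")
print(f"C = {capacity:.3f} bits/use")
print(f"at least {uses_per_symbol:.2f} channel uses per symbol")
```

If the channel can be used at least that many times per source symbol, the condition H ≤ C holds per unit of time and the theorem promises that a suitable code exists. It does not say what that code looks like.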

but they didn’t call you to play this game

This formulation of the problem is the reason for the rapid development of information theory. The main problem it solves, and keeps trying to solve, is that digital channels, as mentioned above, have noise. Or, to put it another way: “there is no absolutely reliable channel for transmitting information”. That is, information can be lost, distorted or filled with errors because of environmental influences on the transmission channel. Claude Shannon put forward a number of theses from which it follows that the ability to transmit information without loss or change, i.e. with an arbitrarily small error, exists in most noisy channels. In effect, he allowed Homo sapiens not to spend effort on improving communication channels. Instead, he proposed developing more efficient schemes for encoding and decoding information: representing information as 0s and 1s. The idea can be extended to mathematical abstractions or linguistic coding. An example demonstrates its effectiveness. A scientist observes the behavior of quarks at the hadron collider, enters the data into a table, analyzes it, derives the regularity as formulas, formulates the main trends as equations, or writes down the factors affecting the behavior of quarks as mathematical models. He needs to pass this data on without loss, and he faces a series of questions. Should he use a digital communication channel, pass it on through his assistant, or call and tell everything personally? Time is critically short and the information must be delivered urgently, so e-mail is ruled out. The assistant is an utterly unreliable communication channel, with a probability of noise close to one.
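The idea of fighting noise with coding rather than with a better channel can be tried on the simplest code there is: send every bit several times and take a majority vote. This is only an illustration under assumed parameters (a 10% flip probability, five repetitions, a seeded simulation); a repetition code is far from the efficient codes Shannon's result promises, since it pays for reliability with five times more transmissions.

```python
import random

def noisy_channel(bits, flip_prob, rng):
    """Flip each bit independently with probability flip_prob."""
    return [bit ^ (rng.random() < flip_prob) for bit in bits]

def encode(bits, n=5):
    """Repetition code: send every bit n times."""
    return [bit for bit in bits for _ in range(n)]

def decode(received, n=5):
    """Majority vote over each group of n received bits."""
    return [int(sum(received[i:i + n]) > n // 2) for i in range(0, len(received), n)]

rng = random.Random(0)                      # fixed seed so the run is repeatable
message = [rng.randint(0, 1) for _ in range(10_000)]

raw = noisy_channel(message, 0.1, rng)                      # sent as is
coded = decode(noisy_channel(encode(message), 0.1, rng))    # sent with the code

raw_errors = sum(a != b for a, b in zip(message, raw))
coded_errors = sum(a != b for a, b in zip(message, coded))

print(f"errors without coding: {raw_errors}")
print(f"errors with 5x repetition: {coded_errors}")
```

The channel is equally noisy in both runs; only the encoding differs, and the decoded message contains far fewer errors.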

How accurately can he reproduce the data in the table? If the table has one row and two columns, then quite accurately. But what if there are ten thousand rows and fifty columns? Instead, the scientist conveys the pattern encoded as a formula. If he could transfer the table without loss, were sure that the other participant in the communication would arrive at the same patterns, and time were not a factor, the question would be meaningless. However, a regularity expressed as a formula takes less time to decode and is less susceptible to transformation and noise during transmission. Examples of such encodings will be given many times throughout the series. A disk, a person, paper, a satellite dish, a telephone, or a cable carrying signals can all be considered communication channels. Encoding addresses not only the problem of information loss but also the problem of its volume. With coding, you can reduce the dimension and the amount of information. After reading a book, the probability of retelling it without loss of information tends to zero, barring savant syndrome. By encoding (formulating) the main idea of the book as a statement, we present a brief summary of it. The main task of coding is to shorten the original signal without loss of information so that it can be transmitted over a long distance and through time to another participant in communication, who can then decode it effectively. A web page, a formula, an equation, a text file, a digital image, digitized music and video are all vivid examples of encodings.
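The scientist's choice between the table and the formula can be put into numbers. In this sketch the "law" is an invented quadratic standing in for whatever regularity was found, and JSON is just a convenient way to count the characters that would have to be transmitted.

```python
import json

def law(x):
    """Hypothetical regularity found in the data; stands in for any formula."""
    return 3 * x * x + 2 * x + 1

table = [(x, law(x)) for x in range(10_000)]     # the raw observations

table_message = json.dumps(table)                # option 1: send every row
formula_message = json.dumps(                    # option 2: send the pattern
    {"coeffs": [3, 2, 1], "x_range": [0, 10_000]}
)

def decode_formula(message):
    """Rebuild the full table on the receiving side from the formula alone."""
    spec = json.loads(message)
    a, b, c = spec["coeffs"]
    lo, hi = spec["x_range"]
    return [(x, a * x * x + b * x + c) for x in range(lo, hi)]

rebuilt = decode_formula(formula_message)

print(len(table_message), "characters for the table")
print(len(formula_message), "characters for the formula")
print("identical after decoding:", rebuilt == table)
```

The short message reproduces the long one exactly, but only because sender and receiver share the convention for what the coefficients mean. Real measurements would also carry noise that a formula smooths away, which this toy case ignores.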

The problems of transmission accuracy, distance, time and the encoding process have been solved to one degree or another, and this has allowed us to create many times more information than a person is able to perceive, and to find patterns that would otherwise go unnoticed for a long time. Other problems arise. Where to store such a volume of information? How to store it? Modern coding and the mathematical apparatus, as it turned out, do not completely solve the storage problem. There is a limit to how far information can be shortened and encoded, beyond which the values can no longer be decoded. As mentioned above, a set of values without a context or external environment no longer carries information. It is possible, however, to encode the information about the external environment and the set of values separately, then combine them as indices and decode the indices themselves; but the original data on the set of values and the external environment still has to be stored somewhere. Wonderful ideas have been proposed that are now used everywhere, but they will be covered in another article.
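The limit on shortening is easy to observe with an ordinary lossless compressor. The sketch below uses Python's standard `zlib` module on two inputs of equal length: one highly regular, one pseudo-random. The sample data and the seed are arbitrary choices for the illustration.

```python
import random
import zlib

rng = random.Random(0)                                   # fixed seed for repeatability
patterned = b"information " * 1000                       # highly regular data
noise = bytes(rng.getrandbits(8) for _ in range(len(patterned)))  # no pattern to exploit

patterned_packed = zlib.compress(patterned, 9)
noise_packed = zlib.compress(noise, 9)

print(len(patterned), "->", len(patterned_packed), "bytes (regular data)")
print(len(noise), "->", len(noise_packed), "bytes (random data)")
print("round trip intact:", zlib.decompress(noise_packed) == noise)
```

Where there is a pattern, the compressor shrinks the data dramatically; where there is none, the output is no smaller than the input. Squeezing further would mean throwing values away, after which they could not be decoded back.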

Looking ahead, here is an example of the fact that it is not necessary to describe the entire external environment; we can merely formulate the conditions of its existence as laws and formulas. What is science? Science is the highest degree of mimicry of nature. Scientific achievements are abstract embodiments of real-life phenomena. One solution to the problem of information storage was described in a charming article by Richard Feynman, “There's Plenty of Room at the Bottom: An Invitation to Enter a New Field of Physics”. This article is often considered the work that laid the foundation for the development of nanotechnology. In it, the physicist suggests paying attention to the amazing features of biological systems as information repositories. Miniature, tiny systems contain an incredibly large amount of data about behavior, and the way they store and use information can cause nothing but admiration. As for how much information biological systems can store, Nature magazine estimated that all the information, values, data and patterns of the world could be written into DNA storage weighing up to one kilogram. That is our whole contribution to the universe: one kilogram of matter. DNA is an extremely efficient structure for storing information, able to hold and use sets of values in huge volumes. If anyone is interested, there is an article that explains how to write photos of cats, or any information at all, into DNA storage, even Scriptonite songs (an extremely stupid use of DNA).
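To give a feel for what writing data into DNA means, here is a deliberately naive sketch that maps every two bits to one of the four nucleotides. The mapping (00 to A, 01 to C, 10 to G, 11 to T) is an assumption made for this example; real DNA storage schemes add error correction and avoid problematic sequences, none of which is modeled here.

```python
BASES = "ACGT"  # assumed mapping: 00 -> A, 01 -> C, 10 -> G, 11 -> T

def to_dna(data: bytes) -> str:
    """Encode each byte as four nucleotides, two bits per base."""
    return "".join(
        BASES[(byte >> shift) & 0b11]
        for byte in data
        for shift in (6, 4, 2, 0)
    )

def from_dna(strand: str) -> bytes:
    """Decode a strand produced by to_dna back into bytes."""
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)

strand = to_dna(b"cat")
print(strand)             # CGATCGACCTCA
print(from_dna(strand))   # b'cat'
```

Three bytes become twelve bases. At two bits per base, even this crude scheme shows why such a small amount of matter can hold so much data.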

It encodes that you listen to garbage

Feynman draws attention to how much information is encoded in biological systems, and to the fact that in the course of their existence they not only encode information but also change the structure of matter on that basis. If until then all the proposed ideas had been based only on encoding a set of values or information as such, after this article the question became one of encoding the external environment within individual molecules: encoding and modifying matter at the atomic level, enclosing information in it, and so on. For example, he suggests creating connecting wires several atoms in diameter. This, in turn, would increase the number of computer components millions of times, and such an increase would qualitatively improve the computing power of future intelligent machines. Feynman, as one of the creators of quantum electrodynamics and man,

He emphasizes that physics does not prohibit building objects atom by atom. In the article, he compares the activities of man and machine, noting that any representative of the species easily recognizes people's faces, unlike computers, for which this was at the time a task beyond their computing power. He asks a number of important questions, from “what prevents us from creating an ultra-small copy of something?” to “is the difference between computers and the human brain only in the number of constituent elements?”, and he describes the mechanisms and main problems involved in creating something of atomic size.

Modern researchers estimate the number of neurons in the brain at about 86 billion. Naturally, no computer, then or now, has come close to this value, and as it turned out, that was not necessary. Still, Richard Feynman's work began to move the idea of information downward, where there is plenty of room. The article was published in 1960, after the appearance of Alan Turing's “Computing Machinery and Intelligence”, one of the most cited works of its kind. Comparing human activity and computers was therefore a trend, and it was reflected in Richard Feynman's article.

Thanks to the direct contribution of the physicist, the cost of data storage falls every year, cloud technologies are developing at an insane pace, a quantum computer has been created, and we write data to DNA storage and do genetic engineering, which once again proves that matter can be changed and encoded. The next article will talk about chaos, entropy, quantum computers, spiders, ants, hidden Markov models and category theory. There will be more math, punk rock and DNA.

How much information does Homo sapiens take? (hereinafter referred to as Homo). For simplicity in computer science, they coined a term called bit. Bit is the smallest unit of information. A file with this job takes several kilobytes. Such a document fifty years ago would occupy the entire memory of the most powerful computer. The average book in digital form takes up a thousand times more space and this is megabytes. A high-quality photo on a powerful camera is 20 megabytes. One digital drive is 40 times larger. Interesting proportions start with gigabytes. Human DNA, all the information about you and me is about 1.5 gigabytes. We multiply this by seven billion and get 1.05x10 ^ 19 bytes. In general, we can produce such a volume of information in modern conditions in 10 days. This number of bits will describe all the people living now. And this is only data about the people themselves, without interactions between them, without interactions with nature and culture, which man created for himself. How much will this figure increase if we add the variables and uncertainties of the future? Chaos would be a good word.

Information has an amazing property. Even when she is not there, she is. And here we need an example. Behavioral biology has a famous experiment. Opposite each other there are two cells. In the 1st high-ranking monkey. Alpha male. In the 2nd cage, a monkey with a lower status, a beta male. Both monkeys can watch their counterparts. Add an influence factor to the experiment. Between two cells we put a banana. A beta male will not dare to take a banana if he knows that the alpha male also saw this banana. For he immediately feels the whole aggression of the alpha male. Further, the initial conditions of the experiment are slightly altered. An alpha male cell is covered with an opaque cloth to deprive him of a view. Repeating everything that was done before, the picture becomes completely different. The beta male comes up and takes a banana without any remorse.

The thing is his ability to analyze, he knows that the alpha male did not see how to put a banana and for him a banana simply does not exist. The beta male analyzed the fact that there was no signal about the appearance of information about the banana in the alpha male and took advantage of the situation. A specific diagnosis is made to a patient in many cases when he has certain symptoms, but a huge number of diseases, viruses and bacteria can even confuse an experienced doctor, how can he determine an accurate diagnosis without spending time, which can be vital for the patient? Everything is simple. He analyzes not only the symptoms that the patient has, but also those that he does not have, which reduces the search time by tens of times. If something does not give this or that signal, it also carries certain information - as a rule, negative, but not always. Analyze not only information signals that exist, but also those that do not.

These examples add zeros and ones to the numbers above. In connection with the above figures and problems, a number of questions arise. How? How did you manage to achieve this? Is the body / society able to function normally in such conditions. How information affects biological, economic and other types of systems. The amount of information that we perceive in 2019 will seem scanty for posterity from 2050. Already, the view is creating new schemes and patterns of working with information, studying its properties and impact. Phrase: ““ I have lived a million years in a year ”is no longer jokes and not absurd, but reality. The amount of information that a person creates affects social, economic, cultural and even biological life. In 1980, they dreamed of creating a quantum computer to increase computing power. Dream of a view. The discoveries that this invention promised should have anticipated a new era. In 2018, IBM launched the first commercial quantum computer, but no one noticed. The news was discussed by an incredibly small number of people. She just drowned in the information abundance in which we now exist. The main area of research in recent years has become neuroscience, algorithms, mathematical models, artificial intelligence, which generally indicates the search for the possibility of normal functioning in an environment enriched with information. In 1929, von Economo neurons were discovered, which are found only in highly social groups of animals. There is a direct correlate of group size and brain size, the larger the group of animals, the larger their brain size relative to the body. It is not surprising that von Economo neurons are found only in cetaceans, elephants and primates.

while A.G. Lukashenko did not forbid them to communicate, look at them

This type of neuron is a neural adaptation in very large brains, which allows you to quickly process and transmit information on very specific projections, which has evolved in relation to new social behaviors. The apparent presence of these specialized neurons only in highly intelligent mammals can be an example of convergent evolution. New information always generates new, qualitatively different patterns and relationships. Patterns are established only on the basis of information. Example, a primate hits a stone on the bone of a dead buffalo. One hit and the bone breaks into two parts. One more blow and one more break. The third blow and a few more fragments. The pattern is clear. A bone strike and at least one new splinter. Are primates like good at recognizing patterns? Many intercourse and delayed childbirth after nine months.

How long did it take to connect these two events? For a long time, childbirth was not associated at all with sexual acts between a man and a woman. In most cultures and religions, the gods were responsible for the birth of a new life. The exact date for the discovery of this pattern, unfortunately, has not yet been established. However, it is worth noting that there are still closed hunter-gatherer societies that do not bind these processes, and for the birth in them there are special rituals performed by the shaman. The main cause of child mortality in childbirth before 1920 was dirty hands. Clean hands and a living child are also an example of an unobvious regularity. Here is another example of a pattern that remained implicit until 1930. What are you talking about? About blood groups. In 1930, Landsteiner received the Nobel Prize for this discovery. Up to this point, knowledge of that a person can be transfused with a blood group that coincides with the donor in need is unclear. There are thousands of similar examples. It is worth noting that the search for patterns is what the species does all the time. A businessman who finds a pattern in the behavior or needs of people, and then earns on this pattern for many years. Serious scientific research that allows us to predict climate change, human migration, finding places for mining, cyclical comets, the development of the embryo, the evolution of viruses and like the tip, the behavior of neurons in the brain. Of course, everything can be explained by the structure of the universe in which we live, and the second law of thermodynamics that entropy is constantly increasing, but this level is not suitable for practical purposes. You should choose closer to life. The level of biology and computer science.

What is information? In the everyday sense, information is knowledge of any kind, regardless of the form in which it is presented, or whatever resolves uncertainty. In physics, information is a measure of the order of a system. In information theory, the term is defined as follows: information is data, bits, facts or concepts, a set of values. All of these definitions are vague and imprecise; moreover, I believe they are slightly wrong.

To prove this, we put forward a thesis: information by itself is meaningless. What is the number “3”? Or what is the letter “A”? A symbol without an assigned value. But what is the number “3” in the blood group column of a medical record? It is a value that can save a life. It already affects the strategy of behavior. Here is an example taken to the point of absurdity that nevertheless keeps its force. Douglas Adams wrote The Hitchhiker's Guide to the Galaxy. In this book, a computer built for the purpose was supposed to answer the ultimate question of life and the universe. What is the meaning of life and the universe? The answer arrived after seven and a half million years of continuous computation. Having checked the value for correctness many times over, the computer concluded that the answer was “42”. These examples make it clear that information without the external environment in which it is located (its context) does not mean anything. The number “2” can mean a number of monetary units, of Ebola patients, of happy children, or be a measure of a person's erudition in some subject. For further proof, let's move on to the world of biology. A plant's leaf often traces an arc: at first it rises, widening, but past a certain inflection point it bends downward, tapering. DNA, the main carrier of information or values, contains no gene that encodes this downward bend past a certain point. The fact that the leaf bends down is a trick of gravity.
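The thesis that a set of values means nothing without a context can be made concrete with a small Python sketch. The two bytes below are chosen arbitrarily for illustration, and the three "contexts" are simply three standard ways of reading them; none of this comes from the original examples.

```python
import struct

# The same two bytes; nothing in them says how they should be read.
raw = bytes([0x34, 0x32])

# Three hypothetical "contexts" (decoders) applied to identical data.
as_text = raw.decode("ascii")                    # read as ASCII characters
as_big_endian = struct.unpack(">H", raw)[0]      # 16-bit integer, high byte first
as_little_endian = struct.unpack("<H", raw)[0]   # 16-bit integer, low byte first

print(as_text, as_big_endian, as_little_endian)  # 42 13362 12852
```

The values never change; only the agreement about how to read them does, and with it the meaning.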

DNA by itself, whether of plants, of mammals or of the already mentioned Homo sapiens, carries little information, if any. DNA is a set of values in a particular environment. DNA largely encodes transcription factors, things that must be activated by a particular external environment. Place plant or human DNA in an environment with a different atmosphere or gravity, and the output will be a different product. Transferring our DNA to alien life forms for research purposes is therefore a rather stupid idea. In their environment, human DNA may well grow into something more terrifying than an upright bipedal primate with an opposable thumb and ideas about equality. Information is values, data, bits or matter in any form, in continuous connection with an environment, system or context. Information does not exist without environmental factors, a system or a context. Only when inextricably linked to these conditions is information able to convey meaning. In the language of mathematics or biology, information does not exist without an external environment or systems whose variables it affects. Information is always an appendage of the circumstances in which it moves.

This article discusses the main ideas of information theory: the intellectual work of Claude Shannon and Richard Feynman.

A distinctive feature of the species is the ability to create abstractions and build patterns, to represent some phenomena through others. We encode. Photons hitting the retina create images; vibrations of the air are converted into sounds. We associate a particular sound with a particular image. A chemical substance in the air, caught by receptors in the nose, we interpret as a smell. Through drawings, pictures, hieroglyphs and sounds, we can connect events and transmit information.

so he actually encodes your reality

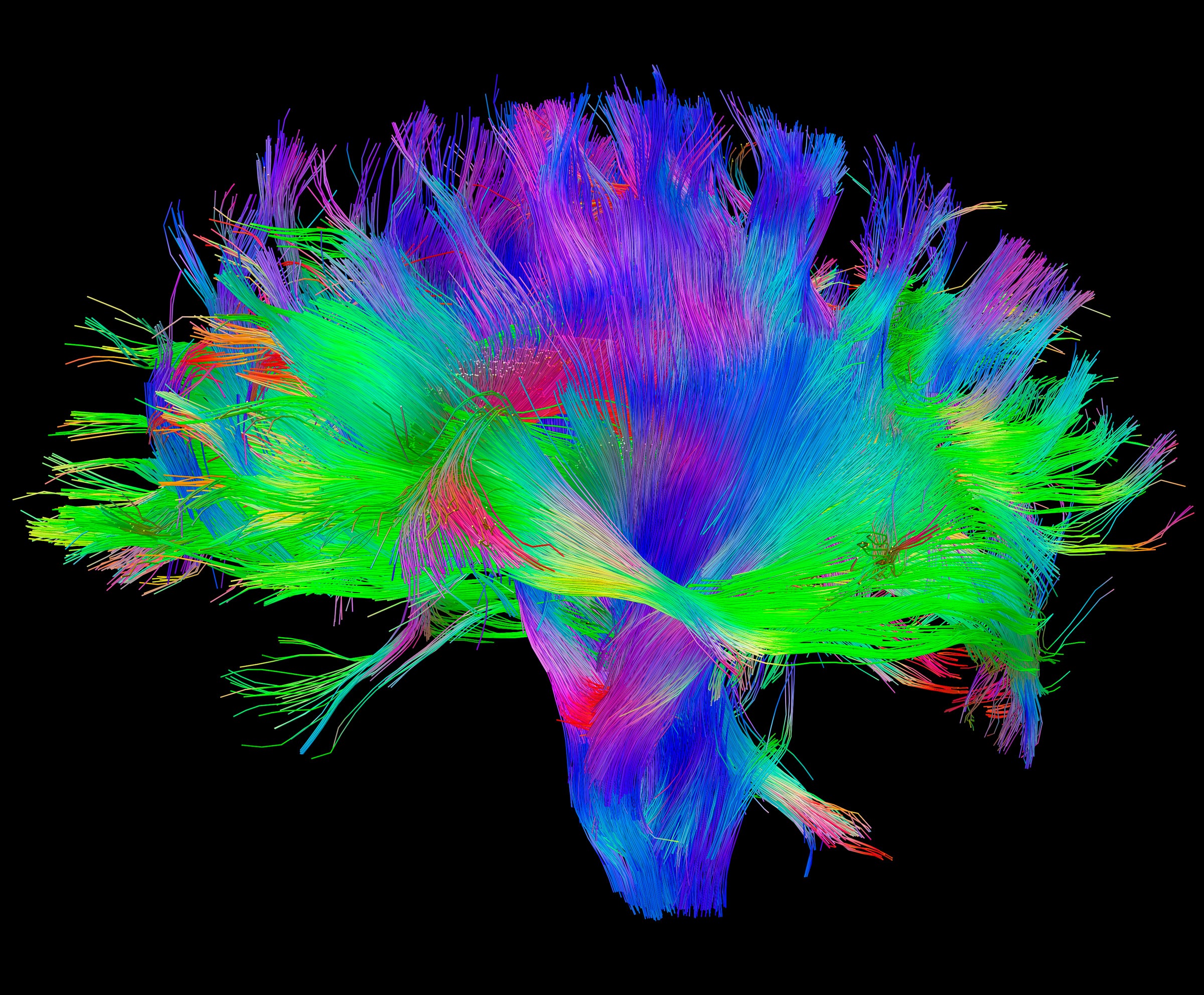

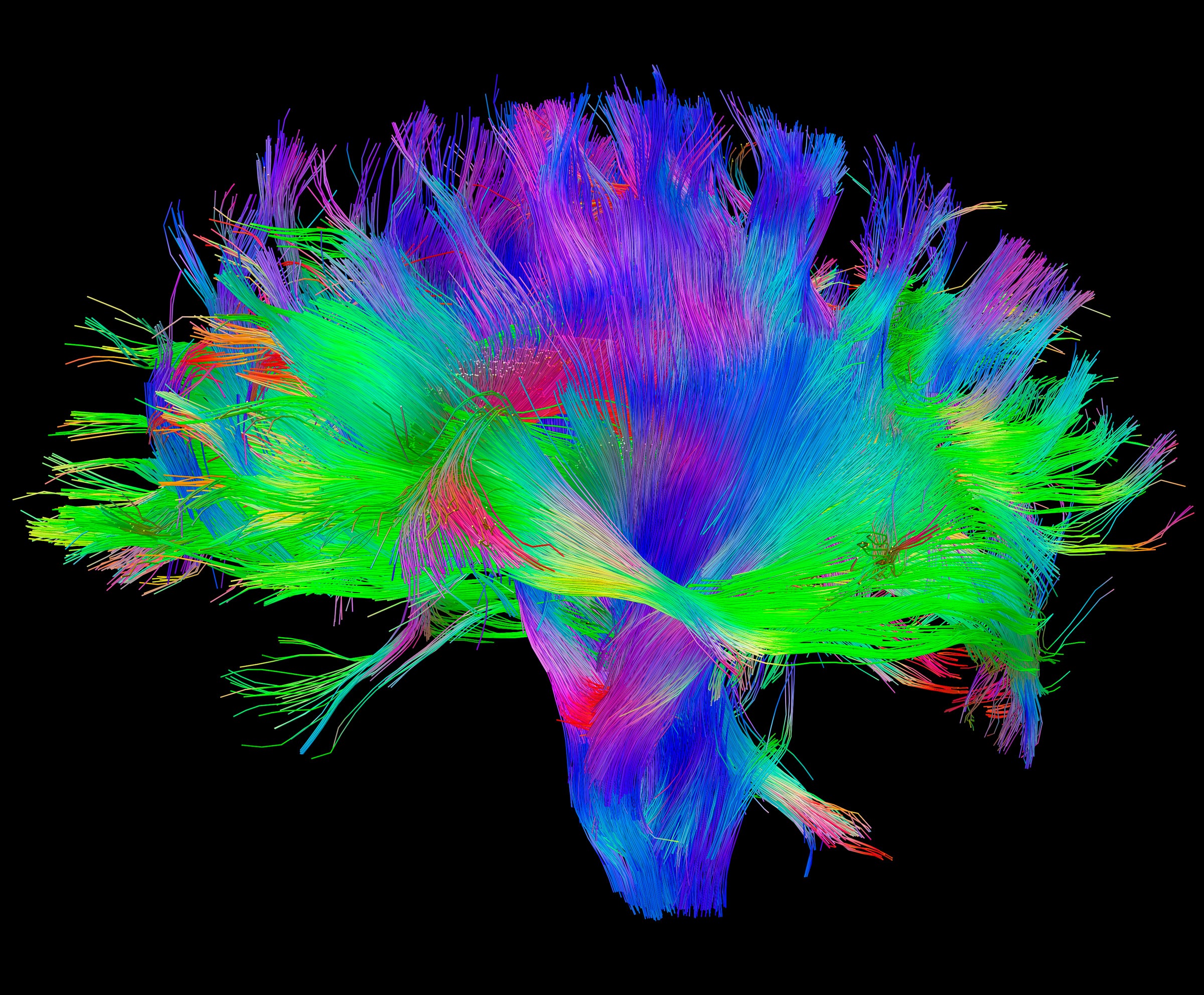

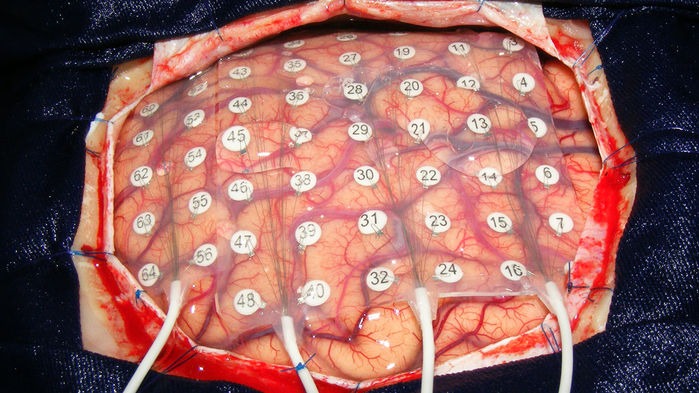

Such encodings and abstractions should not be underestimated; just remember how strongly they affect people. Encodings can prevail over biological programs: for the sake of an idea (a picture in the head that defines a strategy of behavior), a person may refuse to pass on copies of their genes. Or remember the full power of the physical formulas that allowed us to send a representative of the species into space, or the chemical equations that help treat people, and so on. Moreover, we can encode what is already encoded. The simplest example is translation from one language to another: one code is presented in the form of another. The ease of transformation, the main factor in the success of this process, lets us continue it indefinitely. You can translate an expression from Japanese to Russian, from Russian to Spanish, from Spanish to binary, from binary to Morse code, then present it in Braille, then as computer code, and then, as electrical impulses, feed it directly into the brain, which decodes the message. Recently, researchers carried out the reverse process and decoded brain activity into speech.
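The chain of re-encodings described above can be sketched in Python. This is a minimal illustration, assuming a message made only of the letters A to Z; the function names are hypothetical, and the chain (text, then Morse code, then binary, and back) is just one of the many possible routes.

```python
# International Morse code for the letters A-Z.
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}
MORSE_INVERSE = {code: letter for letter, code in MORSE.items()}


def text_to_morse(text):
    """Encode letters as Morse symbols separated by spaces."""
    return " ".join(MORSE[ch] for ch in text.upper())


def morse_to_text(morse):
    """Decode space-separated Morse symbols back into letters."""
    return "".join(MORSE_INVERSE[code] for code in morse.split())


def text_to_bits(text):
    """Encode any string as space-separated 8-bit groups (UTF-8 bytes)."""
    return " ".join(format(byte, "08b") for byte in text.encode("utf-8"))


def bits_to_text(bits):
    """Decode space-separated 8-bit groups back into a string."""
    return bytes(int(group, 2) for group in bits.split()).decode("utf-8")


message = "CODE"
morse = text_to_morse(message)   # one code...
bits = text_to_bits(morse)       # ...presented in the form of another
recovered = morse_to_text(bits_to_text(bits))
print(morse, "|", recovered)
```

Each step is reversible only because sender and receiver share the same tables, which is the point about conventions made above.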

they put electrodes into the picture above and read out all your uniqueness

Between forty and twenty thousand years ago, primitive people began to actively encode information in the form of speech, gesture codes and rock paintings. Modern people, looking at the first cave paintings, try to determine (decode) their meaning; the search for meaning is another distinguishing feature of the species. By reconstructing the context of particular markers or remnants of information, modern anthropologists try to understand the life of primitive people. The quintessence of the coding process is writing. Writing solved the problem of information loss during transmission not only in space but also in time. Hieroglyphs and numerals let us encode calculations, words, objects and so on. However, while writing solves the problem of accuracy more or less effectively (provided, of course, that both participants in the communication use the same conventions for interpreting and decoding the same characters), it failed on time and transmission speed. To solve the speed problem, radio and telecommunication systems were invented. Two ideas can be considered key stages in the development of information transfer. The first is digital communication channels; the second is the development of the mathematical apparatus. Digital channels solved the problem of transmission speed, and the mathematical apparatus solved the problem of accuracy.

Any channel has some level of noise and interference, because of which information arrives distorted (the set of values and characters is corrupted, the context is lost) or does not arrive at all. As technology developed, the amount of noise in digital communication channels decreased, but it was never reduced to zero, and it generally grew with distance. The key problem of information loss in digital channels was identified and solved by Claude Shannon in 1948, in the same work where he introduced the term “bit” (a word he credited to John Tukey). His result reads as follows: “Let the message source have entropy H per second, and let C be the capacity of the channel. If H ≤ C, then there exists a coding of the information such that the source data is transmitted through the channel with an arbitrarily small number of errors.”
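The condition H ≤ C can be checked numerically. The Python sketch below assumes, purely for illustration, a memoryless source with known symbol probabilities and a binary symmetric channel that flips each bit with a fixed probability; the function names and the example numbers are not from Shannon's paper.

```python
import math


def entropy(probabilities):
    """Shannon entropy in bits per symbol of a memoryless source."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def bsc_capacity(flip_probability):
    """Capacity, in bits per use, of a binary symmetric channel."""
    return 1.0 - entropy([flip_probability, 1.0 - flip_probability])


def reliable_transmission_possible(source_probs, symbols_per_second,
                                   flip_probability, uses_per_second):
    """Compare the source rate H with the channel capacity C (bits per second)."""
    h_rate = entropy(source_probs) * symbols_per_second
    c_rate = bsc_capacity(flip_probability) * uses_per_second
    return h_rate <= c_rate


# A skewed source (about 0.47 bits per symbol) fits into a channel that
# flips 10% of its bits (about 0.53 bits per use) at the same symbol rate.
print(reliable_transmission_possible([0.9, 0.1], 1000, 0.1, 1000))
# A fair-coin source (1 bit per symbol) does not fit into the same channel.
print(reliable_transmission_possible([0.5, 0.5], 1000, 0.1, 1000))
```

The check only says whether a suitable code exists; it does not construct one.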

but they didn’t call you to play this game

This formulation of the problem is the reason for the rapid development of information theory. The main problem it solves, and is still trying to solve, is that digital channels, as mentioned above, are noisy. We can put it this way: “no channel is absolutely reliable in transmitting information”. That is, information can be lost, distorted or filled with errors because of environmental influences on the transmission channel. Claude Shannon put forward a number of theses from which it follows that transmitting information without loss or alteration, that is, with absolute accuracy, is possible in most noisy channels. In effect, he freed Homo sapiens from having to spend effort on perfecting the communication channels themselves. Instead, he proposed developing more efficient schemes for encoding and decoding information: representing information as 0s and 1s. The idea can be extended to mathematical abstractions or to coding in language. An example demonstrates its effectiveness. A scientist observes the behavior of quarks at a hadron collider. He enters his data into a table and analyzes it, expresses the regularity as formulas, formulates the main trends as equations, or writes down the factors affecting the behavior of quarks as mathematical models. He needs to pass this data on without loss, and he faces a series of questions. Should he use a digital communication channel, pass the data on through his assistant, or call and recount everything personally? Time is critically short and the information must be transmitted urgently, so e-mail is ruled out. The assistant is an absolutely unreliable communication channel, with a probability of noise close to one.

How accurately can the assistant reproduce the data in the table? If the table has one row and two columns, then pretty accurately. And if there are ten thousand rows and fifty columns? Instead, the scientist conveys a pattern encoded in the form of a formula. If he could transfer the table without loss, were sure that the other participant in the communication would arrive at the same patterns, and time were not a factor, the question would be meaningless. However, a regularity expressed as a formula takes less time to decode and is less susceptible to transformation and noise during transmission. Examples of such encodings will come up repeatedly along the way. A communication channel can be a disk, a person, paper, a satellite dish, a telephone, or a cable through which signals flow. Encoding addresses not only the problem of information loss but also the problem of its volume. With coding, you can reduce the dimension and the amount of information. After reading a book, the probability of retelling it without loss of information tends to zero, unless you have savant syndrome. By encoding (formulating) the main idea of the book as a statement, we present a brief summary of it. The main task of coding is to shorten the original signal without loss of information, so that it can be transmitted over a long distance and across time to another participant in the communication, who can then decode it effectively. A web page, a formula, an equation, a text file, a digital image, digitized music and a video are all striking examples of encodings.
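The difference between sending the whole table and sending the pattern behind it can be sketched as follows. The linear law, its coefficients and the JSON message format are assumptions made up for this illustration; real collider data would not reduce to a straight line.

```python
import json


def build_table(slope, intercept, rows):
    """Generate the table of [x, y] pairs that follows y = slope * x + intercept."""
    return [[x, slope * x + intercept] for x in range(rows)]


# Hypothetical measurements: ten thousand rows that obey one simple law.
table = build_table(3, 2, 10_000)

# Option 1: transmit every row of the table.
full_message = json.dumps(table)

# Option 2: transmit only the pattern (the formula's parameters).
pattern_message = json.dumps({"slope": 3, "intercept": 2, "rows": 10_000})

# The receiver decodes the pattern and regenerates the table without loss.
params = json.loads(pattern_message)
rebuilt = build_table(params["slope"], params["intercept"], params["rows"])

print(len(full_message), len(pattern_message), rebuilt == table)
```

The short message works only because both sides agree on what `slope`, `intercept` and `rows` mean, which again is the shared context.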

The problems of transmission accuracy, distance, time and the encoding process itself have been solved to one degree or another. This has let us create many times more information than a person can perceive, and discover patterns that would otherwise have gone unnoticed for a long time. But other problems remain. Where should such a volume of information be stored, and how? Modern coding and mathematics, as it turned out, do not fully solve the storage problem. There is a limit to how far information can be shortened and encoded, beyond which the values can no longer be decoded back. As mentioned above, a set of values without a context or external environment no longer carries information. It is possible to encode the external environment and the set of values separately, combine them through some kind of index, and later decode from the index; but the original set of values and the description of the environment still have to be stored somewhere. Wonderful ideas have been proposed here, and they are now used everywhere, but they will be covered in another article.
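The limit on shortening can be observed with any general-purpose lossless compressor. The sketch below uses Python's `zlib` only as a convenient stand-in for "an encoder"; the sizes and the fixed random seed are arbitrary choices for the illustration.

```python
import random
import zlib

size = 10_000
rng = random.Random(0)  # fixed seed so the run is repeatable

# Data with an obvious pattern versus data with no pattern at all.
patterned = b"ab" * (size // 2)
noise = bytes(rng.getrandbits(8) for _ in range(size))

patterned_packed = zlib.compress(patterned, 9)
noise_packed = zlib.compress(noise, 9)

# Both encodings are lossless: decoding gives back the original bytes.
assert zlib.decompress(patterned_packed) == patterned
assert zlib.decompress(noise_packed) == noise

# The patterned data shrinks dramatically; the patternless data does not.
print(len(patterned), "->", len(patterned_packed))
print(len(noise), "->", len(noise_packed))
```

Where there is no regularity left to exploit, a lossless code cannot make the message shorter, and anything shorter than that can no longer be decoded back to the original.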

Looking ahead, here is one example: it is not necessary to describe the entire external environment; it is enough to formulate the conditions of its existence as laws and formulas. What is science? Science is the highest degree of mimicry of nature. Scientific achievements are abstract embodiments of real-life phenomena. One solution to the problem of information storage was described in Richard Feynman's charming article “There's Plenty of Room at the Bottom: An Invitation to Enter a New Field of Physics”. This article is often considered the work that laid the foundation for nanotechnology. In it, the physicist draws attention to the amazing properties of biological systems as stores of information. Tiny, miniature systems contain an incredibly large amount of data about behavior, and the way they store and use information inspires nothing but admiration. As for how much information biological systems can hold, the journal Nature estimated that all the information, values, data and patterns of the world could be written into DNA storage weighing no more than one kilogram. That is our whole contribution to the universe: one kilogram of matter. DNA is an extremely efficient structure for storing information, one that lets huge sets of values be stored and used. For anyone interested, here is an article that explains how to write photos of cats, or any other information, into DNA storage, even Scriptonite songs (an extremely stupid use of DNA).
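The basic idea of writing arbitrary data into DNA can be sketched as a mapping from bits to nucleotides. The mapping below (two bits per base, in the order A, C, G, T) is a toy assumption for illustration only; practical DNA storage schemes add error correction and avoid sequences that are hard to synthesize or read.

```python
BASES = "ACGT"  # assumed mapping: 00 -> A, 01 -> C, 10 -> G, 11 -> T


def bytes_to_dna(data):
    """Encode bytes as a strand of nucleotides, four bases per byte."""
    bases = []
    for byte in data:
        for shift in (6, 4, 2, 0):  # take two bits at a time, high bits first
            bases.append(BASES[(byte >> shift) & 0b11])
    return "".join(bases)


def dna_to_bytes(strand):
    """Decode a strand produced by bytes_to_dna back into bytes."""
    if len(strand) % 4 != 0:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for start in range(0, len(strand), 4):
        byte = 0
        for base in strand[start:start + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


strand = bytes_to_dna(b"cat")
print(strand, dna_to_bytes(strand))
```

At two bits per base, one byte takes four nucleotides, which gives a rough sense of why so much data fits into so little matter.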

It encodes that you listen to garbage

Feynman draws attention to how much information is encoded in biological systems and to the fact that, in the course of their existence, they not only encode information but also change the structure of matter on the basis of it. Until then, all the proposed ideas had dealt only with encoding a set of values, information as such; after this article, the question became one of encoding the external environment inside individual molecules: encoding and modifying matter at the atomic level, enclosing information in it, and so on. For example, he suggests making connecting wires only a few atoms in diameter. This, in turn, would increase the number of components in a computer millions of times over, and such an increase would qualitatively improve the computing power of future intelligent machines. Feynman, as the creator of quantum electrodynamics and man,

He emphasizes that physics does not forbid building objects atom by atom. In the article, he compares the activity of humans and machines, noting that any member of our species easily recognizes people's faces, whereas for the computers of the time this task was beyond their computing power. He asks a number of important questions, from “what prevents us from making an ultra-small copy of something?” to “is the difference between computers and the human brain only in the number of constituent elements?”, and he describes the mechanisms and the main problems involved in creating something of atomic size.

Modern estimates put the number of neurons in the brain at about 86 billion. Naturally, no computer of Feynman's time came close to this number of elements; as it turned out, this was not necessary. Nevertheless, Richard Feynman's work began to move the idea of information downward, to where there is plenty of room. The article was published in 1960, a decade after Alan Turing's “Computing Machinery and Intelligence” (1950), one of the most cited works of its kind. Comparing human activity with computers was therefore a trend of the time, and it is reflected in Feynman's article.

Thanks to the physicist's direct contribution, the cost of data storage falls every year, cloud technologies are developing at an insane pace, quantum computers have been built, and we write data into DNA storage and practice genetic engineering, which once again shows that matter can be changed and encoded. The next article will talk about chaos, entropy, quantum computers, spiders, ants, hidden Markov models and category theory. There will be more math, punk rock and DNA.