Terraformer - Infrastructure To Code

I would like to talk about a new CLI tool that I wrote to solve an old problem.

Problem

Terraform has long been the standard in the DevOps / Cloud / IT community. It is a convenient and useful tool for doing infrastructure as code. Terraform has plenty of charm, and just as many pitfalls, sharp knives and rakes to step on.

With Terraform, it’s very convenient to create new things and then manage, change or delete them. But what about those who have a huge infrastructure in the cloud that was not created through Terraform? Rewriting and recreating the whole cloud is expensive and unsafe.

I ran into this problem at two jobs. The simplest example: you want everything to be in the form of Terraform files, but you have 250+ buckets, and writing them all out for Terraform by hand is a lot of work.

There is an issue in Terraform that has been open since 2014 and was closed in 2016 in the hope that import would solve it.

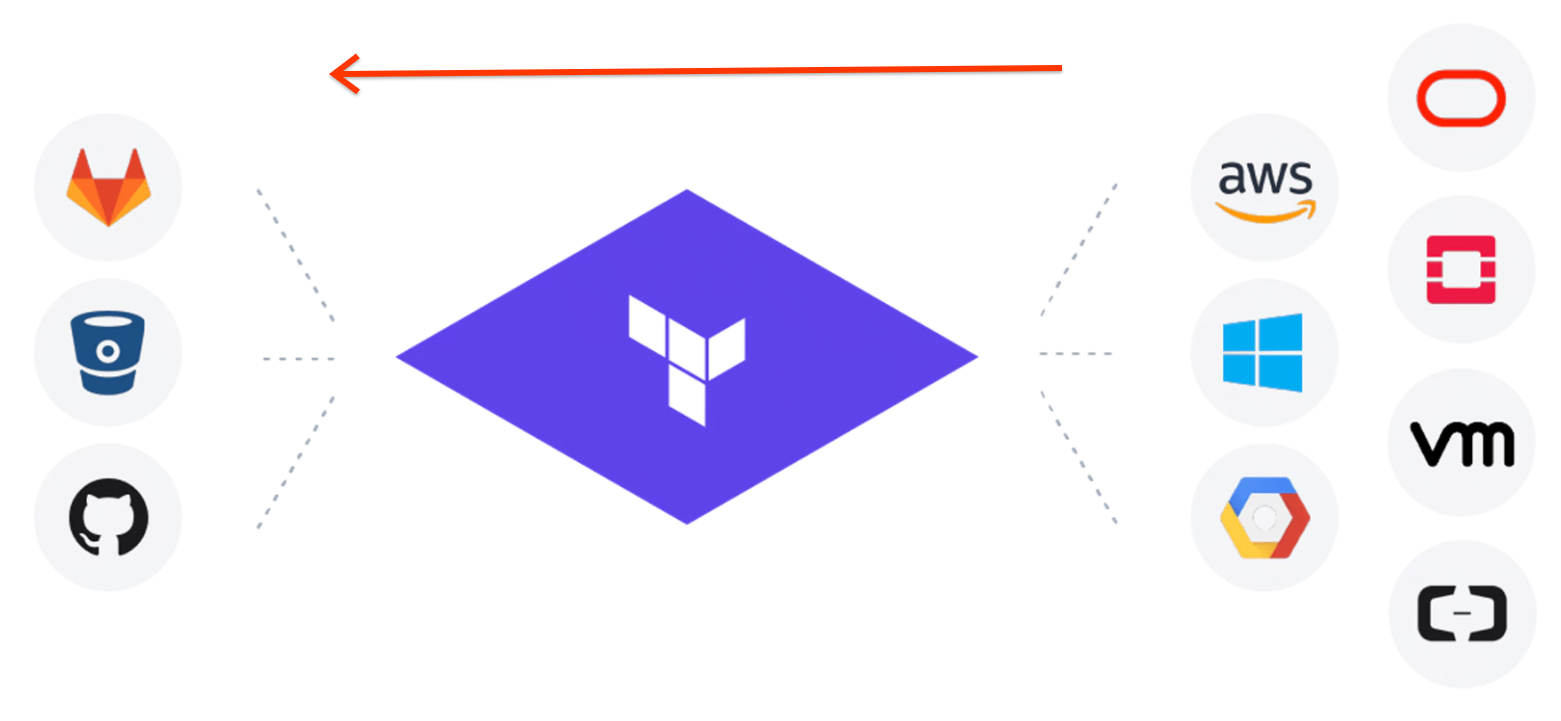

In short, everything is as in the picture, only from right to left.

Warning: the author has lived outside Russia for half his life and rarely writes in Russian. Beware of spelling errors.

Solutions

1. There is an older ready-made solution for AWS: terraforming. When I tried to get my 250+ buckets through it, I realized that everything was bad there. AWS added a lot of new options long ago, terraforming does not know about them, and in general it is built on a Ruby template that looks poor. After two evenings I sent a pull request to add more features there and realized that this kind of solution does not fit at all.

How terraforming works: it takes data from the AWS SDK and generates tf and tfstate through a template.

There are 3 problems:

1. It will always lag behind on updates

2. tf files sometimes come out broken

3. tfstate is generated separately from tf, and the two do not always match

In general, it is difficult to get a result for which `terraform plan` says that there are no changes.
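To make the first problem concrete, here is a minimal sketch in Python of a template-based generator. This is not terraforming's actual code (it uses Ruby templates); the template, the `render_bucket` helper and the sample response dict are all hypothetical and only illustrate the idea.

```python
from string import Template

# Hypothetical template: it only knows about the fields someone wrote into it.
BUCKET_TEMPLATE = Template(
    'resource "aws_s3_bucket" "$name" {\n'
    '  bucket = "$bucket"\n'
    '  acl    = "$acl"\n'
    '}\n'
)


def render_bucket(api_response):
    """Render one bucket from a dict shaped like a (made-up) SDK response."""
    return BUCKET_TEMPLATE.substitute(
        # Resource names may not contain dots, bucket names may.
        name=api_response["Name"].replace(".", "-"),
        bucket=api_response["Name"],
        acl=api_response.get("Acl", "private"),
    )


# The cloud already returns a field the template has never heard of.
response = {"Name": "my.logs", "Acl": "private", "Versioning": {"Enabled": True}}
hcl = render_bucket(response)
print(hcl)
```

The `Versioning` field is silently dropped, so the generated file no longer describes the real bucket until someone updates the template by hand.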

2. `terraform import` is a built-in command in Terraform. How does it work?

You write an empty tf file with the name and type of the resource, then run `terraform import` and pass the ID of the resource. Terraform calls the provider, receives the data and makes a tfstate file.

There are 3 problems:

1. We get only the tfstate file, and tf stays empty: we need to write it by hand or convert it from tfstate

2. It works with only one resource at a time and does not support all resources. And again, what should I do with 250+ buckets?

3. You need to know the resource ID, that is, you need to wrap it in code that gets the list of resources

In general, the result is partial and does not scale well.
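The wrapper code from point 3 could look roughly like the sketch below. It assumes you already have the list of IDs from somewhere; the `import_plan` helper and the `tf-` naming scheme are my own illustration, and only the `terraform import ADDRESS ID` command form comes from Terraform itself.

```python
import shlex


def import_plan(resource_type, ids):
    """Build empty resource stubs and one `terraform import` command per ID."""
    stubs, commands = [], []
    for resource_id in ids:
        # Hypothetical naming scheme: keep letters and digits, replace the rest.
        name = "tf-" + "".join(c if c.isalnum() else "-" for c in resource_id)
        stubs.append(f'resource "{resource_type}" "{name}" {{}}\n')
        address = f"{resource_type}.{name}"
        commands.append(
            f"terraform import {shlex.quote(address)} {shlex.quote(resource_id)}"
        )
    return "".join(stubs), commands


stubs, commands = import_plan("aws_s3_bucket", ["logs.example", "backups"])
print(stubs)
print("\n".join(commands))
```

Even with such a wrapper you still run one import per resource and end up with a tfstate but an empty tf, which is exactly the scaling problem described above.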

My solution

Requirements:

1. Ability to create tf and tfstate files from existing resources. For example, download all buckets / security groups / load balancers so that `terraform plan` reports that there are no changes

2. Support for two clouds: GCP and AWS

3. A general solution that is easy to update, without spending 3 days on each resource

4. Make it open source, because everyone has this problem

Language: Go, because I love it, and it has a library for creating HCL files that is used in Terraform, plus there is a lot of code in Terraform that can be reused.

The path

The first attempt

I started with a simple option: query the cloud through the SDK for the desired resource and convert the result into fields for Terraform. The attempt died right away on the security group, because I did not like spending 1.5 days converting the security group alone (and there are a lot of resources). It takes too long, and the fields can later be changed or added.

The second attempt

Based on the ideas described here. Just take tfstate and convert it to tf. All the data is there and the fields are the same. But how do you get a full tfstate for many resources? This is where the `terraform refresh` command came to the rescue. Terraform takes all the resources in tfstate, pulls their data by ID and writes everything to tfstate. That is, create an empty tfstate with only names and IDs, run `terraform refresh`, and you get the full tfstate. Hurrah!
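A skeleton tfstate with only names and IDs can be sketched like this. The layout is my recollection of the legacy version 3 state format from that era, so treat every field name as an assumption and compare it with a state file produced by your own Terraform version. The `skeleton_state` helper and the sample bucket are hypothetical.

```python
import json
import uuid


def skeleton_state(resources, provider="provider.aws"):
    """Build a state dict that holds only type, name and ID per resource.

    `resources` is an iterable of (type, name, id) tuples.
    """
    entries = {}
    for rtype, name, rid in resources:
        entries[f"{rtype}.{name}"] = {
            "type": rtype,
            "depends_on": [],
            # Only the ID is known; a refresh is expected to fill in the rest.
            "primary": {
                "id": rid,
                "attributes": {"id": rid},
                "meta": {},
                "tainted": False,
            },
            "deposed": [],
            "provider": provider,
        }
    return {
        "version": 3,
        "serial": 1,
        "lineage": str(uuid.uuid4()),
        "modules": [
            {"path": ["root"], "outputs": {}, "resources": entries, "depends_on": []}
        ],
    }


state = skeleton_state([("aws_s3_bucket", "logs", "my-logs")])
print(json.dumps(state, indent=2))
```

Written to `terraform.tfstate` next to a provider configuration, a file like this is what `terraform refresh` would then be pointed at.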

Now let's get down to business.

Here is the important part of the tfstate, the attributes:

"attributes": {

"id": "default/backend-logging-load-deployment",

"metadata.#": "1",

"metadata.0.annotations.%": "0",

"metadata.0.generate_name": "",

"metadata.0.generation": "24",

"metadata.0.labels.%": "1",

"metadata.0.labels.app": "backend-logging",

"metadata.0.name": "backend-logging-load-deployment",

"metadata.0.namespace": "default",

"metadata.0.resource_version": "109317427",

"metadata.0.self_link": "/apis/apps/v1/namespaces/default/deployments/backend-logging-load-deployment",

"metadata.0.uid": "300ecda1-4138-11e9-9d5d-42010a8400b5",

"spec.#": "1",

"spec.0.min_ready_seconds": "0",

"spec.0.paused": "false",

"spec.0.progress_deadline_seconds": "600",

"spec.0.replicas": "1",

"spec.0.revision_history_limit": "10",

"spec.0.selector.#": "1",

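Here we have:

1. id - a string

2. metadata - an array of size 1 (the `#` key holds the element count) containing an object whose fields are listed below it

3. spec - also an array of size 1 containing an object; maps such as labels use a `%` key for their size, followed by key, value pairs

In short, a fun format, and everything can be nested several levels deep: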

"spec.#": "1",

"spec.0.min_ready_seconds": "0",

"spec.0.paused": "false",

"spec.0.progress_deadline_seconds": "600",

"spec.0.replicas": "1",

"spec.0.revision_history_limit": "10",

"spec.0.selector.#": "1",

"spec.0.selector.0.match_expressions.#": "0",

"spec.0.selector.0.match_labels.%": "1",

"spec.0.selector.0.match_labels.app": "backend-logging-load",

"spec.0.strategy.#": "0",

"spec.0.template.#": "1",

"spec.0.template.0.metadata.#": "1",

"spec.0.template.0.metadata.0.annotations.%": "0",

"spec.0.template.0.metadata.0.generate_name": "",

"spec.0.template.0.metadata.0.generation": "0",

"spec.0.template.0.metadata.0.labels.%": "1",

"spec.0.template.0.metadata.0.labels.app": "backend-logging-load",

"spec.0.template.0.metadata.0.name": "",

"spec.0.template.0.metadata.0.namespace": "",

"spec.0.template.0.metadata.0.resource_version": "",

"spec.0.template.0.metadata.0.self_link": "",

"spec.0.template.0.metadata.0.uid": "",

"spec.0.template.0.spec.#": "1",

"spec.0.template.0.spec.0.active_deadline_seconds": "0",

"spec.0.template.0.spec.0.container.#": "1",

"spec.0.template.0.spec.0.container.0.args.#": "3",

In general, if you want a programming task for an interview, just ask the candidate to write a parser for this format :)

After many attempts to write a bug-free parser, I found part of one in the Terraform code, and the most important part at that. After that everything seemed to work fine.
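For the curious, here is a minimal sketch of such a parser in Python. It is not Terraformer's or Terraform's code, just an illustration that expands keys by prefix. It assumes `#` marks a list size and `%` a map size, keeps all values as strings, and does not handle map keys that themselves contain dots.

```python
def expand(flat, key):
    """Expand one key of a flattened attribute map into a nested value."""
    if key in flat:
        return flat[key]  # plain value, kept as a string
    if key + ".#" in flat:
        return _expand_list(flat, key)
    prefix = key + "."
    if any(k.startswith(prefix) for k in flat):
        return _expand_map(flat, prefix)
    return None


def _expand_list(flat, key):
    prefix = key + "."
    indexes = set()
    for k in flat:
        if k.startswith(prefix):
            head = k[len(prefix):].split(".", 1)[0]
            if head != "#":  # skip the element counter
                indexes.add(head)
    return [expand(flat, prefix + i) for i in sorted(indexes, key=int)]


def _expand_map(flat, prefix):
    result = {}
    for k in flat:
        if not k.startswith(prefix):
            continue
        head = k[len(prefix):].split(".", 1)[0]
        if head == "%" or head in result:  # skip the size counter and repeats
            continue
        result[head] = expand(flat, prefix + head)
    return result


def expand_all(flat):
    """Expand the whole attribute map, starting from an empty prefix."""
    return _expand_map(flat, "")


attrs = {
    "id": "default/backend-logging-load-deployment",
    "metadata.#": "1",
    "metadata.0.annotations.%": "0",
    "metadata.0.labels.%": "1",
    "metadata.0.labels.app": "backend-logging",
    "metadata.0.name": "backend-logging-load-deployment",
    "spec.#": "1",
    "spec.0.replicas": "1",
    "spec.0.strategy.#": "0",
}
nested = expand_all(attrs)
print(nested)
```

The nested result is what you actually need in order to print blocks and attributes in a tf file.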

The third attempt

Terraform providers are binaries that contain the code for all resources and the logic for working with the cloud API. Each cloud has its own provider, and Terraform itself only calls them through its RPC protocol between two processes.

This time I decided to access Terraform providers directly through RPC calls. It turned out beautifully and made it possible to switch to newer Terraform providers and get new features without changing the code. It also turned out that not all fields in tfstate should be in tf, but how do you find out which ones? Just ask the provider.
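The filtering idea can be sketched as follows. The RPC call itself is not shown: the `schema` dict is a hypothetical stand-in for what a provider reports about each field, using the usual Terraform notions of required, optional and computed attributes. The `configurable` helper is my own name.

```python
def configurable(attributes, schema):
    """Keep only the attributes that may be written into a tf file."""
    kept = {}
    for key, value in attributes.items():
        info = schema.get(key)
        if info is None:
            continue  # the provider does not know this field
        read_only = info.get("computed") and not (
            info.get("optional") or info.get("required")
        )
        if read_only:
            continue  # filled in by the cloud, cannot be set in config
        kept[key] = value
    return kept


# Hypothetical schema for a few metadata fields.
schema = {
    "name": {"optional": True, "computed": True},
    "namespace": {"optional": True},
    "uid": {"computed": True},
    "resource_version": {"computed": True},
}
state_attrs = {
    "name": "backend-logging-load-deployment",
    "namespace": "default",
    "uid": "300ecda1-4138-11e9-9d5d-42010a8400b5",
    "resource_version": "109317427",
}
tf_attrs = configurable(state_attrs, schema)
print(tf_attrs)
```

Because the decision comes from the provider's own schema, nothing has to be maintained by hand when a provider adds or changes fields.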

In the end, we got a useful CLI tool that has a common infrastructure for all Terraform providers, so a new one is easy to add. Adding resources also takes little code. Plus there are all sorts of goodies, such as connections between resources. Of course, there were many different problems, and they cannot all be described here.

I named the little beast Terraformer.

The finale

Using Terraformer, we generated 500-700 thousand lines of tf + tfstate code from two clouds. We were able to take legacy things and start touching them only through Terraform, in the best traditions of infrastructure as code. It's just magic when you take a huge cloud and, with one command, get it in the form of working Terraform files. And then it's grep / replace / git and so on.

I cleaned it up, put it in order and got permission. It was released on GitHub for everyone on Thursday (05/02/19): github.com/GoogleCloudPlatform/terraformer

It has already received 600 stars and 2 pull requests adding support for OpenStack and Kubernetes. The feedback is good. In general, people find the project useful.

I recommend it to everyone who wants to start working with Terraform without rewriting everything for it.

I would be happy to receive pull requests, issues and stars.

Demo

Updates: minimal OpenStack support has been added and Kubernetes support is almost ready. Thanks to everyone for the PRs.