Cisco HyperFlex vs. competitors: testing performance

We continue our series introducing the Cisco HyperFlex hyperconverged system.

In April 2019, Cisco is once again holding a series of demonstrations of the new Cisco HyperFlex hyperconverged solution in regions of Russia and Kazakhstan. You can sign up for a demonstration through the feedback form at the link. Join us!

Earlier, we published an article on the stress tests that the independent ESG Lab performed in 2017. In 2018, the capabilities of Cisco HyperFlex (HX version 3.0) improved significantly, and competing solutions have continued to improve as well. That is why we are publishing a more recent version of ESG's comparative load tests.

In the summer of 2018, ESG Lab repeated its comparison of Cisco HyperFlex with competitors. Given the current trend toward software-defined solutions, vendors of such platforms were also added to the comparative analysis.

Test configurations

During testing, HyperFlex was compared with two software-only hyperconverged systems that are installed on standard x86 servers, as well as with one combined hardware and software solution. Testing was carried out with HCIBench, a standard benchmarking tool for hyperconverged systems that uses the Oracle Vdbench tool and automates the testing process. In particular, HCIBench automatically creates virtual machines, coordinates the load between them, and generates clear, easy-to-read reports.

Each cluster ran 140 virtual machines (35 per cluster node). Each virtual machine had 4 vCPUs, 4 GB of RAM, a 16 GB local disk, and an additional 40 GB disk.
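To get a sense of scale, the per-VM sizing above can be multiplied out to per-node and per-cluster totals. The sketch below is only arithmetic on the figures quoted in this article; the names `VmSpec` and `footprint` are illustrative and are not part of HCIBench.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmSpec:
    """Per-VM sizing as stated in the article."""
    vcpus: int = 4
    ram_gb: int = 4
    disks_gb: tuple = (16, 40)  # 16 GB local disk plus the additional 40 GB disk


def footprint(spec: VmSpec, vm_count: int) -> dict:
    """Total resources requested by vm_count identical VMs."""
    return {
        "vcpus": spec.vcpus * vm_count,
        "ram_gb": spec.ram_gb * vm_count,
        "disk_gb": sum(spec.disks_gb) * vm_count,
    }


spec = VmSpec()
per_node = footprint(spec, 35)      # 35 VMs on each node
per_cluster = footprint(spec, 140)  # 140 VMs on the four-node cluster

print("per node:   ", per_node)
print("per cluster:", per_cluster)
```

These are the amounts requested by the VMs, not the usable capacity of any of the tested clusters.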

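The following cluster configurations took part in the testing:

- a four-node Cisco HyperFlex 220C cluster with 1 x 400 GB SSD for cache and 6 x 1.2 TB SAS HDD for data;

- a four-node cluster from competitor Vendor A with 2 x 400 GB SSD for cache and 4 x 1 TB SATA HDD for data;

- a four-node cluster from competitor Vendor B with 2 x 400 GB SSD for cache and 12 x 1.2 TB SAS HDD for data;

- a four-node cluster from competitor Vendor C with 4 x 480 GB SSD for cache and 12 x 900 GB SAS HDD for data.

The processors and RAM were identical across all the solutions.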

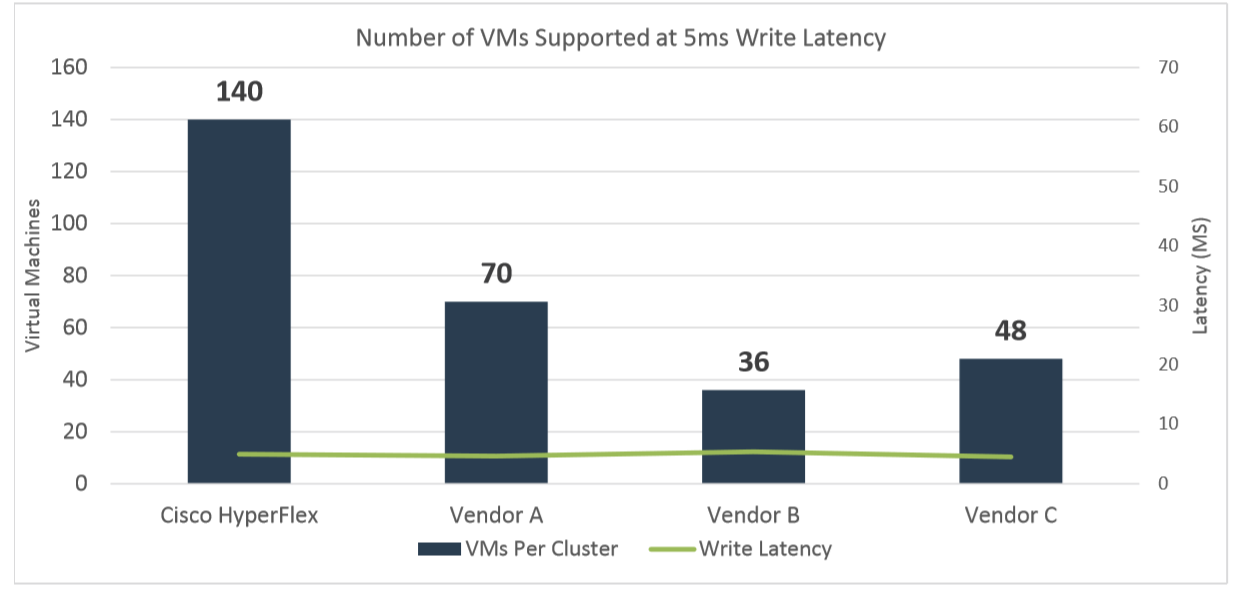

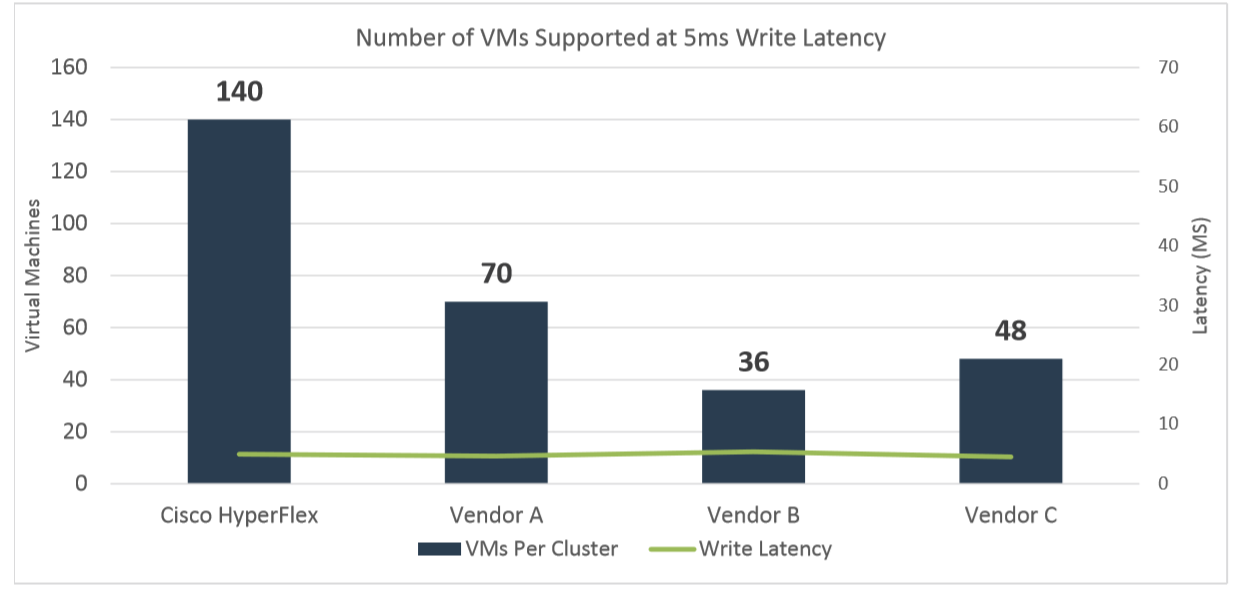

Test for the number of virtual machines

Testing began with a workload designed to emulate a standard OLTP test: 70% reads and 30% writes, 100% random, with a target of 800 IOPS per virtual machine (VM). The test ran on 140 VMs in each cluster for three to four hours. The goal was to keep write latency at 5 milliseconds or less on the largest possible number of VMs.
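The per-VM target translates into a sizeable aggregate load on each cluster. The following minimal sketch multiplies out the figures from the paragraph above; the function name `aggregate_targets` is hypothetical, and the calculation assumes every VM actually reaches its 800 IOPS target.

```python
VM_COUNT = 140            # VMs per cluster
TARGET_IOPS_PER_VM = 800  # target load per VM
READ_PCT = 70             # percent reads; the remaining 30 percent are writes


def aggregate_targets(vm_count: int, iops_per_vm: int, read_pct: int):
    """Return (total, read, write) target IOPS for the whole cluster."""
    total = vm_count * iops_per_vm
    reads = total * read_pct // 100
    writes = total - reads
    return total, reads, writes


total, reads, writes = aggregate_targets(VM_COUNT, TARGET_IOPS_PER_VM, READ_PCT)
print(f"total: {total} IOPS, reads: {reads} IOPS, writes: {writes} IOPS")
```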

As the graph below shows, HyperFlex was the only platform that completed this test with the initial 140 VMs and with latency below 5 ms (4.95 ms). For each of the other clusters, the test was rerun over several iterations, adjusting the number of VMs experimentally until latency came down to the 5 ms target.

Vendor A successfully handled 70 VMs with an average response time of 4.65 ms.

Vendor B reached a response time of 5.37 ms with only 36 VMs.

Vendor C sustained 48 virtual machines with a response time of 5.02 ms.

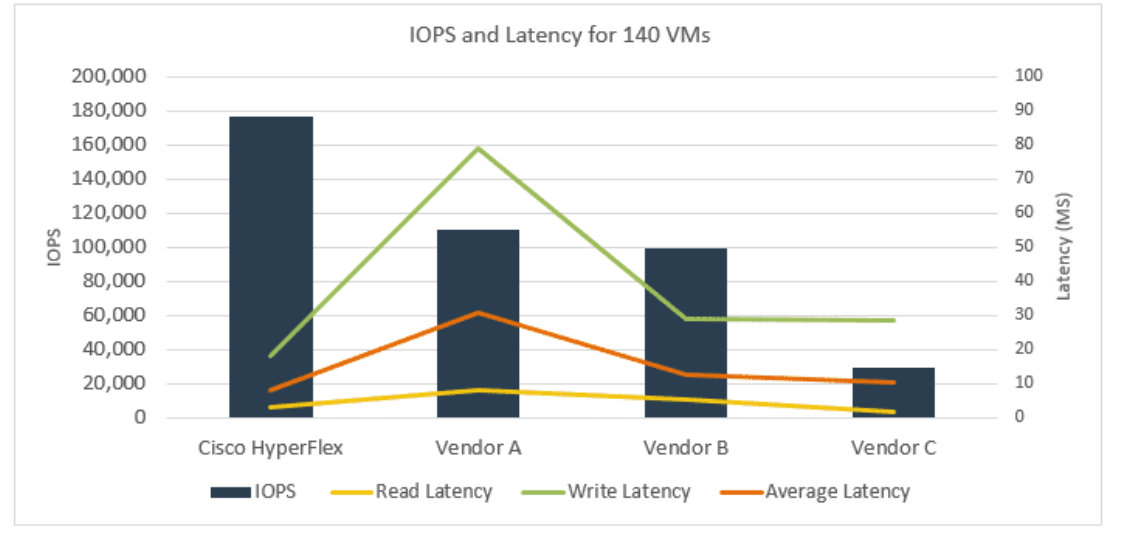

SQL Server load emulation

Next, ESG Lab emulated a SQL Server workload. The test used a range of block sizes and read/write ratios and was again run on 140 virtual machines.

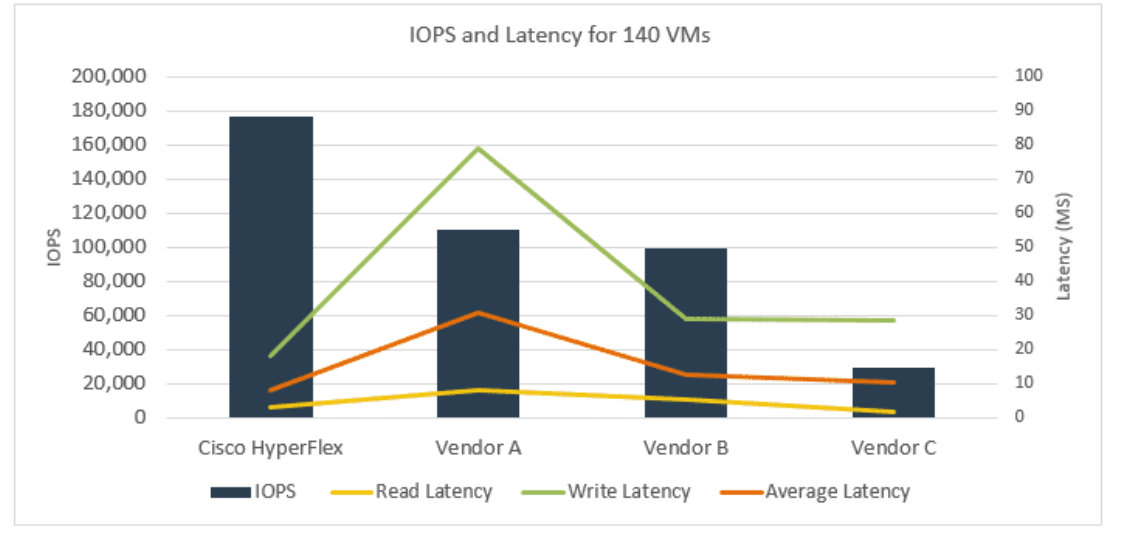

As shown in the figure below, the Cisco HyperFlex cluster delivered almost twice the IOPS of vendors A and B and more than five times the IOPS of vendor C. The average response time of Cisco HyperFlex was 8.2 ms. For comparison, the average response time was 30.6 ms for vendor A, 12.8 ms for vendor B, and 10.33 ms for vendor C.

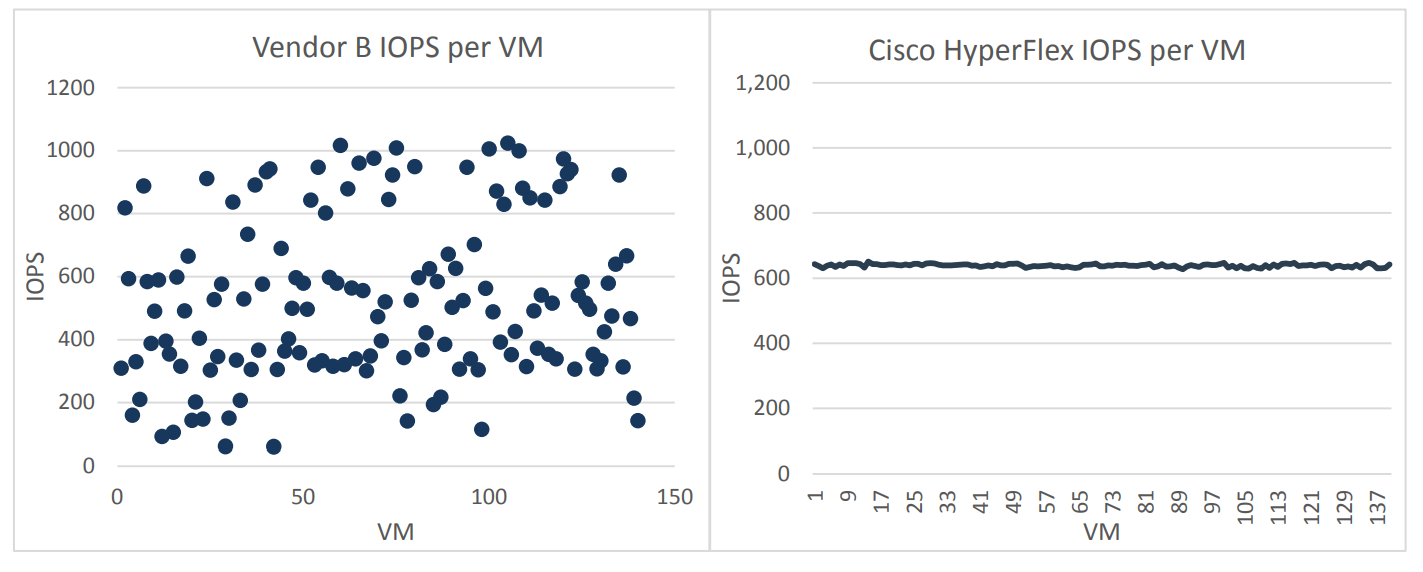

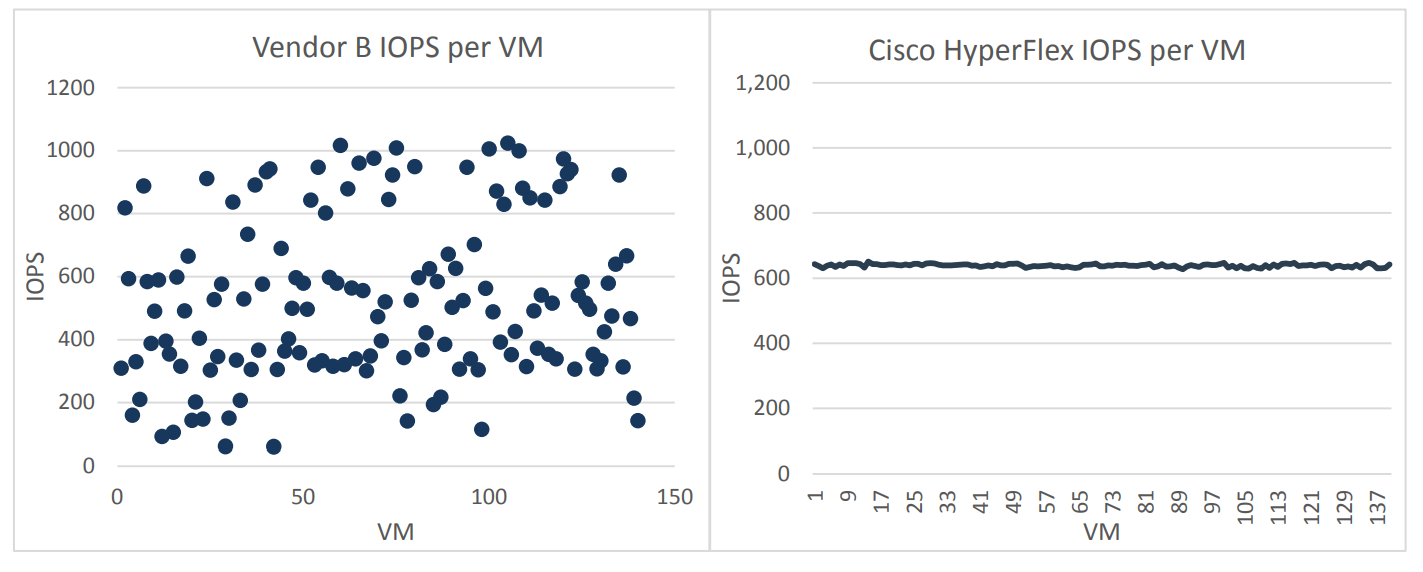
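To compare the SQL Server response times at a glance, each one can be expressed relative to HyperFlex. This is a small sketch based only on the average latencies quoted above; the helper name `relative_latency` is illustrative.

```python
# Average response times from the SQL Server emulation test, in milliseconds.
AVG_LATENCY_MS = {
    "Cisco HyperFlex": 8.2,
    "Vendor A": 30.6,
    "Vendor B": 12.8,
    "Vendor C": 10.33,
}


def relative_latency(latencies: dict, baseline: str) -> dict:
    """Express every latency as a multiple of the baseline's latency."""
    base = latencies[baseline]
    return {name: value / base for name, value in latencies.items()}


ratios = relative_latency(AVG_LATENCY_MS, "Cisco HyperFlex")
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x the HyperFlex response time")
```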

One interesting observation held across all the tests: vendor B showed significant variation in average IOPS between VMs. The load was distributed extremely unevenly: some VMs averaged more than 1,000 IOPS, while others averaged only 64 IOPS. Cisco HyperFlex was much more stable in this respect: all 140 VMs received an average of 600 IOPS from the storage subsystem, so the load was distributed very evenly between the virtual machines.

It is important to note that this uneven distribution of IOPS across vendor B's virtual machines was observed in every test iteration.

In production, this behavior can be a serious problem for administrators: individual virtual machines effectively “hang” at random, and there is practically no way to control the process. The only way to balance the load with vendor B's solution, and not a very effective one, is to use some form of QoS or balancing.
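One way to put a number on this kind of unevenness is the ratio between the busiest and the slowest VM, together with the coefficient of variation. The sketch below is illustrative only: the article quotes just the two extremes for vendor B (about 1,000 and 64 IOPS), so the vendor B list contains only those two values rather than real per-VM data, and the function name `spread` is hypothetical.

```python
from statistics import mean, pstdev


def spread(per_vm_iops):
    """Return (max/min ratio, coefficient of variation) for per-VM average IOPS."""
    avg = mean(per_vm_iops)
    return max(per_vm_iops) / min(per_vm_iops), pstdev(per_vm_iops) / avg


# Only the extremes quoted in the article; the full per-VM data is not published here.
vendor_b_extremes = [1000, 64]
# HyperFlex: each of the 140 VMs is reported at an average of about 600 IOPS.
hyperflex = [600] * 140

b_ratio, b_cv = spread(vendor_b_extremes)
hx_ratio, hx_cv = spread(hyperflex)
print(f"Vendor B:  max/min = {b_ratio:.1f}, CV = {b_cv:.2f}")
print(f"HyperFlex: max/min = {hx_ratio:.1f}, CV = {hx_cv:.2f}")
```

With real per-VM results exported from a benchmark report, the same function would give a fuller picture than the two extremes alone.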

Conclusion

What does it mean that a four-node Cisco HyperFlex cluster ran 140 virtual machines (35 per physical node), while the other solutions ran 70 or fewer? For a business, it means that supporting the same number of applications on HyperFlex takes at most half as many nodes as on competitors' solutions, so the final system will be much cheaper. Add the level of automation that the HX Data Platform provides for all network, server, and storage maintenance operations, and it becomes clear why Cisco HyperFlex solutions are gaining popularity in the market so rapidly.
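The node-count argument can be made concrete with the VM counts from the OLTP test. The sketch below rests on a strong assumption that is not stated in the ESG results: that the number of VMs a platform sustains at the latency target scales linearly with the number of nodes. The function name `nodes_needed` is hypothetical.

```python
NODES_PER_CLUSTER = 4  # every tested cluster had four nodes

# VMs each four-node cluster sustained at roughly the 5 ms latency target.
VMS_AT_TARGET_LATENCY = {
    "Cisco HyperFlex": 140,
    "Vendor A": 70,
    "Vendor B": 36,
    "Vendor C": 48,
}


def nodes_needed(target_vms: int, vms_per_cluster: int,
                 nodes_per_cluster: int = NODES_PER_CLUSTER) -> int:
    """Whole nodes required for target_vms, assuming linear scaling (an assumption)."""
    # Integer ceiling of target_vms / (vms_per_cluster / nodes_per_cluster).
    return (target_vms * nodes_per_cluster + vms_per_cluster - 1) // vms_per_cluster


estimate = {name: nodes_needed(140, vms) for name, vms in VMS_AT_TARGET_LATENCY.items()}
for name, nodes in estimate.items():
    print(f"{name}: about {nodes} nodes for 140 VMs")
```

Real clusters rarely scale perfectly linearly, so these figures are a rough estimate rather than a sizing recommendation.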

Overall, ESG confirmed that hybrid configurations of Cisco HyperFlex HX 3.0 deliver higher and more stable performance than comparable solutions.

HyperFlex hybrid clusters outperformed their competitors in both IOPS and latency. Equally important, HyperFlex achieved this performance with the load distributed very evenly across the entire storage system.

As a reminder, you can see the Cisco HyperFlex solution and its capabilities for yourself right now. The system is available for demonstration to everyone.
