Automating UI Testing on PhoneGap: The Case of a Payment Application

I don't know about you, but I feel confident in the water. Recently, though, I was taught to swim all over again, the old Spartan way: I was thrown into the water and told to survive.

But enough metaphors.

Given: a PhoneGap application with an iframe that loads a third-party site; 1.5 years of experience as a tester; 0 years of experience as a programmer.

Objective: to find a way to automate testing of the application's main business case, because manual testing is slow and expensive.

Solution: a lot of hacks and regular requests to programmers for help.

Still, the tests work and I learned a lot. The moral of this retrospective is not "don't repeat my mistakes" but that I worked through a strange, atypical task: in my own way, but I worked through it.

About the project

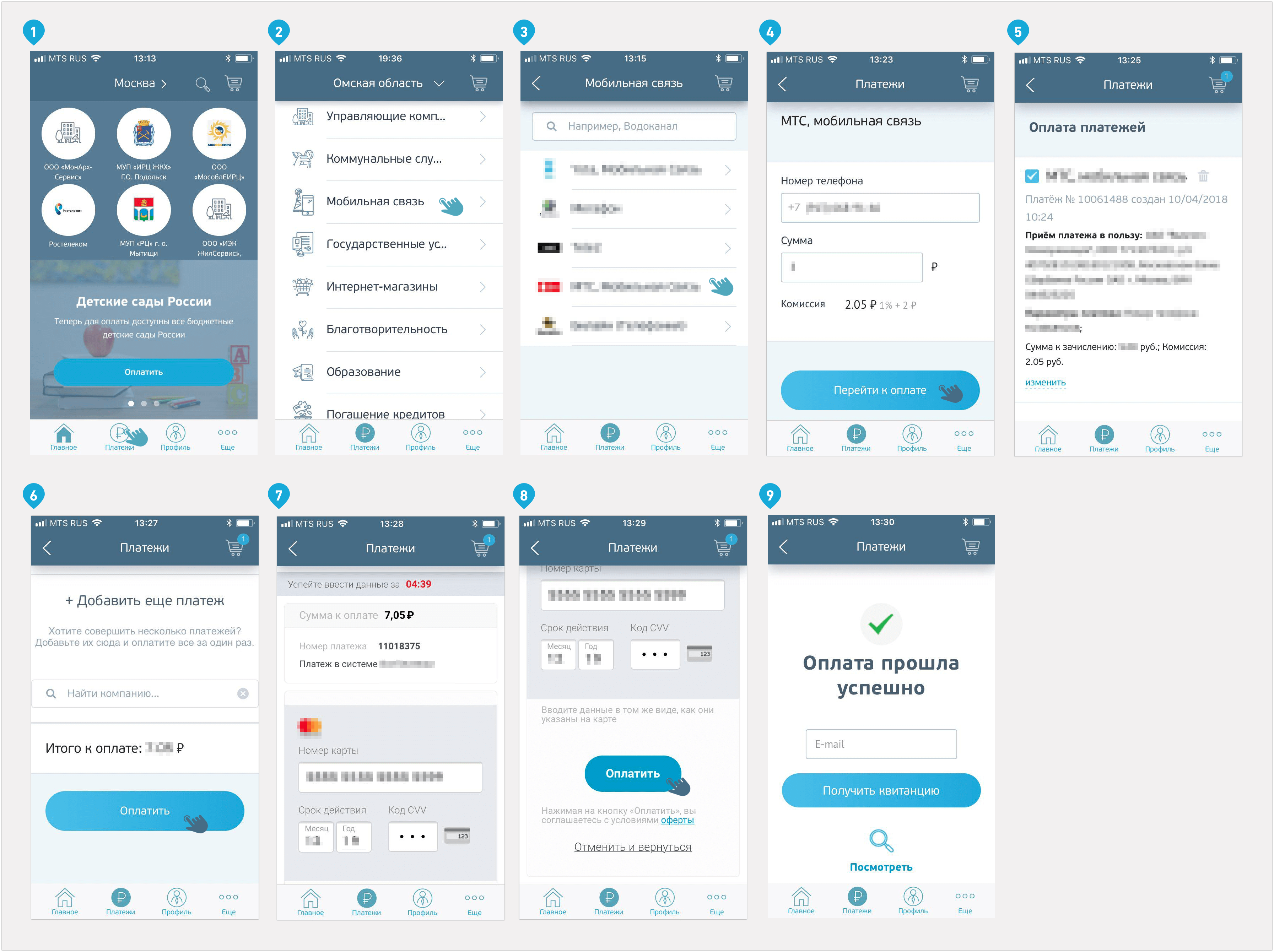

I will begin by introducing the project. It is a web service called VsePayment, through which people pay utility and telecom bills and traffic fines, make loan payments and pay for purchases in some online stores. The service works with 30 thousand service operators; it is a large product with a complex history, an impressive number of integrations and its own team.

Live Typing, the company where I work as a QA specialist, developed a cross-platform mobile application for the service. The client needed an MVP to test a number of hypotheses, and going cross-platform was the only way to get one quickly and inexpensively.

Why was there a task to automate UI tests?

As I said above, the service lives a complex and interesting internal life. The web service's development team regularly changes how it works and deploys those changes to the server twice a week. Our team takes no part in anything that happens on the back end; we only develop the application. We do not know what we will see on the application screen after the next release or how the changes will affect the application.

For an MVP, the top priority is to keep one or more key features of the product working. In a payment service, those features include making a payment to a service provider, using the basket and displaying the list of service providers. Payment matters more than the others.

Testing is needed so that changes made by the site's developers do not block the application's key business cases. Smoke testing is enough: did it start? Did nothing catch fire? Excellent. But at this release frequency, testing the application manually would be too costly.

A hypothesis came to mind: what if we automated this process? We set aside time and budget to automate a number of manual tests, so that testing would take two hours a week instead of six to eight.

Can everything be automated?

It is important to say that changes to the site affect not only the UI but also the UX. We agreed that the client's analyst would tell us in advance about planned updates to the site. They vary, from moving a button to introducing a new section. Testing the latter cannot be entrusted to automation: it is a complex UX scenario that has to be explored and checked by hand, the old way.

How we imagined the implementation

We decided to test the main functions through the application interface, using the Appium framework. Appium Inspector records actions performed in the interface and converts them into a script; the tester runs the script and sees the test results. That is how we pictured the work at the start.

Let me return briefly to the metaphor I opened with. To automate tests you have to be able to program, and that, as they say, is where my expertise ended. My introduction to this world took about four hours: we deployed and set up the environment, the technical director assured me that everything was simple, showed me on one test how to write the rest, and after that did not interfere with my work on the project. He threw me into the water with a deflated lifebuoy and waved goodbye.

I really had no idea what would happen.

I started by

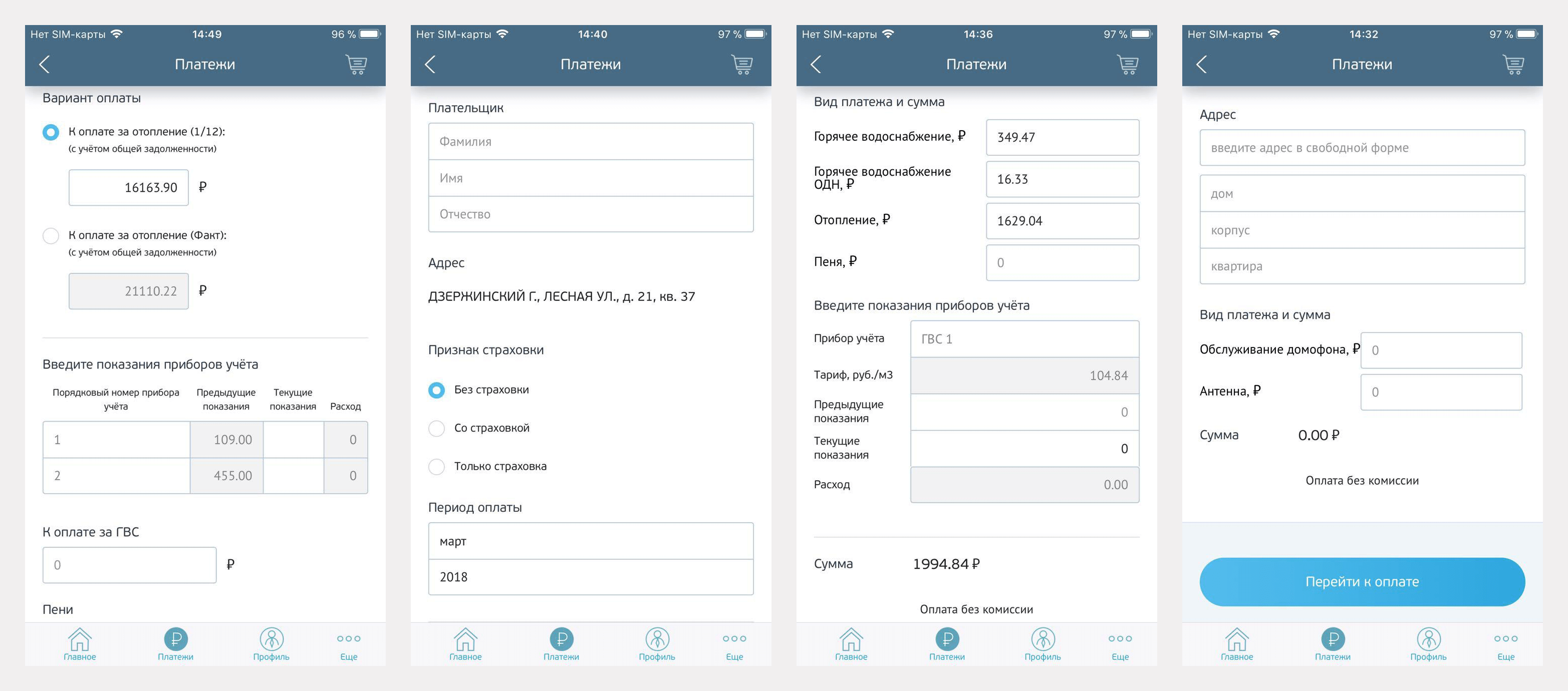

compiling test cases for checking payment: Test cases were also drawn up for the basket and the list of suppliers. Further it was planned to switch to automation of other, more complex scenarios. But we abandoned such a plan, because different types of service providers have many types of fill-out forms. For each of the forms would have to do an automated test separately, and it is long and expensive. The client tests them himself.

Another expectation was this: since it is an autotest, the test case can be as complicated as you like. After all, the program will test everything itself, and the tester only needs to specify the sequence of actions. I came up with huge, monstrous cases with several extra checks inserted into the payment cycle, for example: what happens if, in the middle of a payment, you go from the basket to the list of service providers, add a new one, go back, delete it and continue paying.

When I wrote such a test, I saw how huge it was and how unstable it was. It became clear that the test cases had to be simplified. A short test is easier to maintain and extend. Instead of tests with several checks, I started writing tests with one or two.

As for the tooling, I pictured working with Appium like this: I perform a sequence of actions in the recorder, it records them, the framework assembles a script from the recording, I run the resulting code, and it repeats my actions in the application.

It sounded good, but only in theory.

What the process turned out to be in reality

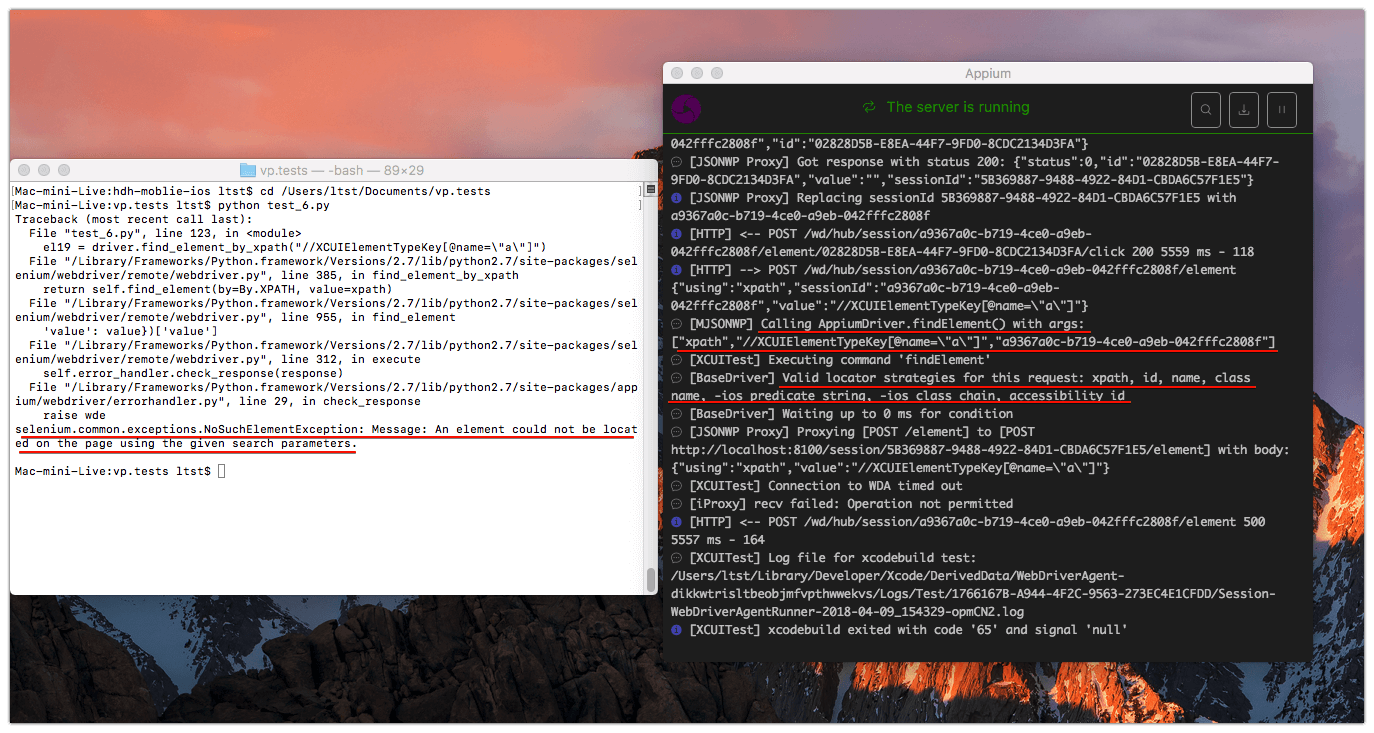

And here are the problems I encountered:

- As soon as I tried to run the script produced by the recorder, I realized that programming could not be avoided. The code the recorder generated would not work as it was and needed rework. For example, when an input form is tested, the simplest thing would be to fill in the data (phone number, personal account, first and last name, and so on) directly. The recorder, however, records each keyboard key as a separate element, so the script enters the characters one at a time, switching the keyboard where necessary; in short, it blindly copies the recorded actions. Here and later, I solved my problems either with the help of a programmer who suggested what to do, or with five hours of googling. That is the main drawback in this whole story.

- At some point I realized that the script needed conditional logic. Let me explain with an example. The name of the service provider is written in Cyrillic, so to enter it you have to bring up the keyboard on the device, switch the language from Latin to Cyrillic and type the name.

The first time, the script performs these actions and gets what it needs. But on the next run it switches the keyboard from Cyrillic back to Latin, cannot find the Russian letter on the keyboard and crashes. So conditions had to be written into the script in code, for example "try to find the Russian letter; if you can't, switch the keyboard". It sounds simple to someone who has been programming for a long time, but it was not simple for me then.

- The script does not know whether the element it addresses has loaded, opened or become visible; it simply presses the buttons the recorder recorded. So delays, scrolling or other extra actions have to be added to the script in places, and this again requires programming experience.
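The two conditions described here ("try to find the letter, and if you can't, switch the keyboard" and "wait until the element has actually loaded") can be sketched roughly as follows. This is a minimal illustration in Python, not the code from the project: `ElementNotFound`, `find_key`, `switch_layout` and `tap` are hypothetical names standing in for whatever the real driver and keyboard wrapper provide.

```python
import time


class ElementNotFound(Exception):
    """Hypothetical error raised when a lookup finds nothing."""


def wait_for(find, timeout=10.0, interval=0.5,
             sleep=time.sleep, clock=time.monotonic):
    """Call find() repeatedly until it succeeds or the timeout expires."""
    deadline = clock() + timeout
    while True:
        try:
            return find()
        except ElementNotFound:
            if clock() >= deadline:
                raise  # give up and report the last failure
            sleep(interval)


def tap_key(keyboard, letter):
    """Tap a letter, switching the keyboard layout once if it is missing."""
    try:
        key = keyboard.find_key(letter)
    except ElementNotFound:
        keyboard.switch_layout()         # assumed helper, e.g. taps the language key
        key = keyboard.find_key(letter)  # a second failure is a real error
    key.tap()
```

With this shape the test no longer depends on which layout the previous run left behind: the layout is switched only when the letter is actually missing.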

- Missing IDs on elements. What are they for? Suppose your application has a login button. From the button's name alone, the script may not work out that this is the button you want to click. IDs on elements solve this problem.

Appium can find an element by name, but sometimes an element does not even have one. For example, the application has a basket, and the basket button is shown as an icon; it has no caption, no name, nothing. For the script to click it, the script has to find it somehow. Without a name this cannot be done at all, not even by brute-force enumeration: the script has nothing to address, so it cannot click the basket button. If the basket had an ID, the recorder would see it while recording actions, and the script could find the button. The solution we arrived at was a debatable one.

In native development, most elements are assigned an ID that the recorder accesses without problems.

But do not forget that our product is a cross-platform application. The main feature of such applications is that among the native elements there is a web part, which the recorder cannot access the same way as the native part. It reads elements unpredictably (in some places by text, in others by type), and there are no dedicated IDs, because the web id is used for other purposes. The project is originally written with web development tools (that is, in JavaScript), after which Cordova generates native code that differs between iOS and Android and assigns IDs in an order known only to itself.

Now, the solution. Since I could access elements by name, I asked the developer to give the buttons a name rendered in a transparent font. The name exists, the user does not see it, but the Appium recorder does. The script can address such a button and click it.
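Once a button carries such a hidden name, the lookup itself is short. The sketch below is an illustration under assumptions, not the project's code: it assumes an iOS session, where buttons are typically reported with the type `XCUIElementTypeButton` (the real type should be read from the inspector), the name `cart_button` and both helper names are made up, and `driver` is any object with a Selenium-style `find_element(by, value)` method.

```python
def xpath_for(element_type, name):
    """Build an XPath matching one element type with a given name attribute."""
    if '"' in name:
        raise ValueError("name must not contain a double quote")
    return f'//{element_type}[@name="{name}"]'


def find_named(driver, element_type, name):
    """Look the element up through the driver's generic XPath search."""
    return driver.find_element("xpath", xpath_for(element_type, name))


# Hypothetical usage with a real session:
# basket = find_named(driver, "XCUIElementTypeButton", "cart_button")
# basket.click()
```

Putting the element type into the locator is a design choice: it keeps the lookup unambiguous when two elements of different types share a name.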

- The absence of IDs on elements slows the autotests down. Why? Element names on a screen can repeat (for example, two input fields have the same name but different purposes). Since the test, looking for the element it needs, iterates over everything with a given name, it may address the wrong element. Finding the element by name directly does not solve the problem, because the name is not unique. So the script has to compare not only the names of elements but also their types. Because of all this, the tests run slowly.

- The project could not be run on an emulator, only on a physical device. It uses WKWebView, the class for displaying web pages inside applications, and supporting it requires localhost to be running on the physical device itself. Tests on an emulator would run four times faster, but that is not our case.

- The recorder produced tests with no structure and not as part of a single project. If I had written this code from scratch, as programmers do, all the tests would be in one file, each test described in its own loop, all within one project. The recorder instead generates each test as a separate file with no loops in it. As a result, I ended up with all the tests as separate pieces of code that I could not combine or give a structure to.

- Reporting on test results, or logging. This one is simple: there was none. I knew about existing tools like py.test or TestNG (which one depends on the programming language the tests are written in), but they are again designed for a structured project, and that again is not our case. So I could not plug in anything ready-made, and I had no experience at all in writing my own. In the end I settled on this: the message the terminal showed me when a test crashed was quite enough to understand which element the test had not found and why.

In any case, I checked the error manually. Logs existed, in their most minimal form, but they existed. And that's good.
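For comparison, a structured project with a minimal report could look something like the sketch below. It uses Python's built-in `unittest` rather than py.test so that it runs without extra packages, and `FakeApp` is a made-up stand-in for a real device session, so none of this is the project's actual code.

```python
import unittest


class FakeApp:
    """Hypothetical stand-in for a device session, so the sketch runs anywhere."""

    def __init__(self):
        self.basket = []

    def add_to_basket(self, provider):
        self.basket.append(provider)

    def pay(self):
        if not self.basket:
            raise RuntimeError("basket is empty")
        paid, self.basket = self.basket, []
        return paid


class PaymentSmokeTest(unittest.TestCase):
    """All smoke tests live in one class and share the same setup."""

    def setUp(self):
        # A real suite would open the application on the device here.
        self.app = FakeApp()

    def test_pay_single_provider(self):
        self.app.add_to_basket("electricity")
        self.assertEqual(self.app.pay(), ["electricity"])

    def test_basket_is_empty_after_payment(self):
        self.app.add_to_basket("internet")
        self.app.pay()
        self.assertEqual(self.app.basket, [])


def run_suite(stream):
    """Run every test in the class and write one line per test to stream."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(PaymentSmokeTest)
    runner = unittest.TextTestRunner(stream=stream, verbosity=2)
    return runner.run(suite)
```

Calling `run_suite(sys.stderr)` prints each test name with its outcome; passing an open file instead keeps the report for later, which already covers the "which test failed and why" question.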

- The last and perhaps the most painful point. When I hit a wall with some problem, I either went to our technical director, who had initiated the idea of writing autotests, or simply googled the problem for five hours without understanding what was going on. His help made the tasks quick and easy. But at some point he left me to it, and pulling the developers away every time is not great. I had to cope on my own.

Summary

Now that the work on the tests is finished, I look at the result and think that I would do everything differently:

- where the recorder generates scripts, I would write the tests by hand;
- where the script searches for keys on the keyboard, I would insert the login data directly;
- I would get rid of enumerating elements by adding IDs;
- I would learn a framework that runs tests in a loop;
- I would set up CI so that the tests run by themselves after each deployment;
- I would set up logging and have the results sent by email.

But back then I knew almost nothing and double-checked every decision, because I was not sure of any of them. Sometimes I had no idea what the result was supposed to look like.

Nevertheless, I completed the task: autotests appeared on the project, and we were able to test the application against a background of constant changes. The tests also removed the need to sit and click through the same scenarios twice a week, which takes a lot of time with manual testing because each scenario is repeated 100 times. And I gained solid experience and an understanding of how all this should really be done. If you have anything to advise or add to the above, I will be glad to continue the conversation in the comments.