Efficient memory usage for parallel I / O in Python

- Transfer

There are two classes of tasks where we may need parallel processing: input-output operations and tasks that actively use CPUs, such as image processing. Python allows you to implement several approaches to parallel data processing. Consider them in relation to input-output operations.

Prior to Python 3.5, there were two ways to implement parallel processing of I / O operations. The native method is the use of multithreading, another option is libraries like Gevent, which parallelize tasks in the form of micro-threads. Python 3.5 provided native concurrency support using asyncio. I was curious to see how each of them would work in terms of memory. The results are below.

For testing, I created a simple script. Although there are not many functions in it, it demonstrates a real use case. The script downloads bus ticket prices from the website for 100 days and prepares them for processing. Memory consumption was measured using memory_profiler . The code is available on Github .

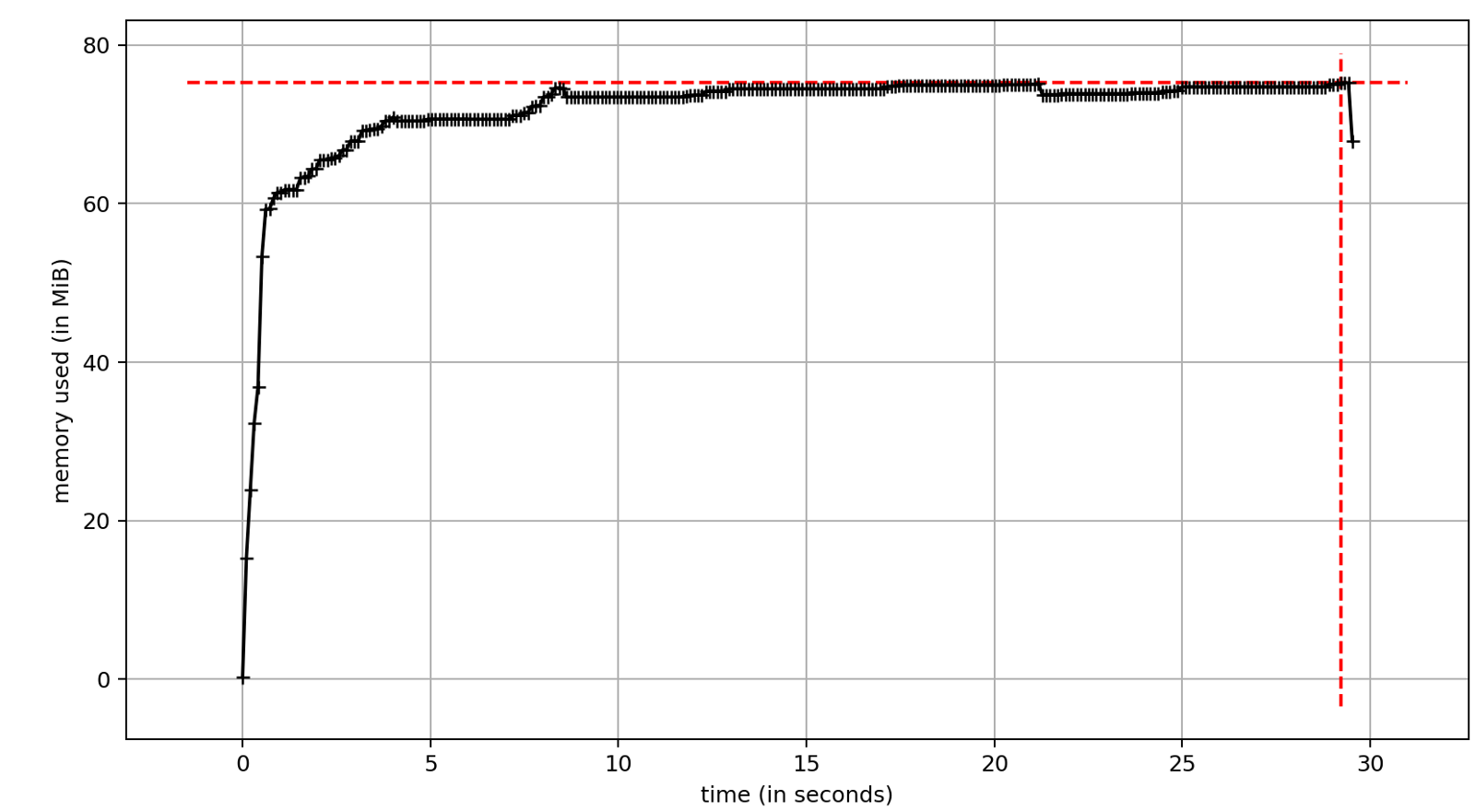

I implemented a single-threaded version of the script, which became the standard for other solutions. Memory usage was fairly stable throughout the execution, and runtime was an obvious drawback. Without any concurrency, the script took about 29 seconds.

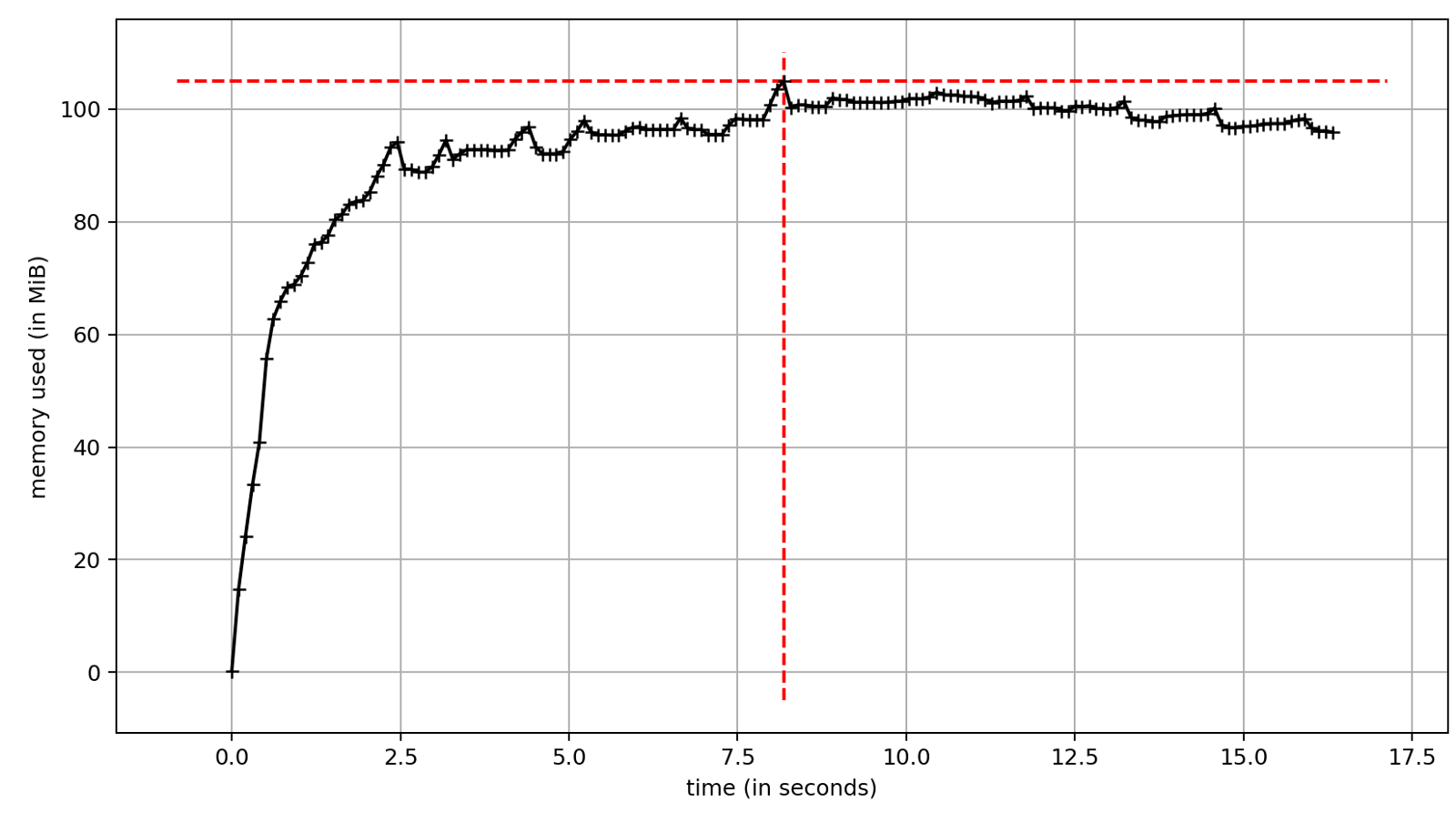

Work with multithreading is implemented in the standard library. The most convenient API is provided by ThreadPoolExecutor . However, the use of threads is associated with some drawbacks, one of them is significant memory consumption. On the other hand, a significant increase in execution speed is the reason why we want to use multithreading. Test run time ~ 17 seconds. This is significantly less than ~ 29 seconds in synchronous execution. The difference is the speed of the I / O operations. In our case, network latency.

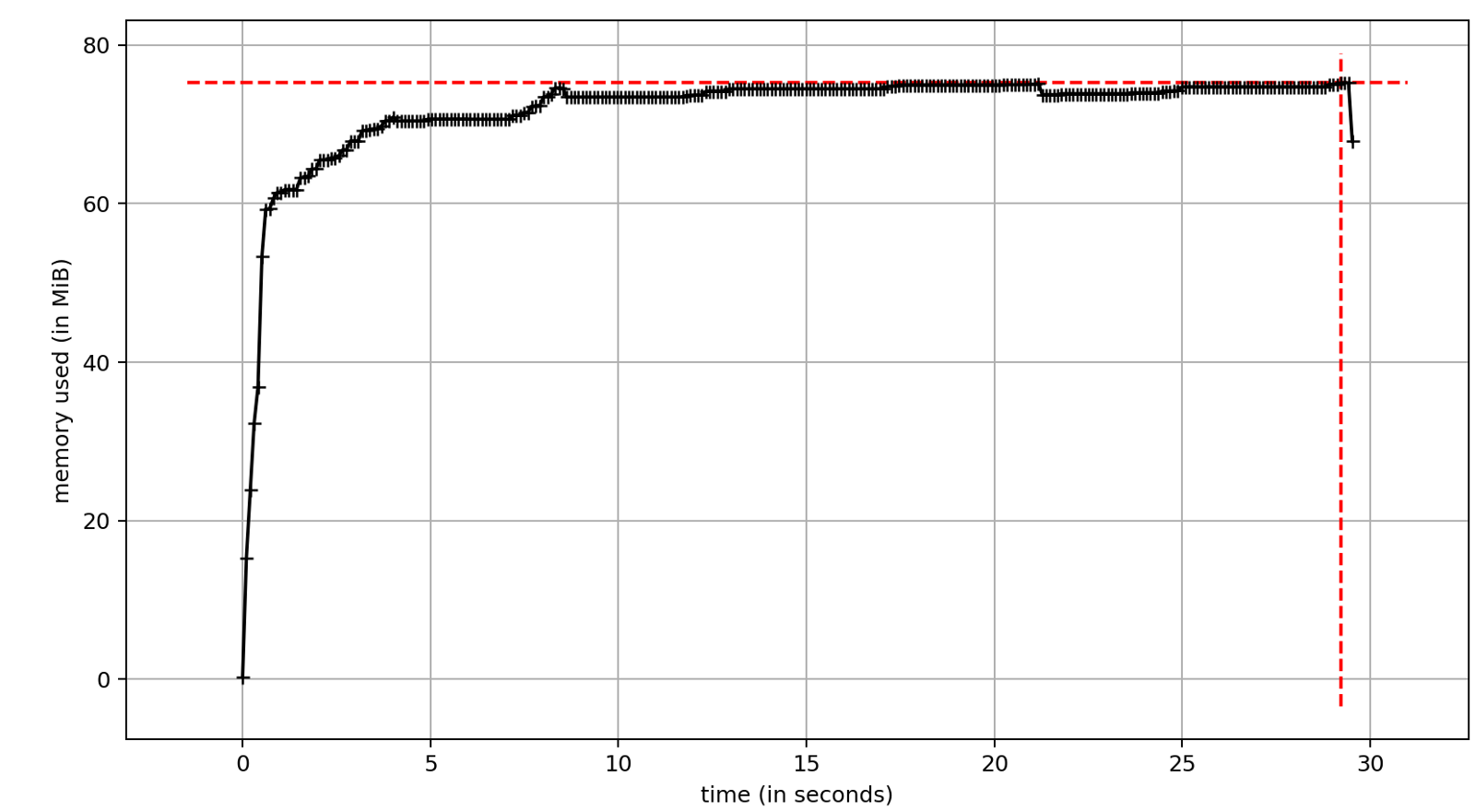

Gevent is an alternative approach to concurrency; it brings coroutines to Python code up to version 3.5. Under the hood we have light pseudo-flows “greenlets” plus several flows for domestic use. Total memory consumption is similar to multipathing.

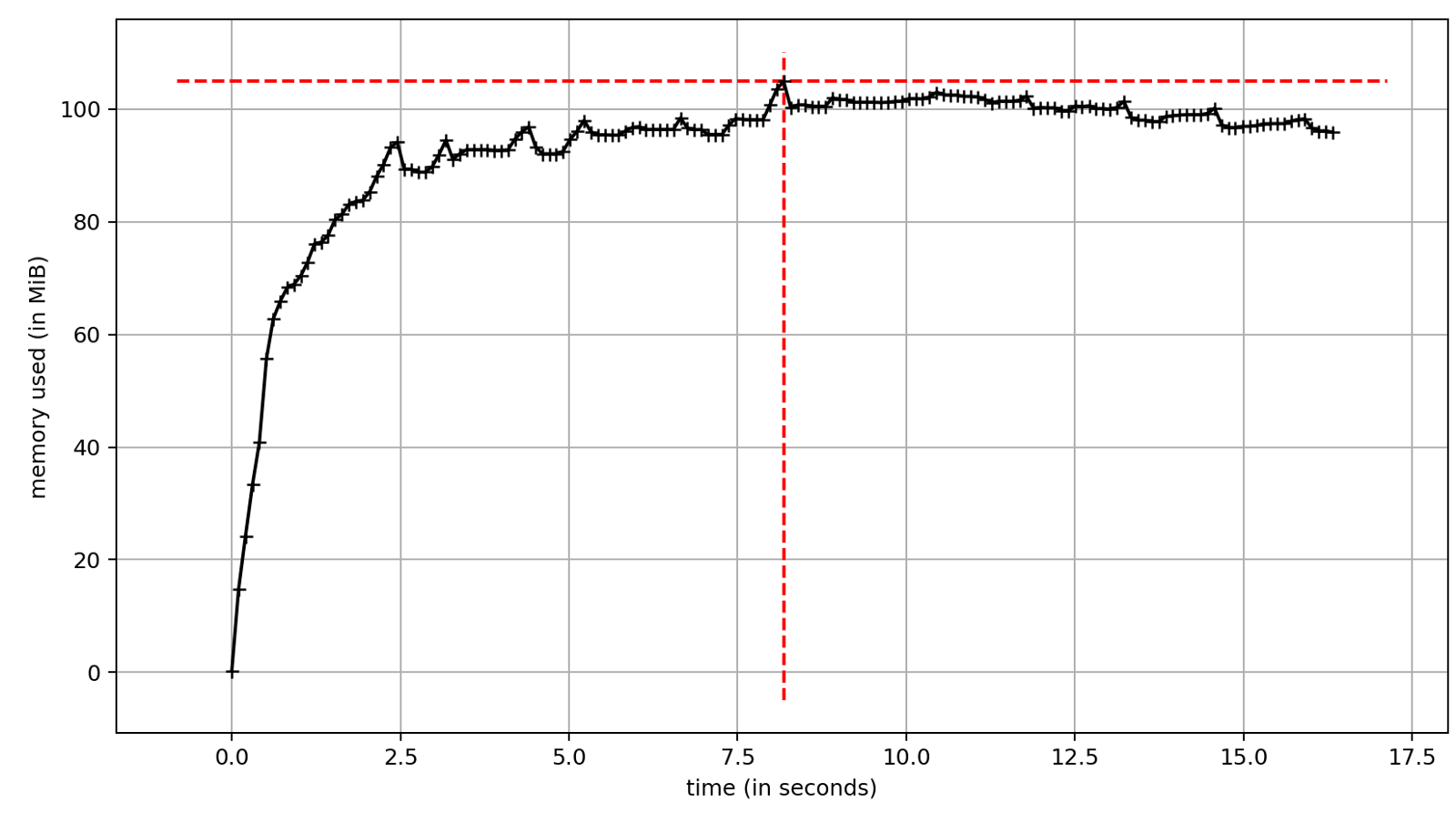

Since Python 3.5, coroutines are available in the asyncio module , which has become part of the standard library. To take advantage of asyncio , I used aiohttp instead of requests . aiohttp is the asynchronous equivalent of requests with similar functionality and API.

The presence of appropriate libraries is the main issue that needs to be clarified before starting development with asyncio , although the most popular IO libraries - requests , redis , psycopg2 - have asynchronous analogues.

With asynciomemory consumption is much less. It is similar to a single-threaded version of the script without parallelism.

Concurrency is a very efficient way to speed up applications with lots of I / O. In my case, this is ~ 40% performance increase compared to sequential processing. The differences in speed for the considered methods of implementing parallelism are insignificant.

ThreadPoolExecutor and Gevent are powerful tools that can speed up existing applications. Their main advantage is that in most cases they require minor changes to the code base. In terms of overall performance, the best tool is asyncio . Its memory consumption is significantly lower compared to other concurrency methods, which does not affect the overall speed. You have to pay for the pros with specialized libraries tailored to work with asyncio.

Prior to Python 3.5, there were two ways to implement parallel processing of I / O operations. The native method is the use of multithreading, another option is libraries like Gevent, which parallelize tasks in the form of micro-threads. Python 3.5 provided native concurrency support using asyncio. I was curious to see how each of them would work in terms of memory. The results are below.

Test Environment Preparation

For testing, I created a simple script. Although there are not many functions in it, it demonstrates a real use case. The script downloads bus ticket prices from the website for 100 days and prepares them for processing. Memory consumption was measured using memory_profiler . The code is available on Github .

Go!

Synchronous processing

I implemented a single-threaded version of the script, which became the standard for other solutions. Memory usage was fairly stable throughout the execution, and runtime was an obvious drawback. Without any concurrency, the script took about 29 seconds.

ThreadPoolExecutor

Work with multithreading is implemented in the standard library. The most convenient API is provided by ThreadPoolExecutor . However, the use of threads is associated with some drawbacks, one of them is significant memory consumption. On the other hand, a significant increase in execution speed is the reason why we want to use multithreading. Test run time ~ 17 seconds. This is significantly less than ~ 29 seconds in synchronous execution. The difference is the speed of the I / O operations. In our case, network latency.

Gevent

Gevent is an alternative approach to concurrency; it brings coroutines to Python code up to version 3.5. Under the hood we have light pseudo-flows “greenlets” plus several flows for domestic use. Total memory consumption is similar to multipathing.

Asyncio

Since Python 3.5, coroutines are available in the asyncio module , which has become part of the standard library. To take advantage of asyncio , I used aiohttp instead of requests . aiohttp is the asynchronous equivalent of requests with similar functionality and API.

The presence of appropriate libraries is the main issue that needs to be clarified before starting development with asyncio , although the most popular IO libraries - requests , redis , psycopg2 - have asynchronous analogues.

With asynciomemory consumption is much less. It is similar to a single-threaded version of the script without parallelism.

Is it time to start using asyncio?

Concurrency is a very efficient way to speed up applications with lots of I / O. In my case, this is ~ 40% performance increase compared to sequential processing. The differences in speed for the considered methods of implementing parallelism are insignificant.

ThreadPoolExecutor and Gevent are powerful tools that can speed up existing applications. Their main advantage is that in most cases they require minor changes to the code base. In terms of overall performance, the best tool is asyncio . Its memory consumption is significantly lower compared to other concurrency methods, which does not affect the overall speed. You have to pay for the pros with specialized libraries tailored to work with asyncio.