Introducing the AVAudioEngine Sound Engine on iOS

Searching the Runet (the Russian-language internet) for articles on this topic, you may be surprised to find almost none. Perhaps the descriptions in the class headers are enough for most people, or maybe everyone simply prefers to learn it by experiment. Either way, for those who are meeting this class for the first time or would like illustrative examples, here is a simple practical guide from our partners, Music Topia.


Node-graph diagrams for the three connection examples in step 5, “Connecting nodes to each other”:

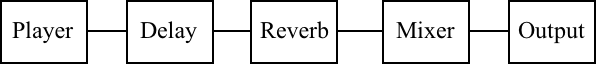

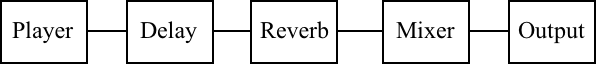

1) Player with effects

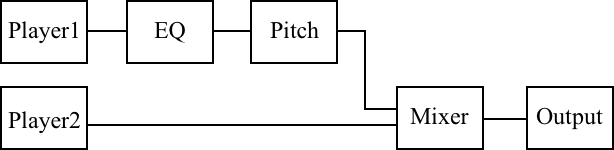

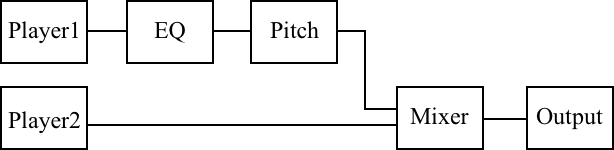

2) Two players, one of which is followed by sound processing

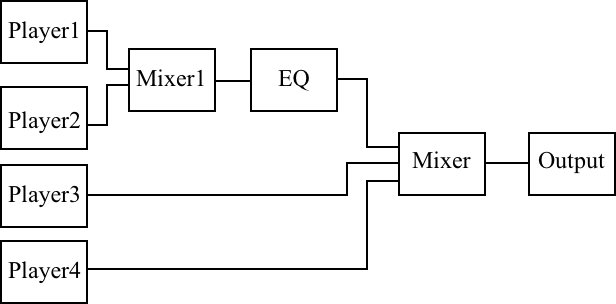

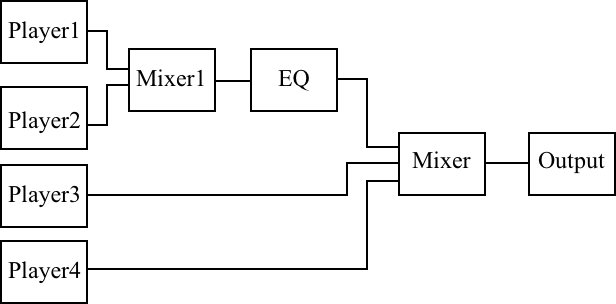

3) Four players, two of which are processed by an equalizer


In terms of working with sound, Apple is ahead of its competitors, and this is no accident. The company offers good tools for playing, recording and processing tracks. As a result, the App Store has a huge number of apps that work with audio in one way or another: players with good sound (Vox), audio editors with tools for editing and applying effects (Sound Editor), voice-changing apps (Voicy Helium), simulators of musical instruments that imitate the corresponding sound fairly accurately (Virtuoso Piano), and even DJ setup simulators (X Djing).

To work with audio, Apple provides the AVFoundation framework, which combines several tools. For example, AVAudioPlayer plays a single track, and AVAudioRecorder records sound from the microphone. If you only need what these classes offer, just use them. But if you need to apply effects, play several tracks at the same time, mix them, process or edit audio, or record the output of a specific audio node, AVAudioEngine is the tool for the job. Its most attractive feature is the ability to apply effects to a track: many equalizer and voice-changing apps are built on these effects. In addition, Apple lets developers create their own effects, sound generators and instruments.

The main elements and how they relate

Let's start with the engine itself, AVAudioEngine. It is a group of connected audio nodes that generate and process audio signals and that represent audio inputs and outputs. You can also think of it as a diagram of audio nodes arranged in a particular way to achieve the desired result; in other words, AVAudioEngine is a motherboard on which chips (in our case, audio nodes) are mounted.

By default, the engine is connected to an audio device and renders automatically in real time. It can also be configured to run in manual rendering mode: disconnected from any device, it renders on request from the client, usually in real time or even faster.
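The following is a minimal sketch of offline manual rendering. It assumes iOS 11 or later, where `enableManualRenderingMode:format:maximumFrameCount:error:` and `renderOffline:toBuffer:error:` are available. The helper name `RenderOffline` and the choice to render a single block are illustrative and do not come from the original article. A real exporter would call `renderOffline:toBuffer:error:` in a loop and write each block to a file.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: pushes `source` through a player node and the main
// mixer in offline manual rendering mode (iOS 11+). No audio device is
// involved, and rendering runs as fast as the CPU allows.
static AVAudioPCMBuffer *RenderOffline(AVAudioPCMBuffer *source, NSError **error) {
    AVAudioEngine *engine = [[AVAudioEngine alloc] init];
    AVAudioPlayerNode *player = [[AVAudioPlayerNode alloc] init];
    [engine attachNode:player];
    [engine connect:player to:engine.mainMixerNode format:source.format];
    [player scheduleBuffer:source completionHandler:nil];

    // Manual mode must be enabled while the engine is stopped.
    if (![engine enableManualRenderingMode:AVAudioEngineManualRenderingModeOffline
                                    format:source.format
                         maximumFrameCount:4096
                                     error:error]) {
        return nil;
    }
    if (![engine startAndReturnError:error]) {
        return nil;
    }
    [player play];

    // One block only: a request may not exceed manualRenderingMaximumFrameCount
    // or the capacity of the destination buffer.
    AVAudioPCMBuffer *output =
        [[AVAudioPCMBuffer alloc] initWithPCMFormat:engine.manualRenderingFormat
                                      frameCapacity:engine.manualRenderingMaximumFrameCount];
    AVAudioFrameCount frames = MIN(source.frameLength, output.frameCapacity);
    AVAudioEngineManualRenderingStatus status =
        [engine renderOffline:frames toBuffer:output error:error];

    [player stop];
    [engine stop];
    return status == AVAudioEngineManualRenderingStatusSuccess ? output : nil;
}
```

The offline mode suits exporting processed audio to a file. There is also a real-time manual mode (`AVAudioEngineManualRenderingModeRealtime`) for cases where the client's own audio callback drives rendering.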

When working with this class, you must perform initialization and method calls in a particular order so that everything works correctly. Here is the sequence of steps:

- First, set up the audio session (AVAudioSession).

- Create the engine.

- Create the AVAudioNode nodes separately.

- Attach the nodes to the engine (attach).

- Connect the nodes to each other (connect).

- Start the engine.
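The six steps can be sketched as one function that stops at the first failure instead of passing `error:nil` everywhere. `BuildAndStartEngine` is a hypothetical name. A single player wired straight to the main mixer is just the simplest possible graph. The sketch assumes iOS, because AVAudioSession is an iOS-family API.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: performs the steps above in order and returns nil
// (with *error set) at the first failure.
static AVAudioEngine *BuildAndStartEngine(AVAudioPlayerNode *player, NSError **error) {
    // 1. Audio session
    AVAudioSession *session = [AVAudioSession sharedInstance];
    if (![session setCategory:AVAudioSessionCategoryPlayback error:error] ||
        ![session setActive:YES error:error]) {
        return nil;
    }

    // 2. Engine (the caller must keep a strong reference to it)
    AVAudioEngine *engine = [[AVAudioEngine alloc] init];

    // 3. Nodes: here a single player created by the caller
    // 4. Attach
    [engine attachNode:player];

    // 5. Connect: player -> main mixer (the main mixer is wired to the
    //    output node automatically when first accessed)
    [engine connect:player to:engine.mainMixerNode format:nil];

    // 6. Prepare and start
    [engine prepare];
    if (![engine startAndReturnError:error]) {
        return nil;
    }
    return engine;
}
```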

1. Setting up an audio session

Without setting up an audio session, the application may not work correctly, or there may be no sound at all. The exact configuration steps depend on what the engine does in your app: recording, playback, or both. The following example configures the audio session for playback only:

```objc
[[AVAudioSession sharedInstance] setCategory:AVAudioSessionCategoryPlayback error:nil];
// No speaker override: Playback already uses the built-in speaker when no headset is
// connected, and overrideOutputAudioPort: only applies to AVAudioSessionCategoryPlayAndRecord.
[[AVAudioSession sharedInstance] setActive:YES error:nil];
```

To configure for recording, set the category to AVAudioSessionCategoryRecord. All available categories are listed in Apple's AVAudioSession documentation.
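To record while keeping playback available, one option is the play-and-record category. The sketch below assumes iOS and an `NSMicrophoneUsageDescription` entry in Info.plist, which has been required for microphone access since iOS 10. `ConfigureSessionForRecording` is a hypothetical helper name. `requestRecordPermission:` is deprecated as of iOS 17 in favor of the AVAudioApplication equivalent.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: prepares the shared session for recording while still
// allowing playback, then asks for microphone access. DefaultToSpeaker sends
// output to the built-in speaker rather than the receiver when no headset
// is connected.
static BOOL ConfigureSessionForRecording(void (^completion)(BOOL granted), NSError **error) {
    AVAudioSession *session = [AVAudioSession sharedInstance];
    if (![session setCategory:AVAudioSessionCategoryPlayAndRecord
                  withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker
                        error:error]) {
        return NO;
    }
    if (![session setActive:YES error:error]) {
        return NO;
    }
    // The system prompt appears only once; later calls report the stored
    // answer. The completion block may run on any thread.
    [session requestRecordPermission:completion];
    return YES;
}
```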

2. Creating the engine

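To use the engine, import the AVFoundation framework and create an instance of the class:

```objc
#import <AVFoundation/AVFoundation.h>
```

Initialization is as follows:

```objc
// Keep a strong reference (e.g. an instance variable): a deallocated engine produces no sound.
AVAudioEngine *engine = [[AVAudioEngine alloc] init];
```

3. Creating nodes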

AVAudioEngine works with various subclasses of AVAudioNode that are attached to it. Nodes have input and output buses, which you can think of as connection points; effects, for example, usually have one input bus and one output bus. Each bus has a format, represented by an AVAudioFormat that wraps an AudioStreamBasicDescription and includes parameters such as the sample rate and the number of channels. When you connect nodes, these formats usually have to match, although there are exceptions: a mixer node, for instance, can convert between formats.
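Here is a small sketch for inspecting a node's buses and their formats. `LogBusFormats` is a hypothetical helper. It reads each bus format with `inputFormatForBus:` / `outputFormatForBus:` and shows that `AVAudioFormat.streamDescription` exposes the underlying AudioStreamBasicDescription.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: prints every bus of a node together with its format.
static void LogBusFormats(AVAudioNode *node) {
    for (AVAudioNodeBus bus = 0; bus < node.numberOfInputs; bus++) {
        AVAudioFormat *format = [node inputFormatForBus:bus];
        NSLog(@"input bus %lu: %.0f Hz, %u channel(s)",
              (unsigned long)bus, format.sampleRate, (unsigned)format.channelCount);
    }
    for (AVAudioNodeBus bus = 0; bus < node.numberOfOutputs; bus++) {
        // The raw Core Audio struct behind the AVAudioFormat wrapper.
        const AudioStreamBasicDescription *asbd = [node outputFormatForBus:bus].streamDescription;
        NSLog(@"output bus %lu: %.0f Hz, %u channel(s), %u bits per channel",
              (unsigned long)bus, asbd->mSampleRate,
              (unsigned)asbd->mChannelsPerFrame, (unsigned)asbd->mBitsPerChannel);
    }
}
```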

Let's look at each type of audio node in turn.

The most common type of audio node is AVAudioPlayerNode, which, as the name implies, plays audio. It plays either from a buffer (an AVAudioPCMBuffer, a subclass of AVAudioBuffer) or from segments of an audio file opened with AVAudioFile. Buffers and segments can be scheduled to start at a specific time or to play immediately after the previously scheduled item.
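The sketch below shows these scheduling options. It assumes `player` is attached, connected using the file's `processingFormat`, and that the engine is already running. `ScheduleExamples` is a hypothetical name. Passing `nil` as the time queues an item right after the previously scheduled one; passing an `AVAudioTime` instead schedules it for a specific moment.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: queues a whole file, then a segment of it, then an
// in-memory buffer, each one playing after the previous item.
static BOOL ScheduleExamples(AVAudioPlayerNode *player, AVAudioFile *file, NSError **error) {
    AVAudioFormat *format = file.processingFormat;
    // One second of audio, or the whole file if it is shorter.
    AVAudioFrameCount frames =
        (AVAudioFrameCount)MIN((AVAudioFramePosition)format.sampleRate, file.length);
    if (frames == 0) {
        return NO; // empty file: nothing to schedule
    }

    // Read the first second into memory before handing the file to the player.
    AVAudioPCMBuffer *buffer = [[AVAudioPCMBuffer alloc] initWithPCMFormat:format
                                                             frameCapacity:frames];
    file.framePosition = 0;
    if (![file readIntoBuffer:buffer error:error]) {
        return NO;
    }
    file.framePosition = 0;

    // 1. The whole file.
    [player scheduleFile:file atTime:nil completionHandler:nil];
    // 2. A segment of the same file: its first second again.
    [player scheduleSegment:file startingFrame:0 frameCount:frames
                     atTime:nil completionHandler:nil];
    // 3. The in-memory copy of that second.
    [player scheduleBuffer:buffer completionHandler:nil];

    [player play];
    return YES;
}
```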

Initialization and playback example:

```objc
AVAudioPlayerNode *playerNode = [[AVAudioPlayerNode alloc] init];

// …

NSURL *url = [[NSBundle mainBundle] URLForResource:@"sample" withExtension:@"wav"];
AVAudioFile *audioFile = [[AVAudioFile alloc] initForReading:url error:nil];
// nil start time: play as soon as possible after anything already scheduled
[playerNode scheduleFile:audioFile atTime:nil completionHandler:nil];
// The node must be attached and connected, and the engine running, before -play
[playerNode play];
```

Next, consider the AVAudioUnit class, which is also a subclass of AVAudioNode. AVAudioUnitEffect (a subclass of AVAudioUnit) serves as the base class for the effect classes. The most commonly used effects are AVAudioUnitEQ (equalizer), AVAudioUnitDelay (delay), AVAudioUnitReverb (reverb) and AVAudioUnitTimePitch (changes playback rate and pitch independently; strictly speaking, it derives from AVAudioUnitTimeEffect rather than AVAudioUnitEffect). Each has its own set of parameters for changing and processing sound.
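The following sketch configures two of the other units named above. The values are illustrative only, and `MakeExampleEffects` is a hypothetical name. The array is typed with the common superclass AVAudioUnit because the time-pitch unit is not an AVAudioUnitEffect.

```objc
#import <AVFoundation/AVAudio.h>
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: a reverb and a time/pitch unit with sample settings.
static NSArray<AVAudioUnit *> *MakeExampleEffects(void) {
    AVAudioUnitReverb *reverb = [[AVAudioUnitReverb alloc] init];
    [reverb loadFactoryPreset:AVAudioUnitReverbPresetLargeHall];
    reverb.wetDryMix = 40.f;      // percent of processed signal, 0 to 100

    AVAudioUnitTimePitch *timePitch = [[AVAudioUnitTimePitch alloc] init];
    timePitch.rate = 1.25f;       // 25% faster without changing pitch (1/32 to 32)
    timePitch.pitch = 300.f;      // up three semitones, in cents (-2400 to 2400)

    return @[reverb, timePitch];
}
```

Like any other node, both units still have to be attached and connected before they affect the sound.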

Here is how to create and configure an effect, using the delay as an example:

```objc
AVAudioUnitDelay *delay = [[AVAudioUnitDelay alloc] init];
delay.delayTime = 1.0f; // Time it takes the input signal to reach the output, from 0 to 2 seconds
```

The next node, AVAudioMixerNode, is used for mixing. Unlike most other nodes, which have exactly one input bus, a mixer can have several. Here you can combine player nodes, effect nodes and an equalizer; the mixer's output is then connected to the AVAudioOutputNode to produce the final sound.
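The sketch below builds a submix from an existing engine and two player nodes. `MixTwoPlayers` is a hypothetical helper. Each player goes to its own input bus of an extra mixer. The level and stereo position of each input are set on the source nodes through the AVAudioMixing protocol (`volume`, `pan`), while `outputVolume` controls the mixer's overall output.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: two players on separate input buses of one mixer,
// which in turn feeds the engine's main mixer.
static AVAudioMixerNode *MixTwoPlayers(AVAudioEngine *engine,
                                       AVAudioPlayerNode *first,
                                       AVAudioPlayerNode *second) {
    AVAudioMixerNode *mixer = [[AVAudioMixerNode alloc] init];
    [engine attachNode:first];
    [engine attachNode:second];
    [engine attachNode:mixer];

    [engine connect:first to:mixer fromBus:0 toBus:0 format:nil];
    [engine connect:second to:mixer fromBus:0 toBus:1 format:nil];
    [engine connect:mixer to:engine.mainMixerNode format:nil];

    // Per-input settings live on the source nodes (AVAudioMixing).
    first.pan = -1.f;            // hard left
    second.pan = 1.f;            // hard right
    second.volume = 0.5f;        // second track at half volume
    mixer.outputVolume = 0.8f;   // overall level of this submix
    return mixer;
}
```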

An example of initializing mixer and output nodes:

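```objc
AVAudioMixerNode *mixerNode = [[AVAudioMixerNode alloc] init]; // an additional mixer; attach it like any other node
AVAudioOutputNode *outputNode = engine.outputNode;              // owned by the engine, no need to create or attach it
```

4. Attaching nodes to the engine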

However, just creating a node is not enough to make it work. It must be attached to the engine and connected to other nodes through its input and output buses. The engine supports connecting, disconnecting and removing nodes dynamically while it is running, with minor restrictions:

- all dynamic reconnections must happen upstream of a mixer node;

- while removing an effect usually causes its neighboring nodes to be joined automatically, removing a node with a different number of input and output channels, or removing a mixer, is likely to break the graph.
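The sketch below shows a dynamic change that respects these restrictions. It removes an effect from a `player -> effect -> mainMixerNode` chain and wires the player straight into the main mixer, so the change stays upstream of the mixer. `RemoveEffect` is a hypothetical helper. If you only need to switch an effect off temporarily, setting `bypass = YES` on an AVAudioUnitEffect avoids touching the graph at all.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: takes `effect` out of player -> effect -> mainMixer
// and reconnects the player directly to the main mixer.
static void RemoveEffect(AVAudioEngine *engine, AVAudioPlayerNode *player, AVAudioNode *effect) {
    // Reuse the format the player is currently producing.
    AVAudioFormat *format = [player outputFormatForBus:0];
    [engine disconnectNodeOutput:player];
    [engine disconnectNodeOutput:effect];
    [engine connect:player to:engine.mainMixerNode format:format];
    [engine detachNode:effect];
}
```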

To attach a node to the engine, use the “attachNode:” method; to detach it, use “detachNode:”.

```objc
[engine attachNode:playerNode];
[engine detachNode:delay]; // also breaks any connections the delay node had
```

5. Connecting nodes to each other

All attached nodes must be connected into a common chain that leads to the sound output. Which connections you need depends on what you want to hear. If you need effects, connect the player node to the effect nodes; to combine two tracks, connect both player nodes to a mixer. You may also want two separate tracks, one clean and one processed, and that can be built as well. The order of the connections is not critical, but every chain must eventually reach the main mixer, which in turn is connected to the audio output node. The only exceptions are input nodes, which are usually used for recording from the microphone.
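A common way to record, either from the microphone or from the output of any node, is a tap. A tap observes a bus without adding a connection to the graph. The sketch below records whatever reaches the main mixer. `StartRecordingMainMixer` is a hypothetical helper, and write errors are ignored for brevity.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: records everything that passes through the main
// mixer into a file at `url`. The same tap call on engine.inputNode would
// record the microphone. Stop with [engine.mainMixerNode removeTapOnBus:0].
static BOOL StartRecordingMainMixer(AVAudioEngine *engine, NSURL *url, NSError **error) {
    AVAudioMixerNode *mixer = engine.mainMixerNode;
    AVAudioFormat *format = [mixer outputFormatForBus:0];

    AVAudioFile *file = [[AVAudioFile alloc] initForWriting:url
                                                   settings:format.settings
                                                      error:error];
    if (file == nil) {
        return NO;
    }

    [mixer installTapOnBus:0
                bufferSize:4096
                    format:format
                     block:^(AVAudioPCMBuffer *buffer, AVAudioTime *when) {
        // Called off the main thread with each block of mixed audio.
        [file writeFromBuffer:buffer error:nil];
    }];
    return YES;
}
```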

Here are some examples in code (the corresponding diagrams are shown near the top of the article):

1) Player with effects

```objc
// playerNode, delay and reverb must already be created and attached (attachNode:)
[engine connect:playerNode to:delay format:nil];
[engine connect:delay to:reverb format:nil];
[engine connect:reverb to:engine.mainMixerNode format:nil];
[engine connect:engine.mainMixerNode to:outputNode format:nil];
[engine prepare];
```

2) Two players, one of which is followed by sound processing

```objc
// player1, player2, eq, pitch and mixerNode must already be attached;
// each player gets its own input bus on the mixer
[engine connect:player1 to:eq format:nil];
[engine connect:eq to:pitch format:nil];
[engine connect:pitch to:mixerNode fromBus:0 toBus:0 format:nil];
[engine connect:player2 to:mixerNode fromBus:0 toBus:1 format:nil];
[engine connect:mixerNode to:outputNode format:nil];
[engine prepare];
```

3) Four players, two of which are processed by an equalizer

```objc
// player1 to player4, mixer1 and eq must already be attached
[engine connect:player1 to:mixer1 fromBus:0 toBus:0 format:nil];
[engine connect:player2 to:mixer1 fromBus:0 toBus:1 format:nil];
[engine connect:mixer1 to:eq format:nil];
[engine connect:eq to:engine.mainMixerNode fromBus:0 toBus:0 format:nil];
[engine connect:player3 to:engine.mainMixerNode fromBus:0 toBus:1 format:nil];
[engine connect:player4 to:engine.mainMixerNode fromBus:0 toBus:2 format:nil];
[engine connect:engine.mainMixerNode to:engine.outputNode format:nil];
[engine prepare];
```

Now that we have covered all the steps needed to work with the engine, let's write a small example that puts them together and shows the order in which they must be performed. Here is a player with an equalizer whose low, mid and high frequencies can be adjusted, built on AVAudioEngine:

```objc
- (instancetype)init
{
    self = [super init];
    if (self) {
        // No speaker override: it only applies to AVAudioSessionCategoryPlayAndRecord.
        [[AVAudioSession sharedInstance] setCategory:AVAudioSessionCategoryPlayback error:nil];
        [[AVAudioSession sharedInstance] setActive:YES error:nil];

        // engine and playerNode are instance variables, so they outlive -init.
        engine = [[AVAudioEngine alloc] init];
        playerNode = [[AVAudioPlayerNode alloc] init];

        AVAudioMixerNode *mixerNode = engine.mainMixerNode;
        AVAudioOutputNode *outputNode = engine.outputNode;

        // Three parametric bands: low (100 Hz), mid (1 kHz) and high (10 kHz).
        // Keep eq in an instance variable and change each band's gain (in dB)
        // to make the frequencies adjustable.
        AVAudioUnitEQ *eq = [[AVAudioUnitEQ alloc] initWithNumberOfBands:3];
        eq.bands[0].filterType = AVAudioUnitEQFilterTypeParametric;
        eq.bands[0].frequency = 100.f;
        eq.bands[1].filterType = AVAudioUnitEQFilterTypeParametric;
        eq.bands[1].frequency = 1000.f;
        eq.bands[2].filterType = AVAudioUnitEQFilterTypeParametric;
        eq.bands[2].frequency = 10000.f;
        for (AVAudioUnitEQFilterParameters *band in eq.bands) {
            band.bypass = NO; // make sure every band is active
        }

        [engine attachNode:playerNode];
        [engine attachNode:eq];

        [engine connect:playerNode to:eq format:nil];
        [engine connect:eq to:mixerNode format:nil];
        [engine connect:mixerNode to:outputNode format:nil];
        [engine prepare];

        NSError *error = nil;
        if (!engine.isRunning && ![engine startAndReturnError:&error]) {
            NSLog(@"Could not start the engine: %@", error);
        }
    }
    return self;
}
```

Here is an example of a playback method:

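```objc
- (void)playFromURL:(NSURL *)url
{
    NSError *error = nil;
    AVAudioFile *audioFile = [[AVAudioFile alloc] initForReading:url error:&error];
    if (audioFile == nil) {
        NSLog(@"Could not open %@: %@", url, error);
        return;
    }
    [playerNode scheduleFile:audioFile atTime:nil completionHandler:nil];
    [playerNode play];
}
```

Possible problems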

When developing with AVAudioEngine, keep a few things in mind; otherwise the app may misbehave or crash.

1. Set up the audio session and check that the engine is running before playing

Before playing, it is always worth setting up the audio session again: for one reason or another it can be reset, or its category can change while the app is in use. Also check whether the engine is running before playing, because it may have been stopped (for example, after switching to another app that uses the audio engine). Otherwise the app risks crashing: calling play on a player node whose engine is not running raises an exception.

```objc
// No speaker override: it only applies to AVAudioSessionCategoryPlayAndRecord.
[[AVAudioSession sharedInstance] setCategory:AVAudioSessionCategoryPlayback error:nil];
[[AVAudioSession sharedInstance] setActive:YES error:nil];
NSError *error = nil;
if (!engine.isRunning && ![engine startAndReturnError:&error]) {
    NSLog(@"Could not start the engine: %@", error);
}
```

2. Switching the headset

Another important point is connecting or disconnecting wired headphones or Bluetooth headsets. When that happens, the engine stops as well, and the track that was playing often stops too. It is therefore worth catching this moment by adding an observer for the “AVAudioSessionRouteChangeNotification” notification. In the selector, set up the audio session again and restart the engine.
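Here is a minimal sketch of a handler for the selector registered below, assuming an object that owns the engine. `AudioRouteObserver` is a hypothetical class, and reacting only to devices being added or removed is one possible policy. AVAudioEngine also posts `AVAudioEngineConfigurationChangeNotification` when the hardware configuration changes and the engine stops, and that notification can be handled the same way.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical owner of the engine; only the selector's body matters here.
@interface AudioRouteObserver : NSObject
@property (nonatomic, strong) AVAudioEngine *engine;
- (void)audioRouteChangeListenerCallback:(NSNotification *)notification;
@end

@implementation AudioRouteObserver

- (void)audioRouteChangeListenerCallback:(NSNotification *)notification
{
    AVAudioSessionRouteChangeReason reason =
        [notification.userInfo[AVAudioSessionRouteChangeReasonKey] unsignedIntegerValue];
    if (reason != AVAudioSessionRouteChangeReasonNewDeviceAvailable &&
        reason != AVAudioSessionRouteChangeReasonOldDeviceUnavailable) {
        return; // only react to a headset being plugged in or removed
    }
    // The notification can arrive on a background thread.
    dispatch_async(dispatch_get_main_queue(), ^{
        NSError *error = nil;
        AVAudioSession *session = [AVAudioSession sharedInstance];
        if (![session setCategory:AVAudioSessionCategoryPlayback error:&error] ||
            ![session setActive:YES error:&error]) {
            NSLog(@"Audio session error: %@", error);
            return;
        }
        if (!self.engine.isRunning && ![self.engine startAndReturnError:&error]) {
            NSLog(@"Could not restart the engine: %@", error);
        }
        // Player nodes stopped by the route change may also need -play again.
    });
}

@end
```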

```objc
[[NSNotificationCenter defaultCenter] addObserver:self
                                         selector:@selector(audioRouteChangeListenerCallback:)
                                             name:AVAudioSessionRouteChangeNotification
                                           object:nil];
```

Conclusion

In this article, we got acquainted with AVAudioEngine, went over how to work with it, and gave some simple examples. It is a useful tool in Apple's arsenal for building many interesting apps of varying complexity that work with sound.