Face detection network built into smartphone

Apple began using deep learning for face detection in iOS 10. With the release of the Vision framework, developers can now use this technology and many other computer vision algorithms in their apps. Developing the framework meant overcoming significant challenges in order to preserve user privacy and run efficiently on mobile hardware. This article discusses those challenges and describes how the face detection algorithm works.

Introduction

Face detection first appeared in a public API in the Core Image framework, through the CIDetector class. The same API was also used in Apple's own apps, such as Photos. The earliest version of CIDetector used a detection method based on the Viola-Jones algorithm [1]. Subsequent improvements to CIDetector built on advances in traditional computer vision.

With the advent of deep learning and its application to computer vision problems, the accuracy of face detection took a significant step forward. We had to completely rethink our approach in order to benefit from this paradigm shift. Compared to traditional computer vision, deep learning models require an order of magnitude more memory, much more disk space, and more computing resources.

Capable as today's phones are, a typical high-end smartphone was not a viable platform for deep learning vision models. Most of the industry works around this limitation with cloud-based APIs: pictures are sent to a server, where a deep learning system analyzes them and returns the detected faces. Cloud services typically run on powerful desktop-class GPUs with a large amount of available memory. Very large network models, and potentially entire ensembles of large models, can run on the server side, letting clients (including mobile phones) take advantage of large deep learning architectures that would be impractical to run locally.

Apple's iCloud Photo Library is a cloud-based solution for storing photos and videos. Each photo and video is encrypted on the device before it is sent to iCloud Photo Library, and it can only be decrypted on devices registered with the corresponding iCloud account. Therefore, to run deep learning computer vision on this content, we had to implement the algorithms directly on the iPhone.

There were several problems to solve. The deep learning models have to ship as part of the operating system, occupying valuable NAND storage. They have to be loaded into RAM and they consume significant GPU and/or CPU time. Unlike cloud services, where resources can be dedicated exclusively to computer vision tasks, on the device system resources are shared with other running applications. Finally, the computation must be efficient enough to process a large Photos library in a reasonable amount of time, but without significant power consumption or heating.

The rest of this article discusses our approach to deep learning face detection and how we overcame these difficulties to achieve the best possible detection accuracy. We will discuss:

- how we make full use of the GPU and CPU (using BNNS and Metal);

- memory optimizations for neural network inference, image loading, and caching;

- how we implemented the network so that it does not interfere with the many other tasks running simultaneously on the iPhone.

Transition from Viola-Jones to deep learning

When we began working on deep learning for face detection in 2014, deep convolutional networks (DCNs) were just beginning to show promising results on object detection tasks. The most prominent among them was the OverFeat model [2], which popularized some simple ideas and showed that DCNs are quite efficient at scanning an image for objects.

The OverFeat paper established the equivalence between the fully connected layers of a neural network and convolutional layers with valid convolutions whose filters have the same spatial dimensions as the input. This work made it clear that a binary classification network with a fixed receptive field (for example, 32 × 32 with a natural stride of 16 pixels) can be applied to an image of arbitrary size (for example, 320 × 320) and produce an output map of the corresponding size (20 × 20 in this example). The OverFeat paper also gave clear recipes for producing denser output maps by effectively reducing the network stride.
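The arithmetic behind the output map size can be checked with a short sketch. The function name and the `padded` flag are illustrative assumptions, not part of OverFeat: the 20 × 20 figure quoted above corresponds to one output per stride step (320 / 16), whereas a strictly unpadded sliding window would produce one position fewer per axis.

```python
import math


def output_map_size(input_size: int, receptive_field: int, stride: int, padded: bool) -> int:
    """Number of window positions along one axis of a sliding classifier."""
    if padded:
        # Assumption: the input is padded so every stride step yields one output.
        return math.ceil(input_size / stride)
    # No padding: the window must fit entirely inside the image.
    return (input_size - receptive_field) // stride + 1


print(output_map_size(320, 32, 16, padded=True))   # 20
print(output_map_size(320, 32, 16, padded=False))  # 19
```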

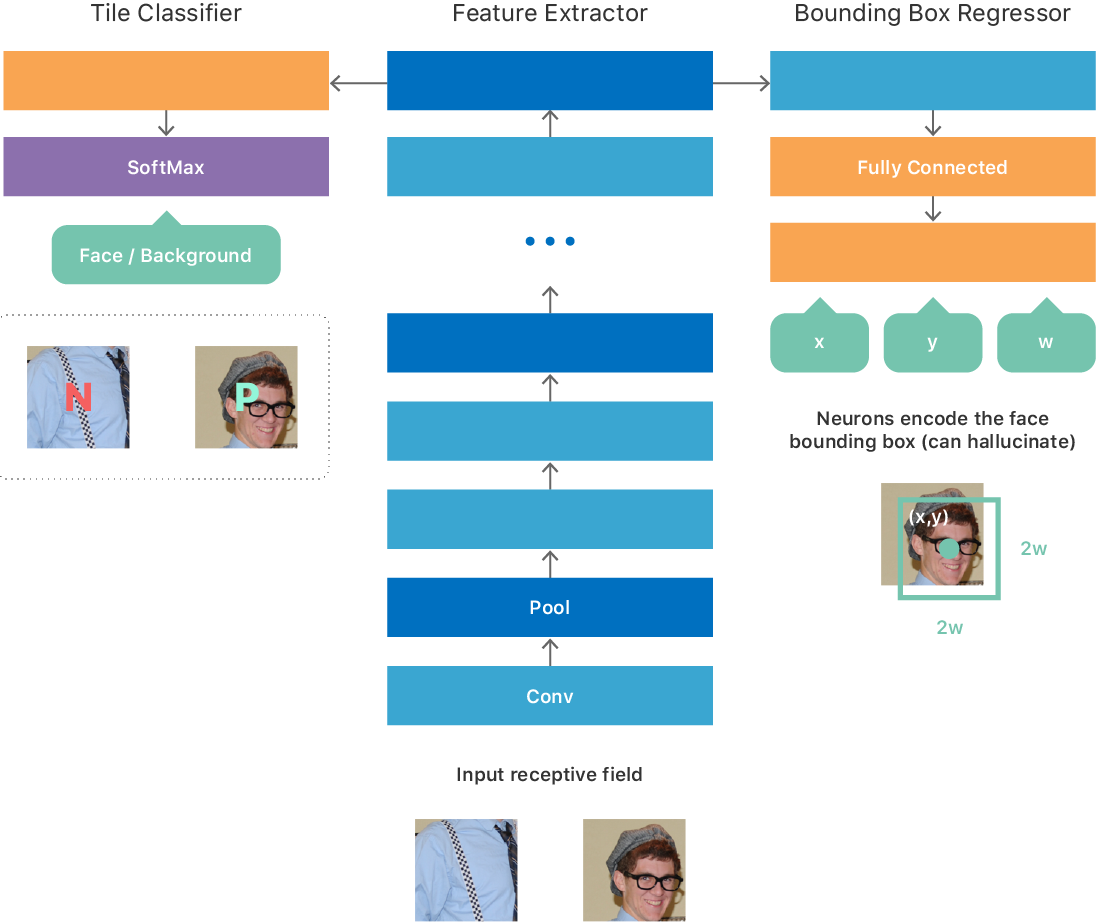

We based our initial architecture on some of the ideas in the OverFeat paper, which resulted in a fully convolutional network (see Fig. 1) with a multitask objective:

- binary classification to predict the presence or absence of a face in the input;

- regression to predict the parameters of the bounding box that best localizes the face in the input.

We experimented with several ways of training such a network. For example, one simple training procedure is to create a large data set of fixed-size image tiles that match the smallest valid input size of the network, so that each tile produces a single output. The training data set is ideally balanced: half of the tiles contain a face (positive class) and the other half do not (negative class). For each positive tile, we provide the true location (x, y, w, h) of the face. We train the network to optimize the multitask objective described above. Once trained, the network can predict whether a tile contains a face and, if so, it also gives the coordinates and scale of the face within the tile.
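As a rough illustration of such a multitask objective for a single training tile, the sketch below adds a classification term and a box regression term. The article does not name the loss functions or their weighting; binary cross-entropy, squared error, and the `box_weight` parameter are assumptions chosen for simplicity.

```python
import math


def multitask_loss(p_face, box_pred, is_face, box_true=None, box_weight=1.0):
    """Loss for one tile: classification term plus box regression on positives.

    p_face   -- predicted probability that the tile contains a face
    box_pred -- predicted (x, y, w, h)
    is_face  -- ground-truth label of the tile
    box_true -- ground-truth (x, y, w, h); only used for positive tiles
    """
    eps = 1e-7
    p = min(max(p_face, eps), 1.0 - eps)  # keep log() finite
    # Assumed classification loss: binary cross-entropy.
    classification = -math.log(p) if is_face else -math.log(1.0 - p)
    if not is_face:
        # Negative tiles have no face box, so they contribute no regression term.
        return classification
    # Assumed regression loss: squared error over the four box parameters.
    regression = sum((a - b) ** 2 for a, b in zip(box_pred, box_true))
    return classification + box_weight * regression
```

Masking the regression term on negative tiles reflects the fact that true coordinates are provided only for positive tiles.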

Fig. 1. A revised DCN architecture for face detection.

Since the network is fully convolutional, it can efficiently process an image of arbitrary size and produce a 2D output map. Each point on the map corresponds to a tile of the input image and contains the network's prediction of whether a face is present in that tile, together with its location and scale (see the DCN input and output in Fig. 1).
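The mapping from output map cells back to image coordinates might look like the following sketch. The stride and tile size reuse the 16 and 32 pixel example values from the OverFeat discussion; the box encoding (fractions of the tile, relative to its top-left corner) and the score threshold are assumptions, since the article does not specify them.

```python
def decode_candidates(score_map, box_map, stride=16, tile=32, threshold=0.5):
    """Turn a 2D output map into face candidates in image coordinates.

    score_map[r][c] -- face probability for the tile at row r, column c
    box_map[r][c]   -- (x, y, w, h) as fractions of the tile (assumed encoding)
    Returns a list of (x, y, w, h, score) tuples in pixels.
    """
    candidates = []
    for r, row in enumerate(score_map):
        for c, score in enumerate(row):
            if score < threshold:
                continue  # the network predicts no face in this tile
            x, y, w, h = box_map[r][c]
            # Top-left corner of the input tile that produced this map cell.
            tile_x, tile_y = c * stride, r * stride
            candidates.append((tile_x + x * tile, tile_y + y * tile,
                               w * tile, h * tile, score))
    return candidates
```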

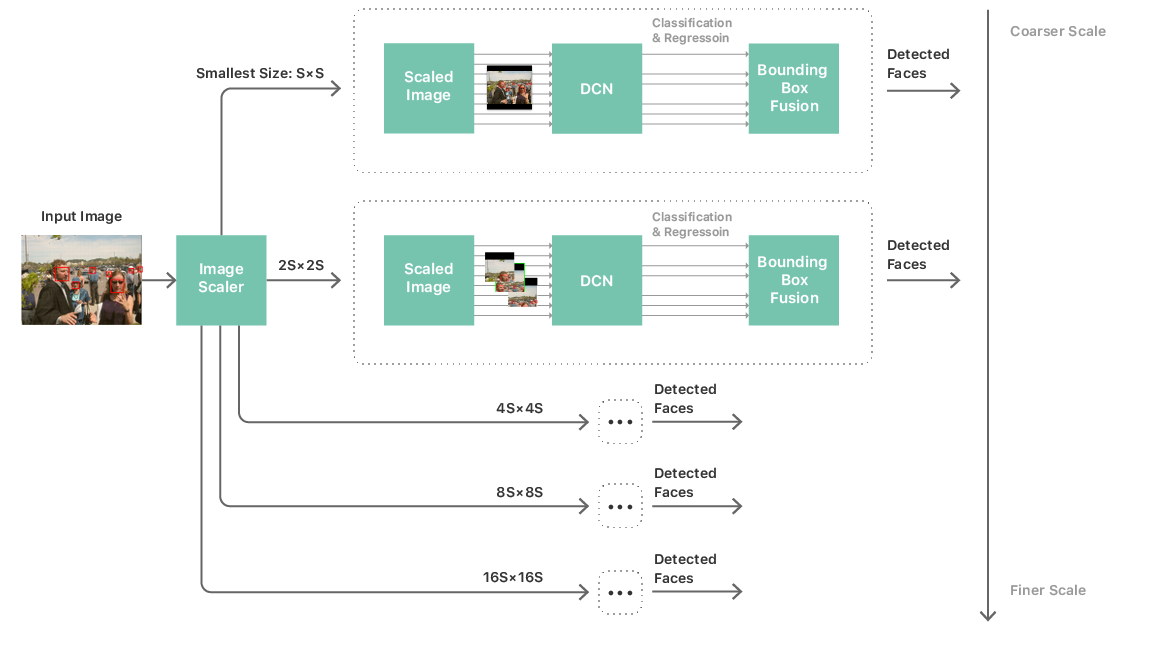

With such a network, we could build a fairly standard processing pipeline for face detection. It consists of a multi-scale image pyramid, the face detector network, and a post-processing module. The multi-scale pyramid is needed to handle faces of all sizes. The network is applied at each level of the pyramid, and candidate detections are collected from every level (see Fig. 2). The post-processing module then combines the candidates from all scales to produce a list of bounding boxes that represents the network's final prediction of the faces in the image.

Fig. 2. The face detection workflow.
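The pyramid and post-processing stages can be sketched as follows. The article does not say how candidates are combined; greedy non-maximum suppression is a common choice and is assumed here, as are the scale factor of 0.5 between pyramid levels and the overlap threshold.

```python
def pyramid_scales(levels=5, factor=0.5):
    """Scale of each pyramid level relative to the original image (assumed factor)."""
    return [factor ** i for i in range(levels)]


def to_original(box, scale):
    """Map a candidate (x, y, w, h, score) found at a pyramid level back to full size."""
    x, y, w, h, score = box
    return (x / scale, y / scale, w / scale, h / scale, score)


def iou(a, b):
    """Intersection over union of two (x, y, w, h, ...) boxes."""
    ax, ay, aw, ah = a[:4]
    bx, by, bw, bh = b[:4]
    ix = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    iy = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    inter = ix * iy
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def merge_candidates(boxes, iou_threshold=0.5):
    """Greedy non-maximum suppression: keep the best-scoring box of each overlapping group."""
    kept = []
    for box in sorted(boxes, key=lambda b: b[4], reverse=True):
        if all(iou(box, k) < iou_threshold for k in kept):
            kept.append(box)
    return kept
```

In use, candidates from every level would first be passed through `to_original` with that level's scale and then merged in a single call to `merge_candidates`.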

This strategy brought us closer to running a deep convolutional network with full image scanning on mobile hardware. But the complexity and size of the network remained the key performance bottlenecks. Overcoming them meant not only restricting the network to a simple topology, but also limiting the number of layers, the number of channels per layer, and the kernel size of the convolutional filters. These restrictions raised a crucial problem: the networks that produced acceptable accuracy were anything but simple, most of them having more than 20 layers plus several network-in-network modules [3]. Using such networks in the image scanning framework described above was completely infeasible because of unacceptable performance and power consumption. In fact, we could not even load such a network into memory. The challenge then became how to train a simple, compact network that could mimic the behavior of the accurate but highly complex networks.

We decided to use an approach informally known as teacher-student training [4]. It provides a mechanism for training a second, thin-and-deep network (the “student”) so that its outputs closely match those of the large, complex network (the “teacher”) that we trained as described above. The student network consists of a simple repeating structure of 3 × 3 convolutions and pooling layers, and its architecture is tailored to make the best use of our neural network inference engine (see Fig. 1).
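The core idea, training the student against the teacher's outputs rather than against ground-truth labels, can be shown with a deliberately tiny sketch. Everything here is a stand-in: the "teacher" is a fixed linear function, the "student" is a two-parameter linear model, and the loss is a plain mean squared error. Real teacher-student training, including the intermediate hints used in FitNets [4], is considerably more involved.

```python
def teacher(x):
    """Hypothetical stand-in for the large, accurate network."""
    return 3.0 * x + 1.0


def distillation_loss(student_out, teacher_out):
    """Mean squared difference between student and teacher outputs."""
    return sum((s - t) ** 2 for s, t in zip(student_out, teacher_out)) / len(student_out)


def train_student(inputs, steps=2000, lr=0.05):
    """Fit a student w * x + b to the teacher's outputs by gradient descent."""
    w, b = 0.0, 0.0
    n = len(inputs)
    targets = [teacher(x) for x in inputs]  # no ground-truth labels are needed
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, t in zip(inputs, targets):
            err = (w * x + b) - t
            grad_w += 2.0 * err * x / n
            grad_b += 2.0 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


inputs = [-1.0, -0.5, 0.0, 0.5, 1.0]
w, b = train_student(inputs)
student_out = [w * x + b for x in inputs]
print(distillation_loss(student_out, [teacher(x) for x in inputs]))
```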

Now, finally, we had a deep neural network algorithm for face detection that was feasible to run on mobile hardware. We iterated through several rounds of training and obtained a network model accurate enough for the intended tasks. Although this model was accurate and able to run on a mobile device, a tremendous amount of work remained to make it practical to deploy on millions of user devices.

Image Processing Pipeline Optimization

Practical considerations around deep learning heavily influenced our design choices for an easy-to-use developer framework, which we call Vision. It quickly became apparent that great algorithms alone are not enough to make a great framework. We also had to build a highly optimized image processing pipeline.

We did not want developers to have to think about scaling, color conversion, or image sources. Face detection should work well whether the input is a live camera stream, a video being processed, or an image file from disk or the web. It should work regardless of the image's representation and format.

We were concerned about power consumption and memory usage, especially during streaming and image capture. Memory footprint was a worry, for instance, when processing 64-megapixel panoramas. We addressed these concerns with partial subsampled decoding and automatic tiling, which let us run computer vision tasks on large images even with non-standard aspect ratios.
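Automatic tiling of a large image can be sketched as below. The tile size, the overlap, and the policy of anchoring the last tile to the image edge are illustrative assumptions; the article does not describe how Vision chooses its tiles.

```python
def axis_offsets(length, tile, overlap):
    """Start offsets of tiles along one axis, covering the whole length."""
    if length <= tile:
        return [0]
    step = tile - overlap
    offsets = list(range(0, length - tile, step))
    offsets.append(length - tile)  # last tile is anchored to the far edge
    return offsets


def tile_image(width, height, tile=1024, overlap=128):
    """Return (x, y, w, h) rectangles that together cover the image.

    Neighbouring tiles overlap so that an object straddling a tile border
    is still fully contained in at least one tile, as long as it is no
    larger than the overlap.
    """
    tile_w, tile_h = min(tile, width), min(tile, height)
    return [(x, y, tile_w, tile_h)
            for y in axis_offsets(height, tile, overlap)
            for x in axis_offsets(width, tile, overlap)]
```

Processing one tile at a time bounds peak memory by the tile size rather than by the size of the full panorama.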

Another challenge was color space matching. Apple has a broad set of color space APIs, but we did not want to burden developers with choosing a color space. The Vision framework handles this itself, lowering the barrier to adopting computer vision in any app.

Vision also optimizes by efficiently reusing and handling intermediate results. Face detection, face landmark detection, and several other computer vision tasks all work from the same scaled intermediate image. By abstracting the interface to the algorithm level and finding the optimal place to process an image or buffer, Vision can create and cache intermediate images, which improves the performance of multiple computer vision tasks without any work on the developer's part.
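A minimal sketch of this kind of intermediate reuse follows. The class, its method, and the cache key (width, height, color space) are hypothetical and are not Vision's API; the point is only that two tasks asking for the same intermediate trigger a single conversion.

```python
class IntermediateCache:
    """Caches scaled or converted versions of one source image."""

    def __init__(self, source, convert):
        self._source = source
        # Hypothetical callable: (source, width, height, color_space) -> image
        self._convert = convert
        self._cache = {}

    def get(self, width, height, color_space):
        key = (width, height, color_space)
        if key not in self._cache:
            # First request for this format: do the expensive conversion once.
            self._cache[key] = self._convert(self._source, width, height, color_space)
        return self._cache[key]


conversions = []


def fake_convert(source, width, height, color_space):
    conversions.append((width, height, color_space))
    return f"{source}@{width}x{height}/{color_space}"


cache = IntermediateCache("photo", fake_convert)
face_input = cache.get(320, 240, "sRGB")      # converted here
landmark_input = cache.get(320, 240, "sRGB")  # served from the cache
print(len(conversions))  # 1
```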

The converse is also true. From the perspective of the central interface, we can steer algorithm development toward better reuse or sharing of intermediate data. Vision hosts several different, independent computer vision algorithms. For these algorithms to work well together, they use the same input resolutions and color spaces wherever possible.

Performance Optimization for Mobile Hardware

The joy of an easy-to-use framework quickly fades if the face detection API cannot work in real time or in background system processes. Users expect face detection to run automatically and unobtrusively while their photo libraries are processed, or immediately after a picture is taken. They do not want it to drain the battery or slow the system down. Apple mobile devices are multitasking devices, so background computer vision processing must not significantly affect the rest of the system.

We implemented several strategies to minimize memory footprint and GPU usage. To reduce memory usage, we allocate the intermediate layers of our neural networks by analyzing the compute graph. This lets us alias multiple layers to the same buffer. The technique is fully deterministic, yet it reduces memory footprint without affecting performance or fragmenting memory, and it can be used on both the CPU and the GPU.
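One way to assign several layers to a shared buffer is to look at when each layer's output is last needed in the graph and reuse buffers whose contents are no longer live. The greedy first-fit policy and the data layout below are assumptions made for illustration; the article does not describe the actual algorithm in this much detail.

```python
def assign_buffers(lifetimes):
    """Assign layer outputs to shared buffers based on when they are live.

    lifetimes -- list of (name, size, first_step, last_step), sorted by
                 first_step; an output is live from the step that produces
                 it through the last step that reads it.
    Returns (assignment, total_size), where assignment maps name -> buffer index.
    """
    buffers = []  # each entry: {"size": bytes needed, "free_after": last live step}
    assignment = {}
    for name, size, first, last in lifetimes:
        for index, buf in enumerate(buffers):
            if buf["free_after"] < first:
                # Previous contents are dead: reuse this buffer, growing it if needed.
                buf["size"] = max(buf["size"], size)
                buf["free_after"] = last
                assignment[name] = index
                break
        else:
            buffers.append({"size": size, "free_after": last})
            assignment[name] = len(buffers) - 1
    return assignment, sum(buf["size"] for buf in buffers)


# A simple chain: each layer's output is read only by the next layer.
chain = [("conv1", 100, 0, 1), ("conv2", 80, 1, 2),
         ("conv3", 60, 2, 3), ("conv4", 40, 3, 4)]
assignment, total = assign_buffers(chain)
print(assignment)  # {'conv1': 0, 'conv2': 1, 'conv3': 0, 'conv4': 1}
print(total)       # 180, versus 280 with one buffer per layer
```

Because the assignment depends only on the graph, it is computed once ahead of time and gives the same result on every run.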

The Vision face detector works with five networks (one for each level of the multi-scale pyramid, as shown in Fig. 2). The five networks share the same weights and parameters, but have different shapes for their input, output, and intermediate layers. To reduce the footprint further, we run the memory optimization algorithm on the joint graph composed of these five networks, which significantly reduces memory consumption. In addition, all the networks reuse the same weight and parameter buffers, again reducing the amount of allocated memory.

To achieve better performance, we exploit the fully convolutional nature of the network: all scales are dynamically resized to match the resolution of the input image. Compared with the alternative of fitting the image into a square network input (padded with empty bands), fitting the network to the size of the image drastically reduces the total number of operations. Because the reshape does not change the topology of the operations, and because the rest of the allocator is fast, dynamic reshaping adds no allocation-related overhead.
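A back-of-the-envelope estimate of the saving, assuming the cost of a fully convolutional network is proportional to the number of input pixels (a simplification that ignores border effects and fixed overheads):

```python
def relative_cost(width, height):
    """Cost of running on the native image size relative to a padded square input."""
    side = max(width, height)  # side of the square the image would be padded to
    return (width * height) / (side * side)


print(relative_cost(640, 480))   # 0.75: a 4:3 image needs about 25% fewer operations
print(relative_cost(1600, 400))  # 0.25: a 4:1 panorama needs about 75% fewer
```

The image sizes are arbitrary examples; the more elongated the image, the more work the padded bands would waste.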

To keep the UI responsive and fluid while deep neural networks run in the background, we split the GPU work items for each layer of the network so that each one takes less than a millisecond. This lets the driver switch contexts in time to higher-priority tasks, such as UI animations, which reduces and sometimes completely eliminates dropped frames.
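Splitting one layer's work into short items might be sketched as follows. The assumptions here are that a layer's cost can be estimated in advance, that it scales roughly linearly with the number of output rows, and that rows are a sensible unit to split on; none of these details come from the article.

```python
import math


def split_layer_work(rows, estimated_ms, budget_ms=1.0):
    """Split a layer's output rows into chunks expected to fit the time budget.

    Returns a list of (start_row, end_row) half-open ranges.
    """
    chunks = max(1, math.ceil(estimated_ms / budget_ms))
    chunks = min(chunks, rows)  # cannot split finer than one row per chunk
    base, extra = divmod(rows, chunks)
    ranges = []
    start = 0
    for i in range(chunks):
        count = base + (1 if i < extra else 0)  # spread the remainder evenly
        ranges.append((start, start + count))
        start += count
    return ranges


print(split_layer_work(100, 3.5))  # [(0, 25), (25, 50), (50, 75), (75, 100)]
print(split_layer_work(10, 0.4))   # [(0, 10)]
```

Each range would be submitted as a separate GPU work item, giving the driver a chance to schedule higher-priority work between items.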

Together, these strategies ensure that users enjoy local, low-latency, private computer vision without even being aware that the neural networks on their phone are performing several hundred billion floating-point operations every second.

Using the Vision Framework

Did we achieve our goal of developing a high-performance, easy-to-use face detection API? You can try the Vision framework and judge for yourself. Here are some resources to get you started:

- WWDC presentation: “Vision Framework: Building on Core ML”

- Vision Framework Reference

- Tutorial: “Core ML and Vision: Machine Learning in iOS 11” [5]

References

[1] Viola, P., Jones, M. J. Robust Real-time Object Detection Using a Boosted Cascade of Simple Features. Published in Proceedings of the Computer Vision and Pattern Recognition Conference, 2001.

[2] Sermanet, Pierre, David Eigen, Xiang Zhang, Michael Mathieu, Rob Fergus, Yann LeCun. OverFeat: Integrated Recognition, Localization and Detection Using Convolutional Networks. arXiv:1312.6229 [cs], December 2013.

[3] Lin, Min, Qiang Chen, Shuicheng Yan. Network In Network. arXiv:1312.4400 [cs], December 2013.

[4] Romero, Adriana, Nicolas Ballas, Samira Ebrahimi Kahou, Antoine Chassang, Carlo Gatta, Yoshua Bengio. FitNets: Hints for Thin Deep Nets. arXiv:1412.6550 [cs], December 2014.

[5] Tam, A. Core ML and Vision: Machine Learning in iOS 11 Tutorial. Retrieved from www.raywenderlich.com, September 2017.