How Unreal Engine renders a frame


I was once browsing the Unreal source code and, inspired by an excellent analysis of how popular games render a frame (a translation of that article is available on Habr), decided to do something similar with it to learn how the engine renders a frame (with the scene's default parameters and settings).

Since we have access to the source code, we could study the renderer's source to understand what it does. However, it is a rather large part of the engine, and the rendering paths are highly context dependent, so it is easier to examine the raw low-level API calls (looking into the code now and then to fill in the blanks).

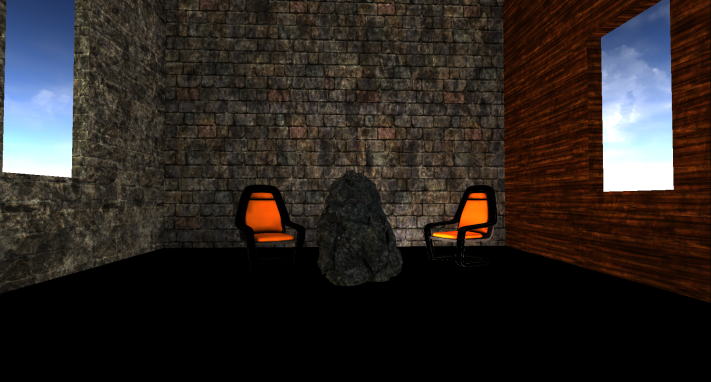

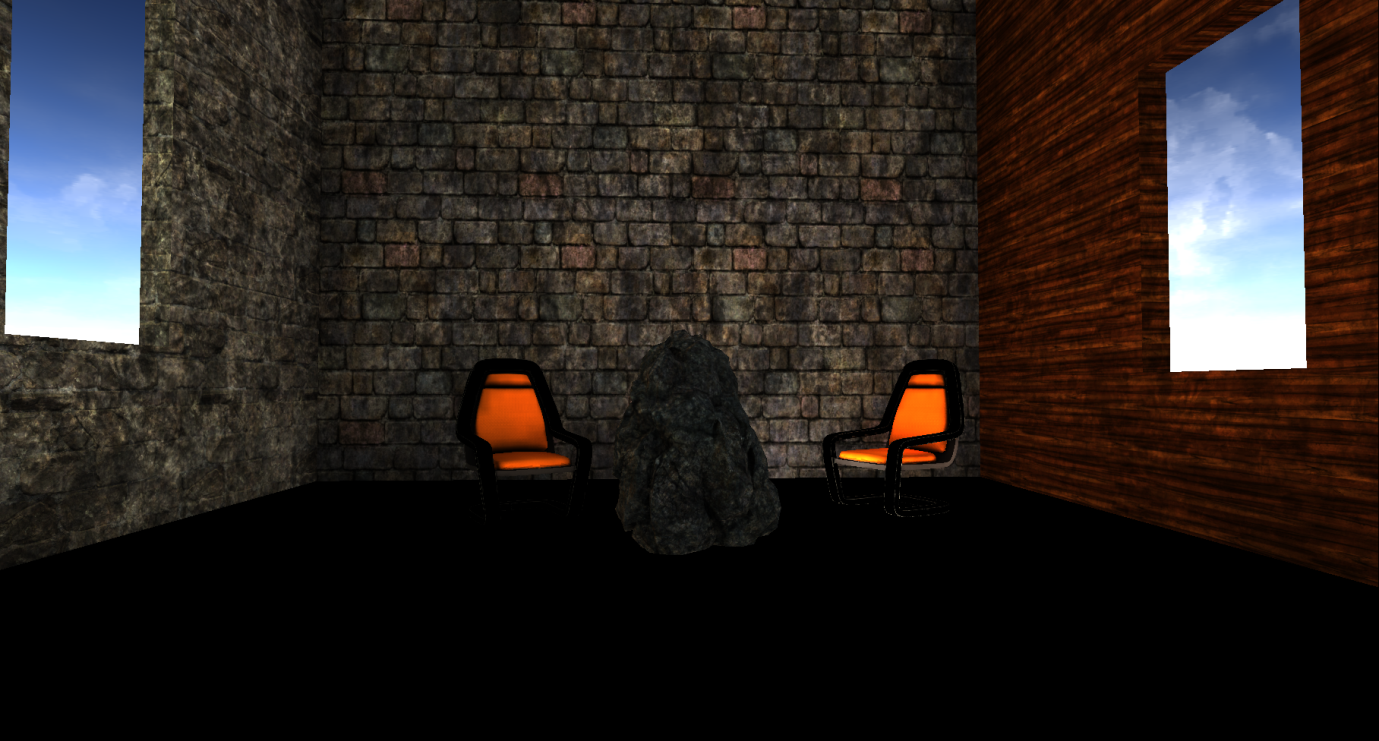

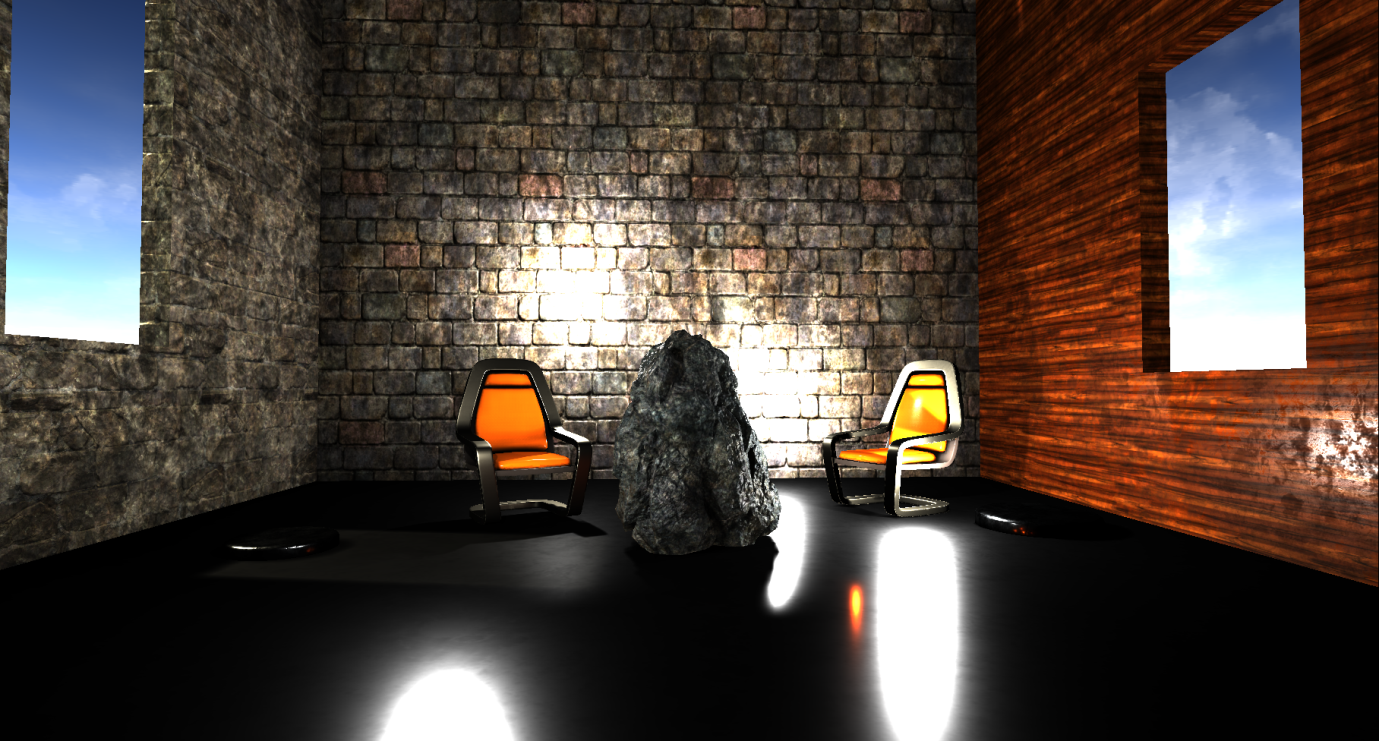

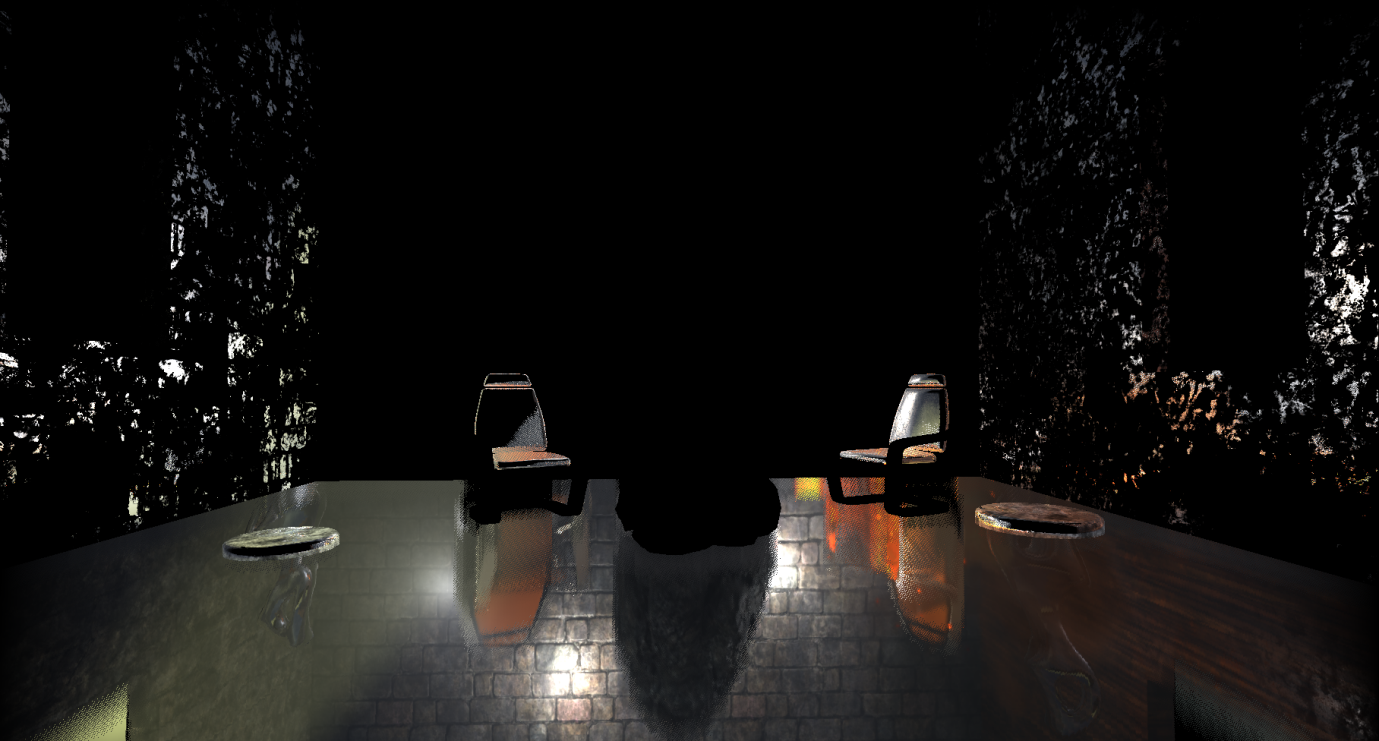

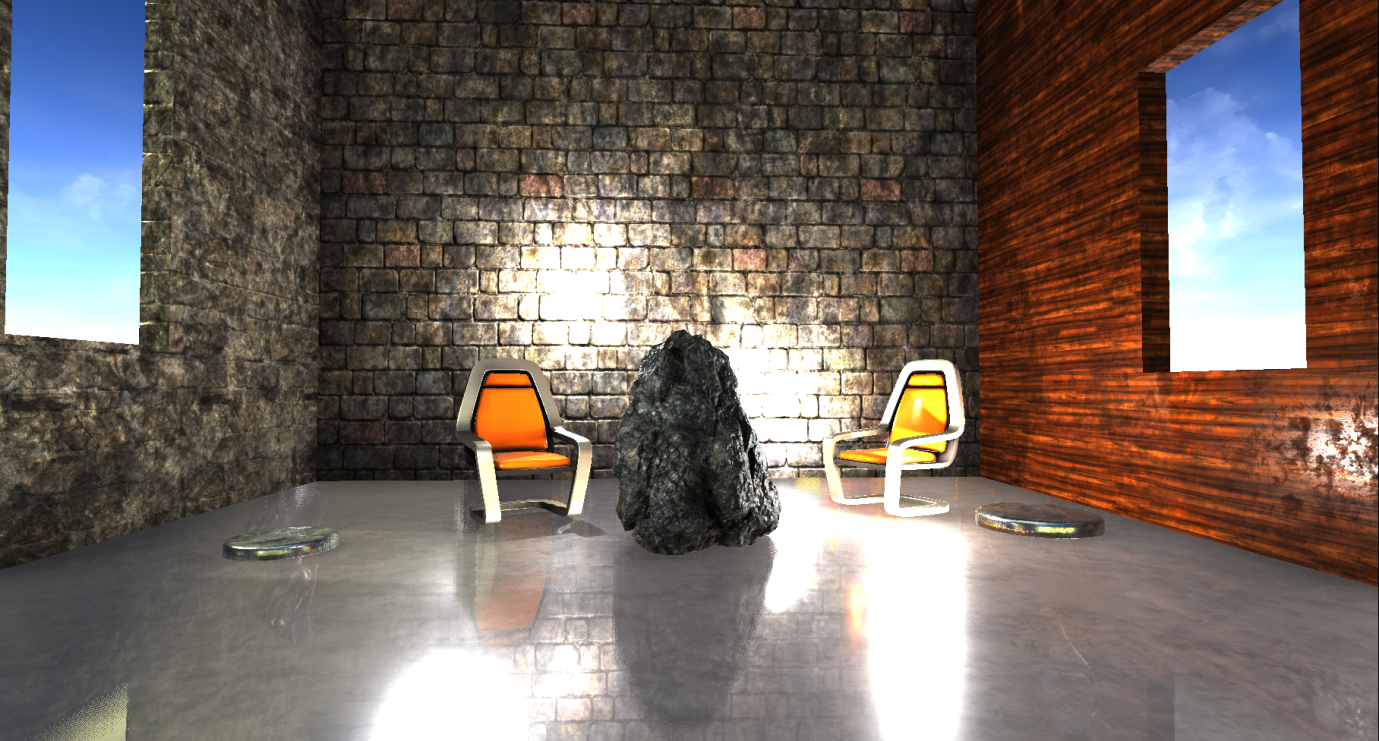

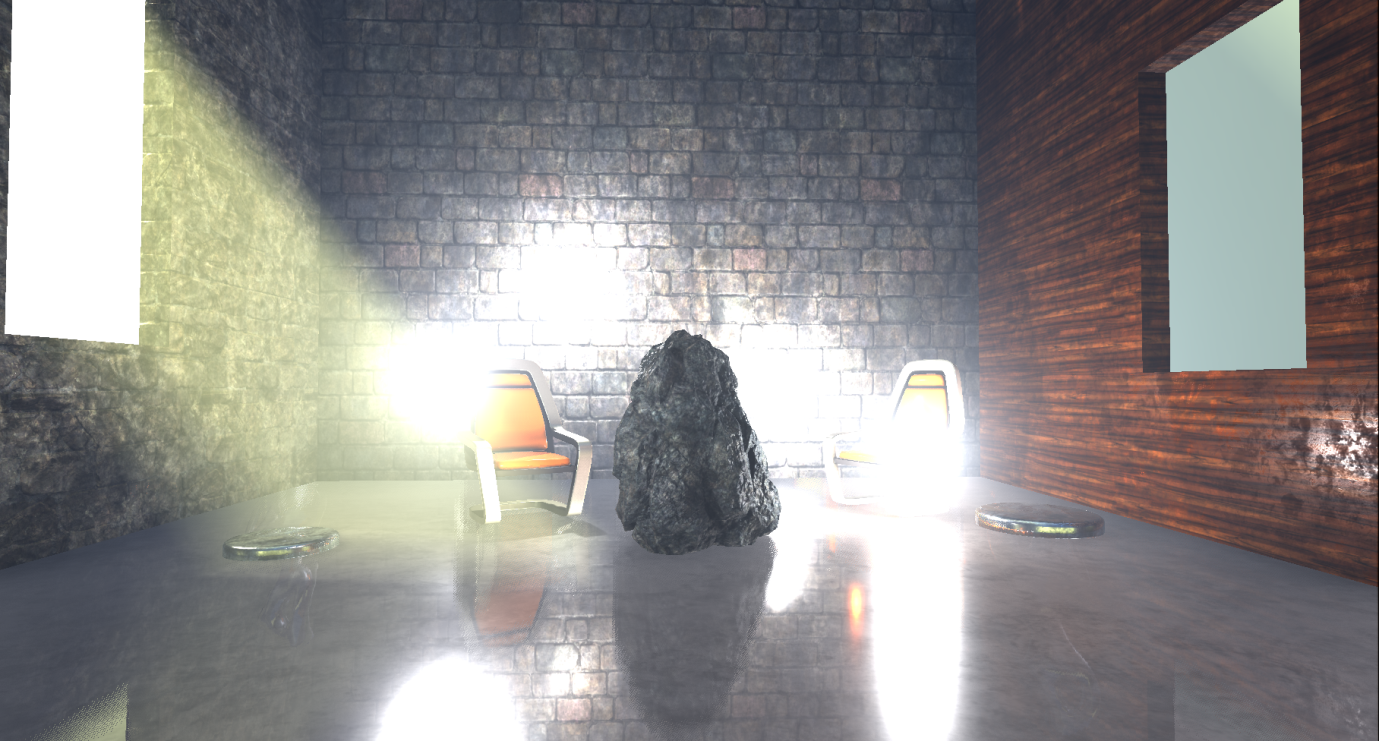

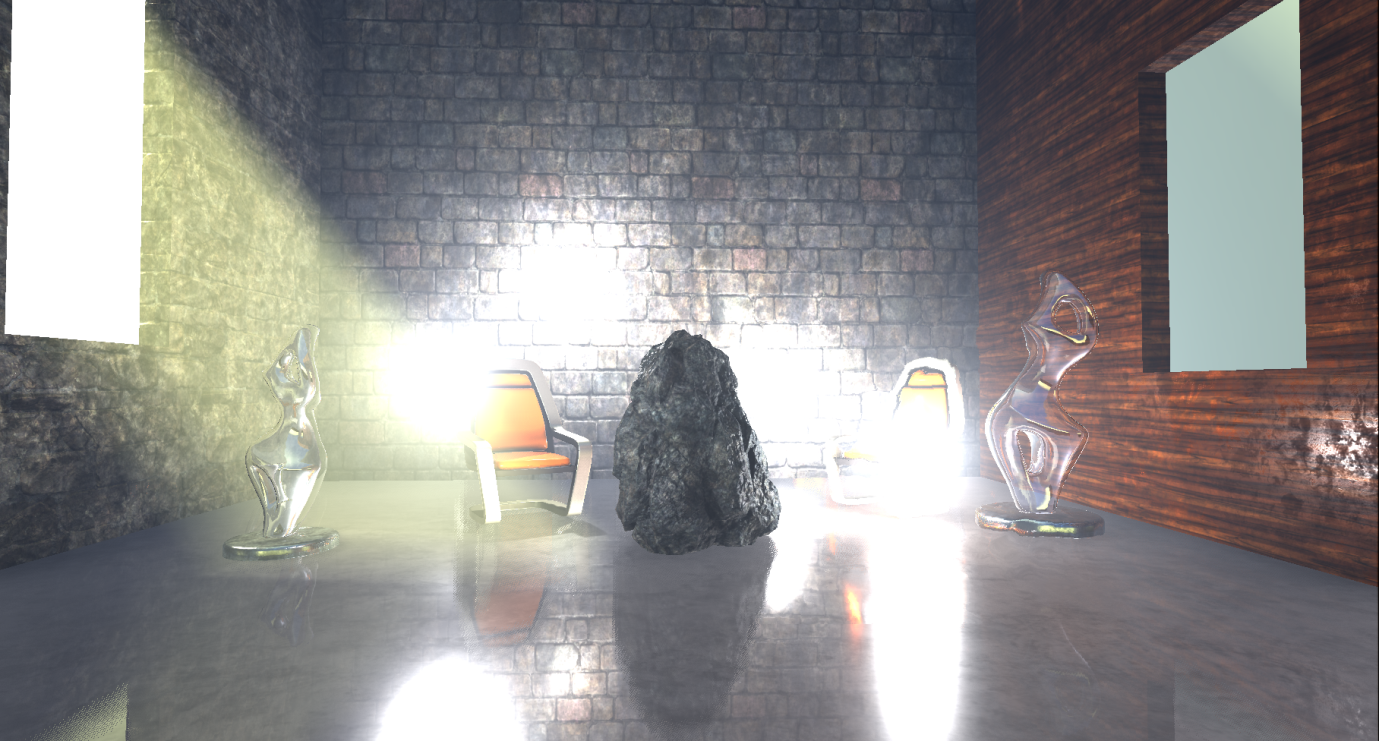

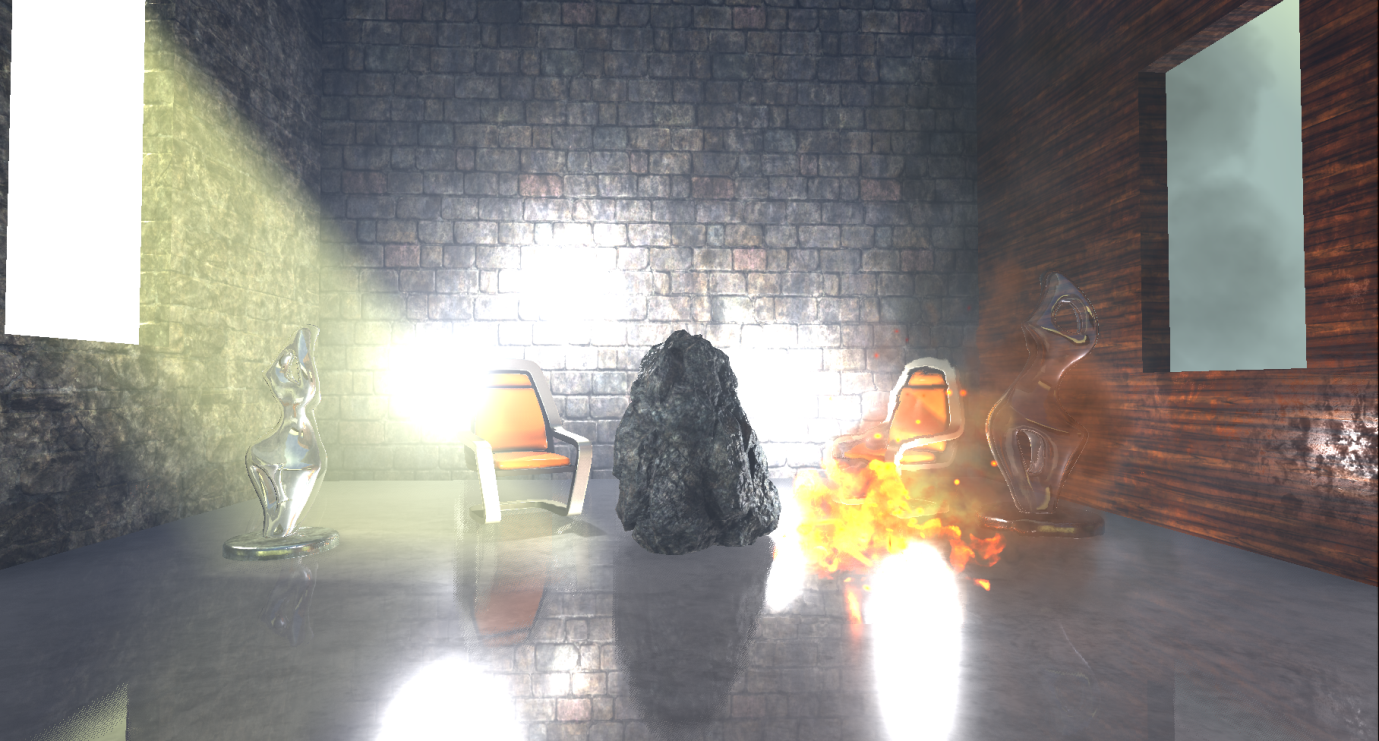

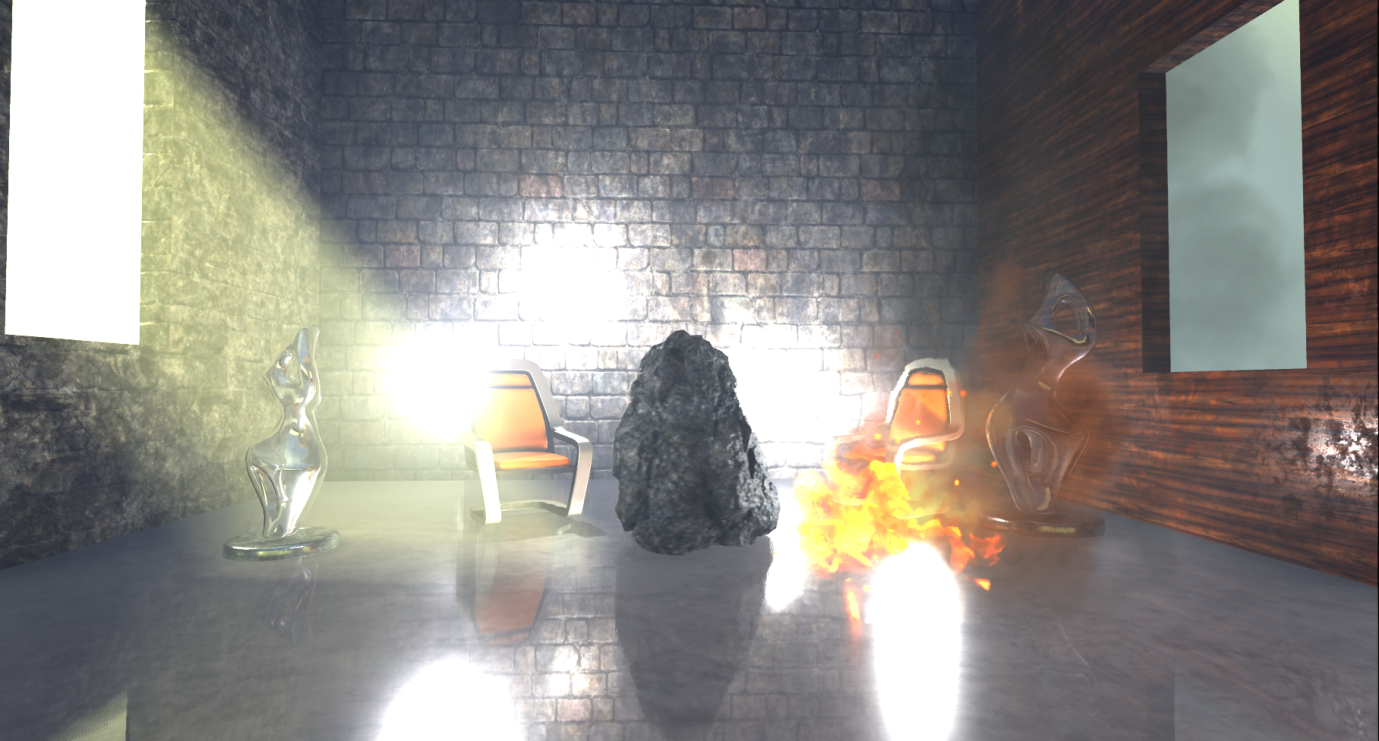

I put together a simple scene with several static and dynamic props, several light sources, volumetric fog, transparent objects and particle effects to use a wide enough range of materials and rendering methods.

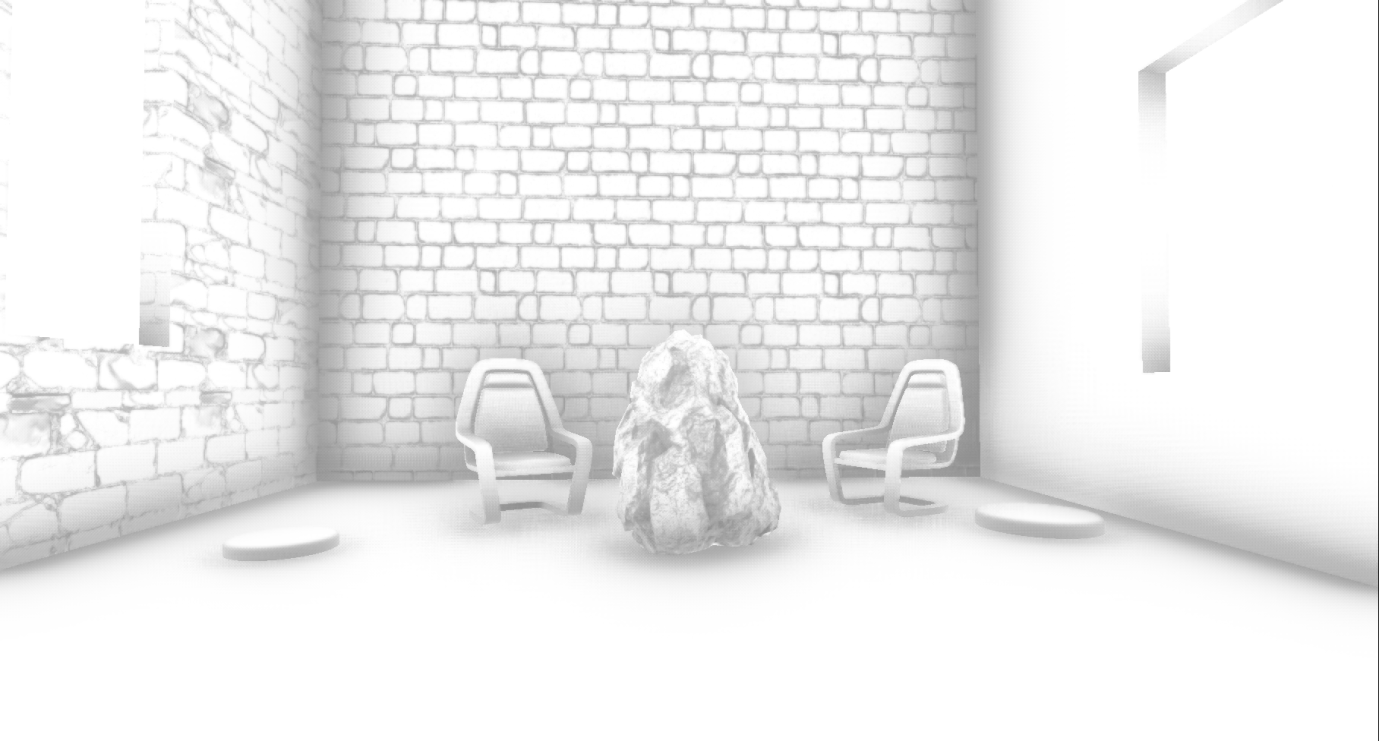

So I ran the Editor through RenderDoc and triggered a capture. This may not be too similar to what a frame of a real game looks like, but it gives us an approximate idea of how Unreal renders a standard frame (I did not change any settings and chose maximum quality for PC):

Note: the analysis is based on the GPU capture and the renderer source code (version 4.17.1). Before this project I did not have much experience with Unreal. If I missed something or got something wrong, let me know in the comments.

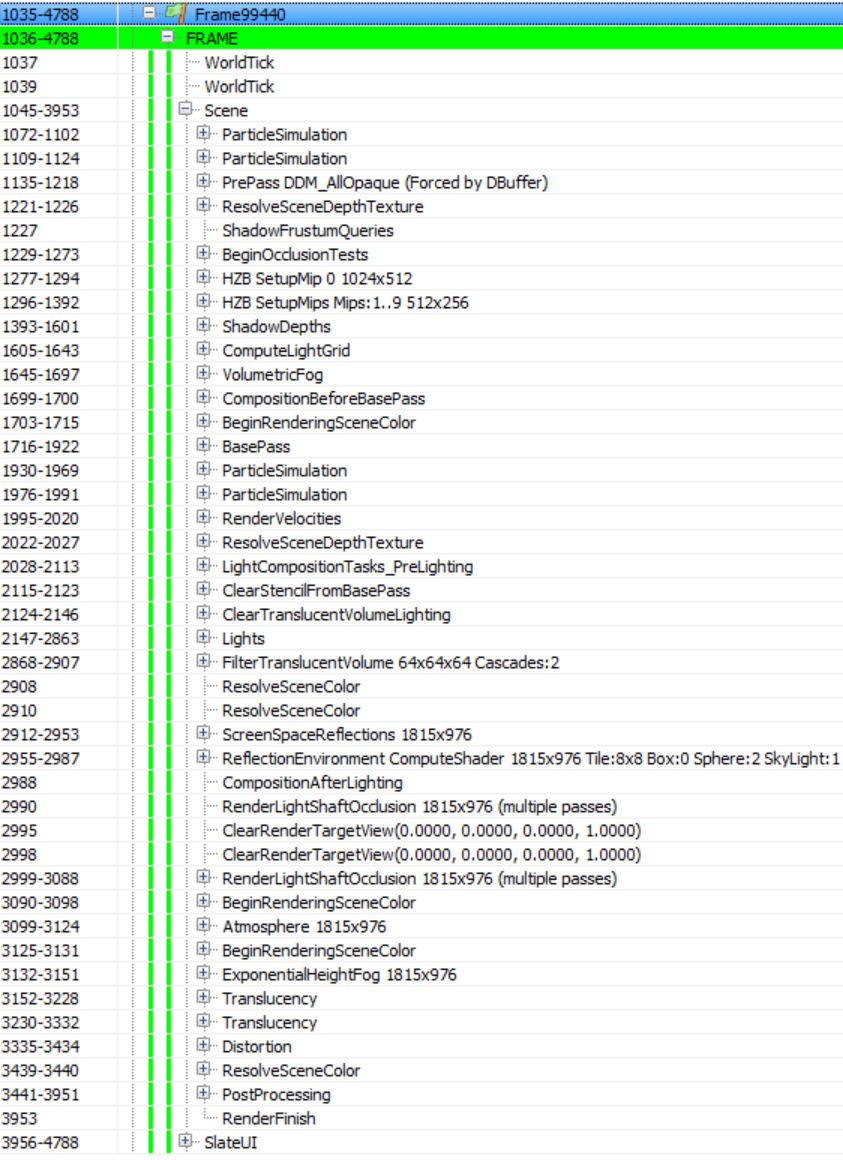

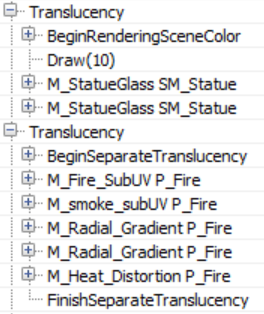

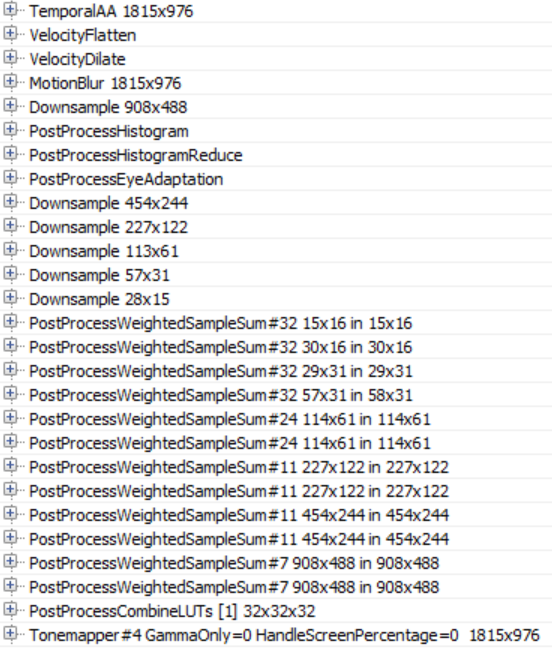

Fortunately, Unreal's draw call list is clear and well annotated, which simplifies our work. The list may look different if some entities or materials are missing from the scene, or if you select a lower quality level. For example, if you render without particles, the ParticleSimulation passes will be absent.

The SlateUI rendering pass contains all the API calls made by the Unreal Editor to render its UI, so we will skip it and focus on the passes in the Scene section.

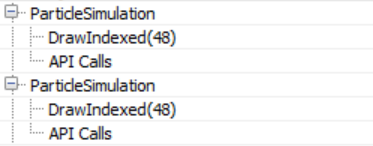

Particle Simulation

The frame begins with the ParticleSimulation pass. It computes particle motion and other properties on the GPU for each particle emitter in the scene, writing to two render targets: RGBA32_Float (positions) and RGBA16_Float (velocities and a pair of time/life related values). Here, for example, is the output for the RGBA32_Float render target, where each pixel corresponds to the world position of a sprite:

It seems that in this case the particle effect I added to the scene has two emitters that require GPU simulation without collision, so the corresponding rendering passes can run early in the frame.

Z-buffer pre-pass

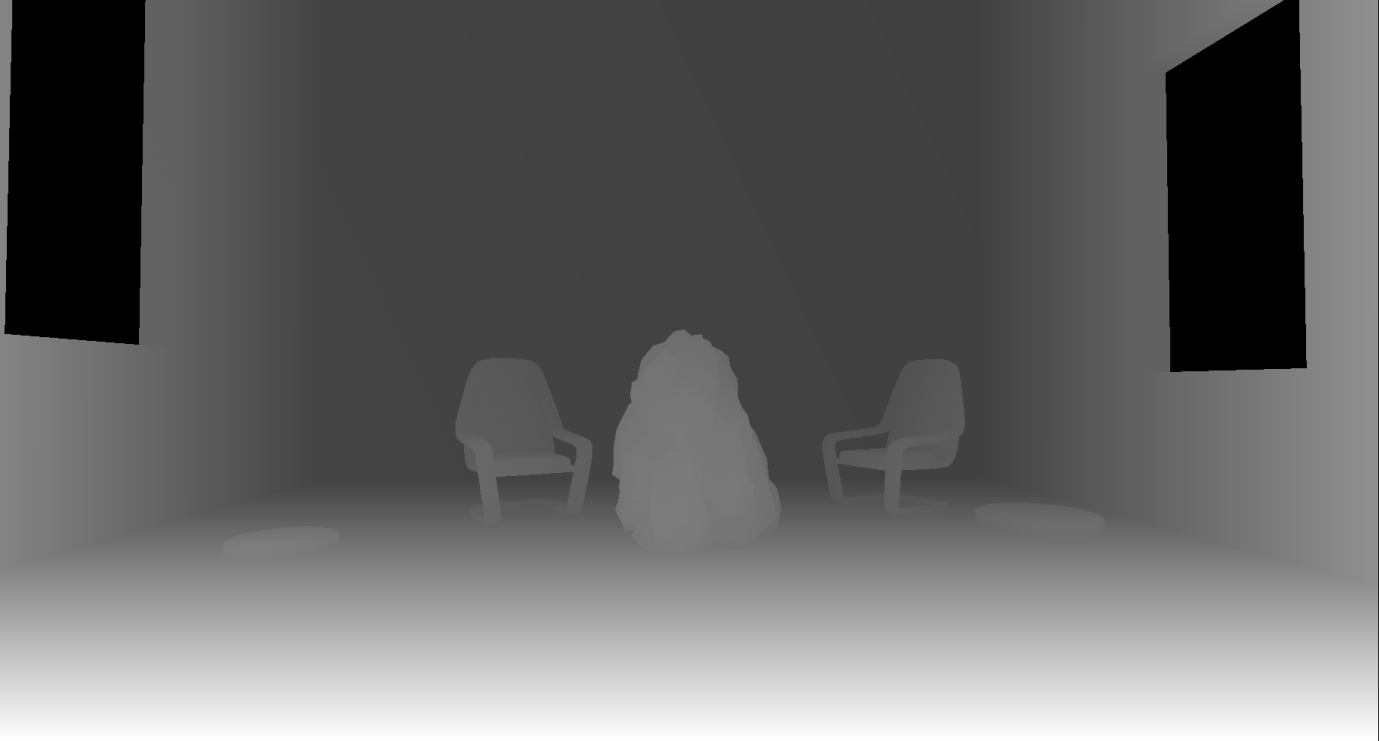

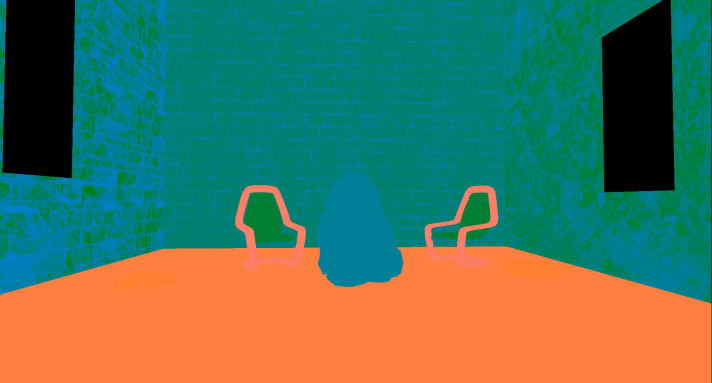

Next comes the PrePass rendering pass, which is a Z-buffer pre-pass. It renders all opaque meshes into an R24G8 depth buffer:

It is worth noting that Unreal uses reverse-Z when rendering to the depth buffer. This means that the near plane maps to 1 and the far plane to 0. This gives better precision across the depth range and reduces z-fighting on distant meshes. The name of the rendering pass suggests that it was triggered by the DBuffer. This is the name of the decal buffer that Unreal Engine uses to render deferred decals. It requires scene depth, so it activates the Z-buffer pre-pass. But, as we will see below, the Z-buffer is also used in other contexts, for example for occlusion calculations and screen-space reflections.
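The reverse-Z mapping described above can be sketched in a few lines of Python. This is a minimal illustration for a standard perspective projection with finite near and far planes; the function name and the plane distances are assumptions made for the example, not values taken from the capture or from the engine source.

```python
def reverse_z_depth(view_z: float, near: float, far: float) -> float:
    """Map a view-space distance to a reverse-Z depth value.

    The near plane maps to 1.0 and the far plane to 0.0, which is the
    opposite of a conventional depth buffer.
    """
    return near * (far - view_z) / (view_z * (far - near))


# Hypothetical plane distances, chosen only for the demonstration.
NEAR, FAR = 0.1, 1000.0

for z in (NEAR, 1.0, 10.0, 100.0, FAR):
    print(f"view z = {z:8.1f} -> reverse-Z depth = {reverse_z_depth(z, NEAR, FAR):.6f}")
```

Depth values shrink towards zero as the distance grows, which is where floating-point formats have the most precision to spare.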

Some rendering passes in the list are empty, e.g. ResolveSceneDepth, which I believe is needed on platforms that actually require "resolving" a render target's depth before using it as a texture (the PC does not need it), as well as ShadowFrustumQueries, which looks like a dummy marker because the actual shadow occlusion tests are performed in the next rendering pass.

Occlusion Tests

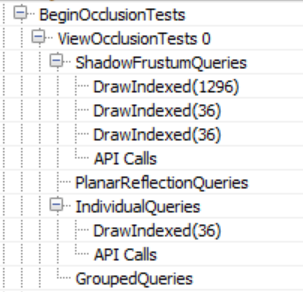

BeginOcclusionTests handles all occlusion tests in the frame. By default, Unreal uses hardware occlusion queries for occlusion testing. In short, this works in three steps:

- We render everything that we regard as an occluder (for example, a large opaque mesh) into the depth buffer.

- We create an occlusion query, issue it and render the prop whose occlusion we want to determine. This is done using the z-test and the depth buffer produced in step 1. The query returns the number of pixels that passed the z-test, so if the value is zero, the prop is entirely behind an opaque mesh. Since rendering a prop's full mesh for an occlusion test can be costly, we use the prop's bounding box as a proxy. If the box is not visible, the prop is definitely not visible either.

- We read the query results back to the CPU and, based on the number of pixels rendered, decide whether to submit the prop for rendering or not (even if a small number of pixels is visible, we may decide that the prop is not worth rendering).
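The three steps above can be imitated on the CPU with a tiny software depth buffer. This is only a sketch of the idea, not how the hardware or Unreal implements it: the function name, the 4x4 buffer and the depth values are all made up for illustration, and the proxy box is reduced to a list of covered pixels at a single depth.

```python
def occlusion_query(depth_buffer, footprint, proxy_depth):
    """Count the pixels of a proxy footprint that pass the depth test.

    depth_buffer: 2D list of reverse-Z depths (1 = near, 0 = far).
    footprint:    (x, y) pixels covered by the prop's bounding box.
    proxy_depth:  reverse-Z depth of the box's nearest face.
    """
    visible = 0
    for x, y in footprint:
        # Reverse-Z: a larger value is closer to the camera.
        if proxy_depth >= depth_buffer[y][x]:
            visible += 1
    return visible


# Step 1: occluders are already in the depth buffer. Columns 0-1 hold a
# wall at depth 0.8; the rest is the cleared far plane (0.0).
depth = [[0.8, 0.8, 0.0, 0.0] for _ in range(4)]

# Step 2: "draw" two bounding boxes that sit behind the wall's depth.
hidden = occlusion_query(depth, [(0, 0), (1, 0)], 0.5)
partly = occlusion_query(depth, [(1, 0), (2, 0)], 0.5)

# Step 3: the CPU decides what to render from the pixel counts.
print("fully behind the wall:", hidden, "visible pixels")
print("sticking out past the wall:", partly, "visible pixels")
```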

Unreal uses different types of occlusion queries depending on the context:

Hardware occlusion queries have their drawbacks. They have draw-call granularity: the renderer must issue one draw call per mesh (or mesh group) whose occlusion needs to be determined. They can significantly increase the number of draw calls per frame, and they require a read-back to the CPU, which adds synchronization points between the CPU and the GPU and makes the CPU wait until the GPU has finished processing the query. They are not well suited to instanced geometry, but we will ignore that for now.

Unreal solves the CPU-GPU synchronization problem like any other engine that uses queries: it defers reading the query data for a few frames. This approach works, but it can cause props to "pop" onto the screen when the camera moves fast (in practice this may not be a serious problem, because occlusion culling with bounding boxes is conservative, that is, a mesh will most likely be marked as visible before it actually becomes visible). The problem of extra draw calls remains, however, and it is not so easy to solve. Unreal tries to reduce its impact by grouping queries as follows. First, it renders all opaque geometry into the Z-buffer (the Z-buffer pre-pass described above). Then it issues individual queries for every prop that needs to be tested for occlusion. At the end of the frame, it retrieves the query data from the previous (or an even earlier) frame and decides each prop's visibility. If a prop is visible, the engine marks it for rendering in the next frame. If it is invisible, the engine adds it to a "grouped" query, which batches the bounding boxes of up to eight props and uses the batch to determine visibility during the next frame. If the group becomes visible in the next frame (as a whole), the engine breaks it up and issues individual queries again. If the camera and props are static (or move slowly), this approach reduces the number of required occlusion queries by a factor of eight. The only oddity

This process corresponds to the IndividualQueries and GroupedQueries markers in the draw call list above. The GroupedQueries part is empty because the engine was unable to create a query during the previous frame.
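To make the grouping scheme more concrete, here is a rough sketch of how queries could be planned from earlier visibility results. It is an illustration under assumptions, not the engine's code: `Box`, `union` and `plan_queries` are hypothetical names, props with no earlier result are treated as visible, and the batching simply follows dictionary order.

```python
from dataclasses import dataclass

MAX_GROUP_SIZE = 8  # the article mentions at most eight objects per group


@dataclass
class Box:
    lo: tuple  # (x, y, z) minimum corner
    hi: tuple  # (x, y, z) maximum corner


def union(boxes):
    """Smallest axis-aligned box that encloses all the given boxes."""
    lo = tuple(min(b.lo[i] for b in boxes) for i in range(3))
    hi = tuple(max(b.hi[i] for b in boxes) for i in range(3))
    return Box(lo, hi)


def plan_queries(props, was_visible):
    """Split props into individual queries and grouped queries.

    props:       dict of prop name -> Box
    was_visible: dict of prop name -> bool from an earlier frame
    """
    individual = [n for n in props if was_visible.get(n, True)]
    hidden = [n for n in props if not was_visible.get(n, True)]
    groups = []
    for i in range(0, len(hidden), MAX_GROUP_SIZE):
        names = hidden[i:i + MAX_GROUP_SIZE]
        groups.append((names, union([props[n] for n in names])))
    return individual, groups
```

With ten previously hidden props and one visible prop, this yields one individual query and two grouped queries instead of eleven separate ones. If a group's combined box later passes its query, the props in it would go back to individual queries.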

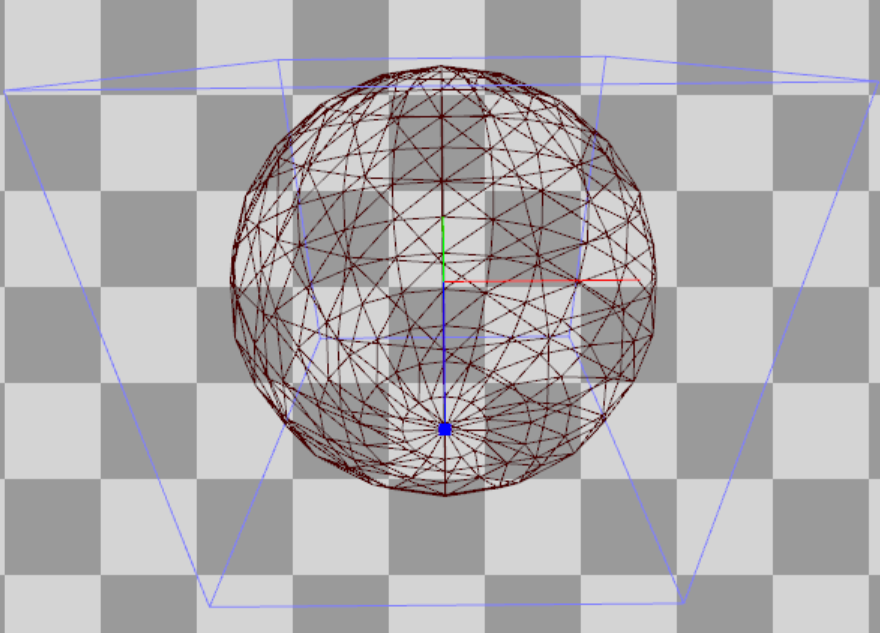

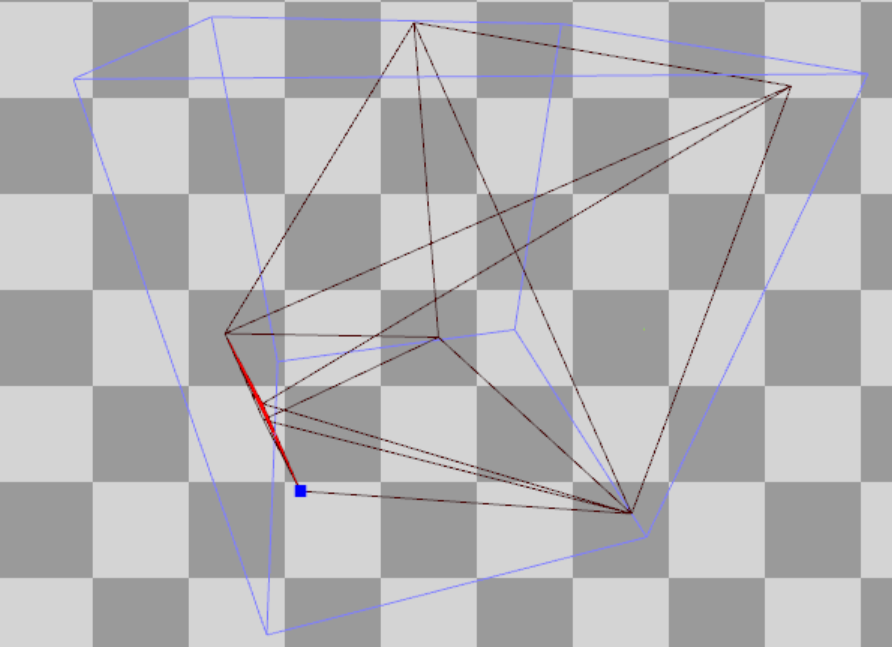

To wrap up the occlusion pass, ShadowFrustumQueries issues hardware occlusion queries for the bounding meshes of the local lights (point or spot), both shadow-casting and non-shadow-casting, contrary to what the pass name suggests. If they are occluded, there is no point in computing lighting or shadows for them. It is worth noting that although the scene contains four shadow-casting local lights (for which a shadow map must be computed every frame), the number of draw calls in ShadowFrustumQueries is three. I suspect this is because the bounding volume of one of the lights intersects the camera's near plane, so Unreal assumes it will be visible anyway. It is also worth mentioning that for dynamic lights, for which a cubemap shadow map is computed, a sphere is submitted for the occlusion test, and for stationary dynamic lights, for which Unreal computes per-object shadows (more on this below), a frustum is submitted:

Finally, I assume that PlanarReflectionQueries refers to occlusion tests performed when computing planar reflections (produced by moving the camera behind/in front of the reflection plane and redrawing the meshes).

Generating a Hi-Z Buffer

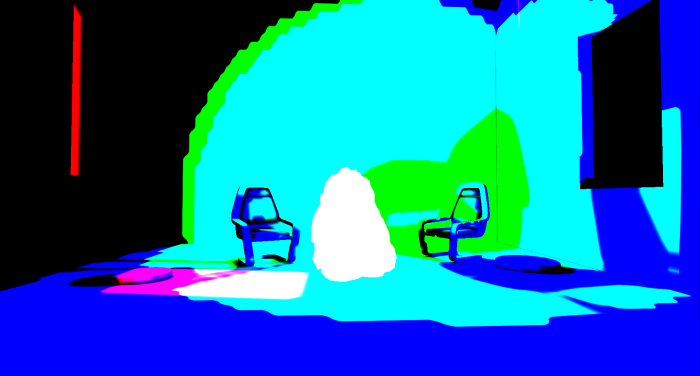

Unreal then creates a Hi-Z buffer (the HZB SetupMipXX passes), stored as 16-bit floating point (R16_Float texture format). It takes as input the depth buffer produced by the Z-buffer pre-pass and creates a mip chain of depths (i.e. progressively downsamples it). It also seems that, for convenience, it resamples the first mip to power-of-two dimensions:

Since, as mentioned above, Unreal uses reverse-Z, the pixel shader uses the min operator during downsampling.
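The min-based downsampling can be sketched as follows. This is a CPU illustration only, assuming a square, power-of-two depth buffer stored as nested lists; the function names are made up for the example. With reverse-Z, the minimum of a 2x2 block is its farthest depth, so each coarser mip stays conservative for occlusion tests.

```python
def downsample_min(depth):
    """Halve a 2D depth buffer, keeping the minimum of each 2x2 block."""
    size = len(depth)
    return [
        [min(depth[y][x], depth[y][x + 1],
             depth[y + 1][x], depth[y + 1][x + 1])
         for x in range(0, size, 2)]
        for y in range(0, size, 2)
    ]


def build_hzb(depth):
    """Return the mip chain, from the full-size input down to 1x1."""
    mips = [depth]
    while len(mips[-1]) > 1:
        mips.append(downsample_min(mips[-1]))
    return mips


# A 4x4 reverse-Z buffer: 1.0 is near, 0.0 is far.
depth = [
    [0.9, 0.8, 0.2, 0.1],
    [0.7, 0.6, 0.3, 0.4],
    [0.5, 0.5, 0.0, 0.9],
    [0.5, 0.5, 0.9, 0.9],
]
for level, mip in enumerate(build_hzb(depth)):
    print(f"mip {level}: {mip}")
```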

Rendering Shadow Maps

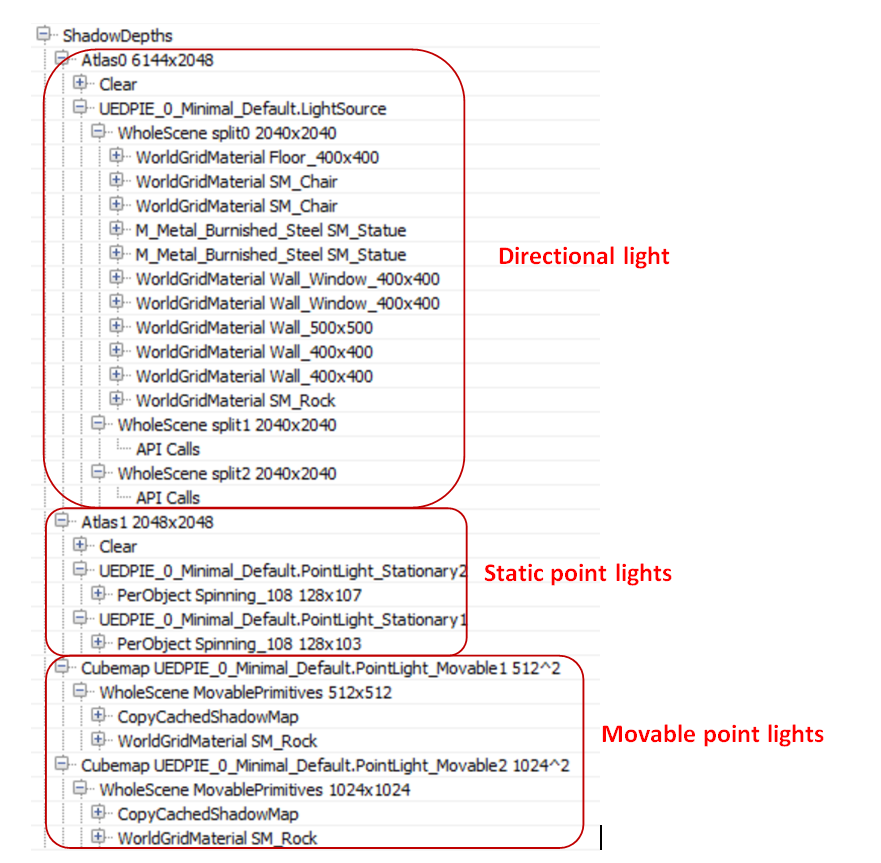

Next comes the shadow map rendering pass (ShadowDepths).

I added a "Stationary" directional light, two "Movable" point lights, two "Stationary" point lights and a "Static" point light to the scene. They all cast shadows:

For Stationary lights, the renderer bakes the shadows of static props and computes shadows only for dynamic (movable) props. For Movable lights, it computes shadows for everything, every frame (fully dynamic). Finally, for Static lights, it bakes lighting and shadows into the lightmap, so they never appear during rendering.

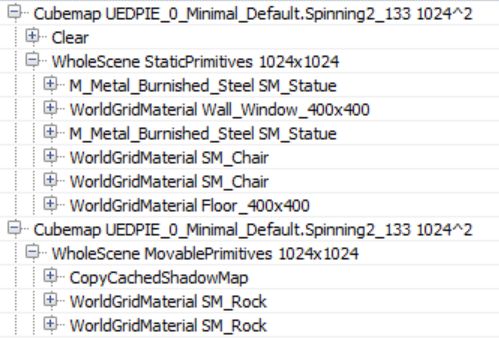

For the directional light I also added cascaded shadow maps with three splits, to see how Unreal handles them. Unreal creates an R16_TYPELESS shadow map texture (three tiles in a row, one per split), which is cleared every frame (so there are no staggered shadow map split updates based on distance). Then, in the Atlas0 pass, the engine renders all opaque props into the corresponding shadow map tile:

As the call list above confirms, only Split0 has geometry to render, so the other tiles are empty. The shadow map is rendered without a pixel shader, which allows double-speed shadow map generation. It is worth noting that the Stationary/Movable distinction does not seem to hold for the directional light: the renderer renders all props (including static ones) into the shadow map.

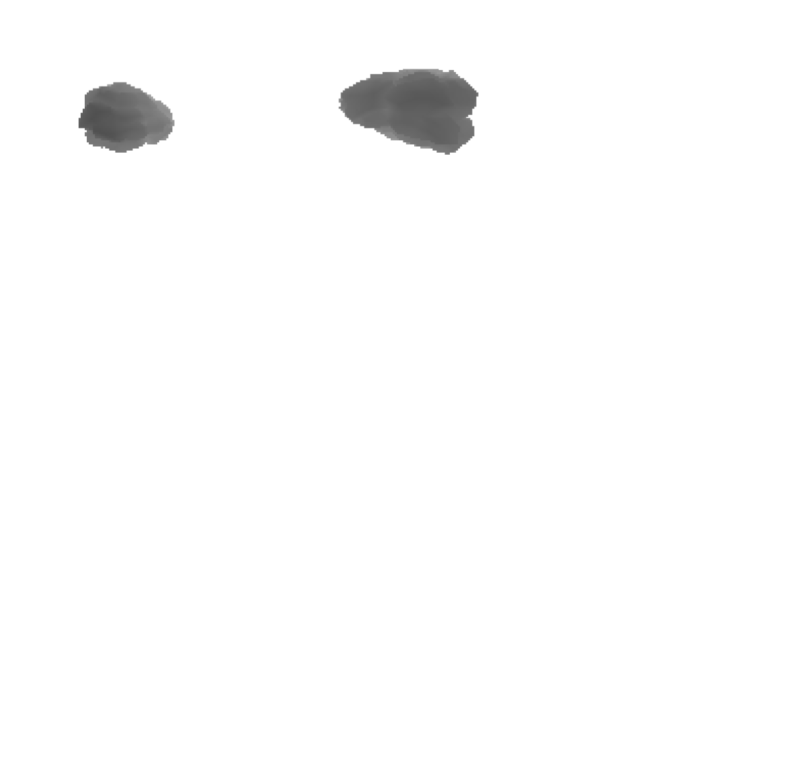

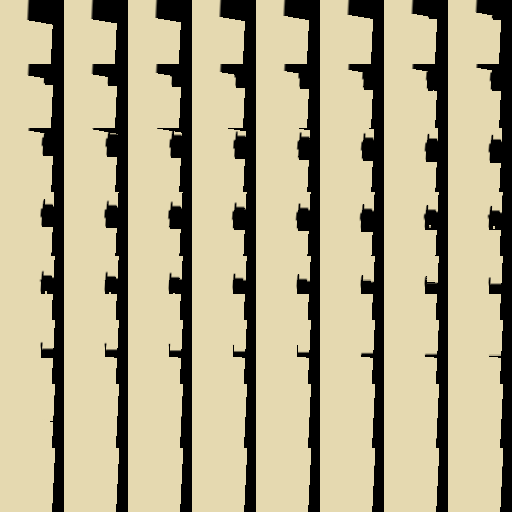

Next is the Atlas1 pass, which renders shadow maps for all Stationary lights. In my scene, only the "stone" prop is marked as movable (dynamic). For Stationary lights and dynamic props, Unreal uses per-object shadow maps stored in a texture atlas. This means that it renders one shadow map tile per light per dynamic prop:

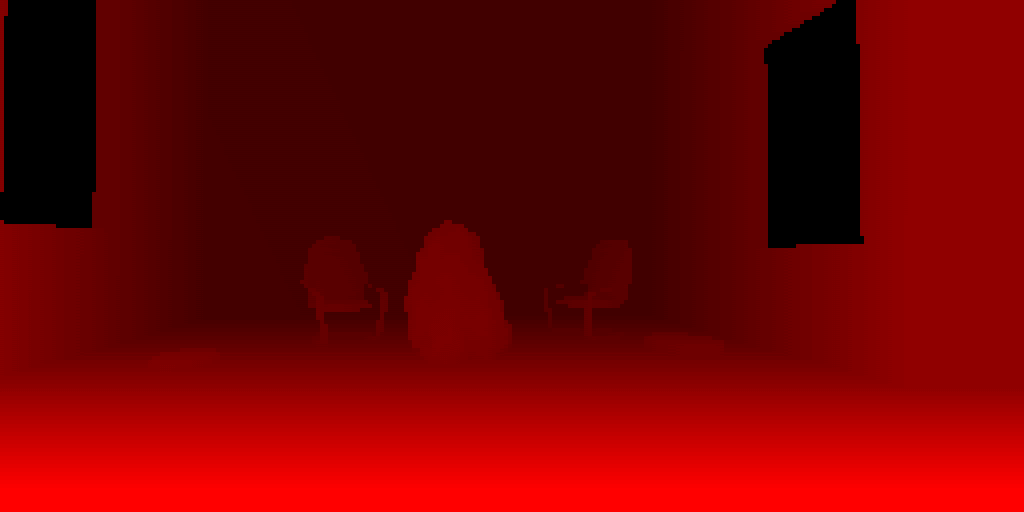

And finally, for each dynamic (Movable) light, Unreal creates a traditional cubemap shadow map (the Cubemap XX passes), using a geometry shader to select the cube face to render to (to reduce the number of draw calls). It renders only dynamic props into it, using shadow map caching for static/stationary props. The CopyCachedShadowMap pass copies the cached cubemap shadow map, and then the depths of the dynamic props are rendered on top of it. For example, here is a face of the cached cubemap shadow map for a dynamic light (the output of CopyCachedShadowMap):

And here it is with the dynamic "stone" prop rendered in:

The cubemap for static geometry is cached and not regenerated every frame, because the renderer knows that the light is not actually moving (even though it is marked as Movable). If the light is animated, the renderer re-renders the "cached" cubemap with all static/stationary geometry every frame and then adds the dynamic props to the shadow map (this image is from a separate test I ran specifically to verify this):

The single Static light does not appear in the draw call list at all. This confirms that it does not affect dynamic props and affects static props only through the baked lightmap.

A word of advice: if the scene contains Stationary lights, bake all lighting in the Editor before profiling (at least, I am not sure what running the game as "standalone" does). Otherwise, Unreal seems to treat them as dynamic lights, creating cubemaps instead of using per-object shadows.

We now continue exploring how Unreal Engine renders a frame by looking at light grid generation, the G-buffer pre-pass and lighting.

Light assignment

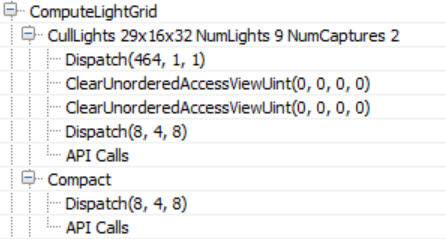

The renderer then switches to a compute shader to assign lights to a 3D grid (the ComputeLightGrid pass), in a manner similar to clustered shading. This light grid can be used to quickly find the lights that affect a surface based on its position.

As the pass name suggests, the view-space light grid has dimensions of 29x16x32. Unreal uses screen-space tiles of 64 × 64 pixels and 32 z-depth slices. This means that the XY dimensions of the light grid depend on the screen resolution. Also, judging by the name, we are assigning 9 lights and two reflection probes. A reflection probe is an "entity" with a position and a radius that captures the environment around it and is used to produce reflections on props.

According to the compute shader source (LightGridInjection.usf), the partitioning is exponential: the z-size of each grid cell in view space grows with distance from the camera. In addition, it uses the axis-aligned bounding box of each cell to perform intersection tests against the light bounding volumes. The light indices are stored in a linked list, which the Compact pass converts into a contiguous array.
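The grid dimensions can be checked with a little arithmetic. The sketch below uses the 64-pixel tiles and 32 slices quoted above and the 1815 × 976 resolution that appears in the ambient occlusion pass names later in the article. The exponential slice formula is an assumption chosen for illustration (slice boundaries at `near * (far / near) ** (i / N)`); the real shader may use a different mapping, and the function names are hypothetical.

```python
import math

TILE_SIZE = 64     # pixels per tile side, as quoted in the article
NUM_Z_SLICES = 32  # depth slices, as quoted in the article


def grid_dimensions(width, height):
    """Number of grid cells needed to cover a render target."""
    return (math.ceil(width / TILE_SIZE),
            math.ceil(height / TILE_SIZE),
            NUM_Z_SLICES)


def z_slice(view_z, near, far):
    """Exponential depth slicing (assumed formula, see the lead-in)."""
    view_z = min(max(view_z, near), far)
    t = math.log(view_z / near) / math.log(far / near)
    return min(int(t * NUM_Z_SLICES), NUM_Z_SLICES - 1)


def cell_index(px, py, view_z, near, far):
    """Grid cell that contains a pixel at a given view-space depth."""
    return (px // TILE_SIZE, py // TILE_SIZE, z_slice(view_z, near, far))


print(grid_dimensions(1815, 976))
```

For 1815 × 976 this gives 29 × 16 × 32, matching the dimensions in the pass name.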

This light grid will be used in the volumetric fog pass to add light scattering to the fog, in the environment reflections pass and in the translucency rendering pass.

I noticed another interesting fact: the CullLights pass begins by clearing the Unordered Access Views for the light data, but it uses ClearUnorderedAccessViewUint for only two of the three UAVs. For the remaining one it uses a compute shader that sets the value manually (the first Dispatch in the list above). Apparently, for buffers larger than 1024 bytes, the source code prefers a compute shader clear over the API clear call.

Volumetric fog

Next come the volumetric fog calculations, which again use compute shaders.

This pass computes and stores transmittance and light scattering in a volume texture, which allows fog to be computed easily using only the surface position. As in the light assignment pass earlier, the volume is "fitted" to the view frustum, using 8 × 8 tiles and 128 depth slices. The depth slices are distributed exponentially. They push the near plane out slightly to avoid having many small cells close to the camera (this is similar to the clustered shading system of Avalanche Studios).

Like the volumetric fog technology in Assassin's Creed IV and Frostbite, the fog is computed in three passes. The first (InitializeVolumeAttributes) computes and stores the fog parameters (scattering and absorption) in a volume texture, and also stores a global emissive value in a second volume texture. The second pass (LightScattering) computes light scattering and extinction for each cell, combining the shadowed directional light, sky lighting and the local lights assigned to the light volume texture in the ComputeLightGrid pass. It also applies temporal antialiasing (AA) to the compute shader outputs (Light Scattering, Extinction) using a history buffer, which is itself a 3D texture, improving the quality of the scattered lighting per grid cell. The last pass (FinalIntegration) simply raymarches the 3D textures along the Z axis and accumulates scattered light and transmittance, storing the result in the corresponding grid cell along the way.
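The final integration step can be pictured as a front-to-back march through one column of cells. The sketch below is a simplified illustration, not the engine's shader: it assumes uniform cell thickness (the article describes exponential slices), a single scalar light value per cell, and a plain Riemann sum with Beer-Lambert transmittance. The function name and inputs are hypothetical.

```python
import math


def integrate_fog(cells, cell_depth):
    """March one column of fog cells from the camera outwards.

    cells:      list of (scattered_light, extinction), nearest cell first.
    cell_depth: thickness of each cell (assumed uniform for simplicity).
    Returns a list of (accumulated_light, transmittance), one per cell.
    """
    accumulated = 0.0
    transmittance = 1.0
    result = []
    for light, extinction in cells:
        # Light scattered in this cell is dimmed by the fog in front of it.
        accumulated += transmittance * light * cell_depth
        # Beer-Lambert attenuation through this cell.
        transmittance *= math.exp(-extinction * cell_depth)
        result.append((accumulated, transmittance))
    return result


column = [(1.0, 0.1), (0.5, 0.1), (0.25, 0.1)]
for i, (light, trans) in enumerate(integrate_fog(column, 2.0)):
    print(f"cell {i}: light = {light:.3f}, transmittance = {trans:.3f}")
```

Because every cell stores the running totals, a later pass can fog a surface with a single lookup at the surface's position, which is what the article describes.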

The final volume buffer with light scattering looks as follows. In it you can see the light shafts from the directional light and the local lights scattering through the fog.

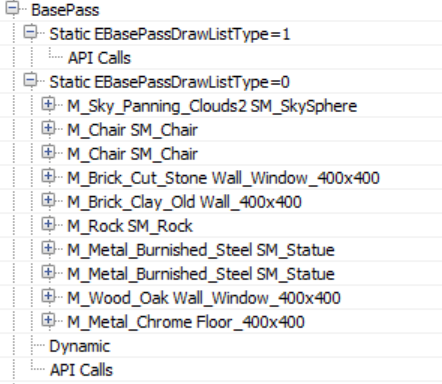

G-buffer pre-pass

Next comes Unreal's own version of the G-buffer pre-pass commonly used in deferred rendering architectures. This pass caches material properties in a set of render targets in order to reduce overdraw during the expensive lighting and shading calculations.

This pass typically renders all opaque props (static, movable, etc.). In Unreal's case, it also renders the sky first! In most cases this is a bad idea, because the sky is later overdrawn by other props closer to the camera, so the work is wasted. In this case, however, it is fine, because the Z-buffer pre-pass the renderer performed earlier eliminates overdraw of the sky (and most overdraw in general, at least for opaque props).

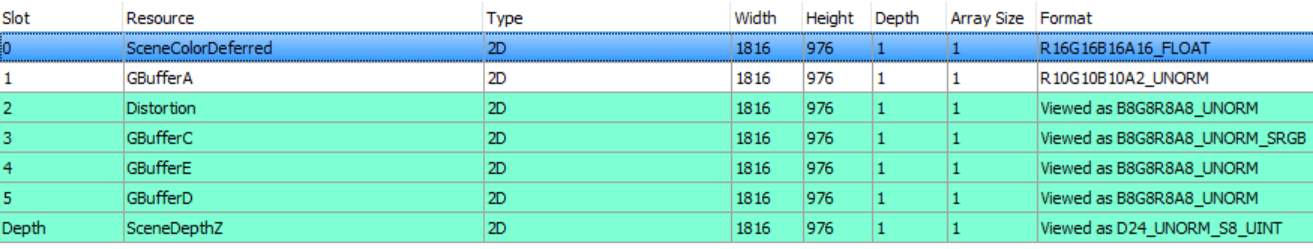

Here is the list of render targets that the G-buffer pre-pass writes to.

The depth buffer is used only for the z-test; it was already filled in the Z-buffer pre-pass, and the renderer does not write to it now. The renderer does, however, write to the stencil buffer to mark the pixels that belong to the rendered opaque geometry.

The contents of the G-buffer may depend on the render settings. For example, if the renderer is set to write velocity into the G-buffer, it will occupy GBufferD and the data will be moved around. For our scene and rendering path, the G-buffer has the following layout.

| Render target | Contents |
| --- | --- |
| SceneColorDeferred | Indirect lighting |
| GBufferA | World-space normals stored as RGB10A2_UNORM; no encoding appears to be used |
| Distortion | Various material properties (metalness, roughness, specular intensity and shading model) |
| GBufferC | Albedo in RGB, AO in alpha |
| GBufferE | Custom data that depends on the shading model (e.g. subsurface color or a tangent vector) |
| GBufferD | Baked shadowing factors |
| Stencil | Marks opaque props |

It is worth noting that all opaque props in the scene (except the movable stone and the sky) sample lighting information from three mipmapped atlases that cache irradiance, shadows and surface normals:

Particle simulation, again

Particle simulation was the first thing that happened in the frame: a pass that wrote out the world positions and velocities of the particle sprites. It happens so early in the frame that the renderer has no access to the depth and normal buffers needed to compute collisions on the GPU, so now it is time to go back and re-run the simulation for the particles that need it.

Velocity rendering

By default, Unreal writes the velocity of moving props to a separate buffer in R16G16 format. The velocity will later be used for motion blur and for any effect that requires reprojection (for example, temporal antialiasing). In our scene only the stone is marked as movable, so it is the only thing rendered into the velocity buffer.

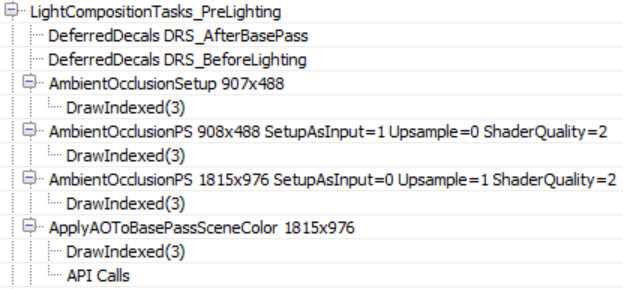

Ambient Occlusion

With all material information in place, the renderer is getting ready to move on to the lighting stage. But first it needs to compute screen-space ambient occlusion.

There are no deferred decals in our scene, but if there were, I assume that the empty DeferredDecals passes would modify some material properties in the G-buffer. Screen-space ambient occlusion is computed in two passes, at quarter resolution and at full resolution. The AmbientOcclusionPS 908 × 488 pass computes AO using a quarter-resolution normal buffer produced by the AmbientOcclusionSetup pass, the Hi-Z buffer the renderer created earlier, and a texture of random vectors used to sample the depth/normal buffers. In addition, when sampling the random vector texture, the shader adds a small jitter every frame to emulate "supersampling" and gradually improve AO quality.

The AmbientOcclusionPS 1815 × 976 pass then computes full-screen, higher-resolution AO and combines it with the quarter-resolution buffer. The results are quite good even without a blur pass.

Finally, the full-resolution AO buffer is applied to the SceneColorDeferred buffer (part of the G-buffer described above), which so far contains the indirect (ambient) lighting for the scene.

Lighting

Before discussing lighting, it is worth stepping aside briefly to talk about how Unreal lights translucent objects, because we will be seeing this system a lot shortly. Unreal's approach to lighting translucent surfaces is to inject light into two 64x64x64 volume textures in RGBA16_FLOAT format. The two textures contain the lighting (shadowed + attenuated), in spherical harmonics form, that reaches each volume cell (the TranslucentVolumeX texture) and an approximate light direction from each light (the TranslucentVolumeDirX texture). The renderer keeps 2 sets of these textures, one for props close to the camera that need higher-resolution lighting and one for more distant objects for which high-resolution lighting is less important. It uses a similar approach,

Here is an example of the volume textures for the translucency lighting close to the camera, with only the (shadowed) directional light.

These translucency lighting volumes do not affect opaque props; they will be used later to light translucent props and effects (particles, etc.). They will, however, be filled in during the lighting pass.

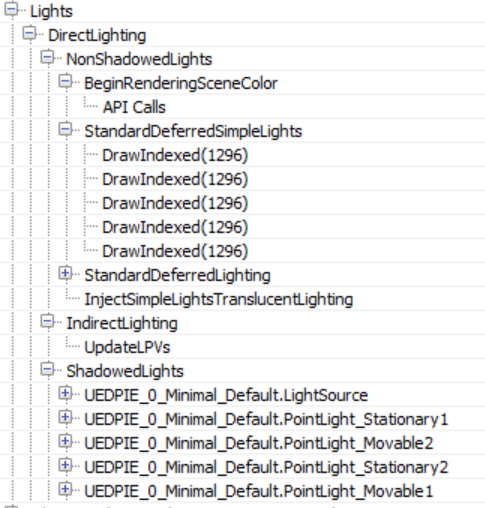

Returning to the direct lighting of opaque props, the renderer can now compute and apply lighting to the scene. With many lights, this draw call list can get quite long. I have expanded only the most relevant parts.

Lights are processed in two groups, NonShadowedLights and ShadowedLights. The NonShadowedLights group includes simple lights, such as those used for particle effects, and regular non-shadow-casting scene lights. The difference between them is that regular scene lights use a depth bounds test when rendering, to avoid lighting pixels outside an approximate light volume. This is implemented using driver-specific extensions. Lighting is accumulated into the SceneColorDeferred buffer mentioned above. Another difference is that simple lights do not write to the translucency lighting volumes at all (although the renderer code seems to allow for it, so there may be a setting that enables this somewhere).

Interestingly, when the number of non-shadow-casting (and non-static) visible lights in the scene exceeds 80, the renderer switches from classic deferred shading to tiled deferred lighting.

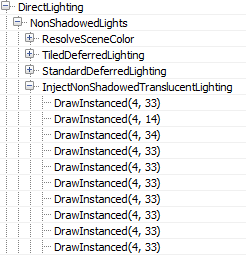

In this case, the renderer uses a compute shader to compute the lighting (for those lights only), passing the light data down to the shader through constant buffers (thanks to wand de for pointing this out). Also, it seems that switching to tiled deferred and using a compute shader to apply all lights in a single pass affects direct lighting only. The InjectNonShadowedTranscluscentLighting pass still injects all lights into the translucency lighting volumes individually (with a separate draw call for each):

The ShadowedLights pass processes all shadow-casting lights, both stationary and movable. By default, Unreal processes each shadow-casting light in three stages:

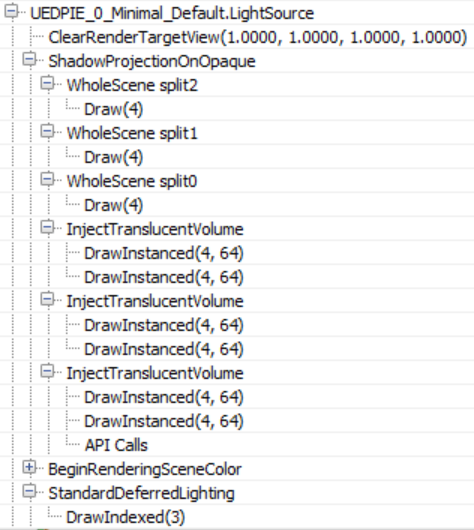

First, it computes screen-space shadows (ShadowProjectionOnOpaque), then it injects the light's contribution into the translucency lighting volume (InjectTranslucentVolume), and finally it computes the scene lighting (StandardDeferredLighting).

As mentioned above, for this scene only Split0 contains shadow information in the case of the directional light. The result of the shadow calculation is written to a screen-sized RGBA8 buffer.

The next stage (InjectTranslucentVolume) writes the directional light's contribution into both cascades of the translucency lighting volume described above (two calls per InjectTranslucentVolume pass). Finally, the StandardDeferredLighting pass computes the lighting, masked by the screen-space shadow buffer, and writes it to the SceneColorDeferred buffer.

Local lights seem to follow the same sequence: projecting shadows into the screen-space buffer, injecting light into the translucency lighting volume and computing the lighting written to the SceneColorDeferred buffer.

Both types are handled in roughly the same way. The difference between movable and stationary local lights is that the movable ones inject shadowed lighting into the translucency lighting volume and, of course, that movable shadowed lights use a cubemap rather than the per-object atlas for shadowing.

All lights share a single screen-space shadow buffer render target, clearing the relevant parts for each light's shadows (I assume this is done to save memory).

Once the lighting pass is complete, SceneColorDeferred contains all the accumulated direct lighting for the scene.

It is worth noting that although the renderer built a binned/clustered data structure earlier (the light assignment pass), it is not used at all during the lighting of opaque geometry, which relies instead on traditional deferred shading with each light rendered separately.

As a final step, the translucency lighting volumes are filtered (for both cascades) to suppress aliasing when lighting translucent props and effects.

Image-space lighting

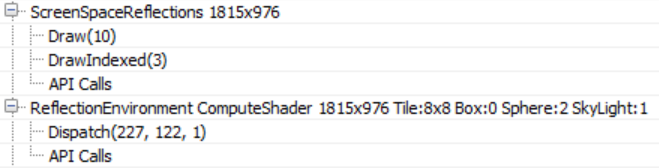

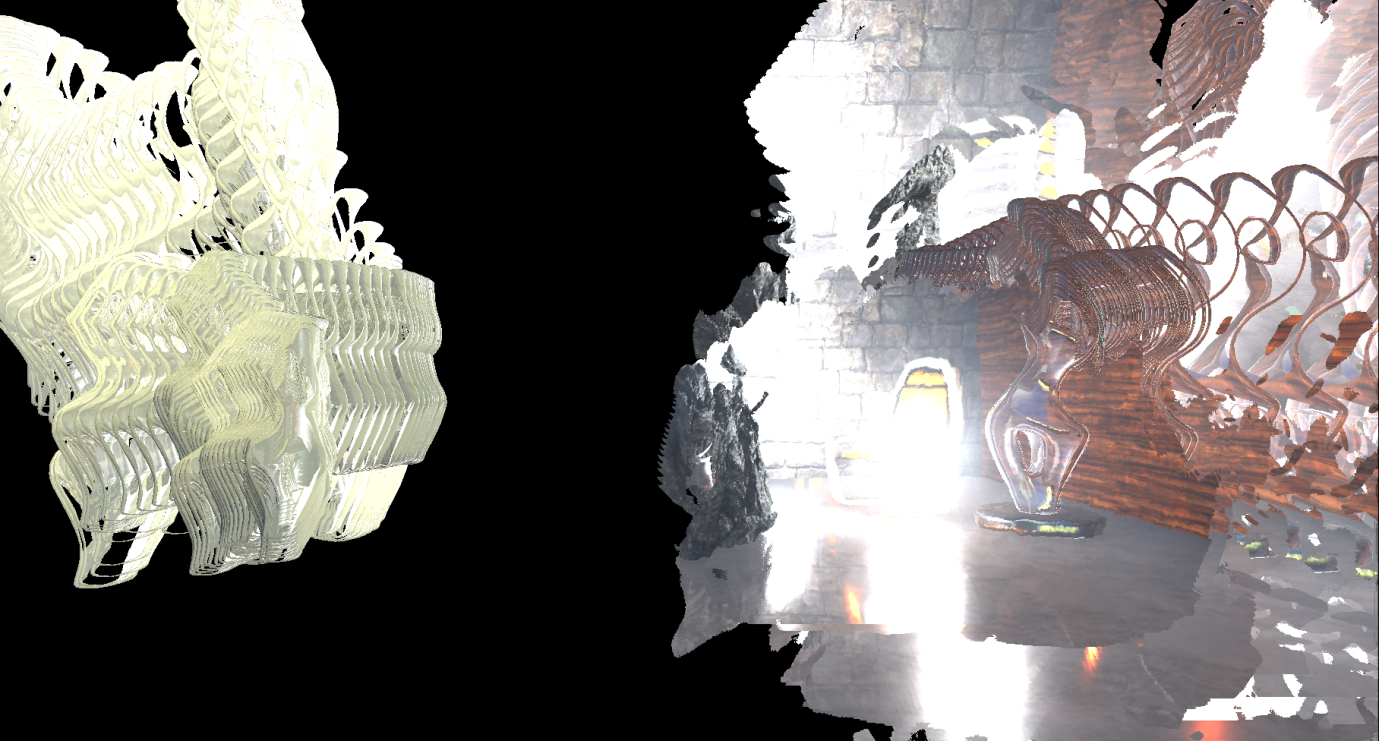

Next, full-screen screen-space reflections are computed (the render target format is RGBA16_FLOAT).

The shader also uses the Hi-Z buffer computed at the beginning of the frame to speed up intersection calculations, selecting the Hi-Z mip level during raymarching based on surface roughness (i.e. making ray tracing coarser for rough surfaces, where detail in the reflection is not visible). Finally, the ray start position is jittered every frame, which, combined with temporal antialiasing, increases the quality of the reflections.

The shader uses the previous frame's render target to sample color when a hit is detected during raymarching. You can see this from the volumetric fog in the reflections, as well as from the reflected transparent props (the statues). You can also see hints of the particle effect under the chair on the right. Since we lack proper depth for transparent surfaces (to calculate correct hits), the reflections are usually stretched, but in many cases the effect looks quite convincing.

The screen-space reflections are applied to the main render target using a compute shader (the ReflectionEnvironment pass). This shader also applies the environment reflections captured by the two reflection probes in the scene. The reflections for each probe are stored in mipmapped cubemaps:

The environment reflection probes are generated when the game starts and capture only static/stationary geometry (note that the animated "stone" prop is missing from the cubemaps above).

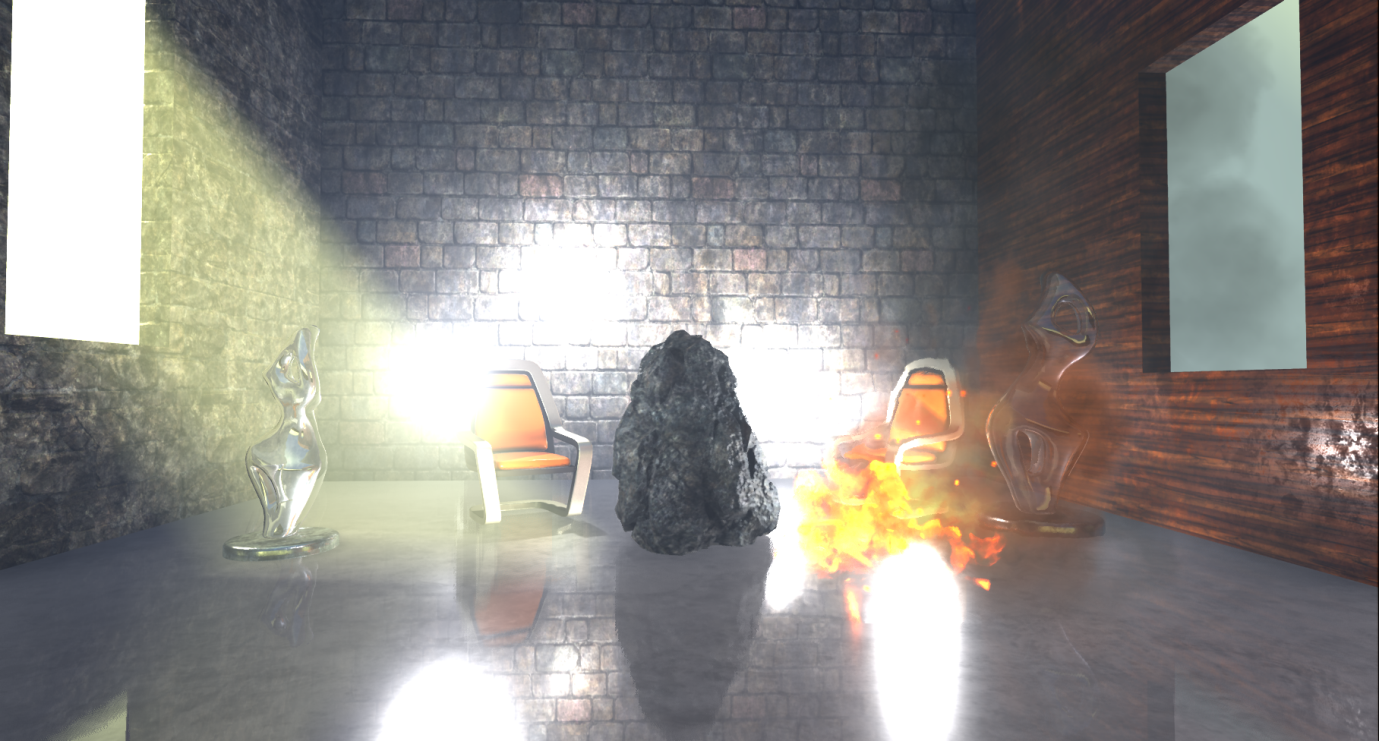

Our scene, with screen-space reflections and environment reflections applied, now looks like this.

Fog and atmospheric effects

Next come fog and atmospheric effects, if they are enabled in the scene.

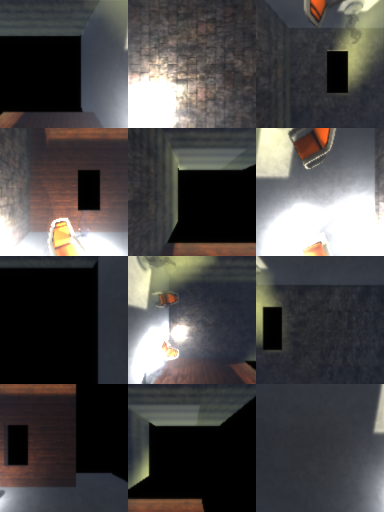

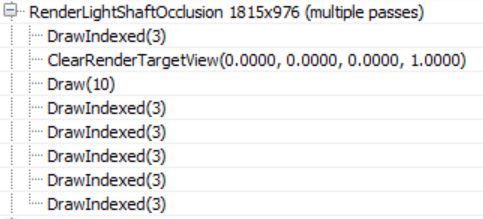

First, a light shaft occlusion mask is created at quarter resolution, which determines which pixels will receive light shafts (these apply only to the directional light in the scene).

The renderer then improves the quality of the mask using temporal antialiasing and applies three blur passes to produce this mask (I had to process the mask because it was almost completely white):

From this GPU capture it is not entirely clear to me why temporal AA is applied to the mask before blurring it, because the final result is very low resolution. More use cases in different environments may be needed to clarify this.

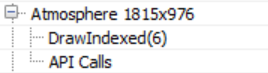

Before adding the fog and light shafts to the scene, the renderer takes a break to apply atmospheric effects (at full resolution) to the main render target.

This looks like a full scattering calculation using precomputed transmittance, irradiance and inscattering, similar to Bruneton's work.

Our scene is indoors, so unfortunately the effects of the simulation are not very noticeable.

Finally, the renderer applies exponential fog and the light shafts to the scene.

The shader uses the volumetric fog volume texture produced a few passes earlier, sampling it based on the position of the opaque geometry. It also applies the light shaft mask computed above.

Rendering transparency

After applying fog to the opaque props, the renderer moves on to translucent geometry and effects.

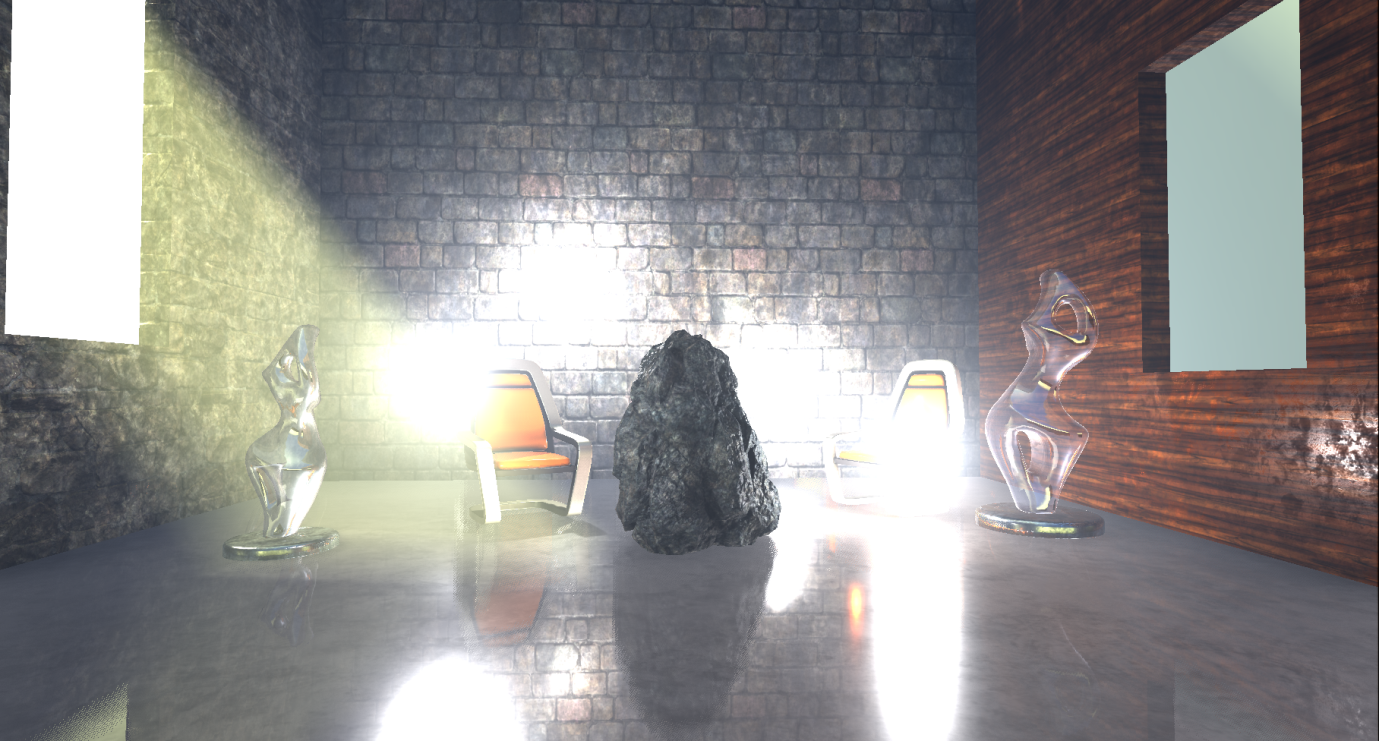

I added two glass statues to the scene, which are rendered first, using regular alpha blending over the main render target.

These two transparent props sit well in the scene: they are affected by the local and directional lights, environment reflections, fog, etc. By default, the renderer uses a high-quality shader to render transparent props which, among other things, samples the precomputed atmospheric simulation textures, baked lightmap data, the translucency lighting volumes containing lighting from the directional and local lights, and the reflection probe cubemaps. All of this is used to compute lighting. I did not see the shader read the volumetric fog volume texture, though; it seems to compute only height/distance-based fog, but maybe I missed a setting somewhere. The distance-based fog, like the atmospheric scattering, is computed in the vertex shader.

The renderer writes particle effects to a separate render target (full resolution).

As with transparent props, atmospheric scattering and fog for them are computed in the vertex shader. In addition, with certain particle system settings, the renderer can use the translucency lighting volumes to light the particles (in the one case where I saw it do this, it happened in the pixel shader).

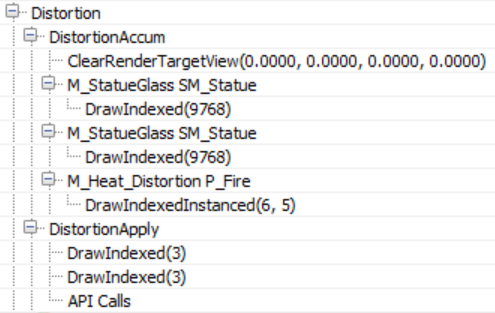

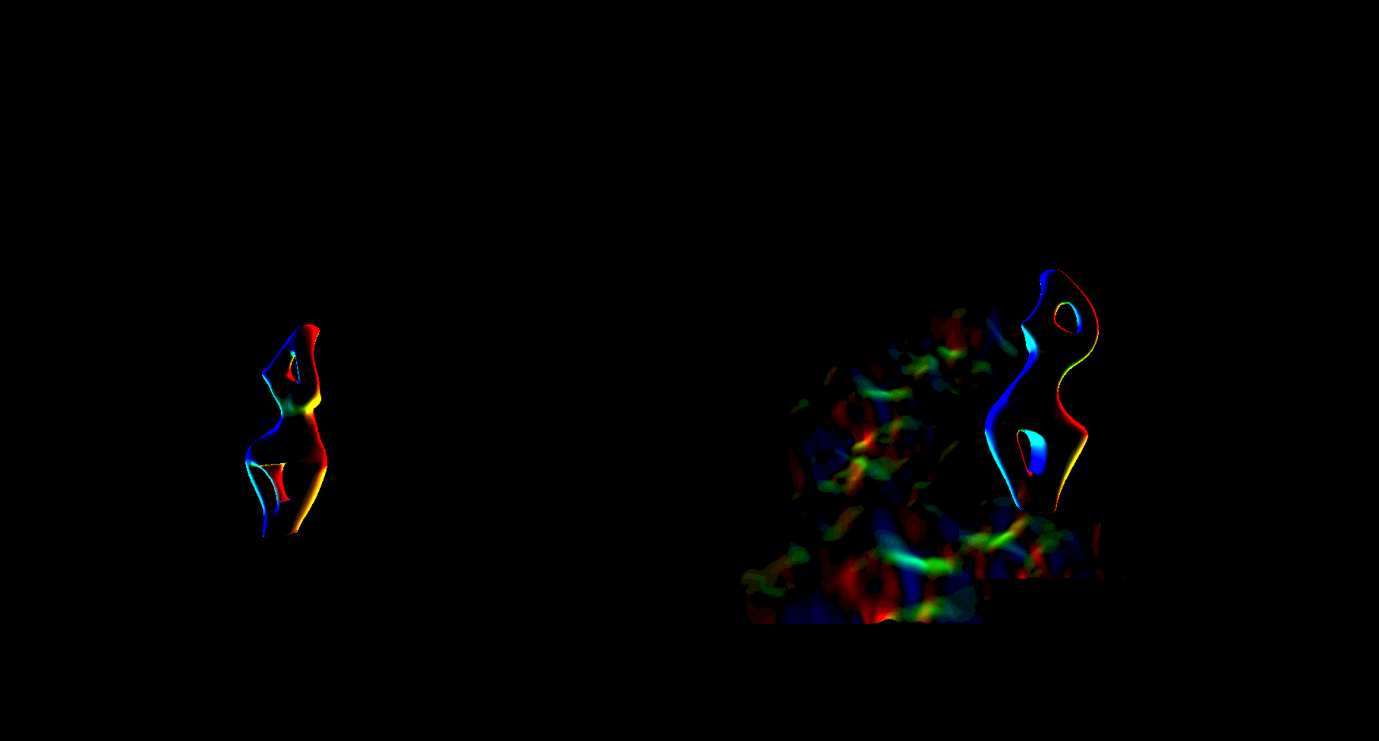

Before finishing with transparency, the renderer performs one more pass to calculate refraction.

Both the transparent props and the particles (those that should produce refraction) are rendered again to write distortion vectors into a full-resolution buffer, which will later be used to calculate refraction (I processed the image to make the vectors more visible). The stencil buffer is also active during this pass and marks the pixels that need refraction.

During the DistortionApply pass, the renderer reads the main render target (as it stands so far) and the distortion vectors, and writes out an odd-looking refraction texture.

Since the stencil buffer that marks the refracted pixels is active, the renderer does not need to clear the texture.

The last refraction pass simply copies the refraction texture into the main render target, using the stencil buffer as already mentioned.
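The refraction steps described above can be sketched on the CPU like this. It is a simplified illustration, not the engine's shader: the image is a small 2D list, the distortion vectors are assumed to be whole-pixel offsets, and the apply and copy passes are folded into one hypothetical function, `apply_distortion`.

```python
def apply_distortion(scene, distortion, stencil):
    """Refract only stencil-marked pixels by sampling the scene at an offset.

    scene:      2D list of pixel values (the main render target so far)
    distortion: 2D list of (dx, dy) integer offsets (assumed whole pixels)
    stencil:    2D list of booleans, True where refraction is required
    """
    height, width = len(scene), len(scene[0])
    # Start from a copy: pixels outside the stencil keep their colour.
    result = [row[:] for row in scene]
    for y in range(height):
        for x in range(width):
            if not stencil[y][x]:
                continue
            dx, dy = distortion[y][x]
            # Clamp the offset sample position to the image bounds.
            sx = min(max(x + dx, 0), width - 1)
            sy = min(max(y + dy, 0), height - 1)
            result[y][x] = scene[sy][sx]
    return result


scene = [[1, 2, 3],
         [4, 5, 6]]
distortion = [[(1, 0)] * 3 for _ in range(2)]
stencil = [[True, False, False],
           [False, False, True]]
refracted = apply_distortion(scene, distortion, stencil)
```

Only the two marked pixels change, which mirrors why the refraction texture never needs to be cleared: everything outside the stencil is simply ignored.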

You may have already noticed the refraction on the right armchair, caused by particles that we have not applied yet. For the transparent props, refraction is rendered after the props themselves.

The next pass (BokehDOFRecombine) finally applies the particles to the scene. It is a simple shader that does less than the name of the pass suggests (perhaps this depends on the rendering settings).

Postprocessing

The last part of the frame consists of several postprocessing passes, which I will discuss briefly.

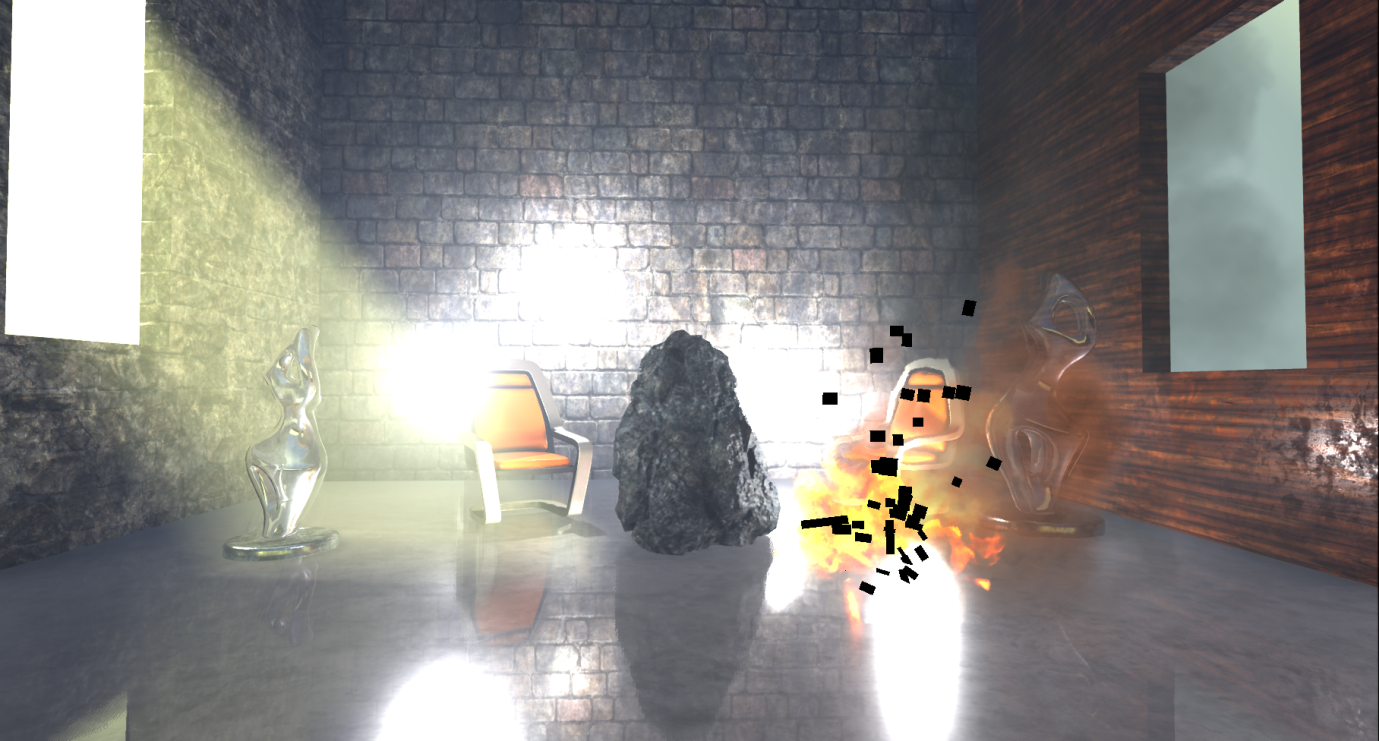

With our scene configuration, the renderer applies temporal antialiasing, motion blur, auto exposure, bloom and tonemapping to the main render target.

Unreal's temporal antialiasing uses a history buffer to gradually accumulate samples, and it is rendered in two passes. The first pass applies temporal AA to the pixels that are not in the stencil buffer (in our case, some of the particles), using the main render target, the history buffer and the velocity buffer for reprojection.

Then a similar temporal AA pass is performed for the stencilled parts, producing the final antialiased image.

The difference between these two temporal AA passes is that the first uses a blend factor (feedback) between the history buffer and the current render target that is variable and can depend on pixel luminance, distance, weights supplied by the renderer and so on (based on the settings), while the second pass uses a fixed blend factor of 0.25, which means that the final antialiased pixel will mostly consist of the current sample. I think this is done to reduce ghosting on fast-moving particles, for which we have no velocity information.
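The blend between the history buffer and the current frame can be sketched as a simple linear interpolation. This is an illustration only: `temporal_blend` is a hypothetical name, and the sketch assumes, following the reading above, that the factor is the weight given to the history sample.

```python
def temporal_blend(history_rgb, current_rgb, history_weight):
    """Mix the reprojected history colour with the current frame's colour.

    history_weight is assumed to be the share taken from the history buffer;
    the remainder comes from the current sample.
    """
    return tuple(h * history_weight + c * (1.0 - history_weight)
                 for h, c in zip(history_rgb, current_rgb))


# With a fixed factor of 0.25 the result is dominated by the current sample.
history = (1.0, 1.0, 1.0)
current = (0.0, 0.0, 0.0)
fixed = temporal_blend(history, current, 0.25)
```

Under that assumption, a low fixed factor means stale history fades out within a few frames, which is consistent with the goal of limiting ghosting where no velocity is available for reprojection.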

Next comes motion blur, preceded by a velocity flattening and dilation pass.

The motion blur effect is not very noticeable in our case, because the camera is static and the only moving prop that has velocity is the rock (which is already slightly blurred by its movement and by temporal antialiasing).

To implement auto exposure (eye adaptation), the renderer uses a compute shader to build a luminance histogram of the current scene. The histogram groups pixels by brightness and counts the number of pixels that fall into each brightness bucket.

The advantage of this approach is that we can easily skip areas of the image with very dark or very bright values and obtain a more reasonable approximation of the average scene luminance. Using this average luminance, the renderer can calculate eye adaptation by adjusting the exposure accordingly (bright images lead to low exposure, darker ones to higher exposure).
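A histogram-based average of this kind can be sketched as follows. This is not the engine's compute shader: the bucket count, the log2 luminance range, the 10%/90% cut-offs and the `target` value are all assumptions chosen for illustration, and the average is taken in log space.

```python
import math


def average_luminance(luminances, num_buckets=64, min_log=-8.0, max_log=4.0,
                      low_fraction=0.1, high_fraction=0.9):
    """Average scene luminance that ignores the darkest and brightest pixels."""
    # Build a histogram over log2 luminance.
    histogram = [0] * num_buckets
    for lum in luminances:
        log_lum = math.log2(max(lum, 2.0 ** min_log))
        t = (log_lum - min_log) / (max_log - min_log)
        bucket = min(max(int(t * num_buckets), 0), num_buckets - 1)
        histogram[bucket] += 1

    # Keep only the samples between the low and high cut-offs.
    low_count = len(luminances) * low_fraction
    high_count = len(luminances) * high_fraction
    seen = 0.0
    kept = 0.0
    weighted_log_sum = 0.0
    for bucket, count in enumerate(histogram):
        overlap = max(0.0, min(seen + count, high_count) - max(seen, low_count))
        centre_log = min_log + (bucket + 0.5) / num_buckets * (max_log - min_log)
        weighted_log_sum += overlap * centre_log
        kept += overlap
        seen += count
    if kept == 0.0:
        raise ValueError("no samples left after trimming")
    return 2.0 ** (weighted_log_sum / kept)


def exposure_for(average, target=0.18):
    """Brighter scenes get a lower exposure, darker scenes a higher one."""
    return target / average


clean = average_luminance([1.0] * 100)
with_outliers = average_luminance([0.001] * 10 + [1.0] * 80 + [1000.0] * 10)
```

In this example the ten very dark and ten very bright pixels fall outside the kept range, so both scenes produce the same average.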

To implement the bloom effect, the renderer performs a series of downscaling passes with Gaussian filtering, followed by a series of upscaling and combining passes (the image has been adjusted to make it more visible, without exposure control).
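The downscale, filter, upscale and combine structure can be sketched in one dimension. This is a simplified illustration rather than the engine's implementation: it uses a small 1-2-1 kernel as a stand-in for the Gaussian filter, nearest-neighbour upscaling, and assumes the input length is a power of two.

```python
def blur(signal):
    """Small 1-2-1 blur, a stand-in for a Gaussian filter."""
    n = len(signal)
    return [(signal[max(i - 1, 0)] + 2.0 * signal[i] + signal[min(i + 1, n - 1)]) / 4.0
            for i in range(n)]


def downsample(signal):
    """Blur, then halve the resolution by averaging neighbouring pairs."""
    blurred = blur(signal)
    return [(blurred[i] + blurred[i + 1]) / 2.0
            for i in range(0, len(blurred) - 1, 2)]


def upsample(signal, size):
    """Nearest-neighbour upscale to the requested size."""
    return [signal[min(i * len(signal) // size, len(signal) - 1)]
            for i in range(size)]


def bloom(signal, levels=3):
    """Build a chain of smaller images, then upscale and add them back together."""
    chain = []
    current = signal
    for _ in range(levels):
        current = downsample(current)
        chain.append(current)
    result = chain[-1]
    for level in reversed(chain[:-1]):
        upsampled = blur(upsample(result, len(level)))
        result = [a + b for a, b in zip(level, upsampled)]
    return blur(upsample(result, len(signal)))


# A single bright pixel spreads into its neighbourhood.
signal = [0.0] * 16
signal[8] = 16.0
glow = bloom(signal)
```

The lowest-resolution levels contribute the widest glow, which is why a chain of cheap small passes can stand in for one very large blur.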

The PostProcessCombineLUTs pass uses a geometry shader and a rather long pixel shader to create a colour grading lookup table (a 32x32x32 RGB10A2 volume texture). The lookup table will be used during tonemapping.

The last pass of the frame (Tonemapper) combines the previously computed bloom with the main render target, adjusts the exposure of the image using the previously calculated eye adaptation, and then passes the colour through the colour grading lookup table to produce the final pixel colour.
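The order of operations in this last pass can be sketched as follows. It is only an illustration: the lookup table is an identity table with nearest-entry sampling, and the simple `c / (1 + c)` curve is a stand-in that compresses HDR values into the table's 0..1 range, not the curve Unreal uses.

```python
def build_identity_lut(size=32):
    """size x size x size table mapping each colour to itself (no grading)."""
    scale = 1.0 / (size - 1)
    return [[[(r * scale, g * scale, b * scale) for r in range(size)]
             for g in range(size)]
            for b in range(size)]


def sample_lut(lut, colour):
    """Nearest-entry lookup; colour components are expected in 0..1."""
    size = len(lut)
    r, g, b = (min(max(int(c * (size - 1) + 0.5), 0), size - 1) for c in colour)
    return lut[b][g][r]


def tonemap(scene_rgb, bloom_rgb, exposure, lut):
    """Add bloom, apply exposure, compress to 0..1, then apply the lookup table."""
    hdr = [(s + b) * exposure for s, b in zip(scene_rgb, bloom_rgb)]
    ldr = [c / (1.0 + c) for c in hdr]  # stand-in curve, not Unreal's
    return sample_lut(lut, ldr)


lut = build_identity_lut()
pixel = tonemap((1.0, 1.0, 1.0), (0.0, 0.0, 0.0), 1.0, lut)
```

Keeping the grading in a small volume texture means the per-pixel cost of the final pass stays at one lookup, however complex the grading that produced the table.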

To summarize

It must be emphasized that this is just one path through the renderer; it can be influenced by many parameters and settings, and in fact we have examined only the very basics.

In general, this turned out to be an interesting exercise, although I mostly found out what the renderer does rather than how it does it. A lot remains unexplored, and I would like to return to this topic.

The source code for Unreal is not well documented, but it is pretty clear and straightforward. Following the list of draw calls, it’s easy to find the code that matches it. However, in many cases it was quite difficult to understand from the source code what shaders do, because conditional compilation is actively used in them. For the convenience of studying and profiling performance, it would be nice to have some kind of intermediate cache of specialized shaders processed and ready for compilation (whose names are added to the list of draw calls).

It seems that by default, the Unreal renderer focuses on producing high-quality images. It relies heavily on baked data (environment, lighting, volumes, etc.) and uses temporal antialiasing to significantly improve image quality.

If your scene has many props and few opportunities for occlusion (large occluders, for example), you should pay close attention to the cost of the occlusion pass. In addition, refraction on transparent props and particles causes them to be rendered twice. Finally, many stationary or movable local light sources affect the lighting stage because they are rendered individually (and add to the cost of the light injection passes for translucency and volumetric effects).

In conclusion, I want to thank Baldurk for the excellent RenderDoc tool and Epic for making the Unreal source code available for use, study and learning.