MIT course "Computer Systems Security". Lecture 15: "Medical software", part 3

Massachusetts Institute of Technology. Course 6.858, "Computer Systems Security." Nickolai Zeldovich, James Mickens. 2014.

Computer Systems Security is a course on the design and implementation of secure computer systems. Lectures cover threat models, attacks that compromise security, and techniques for achieving security based on recent research. Topics include operating system (OS) security, capabilities, information flow control, language-based security, network protocols, hardware protection, and security in web applications.

Lecture 1: “Introduction: threat models” Part 1 / Part 2 / Part 3

Lecture 2: “Control hijacking attacks” Part 1 / Part 2 / Part 3

Lecture 3: “Buffer overflow: exploits and protection” Part 1 / Part 2 / Part 3

Lecture 4: “Privilege Separation” Part 1 / Part 2 / Part 3

Lecture 5: “Where Security System Errors Come From” Part 1 / Part 2

Lecture 6: “Capabilities” Part 1 / Part 2 / Part 3

Lecture 7: “Native Client Sandbox” Part 1 / Part 2 / Part 3

Lecture 8: “Network Security Model” Part 1 / Part 2 / Part 3

Lecture 9: “Web Application Security” Part 1 / Part 2 / Part 3

Lecture 10: “Symbolic execution” Part 1 / Part 2 / Part 3

Lecture 11: “Ur / Web programming language” Part 1 / Part 2 / Part 3

Lecture 12: “Network security” Part 1 / Part 2 / Part 3

Lecture 13: “Network Protocols” Part 1 / Part 2 / Part 3

Lecture 14: “SSL and HTTPS” Part 1 / Part 2 / Part 3

Lecture 15: “Medical Software” Part 1 / Part 2 / Part 3

The next slide shows what the device believed it saw, even though nothing of the kind actually happened. Keep in mind that it should have shown a flat line, because there is no patient and no heartbeat.

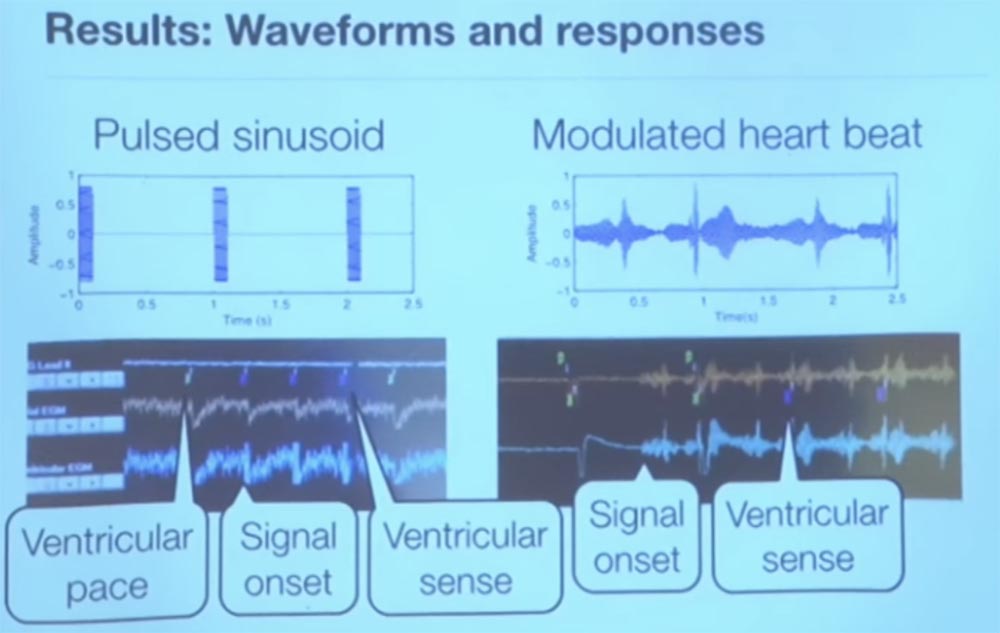

Out of curiosity, we tried a couple of different signals. One was a pulsed sinusoid: really just a sine wave at a very high frequency, but pulsed at the rhythm of a heartbeat. We sent one pulse every second; you can see it on the left. On the right is a modulated signal, which is a bit noisy.

This is a screenshot of a pacemaker programmer showing telemetry output in real time. The small green marks at the top, VP, mean that the programmer is reporting a ventricular pace, that is, the pacemaker is delivering a pacing pulse. The purpose of a pacemaker is to create an artificial heartbeat, that is, to make the cardiac tissue contract.

The interesting thing is that when we started sending our interference, the programmer reported VS, a ventricular sense. These are the three small purple marks in the top row. The pacemaker thought that the heart was beating on its own, so it decided to switch off pacing to save energy. When we stopped transmitting interference, it began pacing the heart again.

On the right, you can see where the interference begins and how the pacemaker registers the ventricular activity fed to it. It starts to think that the heart is working on its own and that it does not need to spend energy on pacing. So by injecting interference we can trick the microprocessor into believing that the patient's heart is in excellent condition.

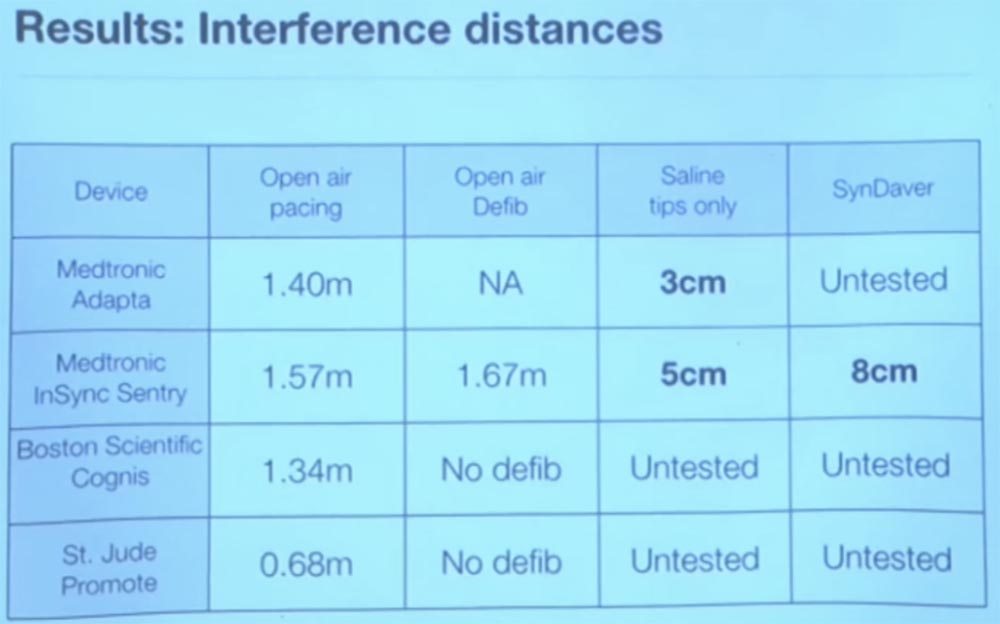

The good news is that this works only in vitro, outside a living human body. When we tried it in saline solution, or in anything that approximated a real human body, it basically did not work. This is because the human body absorbs radio-frequency energy, so the sensor does not actually pick it up. The best result we obtained in saline was at a distance of about 3 cm. The slide shows the interference range in various media, as measured in experiments by different research groups.

In general, this means there is no particular reason to worry about external interference changing how an implant operates. But we do not know how other life-critical medical devices react to interference. We have not tested insulin pumps, and there are many different types of such devices. There are also percutaneous glucose sensors; they are quite common, and I would not be surprised if someone here uses one, but we simply do not know how they react to external interference.

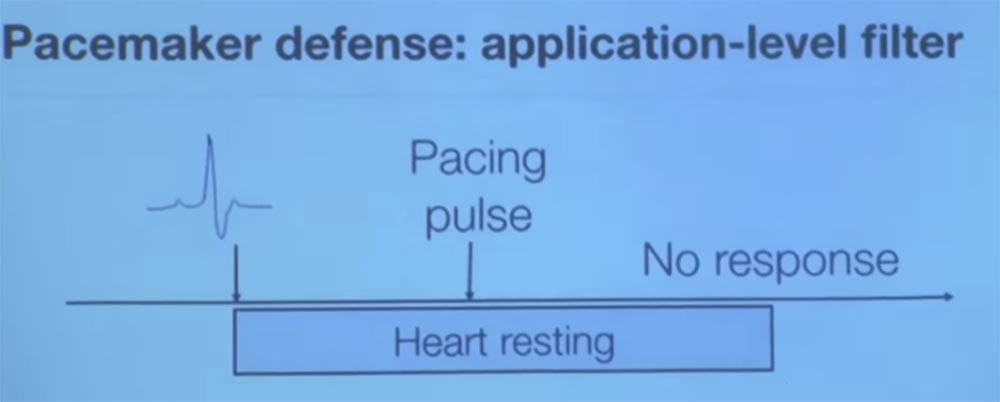

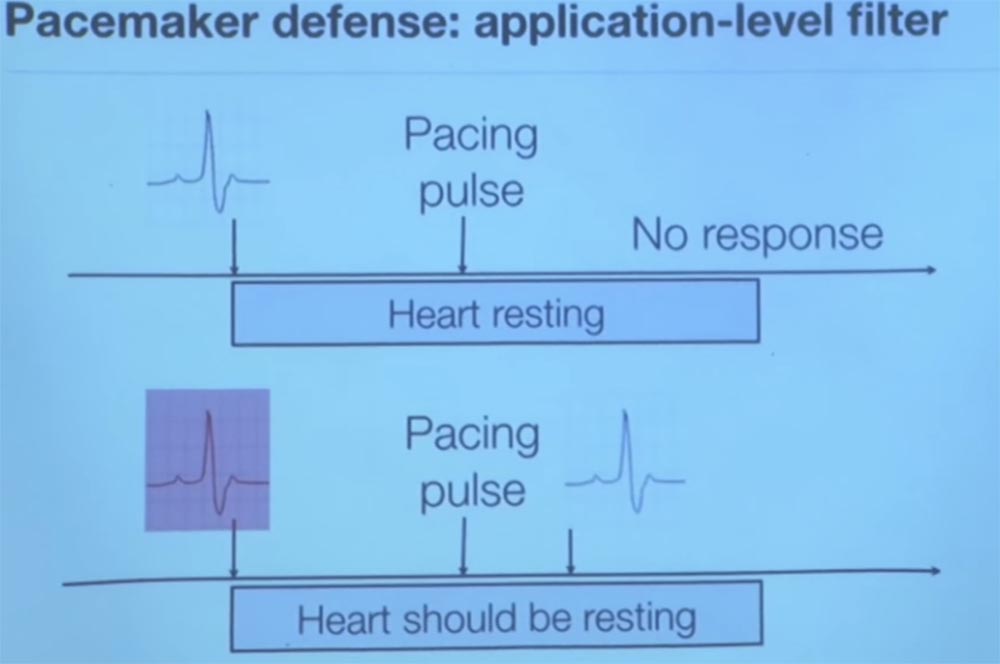

I do not think an analog filter can distinguish a good signal from a bad one, so the filtering has to happen closer to the application level. One of the defenses we tried has its limitations, but the basic idea is this. Imagine that you are a pacemaker and you want to know whether the signal you are receiving is trustworthy.

To find out, from time to time you send test pulses to keep the adversary honest. Working with electrophysiologists, we learned an interesting thing: if you send a pulse to a heart that has beaten recently, within about 200 milliseconds, the cardiac tissue is physically unable to send back an electrical response, because of how its polarization works. It is still recovering from the beat.

So we asked them what would happen if we sent an additional pulse immediately after a ventricular contraction. They told us that if the heart really had just beaten, as the sensor reported, we would get no response at all; a response is physiologically impossible.

Therefore, if we see the heart send back an electrical signal less than 200 ms after it supposedly beat, this proves that we were deceived about the previous beat. In that case, we are looking at intentional electromagnetic interference.
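
The probing check described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name, its arguments, and the use of millisecond timestamps are hypothetical, and the 200 ms window is simply the figure quoted in the lecture, not a clinical constant.

```python
REFRACTORY_MS = 200  # window quoted in the lecture; an assumption, not a device spec


def sensed_beat_is_suspicious(sensed_beat_ms, probe_ms, response_ms):
    """Return True if a response to a test pulse contradicts the sensed beat.

    sensed_beat_ms: time at which the sensor reported a ventricular beat
    probe_ms:       time at which the device sent its test pulse
    response_ms:    time at which tissue responded to the probe, or None
    """
    probe_in_window = 0 <= probe_ms - sensed_beat_ms < REFRACTORY_MS
    if not probe_in_window:
        # The probe was not sent inside the refractory window,
        # so a response (or its absence) tells us nothing.
        return False
    # Tissue that really just beat cannot answer; any answer means
    # the "beat" was probably injected interference.
    return response_ms is not None


print(sensed_beat_is_suspicious(1000, 1050, 1060))  # response inside window
print(sensed_beat_is_suspicious(1000, 1050, None))  # no response: consistent
```

A real device would also have to decide how often it is safe to probe at all, which the lecture only hints at when it says the method has limitations.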

So the basic idea is to probe the signal, relying on knowledge of human physiology to make the result more trustworthy. Another approach, which we did not explore, is to look at propagation delay, because electromagnetic interference travels at the speed of light. If you have two pacemaker sensors in different parts of the body and they see the same cardiac signal at exactly the same time, something is clearly wrong, because the electrochemical signal, like the electrical signal from the vagus nerve, takes time to travel down through the heart.
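
The two-sensor idea can be illustrated with a similarly small sketch. The threshold below is a made-up placeholder: the lecture gives no figure for the physiological delay between sensing sites, so `MIN_PLAUSIBLE_DELAY_MS` and the function name are assumptions for illustration only.

```python
MIN_PLAUSIBLE_DELAY_MS = 5.0  # hypothetical threshold, not taken from the lecture


def looks_like_emi(t_sensor_a_ms, t_sensor_b_ms,
                   min_delay_ms=MIN_PLAUSIBLE_DELAY_MS):
    """Flag an event seen by two sensors with (almost) no delay between them.

    A genuine cardiac signal propagates electrochemically and reaches
    the two sites at different times; radio interference reaches both
    at effectively the same instant.
    """
    return abs(t_sensor_a_ms - t_sensor_b_ms) < min_delay_ms


print(looks_like_emi(100.0, 100.1))  # near-simultaneous arrival
print(looks_like_emi(100.0, 130.0))  # plausible propagation delay
```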

There are other ways to try to establish whether a physiological signal is trustworthy, but this is a completely new area. Very little has been done in it so far, so it offers many interesting projects for graduate and undergraduate students.

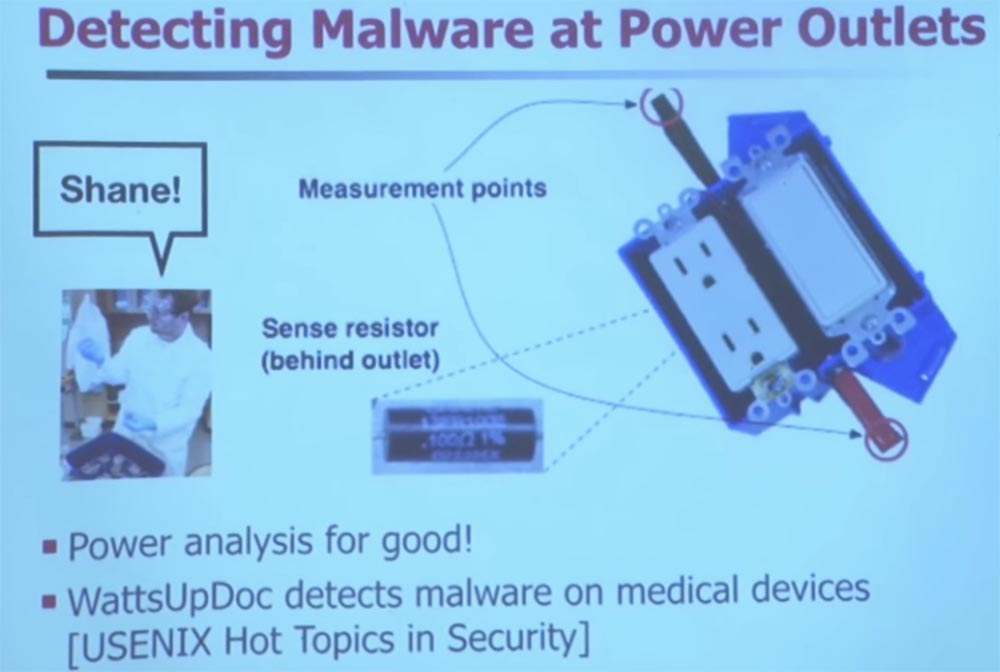

Now I want to tell you about another project, one that detects malware through electrical outlets. A few years ago, one of my students, Shane, said: "Hey, I designed this electrical outlet, and now I can tell which website you are browsing." He put a sense resistor inside the outlet to measure what is called the phase shift of the reactive power. This is basically a proxy for the load on your computer.

With this sensor, he can tell how the load on your computer's processor changes and how that affects its power draw. This is nothing new. Has anyone heard the term TEMPEST protection? I see that you have. TEMPEST has been around for many years. Since signals leak from everywhere, there is a whole art to stopping that leakage. I like to keep all my old computers; I even have a machine from the exokernel era, an old Pentium 4. Those machines came out before advanced power management was invented. So if you measured the power consumption of that old Pentium, it would stay the same regardless of CPU load.

It does not matter whether it was spinning in a tight loop or doing real computation. But if you buy a modern computer, be it a desktop or a smartphone, its power consumption depends on the workload. That is what Shane was picking up on.

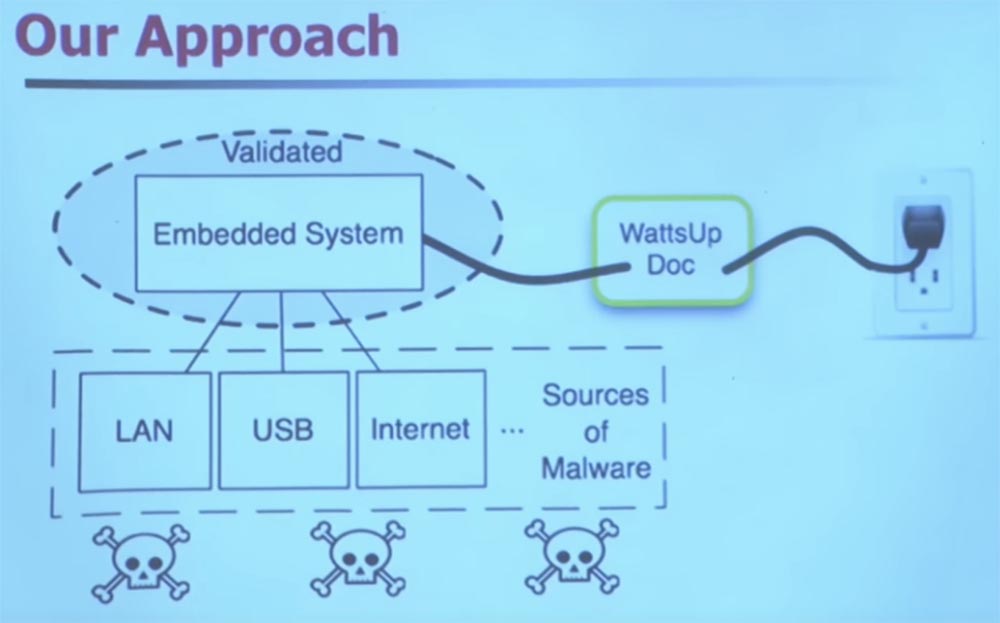

If you have an embedded system that is very difficult to change and you want to retrofit it with security features, you can use a smart power strip.

It uses machine learning to classify your power consumption in the frequency domain. It looks not at how much power you draw but at how often you draw it.

Let me give you a hint. Imagine that you have a medical device that is infected with malware. Suppose this malware wakes up every few minutes to send spam. How would that change the power consumption?

That's right: every few minutes a timer interrupt fires and the processor wakes up. This probably increases its memory activity too. It is going to run for some extra cycles, inserting additional work into what used to be a constant set of instructions.

Medical devices, unlike general-purpose computers, execute a small set of instructions, so their behavior follows a regular pattern. When malware suddenly shows up, it immediately changes the power consumption pattern, and that change can be tracked. You take a Fourier transform and apply some other "magic" involving machine learning. The devil is in the details, but you can use machine learning to detect malware and other anomalies with very high accuracy and very low error rates.
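
To make the frequency-domain idea concrete, here is a minimal Python sketch using only the standard library. It is not the project's actual method: in place of the machine-learning classifier mentioned in the lecture it uses a plain distance threshold against a known-good baseline, and the traces, function names, and threshold are all synthetic assumptions.

```python
import cmath
import math


def magnitude_spectrum(samples):
    """Naive DFT magnitudes for bins 0..N/2 (O(N^2), fine for a sketch)."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(samples))) / n
        for k in range(n // 2 + 1)
    ]


def spectral_distance(trace, baseline):
    """Euclidean distance between the magnitude spectra of two traces."""
    a = magnitude_spectrum(trace)
    b = magnitude_spectrum(baseline)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_anomalous(trace, baseline, threshold):
    return spectral_distance(trace, baseline) > threshold


# Synthetic power traces: a steady draw with one regular component,
# and the same trace with an extra burst every 8th sample, standing in
# for malware that wakes up periodically.
N = 64
baseline = [1.0 + 0.1 * math.sin(2 * math.pi * 4 * t / N) for t in range(N)]
clean = list(baseline)
infected = [x + (0.5 if t % 8 == 0 else 0.0) for t, x in enumerate(baseline)]

print(is_anomalous(clean, baseline, threshold=0.05))
print(is_anomalous(infected, baseline, threshold=0.05))
```

The periodic bursts add energy at frequency bins the baseline does not occupy, which is why a device with a small, regular instruction mix makes such a change easy to spot.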

This is a project that Shane worked on for several years. He originally built it to determine which website you were browsing. Unfortunately, he submitted it to a bunch of conferences with exactly that purpose, and everyone said, “Well, why would you ever want to do that?” However, it turned out to be very useful. He took the top 50 websites as ranked by Alexa, profiled the power consumption of his computer on each, and used the profiles to train a machine-learning model. With very high accuracy, it could then determine which site was being visited on other computers. We were really surprised that it worked at all. We still do not know exactly why it works, but we strongly suspect that the Drupal content management system is responsible.

Who still writes HTML in Emacs? Great, me too. That is why I have all those errors on my site. But a few years ago there was a big movement, especially at institutions, toward having code automatically generate web pages that follow a regular structure. For example, if you go to cnn.com, there is always an ad in the upper right corner with a Flash animation that lasts exactly 22 seconds. So when you open that page and your web browser starts rendering it, the processor's usual power consumption pattern changes in a way that is specific to that site and can be recorded.

The only site that we could not classify reliably was GoDaddy. We do not know why, and it does not worry us much, since the question is far removed from security.

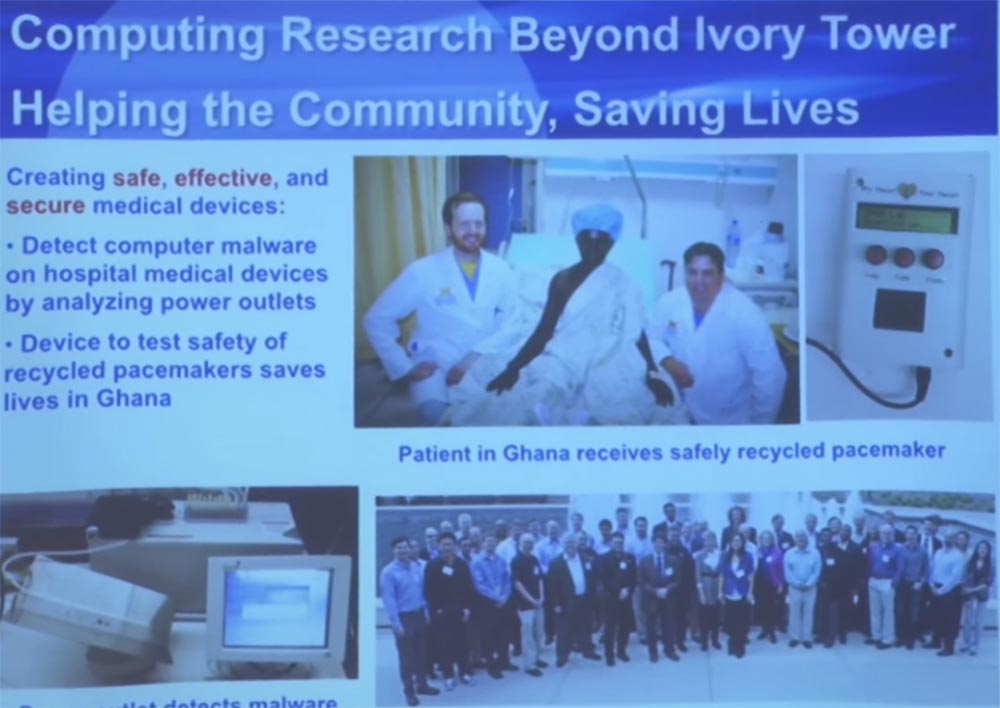

When you help colleagues at various clinics, they often come back to you for more help. One of the interesting projects we worked on dealt with providing pacemakers in developing countries, especially Ghana, where it has literally given patients a second life. If your country has no real health care system, it is very difficult to come up with, say, $40,000 for a pacemaker plus a team of surgeons.

They recover discarded pacemakers and defibrillators and then sterilize them. This is quite interesting. You have to use an ethylene oxide gas chamber for sterilization to remove all pyrogens, substances that cause fever. The devices are sterilized and then reimplanted in patients. The gentleman in the picture suffered from a slow heart rate, which was a death sentence for him. But because he was able to get a pacemaker, he gained extra years of life.

The problem they came to us with was how to know that these devices are still safe, because they had not even used them. Obviously, you can look at the remaining battery life; that is the first thing you do. If the charge is too low, the device cannot be implanted. But what about other things? For example, what if the metal has corroded a little? How can we test the device end to end to see whether it can still correctly recognize an arrhythmia?

Students in my lab built a special tester that sends the pacemaker the electrical equivalent of a cardiac arrhythmia, anomalies that differ from normal sinus rhythm.

The pacemaker thinks it is connected to a patient and starts to respond. We then check the response to see whether it really diagnoses the arrhythmia and whether it correctly delivers life-saving shocks.

This work is now going through the full FDA review process to get approval for use. It is an ongoing program called My Heart Is Your Heart. You can look up the details if you are interested.

In addition, we interact a lot with the medical device manufacturing community. Every summer we invite them to the Medical Device Security Center in Ann Arbor, along with the clinical staff responsible for running hospitals, and they sit around a table and share their complaints and concerns about medical devices. There was one company to which we simply showed all the existing problems that nobody would otherwise have brought to a medical device manufacturer. This is a new culture of communication.

I don't know whether any of you have done security analysis or reverse engineering. I see a couple of people. It is a very delicate thing, almost an art, because you are dealing with the social side of manufacturing, especially in medical device manufacturing, where lives are at stake. It can be very, very difficult to get such problems to the people who are actually able to fix them, so it often takes personal contact.

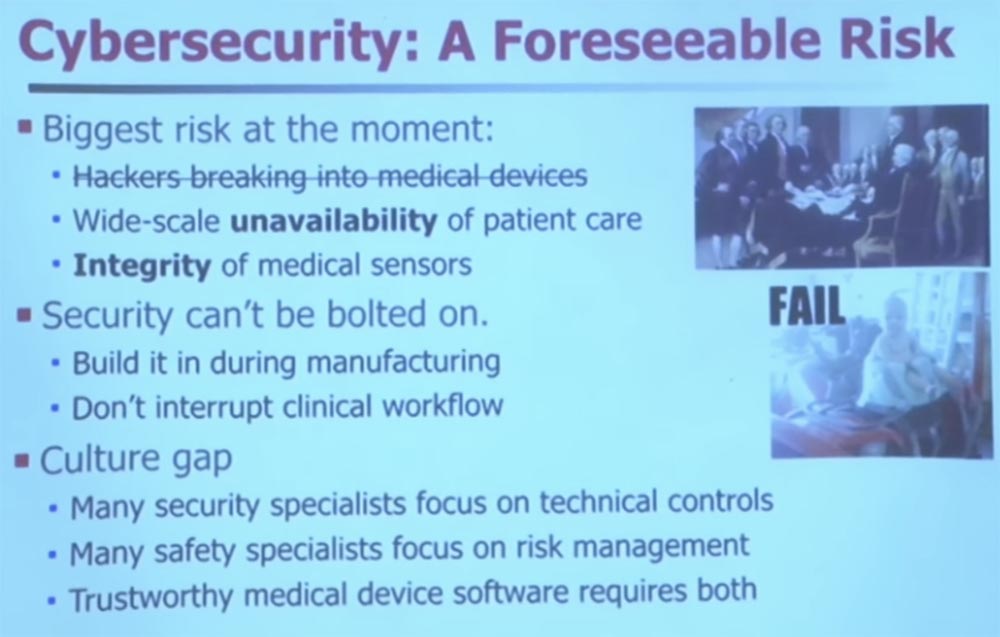

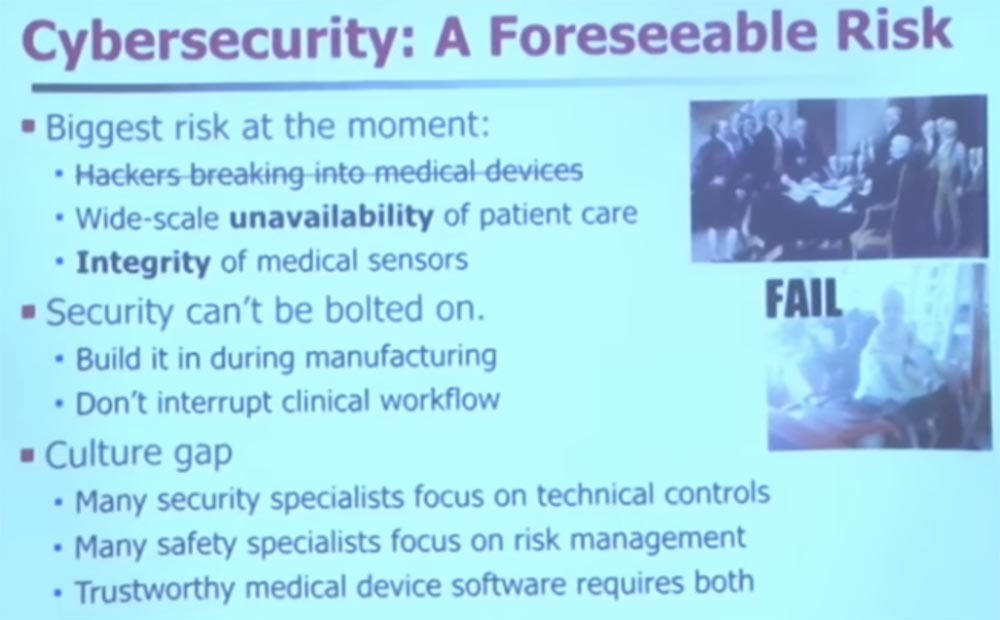

I want to leave some time for questions; I think we have five or ten minutes. But first I want to dispel a couple of myths. You have probably seen plenty of newspaper headlines and television shows about hackers breaking into medical devices. That may be a problem, but it is not the only one, and it is certainly not the most significant. When you analyze security, it is hard to treat it as the problem, because there are two more important ones.

The first is widespread unavailability of devices, which makes patient care unavailable. Forget about external adversaries: what do you do if malware simply gets into medical devices by accident, because they all run the same operating system? What happens when 50,000 infusion pumps fail at the same time? In that situation it is very difficult to deliver proper patient care.

One of my colleagues wrote to me that his catheterization lab had been shut down.

Catheterization labs are a relatively new specialty: a special type of operating room for minimally invasive surgery. The clinic had to shut its lab down because a nurse accidentally brought in a virus on a USB drive from which she wanted to upload family photos to Yahoo. Somehow the malware got in and infected the catheterization lab, so they had to close it and stop all work.

So if you are waiting for an angioplasty, that particular medical center is now unavailable to you, and you will have to go to one of the backup centers. The availability of medical equipment to clinical staff is one of the key things that is often forgotten from a security point of view.

The second important issue is sensor integrity. If your medical device is infected with malware, its behavior changes in ways its designers could not have foreseen. Here is a very simple example. Suppose some malware gets in and sets a timer. It wakes up periodically to send network packets and spam, and that takes time. What happens if your medical device assumes that it has complete control over the sensor interrupts it uses to collect readings, and then some of those interrupts are missed? Perhaps this sensor regulates the device's power parameters, but because of the malware the next reading is missed. As a result, you can start misdiagnosing patients, because the device received incorrect data from the sensor.
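
One way to picture the missed-interrupt problem is a check that notices when sensor readings stop arriving on schedule. The sketch below is a hypothetical illustration, not something described in the lecture: it assumes each reading carries a timestamp in milliseconds and that the sensor is supposed to fire at a fixed period.

```python
def find_missed_readings(timestamps_ms, period_ms, tolerance_ms):
    """Return (previous, current) timestamp pairs whose gap is too large.

    A gap larger than period_ms + tolerance_ms suggests that at least
    one sensor interrupt was not serviced in time, for example because
    something else was occupying the processor.
    """
    gaps = []
    for prev, cur in zip(timestamps_ms, timestamps_ms[1:]):
        if cur - prev > period_ms + tolerance_ms:
            gaps.append((prev, cur))
    return gaps


# Readings expected every 10 ms; one is missing between 20 and 40.
print(find_missed_readings([0, 10, 20, 40, 50], period_ms=10, tolerance_ms=2))
```

Detecting the gap does not recover the lost reading, but it lets the device mark its output as unreliable instead of silently presenting it as valid.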

There have been several reports of a high-risk pregnancy monitor that was infected with a virus and gave incorrect readings. Fortunately, a highly skilled clinician can look at such readings and say, “That is nonsense; my device cannot be producing numbers like that.” Still, note that we erode the safety margin of sensors if we do not ensure their integrity.

As I already mentioned, it is very difficult to make any changes to medical equipment after it has been released. Do you think it is hard to change software at Internet scale?

Try it on medical devices. I met a guy from a hospital whose MRI machine still runs Windows 95. I have a pacemaker programmer that ran OS/2 and was only recently updated to Windows XP.

So clinicians have really old equipment, and it is very difficult to change its security after the fact. Not impossible, but difficult, because you have to interrupt the clinical workflow.

If you want to develop and sell medical devices, or do anything in health care security, you should first go and look inside an operating room. That is what we did, because only there can you see the strange things that happen with medical devices. I took all of my students to observe a pediatric surgery.

While they were watching the operation, they noticed one clinician checking Gmail on one of the medical devices. The staff also wanted to calm the patient, so they went to the Pandora website to play music.

Actually, I was at the dentist the other day, and she had Pandora running too. A beer ad popped up on the monitor just as she was looking at the X-rays of my teeth. I said, “Where did the Dos Equis come from? Did I drink that much?” She replied, “No, it is the same web browser; I just click over to another tab.”

So in medicine, personal use is often mixed with work, and although this is not malicious, it still opens security holes. It stays out of clinicians' field of view and out of their thinking. Wash your hands; do not touch anything with your gloves once you have put them on: that is firmly drilled into their minds. But security hygiene really can be described as "out of sight, out of mind." It is not yet part of medical culture. They do not even realize that they should be asking themselves questions such as: can I run Pandora on the same device that controls my X-rays?

So the main thing in designing medical devices is not to interrupt the clinical workflow, because that is when mistakes happen. You want the clinical workflow to be regular and predictable, with decisions that are easy to make. Suppose you add a new dialog box asking for a password. Do you think there might be a problem in the operating room if you ask the surgeon to enter a password, say, every ten minutes? Are such distractions acceptable? The surgeon has to put down the scalpel, take off the gloves, type the password... and in that time you could lose the patient.

So if you are a security engineer, you have to take into account the special conditions of dealing with malware in a clinical setting, which, oddly enough, not everyone understands. There are certainly very talented engineers who do, but there are not enough of them.

Another big problem is that, as I have noticed, security specialists tend to focus on the mechanics of security controls. You may know cryptography, CBC mode, public-key cryptography; that is all great. You know how to prevent problems, and you know how to detect them.

The problem is that most people in the medical world think in different terms, called “risk management.” Let me explain. Risk management means that you weigh all the risks against the benefits and ask whether they balance. If I take a given action, will it improve my overall risk?

For example, suppose you want to decide whether to put passwords on all of your medical devices. A security specialist will say: of course you should, you must authenticate!

A safety-minded person might say: “Well, no, wait a minute. If we put passwords everywhere, we have to worry about sterility. How do we decide how often the session times out? What about emergency access? What if we forget the password? We want to be sure we can get a response within 30 seconds.” So clinicians may make a different decision, for example not to use passwords at all. In fact, many hospitals have no access control on medical records. Instead, they have what is called audit-based access control. If you look at something you had no right to look at, they come after you afterward. They do this because they know how hard it is to predict in advance exactly which data you will need in the course of clinical work.
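
Audit-based access control can be sketched as a record store that never blocks a read but logs every one for later review. Everything in this example is a hypothetical illustration: the class name, the care-team mapping, and the review rule are assumptions, not a description of any real hospital system.

```python
from dataclasses import dataclass, field


@dataclass
class AuditedRecords:
    """Record store that allows every read and reviews access afterward."""
    care_team: dict   # patient id -> set of clinician ids expected to read it
    records: dict     # patient id -> chart contents
    audit_log: list = field(default_factory=list)

    def read(self, clinician, patient_id):
        # Never block: in an emergency the clinician gets the data at once.
        self.audit_log.append((clinician, patient_id))
        return self.records[patient_id]

    def flagged_accesses(self):
        # After the fact, list reads by people outside the care team.
        return [(c, p) for c, p in self.audit_log
                if c not in self.care_team.get(p, set())]


store = AuditedRecords(care_team={"p1": {"dr_a"}}, records={"p1": "chart"})
store.read("dr_a", "p1")
store.read("dr_b", "p1")
print(store.flagged_accesses())
```

The design trades prevention for availability: nobody is locked out at the bedside, and accountability is enforced by reviewing the log rather than by a password prompt.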

Thus, under the risk management approach, whether to deploy a security control depends on everything you know about the clinical process. In the risk management picture, you may decide not to deploy anything, because the control itself could cause harm. Under either way of thinking, however, trustworthy medical device software is required.

I will end the lecture here. I think there are many interesting things you can do. You are taking a first-rate security course here, and I encourage you to go out and use the tools you have learned.

If you are thinking about where to go after graduation, whether industry or graduate school, think about medical devices, because they need your help. They need a lot of smart people there, and the one thing they are missing is you. There is a lot of interesting work and much more still to be done.

I think we have another 5-10 minutes, and I would be happy to answer a few questions. Or I can go deeper into the subject; I have some fun videos I can show. But let me stop here and see whether you have any questions.

Audience: How are pacemakers and defibrillators related in purpose, and can they be reused?

Professor: Indeed, defibrillators and pacemakers are related, because they perform similar tasks. A defibrillator delivers large shocks, and a pacemaker delivers small ones. In the US, reimplanting them is illegal. It does not matter that you can do it; it is simply illegal. But in many developing countries, reuse of these implants is not prohibited. If you look at it from the risk management side rather than from the security-controls side, reimplanting and reusing these devices may actually improve public health, because in developing countries there is simply no other option. And this is not my project; it is a project we are helping with.

In this particular case, patients really have no choice; without a device, it is effectively a death sentence. Sterilizing such an implant is quite difficult. Various techniques and scientific methods are used to sterilize it properly and get rid of pathogens completely. Because the devices have been in blood, they are first cleaned with an abrasive cleaner and then treated with ethylene oxide, which destroys most, if not all, pathogens. There is a whole procedure to verify that sterilization succeeded: you place special test samples with known quantities of pathogens in the chamber together with the defibrillator, and after sterilization you check whether all the microorganisms were destroyed.

Audience: You say that sensor integrity is a greater risk than a hacker attack, but most of the sensor interference examples you showed were the result of deliberate interference. So that is a kind of security threat as well.

Professor: The question is why I stressed sensor integrity rather than hacking when everything I showed was about hacking. That is selection bias on my part. I chose those cases, but that does not mean they are statistically significant. I divide the problems into three periods: past, present, and future.

At present, most of the problems we see from malware, in our rather rudimentary surveillance of medical devices, arise because viruses accidentally get into devices and then cause errors and malfunctions.

But we know that a deliberate adversary may appear in the future; one simply has not materialized yet. The closest example comes from the news, and I do not know whether it is true. I believe it was the New York Times that reported on the health care provider CHS, which brought in the computer security company Mandiant. They believe that a certain nation-state was out to steal their medical records. They do not know exactly why it wanted them, but they are sure the threat is real. And nation-states are powerful adversaries, right? If you are up against a nation-state, you can just give up, because probably no control on your devices will help you.

But here is what worries me. If a nation-state is very interested in obtaining a certain piece of medical information and, in the process, accidentally affects the software of medical devices, this may later compromise the accuracy of their readings.

So in the future there may be cases of custom malware, but I think it would take real effort from someone who truly wants to do harm. I hope there are not too many such people, although they do exist. Meanwhile, there are people who write malware without realizing that it ends up in medical devices in hospitals, and that still causes problems.
