Palantir: Object Model

Sriyas Vijaykumar, Lead Implementation Engineer, talks about another part of the inner workings of the Palantir system.

Together with Edison, we continue to investigate the capabilities of the Palantir platform.

How do organizations manage data at the moment?

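In existing systems, certain artifacts are quite common, and many of them, if not all, are familiar to you:

- users often leave notes for themselves in the file name, so we come across names such as send_by_mail.Friday.10_am.do_not_delete!!;

- every change to the ontology requires modifying the entire schema;

- data from different sources cannot be examined together in the same environment, so you can have a database of people and a store of message traffic that you have to examine separately;

- data resynchronization is impractical or impossible, even though it is often necessary;

- information cannot be traced to its source.

What do we do fundamentally differently at Palantir?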

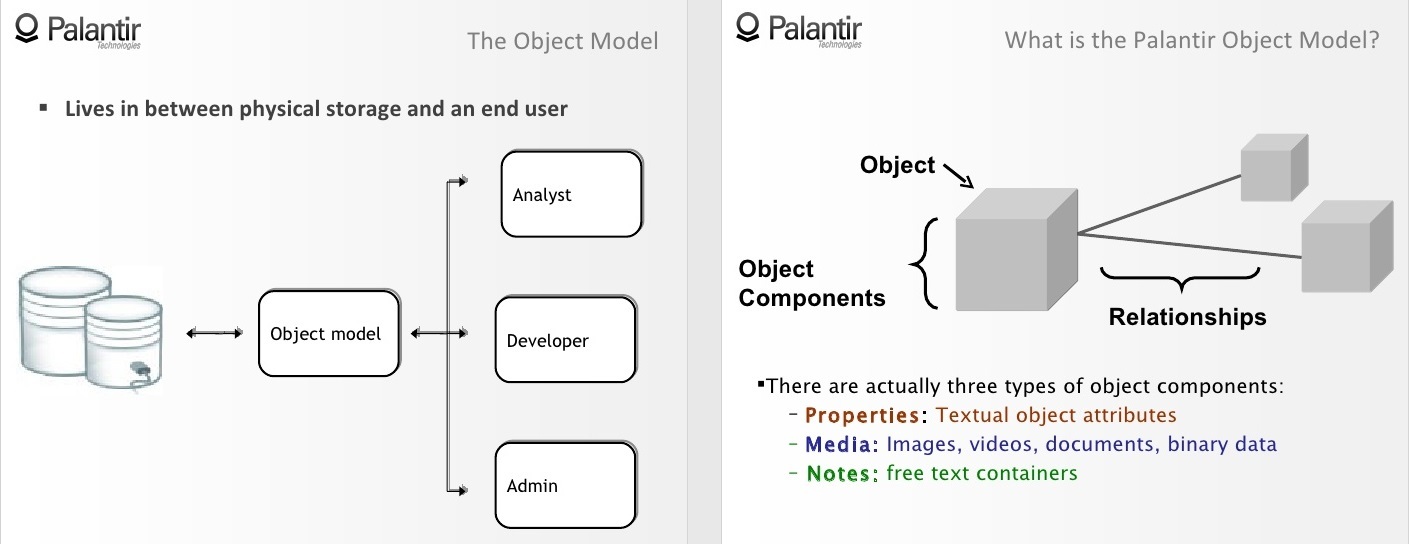

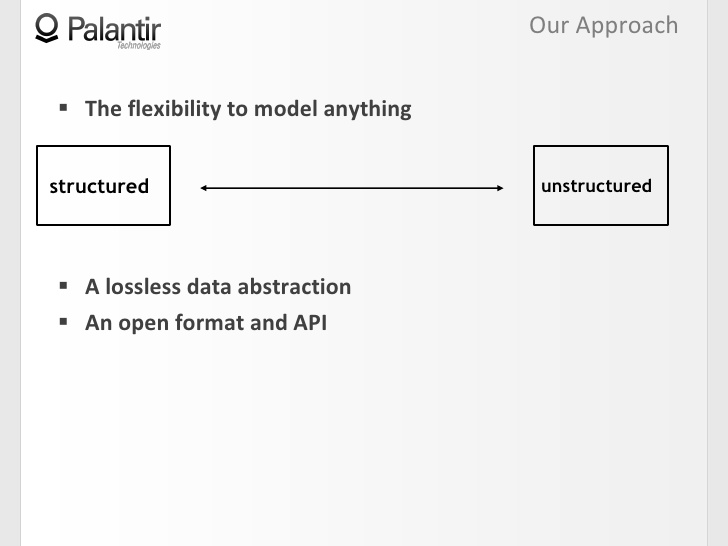

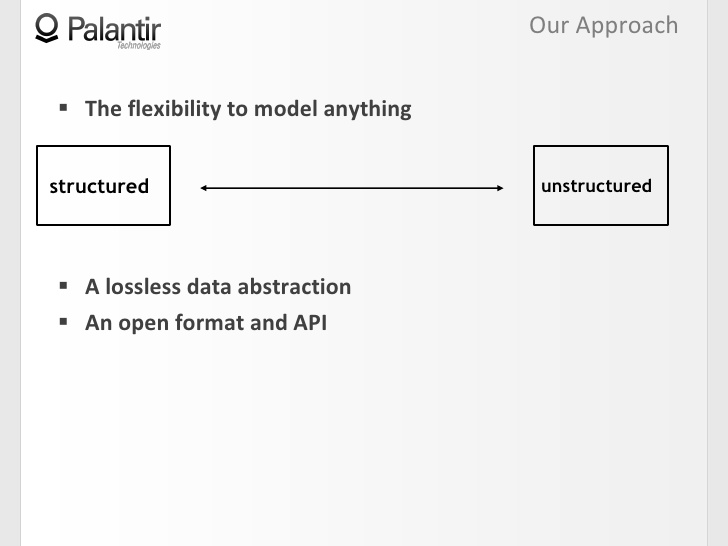

When we developed the system, we worked a lot with community feedback. The first thing we designed for was maximum flexibility, so that the system can model anything.

Flexibility means the ability to work with any type of data in one common space: from highly structured data, such as databases with defined relationships, to unstructured data, such as a repository of message traffic, and everything in between. It also means the ability to create many different fields of investigation without being tied to a single model. Like the organization itself, these fields can change and evolve over time.

The next thing we designed for was lossless data compilation: we need a platform that tracks every piece of information back to its source or sources. In a multi-platform system, access control is also important, especially if the system supports the full range of data operations.

2:26 The next thing we designed for was an open format and an open API. A genuine data platform lets you bring data into the system, work with the data inside it, and export the data so that you can perform whatever other operations you need.

2:38 The object model is the core of Palantir, and one way or another, it can be seen in each of our videos.

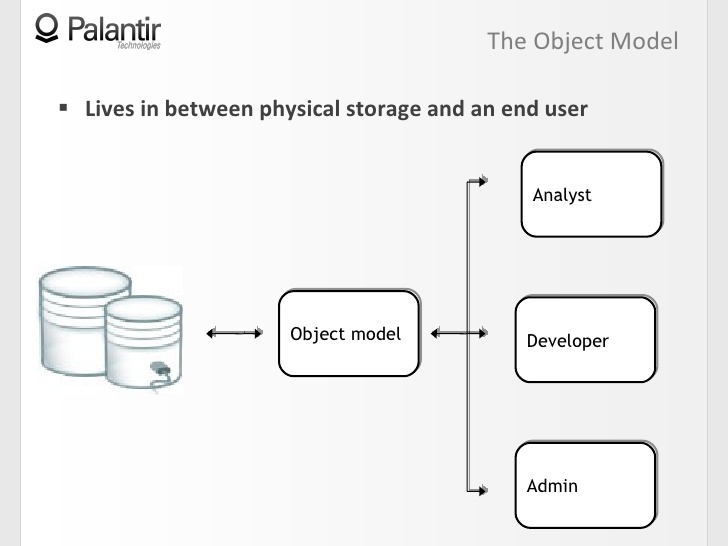

2:45 Now let's see how the model fits into the big picture.

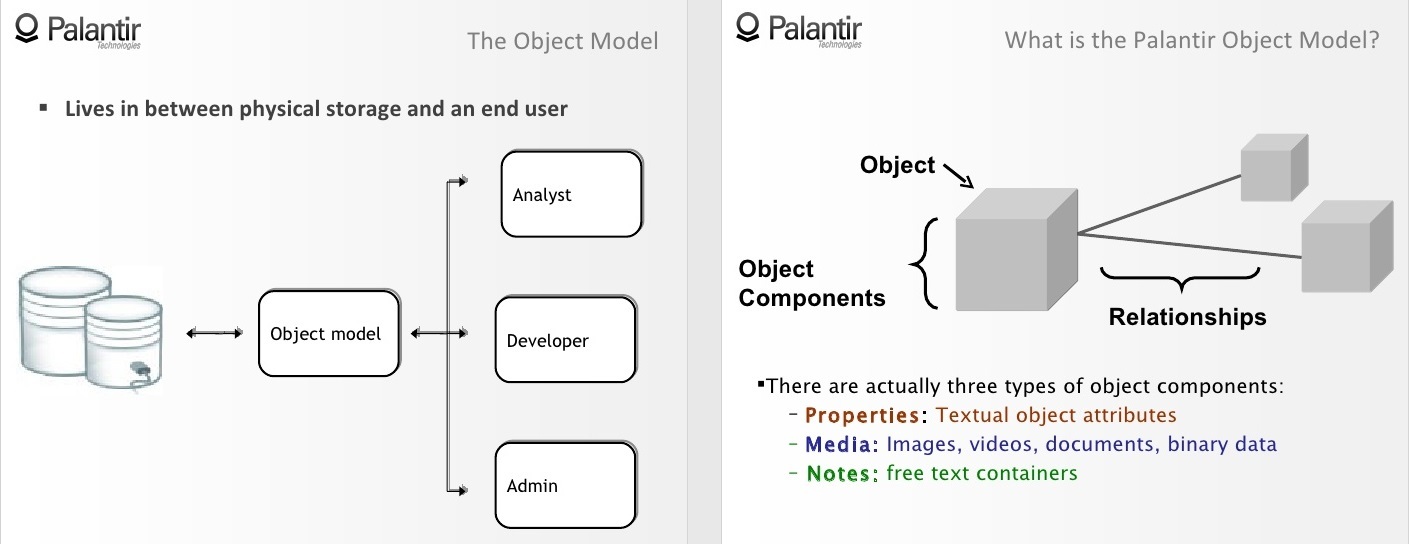

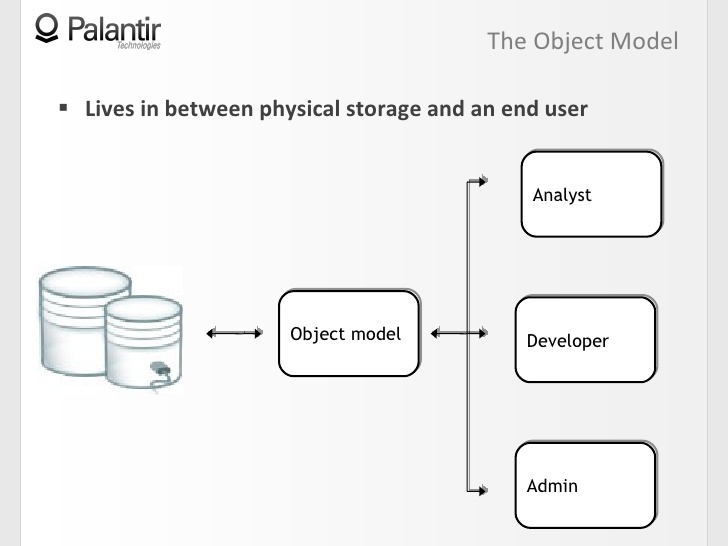

2:50 The object model is an abstraction layer between the physical data store and the end user. In our case, the end user can be a workplace analyst, a developer, or an administrator.

3:07 Through the object model, all users interact with data as first-class conceptual objects, instead of joining tables, creating views, and rerunning stored procedures again and again.

3:22 Now that we have an understanding of how the model looks in the big picture, let's move on to the structure. What are these objects?

3:36 First, an object is an empty container, a shell that we fill with attributes and known information. Examples of objects include people, places, telephones, computers, events (such as meetings or phone calls), documents, emails, and more.

3:54 All these objects have what we call object components.

3:58 There are four types of object components. Three of them are:

- properties, that is, text attributes such as names, email addresses, and others;

- media files, which let you associate images, videos, text, and any other binary data with the object;

- notes, that is, free-text fields for analysts.

4:18 So now we have objects in which we store information, and relationships that connect the objects.
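The structure described so far can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class and field names (`ModelObject`, `Property`, `Media`, `Note`, `Link`) are hypothetical and not Palantir's actual API, and treating the relationship as the fourth component type is an inference from the talk rather than something it states outright.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Property:
    """A text attribute such as a name or an email address."""
    key: str
    value: str


@dataclass
class Media:
    """Binary data (image, video, text file) attached to an object."""
    filename: str
    content: bytes


@dataclass
class Note:
    """Free text written by an analyst."""
    text: str


@dataclass
class ModelObject:
    """An empty container that is gradually filled with components."""
    object_id: str
    properties: List[Property] = field(default_factory=list)
    media: List[Media] = field(default_factory=list)
    notes: List[Note] = field(default_factory=list)


@dataclass
class Link:
    """A connection between two objects; it carries no meaning by itself."""
    source_id: str
    target_id: str


# Hypothetical usage: two objects and one relationship between them.
person = ModelObject("obj-1")
person.properties.append(Property("Name", "Zach"))
person.notes.append(Note("Met at the conference."))
company = ModelObject("obj-2", properties=[Property("Name", "Palantir")])
link = Link(person.object_id, company.object_id)
```

Note that nothing in these classes says what a person or a company is; the container and its components are deliberately free of meaning.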

4:28 This system of objects and object components is what makes up the object model. The reason we can model so many "fields" (the original term is "domain": a field, sphere, or area; most likely this refers to a separate workspace within a shared Palantir) is that we did not build any semantics into the object itself.

4:42 Note that I did not say that relationships must be unifying, governing, or hierarchical; the object model exists prior to these concepts. Each organization defines its own semantics using a dynamic ontology.

4:56 Let's see how the object model and dynamic ontology interact, creating the semantics necessary for the organization.

5:03 Let's use an example. Here we have a very simple graph, consisting of two objects containing some components, and relations.

5:12 There are no semantics here yet. An organization now chooses the types of objects, properties, and relationships that it needs.

5:22 If I work in network security, these can be routers and hosts; if I work in counter-terrorism, these can be terrorist organizations, money, and group members.

5:38 If you now combine the object model with the ontology, you get the expected semantics, for example: Zach works at Palantir.

5:50 A graph that is identical from the object model's point of view can have a completely different meaning: it can, for instance, indicate the presence of a document.

6:00 By abstracting the semantics away from the structure, we gained the ability to create a wide range of "fields" in an accessible and flexible way.
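The separation of structure from semantics can be illustrated with a minimal Python sketch. Everything here is an assumption made for the example: the dictionary layout, the type names, and the `read_graph` helper are hypothetical and do not reflect how Palantir stores a dynamic ontology.

```python
# Structure only: two objects joined by one link. Nothing here says what
# the objects or the link mean.
OBJECTS = ["a", "b"]
LINKS = [("a", "b")]

# Two hypothetical ontologies assign types to the same structure.
employment = {
    "object_types": {"a": "Person", "b": "Organization"},
    "link_type": "works at",
    "titles": {"a": "Zach", "b": "Palantir"},
}
documents = {
    "object_types": {"a": "Person", "b": "Document"},
    "link_type": "appears in",
    "titles": {"a": "Zach", "b": "Report"},
}


def read_graph(ontology):
    """Turn every link into a sentence using the ontology's vocabulary."""
    return [
        f"{ontology['titles'][src]} ({ontology['object_types'][src]}) "
        f"{ontology['link_type']} "
        f"{ontology['titles'][dst]} ({ontology['object_types'][dst]})"
        for src, dst in LINKS
    ]


print(read_graph(employment))
print(read_graph(documents))
```

The `OBJECTS` and `LINKS` values never change between the two calls; only the vocabulary applied to them does.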

6:09 There is a price to pay for flexibility, and this price should be very familiar to you.

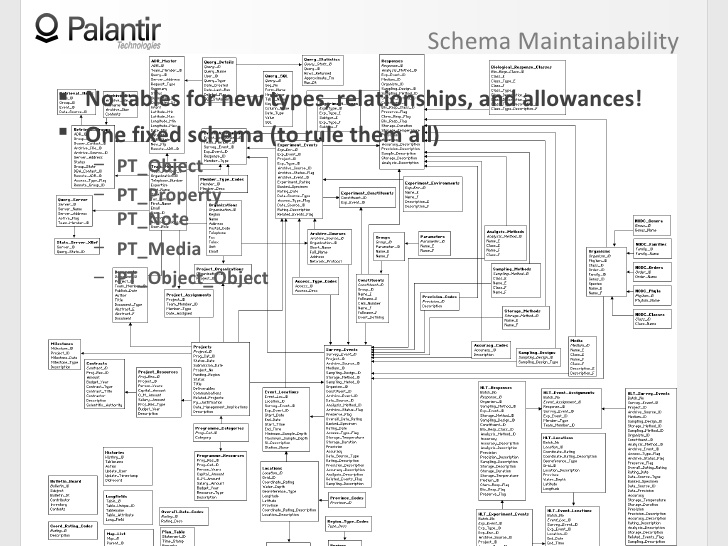

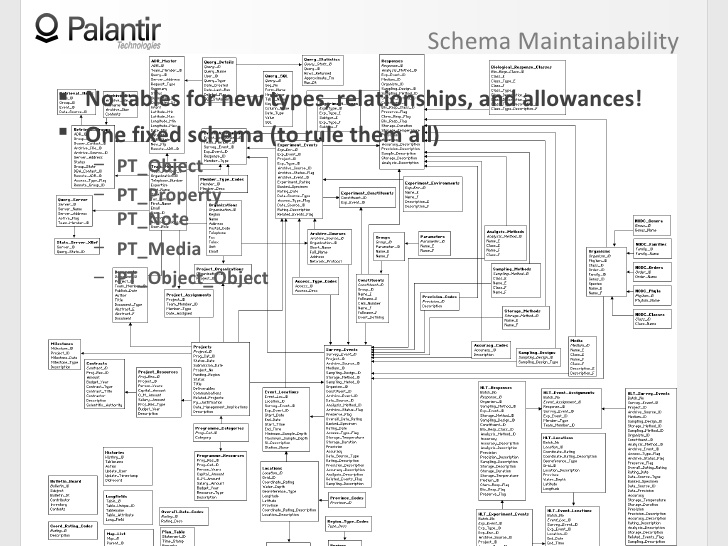

6:22 The price of making a system flexible is almost always a loss of schema maintainability.

6:29 You can add a new object type or relationship type, but it will cost you five separate linked tables, with explanations, directions, and more.

6:41 So it's really hard to maintain, and that's not what you really want from a data platform.

6:45 In Palantir, we take the opposite approach: there is no need to create new tables for object types, relationships, or constraints.

6:57 To be more precise, there is a single schema in Palantir that we use in every organization and every deployment.

7:02 There are five tables that hold the content of any object and any object component, whether you are modeling documents from message traffic or a highly structured database.
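The idea of a single fixed schema can be sketched with Python's built-in `sqlite3` module. The talk does not name the five tables, so the table and column names below are purely an assumption made for illustration (one table for objects and one per component type); the point is only that a new object type becomes a new row, not a new table.

```python
import sqlite3

# Hypothetical fixed schema: the real five tables are not named in the talk.
SCHEMA = """
CREATE TABLE object   (id INTEGER PRIMARY KEY, type TEXT NOT NULL);
CREATE TABLE property (object_id INTEGER, type TEXT, value TEXT);
CREATE TABLE media    (object_id INTEGER, type TEXT, content BLOB);
CREATE TABLE note     (object_id INTEGER, body TEXT);
CREATE TABLE link     (source_id INTEGER, target_id INTEGER, type TEXT);
"""

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)


def add_object(obj_type, **properties):
    """Insert an object of any type; the type is data, not a table."""
    cur = conn.execute("INSERT INTO object (type) VALUES (?)", (obj_type,))
    object_id = cur.lastrowid
    for key, value in properties.items():
        conn.execute(
            "INSERT INTO property (object_id, type, value) VALUES (?, ?, ?)",
            (object_id, key, value),
        )
    return object_id


router = add_object("Router", hostname="edge-1")
person = add_object("Person", name="Zach")  # a new type, no new table needed
conn.execute("INSERT INTO link VALUES (?, ?, ?)", (person, router, "administers"))

# The set of tables is the same before and after adding new types.
tables = [row[0] for row in conn.execute(
    "SELECT name FROM sqlite_master WHERE type='table' ORDER BY name")]
print(tables)
```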

7:15 So, looking at how the object model fits into the big picture, at the structure of the object model itself, and at how it interacts with the dynamic ontology, we see high flexibility and the ability to create many "fields".

7:29 Now let's talk about how we implemented lossless data mining.

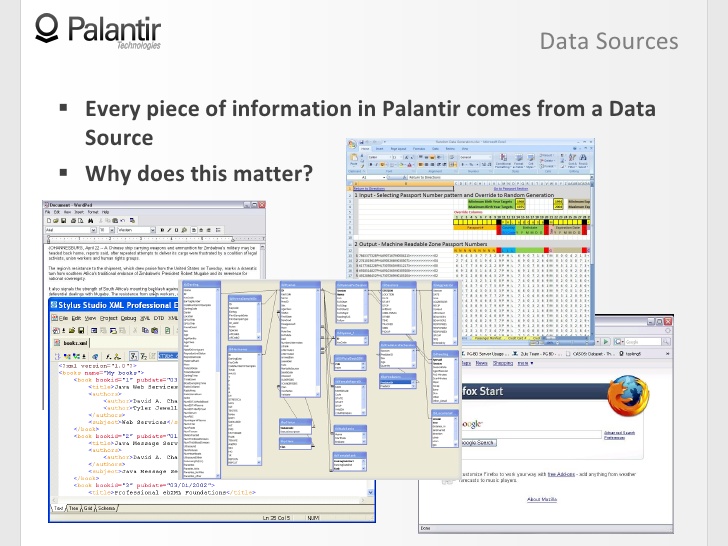

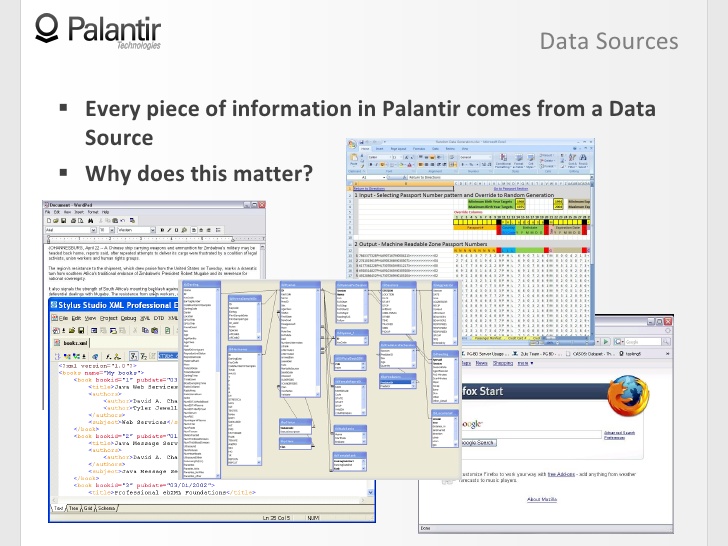

7:35 The most important thing here is the data sources, because all the information in Palantir came from sources.

7:43 A source can be anything: tax documents, spreadsheets, XML files, databases, web pages. Even information created by analysts themselves in the course of their work is still based on information from sources.

7:58 Why is this important? When you have a product, you need to be able to trace where it came from and what it is based on, go back to the sources, and make sure nothing has been distorted. This is the only way to be confident in your conclusions.

8:11 Now, let's look at the relationship between data sources and the object model that I described to you.

8:17 Each component in Palantir contains a record of its data source. This record associates information with a source or multiple sources.

8:25 So if I want to justify my graph, I can see that these two objects are supported by sources A, B, and C.

8:34 You can also see that several sources support a single component of the object. I am therefore more confident in this piece of information because it relies, for example, on data from both a message traffic store and operator logs. Since both sources confirm the information, I can build on it.

9:00 A data source record says more than just which source was used. For an unstructured source such as a document, the record points to a specific place in the document. For a structured database, the source record can point to a primary key.
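A data source record of this kind might be modeled as follows. This is a sketch under assumptions: the class names, the `locator` string format, and the source identifiers are all hypothetical and chosen only to mirror the description above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class SourceRecord:
    source_id: str  # which data source backs the component
    locator: str    # where in the source: a text span or a primary key


@dataclass
class Property:
    key: str
    value: str
    sources: List[SourceRecord] = field(default_factory=list)

    def supporting_sources(self):
        """Distinct sources that back this property, in sorted order."""
        return sorted({record.source_id for record in self.sources})


# Hypothetical example: one property confirmed by two independent sources.
name = Property("Name", "Zach", [
    SourceRecord("message_traffic", "document=msg-0042;chars=120-124"),
    SourceRecord("operator_db", "primary_key=981"),
])
print(name.supporting_sources())
```

Counting the distinct entries returned by `supporting_sources()` gives a rough measure of how well a piece of information is corroborated.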

9:17 Now that we have seen how data sources are related to objects, let's see what operations we can perform here.

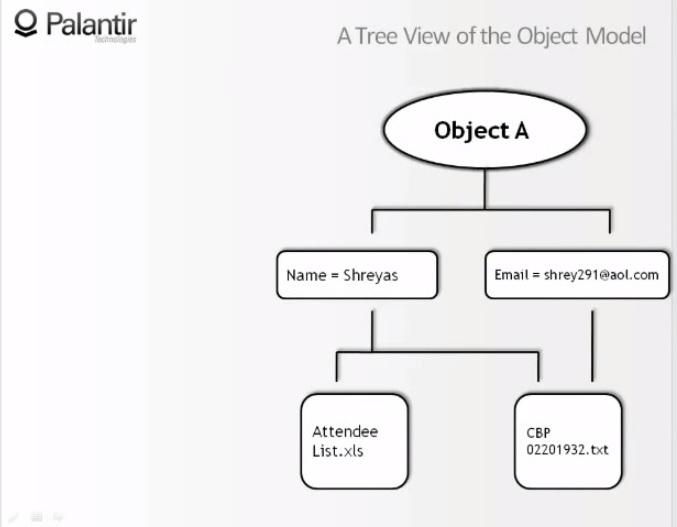

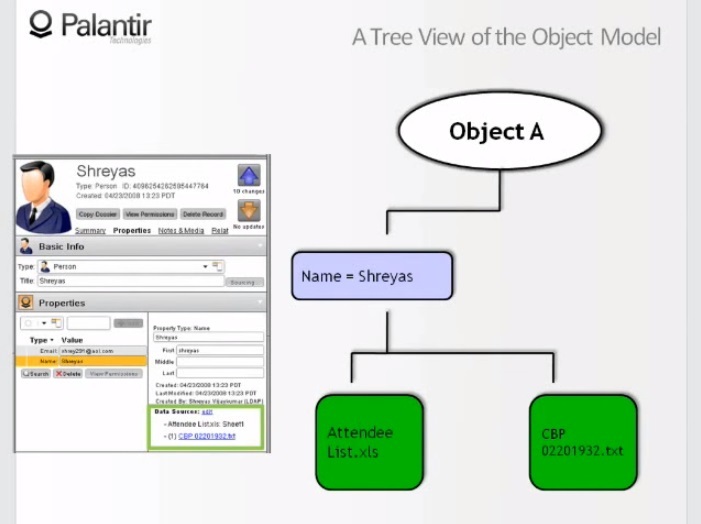

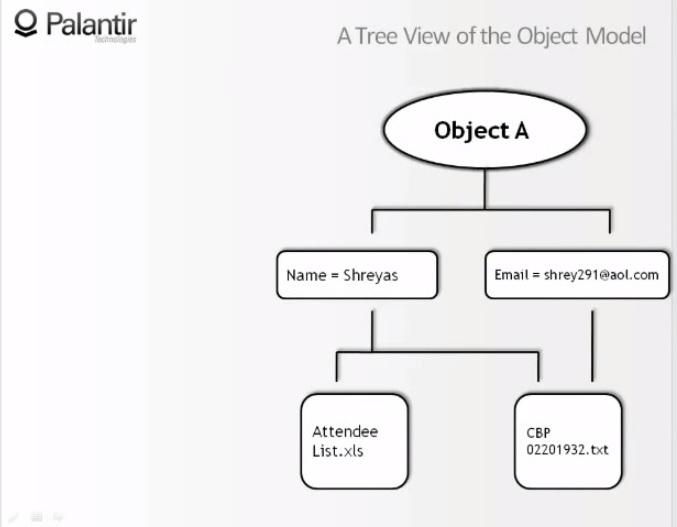

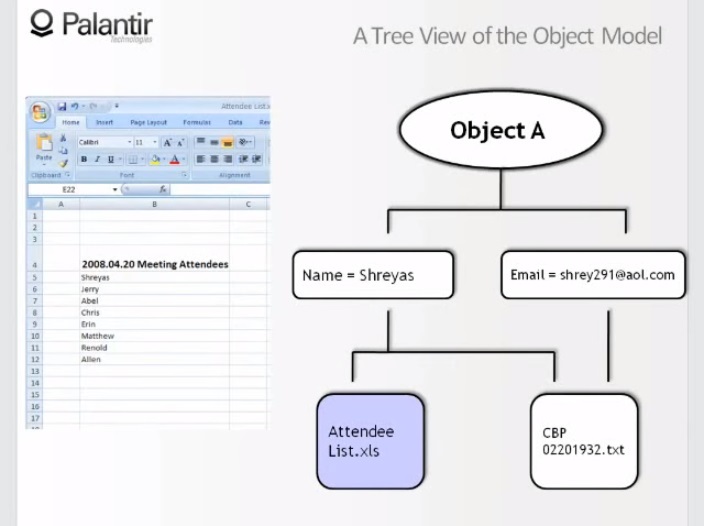

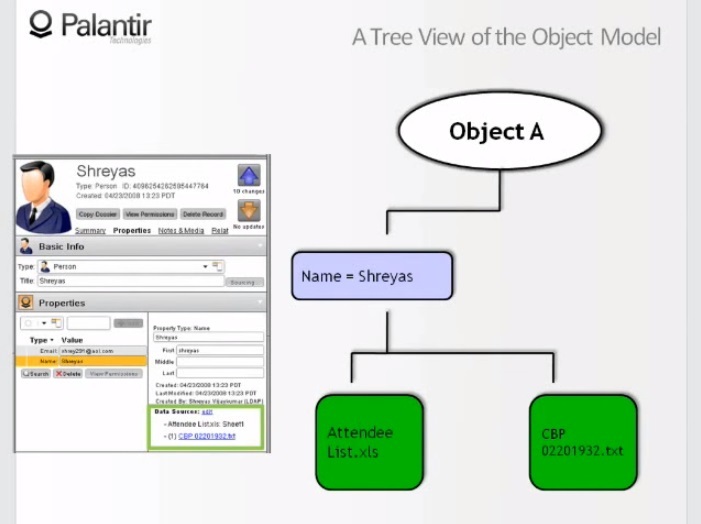

9:23 Here the graph is simplified to the extreme: it consists of a single object with two components, the properties "Name: Sriyas" and "Email: shrey291@aol.com".

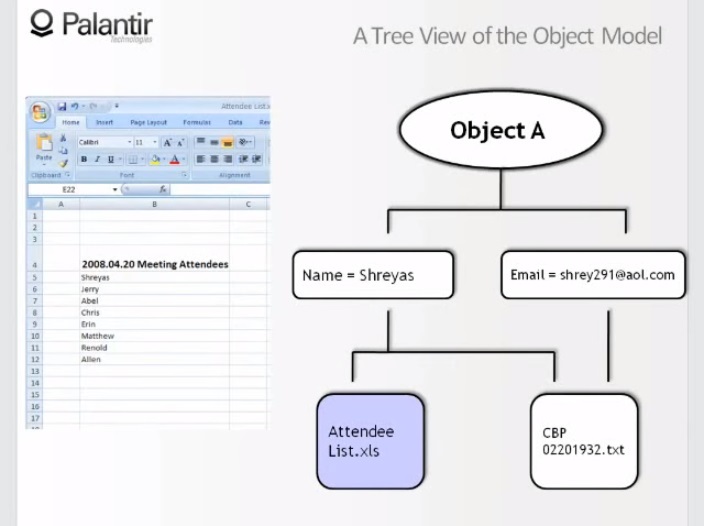

9:37 We can see that the name is taken from the attendee spreadsheet (the list of visitors) and from a text document, while the email is taken only from the text document.

9:46 Let's look at these sources. First, the list of visitors: we see that the name Sriyas is taken from a raw file, something like an extract from another source.

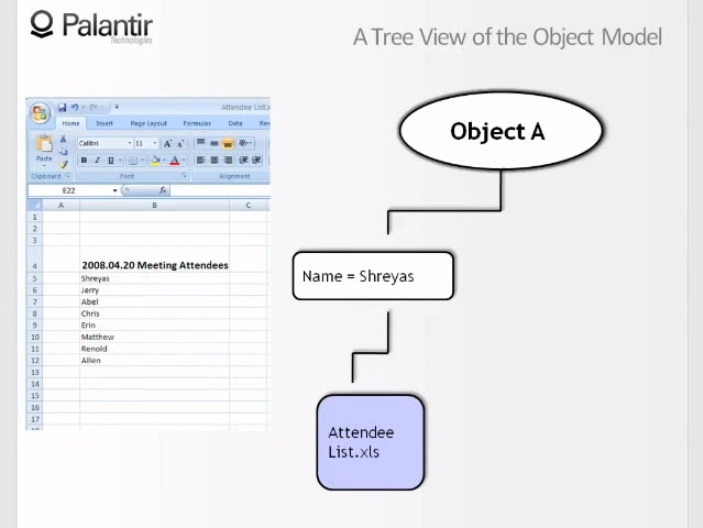

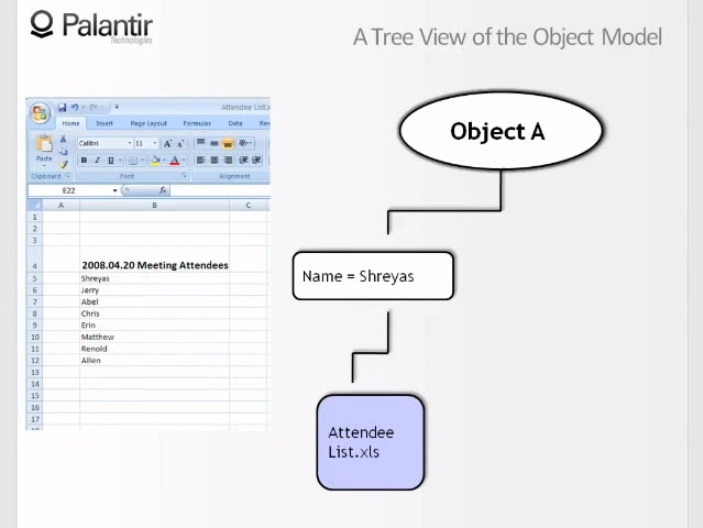

10:00 Imagine that the second source, the text file, has been recalled, or that the user no longer has access to it. What will the object look like now?

10:11 If we remove the text file, only the name component remains. The object has effectively taken on a new form, because its second property, the email, is no longer supported by any source.

10:25 Let's go back to the original view and look at the other source.

10:30 Here is the text document; both the name and the email are extracted from it. If the list of visitors were revoked, or we were denied access to it, our object would still look the same, because both components are still backed by the text document.
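The two scenarios above can be reproduced with a small Python sketch. The function name `visible_properties` and the source identifiers are hypothetical; the rule it implements (a component is shown only while at least one accessible source supports it) is taken from the walkthrough.

```python
def visible_properties(properties, accessible_sources):
    """Keep only properties backed by at least one accessible source."""
    return {
        key: value
        for (key, value), sources in properties.items()
        if sources & accessible_sources
    }


# The object from the example: each property maps to its supporting sources.
properties = {
    ("Name", "Sriyas"): {"attendee_spreadsheet", "text_document"},
    ("Email", "shrey291@aol.com"): {"text_document"},
}
all_sources = {"attendee_spreadsheet", "text_document"}

# Scenario 1: the text document is recalled, so the email loses its support.
without_text = visible_properties(properties, all_sources - {"text_document"})

# Scenario 2: the spreadsheet is revoked, but the text document backs both.
without_sheet = visible_properties(
    properties, all_sources - {"attendee_spreadsheet"}
)

print(without_text)
print(without_sheet)
```

The same check works for access control: passing the set of sources a given user may see yields that user's view of the object.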

10:55 We have now seen what happens when changes occur at the source level. We can also start from the properties and see where they come from, which is a useful capability.

10:53 Let's take a look at the property "Name: Sriyas".

11:07 We see that the name is taken from the list of visitors and from a text file. It is important for the analyst to understand where the information comes from.

11:18 It is just as important that everything we have discussed is used to control access to information and to protect information sources. It also lets us perform other operations.

11:30 For example, this approach makes it easy to support multiple properties of the same type. What does adding a new property mean for the property "Name: Sriyas"?

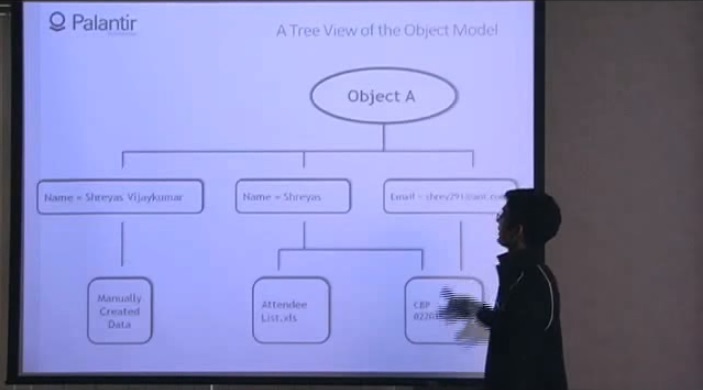

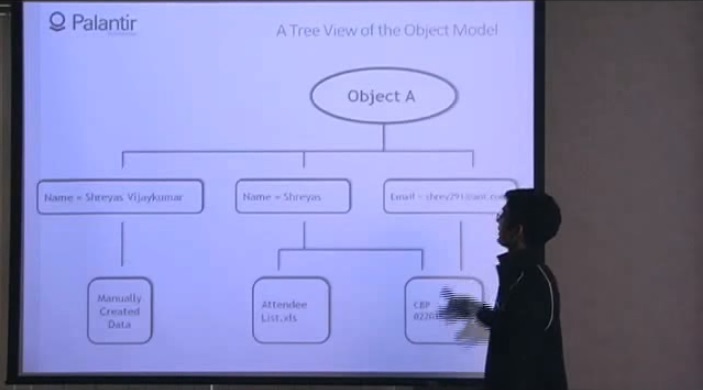

11:38 It means that we add a new branch to the graph, coming from the source "manually created data", and I can perform the same manipulations on this data as the ones we looked at earlier.

11:50 Using the object model, I can perform a number of useful data manipulations.

11:58 I want to mention separately that this approach is one of the building blocks of data resynchronization. Suppose you have an external data source in which the value of one of your properties may change, and it is not obvious how to avoid missing the new value in Palantir.

12:12 All you need to know is that any discrepancy is handled at the level of the property itself. Suppose there is a mismatch for the property "Name: Sriyas" because the value in another source has changed to "Sriyas Vijaykumar". You cannot simply change the value, because the old value is backed by its own data source. You have to create a new property.
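The rule just described (never overwrite a sourced value; record the changed value as a new property) could look like this in Python. This is a sketch, not Palantir's resynchronization logic: the `resync` function, the dictionary layout, and the source names are assumptions made for the example.

```python
def resync(properties, key, new_value, source):
    """Record a changed value as a new property instead of overwriting."""
    for prop in properties:
        if prop["key"] == key and prop["value"] == new_value:
            prop["sources"].add(source)  # value already known: add support
            return properties
    properties.append({"key": key, "value": new_value, "sources": {source}})
    return properties


props = [
    {"key": "Name", "value": "Sriyas", "sources": {"attendee_spreadsheet"}},
]

# The external source now reports a different value for the same property.
resync(props, "Name", "Sriyas Vijaykumar", "external_db")

for prop in props:
    print(prop["key"], prop["value"], sorted(prop["sources"]))
```

After the call, both values coexist, each still tied to the source that supports it.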

12:31 Now that we have seen the operations that can be performed with the object model, let's see how you can interact with this model, how you will enter and retrieve data.

12:39 As I said at the beginning, we support a very open format, an open API - these are our requirements for a data platform.

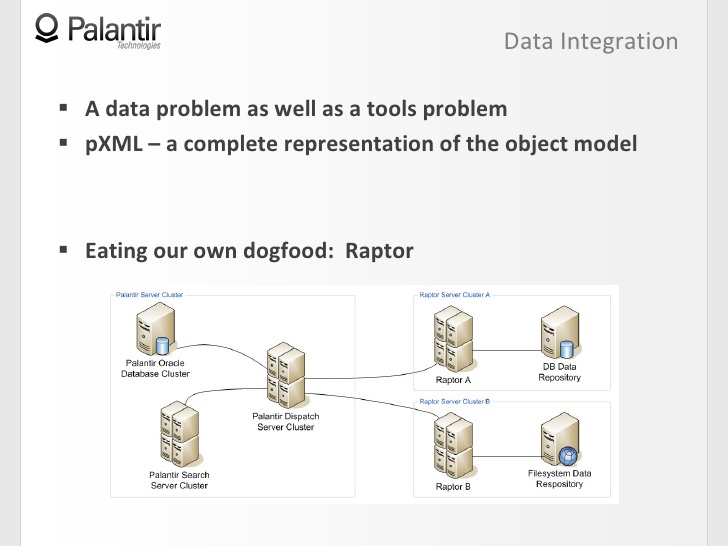

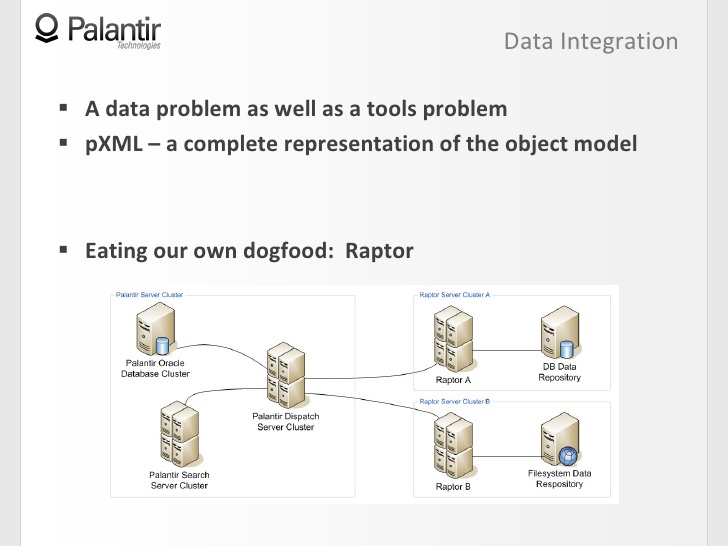

12:48 Typically, such environments have both a data problem and a tools problem. It is a data problem because it is difficult to ingest data in different formats and work with it.

13:00 It is also a tools problem, because you may come across products that lock you into a proprietary or binary format, and it can be difficult for such products to interact with your data.

13:08 In Palantir, an open XML format called Palantir XML is the embodiment of the object model.

13:19 This means that you can do all your work and then export the data from Palantir as Palantir XML. Or, if you have a set of data, anything from unstructured documents to entire file systems, you can import it into Palantir using the same Palantir XML.

13:33 This is possible because you enter the data in the form of the object model.

13:36 This matters because the object model effectively describes every piece of information in the system.
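To show why an XML rendering of the object model allows data to go in and out without loss, here is a minimal round-trip sketch using Python's standard `xml.etree.ElementTree`. The element and attribute names are invented for this example and are not the actual Palantir XML schema, which the talk does not show.

```python
import xml.etree.ElementTree as ET


def object_to_xml(obj):
    """Serialize an object and its properties to an XML string."""
    root = ET.Element("object", id=obj["id"], type=obj["type"])
    for key, value in obj["properties"].items():
        prop = ET.SubElement(root, "property", type=key)
        prop.text = value
    return ET.tostring(root, encoding="unicode")


def object_from_xml(text):
    """Rebuild the same object from its XML representation."""
    root = ET.fromstring(text)
    return {
        "id": root.get("id"),
        "type": root.get("type"),
        "properties": {p.get("type"): p.text for p in root.findall("property")},
    }


original = {"id": "obj-1", "type": "Person", "properties": {"Name": "Sriyas"}}
xml_text = object_to_xml(original)
restored = object_from_xml(xml_text)
print(xml_text)
```

Because the export and the import both speak in terms of objects and their components, the same format serves in both directions.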

13:43 The last thing I want to touch on is how we used the object model when we designed Raptor, our own integrated search component.

13:57 The idea behind Raptor is to work efficiently with rapidly changing data sources that need to stay in sync with your Palantir.

14:09 Usually, Palantir works like this: requests are sent to the search cluster, and the search cluster returns the results to the dispatch server.

14:14 Raptor serves as a bridge between external data sources and the dispatch server, and its task is to recognize the correct objects based on the object model.

14:29 When you run a search through Raptor, it combines the entire data stream and sends the resulting objects back, and to the user it looks like a smooth process, without a single break.

14:36 Summing up, I will say that the object model is the core of the Palantir platform, and when we developed this platform, we proceeded from three considerations:

- flexibility in modeling anything;

- the need to extract data without loss;

- open format and API.

(Special thanks to Alexei Vorsin, a Russian expert on the Palantir system, for helping prepare this article.)
