The process of developing and testing daemons

Today we’ll talk about the “low-level” building blocks of our project: daemons. Here is the Wikipedia definition:

"A daemon is a computer program in UNIX-like systems that is started by the system itself and runs in the background without direct user interaction."

Although it is not obvious, almost all of the site's functionality depends heavily on these programs. The “Dating” game, the search for new faces, the “Center of Attention”, messaging, statuses, geolocation and many other features are each tied to a particular daemon. So we can say that daemons help people around the world communicate and find new friends. Several dozen daemons can run on the site at the same time and interact with each other. Making sure they behave correctly is very important, so we decided to cover the main functionality of our daemons with autotests.

At Badoo, this work is handled by a dedicated department. Today we will describe how we develop this critical part of the site and how autotests fit into that process. The area is fairly specialized and there is a lot of material, so we have prepared a structured overview of the whole process that anyone interested can follow.

We use Git as our VCS, TeamCity for continuous integration, and JIRA as our bug tracker. For testing, we use PHPUnit. Daemon development, like the rest of the site, follows the "feature branch" model. To explain what that means, let's look at how our workflow maps onto Git and onto JIRA.

Git flow

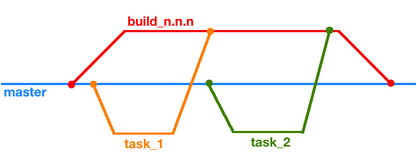

Work in Git can be schematically described by a picture:

To work on a task, a separate branch task_n is created from master, and all development happens in it. This branch, possibly together with several others, is then merged into a branch called build_n.nn (or simply "the build"). Only the build branch is eventually merged into master.
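To make the merge rule concrete, here is a small Python sketch. Our tests are written in PHP, so this is illustrative only. It assumes branch names follow the task_n and build_n.nn patterns literally. The helper names are hypothetical.

```python
import re

# Hypothetical naming patterns taken from the description:
# task_n for task branches and build_n.nn for build branches.
TASK_RE = re.compile(r"task_\d+")
BUILD_RE = re.compile(r"build_\d+\.\d+")


def branch_kind(name: str) -> str:
    """Classify a branch name as 'master', 'task', 'build' or 'other'."""
    if name == "master":
        return "master"
    if TASK_RE.fullmatch(name):
        return "task"
    if BUILD_RE.fullmatch(name):
        return "build"
    return "other"


# Merge directions allowed by the flow: a task branch goes into a build,
# and only a build goes into master.
ALLOWED_MERGES = {("task", "build"), ("build", "master")}


def merge_allowed(source: str, target: str) -> bool:
    """Return True if merging `source` into `target` follows the flow."""
    return (branch_kind(source), branch_kind(target)) in ALLOWED_MERGES
```

For example, `merge_allowed("task_42", "build_1.23")` is true, while `merge_allowed("task_42", "master")` is false because a task never goes straight to master.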

Jira flow

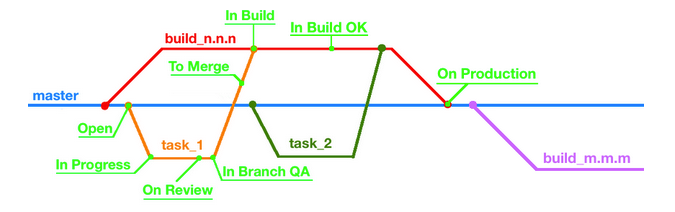

The process at JIRA is more complex and involves more steps. The figure below shows the main ones.

Open -> In Progress

The Open status means that the task exists but work on it has not started yet. The programmer then starts working and pushes the first changes to our Git server, to a branch whose name contains task_n (the task's JIRA identifier). At that point several things happen. First, the task status changes to In Progress. Second, TeamCity creates a separate build for this branch, which runs on every new push from the developer. Thanks to the efforts of our release engineers and developers, the build artifacts are copied to the machines of our development environment. All regression tests are then run, and the results are shown in the TeamCity web interface. When the developer considers the work on the task finished, he moves the ticket to On Review status.
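Here is a minimal Python sketch of the first step. It assumes the task identifier appears in the branch name as task_<number>. The function names and the status dictionary are hypothetical stand-ins for the real JIRA and TeamCity integration, which is not shown.

```python
import re
from typing import Optional

# Assumption: the branch name contains the task identifier as task_<number>,
# e.g. "task_1234" or "task_1234_fix_timeouts".
TASK_ID_RE = re.compile(r"task_\d+")


def task_id_from_branch(branch: str) -> Optional[str]:
    """Extract the task identifier from a branch name, or None if absent."""
    match = TASK_ID_RE.search(branch)
    return match.group(0) if match else None


def on_push(branch: str, statuses: dict) -> Optional[str]:
    """On a push, move the task from Open to In Progress (no-op afterwards).
    Starting the CI build and running regression tests are not modeled."""
    task = task_id_from_branch(branch)
    if task is not None and statuses.get(task) == "Open":
        statuses[task] = "In Progress"
    return task
```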

On Review

Here a colleague reviews the task thoroughly. If the reviewer finds nothing to object to, the ticket is moved to In Branch QA status.

In Branch QA

At this stage, the task goes to the tester. First, the tester checks the results of the regression test run to see whether any tests now fail. If some do, there are two possibilities:

- Everything is fine: the failures reflect the new behavior, and the regression tests need to be updated.
- The daemon does not work as expected, and the task goes back to the developer.

If the tests need updating, the QA engineer takes over. He covers the daemon's new behavior with tests and fixes the old ones at the same time if necessary.

To Merge -> In Build

When the developer and the tester agree that the build from the task_n branch works stably and as expected, they click the To Merge button in JIRA. TeamCity then merges the developer's branch into the build_n.nn branch and starts building it. The task goes back to the tester, now with the status In Build. This extra check is needed because the merge can cause unexpected conflicts: while the branch was being tested, incompatible changes may have landed in the build. When that happens, the task goes back to the programmer to merge it into the build manually. Another possible problem is failing regression tests or, in the worst case, a daemon that will not start. This is solved by reverting the task from the build branch and returning the ticket to the developer. If only minor changes are needed to fix the problem, however, the task stays in the build and a patch is added to the build branch itself.

When the tests pass and the daemon is stable, the QA engineer sets the task status to In Build OK and the task returns to the developer. The build with the new daemon behavior becomes the main build in the devel development environment and is put through its paces there.

In Build OK -> On Production

At some point, enough changes accumulate in the build branch, or critical tasks appear, and the developer decides it is time to release the build to production. He clicks Finish Build. Thanks to our valiant admins, the new version of the daemon then starts running on the machines that do the real work of our site. At the same time, the build branch is merged into master, and a new build branch named build_m.mm is created to collect all new changes. The developer's ticket is set to On Production status.

Combining the two projections of the entire development process, we get the cycle shown below.
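The main transitions can be summarized as a small state table. This Python sketch simplifies the real workflow. The action labels are made up for illustration. The paths back to In Progress are our reading of "the task returns to the developer", not the exact JIRA configuration.

```python
# Simplified model of the main JIRA statuses; action names are hypothetical.
WORKFLOW = {
    ("Open", "first push"): "In Progress",
    ("In Progress", "send to review"): "On Review",
    ("On Review", "review passed"): "In Branch QA",
    ("In Branch QA", "To Merge"): "In Build",
    ("In Build", "tests pass"): "In Build OK",
    ("In Build OK", "Finish Build"): "On Production",
    # Assumption: a rejection returns the ticket to the developer.
    ("In Branch QA", "return to developer"): "In Progress",
    ("In Build", "return to developer"): "In Progress",
}

HAPPY_PATH = ["first push", "send to review", "review passed",
              "To Merge", "tests pass", "Finish Build"]


def advance(status: str, action: str) -> str:
    """Return the next status, or raise ValueError for an invalid action."""
    try:
        return WORKFLOW[(status, action)]
    except KeyError:
        raise ValueError(f"{action!r} is not allowed in status {status!r}") from None
```

Walking `HAPPY_PATH` from Open with `advance` ends in On Production. Any action that is not in the table, such as Finish Build on an Open task, is rejected.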

You can read more about our development processes and the tight integration of JIRA, TeamCity and Git in our articles here, here and here.

Running Autotests

Test Object

To begin with, let's determine what exactly we are testing.

In our case, the program is generally a binary that is started from the terminal. It can be given various arguments that affect how it works: configuration files ("configs"), ports, log files, script directories and so on. Most often there is at least a config, in which case the launch command looks like this:

>> daemon_name -c daemon_config

After starting, the daemon waits for connections on several ports (or socket files) through which it communicates with the outside world. The protocol format varies, from plain text or JSON to protobuf, and a daemon usually supports a couple of them at once. Some daemons can also save their data to disk and load it back at startup. Daemons are mainly written in C and C++, but Golang and Lua have recently been added.

In general, an autotest run can be divided into three stages:

- Preparing the test environment.

- Running the tests.

- Cleaning up the test environment.

So, let's run the tests:

>> phpunit daemon_tests/test.php --option=value

Preparing the test environment

After the tests start, the code defined in the setUpBeforeClass and setUp methods runs first. This is where the environment is prepared:

- The launch parameters are read (we will discuss them separately).
- The required version of the binary is selected.
- Temporary folders, configs and any other files the daemon may need are created.
- Databases are prepared if necessary.

Every object created this way has a unique identifier in its name, which is chosen once during setup and then used everywhere. This avoids conflicts when several developers and testers run the tests at the same time.

A daemon often communicates with other daemons while it works: it can send data and commands to its relatives and receive them back. In such cases we either use test stubs (mocks) or run real daemons. When everything needed has been created, a daemon instance is launched with our config and runs in our environment.
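Here is a rough Python sketch of this preparation step. It assumes a simple `key = value` config format and a hypothetical directory layout. It picks a unique run identifier once, embeds it in every created name, and builds the `daemon_name -c daemon_config` launch command shown earlier.

```python
import os
import tempfile
import uuid


def make_run_id() -> str:
    """Unique identifier chosen once per test run and reused in every name,
    so parallel runs by different people do not collide."""
    return uuid.uuid4().hex[:12]


def prepare_environment(run_id: str, port: int, base_dir: str = "") -> dict:
    """Create a per-run work directory and a config file for the daemon.
    The config keys are hypothetical; each real daemon has its own format."""
    base_dir = base_dir or tempfile.gettempdir()
    work_dir = os.path.join(base_dir, f"daemon_{run_id}")
    os.makedirs(os.path.join(work_dir, "data"))
    config_path = os.path.join(work_dir, f"daemon_{run_id}.conf")
    with open(config_path, "w") as f:
        f.write(f"port = {port}\n")
        f.write(f"log_file = {os.path.join(work_dir, 'daemon.log')}\n")
        f.write(f"data_dir = {os.path.join(work_dir, 'data')}\n")
    return {"work_dir": work_dir, "config": config_path}


def launch_command(binary: str, config_path: str) -> list:
    """Command line in the form `daemon_name -c daemon_config`."""
    return [binary, "-c", config_path]
```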

Remember that the daemon waits for connections on its ports? The last step of preparation is to open connections to them and check that the daemon is ready to communicate. If all is well, the tests themselves are run.
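A readiness check can be as simple as polling the port until it accepts a connection. This Python sketch covers TCP ports only; Unix socket files would need `socket.AF_UNIX` instead. The function name and default timeouts are assumptions.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 10.0,
                  interval: float = 0.1) -> socket.socket:
    """Poll until the daemon accepts a TCP connection and return that socket.
    Raise TimeoutError if the port does not open within `timeout` seconds."""
    deadline = time.monotonic() + timeout
    last_error = None
    while time.monotonic() < deadline:
        try:
            sock = socket.create_connection((host, port), timeout=interval)
            sock.settimeout(timeout)  # use a saner timeout for the actual tests
            return sock
        except OSError as exc:  # connection refused, timed out, etc.
            last_error = exc
            time.sleep(interval)
    raise TimeoutError(f"{host}:{port} not ready after {timeout}s: {last_error}")
```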

Launch and execution

Most of the tests can be described by a simple algorithm: send request -> get response -> assert.

One or several requests (send request) bring the daemon into a particular state. The get response step covers receiving a response, reading records from the database or reading a file. Assert covers a wide range of actions:

- validating the response;
- assessing the state of the daemon;
- verifying the data that reached neighboring daemons;
- comparing records in the database;
- checking that snapshots are created with the correct data.
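The send request -> get response -> assert pattern might look like this in Python. The sketch assumes a hypothetical newline-delimited JSON protocol. It replaces the real daemon with a tiny in-process key-value server so that it runs on its own.

```python
import json
import socket
import threading


def request(sock: socket.socket, payload: dict) -> dict:
    """Send one JSON request line and read one JSON reply line."""
    sock.sendall(json.dumps(payload).encode() + b"\n")
    buf = b""
    while not buf.endswith(b"\n"):
        chunk = sock.recv(4096)
        if not chunk:
            raise ConnectionError("daemon closed the connection")
        buf += chunk
    return json.loads(buf)


def fake_daemon(server: socket.socket) -> None:
    """Stand-in for the daemon under test: an in-memory key-value store."""
    store = {}
    conn, _ = server.accept()
    with conn, conn.makefile("rb") as reader:
        for line in reader:
            req = json.loads(line)
            if req.get("cmd") == "set":
                store[req["key"]] = req["value"]
                reply = {"status": "ok"}
            elif req.get("cmd") == "get":
                reply = {"status": "ok", "value": store.get(req["key"])}
            else:
                reply = {"status": "error", "reason": "unknown command"}
            conn.sendall(json.dumps(reply).encode() + b"\n")


def run_scenario() -> dict:
    server = socket.create_server(("127.0.0.1", 0))
    port = server.getsockname()[1]
    worker = threading.Thread(target=fake_daemon, args=(server,), daemon=True)
    worker.start()
    with socket.create_connection(("127.0.0.1", port), timeout=5) as sock:
        # send request: bring the daemon into a known state
        assert request(sock, {"cmd": "set", "key": "user:1", "value": "online"}) == {"status": "ok"}
        # get response: read the state back
        reply = request(sock, {"cmd": "get", "key": "user:1"})
    # assert: validate the response
    assert reply == {"status": "ok", "value": "online"}
    worker.join(timeout=5)
    server.close()
    return reply
```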

But our tests are not limited to this algorithm: we try to cover as many aspects of our test subject's life as possible. So all sorts of mishaps may befall it. It may be restarted several times, request data may be truncated or contain incorrect information, and invalid settings may end up in the config. The daemon's peers may also fail to respond or mysteriously disappear.
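One way to exercise the broken-request cases is to derive malformed variants from a valid request and check that the daemon survives each one. This Python sketch shows one possible way to organize such a check. `send_raw` and `is_alive` are hypothetical hooks the test would provide, for example a raw socket write and a ping request.

```python
from typing import Callable, List


def corrupted_variants(payload: bytes) -> List[bytes]:
    """A few malformed versions of a valid request, for robustness tests."""
    variants = [
        b"",                              # empty request
        payload[: len(payload) // 2],     # truncated in the middle
        payload[:-1],                     # missing the last byte
        payload + b"\x00\xff garbage",    # trailing junk
        bytes(reversed(payload)),         # right bytes, wrong order
    ]
    return [v for v in variants if v != payload]


def survives_bad_input(send_raw: Callable[[bytes], None],
                       is_alive: Callable[[], bool], payload: bytes) -> bool:
    """Send every malformed variant and check the daemon still answers."""
    for bad in corrupted_variants(payload):
        send_raw(bad)
        if not is_alive():
            return False
    return True
```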

At the moment, the most difficult and (in my opinion) most interesting area is testing daemon-to-daemon interaction. This is mainly because the communication depends on many factors: internal logic, external requests, timing, config settings and so on. Things get really fun when our test subject communicates with several different daemons.
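For daemon-to-daemon scenarios, a stub neighbor that simply records what it receives lets a test assert on the data our daemon sent. The Python sketch below is a minimal, hypothetical example. It accepts a single TCP connection and stores each newline-terminated message.

```python
import socket
import threading


class StubNeighbor:
    """Mock of a neighboring daemon: listens on a free local port, accepts one
    connection and records every newline-terminated message it receives."""

    def __init__(self) -> None:
        self.server = socket.create_server(("127.0.0.1", 0))
        self.port = self.server.getsockname()[1]
        self.received = []
        self._thread = threading.Thread(target=self._serve, daemon=True)
        self._thread.start()

    def _serve(self) -> None:
        conn, _ = self.server.accept()
        with conn, conn.makefile("rb") as reader:
            for line in reader:
                self.received.append(line.rstrip(b"\n"))

    def close(self, timeout: float = 5.0) -> None:
        """Wait for the peer to disconnect, then stop listening."""
        self._thread.join(timeout)
        self.server.close()
```

In a test, the daemon under test would be configured to talk to `stub.port`. After the scenario, `stub.received` holds everything it sent.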

Cleaning the test environment

No matter how the tests end, you need to clean up after yourself. First, we close the connections and stop the daemon along with any associated mocks or neighboring daemons. Then all files and folders created during the tests are deleted unless they are still needed, and the database is cleaned.
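The cleanup order described above can be sketched in Python as follows. A sleeping Python process stands in for the daemon, and the function names are hypothetical. The `debug` flag keeps files so that failures can be reproduced. Calling `cleanup` from a `finally` block runs it however the test ends, unless the test process itself is killed.

```python
import os
import shutil
import subprocess
import sys
import tempfile


def stop_process(proc: subprocess.Popen, timeout: float = 5.0) -> int:
    """Politely terminate the process, then kill it if it does not exit in time."""
    if proc.poll() is None:
        proc.terminate()
        try:
            proc.wait(timeout=timeout)
        except subprocess.TimeoutExpired:
            proc.kill()
            proc.wait()
    return proc.returncode


def cleanup(proc, connections, work_dir: str, debug: bool = False) -> None:
    """Close connections, stop the daemon, then delete temporary files.
    With debug=True the files are kept so a failing test can be reproduced."""
    for conn in connections:
        try:
            conn.close()
        except OSError:
            pass  # the connection may already be broken
    if proc is not None:
        stop_process(proc)
    if not debug:
        shutil.rmtree(work_dir, ignore_errors=True)


def demo(debug: bool = False):
    """Start a fake 'daemon' (a sleeping Python process) and clean up after it."""
    work_dir = tempfile.mkdtemp(prefix="daemon_test_")
    proc = subprocess.Popen([sys.executable, "-c", "import time; time.sleep(60)"])
    try:
        pass  # the actual tests would run here and may raise
    finally:
        cleanup(proc, [], work_dir, debug=debug)
    return proc.returncode, os.path.isdir(work_dir)
```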

The tricky part is cleaning up after fatal errors: in some cases temporary files may remain, and sometimes the daemon even keeps running.
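A common mitigation is to sweep leftovers from earlier crashed runs before starting new ones; this is sketched here as an assumption, not our actual tooling. Every created object carries a recognizable name, so stale directories can be found by prefix and age. This Python sketch handles files only. Stray daemon processes would need separate handling, for example via pid files.

```python
import os
import shutil
import time


def sweep_stale_dirs(base_dir: str, prefix: str, max_age_s: float = 3600.0) -> list:
    """Remove directories in `base_dir` whose names start with `prefix` and
    that have not been modified for `max_age_s` seconds. Return removed paths."""
    now = time.time()
    removed = []
    for name in os.listdir(base_dir):
        path = os.path.join(base_dir, name)
        if not name.startswith(prefix) or not os.path.isdir(path):
            continue
        if now - os.path.getmtime(path) >= max_age_s:
            shutil.rmtree(path, ignore_errors=True)
            removed.append(path)
    return removed
```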

One more complication applies to the entire testing process described above: it is distributed across different machines. The usual scenario now is to run the tests on one server and start the daemon on another, or on another server inside a Docker container.

Let's return to the test run parameters. The list currently contains five or six options, and it keeps growing. All of them aim to make testing easier and to let us "customize" the tests a little without touching the code. Here are our top ones:

- branch. The name of the JIRA ticket that contains the work on the daemon. The tests will use the binary built for this particular task;

- settings. The path to an ini file with test-specific settings, such as the build version, the path to the default config, the user the daemon runs as, and the location of required files;

- debug. If this flag is passed, the tests run in debug mode. For us, this means that temporary files and folders get human-readable names, which makes them easy to tell apart from everything else. In addition, none of the files created during the run are deleted, so failing tests can be reproduced if necessary;

- script. When this flag is set, the test generates a PHP script as it runs. The script repeats all of the test's actions until the test completes or an assertion fails. It is completely standalone, which helps with debugging the daemon.
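The options could be parsed roughly as follows. This Python sketch uses argparse and configparser instead of the PHP mechanism our tests actually use. The `[daemon]` ini section and its keys are hypothetical.

```python
import argparse
import configparser


def parse_options(argv):
    """Parse the custom test-run options; names follow the list above."""
    parser = argparse.ArgumentParser(description="daemon autotests")
    parser.add_argument("--branch", help="JIRA ticket whose daemon build to test")
    parser.add_argument("--settings", help="path to an ini file with test settings")
    parser.add_argument("--debug", action="store_true",
                        help="readable temp names; keep files after the run")
    parser.add_argument("--script", action="store_true",
                        help="generate a standalone replay script per test")
    return parser.parse_args(argv)


def load_settings(path):
    """Read the settings ini file; the [daemon] section is hypothetical."""
    config = configparser.ConfigParser()
    with open(path) as f:
        config.read_file(f)
    return dict(config["daemon"]) if config.has_section("daemon") else {}
```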

That's all we wanted to cover today. We'll be happy to answer your questions in the comments.

We also have ideas for future articles on creating scripts that replay a test, generating documentation, and using Docker containers in tests. Let us know which topics interest you most.

Konstantin Karpov, QA engineer