How to test us

Evgeny Kaspersky talks about independent tests of security software and our methodology for evaluating their results.

As we always say, Kaspersky Lab is saving the world from cyber-evil. How well are we doing, both in absolute terms and compared with other rescuers? We use various metrics to judge progress on this ambitious task. One of the main ones is independent expert assessment of the quality of our products and technologies. The better the scores, the more effectively our technologies crush the digital plague and the more actively they save the world.

And who can provide such an assessment? Independent test labs, of course! The question is how to summarize their results. Dozens, even hundreds, of tests are run around the world by all and sundry. They examine various protection technologies, individually or in combination, along with performance, ease of installation, and much more.

How do we squeeze the most meaningful result out of this mess: something that gives the most plausible picture of what hundreds of antivirus products can do? How do we rule out test marketing? And how do we make the metric understandable to a wide range of users, so they can make a conscious and reasoned choice of protection? Happily, such a metric exists.

How does it work?

First, we take into account all known test labs that carried out anti-malware protection research during the reporting period. Second, we take into account the full range of tests from the selected labs and all participating vendors. Third, for each vendor we take into account a) the number of tests it took part in; b) the percentage of outright wins; and c) the percentage of top-three finishes (TOP3).
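The three per-vendor quantities can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the calculation actually used for the chart: the `Placement` record, the `vendor_metrics` function and the sample vendors are hypothetical, and the sketch assumes every test reports a 1-based rank for each participant, with no ties.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """One vendor's placing in one test (hypothetical record layout)."""
    test_id: str
    vendor: str
    rank: int  # 1 = outright win; ties are not modelled in this sketch


def vendor_metrics(placements):
    """Return {vendor: (tests_entered, pct_outright_wins, pct_top3)}."""
    entered = defaultdict(int)
    wins = defaultdict(int)
    top3 = defaultdict(int)
    for p in placements:
        entered[p.vendor] += 1
        if p.rank == 1:
            wins[p.vendor] += 1
        if p.rank <= 3:
            top3[p.vendor] += 1
    return {
        vendor: (n, 100.0 * wins[vendor] / n, 100.0 * top3[vendor] / n)
        for vendor, n in entered.items()
    }


# Made-up data, purely for illustration.
sample = [
    Placement("t1", "VendorA", 1),
    Placement("t1", "VendorB", 2),
    Placement("t2", "VendorA", 3),
    Placement("t2", "VendorB", 5),
]
metrics = vendor_metrics(sample)
```

With this made-up sample, `VendorA` entered two tests, won one outright and made the top three in both, while `VendorB` entered two tests and made the top three once.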

That's all: simple, transparent, and resistant to unscrupulous test marketing (which, alas, happens often). Of course, you could bolt on another 25 thousand parameters to add 0.025% of objectivity, but that would be technological narcissism and geeky tedium, and we would definitely lose the average reader... and the not-so-average one too. Most importantly, we take a specific period, the entire set of tests from specific labs, and all participants. We leave nothing out and spare no one (including ourselves).

Now let's apply this methodology to the real entropy of the world as of 2014!

Technical details and disclaimers, for those who are interested:

• The 2014 figures take into account studies from eight test laboratories: AV-Comparatives, AV-Test, Anti-malware, Dennis Technology Labs, MRG EFFITAS, NSS Labs, PC Security Labs and Virus Bulletin. All of them have many years of experience, a solid technological base (I checked it myself), impressive coverage of both vendors and protection technologies, and are members of AMTSO. A detailed explanation of the methodology is given in this video and in this document.

• Only vendors that participated in at least 35% of the tests are counted; otherwise you get “winners” that won the one and only test in their entire “medical history”.

• If anyone considers the methodology for calculating the results incorrect, welcome to the comments.

• If someone in the comments brings up “if you don't praise yourself, no one will”, I'll answer straight away: yes. If you don't like the methodology, propose and justify your own.
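The 35% participation cut-off from the list above might look like this in Python. It is a sketch only: `eligible_vendors`, its arguments and the figures are hypothetical, and measuring the share against all tests in the reporting period is an assumption.

```python
def eligible_vendors(tests_entered, total_tests, min_share=0.35):
    """Keep vendors that entered at least `min_share` of all tests.

    tests_entered: {vendor: number of tests entered} (hypothetical input)
    total_tests:   number of tests run in the reporting period
    """
    if total_tests <= 0:
        raise ValueError("total_tests must be positive")
    return {
        vendor
        for vendor, n in tests_entered.items()
        if n / total_tests >= min_share
    }


# Made-up figures: out of 100 tests, a vendor needs at least 35 entries.
kept = eligible_vendors({"VendorA": 80, "VendorB": 40, "VendorC": 1}, 100)
```

Here `VendorC`, which entered a single test out of 100, is dropped before any win percentages are compared, which is exactly the one-test “winner” the threshold is meant to exclude.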

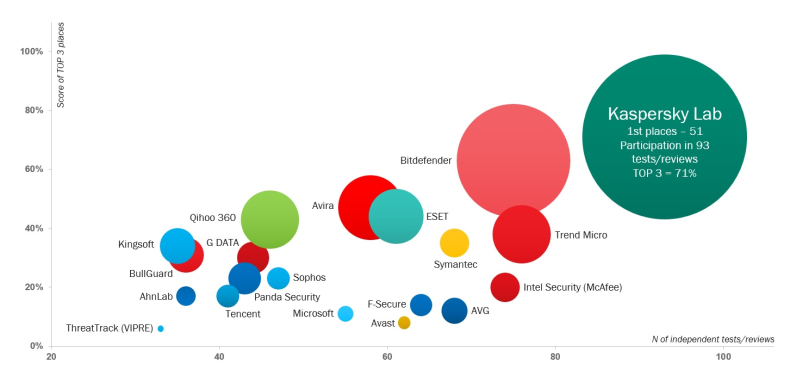

So, we analyze the test results for 2014 and get the following picture of the world. The horizontal axis shows the number of tests in which the vendor participated and final awards were handed out; the vertical axis shows the percentage of tests in which the vendor made the top three (TOP3). The diameter of the circle is the number of outright wins.

Well then, congratulations to our R&D and all the other employees of the company, as well as our partners, customers, contractors, friends, and all their family members! Congratulations to everyone on a well-deserved, dignified and clear victory! Keep it up, the salvation of the world is not far off! Hurrah!

But that is not all! Next comes dessert for the minds of true anti-virus industry experts who appreciate how the data changes over time.

The method described above has been in use for three years, and it is very interesting to apply it to the question of “the sameness of washing powders”. Yes, there is an opinion that all antiviruses are more or less the same in terms of protection and functionality. Here are some interesting observations that come out of this assessment:

• There were more tests (on average, vendors participated in 50 tests in 2013 and in 55 in 2014), and winning became harder (the average rate of making the TOP3 fell from 32% to 29%).

• The biggest drops in the rate of finishing among the test winners belong to Symantec (-28%), F-Secure (-25%) and Avast! (-19%).

• Curiously, vendors' positions relative to each other do not change very much. Only 2 vendors shifted their position in the number of TOP3 places by more than 5 points. This shows that high-quality protection is not just a matter of signatures: it is built over years, and stealing detections will not give a decisive advantage. The advantage comes from consistently high results.

• Hardcore statistics buffs will also be interested to know that the top 7 vendors in the TOP3 ranking took roughly twice as many prizes as the remaining 13 combined (240 TOP3 places versus 115) and took 63% of all “gold medals”.

• At the same time, just one vendor took 19% of all “gold medals” - guess who? :)

A savvy reader will ask: is it possible to include other test laboratories in the statistics and get different results?

The answer is that theoretically anything is possible, just as there are alternative theories of gravity besides Newton's, or non-Euclidean geometry. But most likely these would be either completely unknown labs with dubious results, or labs whose addition to the overall statistics would not change the big picture. The magic of large numbers, after all!

And now, the main conclusion.

Tests are one of the most important criteria for choosing protection. Of course, a user would rather trust an authoritative outside opinion than a glossy brochure ("every sandpiper praises its own swamp"). All vendors understand this well, but each solves the problem to the best of its technological abilities. Some genuinely “care” about quality and constantly improve protection, while others manipulate the results: they earn a single certificate of conformity in a year, pin the medal on their lapel, and pose as the best. But you need to look at the whole picture, evaluating not only the test results but also the rate of participation.

So users are advised to choose protection thoughtfully and not let anyone pull the wool over their eyes :)

Thank you all for your attention and see you soon!