Mastering technical vision using the example of Bioloid STEM and HaViMo2.0

Good afternoon, dear readers of Habr! With this article, I am starting a series of publications on robotics. The articles will mainly describe practical implementations of various tasks, from the simplest robot programming to navigation and autonomous robot behavior in various conditions. Their main goal is to show how to easily solve a particular applied problem, or how to quickly adapt your robotics kit to specific conditions. I will try to use affordable kits that are widely available on the market, so that many of you can use my solutions and refine them for your own purposes. I hope these articles will be useful both to students of various educational institutions and to robotics teachers.

In lieu of a foreword

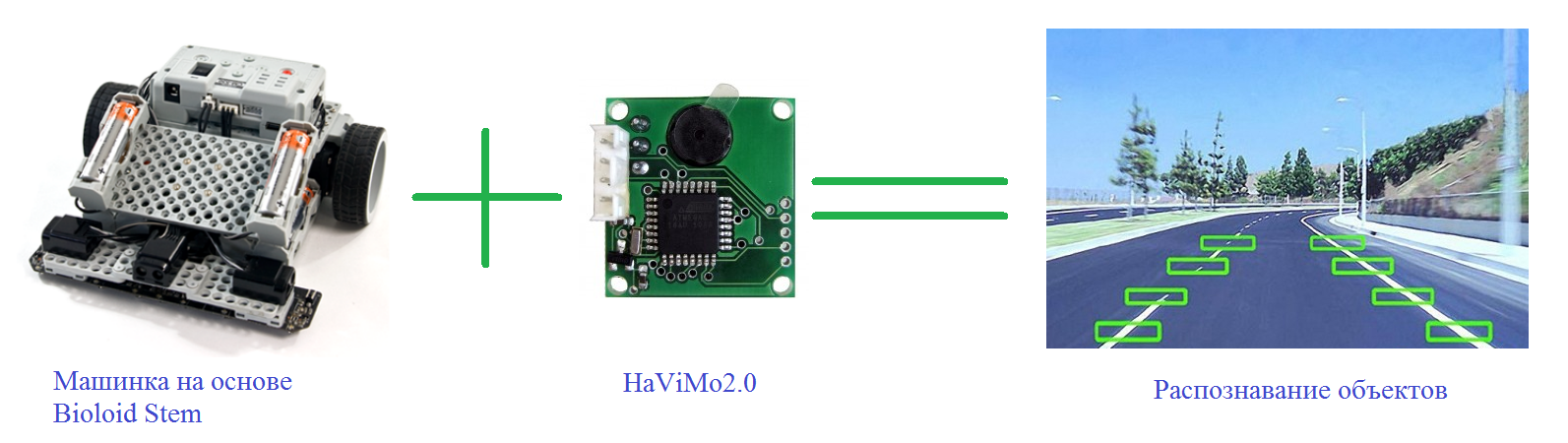

The work of modern mobile robots often involves constant, active movement in a dynamic (changing) environment. Today, with the intensive robotization of the service sector (for example, the introduction of robotic vehicles in manufacturing and of service robots that interact with people), there is a serious need for robots that can not only move along predefined routes and detect obstacles, but also classify them, so that they can adapt flexibly to a changing environment when necessary. This problem can and should be solved using technical vision. In this article, I propose to go through the key points of implementing technical vision using the example of a vehicle built from the Bioloid STEM robotics kit and the HaViMo2.0 camera.

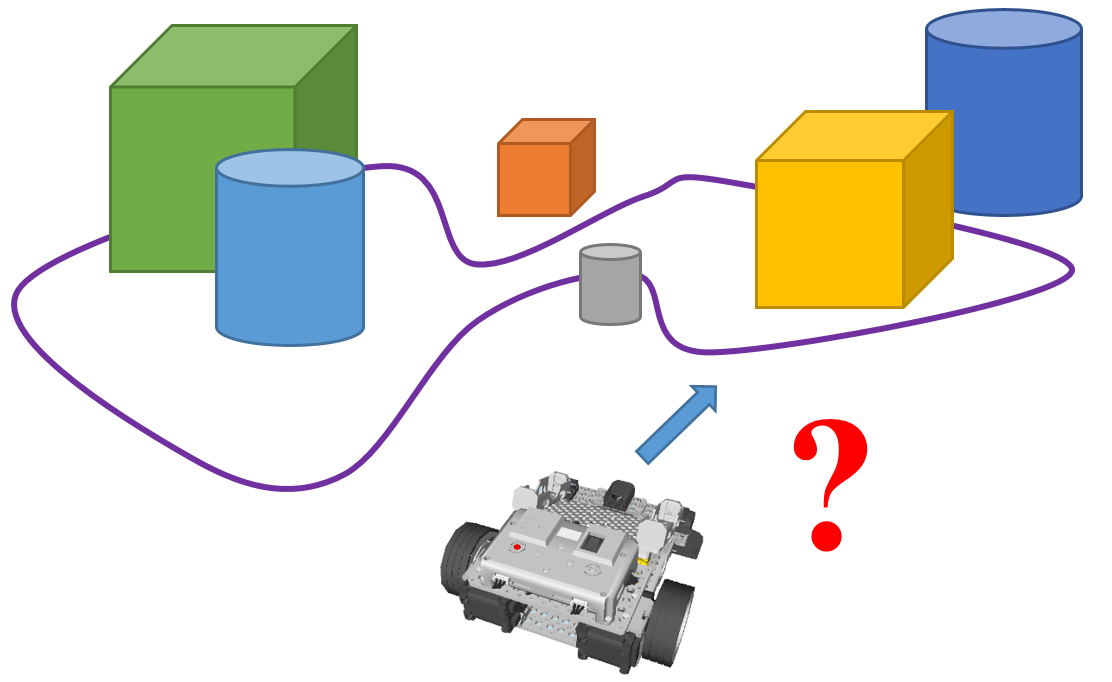

Line following is currently a widespread way for robots to move. It is used both in factories, where AGVs (Automatic Guided Vehicles) move along pre-drawn lines, and in robotics competitions, where many tasks involve following a line in one way or another. The robot usually gets its data from infrared sensors, which detect the contrast between the line and the surrounding background, or from magnetic sensors when the line is magnetic. Both solutions require careful maintenance during operation and a certain cleanliness of the environment: in a dirty warehouse, lines may get dirty and become unrecognizable to IR sensors, and in a metalworking shop, magnetic lines can get clogged with iron shavings. In addition, it is assumed that the robot moves along predefined routes that are free of obstacles, whether people it could injure or any other objects. In practice, however, a production floor is usually full of moving objects that could become obstacles, so the robot must not only receive information about its route and about moving objects, but also analyze its surroundings in order to react correctly to the situation when necessary.

Figure 1. Warehouse robots moving along lines

When developing mobile robots, you need to consider which tasks the robot has to solve in order to choose the right sensors. For example, to move around the working area, the robot can be equipped with expensive laser scanning rangefinders and GPS receivers to determine its own position, while for local navigation it can be fitted with simple ultrasonic or infrared sensors around its perimeter. However, none of these tools gives the robot a complete picture of its surroundings: distance sensors let the robot determine the distance to objects and their dimensions, but not their other properties, such as shape, color, or position in space.

Technical vision systems solve this problem by giving the robot the most complete information about the state of its environment. In essence, a vision system is the robot's "eyes": it uses a camera to digitize the surrounding area and provides information about the physical characteristics of the objects in it, in the form of data on their:

- size

- location in space

- appearance (color, surface condition, etc.)

- marking (recognition of logos, bar codes, etc.).

The data obtained can be used to identify objects, measure their characteristics, and control them.

A vision system is built around a digital camera that captures the surrounding space. A processor then analyzes the captured data with an image analysis algorithm that extracts and classifies the parameters we are interested in. At this stage, the data is prepared and output in a form suitable for the robot controller. The data is then passed to the robot controller, where we can use it to control the robot.

Figure 2. Application of a technical vision system for monitoring traffic conditions

As I mentioned earlier, our solution will be based on a popular robotics construction kit from the Korean company Robotis, namely the Bioloid STEM kit.

Figure 3. The Bioloid STEM kit

This kit contains components for assembling any of 7 basic robot models. I will not dwell on a detailed description of the kit, since there are plenty of reviews of varying completeness online, for example, Bioloid STEM Designer Overview. As a working model, we will use one of the standard configurations, the Avoider vehicle.

Figure 4. Avoider - one of the 7 standard configurations of the Bioloid STEM set.

As the basis of our vision system, we will use the HaViMo2.0 image processing module, built around a color CMOS camera. The module is designed specifically for use with low-power processors. In addition to the camera, it has its own microcontroller that performs the image processing, so the robot controller's resources are not spent on it. Data is output via a serial port.

Figure 5. HaViMo2.0 image processing module

Module specifications

• Built-in color CMOS camera:

o Resolution: 160 × 120 pixels

o Color depth: 12-bit YCrCb

o Frame rate: 19 frames per second

• Image processing parameters are stored in EEPROM, so there is no need to configure the camera every time it is powered on

• Auto / manual exposure, gain and white balance

• Adjustable hue / saturation

• Color-based image processing

• Built-in color lookup table

• Calibration parameters are saved to internal memory, so there is no need to recalibrate after power-on

• Can distinguish up to 256 objects

• Comes with 3D viewing and editing tools

• Real-time display of calibration results

• Real-time Region Growing algorithm

• Detects up to 15 contiguous regions per frame

• Reports the color, number of pixels and boundaries of each region

• Reports the color and number of pixels for each 5x5 cell

• Raw image output in calibration mode

• Frame rate for interlaced output: 19 FPS

• Full frame refresh rate: 0.5 FPS

• Supported hardware:

o CM5

o CM510

o USB2 Dynamixel

As you may have noticed, HaViMo2.0 does not have official support for the CM-530 controller included in the Bioloid STEM kit. Nevertheless, it is possible to connect the CM-530 and HaViMo2.0, but first things first.

First of all, we need to mount the image processing module on the vehicle somehow. The first idea that comes to mind is to mount the camera as shown in the models below. By the way, these models were made in the Bioloid modeling environment, R+ Design. This environment is tailored specifically for modeling robots from Bioloid components, which is both good and bad: since the R+ Design parts library has no image module, I replaced it in the model with a block of similar size. Here is the result:

Figure 6. Location of the HaViMo2.0 image processing module on the Avoider

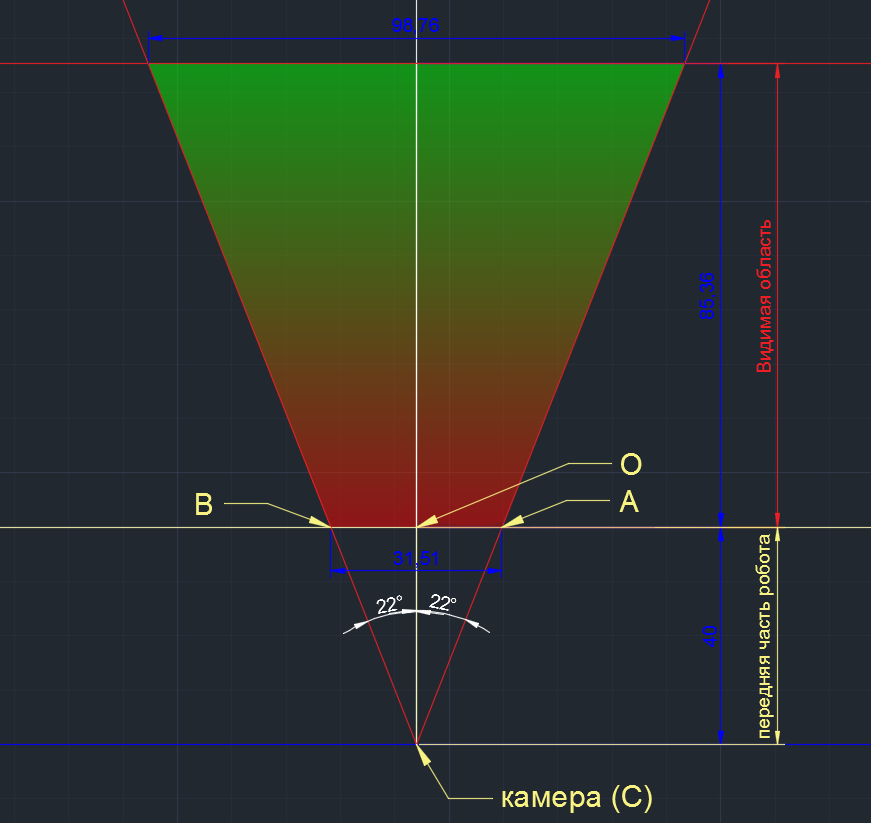

It looks good, but there is another important point to take into account: the camera's viewing angles, since they determine the image the module will process. The manufacturer of HaViMo2.0 does not specify the viewing angles in the specifications, so I had to measure them myself. I do not claim high accuracy, but here are the results I got:

HaViMo2.0 viewing angles:

- horizontal: 44° (no, I will not round it up to 45°; 44° is already rounded)

- vertical: 30°

Looking ahead, the measured viewing angles matched reality well, so I will treat them as valid. Now, knowing the viewing angles, I will formulate the module positioning problem as follows:

At what angle from the vertical should the module be tilted, starting from the position shown above, so that its field of view is as small as possible while parts of the robot stay out of the frame?

I decided to minimize the field of view for the following reasons:

- first, to see in practice what would happen

- second, to make the subsequent calibration of the module easier (the fewer objects fall into the field of view, the easier the module is to calibrate)

We will depict our problem on the plane (linear dimensions are indicated in mm, angular dimensions are in deg.):

Figure 7. Determining the angle of deviation of the module from the vertical

We know the camera position (point C), the size of the front part of the robot (AB), and the vertical viewing angle (marked with red lines). Since this is nothing more complicated than 7th-grade geometry, I will skip the calculations and say right away that the angle between the camera plane and the vertical should be 52° (thanks to CAD for the accuracy). When I calculated it by hand, I got 50°, so we will consider the results acceptable.

Besides the angle, we also find that the camera's visible area in the horizontal plane is about 85 mm long.
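The 7th-grade geometry skipped above can be sketched in Python. This is a minimal model built on my own assumptions, not the author's calculation: the tilt is measured between the optical axis and the downward vertical, the point to keep out of the frame is the robot's front edge at `edge_ahead_mm` in front of the camera and `edge_below_mm` below it, and the floor is flat. The actual dimensions from Figure 7 are not given in the text, so any numbers you plug in are your own, and how the result relates to the 52° above depends on how that angle is measured.

```python
import math

def min_tilt_deg(edge_ahead_mm: float, edge_below_mm: float, vfov_deg: float = 30.0) -> float:
    """Smallest forward tilt of the optical axis (from the downward vertical)
    that keeps the robot's front edge just outside the lower edge of the view."""
    # Angle from the vertical at which the camera "sees" the robot's front edge.
    edge_deg = math.degrees(math.atan2(edge_ahead_mm, edge_below_mm))
    # The near edge of the view lies half the vertical FOV below the axis,
    # so the axis must be tilted that much further than the edge ray.
    return edge_deg + vfov_deg / 2

def floor_strip_mm(height_mm: float, tilt_deg: float, vfov_deg: float = 30.0):
    """Near and far limits of the visible floor strip, measured along the floor
    from the point directly under the camera, plus the strip length."""
    half = vfov_deg / 2
    if tilt_deg + half >= 90:
        raise ValueError("the upper edge of the view never reaches the floor")
    near = height_mm * math.tan(math.radians(tilt_deg - half))
    far = height_mm * math.tan(math.radians(tilt_deg + half))
    return near, far, far - near
```

Because the strip length grows with the tilt, the smallest tilt that clears the robot also gives the smallest visible area, which is exactly the trade-off formulated above.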

The next step is to estimate how large objects must be to fall within the camera's field of view. We will do this for the width of the line that the robot will have to track and follow. Let's make a drawing similar to the previous one for the horizontal projection:

Figure 8. Visible area. Horizontal projection.

In this drawing, we again know the camera position (C), the length of the front part of the robot (CO), the horizontal viewing angle, and the length of the visible area obtained in the previous step. Here we are primarily interested in the length of segment AB, which is the maximum width of an object that the camera can capture. The result: if the robot is to follow a line, then with this camera arrangement the line must be no wider than 31 mm. If the line is wider, we will not be able to track its position accurately.
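In the horizontal projection of Figure 8, the width of the view at distance D from the camera follows from the horizontal viewing angle: w = 2·D·tan(44°/2). Here is a small sketch of that relation, assuming the same flat projection as the figure. The distances are inputs you take from your own drawing, since the text does not list them, and treating the near edge of the visible area (where the view is narrowest) as the critical point is my assumption.

```python
import math

HFOV_DEG = 44.0  # horizontal viewing angle measured for HaViMo2.0 in the text

def view_width_mm(distance_mm: float, hfov_deg: float = HFOV_DEG) -> float:
    """Width of the camera's view at a given distance in the horizontal projection."""
    return 2.0 * distance_mm * math.tan(math.radians(hfov_deg / 2))

def distance_for_width_mm(width_mm: float, hfov_deg: float = HFOV_DEG) -> float:
    """Inverse: the distance at which the view is exactly width_mm wide."""
    return width_mm / (2.0 * math.tan(math.radians(hfov_deg / 2)))

def line_fits(line_width_mm: float, near_distance_mm: float,
              hfov_deg: float = HFOV_DEG) -> bool:
    """True if a line of this width fits inside the view even at its narrowest (near) edge."""
    return line_width_mm <= view_width_mm(near_distance_mm, hfov_deg)
```

The view only widens with distance, so a line that fits at the near edge fits everywhere in the visible area.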

Armed with a protractor and whatever tools were at hand, I tried to mount the camera module as planned, and this is what came of it:

Figure 9. Harsh reality - a live photo of the resulting design

It's time to start working with the HaViMo2.0 module itself. From my own experience, I can say that this module is very temperamental. So if something doesn't work for you, try again, and then once more.

Very important! The camera must be connected to the Dynamixel port on the side of the controller, between the battery connector and the Communication Jack.

To work with the camera and the subsequent programming of the robot, we need the following set of software:

- RoboPlus version 1.1.3.0 (download from the manufacturer's website)

- HaViMoGUI version 1.5 (download)

- calibration firmware for the CM-530 controller (download)

Let's start by preparing the controller. Since the CM-530 controller that comes with the Bioloid STEM kit is not officially supported by the image processing module, we need to use custom firmware for it, which means reflashing the controller. Connect the controller to the computer, switch it on with the toggle switch, and perform the following steps:

1. Open RoboPlus

2. In the Expert tab, launch RoboPlus Terminal

3. An error message will appear; don't be alarmed, just click OK. Note that the lower left corner says No recent file, and the lower right corner says Disconnect:

Figure 10. Preparing the controller. Steps 1-3

4. Select Connect from the Setup menu. A window will appear where you can choose the port and its configuration. Select the COM port to which the controller is connected and set the speed to 57600. Click Connect. If the connection is successful, Disconnect in the lower right corner will change to something like COM8-57600.

5. Without disconnecting the controller from the computer, switch it off with the POWER toggle switch. Make sure the English keyboard layout is active, hold down Shift and 3 (the key with the # sign) and, without releasing them, switch the controller on with the toggle switch. If everything is done correctly, system information will appear in the terminal window. Press Enter once.

Figure 11. Preparing the controller. Steps 4-5

6. Make sure there are no loose wires anywhere and that the USB cable is firmly connected to both the computer and the controller. From this point on, do not disconnect the controller from the PC or cut its power. Type the command l (a lowercase Latin L) in the terminal and press Enter.

7. Select the Transmit file item in the Files menu. Select the downloaded custom firmware CM-530.hex and click the Open button.

Figure 12. Preparing the controller. Steps 6-7

8. After the transfer is complete, type the GO command and press Enter. You can then close the terminal and disconnect the controller from the computer.

Figure 13. Preparing the controller. Step 8

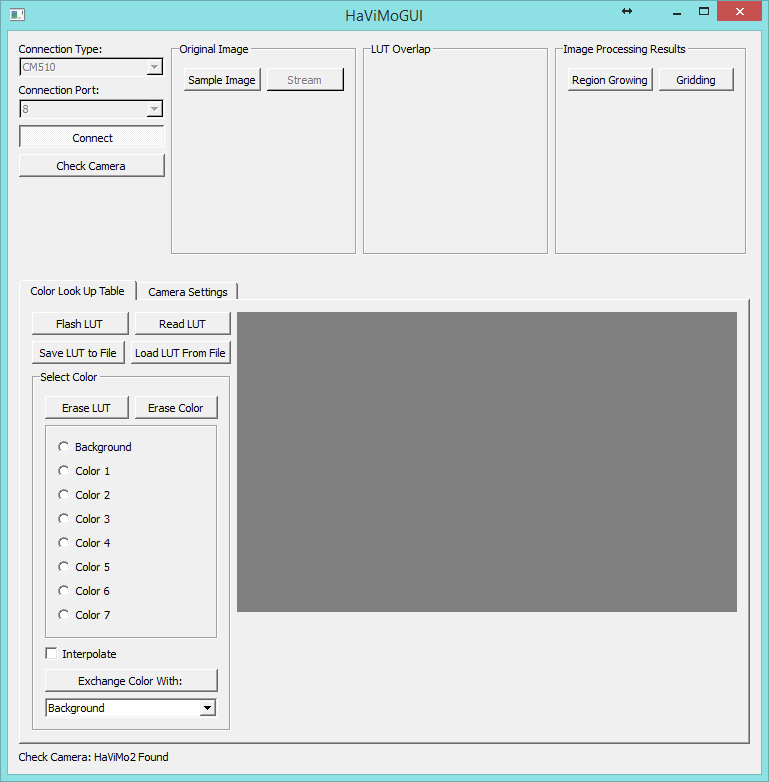

Our CM-530 controller is now ready to work with the HaViMo2.0 module. Outwardly, it will not look very healthy: only one red LED will be lit, and there will be no other signs of life. Don't be alarmed, this is normal. Now let's calibrate the image processing module. Launch HaViMoGUI as administrator. The following window will appear:

Figure 14. HaViMoGUI main window

Under Connection Type, select the CM-510 controller, and under Connection Port, select the COM port to which the controller is connected. Click Connect. If the connection is successful, Port Opened will appear in the lower left corner of the window. Now connect to the camera itself by clicking Check Camera. If the connection to the camera is successful, the lower left corner will show Check Camera: HaViMo2 Found. If this message does not appear, check that the camera is correctly connected to the controller and repeat the steps, starting from launching HaViMoGUI.

Figure 15. Connection to the camera was successful

After connecting to the camera, go to the Camera Settings tab and enable Auto Update Settings, which lets the camera automatically adjust the image brightness, contrast and other settings depending on how the object is lit.

Figure 16. Enabling automatic settings

Then return to the Color Look Up Table tab and click Sample Image to capture an image from the camera. The captured image of our object appears at the top of the window, in the Original Image section. On the right, the Color Look Up Table tab shows the shades recognized by the camera as a 3D gradient. The camera recognizes an object by its color, and it can store up to 7 different objects (plus the background). To store a color, select Color 1 in the Color Look Up Table tab.

Figure 17. Capturing a camera image

The color tone can be selected using the 3D gradient (by clicking on it) or by clicking a point in the Original Image. In our case, we select the white line that our robot will follow. By clicking Region Growing, we can see the area that the module has recognized. That completes the basic settings. To store them, press the Flash LUT button. If the status bar says LUT Written Successfully, the settings have been saved. You can also save the settings to a separate file with the Save LUT to File button, so that you can later load them from the file instead of selecting the colors again. The Read LUT button reads the current camera settings.
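Conceptually, a color lookup table maps every possible pixel color to an object color number, with 0 for the background and 1 to 7 for the stored colors. The Python sketch below shows the idea; it is not the HaViMo2.0's internal format. Packing 4 bits each of Y, Cr and Cb into a 12-bit index is my assumption (chosen to match the 12-bit YCrCb color depth in the specifications), and the hypothetical `mark_color` only imitates what picking a shade in HaViMoGUI does.

```python
LUT_SIZE = 1 << 12  # 4096 cells: 4 bits each of Y, Cr, Cb (assumed packing)

def lut_index(y: int, cr: int, cb: int) -> int:
    """Quantize 8-bit Y, Cr, Cb to 4 bits each and pack them into a 12-bit index."""
    return ((y >> 4) << 8) | ((cr >> 4) << 4) | (cb >> 4)

def mark_color(lut: bytearray, y: int, cr: int, cb: int, color: int, spread: int = 1) -> None:
    """Assign `color` (1..7) to the sampled cell and its neighbours within
    `spread` cells in every channel, roughly like picking a shade in the GUI."""
    if not 1 <= color <= 7:
        raise ValueError("object colors are numbered 1..7; 0 is the background")
    qy, qcr, qcb = y >> 4, cr >> 4, cb >> 4
    for ny in range(max(qy - spread, 0), min(qy + spread, 15) + 1):
        for ncr in range(max(qcr - spread, 0), min(qcr + spread, 15) + 1):
            for ncb in range(max(qcb - spread, 0), min(qcb + spread, 15) + 1):
                lut[(ny << 8) | (ncr << 4) | ncb] = color

def classify(lut: bytearray, y: int, cr: int, cb: int) -> int:
    """Object color of a pixel: 0 (background) unless its cell was marked."""
    return lut[lut_index(y, cr, cb)]

lut = bytearray(LUT_SIZE)            # everything starts as background (0)
mark_color(lut, 235, 128, 128, 1)    # sample taken on the white line -> Color 1
```

The `spread` parameter is what makes a single click tolerant of small changes in lighting: nearby shades of the line fall into marked cells too.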

Figure 18. Saving the module settings

The image recognition module is now configured. It's time to return the controller to the world of living, working controllers. To do this, run RoboPlus, open RoboPlus Manager, and click the Controller Firmware Manager icon in the top row. Click Next.

Figure 19. Restoring the controller firmware

Then do whatever the utility asks: in the window that appears, click Find, then switch the controller off and on again with the toggle switch. The utility should detect the controller; then click Next twice.

Figure 20. The controller was successfully detected.

Wait until the firmware installation is complete, click Next, then Finish. Then, with 99% probability, you will see an error message; ignore it and just close the window. The error occurs because the image recognition module connected to the controller is identified incorrectly. The controller is back in the world of the living, and you can start programming it to control our robot.

We will develop the program in the standard Robotis environment, RoboPlus Task. Programs in RoboPlus Task are written in a specialized language similar to C. For convenience, RoboPlus provides the basic constructs as graphic blocks, such as timers, blocks for processing sensor data, blocks for transferring data between devices, etc. I will not dwell on the programming process itself, since the environment is quite intuitive. However, if you have any questions, I recommend looking at the development examples in the "Articles" and "Lessons" sections on our website. But before we start programming, let's figure out how data is exchanged between the controller and the HaViMo2.0 module.

The module and the controller communicate using the same protocol that the controller uses to exchange data with Dynamixel AX-12 servos. The module itself is built around a DSP (digital signal processor), which is configured through its registers; each register can be read or set using the calibration interface. A detailed description of the DSP settings, along with a complete list of registers, is given in the HaViMo2.0 module manual. To work with the image processing module, all we need to understand is how data is output from it.

The example below shows how the module outputs a processed image. Up to 15 regions, located at addresses 0x10 to 0xFF, can be read using the READ DATA (0x02) command. Each region occupies 16 bytes and contains the following fields:

- Region Index: contains 0 if no region was detected in this slot

- Region Color: the color of the region

- Number of Pixels: the number of pixels detected within the region

- Sum of X Coordinates: the sum of the X coordinates of all detected points. Divide it by the number of pixels to get the mean X.

- Sum of Y Coordinates: the sum of the Y coordinates of all detected points. Divide it by the number of pixels to get the mean Y.

- Max X: the right bounding line

- Min X: the left bounding line

- Max Y: the bottom bounding line

- Min Y: the top bounding line

Figure 21. An example of image output from the HaViMo2.0 module
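Here is a minimal Python sketch of reading one 16-byte region record. The address rule follows from the text (15 regions of 16 bytes fill 0x10 to 0xFF, so region i starts at 0x10 × i). The byte offsets and widths of the individual fields are my assumption (two bytes for the pixel count, three little-endian bytes for each coordinate sum, then the four bounds); check them against the HaViMo2.0 manual before relying on them.

```python
from dataclasses import dataclass

REGION_SIZE = 16   # bytes per region record (from the text)
MAX_REGIONS = 15   # regions 1..15 occupy addresses 0x10..0xFF

@dataclass
class Region:
    index: int
    color: int
    num_pixels: int
    sum_x: int
    sum_y: int
    max_x: int
    min_x: int
    max_y: int
    min_y: int

    def mean_x(self) -> float:
        """Average X of the region's pixels (Sum of X / Number of Pixels)."""
        return self.sum_x / self.num_pixels if self.num_pixels else 0.0

    def mean_y(self) -> float:
        """Average Y of the region's pixels (Sum of Y / Number of Pixels)."""
        return self.sum_y / self.num_pixels if self.num_pixels else 0.0

def region_address(i: int) -> int:
    """Start address of region i (1..15)."""
    if not 1 <= i <= MAX_REGIONS:
        raise ValueError("region index must be in 1..15")
    return i * REGION_SIZE

def parse_region(record: bytes) -> Region:
    """Decode one record. ASSUMED layout: 0 index, 1 color, 2-3 pixels,
    4-6 sum X, 7-9 sum Y, 10 max X, 11 min X, 12 max Y, 13 min Y, 14-15 unused."""
    if len(record) != REGION_SIZE:
        raise ValueError("a region record is 16 bytes")

    def le(start: int, end: int) -> int:   # little-endian unsigned field
        return int.from_bytes(record[start:end], "little")

    return Region(index=record[0], color=record[1], num_pixels=le(2, 4),
                  sum_x=le(4, 7), sum_y=le(7, 10),
                  max_x=record[10], min_x=record[11],
                  max_y=record[12], min_y=record[13])
```

The mean helpers return 0.0 for an empty region instead of dividing by zero, since a slot with index 0 carries no pixels.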

This is enough to start programming the robot. We will write a function in RoboPlus Task that determines the boundaries of the recognized area. Since this environment cannot show the code in a readable text form, I will have to post screenshots. Let's call our function Get_Bounding_Box. Its algorithm is as follows:

- poll the regions (Index) from 1 to 15 in a loop, updating the address (Addr) at each iteration according to the rule given above (region Index starts at address 0x10 × Index);

- if we find a recognized region (its index is not 0), check whether its color matches the given one (Color);

- if the color matches, store the size of the region (Size) and the values of its bounding lines (Maxx, Minx, Maxy, Miny).

Figure 22. Code of the function for determining the boundaries of the recognized area
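For readers who prefer text to screenshots, here is the same algorithm as a Python sketch. It works on a 256-byte snapshot of the module's data area instead of talking to the hardware, and the field offsets inside each record (color at +1, pixel count at +2, bounds at +10 to +13) are an assumption of mine, not taken from the text, so treat it as an illustration of the loop rather than a drop-in replacement. As in the description, a later matching region overwrites an earlier one.

```python
from typing import NamedTuple, Optional

REGION_SIZE = 16
# Assumed offsets of fields inside a region record (verify in the HaViMo2.0 manual).
OFF_INDEX, OFF_COLOR, OFF_SIZE = 0, 1, 2
OFF_MAXX, OFF_MINX, OFF_MAXY, OFF_MINY = 10, 11, 12, 13

class BoundingBox(NamedTuple):
    size: int
    max_x: int
    min_x: int
    max_y: int
    min_y: int

def get_bounding_box(mem: bytes, color: int) -> Optional[BoundingBox]:
    """Poll regions 1..15 and return the bounds of the last recognized region
    whose color equals `color`, or None if there is no such region."""
    if len(mem) < 256:
        raise ValueError("expected a 256-byte snapshot covering 0x00..0xFF")
    result = None
    for index in range(1, 16):
        addr = index * REGION_SIZE               # Addr: region i starts at 0x10 * i
        if mem[addr + OFF_INDEX] == 0:           # index 0: nothing detected here
            continue
        if mem[addr + OFF_COLOR] != color:       # recognized, but another color
            continue
        size = int.from_bytes(mem[addr + OFF_SIZE:addr + OFF_SIZE + 2], "little")
        result = BoundingBox(size, mem[addr + OFF_MAXX], mem[addr + OFF_MINX],
                             mem[addr + OFF_MAXY], mem[addr + OFF_MINY])
    return result
```

If several pieces of the line are recognized at once, keeping the region with the largest `size` instead of the last one is a simple variation worth trying.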

Once we have the size of the area and the coordinates of its bounding lines, we can program the robot's movement. The movement algorithm is quite simple: knowing the X coordinates of the bounding lines, we find their average X. If this average X shifts while the robot is moving, the robot turns in the corresponding direction, keeping itself positioned on the line it is following. The algorithm for choosing the robot's movement is shown graphically in the following figure:

Figure 23. The algorithm for determining the movement of the robot

and its text-block implementation:

Figure 24. The code of the main function of the program
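The logic of the main function reduces to computing Cx = (Maxx + Minx) / 2 and comparing it with a band around the image center. A Python sketch of that step follows. The 160-pixel frame width comes from the specifications, but the center value, the width of the straight-ahead band, the `stop` action when nothing is found, and the callback-style camera and drive interface are hypothetical stand-ins for the RoboPlus Task blocks.

```python
import time
from typing import Callable, Optional, Tuple

FRAME_WIDTH = 160            # pixels, from the module specifications
CENTER_X = FRAME_WIDTH // 2  # assumed target position of the line's middle
DEADBAND = 10                # hypothetical half-width of the "go straight" band
CAMERA_WAIT_S = 0.150        # the camera stays silent for at least 128 ms after a request

def decide(min_x: int, max_x: int, center: int = CENTER_X, deadband: int = DEADBAND) -> str:
    """Choose a move from the bounding lines of the recognized area."""
    cx = (min_x + max_x) / 2          # Cx: middle of the recognized area
    if cx > center + deadband:
        return "right"                # area shifted right -> turn right
    if cx < center - deadband:
        return "left"                 # area shifted left -> turn left
    return "forward"

def control_step(ping: Callable[[], None],
                 read_bounds: Callable[[], Optional[Tuple[int, int]]],
                 drive: Callable[[str], None],
                 wait: Callable[[float], None] = time.sleep) -> str:
    """One pass of the endless loop: ping the camera, wait, read, decide, move."""
    ping()                            # re-initialize the camera on every pass
    wait(CAMERA_WAIT_S)
    bounds = read_bounds()            # (min_x, max_x), or None if nothing matched
    action = "stop" if bounds is None else decide(*bounds)
    drive(action)
    return action
```

On the robot, `control_step` would simply be called in a `while True:` loop. Whether a shift to the right in the image really means the line is to the robot's right depends on how the camera is mounted, so check the sign on your own robot.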

So, when the controller is switched on, we first initialize our variables: the speed, the forward-movement time, the rotation time, and the pause time. Next, in an endless loop, we initialize the connection to the camera (specifying the port number it is connected to, CamID) and the color of the area to detect. Recall that during calibration we chose Color 1, so this is the color we specify now. After that, we send a request to the camera and pause for 150 ms, because after the first request the camera does not respond for at least 128 ms. I put the camera initialization inside the main loop because the camera is very capricious and may "drop off"; by initializing it at every iteration, we effectively "ping" it. Then we call the function described above to get the coordinates of the bounding lines of the recognized area and compute their average (Cx). Finally, we check this value: if it lies within a certain range, the robot goes straight; if it has shifted to the right, the robot turns right; if to the left, it turns left. The functions responsible for the movement itself are shown below:

Figure 25. Examples of functions that are responsible for moving forward and turning left - right

These functions follow the same logic: the actuators are switched on, run for a set time, and then stop. Two other functions are responsible for controlling the running time and stopping:

Figure 26. Timer and stop functions of servos
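The pattern in Figures 25 and 26 (switch the actuators on, let a timer run, then stop) can be sketched as follows. `RecordingWheels` is a hypothetical stand-in for the two drive servos that just records speed commands, and turning in place by running the wheels in opposite directions is my assumption about how the turns are made. On a real robot with mirrored servos, one wheel's direction may need to be inverted.

```python
import time
from typing import Callable, List, Tuple

class RecordingWheels:
    """Hypothetical stand-in for the two drive servos: records (left, right) speeds."""
    def __init__(self) -> None:
        self.log: List[Tuple[int, int]] = []

    def set_speed(self, left: int, right: int) -> None:
        self.log.append((left, right))

def run_for(wheels, left: int, right: int, duration_s: float,
            sleep: Callable[[float], None] = time.sleep) -> None:
    """Switch the actuators on, keep them running for duration_s, then stop them."""
    wheels.set_speed(left, right)
    sleep(duration_s)          # the timer: let the servos run
    wheels.set_speed(0, 0)     # stop the servos

def forward(wheels, speed: int, t: float, sleep=time.sleep) -> None:
    run_for(wheels, speed, speed, t, sleep)

def turn_left(wheels, speed: int, t: float, sleep=time.sleep) -> None:
    run_for(wheels, -speed, speed, t, sleep)   # left wheel back, right wheel forward

def turn_right(wheels, speed: int, t: float, sleep=time.sleep) -> None:
    run_for(wheels, speed, -speed, t, sleep)   # left wheel forward, right wheel back
```

Stopping after every short move keeps each step small, which matters for the recommendations at the end of the article.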

That's all. This completes the robot control program. You can upload it to the controller and see what happens. Here is what I got:

We can see that in autonomous mode the robot handles its route quite well. The route, by the way, is made of sheets of ordinary white paper taped to a dark floor. Now let's figure out how the robot positions itself on this line. The camera of the HaViMo2.0 image processing module sees the following:

Figure 27. What the camera really sees

When this image is processed with the settings we configured, the shades matching the selected color are highlighted:

Figure 28. Highlighting color shades in the image

And the last, most important step is to highlight the rectangle in this image, the parameters of which we use when programming:

Figure 29. Defining the recognized area as a rectangle.
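How does the module get from highlighted pixels to a rectangle? The specifications mention a real-time Region Growing algorithm, but its internals are not described in the text, so the Python sketch below is a generic stand-in, not the HaViMo2.0 firmware: a 4-connected flood fill over a frame of color labels (0 = background) that accumulates, for each region, the same statistics the module reports (pixel count, coordinate sums, and bounding lines) and keeps at most 15 regions. Ordering them largest first is my choice.

```python
from collections import deque
from typing import Dict, List

def grow_regions(labels: List[List[int]], max_regions: int = 15) -> List[Dict[str, int]]:
    """Group 4-connected pixels with the same nonzero label into regions."""
    h = len(labels)
    w = len(labels[0]) if h else 0
    seen = [[False] * w for _ in range(h)]
    regions = []
    for y0 in range(h):
        for x0 in range(w):
            color = labels[y0][x0]
            if color == 0 or seen[y0][x0]:
                continue
            seen[y0][x0] = True
            queue = deque([(x0, y0)])
            r = {"color": color, "pixels": 0, "sum_x": 0, "sum_y": 0,
                 "min_x": x0, "max_x": x0, "min_y": y0, "max_y": y0}
            while queue:                     # breadth-first growth from the seed
                x, y = queue.popleft()
                r["pixels"] += 1
                r["sum_x"] += x
                r["sum_y"] += y
                r["min_x"] = min(r["min_x"], x)
                r["max_x"] = max(r["max_x"], x)
                r["min_y"] = min(r["min_y"], y)
                r["max_y"] = max(r["max_y"], y)
                for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
                    if (0 <= nx < w and 0 <= ny < h and not seen[ny][nx]
                            and labels[ny][nx] == color):
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            regions.append(r)
    regions.sort(key=lambda reg: reg["pixels"], reverse=True)
    return regions[:max_regions]
```

The min/max fields form the rectangle of Figure 29, and `sum_x / pixels` gives the centroid if you prefer it to the middle of the rectangle.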

As applied to our task, this rectangle indicates the following:

Figure 30. Interpretation of the recognition area in relation to our task

All this is wonderful, but the camera cannot stream video in real time, so we cannot directly watch what it sees and how it recognizes it. Still, I really wanted to track, at least schematically, what the camera recognizes and how the robot behaves. To do this, I used LabView to collect data from the CM-530 controller and process it (for more details on collecting data from the CM-530 controller, see my article "LabView in Robotics - Creating a SCADA System for Controlling the Robot"). The utility is not pretty, but in my opinion it is quite useful for analyzing the robot's movement. Since I had no wireless adapter at hand, neither ZigBee nor Bluetooth, I had to transfer the data over a USB cable, which somewhat limited my options. The interface is as follows:

Figure 31. Application interface for analyzing the movement of the robot

And here is the marking of the recognition area, with explanations, as applied to our task:

Figure 32. Marking the recognition window, in relation to our task

The interface consists of the following elements:

- upper left window: the camera's field of view. The red lines show our route, and the green dotted line shows the middle of the line. The blue area is the area currently recognized by the image recognition module (the same recognized area as in Figure 29), and the blue dotted line is the middle of this area. As the robot moves, it continuously analyzes the position of this line relative to the red ones: if the blue line is inside the area bounded by the red lines, the robot goes straight; if it goes beyond them, the robot turns in the corresponding direction.

- the lower left window shows the position of the robot relative to the track in real time. The red lines, again, indicate the borders of our route, the green dashed lines indicate the middle.

- the lower right window shows the actual movement of the robot, filmed with a mobile phone camera.

- at the top right are the connection settings and indicators for displaying data coming from the robot, as well as a window for displaying an error if it occurs.

But looking at a static picture is not interesting, so I suggest you watch a video where the whole process of the robot’s movement is visually visible:

Now we can analyze the robot's movement in some detail. Note the following:

- the recognized area shrinks when the robot goes through corners;

- shadows (for example, from a wire) can interfere with recognition, again reducing the recognized area.

Based on this, I have put together some recommendations for myself that I would like to share; keep them in mind when programming such a robot:

- "better less, but better": the lower the speed and the shorter the distance the robot travels per unit of time, the better, because the robot is less likely to miss ("not see") a turn or drift far off the route;

- a consequence of the first recommendation: the sharper the turn, the more likely the robot is to miss it;

- our main enemy is the floor covering. What to do about it is up to you; personally, to drive on linoleum, I attached a sheet of paper to the bottom of the robot, because the friction and the squeaking were terrible;

- Murphy's law: if the robot follows a light line on a dark background and there is anything nearby that could be called light, rest assured that to the robot it will be the lightest thing around, and the robot will happily drive toward it;

- a corollary of Murphy's law: no matter how much you narrow the camera's field of view, the robot will always find such a bright object;

- the robot may be afraid of its own shadow, so always think about how the light will fall, and train the recognition module to work in different lighting conditions;

- be prepared for the batteries to run down at the most inopportune moment; it will look as if there are errors in the control program, the controller firmware, the controller itself, and your DNA. So always monitor the battery charge: for this robot, the normal voltage is 8-9 V.
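The first recommendation can be put into numbers: between two looks at the line, the robot covers speed × cycle time, and that distance should stay well below the length of the visible area (about 85 mm with the camera mounted as described earlier). A tiny sketch follows; the 0.5 safety margin and the example values are assumptions of mine, and the real cycle time is at least the 150 ms camera pause plus the time spent moving.

```python
VISIBLE_LENGTH_MM = 85.0   # visible area length estimated earlier in the article

def travel_per_cycle_mm(speed_mm_s: float, cycle_s: float) -> float:
    """Distance covered between two camera readings."""
    return speed_mm_s * cycle_s

def max_speed_mm_s(cycle_s: float, visible_mm: float = VISIBLE_LENGTH_MM,
                   margin: float = 0.5) -> float:
    """Highest speed at which the robot covers at most `margin` of the visible area per cycle."""
    if cycle_s <= 0:
        raise ValueError("cycle time must be positive")
    return margin * visible_mm / cycle_s
```

Halving the speed or the cycle time both double the number of looks the robot gets at each stretch of line, which is why slower, shorter steps miss fewer turns.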

Well, in conclusion, I would like to say that there is no limit to perfection, so everything is in your hands. Go on your own robotics adventures!

Conclusion

Today we have figured out how to implement technical vision on the widespread Bioloid STEM kits using the HaViMo2.0 image recognition module. This was only the first step toward technical vision in our publications. In the next article on technical vision, we will tackle a more complex task: following a line with a different type of marking while recognizing signs placed along it, similar to a car driving along a highway. I hope this article was useful and interesting to you. See you soon!