EMC VMAX 3: Enterprise Data Management Platform

Driven by the global growth of social, mobile and cloud platforms, most modern companies need an IT infrastructure that provides instant access to the large volumes of information typical of new-generation hybrid cloud environments. EMC has introduced the new VMAX 3 storage systems, which offer a range of approaches to simplifying information lifecycle management.

The new line is a high-end storage system for business-critical tier-1 workloads. At the same time, it gives customers full control over data services in SLA terms and over the infrastructure where the applications run, whether in the data center or in the public cloud. In effect, these capabilities make EMC VMAX 3 an enterprise data management platform. Control over the information lifecycle and the “data storage as a service” model in a hybrid cloud previously required integrating third-party data management services and external software; now both are possible using the basic functions of the system.

The EMC VMAX 3 lineup consists of three storage systems: VMAX 100K, 200K and 400K. All of them are built on a fundamentally new architecture based on HYPERMAX OS and the Dynamic Virtual Matrix. HYPERMAX OS combines the storage hypervisor and the operating system, which makes it possible to embed storage infrastructure services directly into the array. The Dynamic Virtual Matrix dynamically allocates computing resources to increase performance and provide predictable service levels across a large enterprise. Together, this raises the efficiency and consolidation of the data center by reducing both floor space and energy consumption.

Although server cluster architectures such as VSAN or ScaleIO are increasingly used to build data storage services, and midrange systems have reached performance sufficient for any application, there is still room for multi-controller high-end systems:

1) Multi-user databases that need transactional performance and keep growing in size, so placing them entirely on SSDs would be quite expensive.

2) Virtualization environments, where many virtual machines with widely varying performance requirements can generate a substantial load.

In both cases, everything is stored on the same storage system. That system must therefore provide very high service availability, as well as the flexibility to redistribute resources online as the load changes over time.

3) Setting up a second or third backup site, where replication must have minimal impact on application performance and the service must be restored at that site with minimal or no data loss.

Architecture features

The new version of VMAX, like all previous ones, is built on a multi-controller hardware architecture based on Intel processors. This time, however, the storage controllers are connected by a fault-tolerant 56 Gb/s InfiniBand interconnect. The total coherent cache reaches 16 TB in the top models, and the number of processor cores reaches 384.

VMAX 3 has undergone many design changes. The controllers, the disk shelves and the racks themselves have all changed. VMAX 3 now ships in standard 19-inch enclosures and can be installed in third-party racks, so no special planning is needed to place it, which matters most in large data centers. VMAX no longer distinguishes between a system bay and a storage bay: all racks are equal. Each rack can hold one or two engines (one engine is two controllers), the interconnect and a number of disk shelves. There are two disk shelf options, both high-density: 60 drives in 3.5-inch format or 120 drives in 2.5-inch format.

As a result, the overall density of the array has increased: one rack can hold 720 drives and two controllers (1 engine) or 480 drives and four controllers (2 engines). The maximum array configuration fits in 8 racks: 5760 drives and 8 engines.
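The density figures above can be checked with a few lines of arithmetic. This is only a sketch: the layout names `one_engine` and `two_engines` are labels invented for the example, and the function assumes that all racks in the array use the same layout.

```python
# Figures taken from the text; layout names are hypothetical labels.
MAX_ENGINES = 8
MAX_RACKS = 8
RACK_LAYOUTS = {
    "one_engine": {"engines": 1, "drives": 720},
    "two_engines": {"engines": 2, "drives": 480},
}


def array_totals(layout: str, racks: int) -> tuple[int, int]:
    """Return (engines, drives) for `racks` identical racks of one layout."""
    if not 1 <= racks <= MAX_RACKS:
        raise ValueError(f"racks must be between 1 and {MAX_RACKS}")
    per_rack = RACK_LAYOUTS[layout]
    engines = per_rack["engines"] * racks
    if engines > MAX_ENGINES:
        raise ValueError(f"{engines} engines exceed the {MAX_ENGINES}-engine limit")
    return engines, per_rack["drives"] * racks


def shelves_for(drives: int, drives_per_shelf: int = 120) -> int:
    """Number of shelves needed, rounding up (120 = dense 2.5-inch shelf)."""
    return -(-drives // drives_per_shelf)


print(array_totals("one_engine", 8))  # (8, 5760), the maximum quoted in the text
print(shelves_for(720))               # 6 shelves of 120 drives
```

With the two-engine layout, the 8-engine limit is reached after four racks, which is why the 5760-drive maximum corresponds to eight single-engine racks.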

Additional VMAX racks can be placed up to 25 meters from the first rack, which houses the internal interconnect switches.

The ability to span VMAX racks up to 25 meters from System Bay

VMAX 3 supports the following I/O interfaces:

- FC 8 and 16 Gb/s;

- FCoE / iSCSI 10 Gb/s.

The range of disks is constantly expanding, and the following types of drives are currently supported:

- SSD 200, 400, 800 GB;

- SAS 15K 300 GB;

- SAS 10K 300, 600, 1200 GB;

- NL-SAS 7K 2, 4 TB.

For the first time, vault-to-flash technology is used to protect cache memory. Previously, a special partition on vault hard drives was reserved for this purpose; now the cache is saved to separate flash memory installed in the controllers themselves. This made it possible to reduce the battery capacity needed to protect the cache, and vault hard drives are no longer needed.

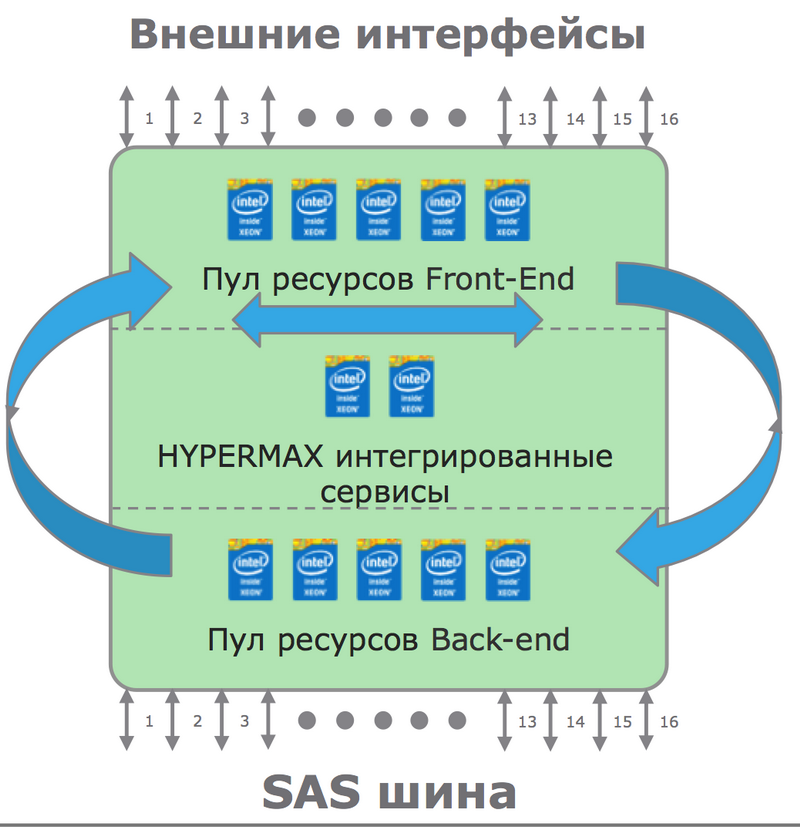

The array's main operating system has been renamed HYPERMAX (formerly Enginuity), and the name was not chosen by chance. In addition to the operating system, a specialized hypervisor runs on the controllers, which makes it possible to run many additional services, such as monitoring, management and file access. Of particular note is the possibility of integrating a virtual version of VPLEX to build disaster-tolerant infrastructures without additional equipment.

Internal load balancing is now dynamic. Enginuity bound processor cores tightly to I/O ports; in HYPERMAX this binding is gone, and processing power is balanced between the front end, the back end and the built-in hypervisor.

Dynamic balancing of processor cores between tasks

Given the huge amount of resources (VMAX 400K in the maximum configuration supports 384 Intel cores and 16 TB of cache memory) combined with the flexibility of dynamic resource reallocation, the new VMAX can be called one of the most powerful high-end systems to date.
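The idea of balancing cores between the front end, the back end and the hypervisor can be illustrated with a proportional split. This is a sketch under stated assumptions, not the HYPERMAX algorithm: the function `rebalance_cores`, the load figures and the one-core minimum per pool are all invented for the example, and 48 cores is simply 384 cores divided by 8 engines.

```python
def rebalance_cores(total_cores: int, load: dict[str, float],
                    min_per_pool: int = 1) -> dict[str, int]:
    """Split cores between pools in proportion to their current load.

    Hypothetical illustration: every pool keeps at least `min_per_pool`
    cores, and the rest is shared out by the largest-remainder method.
    """
    if total_cores < min_per_pool * len(load):
        raise ValueError("not enough cores for the minimum allocation")
    spare = total_cores - min_per_pool * len(load)
    total_load = sum(load.values())
    if total_load == 0:
        shares = {name: spare / len(load) for name in load}
    else:
        shares = {name: spare * value / total_load for name, value in load.items()}
    alloc = {name: min_per_pool + int(share) for name, share in shares.items()}
    # Hand the cores lost to rounding to the pools with the largest fractions.
    leftover = total_cores - sum(alloc.values())
    by_fraction = sorted(shares, key=lambda n: shares[n] - int(shares[n]), reverse=True)
    for name in by_fraction[:leftover]:
        alloc[name] += 1
    return alloc


# Assumed load figures (arbitrary units) for one engine with 48 cores.
print(rebalance_cores(48, {"front_end": 60, "back_end": 30, "hypervisor": 10}))
```

Calling the function again with new load figures yields a new split, which is the point of the comparison with the fixed core-to-port binding in Enginuity.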

Resource planning

In the new version, the approach to sizing the system and to dynamic resource allocation has fundamentally changed. Earlier, deploying and configuring a VMAX system could be compared to a small project of its own; now VMAX is delivered fully configured, with RAID groups already laid out, pre-configured virtual resource pools and a simplified management interface. The preliminary configuration, with SLA levels taken into account, is assembled at the factory in full accordance with the load profile agreed with the customer at the sizing and planning stage. At final commissioning on the customer’s site, you just need to connect the pre-configured logical volumes to the applications, and you can start working with a guaranteed SLA level!

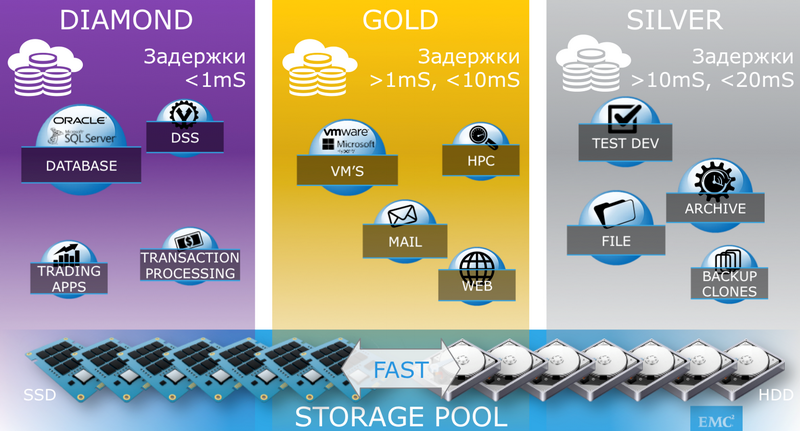

Several SLA levels are offered as standard: DIAMOND, PLATINUM, GOLD, SILVER and BRONZE (more precisely, in VMAX terminology this is called an SLO - Service Level Objective). They differ in the response time acceptable for the application and in the load profile. The following parameters are taken into account:

- average response time;

- read/write ratio;

- load profile (sequential/random);

- average read/write block size;

- data volume.
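The parameters listed above can be gathered into a small workload profile record, and an SLO can then be matched to it. This is a sketch only: the text gives no response-time figures for the SLO levels, so the target table is supplied by the caller and the numbers in the usage example are placeholders, not EMC values. The ordering from BRONZE up to DIAMOND is also an assumption.

```python
from dataclasses import dataclass
from enum import IntEnum


class SLO(IntEnum):
    """SLO names from the text; ordering lowest to highest is assumed."""
    BRONZE = 1
    SILVER = 2
    GOLD = 3
    PLATINUM = 4
    DIAMOND = 5


@dataclass(frozen=True)
class WorkloadProfile:
    """The five sizing parameters listed above (field names are hypothetical)."""
    avg_response_ms: float   # acceptable average response time
    read_fraction: float     # 0.0 = all writes, 1.0 = all reads
    random_fraction: float   # 0.0 = sequential, 1.0 = random
    avg_block_kb: float      # average read/write block size
    capacity_gb: float       # data volume


def lowest_sufficient_slo(profile: WorkloadProfile,
                          targets_ms: dict[SLO, float]) -> SLO:
    """Return the lowest SLO whose target response time meets the profile."""
    for slo in sorted(targets_ms):
        if targets_ms[slo] <= profile.avg_response_ms:
            return slo
    raise ValueError("no SLO level meets the required response time")


# Placeholder targets in milliseconds, invented for this example.
example_targets = {SLO.BRONZE: 20.0, SLO.SILVER: 10.0, SLO.GOLD: 5.0,
                   SLO.PLATINUM: 2.0, SLO.DIAMOND: 1.0}
oltp = WorkloadProfile(avg_response_ms=6.0, read_fraction=0.7,
                       random_fraction=0.9, avg_block_kb=8.0, capacity_gb=2000.0)
print(lowest_sufficient_slo(oltp, example_targets).name)  # GOLD
```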

SLO levels with response time classification

If you plan to run several different tasks on the system, you must determine the load profile of each of them in advance. The absence of interference between them is guaranteed by the basic HYPERMAX services.

The more load information is known at the design stage, the more accurately VMAX can plan its resources and predict bottlenecks. In other words, VMAX 3 setup starts not when the system is connected in the customer’s data center, but when information is collected before the system is ordered.

Federated Storage

One of the key features that appeared in VMAX some time ago is Federated Tiered Storage (FTS), which has been significantly expanded in the new version.

First, FTS allows you to consolidate arrays from various manufacturers behind VMAX; second, it extends technologies such as FAST, SRDF and TimeFinder to the pool of connected arrays. The key difference between FTS and similar implementations from other manufacturers is that VMAX, when writing data to a third-party array, always performs a verification read and a CRC check.
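The write, read back and CRC check sequence can be sketched as follows. This is an illustration of the general technique, not VMAX code: `ExternalArray` is a hypothetical in-memory stand-in for a third-party array, and the CRC-32 from Python's `zlib` is used only because it is readily available; the text does not say which CRC the array uses.

```python
import zlib


class ExternalArray:
    """Hypothetical stand-in for a third-party array: a dict of LBA -> bytes."""

    def __init__(self, corrupt_writes: bool = False):
        self._blocks: dict[int, bytes] = {}
        self._corrupt_writes = corrupt_writes  # simulate a faulty device

    def write(self, lba: int, data: bytes) -> None:
        if self._corrupt_writes and data:
            data = bytes([data[0] ^ 0xFF]) + data[1:]  # flip the first byte
        self._blocks[lba] = data

    def read(self, lba: int) -> bytes:
        return self._blocks[lba]


def verified_write(array: ExternalArray, lba: int, data: bytes) -> None:
    """Write a block, read it back and compare checksums."""
    expected = zlib.crc32(data)
    array.write(lba, data)
    if zlib.crc32(array.read(lba)) != expected:
        raise IOError(f"CRC mismatch after writing LBA {lba}")


verified_write(ExternalArray(), 0, b"payload")  # succeeds silently
```

The extra read costs time on every write; the trade-off is that silent corruption on the external array is detected at write time rather than when the data is needed.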

FTS has many applications. These include using third-party arrays as a tier of FAST tiered storage, and storing TimeFinder snapshots in combination with SRDF remote replication. An interesting option is to combine FTS with VPLEX to create Active/Active configurations with continuous data access that survive the failure of an entire data center.

Together with VPLEX, data mobility increases, and it becomes possible to work with a single geographically distributed cross-platform resource pool.

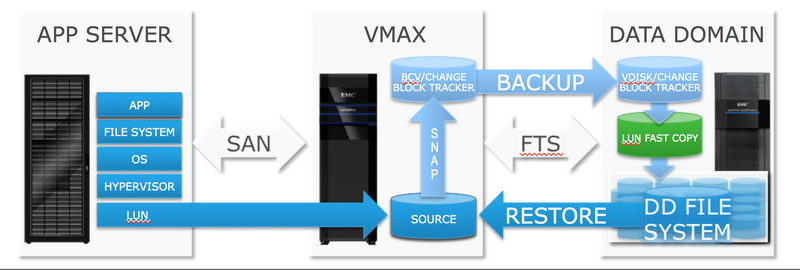

VMAX 3 introduces a completely new technology based on FTS algorithms, EMC ProtectPoint, which allows you to connect an EMC Data Domain (DD) backup system as Federated Tiered Storage and to back up and restore data directly to and from DD.

It works as follows: the database is paused, the ProtectPoint agent issues a command to take a TimeFinder snapshot, and the database is resumed. VMAX then copies the contents of the snapshot to DD in the background.
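The order of operations in this backup flow can be sketched in a few lines. Everything here is hypothetical: `Database`, `take_snapshot` and `backup_via_snapshot` are names invented for the example and do not correspond to any ProtectPoint or TimeFinder API. The copy to DD is shown as a plain function call; in a real system it would run asynchronously.

```python
from contextlib import contextmanager


class Database:
    """Hypothetical database handle that records pause and resume events."""

    def __init__(self):
        self.paused = False
        self.log: list[str] = []

    @contextmanager
    def quiesced(self):
        self.paused = True
        self.log.append("pause")
        try:
            yield
        finally:
            # Resume even if the snapshot fails, so the outage stays short.
            self.paused = False
            self.log.append("resume")


def take_snapshot(volume: dict[int, bytes]) -> dict[int, bytes]:
    """Stand-in for a point-in-time snapshot: a frozen copy of the blocks."""
    return dict(volume)


def backup_via_snapshot(db: Database, volume: dict[int, bytes],
                        dd_store: list[dict[int, bytes]]) -> None:
    with db.quiesced():
        snapshot = take_snapshot(volume)  # the only step the database waits for
    dd_store.append(snapshot)             # copy to DD, off the application path


db, volume, dd = Database(), {0: b"row-1"}, []
backup_via_snapshot(db, volume, dd)
volume[0] = b"row-2"       # later writes do not alter the backup
print(db.log, dd[0][0])    # ['pause', 'resume'] b'row-1'
```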

From the point of view of the database and applications, the backup procedure does not change when ProtectPoint is implemented. Everything looks very similar to a normal snapshot-based backup, except that the traffic does not travel across the network between the application server and the backup server, but stays between VMAX and DD.

Data recovery is also started by a command from the ProtectPoint agent installed on the application / database server. The administrator selects the desired recovery point, either manually or through any supported backup software, and recovery from the DD backup begins. A key feature of ProtectPoint is that the recovered volume can be used immediately after the recovery procedure is initiated, without waiting for the data copy to complete. All requested blocks are read from DD with priority and served to the application. This does add latency, but the time until the volume is fully restored physically can be spent on service tasks such as checking content, access rights and compatibility.
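The behavior described above, where a volume is usable while it is still being restored, can be modeled as a copy that runs in the background plus on-demand fetches for blocks the application asks for first. This is a minimal sketch of that general pattern; the class `RestoringVolume` and its methods are hypothetical and say nothing about how ProtectPoint is implemented internally.

```python
class RestoringVolume:
    """Volume that serves reads while being restored from a backup copy."""

    def __init__(self, backup: dict[int, bytes]):
        self._backup = backup              # stands in for the DD copy
        self._local: dict[int, bytes] = {}
        self._pending = sorted(backup)     # blocks the background copy has not reached
        self.on_demand_reads = 0

    def _fetch(self, lba: int) -> None:
        self._local[lba] = self._backup[lba]

    def read(self, lba: int) -> bytes:
        """Serve a block, pulling it from the backup first if needed."""
        if lba not in self._local:
            self.on_demand_reads += 1      # slower path: extra round trip
            self._fetch(lba)
        return self._local[lba]

    def background_step(self) -> bool:
        """Copy the next missing block; return False when nothing is left."""
        while self._pending:
            lba = self._pending.pop(0)
            if lba not in self._local:     # skip blocks already read on demand
                self._fetch(lba)
                return True
        return False

    @property
    def fully_restored(self) -> bool:
        return len(self._local) == len(self._backup)


vol = RestoringVolume({0: b"a", 1: b"b", 2: b"c"})
print(vol.read(2), vol.fully_restored)  # b'c' False
while vol.background_step():
    pass
print(vol.fully_restored)               # True
```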

Together with the high channel speed between DD and VMAX, this greatly reduces the recovery time after a failure.

Multilevel storage

Multilevel storage has remained the strongest side of EMC arrays for the past 6 years, ever since the corporation first brought flash drives to market in 2008 as one of the media types in VMAX.

The algorithms that move data between storage tiers are designed to maintain a predefined SLA (SLO). Previously, the decision to move data between tiers was driven by how often a given block was accessed; now it is based on maintaining a predetermined response time and other parameters (provided that they have been defined in advance).

Data analysis and movement between storage tiers now run continuously, 24x7. This does not affect performance, since VMAX has enormous computing resources even in a minimal configuration.
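The shift from frequency-driven to response-time-driven placement can be shown with a toy decision rule. This is not the FAST algorithm: the three-tier list, the function `next_tier` and the 50% demotion threshold are assumptions made for the example.

```python
TIERS = ["SSD", "SAS", "NL-SAS"]  # fastest first; simplified tier list


def next_tier(current: str, observed_ms: float, target_ms: float,
              demote_below: float = 0.5) -> str:
    """Pick the tier for a data extent from its response time, not its hit count.

    Hypothetical rule: move one tier up when the target is missed, one tier
    down when the response time is well under the target, otherwise stay.
    """
    idx = TIERS.index(current)
    if observed_ms > target_ms and idx > 0:
        return TIERS[idx - 1]                      # target missed: promote
    if observed_ms < target_ms * demote_below and idx < len(TIERS) - 1:
        return TIERS[idx + 1]                      # ample headroom: demote
    return current


print(next_tier("SAS", observed_ms=12.0, target_ms=10.0))  # SSD
print(next_tier("SAS", observed_ms=3.0, target_ms=10.0))   # NL-SAS
print(next_tier("SAS", observed_ms=8.0, target_ms=10.0))   # SAS
```

Under such a rule a frequently accessed extent can still sit on a slower tier as long as its response time target is met, which frees flash capacity for data that needs it.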

Service Level Objective (SLO) and virtual pools

Another important change in FAST concerns where decisions are made. Previously, decisions about moving data between tiers were made entirely inside the VMAX core; now agents installed on application and database servers assist. The agents collect information about the most frequently accessed data areas and possible changes in the load profile. FAST on VMAX has become proactive.

Database Performance Analysis

With Unisphere you can now not only manage the array but also analyze database performance: the DBclassify utility has been added to the interface. It collects information directly from the DBMS server and passes it to Unisphere, which makes Unisphere a single point of performance monitoring for both the array and the application server. DBclassify compares the I/O response time on the storage side with the response time on the application / database side and concludes whether the bottleneck is inside the array or must be looked for elsewhere: in the network stack, the file system and so on. DBclassify provides a view by database table, so you can see a bottleneck in a specific area of the database and, using FAST, issue a command to move that table to another resource pool. DBclassify is aimed primarily at Oracle, but support for other databases is expected.
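The comparison of array-side and host-side response times can be reduced to a simple rule of thumb. This sketch is not DBclassify logic: the function `locate_bottleneck`, its return labels and the 50% share threshold are assumptions made for illustration.

```python
def locate_bottleneck(array_ms: float, host_ms: float, target_ms: float,
                      array_share: float = 0.5) -> str:
    """Classify where latency comes from, given two measurements.

    array_ms: I/O response time measured on the storage side.
    host_ms:  response time seen by the application or database.
    Hypothetical rule: if the array accounts for at least `array_share`
    of the host-side time, the array is the suspect.
    """
    if host_ms <= 0 or array_ms < 0:
        raise ValueError("response times must be positive")
    if host_ms <= target_ms:
        return "ok"
    if array_ms / host_ms >= array_share:
        return "array"
    return "outside_array"  # network stack, file system, host, ...


print(locate_bottleneck(array_ms=8.0, host_ms=10.0, target_ms=5.0))  # array
print(locate_bottleneck(array_ms=1.0, host_ms=10.0, target_ms=5.0))  # outside_array
```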

Unisphere, DBclassify and all the built-in functions for monitoring and managing the system run inside HYPERMAX OS itself and do not require third-party management servers.
