Testing with Microsoft Tools - Field Experience

This article was written by our friends and partners at Kaspersky Lab. It describes their real-world experience with Microsoft testing tools, along with recommendations. The author, Igor Shcheglovitov, is a test engineer at Kaspersky Lab.

Hello everybody. I work as a test engineer at Kaspersky Lab, on a team that develops server-side cloud infrastructure on the Microsoft Azure platform.

The team consists of developers and testers (in a ratio of approximately 1 to 3). Developers write code in C# and practice TDD and DDD, which keeps the code testable and loosely coupled. The tests that developers write are run either manually from Visual Studio or automatically during a TFS build. The build uses the Gated Check-in trigger, so it starts whenever code is checked in to source control. The peculiarity of this trigger is that if the build fails for any reason (a compilation error or failing tests), the check-in that launched the build does not get into source control.

You have probably come across the claim that code is difficult to test. Some teams resort to pair programming. Other companies have dedicated test departments. In our case, it is mandatory code review and automated integration testing. Unlike unit tests, integration tests are developed by dedicated test engineers, of whom I am one.

Clients interact with us through remote SOAP and REST APIs. The services themselves are based on WCF and MVC, while the data is stored in Azure Storage. Azure Service Bus and Azure Cloud Queue are used for reliability and for scaling long-running business processes.

A short digression: there is an opinion that being a tester is just a stepping stone to becoming a developer. This is not entirely true. The line between programmers and test engineers blurs more every year. Testers must have a strong technical background, but at the same time a slightly different mindset from developers. Testers should aim first of all to break things, and developers to build them. Together, they should try to make a quality product.

Integration tests, like the main code, are developed in C#. By simulating the actions of the end user, they test business processes on configured and running services. To write these tests, we use Visual Studio and the MSTest framework. Developers use the same tools. Along the way, testers and developers review each other's code, so that team members can always speak the same language.

Test scripts (test cases) are stored and managed in MTM (Microsoft Test Manager). A test case here is a TFS entity, just like a bug or a task. Test cases can be manual or automated. Due to the specifics of our system, we use only the latter.

Automated test cases are linked to test methods by full CLR name (one test case, one test method). Before MTM appeared (with Visual Studio 2010), I ran integration tests the same way as unit tests, confined to Visual Studio. It was difficult to build test reports and to manage tests. Now this is done directly through MTM.

Test cases can now be combined into test plans, and within a plan they can be organized into flat or hierarchical groups using test suites.

We use feature branch development, i.e. major improvements are made in separate branches, as are stabilization and release. We have created a test plan for each branch (to avoid chaos). Once stabilization is completed in a feature branch, the code is merged into the main branch, and the tests are moved to the corresponding test plan. Test cases are very easy to add to test plans (Ctrl+C, Ctrl+V, or through a TFS query).

Some recommendations. Personally, I try to separate stable tests from new ones in each branch. This is easy to do with test suites. The point is that stable (regression) tests must pass 100% of the time. If they do not, that is a reason to stop and investigate. Once new functionality becomes stable, the corresponding tests are simply moved to the regression test suite.

For me personally, one of the most boring and routine tasks was creating test cases. The process of developing automated tests is different for everyone. Some people first write detailed test scripts and then create automated tests based on them. I do the opposite: I first write stub tests (without logic) in a test class. Then comes the implementation of the logic, the architecture of the testing components, the test data, and so on.

After the tests are created, they must be transferred to TFS. That is one thing when there are 10 tests, but with 50 or 100 you will spend a lot of time on the routine work of filling out steps and linking each new test case to its test method.

To simplify this process, Microsoft came up with the tcm.exe utility, which can automatically create test cases in TFS and include them in a test plan. This utility has a number of drawbacks: for some reason, tests from different assemblies cannot be added to one test plan, and a test case cannot be given steps or a meaningful name. In addition, the utility itself appeared relatively recently. Before it existed, we wrote our own utility that automates the creation of test cases. We also developed special custom attributes, TestCaseName and TestCaseStep, to specify the name and steps of a test case.
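The article does not show how these attributes are implemented, so the following is only a minimal sketch of what such attributes and the code that reads them might look like. The attribute constructors, the `Order`/`Action`/`Expected` properties, the `TestCaseReader` helper, and the sample test class are all assumptions made for illustration, and the step that actually writes the test case to TFS is deliberately left out.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Hypothetical attribute: a human-readable name for the test case.
[AttributeUsage(AttributeTargets.Method, AllowMultiple = false)]
public sealed class TestCaseNameAttribute : Attribute
{
    public TestCaseNameAttribute(string name) { Name = name; }
    public string Name { get; }
}

// Hypothetical attribute: one step of the test case.
// Order is explicit because reflection does not guarantee attribute order.
[AttributeUsage(AttributeTargets.Method, AllowMultiple = true)]
public sealed class TestCaseStepAttribute : Attribute
{
    public TestCaseStepAttribute(int order, string action, string expected)
    {
        Order = order;
        Action = action;
        Expected = expected;
    }
    public int Order { get; }
    public string Action { get; }
    public string Expected { get; }
}

// Illustrative test class; the MSTest attributes are omitted to keep the sketch dependency-free.
public class OrderServiceTests
{
    [TestCaseName("Create order with valid data")]
    [TestCaseStep(1, "Send a CreateOrder request", "Service returns an order id")]
    [TestCaseStep(2, "Request the order by id", "Order status is Created")]
    public void CreateOrder_ValidData_OrderIsCreated() { /* test body omitted */ }
}

public static class TestCaseReader
{
    // "Namespace.Class.Method": the full CLR name an automated test case is linked to.
    public static string FullClrName(MethodInfo method)
    {
        return method.DeclaringType.FullName + "." + method.Name;
    }

    // Builds a plain-text description (name plus ordered steps) from the attributes.
    public static string Describe(MethodInfo method)
    {
        var nameAttribute = method.GetCustomAttribute<TestCaseNameAttribute>();
        string name = nameAttribute != null ? nameAttribute.Name : method.Name;
        var steps = method.GetCustomAttributes<TestCaseStepAttribute>()
                          .OrderBy(s => s.Order)
                          .Select(s => "  " + s.Order + ". " + s.Action + " -> " + s.Expected);
        return name + Environment.NewLine + string.Join(Environment.NewLine, steps);
    }
}
```

A utility built along these lines could enumerate the public methods of a test assembly, call `TestCaseReader.Describe` and `TestCaseReader.FullClrName` for each, and pass the result to whatever code creates or updates the test case.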

The utility can both create and update test cases and include them in the desired plan. It can run in silent mode, and its launch is included in the TFS build workflow. It therefore starts automatically and adds new test cases or updates existing ones. As a result, we always have an up-to-date test plan.

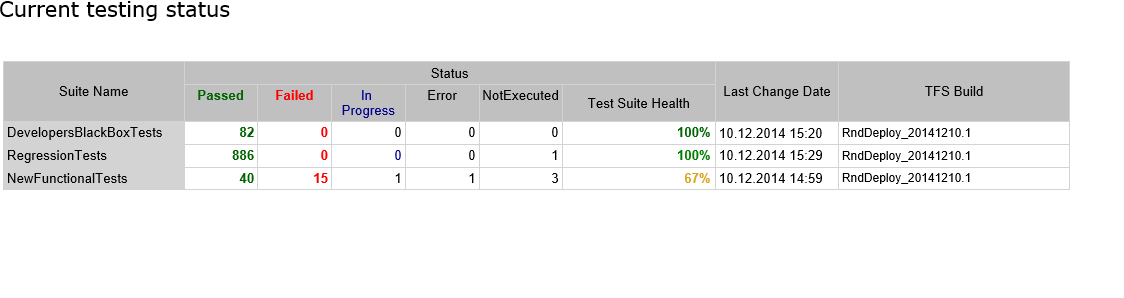

The status of the test run in the test plan (which is equivalent to the quality of the code) is visible in special reports. TFS has a set of ready-made report templates that build charts from the status of test cases and test plans using OLAP cubes. We, however, use our own simplified report. We designed it so that anyone who looks at it understands everything immediately.

Here is an example:

What sets the report apart is that it is not built on cubes, which are not rebuilt synchronously and may contain stale data. Instead, our report uses data pulled directly through the MTM API, so at any moment we get the current status of the test plan.

The report is built in HTML format and sent to the whole team through SmtpClient. All of this is done by a simple utility whose launch is included directly in the build workflow: download the utility.
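As a rough illustration of the reporting step, here is a minimal sketch that renders a list of test outcomes as an HTML table and sends it with `System.Net.Mail.SmtpClient`. The `TestOutcome` type, the `TestReport` class, the subject line, and all parameters are assumptions for the example; how the outcomes are fetched through the MTM API is not covered in the article and is not shown here.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Net;
using System.Net.Mail;
using System.Text;

// Hypothetical data holder; in the real utility this data would come from the MTM API.
public sealed class TestOutcome
{
    public TestOutcome(string name, bool passed) { Name = name; Passed = passed; }
    public string Name { get; }
    public bool Passed { get; }
}

public static class TestReport
{
    // Renders a summary line and one table row per test.
    public static string BuildHtml(string planName, IReadOnlyList<TestOutcome> results)
    {
        int passed = results.Count(r => r.Passed);
        var html = new StringBuilder();
        html.Append("<html><body>");
        html.AppendFormat("<h2>{0}: {1} of {2} passed</h2>",
            WebUtility.HtmlEncode(planName), passed, results.Count);
        html.Append("<table border=\"1\"><tr><th>Test</th><th>Result</th></tr>");
        foreach (var result in results)
        {
            html.AppendFormat("<tr><td>{0}</td><td style=\"color:{1}\">{2}</td></tr>",
                WebUtility.HtmlEncode(result.Name),
                result.Passed ? "green" : "red",
                result.Passed ? "Passed" : "Failed");
        }
        html.Append("</table></body></html>");
        return html.ToString();
    }

    // Sends the report as an HTML email; host and addresses are supplied by the caller.
    public static void Send(string html, string smtpHost, string from, string to)
    {
        using (var message = new MailMessage(from, to))
        using (var client = new SmtpClient(smtpHost))
        {
            message.Subject = "Test plan status";
            message.Body = html;
            message.IsBodyHtml = true;
            client.Send(message);
        }
    }
}
```

Keeping `BuildHtml` separate from `Send` means the report markup can be checked without a mail server.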

In addition to managing test cases, MTM is also used to manage test environments and to configure the agents that run tests. To speed up test execution (these are tests that check long asynchronous business processes), we use 6 test agents; by scaling the agents horizontally, we run tests in parallel.

Automated integration tests can also be run manually, both through MTM and through Visual Studio. Manual runs, however, happen mainly during debugging (of either the tests or the code). In our team, a reproducing test is created for almost every bug. The bug is then handed over to development together with the test, which makes it easier for developers to reproduce the problem and fix it.

Now about the regression process.

Our project has a special build that initiates a full test run; it uses the Rolling Build trigger. That is, if the fast Gated Check-in build succeeds, the Rolling Build starts. The peculiarity of this trigger is that at most one such build runs at a time.

This build starts the same way as the previous one: the latest versions of the test and main projects are built, and then the unit tests run.

If everything goes well, specially written PowerShell scripts are launched that deploy the package of built services to Azure. Deployment is managed through the Azure REST API. The deployment itself goes to the intermediate (staging) deployment of a cloud service. If the deployment succeeds and the role starts without errors, the configuration verification scripts are run. If all is well, the deployments are swapped (the intermediate deployment becomes the main one) and the integration tests themselves are launched.

After the tests have run, an email containing a report on the status of the tests is sent to the entire team.

Before writing tests, whether classic unit tests or integration tests, I recommend reading the books The Art of Unit Testing and xUnit Test Patterns: Refactoring Test Code.

These books (I personally love the first one) contain a large number of tips and tricks on how tests should and should not be written in any xUnit framework.

I would like to end the article by listing the basic principles our team follows when writing automated test code:

- A "friendly" CLR name for the test method. The main idea is that by looking at the name of the test alone, a programmer can understand what it checks, with what data, and what result is expected. The name of the test should consist of 3 parts separated by underscores and look something like this: FunctionalUnderTest_Conditions_ExpectedResult

- A strict Arrange-Act-Assert structure: data preparation, an action, and verification. The point is that tests should have the simplest and most compact structure possible, essentially consisting of these 3 steps. This makes tests readable and easy to maintain and debug.

- Tests should not contain conditional logic or loops. This hardly needs comment: if we had such tests, we would have to write tests for the tests.

- One test, one check. People often put a bunch of Asserts into a test and then cannot tell which one the test failed on. Try to make one check per test; this greatly simplifies debugging and troubleshooting. I will add that in some cases, after a single action, you need to check the state of several objects, and the state of each is characterized by many properties. In this case, it is advisable to write two tests and to check the properties in separate helper methods with meaningful names, so that you call your own custom assert instead of Assert.IsTrue. Also, when writing property checks, try to write readable error messages. This is just one example; in fact, there are tons of different patterns.

- Tests, even integration ones, should be quick. Try to write them with this in mind. I have seen many tests containing calls like Thread.Sleep(60000). Avoid this. If you are waiting for an asynchronous action to complete, figure out how to track its execution (for example, the asynchronous process changes its state in the database). Or maybe it is worth splitting the test into several?

- Thread safety. You may not need it yet, but keep your tests thread-safe. In the future you will most likely want to speed up test execution, and multithreading is one of the main ways to achieve that.

- Independence. Tests should not depend on the order of their execution or on each other. Write tests with this principle in mind. Try to clean up test data, because leftover data often leads to unforeseen consequences and slows down test execution.
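To illustrate the advice about avoiding fixed delays such as Thread.Sleep(60000), here is a minimal sketch of a polling helper that returns as soon as a condition becomes true. The `Wait.Until` name and its parameters are assumptions for this example, not something from our code base.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

public static class Wait
{
    // Polls the condition until it returns true or the timeout elapses.
    // Returns true if the condition was met, false if the wait timed out.
    public static bool Until(Func<bool> condition, TimeSpan timeout, TimeSpan pollInterval)
    {
        var stopwatch = Stopwatch.StartNew();
        while (true)
        {
            if (condition())
            {
                return true;
            }
            if (stopwatch.Elapsed >= timeout)
            {
                return false;
            }
            // A short pause between checks instead of one long fixed sleep.
            Thread.Sleep(pollInterval);
        }
    }
}
```

In a test, the result would typically be passed to an assert with a readable message, for example `Assert.IsTrue(Wait.Until(() => storage.GetStatus(id) == "Completed", TimeSpan.FromSeconds(60), TimeSpan.FromMilliseconds(500)), "Process did not complete within 60 seconds")`, where `storage.GetStatus` is a hypothetical call that reads the state of the asynchronous process. The test still tolerates a slow run, but finishes as soon as the state changes.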

And finally, I want to add that good tests really do make development faster and the code better.