Analysis of the Linux kernel boot process

- Translation

Hello!

While Leonid prepares for his first open lesson in our “Linux Administrator” course, we continue our discussion of how the Linux kernel boots.

Go!

Understanding a system that is functioning well is great preparation for dealing with the inevitable failures

The oldest joke in open source software is the claim that “the code documents itself”. Experience shows that reading the source code is like listening to the weather forecast: sensible people still go outside and look at the sky. What follows are some tips for inspecting and observing Linux systems at boot using familiar debugging tools. Analyzing the boot process of a system that works well prepares users and developers to deal with the inevitable failures.

In some ways, the boot process is surprisingly simple. The kernel starts up single-threaded and synchronous on a single core, which seems almost comprehensible even to the pitiful human mind. But how does the kernel itself get started? What functions do the initrd (initial ramdisk) and bootloaders perform? And wait, why is the LED on the Ethernet port always on?

Read on for answers to these and other questions; the code for the described demos and exercises is also available on GitHub.

The beginning of boot: the OFF state

Wake-on-LAN

The OFF state means that the system has no power, right? The apparent simplicity is deceptive. For example, the Ethernet LED is lit even in this state because wake-on-LAN (WOL) is enabled on your system. To check whether this is the case, run:

Where instead may be, for example, eth0 (ethtool is in Linux packages with the same name). If the “wake-on” in the output shows g, remote hosts can boot the system by sending MagicPacket . If you do not want to remotely turn on your system yourself and give this opportunity to others, turn off WOL in the system BIOS menu, or using:

A processor that responds to MagicPacket can be a Baseboard Management Controller (BMC) or part of a network interface.

The Intel Management Engine, Platform Controller Hub and Minix

BMC are not the only microcontroller (MCU) that can “listen” to a nominally turned off system. On x86_64 systems, there is the Intel Management Engine (IME) software package for remote system management. A wide range of devices, from servers to laptops, have technology that has features such as KVM Remote Control or Intel Capability Licensing Service. According to Inte l's own tool , IME has unpatched vulnerabilities.Bad news - disable IME is difficult. Trammell Hudson created the me_cleaner project, which erases some of the most egregious IME components, such as the embedded web server, but at the same time there is a chance that using the project will turn the system on which it is running into a brick.

The IME firmware and the System Management Mode (SMM) program, which follows it on boot, are based on the Minix operating system and run on a separate Platform Controller Hub processor, rather than the main system CPU. Then SMM launches the Universal Extensible Firmware Interface (UEFI) program on the main processor, which has already been written about more than once . The Coreboot group launched an excitingly ambitious Google project.Non-Extensible Reduced Firmware (NERF) , which aims to replace not only UEFI, but also the early components of the Linux user space, for example, systemd. And while we are waiting for results, Linux users can purchase laptops from Purism, System76 or Dell, on which IME is disabled , plus, we can hope for laptops with a 64-bit ARM processor .

Loaders

What besides running booted spyware does boot firmware do? The task of the loader is to provide the just-enabled processor with the necessary resources to run a general-purpose operating system like Linux. During power up, there is not only virtual memory, but DRAM until the time when its controller is raised. The boot loader then turns on the power supplies and scans the buses and interfaces to find the kernel image and root filesystem. Popular boot loaders, such as U-Boot and GRUB, have support for common interfaces like USB, PCI, and NFS, as well as other more specialized embedded devices, such as NOR and NAND flash. Downloaders also interact with hardware security devices, such as the Trusted Platform Module (TPM)to establish a trust chain from the beginning of the download.

Run the U-boot bootloader in the sandbox on the build server.

The popular open source U-Boot downloader is supported by systems from Raspberry Pi to Nintendo devices, car boards and Chromebooks. There is no system log, and if something goes wrong, there may not even be a console output. To facilitate debugging, the U-Boot team provides a sandbox for testing patches on the build host or even in the Continuous Integration system. On a system where the usual development tools like Git and the GNU Compiler Collection (GCC) are installed, it’s easy to understand the U-Boot sandbox.

That's all: you launched U-Boot on x86_64 and can test tricky features, for example, repartition of dummy storage devices , TPM-based key manipulation and hot plug (hotplug) of USB devices. The U-Boot sandbox can be single-stage as part of the GDB debugger. Development using a sandbox is 10 times faster than testing by rewriting the loader onto the board, plus a “brick” sandbox can be restored by pressing Ctrl + C.

Starting the kernel

Purchasing the loading kernel

After completing his tasks, the loader switches to the kernel code that it loaded into main memory, and starts its execution, passing all the command line parameters that the user specified. What is the core program? file / boot / vmlinuz indicates that this is a bzImage. There is a extract-vmlinux tool in the Linux source tree that can be used to decompress a file:

The kernel is an Executable and Linking Format (ELF) binary file, just like the Linux user-space program. This means that we can use commands from the binutils package, such as readelf, to study it. Compare, for example, the following conclusions:

The list of sections in binary files is mostly similar.

So, the kernel has to run other ELF Linux binaries ... But how are user-space programs running? In function

Before starting a function,

ELF binary files have an interpreter, just like Bash and Python scripts. But it does not need to be clarified through

An investigation of the launch of a kernel with GDB provides an answer to this question To get started, install the kernel debugging package, which provides undiminished version

From start_kernel () to PID 1

Kernel hardware manifest: ACPI tables and device trees

When loading, the kernel needs hardware information in addition to the type of processor for which it was compiled. The instructions in the code are supplemented with configuration data that is stored separately. There are two main storage method: Device trees (Device Tree) and ACPI tables . The kernel learns from these files what hardware needs to be run on each boot.

For embedded devices, the device tree (DM) is the manifest of the installed hardware. The DU is a file that compiles at the same time as the kernel source and is usually located in / boot along with

The x86 family and many ARM64 business-level devices use an alternative Advanced Configuration and Power Interface mechanism ( ACPI , advanced configuration and power management interface). Unlike a remote control, ACPI information is stored in a virtual file system.

ACPI tables on Lenovo laptops are ready for Windows 2001.

Yes, your Linux system is ready for Windows 2001 if you want to install it. ACPI has both methods and data, in contrast to the DU, which is more similar to the hardware description language. ACPI methods continue to be active after loading. For example, if you run the acpi_listen command (from the apcid package) and then close and open the lid of the laptop, you will see that the ACPI functionality has continued to work all this time. Temporary and dynamic rewriting of ACPI tablespossible, but for permanent change you will need to interact with the BIOS menu at boot or flashing the ROM. Instead of such difficulties, you may simply need to install coreboot , a replacement for open source firmware.

From start_kernel () to user space

The code in

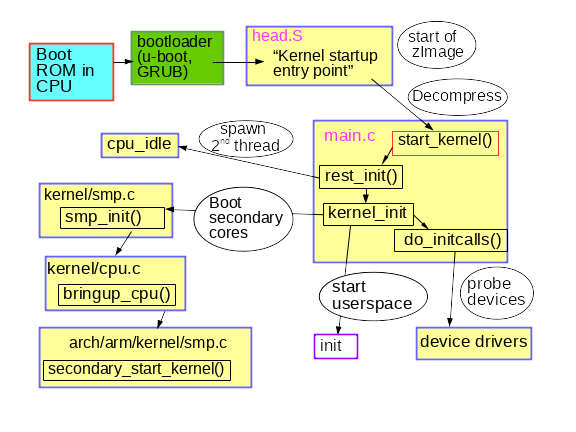

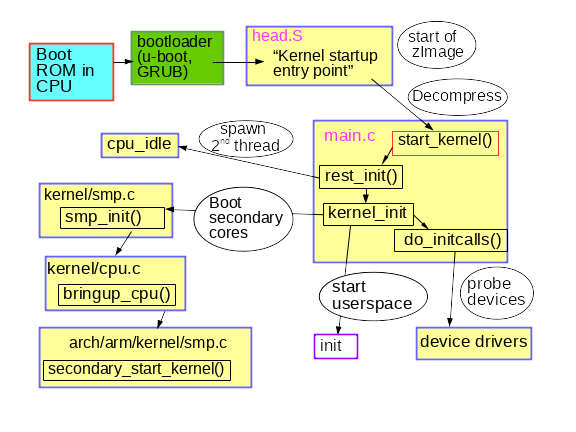

A brief description of an early kernel boot process

Modestly named

Please note that the code

where the PSR field means “processor”. Each core must have its own timers and hotplug cpuhp handlers.

And finally, how does user space run? Toward the end, is

Early user space: who ordered the initrd?

In addition to the device tree, another path to the file, optionally provided to the kernel at boot, belongs

Why try to create

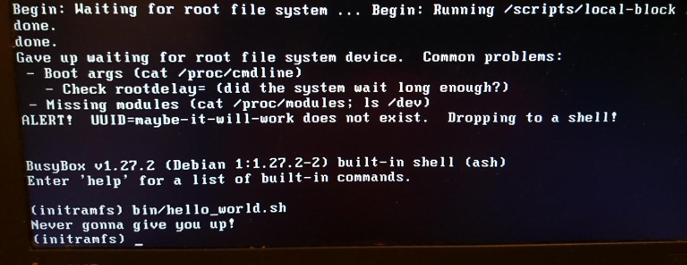

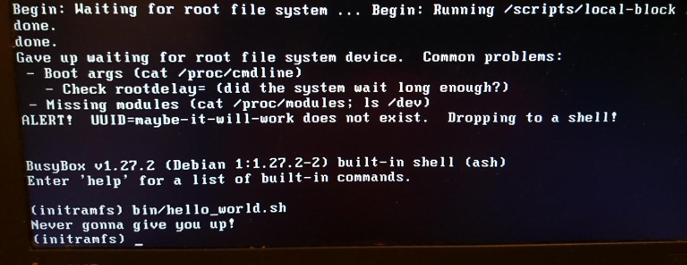

Fun with a rescue shell and custom initrd.

Finally, when it

Total

The Linux boot process sounds forbidden, given the amount of affected software, even on the simplest embedded device. On the other hand, the boot process is quite simple, since the excessive complexity caused by preemptive multitasking, RCU, and race conditions is absent here. Paying attention only to the kernel and PID 1, you can lose sight of the great work done by the loaders and auxiliary processors to prepare the platform for launching the kernel. The kernel is certainly different from other Linux programs, but using tools to work with other ELF binary files will help you better understand its structure. Examining a workable boot process will prepare for future failures.

THE END

We are waiting for your comments and question, as usual, or here, or in our open lesson.Where sweat is Leonid .

While Leonid is preparing for his first open lesson in our course “Linux Administrator” , we continue to talk about loading the Linux kernel.

Go!

Understanding the operation of a system that works without failures - preparing to eliminate inevitable breakdowns

The oldest joke in the field of open source software is the statement that “the code documents itself”. Experience shows that reading the source code is like listening to weather forecasts: intelligent people will still go out and look at the sky. The following are tips for checking and investigating the boot of Linux systems using familiar debugging tools. An analysis of the boot process of a system that works well prepares users and developers to eliminate the inevitable failures.

On the one hand, the boot process is surprisingly simple. The kernel of the operating system (kernel) runs single-threaded and synchronous on one core (core), which may seem understandable even to a pitiful human mind. But how does the kernel run itself? What functions do the initrd ( disk in memory for initial initialization) and downloaders? And wait, why is the LED on the Ethernet port always on?

Read on to get answers to these and some other questions; The code for the described demos and exercises is also available on GitHub .

Start of boot: OFF state

Wake-on-LAN

The OFF state means that the system has no power, right? The seeming simplicity is deceptive. For example, the Ethernet LED is on even in this state, because wake-on-LAN is on in your system (WOL, wake-up on [signal from] local network). Make sure to write:

$# sudo ethtool <interface name>

where <interface name> might be, for example, eth0 (ethtool is found in the Linux package of the same name). If “Wake-on” in the output shows g, remote hosts can boot the system by sending a MagicPacket. If you have no intention of waking your system remotely and do not want others to do so, turn WOL off either in the system BIOS menu or with:

$# sudo ethtool -s <interface name> wol d

The processor that responds to the MagicPacket may be the Baseboard Management Controller (BMC) or part of the network interface.
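To make the MagicPacket less mysterious, here is a minimal Python sketch of its widely documented payload: six 0xFF bytes followed by the target MAC address repeated sixteen times. The MAC address below is a placeholder, the function names are invented for this illustration, and UDP port 9 is only a common convention, so treat this as a sketch rather than a reference implementation.

```python
import socket


def build_magic_packet(mac: str) -> bytes:
    """Return the 102-byte Wake-on-LAN payload for a MAC like 'aa:bb:cc:dd:ee:ff'."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"expected 6 octets, got {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)
    # Synchronization stream (6 x 0xFF) followed by 16 copies of the MAC.
    return b"\xff" * 6 + mac_bytes * 16


def send_magic_packet(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Broadcast the payload over UDP (assumed transport; not called in this demo)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(build_magic_packet(mac), (broadcast, port))


# Placeholder MAC address, used only to show the packet layout.
packet = build_magic_packet("00:11:22:33:44:55")
print(len(packet), packet[:12].hex())
```

A network interface with “Wake-on: g” watches for exactly this byte pattern, which is why a machine that is nominally off can still react to it.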

The Intel Management Engine, Platform Controller Hub and Minix

The BMC is not the only microcontroller (MCU) that may be listening when the system is nominally off. x86_64 systems also include the Intel Management Engine (IME) software suite for remote system management. A wide range of devices, from servers to laptops, includes this technology, which enables features such as KVM Remote Control and Intel Capability Licensing Service. According to Intel's own tool, the IME has unpatched vulnerabilities. The bad news is that disabling the IME is difficult. Trammell Hudson created the me_cleaner project, which wipes some of the most egregious IME components, such as the embedded web server, but it may also brick the system on which it is run.

The IME firmware and the System Management Mode (SMM) software that follows it at boot are based on the Minix operating system and run on the separate Platform Controller Hub processor rather than on the main system CPU. The SMM then launches the Unified Extensible Firmware Interface (UEFI) software, about which much has already been written, on the main processor. The Coreboot group at Google has started the breathtakingly ambitious Non-Extensible Reduced Firmware (NERF) project, which aims to replace not only UEFI but also early Linux user-space components such as systemd. While we wait for the results, Linux users can buy laptops from Purism, System76, or Dell with the IME disabled, and we can hope for laptops with 64-bit ARM processors.

Bootloaders

Besides starting buggy spyware, what does early boot firmware do? The job of a bootloader is to give a newly powered processor the resources it needs to run a general-purpose operating system like Linux. At power-on there is not only no virtual memory but also no DRAM until its controller is brought up. The bootloader then turns on power supplies and scans buses and interfaces to locate the kernel image and the root filesystem. Popular bootloaders such as U-Boot and GRUB support familiar interfaces like USB, PCI, and NFS, as well as more embedded-specific devices such as NOR and NAND flash. Bootloaders also interact with hardware security devices such as the Trusted Platform Module (TPM) to establish a chain of trust from the earliest stage of boot.

Running the U-Boot bootloader in the sandbox on the build host.

The popular open source U-Boot bootloader is supported on systems ranging from Raspberry Pi to Nintendo devices, automotive boards, and Chromebooks. There is no system log, and when something goes wrong there is often no console output either. To make debugging easier, the U-Boot team provides a sandbox in which patches can be tested on the build host or even in a continuous integration system. On a system with common development tools such as Git and the GNU Compiler Collection (GCC) installed, playing with the U-Boot sandbox is relatively simple:

$# git clone git://git.denx.de/u-boot; cd u-boot

$# make ARCH=sandbox defconfig

$# make; ./u-boot

=> printenv

=> help

That's it: you are running U-Boot on x86_64 and can test tricky features such as repartitioning of mock storage devices, TPM-based secret-key manipulation, and hotplug of USB devices. The U-Boot sandbox can even be single-stepped under the GDB debugger. Development with the sandbox is 10 times faster than testing by reflashing the bootloader onto a board, and a “bricked” sandbox can be recovered with Ctrl+C.

Starting the kernel

Provisioning a booting kernel

Upon completing its tasks, the bootloader jumps to the kernel code that it has loaded into main memory and begins executing it, passing along any command-line options that the user specified. What kind of program is the kernel? Running file /boot/vmlinuz shows that it is a bzImage. The Linux source tree contains an extract-vmlinux tool that can be used to decompress the file:

$# scripts/extract-vmlinux /boot/vmlinuz-$(uname -r) > vmlinux

$# file vmlinux

vmlinux: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), statically linked, stripped

The kernel is an Executable and Linking Format (ELF) binary, just like Linux user-space programs. This means we can use commands from the binutils package, such as readelf, to inspect it. Compare, for example, the output of:

$# readelf -S /bin/date

$# readelf -S vmlinux

The list of sections in the two binaries is largely the same.
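What readelf and file report starts with the fixed ELF header that both binaries share. The following Python sketch decodes that header for 64-bit little-endian files, using the field layout from the ELF specification. To stay self-contained it runs on a synthetic header built in memory; the helper names, the entry address, and the section count are invented for this illustration.

```python
import struct

# e_type values defined by the ELF specification.
ELF_TYPES = {1: "relocatable", 2: "executable", 3: "shared object", 4: "core"}
# ELF64 header fields that follow the 16-byte e_ident array (48 bytes).
ELF64_REST = struct.Struct("<HHIQQQIHHHHHH")


def describe_elf(header: bytes) -> dict:
    """Decode the fixed 64-byte header of a 64-bit little-endian ELF file."""
    if len(header) < 64 or header[:4] != b"\x7fELF":
        raise ValueError("not an ELF file")
    if header[4] != 2 or header[5] != 1:
        raise ValueError("this sketch handles only 64-bit little-endian ELF")
    fields = ELF64_REST.unpack_from(header, 16)
    return {
        "type": ELF_TYPES.get(fields[0], "unknown"),
        "machine": fields[1],          # 62 is EM_X86_64
        "entry": fields[3],            # virtual address where execution starts
        "program_headers": fields[9],
        "section_headers": fields[11],
    }


def synthetic_header(e_type: int, entry: int, shnum: int) -> bytes:
    """Build a made-up ELF64 header so the demo needs no files on disk."""
    ident = b"\x7fELF" + bytes([2, 1, 1, 0]) + bytes(8)
    rest = ELF64_REST.pack(e_type, 62, 1, entry, 64, 0, 0, 64, 56, 0, 64, shnum, 0)
    return ident + rest


# Illustrative values only: an "executable" like vmlinux, a "shared object" like /bin/date.
print(describe_elf(synthetic_header(2, 0x1000000, 40)))
print(describe_elf(synthetic_header(3, 0x4000, 28)))
```

To try it on real binaries, pass the first 64 bytes of a file instead, for example `describe_elf(open("vmlinux", "rb").read(64))`.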

So the kernel must start up much like other Linux ELF binaries... but how do user-space programs actually start? In the main() function, right? Not exactly.

Before main() can run, a program needs an execution context that includes heap and stack memory plus file descriptors for stdin, stdout, and stderr. User-space programs obtain these resources from the standard library (glibc on most Linux systems). Consider the following:

$# file /bin/date

/bin/date: ELF 64-bit LSB shared object, x86-64, version 1 (SYSV), dynamically linked, interpreter /lib64/ld-linux-x86-64.so.2, for GNU/Linux 2.6.32, BuildID[sha1]=14e8563676febeb06d701dbee35d225c5a8e565a, stripped

ELF binaries have an interpreter, just as Bash and Python scripts do, but it does not need to be specified with #! as in scripts, because ELF is Linux's native format. The ELF interpreter provisions a binary with the resources it needs by calling _start(), a function available in the glibc source package that can be inspected with GDB. The kernel obviously has no interpreter and must provision itself, but how?

Inspecting the kernel's startup with GDB gives the answer. First, install the kernel debug package, which contains an unstripped version of vmlinux, for example with apt-get install linux-image-amd64-dbg, or compile and install your own kernel from source, for example by following the instructions in the excellent Debian Kernel Handbook. Running gdb vmlinux followed by info files shows the ELF section init.text. List the start of program execution in init.text with l *(address), where address is the hexadecimal start of init.text. GDB will indicate that the x86_64 kernel starts up in the kernel file arch/x86/kernel/head_64.S, where we find the assembly function start_cpu0() and code that explicitly creates a stack and decompresses the zImage before calling the x86_64 start_kernel() function. 32-bit ARM kernels have a similar arch/arm/kernel/head.S. start_kernel() is not architecture-specific, so the function lives in the kernel's init/main.c. start_kernel() is arguably Linux's true main() function.

From start_kernel() to PID 1

Kernel hardware manifest: ACPI tables and device trees

At boot, the kernel needs information about the hardware beyond the processor type for which it was compiled. The instructions in the code are supplemented by configuration data that is stored separately. There are two main methods of storing this data: device trees and ACPI tables. The kernel learns what hardware it must run at each boot by reading these files.

For embedded devices, the device tree is the manifest of the installed hardware. The device tree is simply a file that is compiled at the same time as the kernel source and is usually located in /boot alongside vmlinux. To see what is in the binary device tree on an ARM device, just use the strings command from the binutils package on a file whose name matches /boot/*.dtb, since dtb stands for device-tree binary. The device tree can be modified by editing the JSON-like files that compose it and rerunning the special dtc compiler provided with the kernel source. The device tree is a static file whose path is usually passed to the kernel by the bootloader on the command line, but in recent years a device-tree overlay facility has been added that lets the kernel dynamically load additional fragments in response to hotplug events after boot.

The x86 family and many enterprise-grade ARM64 devices use the alternative Advanced Configuration and Power Interface (ACPI) mechanism. Unlike the device tree, ACPI information is stored in the /sys/firmware/acpi/tables virtual filesystem, which the kernel creates at boot by accessing onboard ROM. The easy way to read the ACPI tables is the acpidump command from the acpica-tools package. Here is an example:

ACPI tables on Lenovo laptops are all set for Windows 2001.

Yes, your Linux system is ready for Windows 2001, should you care to install it. ACPI has both methods and data, unlike the device tree, which is more of a hardware-description language. ACPI methods remain active after boot. For example, if you run the acpi_listen command (from the acpid package) and then close and open the laptop lid, you will see that ACPI functionality is running the whole time. Temporarily and dynamically overwriting the ACPI tables is possible, but changing them permanently involves interacting with the BIOS menu at boot or reflashing the ROM. If you are going to that much trouble, perhaps you should simply install coreboot, the open source firmware replacement.
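Each ACPI table begins with the same 36-byte header, so a few lines of Python are enough to identify a table and verify its checksum. The sketch below follows the System Description Table header layout from the ACPI specification. It operates on a synthetic table built in memory; the helper names, the OEM strings, and the payload bytes are made up for this illustration.

```python
import struct

# Signature, Length, Revision, Checksum, OEMID, OEM Table ID,
# OEM Revision, Creator ID, Creator Revision (36 bytes in total).
SDT_HEADER = struct.Struct("<4sIBB6s8sI4sI")


def parse_acpi_header(table: bytes) -> dict:
    """Decode the common ACPI table header and validate the checksum."""
    if len(table) < SDT_HEADER.size:
        raise ValueError("table is shorter than the 36-byte header")
    signature, length, revision, _checksum, oem_id = SDT_HEADER.unpack_from(table)[:5]
    # All bytes of a valid table, checksum byte included, sum to 0 modulo 256.
    checksum_ok = len(table) >= length and sum(table[:length]) % 256 == 0
    return {
        "signature": signature.decode("ascii"),
        "length": length,
        "revision": revision,
        "oem_id": oem_id.decode("ascii").rstrip("\x00 "),
        "checksum_ok": checksum_ok,
    }


def synthetic_table(signature: bytes, oem_id: bytes, payload: bytes) -> bytes:
    """Build a made-up table with a correct checksum for the demo."""
    length = SDT_HEADER.size + len(payload)
    raw = bytearray(
        SDT_HEADER.pack(signature, length, 1, 0, oem_id, b"EXAMPLE", 1, b"DEMO", 1)
        + payload
    )
    raw[9] = -sum(raw) % 256  # the checksum byte lives at offset 9
    return bytes(raw)


table = synthetic_table(b"DSDT", b"DEMO", b"\x01\x02\x03")
print(parse_acpi_header(table))
```

On a real system the same function could be pointed at a file under /sys/firmware/acpi/tables, which typically requires root privileges to read.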

From start_kernel() to user space

The code in init/main.c is surprisingly readable and, amusingly, still carries Linus Torvalds' original copyright from 1991-1992. The lines found in dmesg | head on a freshly booted system originate mostly from this source file. The first CPU is registered with the system, global data structures are initialized, and the scheduler, interrupt handlers (IRQs), timers, and console are brought online one by one in strict order. Until timekeeping_init() runs, all timestamps are zero. This part of kernel initialization is synchronous, meaning that execution occurs in exactly one thread and no function is executed until the previous one completes and returns. As a result, the dmesg output is completely reproducible, even between two systems, as long as they have the same device tree or ACPI tables. At this stage Linux behaves like one of the real-time operating systems (RTOS) that run on MCUs, such as QNX or VxWorks. This situation persists into the function rest_init(), which start_kernel() calls at its end.
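The zero timestamps make it easy to spot where timekeeping comes up in a boot log. The following Python sketch finds the first line whose timestamp is non-zero, assuming the default dmesg prefix of the form `[seconds.microseconds]`. The sample lines are placeholders written for this illustration, not real kernel messages.

```python
import re

# Default dmesg prefix: "[    0.000000] message text" (assumed format).
DMESG_LINE = re.compile(r"^\[\s*(\d+\.\d+)\]\s?(.*)$")


def first_nonzero_timestamp(lines):
    """Return (index, seconds, message) for the first line stamped after 0.0."""
    for index, line in enumerate(lines):
        match = DMESG_LINE.match(line)
        if match and float(match.group(1)) > 0.0:
            return index, float(match.group(1)), match.group(2)
    return None  # every timestamp was zero or no line matched the format


# Placeholder log lines; real input would come from `dmesg`.
SAMPLE = [
    "[    0.000000] first placeholder message",
    "[    0.000000] second placeholder message",
    "[    0.004000] third placeholder message",
]

print(first_nonzero_timestamp(SAMPLE))
```

Everything before the returned index was logged before the kernel had a working clock, which on a real log corresponds to the earliest part of start_kernel().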

A brief description of an early kernel boot process

The rather modestly named rest_init() spawns a new thread that runs kernel_init(), which in turn invokes do_initcalls(). Users can watch initcalls in action by appending initcall_debug to the kernel command line, which produces a dmesg entry every time an initcall function runs. initcalls pass through eight sequential levels: early, core, postcore, arch, subsys, fs, device, and late. The part of the initcalls most visible to users is the probing and setup of all the processors' peripherals: buses, network, storage, displays, and so on, accompanied by the loading of their kernel modules. rest_init() also spawns a second thread on the boot processor, which begins by running cpu_idle() while it waits for the scheduler to assign it work.

kernel_init() also sets up symmetric multiprocessing (SMP). In recent kernels, this point can be found in the dmesg output by looking for the line “Bringing up secondary CPUs ...”. SMP proceeds by “hotplugging” CPUs, meaning that it manages their lifecycle with a state machine that is notionally similar to the one used for devices such as hotplugged USB sticks. The kernel's power-management system frequently takes individual cores offline and wakes them as needed, so the same CPU hotplug code is called over and over on a machine that is not busy. You can observe the power-management system's invocation of CPU hotplug with the BCC tool called offcputime.py.

Note that the code in init/main.c has nearly finished executing by the time smp_init() runs: the boot processor has completed most of the one-time initialization that the other cores need not repeat. Nonetheless, per-CPU threads must be spawned for each core to manage interrupts (IRQs), workqueues, timers, and power events on each one. For example, see the per-CPU threads that service softirqs and workqueues with the ps -o psr command.

$# ps -o pid,psr,comm $(pgrep ksoftirqd)

PID PSR COMMAND

7 0 ksoftirqd/0

16 1 ksoftirqd/1

22 2 ksoftirqd/2

28 3 ksoftirqd/3

$# ps -o pid,psr,comm $(pgrep kworker)

PID PSR COMMAND

4 0 kworker/0:0H

18 1 kworker/1:0H

24 2 kworker/2:0H

30 3 kworker/3:0H

[ . . . ]

where the PSR field stands for “processor”. Each core must also host its own timers and cpuhp hotplug handlers.
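The ps listings above can be checked mechanically. This Python sketch groups the threads by the PSR column and confirms that each per-CPU thread runs on the core named in its command. The input is the output quoted above; the naming pattern (`ksoftirqd/N`, `kworker/N:...`) is an assumption drawn from that output, and threads whose names do not follow it are skipped.

```python
import re

# The two ps outputs shown above, concatenated.
PS_OUTPUT = """\
PID PSR COMMAND
7 0 ksoftirqd/0
16 1 ksoftirqd/1
22 2 ksoftirqd/2
28 3 ksoftirqd/3
PID PSR COMMAND
4 0 kworker/0:0H
18 1 kworker/1:0H
24 2 kworker/2:0H
30 3 kworker/3:0H
"""

# Per-CPU threads carry their core number right after the slash.
PER_CPU_NAME = re.compile(r"^(ksoftirqd|kworker)/(\d+)")


def threads_by_cpu(ps_text: str) -> dict:
    """Map each PSR value to a list of (pid, command, runs_on_named_core)."""
    result = {}
    for line in ps_text.splitlines():
        fields = line.split(None, 2)
        if len(fields) != 3 or not fields[0].isdigit():
            continue  # header or malformed row
        pid, psr, comm = int(fields[0]), int(fields[1]), fields[2]
        match = PER_CPU_NAME.match(comm)
        if match is None:
            continue  # not a per-CPU thread by this naming pattern
        result.setdefault(psr, []).append((pid, comm, int(match.group(2)) == psr))
    return result


for cpu, threads in sorted(threads_by_cpu(PS_OUTPUT).items()):
    print(cpu, threads)
```

Every row in the quoted output reports a PSR equal to the core number in the thread name, which is the per-core arrangement the text describes.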

And finally, how does user space start? Near its end, kernel_init() looks for an initrd that can execute the init process on its behalf. If it finds none, the kernel executes init directly. Why, then, might one want an initrd?

Early user space: who ordered the initrd?

Besides the device tree, another file path optionally provided to the kernel at boot is that of the initrd. The initrd often lives in /boot alongside the bzImage file vmlinuz on x86, or alongside the similar uImage and device tree on ARM. The contents of the initrd can be listed with the lsinitramfs tool, which is part of the initramfs-tools-core package. A distribution's initrd image contains minimal /bin, /sbin, and /etc directories along with kernel modules and some files in /scripts. All of this should look fairly familiar, since the initrd is for the most part simply a minimal Linux root filesystem. The similarity is a bit deceptive, because nearly all the executables in /bin and /sbin inside the ramdisk are symlinks to the BusyBox binary, which makes the /bin and /sbin directories 10 times smaller than glibc's.

Why bother to create an initrd if all it does is load some modules and then start init on the regular root filesystem? Consider an encrypted root filesystem. Decryption may rely on loading a kernel module that is stored in /lib/modules on the root filesystem... and, unsurprisingly, in the initrd as well. The crypto module could be statically compiled into the kernel instead of being loaded from a file, but there are several reasons not to do so. For example, statically compiling the kernel with modules could make it too large to fit in the available storage, or static compilation might violate the terms of a software license. Unsurprisingly, storage, network, and human input device (HID) drivers may also be present in the initrd: basically any code that is not part of the kernel proper but is needed to mount the root filesystem. The initrd is also a place where users can stash their own custom ACPI table code.
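To see why an initrd resembles a small root filesystem, it helps to look at the container format. The Python sketch below lists the entries of an uncompressed cpio archive in the “newc” format, on the assumption that this is what an initramfs image contains once decompressed. It builds a tiny archive in memory so that it stays self-contained; the file names and contents are hypothetical.

```python
MAGIC = b"070701"            # cpio "newc" magic
HEADER_LEN = 6 + 13 * 8      # magic plus thirteen 8-digit hex fields


def pad4(length: int) -> int:
    """Number of NUL bytes needed to reach the next 4-byte boundary."""
    return -length % 4


def newc_entry(name: str, data: bytes = b"", mode: int = 0o100644) -> bytes:
    """Encode one archive member (used here only to build demo input)."""
    name_bytes = name.encode() + b"\x00"
    # ino, mode, uid, gid, nlink, mtime, filesize, devmajor, devminor,
    # rdevmajor, rdevminor, namesize, check
    fields = [0, mode, 0, 0, 1, 0, len(data), 0, 0, 0, 0, len(name_bytes), 0]
    out = MAGIC + b"".join(b"%08X" % value for value in fields) + name_bytes
    out += b"\x00" * pad4(len(out))
    out += data
    return out + b"\x00" * pad4(len(out))


def list_newc(archive: bytes) -> list:
    """Return (name, size) for every member before the TRAILER!!! entry."""
    entries, offset = [], 0
    while offset + HEADER_LEN <= len(archive):
        header = archive[offset:offset + HEADER_LEN]
        if header[:6] != MAGIC:
            raise ValueError(f"bad magic at offset {offset}")
        fields = [int(header[6 + 8 * i:14 + 8 * i], 16) for i in range(13)]
        filesize, namesize = fields[6], fields[11]
        name_start = offset + HEADER_LEN
        name = archive[name_start:name_start + namesize - 1].decode()
        if name == "TRAILER!!!":
            break
        entries.append((name, filesize))
        data_start = name_start + namesize
        data_start += pad4(data_start)
        offset = data_start + filesize
        offset += pad4(offset)
    return entries


# Hypothetical contents, loosely modeled on the directories named above.
demo = (
    newc_entry("bin", mode=0o040755)
    + newc_entry("bin/busybox", b"\x7fELF")
    + newc_entry("init", b"#!/bin/sh\n")
    + newc_entry("TRAILER!!!", mode=0)
)
print(list_newc(demo))
```

Real distribution images are usually compressed and may consist of several concatenated archives, which is why a dedicated tool such as lsinitramfs is the practical choice.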

Fun with a rescue shell and custom initrd.

An initrd is also great for testing filesystems and storage devices themselves. Stash the test tools in the initrd and run your tests from memory rather than from the object under test.

At last, when init runs, the system is up! Since the secondary processors are now running, the machine has become the asynchronous, preemptible, unpredictable, high-performance creature we all know and love. Indeed, ps -o pid,psr,comm -p 1 is liable to show that the user-space init process is no longer running on the boot processor.

Summary

The Linux boot process sounds forbidding, considering how many different pieces of software participate even on a simple embedded device. Looked at differently, the boot process is rather simple, since the bewildering complexity caused by features such as preemption, RCU, and race conditions is absent during boot. Focusing only on the kernel and PID 1 overlooks the large amount of work that bootloaders and auxiliary processors do to prepare the platform for the kernel to run. The kernel is certainly unique among Linux programs, but some insight into its structure can be gained by applying to it the same tools used to inspect other ELF binaries. Studying the boot process while it works well prepares you for failures when they come.

THE END

As usual, we look forward to your comments and questions, either here or at our open lesson, where Leonid will be in the hot seat.