OpenFlow: current status, prospects, problems

Revolutionary technologies are always a gamble: will it take off or not, will it catch on or fade away, will everyone have it or will it be forgotten in six months? We ask the same questions every time we meet something really new, but it is one thing when we are talking about yet another social network or an interface trend, and quite another when it comes to an industry standard.

OpenFlow, the control protocol between controllers and switches and an integral part of the SDN concept, appeared relatively long ago by the standards of the computer industry: on December 31 the first version of the standard will celebrate its fifth anniversary. So what did we get in the end? Let's take a look, especially since the story is an interesting one.

First, let's recall what the concept of Software Defined Networks (SDN), within which OpenFlow is used, actually implies.

Architecture

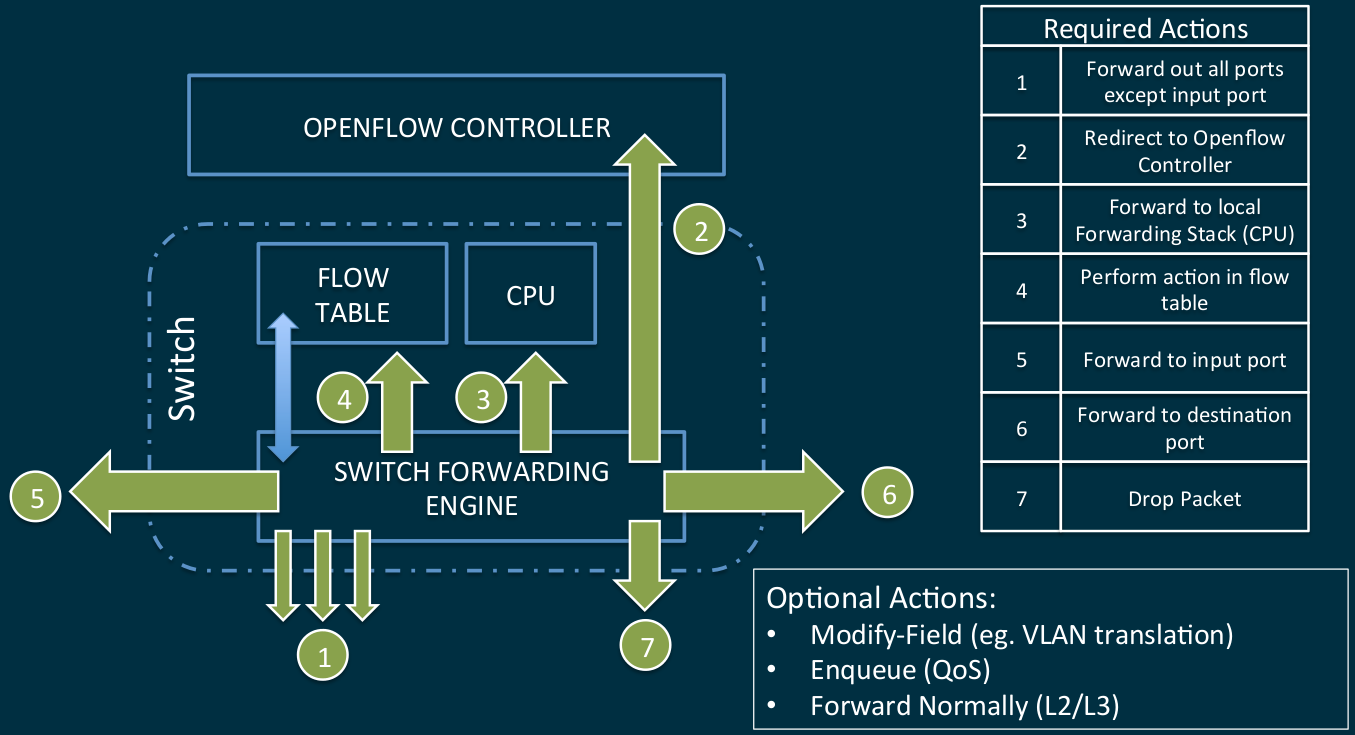

Without delving into the jungle of theory, the concept calls for separating the data plane of the network infrastructure from the control plane. As a result, relatively simple switches at the data level handle data flows according to the rules stored in their tables. The rules are set by centralized network controllers, whose task is to analyze new flows (whose packets the switches forward to them) and to work out forwarding rules for them based on the algorithms built into the controller.

In general, the sequence of actions triggered by a packet arriving at a switch port has not changed radically. Only the functional modules have changed: the controller has moved out of the switch and become centralized, and the local tables have become more complex.
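The packet-handling loop described above can be sketched in a few lines of Python. This is a toy model rather than real OpenFlow code: `ToySwitch`, `ToyController`, the field name `eth_dst` and the static MAC-to-port map are all assumptions made for illustration. It only shows the division of labor: the switch looks up its table, and on a miss it hands the packet to the controller, which returns a rule that the switch then caches.

```python
from dataclasses import dataclass


@dataclass
class FlowEntry:
    match: dict      # header field -> required value; absent fields are wildcards
    actions: list    # e.g. ["output:1"] or ["drop"]
    priority: int = 0


class ToyController:
    """Hypothetical controller: decides rules for flows it has not seen yet."""

    def packet_in(self, packet):
        # Illustrative policy only: forward by destination MAC via a static map.
        port = {"aa:aa": 1, "bb:bb": 2}.get(packet["eth_dst"])
        actions = [f"output:{port}"] if port is not None else ["drop"]
        return FlowEntry(match={"eth_dst": packet["eth_dst"]},
                         actions=actions, priority=10)


class ToySwitch:
    """Hypothetical switch: applies table rules, asks the controller on a miss."""

    def __init__(self, controller):
        self.controller = controller
        self.table = []
        self.misses = 0

    def handle(self, packet):
        # The highest-priority entry whose fields all match wins.
        for entry in sorted(self.table, key=lambda e: -e.priority):
            if all(packet.get(k) == v for k, v in entry.match.items()):
                return entry.actions
        # Table miss: send the packet to the controller and cache its answer.
        self.misses += 1
        entry = self.controller.packet_in(packet)
        self.table.append(entry)
        return entry.actions
```

In this sketch only the first packet of a flow reaches the controller; later packets of the same flow are served from the local table, which is why controller speed matters most when many new flows appear at once.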

Possible actions

The entire network infrastructure becomes highly virtualized: virtual ports and switches are managed in the same way as physical ones.

Pages of history

To be honest, the very first version of the standard, OpenFlow 1.0, released just in time for the New Year and responsible for communication between switches and controllers and, by and large, for routing data on the network, was more of an introduction than a working tool. Yes, it described basic operation under the new protocol, but it lacked so many necessary functions that real-world use was out of the question. So for the next 2.5 years the standard was refined: multiple processing tables, label support, IPv6 support, hybrid switch mode, flow rate control, and redundant communication channels between switches and controllers were added. This list alone shows how incomplete the original standard was. Only after June 25, 2012, with the arrival of version 1.3.0, can we speak of the standard being ready for use. Further development up to the current version 1.4 can be described as minor changes and bug fixes.

So, for two years now we have had a full-fledged standard, one we were told was a new paradigm for building networks: everything would be stylish, fashionable and youthful, OPEX and CAPEX would drop through the floor, and all the other things one is supposed to say in such cases. Why, then, is there still no widespread enthusiasm for adopting the new technology? There are several reasons, all from the category of “it looked smooth on paper, but we forgot about the ravines”.

Problems

1. Controllers

It is easy to guess that with such a design the choice of the OpenFlow controller becomes especially important. Its speed will be the decisive factor in any emergency, its algorithms will determine the efficiency of the entire network, and its failure will, with high probability, bring the entire network down. At first glance the choice of controllers is more than ample: OpenDaylight, NOX, POX, Beacon, Floodlight, Maestro, SNAC, Trema, Ryu, FlowER, Mul... there is even RUN OS, developed by the CPPCS guys in Russia. And that is not counting controllers from giants such as Cisco, HP and IBM.

The problem is that an open-architecture controller is, by and large, not a ready-made solution but a blank that needs a fair amount of effort to bring its capabilities up to what your task requires. For companies at the level of Google or Microsoft this is obviously not a problem. For everyone of lower rank it means keeping their own staff of programmers who are well versed in network technologies. It also takes significant time to prepare the project for launch, and it creates considerable risk, since the result is one of a kind and cannot be tested at scale over a long period.

The alternative is to buy off-the-shelf commercial controllers. But then we get exactly what everyone who chooses options starting with the word “Open” is trying to get away from: closed architectures with unknown internal logic, complete dependence on the supplier for any problem, compatibility issues, and proprietary extra functionality that, again, ties you to a specific manufacturer.

2. Architecture issues

Moving the control plane out of the switches into a separate device and centralizing management creates perhaps as many architectural issues as it solves, since it brings us back to the old debate about the merits and problems of centralized versus distributed management.

- What happens if the controller fails?

- With redundant controllers, how do they communicate with each other and decide on leadership and hand-over of functions?

- Over which channels do the controller(s) and switches communicate: along the main data transfer infrastructure or over a parallel one? What happens when the communication link has problems?

And these issues have to be resolved at the network planning stage, with no room for error.
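The first two questions can be made concrete with a small Python sketch. The master and slave roles and the two failure modes (fail secure and fail standalone) are terms from the OpenFlow specification; everything else here, including the `Controller` class, the "first live controller wins" election and the returned strings, is a simplifying assumption. Real controller clusters run their own election protocols.

```python
from dataclasses import dataclass


@dataclass
class Controller:
    name: str
    alive: bool = True
    role: str = "slave"


def elect_master(controllers):
    """Promote the first live controller (list order = preference), demote the rest.

    Hypothetical election rule, chosen only to keep the sketch short.
    """
    master = None
    for c in controllers:
        if c.alive and master is None:
            c.role = "master"
            master = c
        else:
            c.role = "slave"
    return master


def switch_behaviour(controllers, fail_mode="secure"):
    """Describe what a switch does given the current state of its controllers."""
    master = elect_master(controllers)
    if master is not None:
        return f"controlled by {master.name}"
    if fail_mode == "standalone":
        # Fail standalone mode: the switch acts as a legacy switch or router.
        return "standalone: fall back to legacy forwarding"
    # Fail secure mode: installed flows stay, controller-bound packets are dropped.
    return "secure: keep installed flows, drop packets destined for the controller"
```

For example, with controllers `c1` and `c2`, marking `c1` as dead makes `switch_behaviour` report `c2` as master; marking both as dead exposes the chosen failure mode, which is exactly the decision that has to be made at planning time.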

3. Compatibility

It would seem that, since we are talking about an open standard, it is enough to steer clear of inevitably proprietary solutions and everything will be fine within one version of the standard. No such luck. Because of hardware limitations, in various operating modes a fair number of field matches and even actions may simply not be supported at the level of the switch ASIC. And the saddest thing is that information at this level is extremely hard to find, since manufacturers are very reluctant to make it public. And here we move smoothly on to the next point...

4. Readiness of the switches

If we take the control plane out of the switches, they should become simpler. Well, not quite: the new protocol, or rather the huge number of match fields in its flow tables, places increased demands on the amount of memory in the switches.

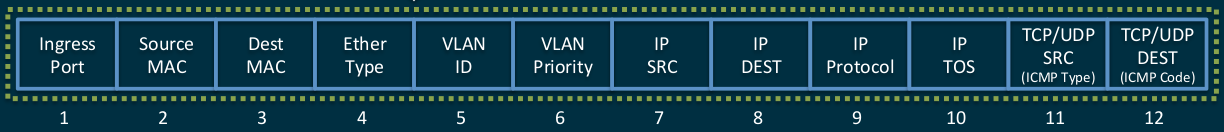

The famous basic 12-tuple of the standard

The tuple is extended with tags

Here it is worth remembering that modern high-speed switches store their tables in TCAM (Ternary Content Addressable Memory). In terms of lookup speed it is the only adequate way to store the data, and there is no getting around it if we want to switch and route at interface speed. And TCAM is quite expensive. As a result, even switches built on powerful, relatively modern ASICs sometimes support OpenFlow only nominally, offering fewer than a thousand OpenFlow entries in TCAM. In principle, one could organize multi-level storage, keeping a buffer of the most relevant entries in TCAM and putting everything else in RAM, but that requires significant changes to the switch platform.
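To illustrate both ideas from the paragraph above, here is a minimal Python sketch of a ternary (value plus mask) match and of the multi-level storage scheme, with a small "TCAM" acting as a least-recently-used buffer in front of a full rule set in "RAM". The class name `TwoTierTable`, the LRU eviction policy and the 32-bit integer keys are assumptions made for illustration. The sketch also assumes that rules do not overlap; with overlapping rules a cache would have to respect priorities, which is one reason real platforms need significant rework.

```python
from collections import OrderedDict


def ternary_match(key, value, mask):
    """TCAM-style compare: only the bits set in `mask` have to be equal."""
    return (key & mask) == (value & mask)


class TwoTierTable:
    """Small 'TCAM' with hot entries (LRU) in front of the full rule set in 'RAM'."""

    def __init__(self, tcam_size):
        self.tcam_size = tcam_size
        self.tcam = OrderedDict()   # rule_id -> (value, mask, action)
        self.ram = {}               # rule_id -> (value, mask, action)
        self.slow_lookups = 0

    def add(self, rule_id, value, mask, action):
        self.ram[rule_id] = (value, mask, action)

    def lookup(self, key):
        # Fast path: a real TCAM compares all entries in parallel.
        for rule_id, (value, mask, action) in list(self.tcam.items()):
            if ternary_match(key, value, mask):
                self.tcam.move_to_end(rule_id)   # mark as recently used
                return action
        # Slow path: search RAM and promote the hit into the TCAM buffer.
        self.slow_lookups += 1
        for rule_id, (value, mask, action) in self.ram.items():
            if ternary_match(key, value, mask):
                self.tcam[rule_id] = (value, mask, action)
                if len(self.tcam) > self.tcam_size:
                    self.tcam.popitem(last=False)  # evict least recently used
                return action
        return None
```

With `tcam_size=1` and two rules, alternating traffic between them forces a slow lookup on every switch of flow, which shows why a TCAM buffer that is too small for the working set gives little benefit.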

And that is not to mention that many ASICs are fundamentally unable to support some of the functions declared in OpenFlow, because support for them simply does not exist “in silicon”.

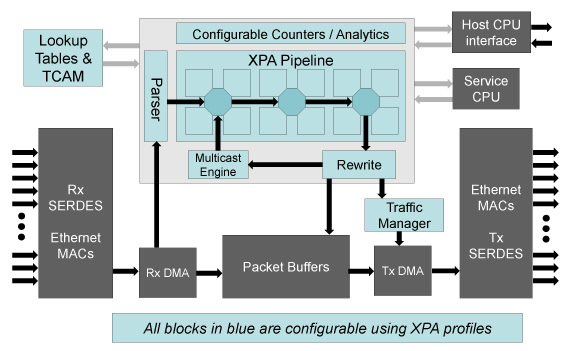

An example of fields and actions that are actually supported
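Given a list of what a particular switch really supports, checking a planned rule against it is straightforward. The Python sketch below assumes that such a list has somehow been obtained (from the vendor or from testing). The capability sets, field names and action names in it are invented for illustration and do not describe any real ASIC.

```python
def unsupported_parts(rule, switch_caps):
    """Return the match fields and actions of `rule` that the switch cannot do.

    `rule` is {"match": {field: value}, "actions": [action_name, ...]};
    `switch_caps` is {"match_fields": set, "actions": set}.
    """
    missing_fields = set(rule["match"]) - switch_caps["match_fields"]
    missing_actions = set(rule["actions"]) - switch_caps["actions"]
    return missing_fields, missing_actions


# Hypothetical capability list for an imaginary switch.
caps = {
    "match_fields": {"in_port", "eth_dst", "eth_type", "vlan_vid", "ipv4_dst"},
    "actions": {"output", "drop", "set_vlan_vid"},
}

rule = {
    "match": {"eth_type": 0x86DD, "ipv6_dst": "2001:db8::1"},
    "actions": ["push_mpls", "output"],
}

fields, actions = unsupported_parts(rule, caps)
print(sorted(fields), sorted(actions))
```

Running such a check over the whole planned rule set before buying hardware is cheap compared with discovering the gaps in production.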

Of course, this drawback is easily remedied by adding highly programmable network processors (NPUs) alongside the ASIC, but that immediately brings a noticeable increase in both power consumption and cost. And this, in turn, calls the whole undertaking into question, since instead of simpler and cheaper end devices we get exactly the opposite.

Is everything bad?

So, is everything bad, and does the new standard have no chance?

Not at all. If you do not take on faith all the promises of enthusiastic advocates of new technologies and soberly assess the capabilities of the new standard, it can already be used to your advantage.

Most of the issues discussed above can be solved at the network design stage by choosing equipment carefully. A well-chosen controller (commercial, or open but customized), a well-thought-out architecture, suitable switches (for example, the Eos420 on Broadcom Trident 2 or the Eos 410i, built on the Intel FM6764 with one of the largest TCAM tables in its class), and a workable network on OpenFlow 1.3 becomes a reality.

Don't believe this is within reach of anyone who is not a huge Corporation of Goodness? Ask Performancex, a company that has been running a stable OpenFlow network in production for a year now.

All in all, the beast called OpenFlow is not so terrible, although it is of course still far from widespread adoption. But building small, high-performance, highly specialized networks on OpenFlow is already possible without significant development effort. Moreover, such a network can offer a unique combination of performance, convenience and manageability at low cost.

Bright future?

The Intel FM6764 chip already promises programmability (for hardcore register lovers); current products from other vendors are less open.

The hardware capabilities missing from other modern ASICs are promised for the next generations, which will reach the market in 2015. Broadcom has shown such a product: its Tomahawk chip can be configured for different forwarding / match / action behavior.

In turn, Cavium promises a fully programmable XPliant chip, to which new protocols can be added as they appear while keeping line-rate processing speed.

XPliant structure

And demand for OpenFlow solutions from traditional carriers will spur progress as well.

P.S. While preparing this article we took the trouble to re-read the articles on the hub tagged SDN and OpenFlow: it is quite interesting to look at both the optimists and the pessimists from the vantage point of 2-3 years later. We will not gloat; we will only note that predicting the future is a thankless task.

P.P.S. For those interested in SDN, we recommend an article on NAG, a specialized networking resource:

nag.ru/articles/reviews/26333/neodnoznachnost-sdn.html

Both the article itself and the comments on it are interesting.