Such different blurs

I’ll talk about various implementations of the blur effect in GLSL.

First, a warning: I have limited myself to GLSL version 1.1 at most. This is necessary so that the application runs on as many devices and systems as possible. For example, I had an iMac with a Radeon HD 6750M whose highest supported GLSL version was 1.2, a laptop running Kubuntu on Intel HD 4000 with GLSL 1.3, and a desktop with a GeForce GTX 560.

I will try to describe the effects in simple words and without complex formulas; the main goal is to give examples of blurring methods. This article prepares the ground for the next two.

Gaussian blur

Let's start with the classic Gaussian blur. It has been written about almost everywhere, and it is perhaps the most popular way of blurring in game development and beyond. Still, I cannot skip it, so I will cover it at least briefly.

What is blur in general? Roughly speaking, it is the averaging of neighboring pixels: for the current pixel, we find the average color of all its neighbors within a certain radius. A simple arithmetic mean (uniform distribution) does not give a very attractive blur, though. Therefore the neighbors are usually multiplied by coefficients that follow the normal distribution (also called the Gaussian distribution, hence the name of the blur).

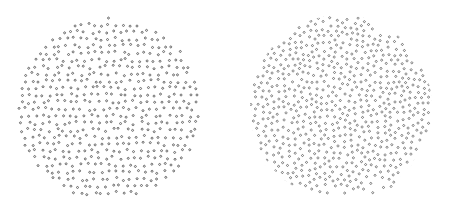

blur with uniform and normal distribution respectively

Gaussian blur has one important property: separability. This makes it possible to split the algorithm into two parts, a blur along the x coordinate and a blur along y. Thus the coefficients do not need to be calculated for all neighbors; it is enough to find them for a single row or column. The coefficients can be found from the Gaussian formula:

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}},$$

where μ is the expectation and σ² is the dispersion (variance), so σ is the standard deviation.
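As a rough illustration of how the coefficient array could be filled on the CPU, here is a minimal sketch. The function name `gaussianKernel` and the choice of `sigma` are my assumptions, not part of the original code; the result is meant for the `koeff` uniform of the fragment shader, with `kSize` taps centered on the middle one (μ = 0).

```cpp
#include <cmath>
#include <vector>

// Hypothetical helper (not from the article): builds kSize Gaussian weights
// centered on the middle tap and normalizes them so that they sum to 1.
std::vector<float> gaussianKernel(int kSize, float sigma) {
    std::vector<float> k(kSize);
    const float center = 0.5f * float(kSize - 1);
    float sum = 0.0f;
    for (int i = 0; i < kSize; ++i) {
        const float x = float(i) - center;  // distance from the central tap
        k[i] = std::exp(-(x * x) / (2.0f * sigma * sigma));
        sum += k[i];
    }
    for (float& w : k) w /= sum;  // normalization keeps the image brightness
    return k;
}
```

The constant factor 1/(σ√(2π)) is omitted because the final normalization cancels it anyway.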

The implementation of this blur is quite simple. We need two buffers of the same format and a precomputed array of blur coefficients:

- Render the scene into the first buffer.

- Render that image into the second buffer with the vertical blur shader.

- Render the result back into the first buffer with the horizontal blur shader.

Shaders

Vertex:


    #version 110

    attribute vec2 vertex;
    attribute vec2 texCoord;

    varying vec2 vTexCoord;

    void main() {
        gl_Position = vec4(vertex, 0.0, 1.0);
        vTexCoord = texCoord;
    }

Fragment:

    #version 110

    const int MAX_KOEFF_SIZE = 32; // maximum kernel size (length of the coefficient array)

    uniform sampler2D texture; // texture to blur
    uniform int kSize; // kernel size
    uniform float koeff[MAX_KOEFF_SIZE]; // coefficients
    uniform vec2 direction; // blur direction, scaled by the blur radius and aspect ratio, e.g. (0.003, 0.0) for horizontal and (0.0, 0.002) for vertical

    varying vec2 vTexCoord; // texture coordinates of the current fragment

    void main() {
        vec4 sum = vec4(0.0); // resulting color
        vec2 startDir = -0.5 * direction * float(kSize - 1); // starting point of the blur

        for (int i = 0; i < kSize; i++) // walk over all coefficients
            sum += texture2D(texture, vTexCoord + startDir + direction * float(i)) * koeff[i]; // accumulate the samples

        gl_FragColor = sum;
    }

Bokeh effect

In fact, this is the main reason for writing the article: I wanted to show how easily this effect can be obtained. The algorithm is similar to the previous one, but there are differences:

- The normal distribution can be replaced by a uniform one.

- A third pass is added.

- Blurring no longer happens vertically and horizontally, but along three vectors with an angle of 120° between them.

- In addition to the color sum, the shader now also tracks the maximum color over all samples; the two colors are then mixed in a given proportion.

Shader

The vertex shader remains the same; the fragment shader:

    #version 110

    uniform sampler2D texture; // texture to blur
    uniform vec2 direction; // blur direction; there are three in total: (0, 1), (0.866/aspect, 0.5), (0.866/aspect, -0.5); all three must be multiplied by the desired blur radius
    uniform float samples; // number of samples; a float because it is only used in floating-point arithmetic
    uniform float bokeh; // strength of the bokeh effect [0..1]

    varying vec2 vTexCoord; // input texture coordinates of the fragment

    void main() {
        vec4 sum = vec4(0.0); // resulting color
        vec4 msum = vec4(0.0); // maximum color over the samples

        float delta = 1.0 / samples; // share of the color contributed by one sample
        float di = 1.0 / (samples - 1.0); // loop increment

        for (float i = -0.5; i < 0.501; i += di) {
            vec4 color = texture2D(texture, vTexCoord + direction * i); // sample along the given direction
            sum += color * delta; // accumulate the color
            msum = max(color, msum); // track the maximum color
        }

        gl_FragColor = mix(sum, msum, bokeh); // mix the resulting color with the maximum in the given proportion
    }

blur with different bokeh coefficients: 0.2, 0.5, 0.8

I found experimentally that the best blending factor, giving a reasonably attractive effect close to the real one, is 0.5.

The disadvantages of this method are:

- One more draw call and about one third more samples compared to the previous method.

- The bokeh shape is limited to regular polygons with an even number of vertices.

- It cannot be used in the depth blur algorithm.

There are several other ways to produce this effect. Here is one of them: go through the texture with a special shader that identifies the most contrasting spots and writes their coordinates to a buffer, then blur the texture in any way without the bokeh effect, and finally render bokeh sprites on top of it at the coordinates found in the first step. The advantage of this method is that the bokeh can have any shape. The drawbacks are that it requires geometry shaders, which rules out weak devices, and that bokeh cannot be drawn for every pixel because the fill rate would be enormous.

Depth blur

The full name of the effect is depth of field (DoF), the depth of the sharply rendered space. The name speaks for itself: everything in focus is sharp, everything out of focus is blurry. At first glance the effect seems simple, but some details complicate it both in resource cost and in implementation. One drawback is that the previous approaches cannot be applied: the effect is not separable, so it cannot be split into several passes (vertical and horizontal blur). Sometimes you can cheat: first render the background and blur it, then render the foreground without blur. This is not the full effect, of course, but it gives a feeling of blur in depth.

If the scene is filled with objects throughout its depth, you have to blur it in a more “honest” way. Completely honest methods are rarely used because they need too many samples; as a rule, a small cloud of points within a certain radius of the current pixel is sampled instead. The best distribution for such points is called the Poisson disk. It differs from a completely random distribution in that the points are at approximately equal distances from each other. There are many ways to get a Poisson disk; I use this one:

- Let r be the radius of the disk; then the upper boundary of the disk is yMax = r and the lower boundary is yMin = -r.

- In a loop over yR from yMin to yMax, do the following:

  - Find xMax = cos(asin(yR / r)) * r and xMin = -xMax.

  - In a nested loop over xR from xMin to xMax, take the point with coordinates (xR, yR).

  - Shift the coordinates of this point by a random value from -r / 4 to r / 4.

- The points obtained this way are the desired ones.

Strictly speaking, this is not quite a Poisson disk, but it looks very much like one. Compare for yourself (on the left is my implementation, on the right are the points generated by this algorithm):

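C++ implementation

    // floatRand(a, b) is the author's helper (not shown here); presumably it returns a random float between a and b
    float yMax = r;
    float yMin = -r;
    yMin += fmod(yMax - yMin, 1) / 2; // center the rows between the two boundaries

    for (float y = yMin; y < yMax; y++) {
        float xMax = cos(asin(y / r)) * r; // half-width of the disk at this row
        float xMin = -xMax;
        xMin += fmod(xMax - xMin, 1) / 2; // center the points within the row

        for (float x = xMin; x < xMax; x++)
            points.append(QPoint(x + floatRand(-r / 4, r / 4), y + floatRand(-r / 4, r / 4))); // grid point with a random shift
    }

The pictures above contain a lot of points; in practice about 10-20 are enough. Once the points are generated, they can be used for sampling. But first, let's talk about the blur strength.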

Information about the blur strength of each pixel is stored in the alpha channel. The blur strength varies from -1 to 1, where an absolute value of one means maximum blur and zero means no blur. I used an HDR texture (RGBA16F), but this information can also be encoded into an ordinary 8-bit alpha channel:

a = depth * 0.5 + 0.5 (encoding)

depth = a * 2.0 - 1.0 (decoding), where depth is the blur strength in [-1..1]
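To see what an 8-bit channel does to the value, here is a small CPU sketch of the round trip. The function names are hypothetical; the quantization to 0..255 models what an 8-bit normalized texture does when written and read.

```cpp
#include <cmath>
#include <cstdint>

// Hypothetical helper: packs a signed blur strength in [-1, 1] into 8 bits.
std::uint8_t encodeBlur(float depth) {
    const float a = depth * 0.5f + 0.5f;  // map [-1, 1] to [0, 1]
    return static_cast<std::uint8_t>(std::lround(a * 255.0f));
}

// Hypothetical helper: restores the signed blur strength from 8 bits.
float decodeBlur(std::uint8_t alpha) {
    const float a = float(alpha) / 255.0f;  // back to [0, 1]
    return a * 2.0f - 1.0f;                 // map [0, 1] to [-1, 1]
}
```

The round trip keeps the value within about 1/255 of the original. Note that zero cannot be represented exactly, since it falls between the codes 127 and 128.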

The depth parameter (blur strength) can be calculated with the formula (focalDistance + zPos) / focalRange, where focalDistance is the focal distance and focalRange is the range (depth) of the blur. A negative depth means that the current object or fragment is in front of the focus; a positive one means it is behind the focus. By the way, storing the sign is not strictly necessary. Dropping it doubles the precision available for the value (which can be critical for an 8-bit texture), but then the shader cannot tell whether a fragment is in front of or behind the focus, and this may cause artifacts.
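The arithmetic of this formula, together with clamping to [-1, 1], can be tried out on the CPU. The function name `blurStrength` is hypothetical, and the sketch only mirrors the arithmetic: the sign conventions of zPos and focalDistance are whatever the scene shader uses.

```cpp
#include <algorithm>

// Hypothetical helper: signed blur strength, clamped to [-1, 1].
// Only the arithmetic is modelled; the meaning of the sign depends on the
// coordinate conventions of the scene shader.
float blurStrength(float zPos, float focalDistance, float focalRange) {
    const float depth = (focalDistance + zPos) / focalRange;
    return std::max(-1.0f, std::min(1.0f, depth));
}
```

For example, with focalDistance = 10 and focalRange = 5, a fragment at zPos = -10 gets strength 0, while zPos = -5 and zPos = -20 reach the limits 1 and -1.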

So, sampling with the Poisson disk gives us the color of each sampled pixel and how strongly it should be blurred. Remember the sample coefficients in the two previous algorithms? Here their role is played by the blur strength (its absolute value, of course). The blur strength also determines the blur radius of the current fragment. One more thing should be said about a parasitic effect of this implementation: if the depth boundary is sharp (a transition between a distant and a near object), something like moiré or a halo can appear between them; in that case we simply adjust the coefficient.

As an additional improvement, you can use a second texture, smaller in size and slightly blurred. This reduces the number of samples needed and improves the quality of the blur.

Thanks to Chaos_Optima: I forgot to mention a disadvantage of this method. It shows up at the borders of nearby objects: when they begin to blur, their edges remain sharp.

Shader

In the fragment shader of the scene render:

    float blur = clamp((focalDistance + zPos) / focalRange, -1.0, 1.0);
    gl_FragColor = vec4(color, blur);

The vertex shader of the blur pass is the same as in the previous methods; the fragment shader:

    #version 110

    const int MAX_OFFSET_SIZE = 128; // maximum size of the Poisson disk point array

    uniform sampler2D texture; // texture with the rendered scene
    uniform sampler2D lowTexture; // downscaled and blurred texture of the scene
    uniform int offsetSize; // number of Poisson disk points
    uniform vec2 offsets[MAX_OFFSET_SIZE]; // Poisson disk

    varying vec2 vTexCoord; // incoming texture coordinates

    void main() {
        float currentSize = texture2D(texture, vTexCoord).a; // remember the blur strength of the fragment
        vec4 resultColor = vec4(0.0); // resulting color

        for (int i = 0; i < offsetSize; i++) {
            vec4 highSample = texture2D(texture, vTexCoord + offsets[i] * currentSize); // take a sample
            vec4 lowSample = texture2D(lowTexture, vTexCoord + offsets[i] * currentSize);
            float sampleSize = abs(highSample.a); // blur strength of the sampled point

            highSample.rgb = mix(highSample.rgb, lowSample.rgb, sampleSize); // mix the original and blurred textures according to the blur strength
            highSample.a = highSample.a >= currentSize ? 1.0 : highSample.a; // weight correction (the contribution of this sample to the resulting color)
            sampleSize = abs(highSample.a);

            resultColor.rgb += highSample.rgb * sampleSize; // accumulate the color
            resultColor.a += sampleSize; // increase the total weight
        }

        gl_FragColor = resultColor / resultColor.a;
    }
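The weight correction is the least obvious part of the shader, so here is a single-channel CPU model of just that step. It is a simplified sketch: the low-resolution texture is left out, the `Sample` struct and the function name are hypothetical, and the guard against a zero total weight is my addition (the shader divides unconditionally).

```cpp
#include <cmath>
#include <vector>

// Hypothetical sample: one color channel plus the signed blur strength
// read from the alpha channel.
struct Sample { float color; float blur; };

// Hypothetical CPU model of the weighting loop, without lowTexture.
float weightedDof(const std::vector<Sample>& samples, float currentSize) {
    float colorSum = 0.0f;
    float weightSum = 0.0f;
    for (const Sample& s : samples) {
        // Samples at least as blurred as the current fragment get full weight;
        // the others are weighted by their own blur strength.
        const float a = (s.blur >= currentSize) ? 1.0f : s.blur;
        const float weight = std::fabs(a);
        colorSum += s.color * weight;
        weightSum += weight;
    }
    // Guard added for the sketch only; not present in the shader.
    return weightSum > 0.0f ? colorSum / weightSum : 0.0f;
}
```

With currentSize = 0.5, a sharp neighbor (blur 0) contributes nothing, so in-focus colors do not leak into a blurred fragment. With currentSize = 0 every sample passes the comparison and gets full weight.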

shader result: a blurred stick

References

- encelo.netsons.org/2008/04/15/depth-of-field-reloaded - one way to do DoF

- www.gamedev.net/topic/563149-real-time-bokeh-high-quality-dof - I realized I had reinvented the wheel after I came across this page and read the last post

- steps3d.narod.ru/tutorials/depth-of-field-tutorial.html - an implementation of DoF

- www.jasondavies.com/poisson-disc - how to generate a Poisson disk

- openglinsights.com - this book discusses a DoF implementation with the bokeh effect in GLSL 4.2