Similar searches in hh.ru

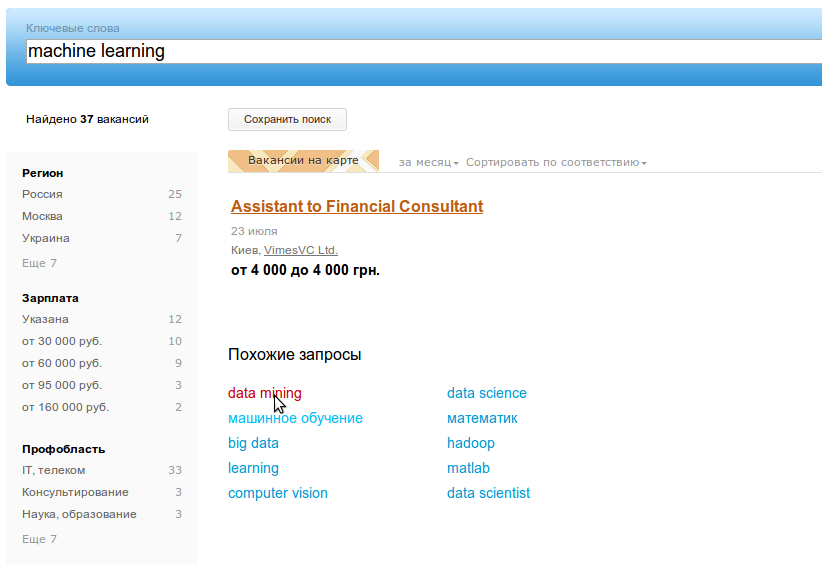

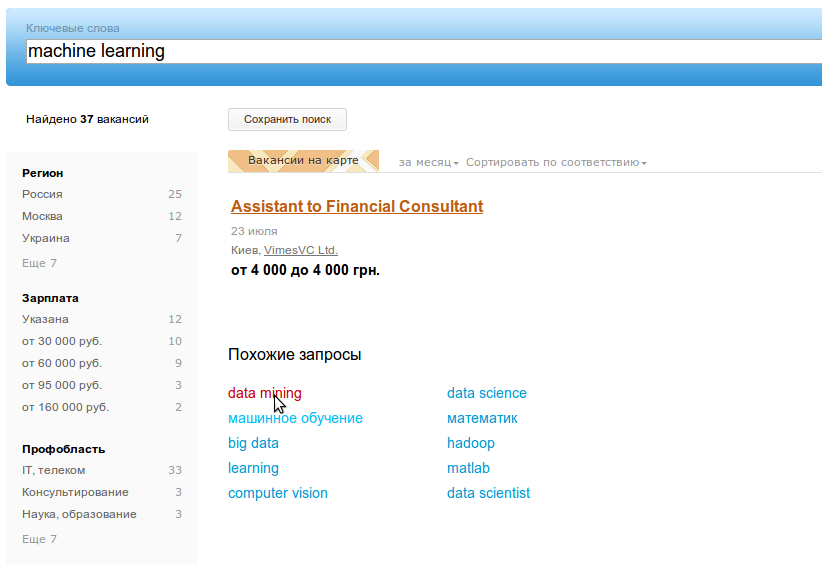

Most major search engines and services have a mechanism for similar search queries when the user is offered options that are thematically close to what he was looking for. So they do it in google, yandex, bing, amazon, a few days ago it appeared on our website too!

In this article, I will talk about how we extracted similar search queries from the hh.ru site logs.

First, a few words about what are “related searches” (related searches) and why they are needed.

We thought about this feature for a long time, but the final impetus was given by a wonderful article from LinkedIn about their implementation of similar queries - metaphor . It describes three methods for extracting such queries from the search activity of users, which we have implemented.

The simplest and most obvious of the related query retrieval algorithms is overlap or searching for intersecting queries.

We break all search phrases into tokens and find queries with common tokens. In reality, there can be a lot of such requests, so the question arises of choosing the most suitable. Following common sense, two rules can be defined: the more tokens intersect, the closer the requests, and intersection in rare tokens is more significant than in widely used ones. From here we get the formula:

where overlap is the number of common tokens in q1 and q2 requests ,

Q (t) is the number of requests from t tokens,

N is the number of unique requests.

The next way is collaborative filtering (CF). It is often used in recommendation systems and is well suited for recommending similar queries. The complex name is based on the simple assumption that during one session the user makes related queries. Moreover, to look for work, a long session can be neglected, because user preferences change slowly. It is hard to imagine that the applicant who makes the request “personal driver” today will search for vacancies “java developer” tomorrow. But this assumption is not true for employers, they can search for candidates for very different vacancies, even within one hour.

So, with collaborative filtering, for each user we find all the requests made by him for a certain period and form pairs of them. To select the most frequency pairs, we use a variation of the tf-idf formula.

where tf is the number of query pairs q1 and q2 ,

df is the number of pairs containing q2 ,

N is the number of unique queries,

c = 0.1, this value is still hardcoded, but it can be selected from user behavior.

df does not allow the most common queries like “manager” and “accountant” to appear in recommendations too often.

The third QRQ (query-result-query) algorithm is similar to CF, but we build pairs of queries not by user, but by vacancy.

Those. we consider similar requests for which they switch to the same vacancy. Having found all pairs of queries, it remains for us to choose the most suitable ones. To do this, we need to find the most popular pairs, not forgetting to lower the weight of frequency queries and vacancies. LinkedIn in its article offers the following formulas, and they give interesting results even for rare queries: for example, for “probability theory” related queries - “algorithmic trading” and “futures”.

Despite the fact that the formulas look complicated, simple things stand behind them. V is the contribution of the vacancy to the overall rank: the ratio of the number of views of the vacancy for the query q to the total number of views of this vacancy. Similarly, Q is the contribution of the request: the ratio of the number of views of the vacancy for the query q to the number of views of other vacancies for this query.

It should be noted that the last two methods are not symmetrical, i.e.

We implemented the above algorithms using hadoop and hive. Hadoop is a system for distributed storage of “big data”, cluster management and performing distributed computing. Every night, we upload there the access logs of the site over the past day, while transforming them into a convenient structure for analysis.

We also use Apache Hive, an add-on for Hadoop that allows us to formulate data queries in the form of HiveQL (SQL-like overgrowth language). HiveQL does not comply with SQL standards, but allows you to do join and subselect, contains many functions for working with strings, dates, numbers and arrays. Able to do Group By and supports various aggregate functions and window-functions. Allows you to write your map, reduce and transform functions in any programming language. For example, in order to get the most popular search queries of the day, you need to perform the following HiveQL:

Before starting the “extraction” of similar queries, they need to be cleaned from debris and normalized. We have done the following:

After such a modification, the request “tender department chief” turned into “# 0d26431546 department chief”. This can’t be shown to users, so we change this code to the most popular request form “head of the tender department”.

Then we proceed to the implementation of the algorithms described above, which consists in applying several successive transformations of the original logs. All transformations are written in HiveQL, which may not be very optimal in terms of performance, but it is simple, clear and takes a few lines of code. The figure shows data transformations for collaborative filtering. QRQ is implemented similarly.

In order not to recommend “junk” requests, we ran each request through a job search on hh.ru and threw out all for which there are less than 5 results.

The last stage of the task is to combine the results of three algorithms. We considered two options: show users three top queries from each method or try to sum the weights. We chose the second method, normalizing before adding up the weight:

And everything seemed to work out pretty well, but for some queries strange results came out: “novice specialist” -> “career manager”. Firstly, here we were summed up by stamming, which “career” and “career” leads to the same form. And secondly, it turned out that the three found weights have different distributions and it’s simply impossible to summarize them.

I had to bring them to the same scale. To do this, each weight was sorted in ascending order, numbered, and the resulting serial number was divided by the total number of query pairs for a particular algorithm. This number has become a new weight, the problem with the “career manager” has disappeared.

To find similar search queries, we used hh.ru logs for 4 months, which is 270 million searches and 1.2 billion vacancy views. In total, about 1.5 million unique text queries were made by users; after cleaning and normalizing, we left just over 70 thousand.

Already on the first day of this feature, about 50 thousand people used the correction of requests. The most popular corrections:

driver -> personal driver

administrator -> beauty salon administrator

driver -> driver with a personal car

accountant -> accountant for primary documentation

accountant -> deputy chief accountant

Corrections of popular queries in the it-area:

It was interesting to see in which professional areas this feature is most in demand:

It can be seen that more than half of the students who were looking for vacancies tried other queries. The least popular feature in IT and Consulting.

What else I would like to do:

Metaphor: A System for Related Search Recommendations

Apache Hadoop

Apache Hive

In this article, I will talk about how we extracted similar search queries from the hh.ru site logs.

First, a few words about what are “related searches” (related searches) and why they are needed.

- First, not all job seekers have a clear idea of what they want to find. Often they simply research offers in several fields of activity, entering different inquiries. This behavior is especially pronounced among students starting their careers, and among applicants without a specific specialty. For such surfing, tips for the correct queries will be useful.

- Often, applicants enter too general a request, for example, “ manager in Moscow ”, for which there are more than 18,000 vacancies. It’s difficult to choose something suitable from them, so the request needs to be narrowed down, for example, to “ building materials sales manager ”.

- Queries that are too narrow, on the contrary, can lead to empty output. For example, at the request “ trainee payroll accountant ” nothing is found, but if you correct it for a more general “ accountant trainee ”, several results appear.

- Sometimes job seekers and employers speak different languages. For example, a vacancy is called a “mixer truck driver,” and a job seeker searches for a “mixer driver”. Usually we try to solve this problem with the help of synonyms, but this is not always possible. Related searches should help the user to formulate a search in the language of employers.

- The most interesting case, in my opinion, is when the applicant knows what he wants to find and clearly formulates his request. But he may not be aware of the existing nearby field of activity that might interest him. For example, a "soil scientist" wants to do "ecology." This problem is usually solved by personal recommendations, but similar requests can lead such a specialist to interesting vacancies, “move” him to another area of job search.

We thought about this feature for a long time, but the final impetus was given by a wonderful article from LinkedIn about their implementation of similar queries - metaphor . It describes three methods for extracting such queries from the search activity of users, which we have implemented.

Ways to Identify Similar Queries

The simplest and most obvious of the related query retrieval algorithms is overlap or searching for intersecting queries.

We break all search phrases into tokens and find queries with common tokens. In reality, there can be a lot of such requests, so the question arises of choosing the most suitable. Following common sense, two rules can be defined: the more tokens intersect, the closer the requests, and intersection in rare tokens is more significant than in widely used ones. From here we get the formula:

where overlap is the number of common tokens in q1 and q2 requests ,

Q (t) is the number of requests from t tokens,

N is the number of unique requests.

The next way is collaborative filtering (CF). It is often used in recommendation systems and is well suited for recommending similar queries. The complex name is based on the simple assumption that during one session the user makes related queries. Moreover, to look for work, a long session can be neglected, because user preferences change slowly. It is hard to imagine that the applicant who makes the request “personal driver” today will search for vacancies “java developer” tomorrow. But this assumption is not true for employers, they can search for candidates for very different vacancies, even within one hour.

So, with collaborative filtering, for each user we find all the requests made by him for a certain period and form pairs of them. To select the most frequency pairs, we use a variation of the tf-idf formula.

where tf is the number of query pairs q1 and q2 ,

df is the number of pairs containing q2 ,

N is the number of unique queries,

c = 0.1, this value is still hardcoded, but it can be selected from user behavior.

df does not allow the most common queries like “manager” and “accountant” to appear in recommendations too often.

The third QRQ (query-result-query) algorithm is similar to CF, but we build pairs of queries not by user, but by vacancy.

Those. we consider similar requests for which they switch to the same vacancy. Having found all pairs of queries, it remains for us to choose the most suitable ones. To do this, we need to find the most popular pairs, not forgetting to lower the weight of frequency queries and vacancies. LinkedIn in its article offers the following formulas, and they give interesting results even for rare queries: for example, for “probability theory” related queries - “algorithmic trading” and “futures”.

Despite the fact that the formulas look complicated, simple things stand behind them. V is the contribution of the vacancy to the overall rank: the ratio of the number of views of the vacancy for the query q to the total number of views of this vacancy. Similarly, Q is the contribution of the request: the ratio of the number of views of the vacancy for the query q to the number of views of other vacancies for this query.

It should be noted that the last two methods are not symmetrical, i.e.

Implementation

We implemented the above algorithms using hadoop and hive. Hadoop is a system for distributed storage of “big data”, cluster management and performing distributed computing. Every night, we upload there the access logs of the site over the past day, while transforming them into a convenient structure for analysis.

We also use Apache Hive, an add-on for Hadoop that allows us to formulate data queries in the form of HiveQL (SQL-like overgrowth language). HiveQL does not comply with SQL standards, but allows you to do join and subselect, contains many functions for working with strings, dates, numbers and arrays. Able to do Group By and supports various aggregate functions and window-functions. Allows you to write your map, reduce and transform functions in any programming language. For example, in order to get the most popular search queries of the day, you need to perform the following HiveQL:

SELECT lower(query_all_values['text']), count(*) c

FROM access_raw

WHERE

year=2014 AND month=7 AND day=16

AND path='/search/vacancy' AND query_all_values['text'] IS NOT NULL

GROUP BY lower(query_all_values['text'])

ORDER BY c DESC LiMIT 10;

Before starting the “extraction” of similar queries, they need to be cleaned from debris and normalized. We have done the following:

- threw out long requests (more than 5 words and 100 characters),

- removed garbage characters and query grammar words,

- used stemming

- combined synonyms

- sorted the words.

After such a modification, the request “tender department chief” turned into “# 0d26431546 department chief”. This can’t be shown to users, so we change this code to the most popular request form “head of the tender department”.

Then we proceed to the implementation of the algorithms described above, which consists in applying several successive transformations of the original logs. All transformations are written in HiveQL, which may not be very optimal in terms of performance, but it is simple, clear and takes a few lines of code. The figure shows data transformations for collaborative filtering. QRQ is implemented similarly.

In order not to recommend “junk” requests, we ran each request through a job search on hh.ru and threw out all for which there are less than 5 results.

The last stage of the task is to combine the results of three algorithms. We considered two options: show users three top queries from each method or try to sum the weights. We chose the second method, normalizing before adding up the weight:

And everything seemed to work out pretty well, but for some queries strange results came out: “novice specialist” -> “career manager”. Firstly, here we were summed up by stamming, which “career” and “career” leads to the same form. And secondly, it turned out that the three found weights have different distributions and it’s simply impossible to summarize them.

I had to bring them to the same scale. To do this, each weight was sorted in ascending order, numbered, and the resulting serial number was divided by the total number of query pairs for a particular algorithm. This number has become a new weight, the problem with the “career manager” has disappeared.

results

To find similar search queries, we used hh.ru logs for 4 months, which is 270 million searches and 1.2 billion vacancy views. In total, about 1.5 million unique text queries were made by users; after cleaning and normalizing, we left just over 70 thousand.

Already on the first day of this feature, about 50 thousand people used the correction of requests. The most popular corrections:

driver -> personal driver

administrator -> beauty salon administrator

driver -> driver with a personal car

accountant -> accountant for primary documentation

accountant -> deputy chief accountant

Corrections of popular queries in the it-area:

| System Administrator | assistant system administrator, helpdesk, windows system administrator |

| programmer | c ++ programmer, remote programmer, c # programmer |

| Technical Director | Operations Director, Deputy Technical Director, Technical Manager |

| tester | testing specialist, game tester, tester remotely |

| junior | java junior, junior c ++, junior qa |

It was interesting to see in which professional areas this feature is most in demand:

It can be seen that more than half of the students who were looking for vacancies tried other queries. The least popular feature in IT and Consulting.

Todo

What else I would like to do:

- Automate. Now, related queries are built once for the period from January 1 to May 1, 2014. During automation, you should not forget about protection against vulnerabilities, because, knowing the algorithms, it’s easy to create advertising or just hooligan instead of related requests.

- Experiment with the scales of algorithms and conduct AB testing to show the most useful queries.

- Take into account the number of results for a query: prompt only those queries for which there are guaranteed results. Even with a large number of vacancies found, it will be useful to show narrowing queries, and with a small - expanding ones.

- Related searches in resume search.

- Use in suggest.

References

Metaphor: A System for Related Search Recommendations

Apache Hadoop

Apache Hive