Making SQL service plans for the needs of 1C: Enterprise 8.x

After another request to tell how to make a plan for servicing sql-bases used by 1C: Enterprise , I decided to share my experience with everyone right away.

Why is this necessary - if you do not maintain databases in sql, then its meaning is completely lost. The main tool is indexes and they need to be kept up to date. I did not meet any dogma not in practice, not in nete, not in courses in 1C itself, and therefore I share my experience.

Often the base operates in “normal” conditions. What is meant by this:

It is also worth considering several nuances:

It is rational under normal conditions to use 2 service plans Weekly (once a week) and Daily (on the remaining 6 days of the week).

On the items of the service plan:

The difference in the first task is “Reorganization of indices”. Tasks differ in that the reorganization tries to straighten out the existing indexes, and does not do everything from scratch. The more fragmentation - the more often it costs to run. But under normal conditions, once a day is enough to keep the index up to date until the next rebuild.

That's all. I repeat, I did not see dogmas in this moment, this option was developed and tested by me. Actual for databases ranging in size from 6 to 100 GB.

I wish you fast and reliable work.

PS Due to the fact that I am not a full-fledged DBA, perhaps my comments are very superficial, I will gladly read the comments in the comments and PM.

Why is this necessary - if you do not maintain databases in sql, then its meaning is completely lost. The main tool is indexes and they need to be kept up to date. I did not meet any dogma not in practice, not in nete, not in courses in 1C itself, and therefore I share my experience.

Often the base operates in “normal” conditions. What is meant by this:

- The SQL server is well powered, i.e. The amount of RAM provided for the operation of the SQL server should be selected from the calculation of 70% of the size of all mdf database files.

- The processor is not loaded more than 50% for 90% of the time.

- There is sufficient disk space (in particular, the temp.db database is used for sorting, 1C uses it in general for all its life, so you should take care of the disk space with this database in advance).

- Database recovery mode is “Simple”. (It has been empirically found that a large ldf file slows down 1s, and the ability to recover from a log file is very doubtful).

It is also worth considering several nuances:

- When using the Standard Edition of SQL, with a complete rebuild of the index, all users will be disconnected from the database, so you should consider this when deciding on a Weekly maintenance plan (the plan will be described below).

- It is worth considering that the 1C server also consumes memory, especially if thin clients or web services are used.

- It is better for SQL itself to limit the maximum amount of RAM in the server parameters, so that when it reaches a critical mass, it will start to clear unnecessary data from RAM in advance. And in order not to drive the entire server into a stupor.

It is rational under normal conditions to use 2 service plans Weekly (once a week) and Daily (on the remaining 6 days of the week).

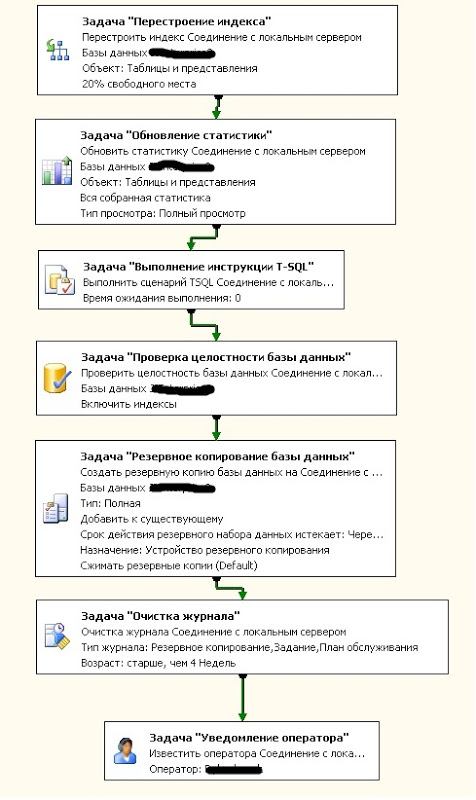

Weekly

General form

On the items of the service plan:

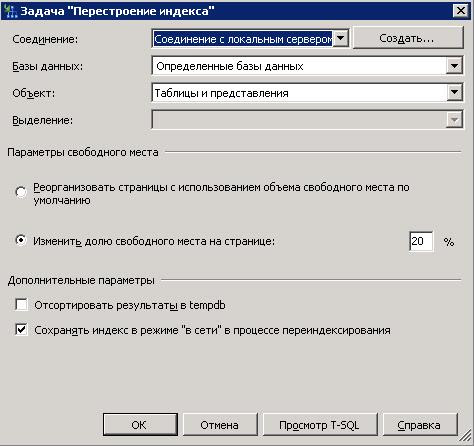

- Rebuilding the index. The meaning of the task is to remove all existing indexes and install new ones. (roughly speaking the inventory and arrangement of everything in order).

As parameters:- The choice of the target base (this will be in almost all tasks, because further on this parameter I will not pay attention to this article).

- The object in which we select "Tables and Views."

- Free space options - if the hard disk is small, you can select the "default" option, however I recommend using "Change the proportion of free space on the page", the recommended value is 20%. This will leave a reserve of free pages, and will allow you to keep indexes up to date. ATTENTION: Increases database size.

- Sort the results in tempdb. I think it’s not necessary to explain, but I want to warn you, at this time tempdb will grow very much, although sorting in it is designed to speed up the process, be careful, have a margin of space.

- Keeping the index online is a feature available for enterprise version of SQL. Allows re-indexing without disconnecting clients.

Settings example

- Statistics update. The task of collecting information about the status of indexes in the database. (In general, a little relevant after reindexing, but still I do).

Options:- An object. All the same tables and views as for rebuilding the index.

- Refresh. Here we update all the statistics.

- View Type - Full view.

Settings example

- Executing a T-SQL statement. This is the execution of an arbitrary SQL command, in particular, we are interested in

As the name implies, cache cleanup.dbcc proccacheExample

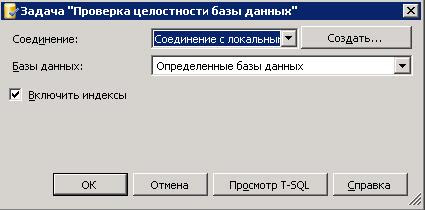

- Checking the integrity of the database. Here it seems unnecessary explanations - we are convinced that nothing has broken. In the parameters “include indexes” in the check, it was not in vain that they rebuilt.Settings example

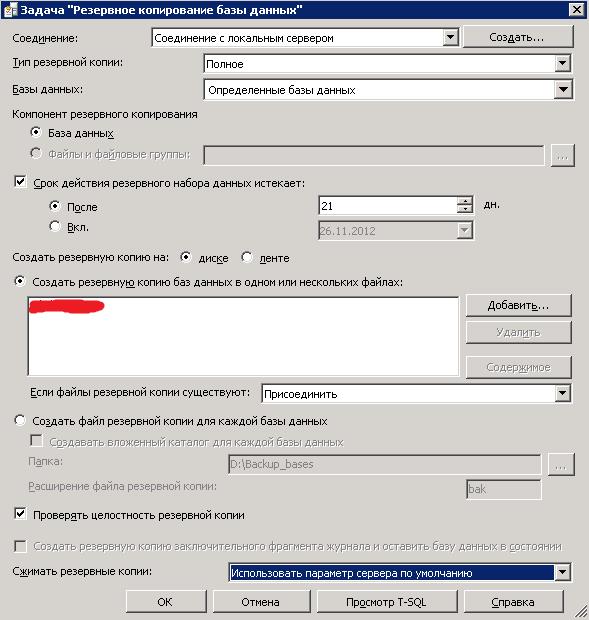

- Backing up the database. Here we need to talk more, due to many features. It is better to study this item separately on your own in other manuals, the format of this article does not provide an in-depth study of backups.

But I want to warn about a couple of nuances:- SQL does not know how to clean its container, because if you add backups to a file (it is also called the “Backup Device”), you will eventually forget about all the free space.

- SQL remembers its backups, therefore having made backups one-time using handles (for example, taking the database to another location, or to deploy it to another database from the backup), the next "difference" will be counted from it. In order to prevent this, you need to check the box “Backup only”. There is no such item in the backup task. In general, in the weekly plan, I still recommend using the full type of backup.

- And it would be nice to check the copy, let him sleep better.

- Compression, in general, can be used, but be careful, then differential ones must also be compressed.

Settings example

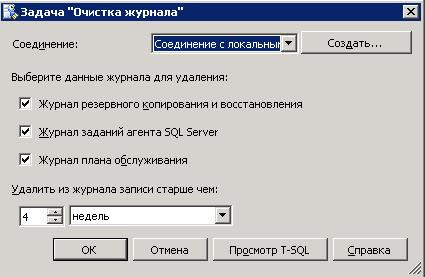

- Log cleaning.

- Backup and recovery log.

- SQL Server Agent Job Log

- Service Plan Log.

Settings example

- Operator notification. Fad again for independent study. But as the name implies, to report problems during the implementation of the plan.

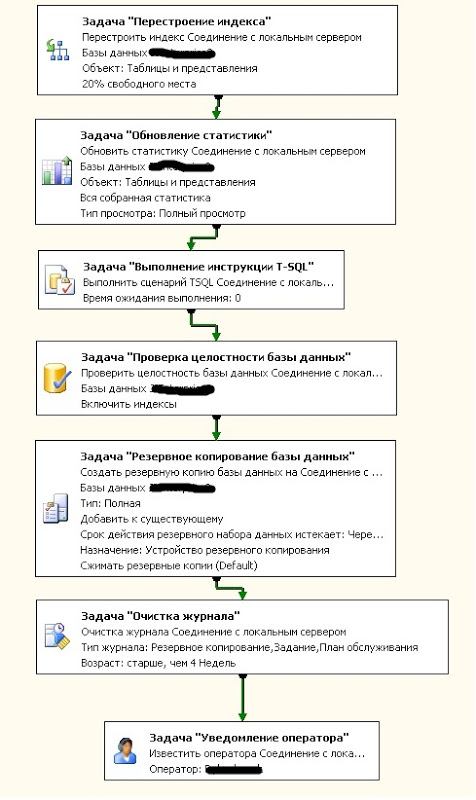

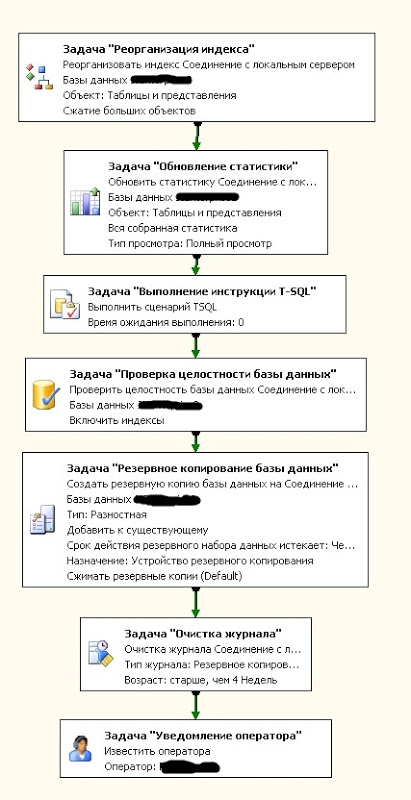

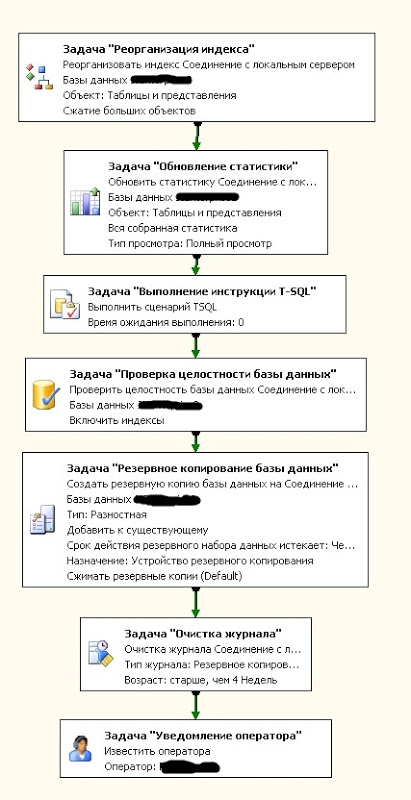

Daily

General form

Speaking separately does not make sense. Almost everything is similar to Weekly.

The difference in the first task is “Reorganization of indices”. Tasks differ in that the reorganization tries to straighten out the existing indexes, and does not do everything from scratch. The more fragmentation - the more often it costs to run. But under normal conditions, once a day is enough to keep the index up to date until the next rebuild.

Options

You can also use differential backup.

That's all. I repeat, I did not see dogmas in this moment, this option was developed and tested by me. Actual for databases ranging in size from 6 to 100 GB.

I wish you fast and reliable work.

PS Due to the fact that I am not a full-fledged DBA, perhaps my comments are very superficial, I will gladly read the comments in the comments and PM.