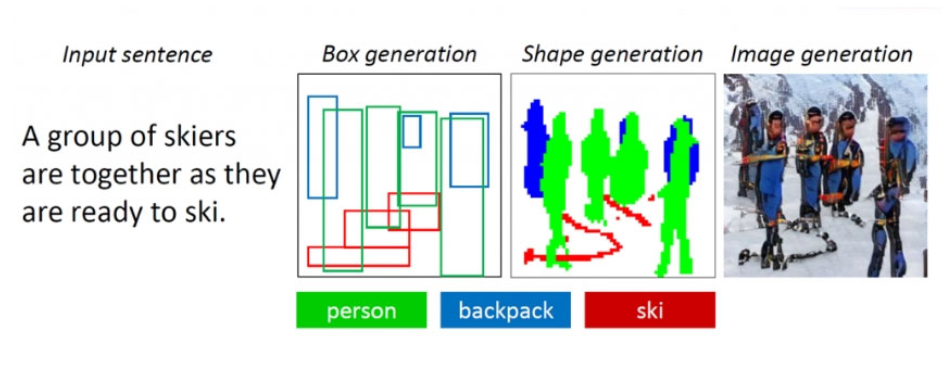

A neural network has learned to draw complex scenes from a text description

Microsoft Research has introduced a generative adversarial network (GAN) that can generate images containing multiple objects from a textual description. Unlike earlier text-to-image algorithms, which could reproduce only basic objects, this neural network handles complex descriptions more effectively.

The difficulty in creating such an algorithm was twofold. First, earlier models could not recreate all basic objects in good quality from their descriptions. Second, they could not analyze how several objects relate to one another within a single composition. For example, to create an image from the description “A woman in a helmet sits on a horse”, the neural network has to semantically “understand” how the objects relate to each other. The researchers solved these problems by training the neural network on the open COCO data set, which contains annotations and segmentation data for more than 1.5 million objects.

The algorithm is based on the object-driven generative adversarial network ObjGAN (Object-driven Attentive Generative Adversarial Networks). It analyzes the text and extracts the object words that need to be placed in the image. Unlike a conventional generative adversarial network, which consists of one generator that creates images and one discriminator that evaluates their quality, ObjGAN contains two different discriminators. The first assesses how realistic each generated object is and how well it matches the description. The second determines how realistic the composition as a whole is and how well it corresponds to the text.
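The two ideas in this paragraph, picking object words out of a caption and scoring an image with two discriminators, can be illustrated with a small toy sketch. This is not the ObjGAN code: the `KNOWN_OBJECTS` vocabulary, the function names, the `object_weight` parameter, and the way the two scores are combined are all assumptions made for illustration. The discriminator outputs are represented simply as probabilities between 0 and 1.

```python
import math

# Hypothetical object vocabulary, for illustration only.
KNOWN_OBJECTS = {"person", "woman", "helmet", "horse", "dog", "car"}


def extract_object_words(caption):
    """Return the caption words that name a known object, in caption order."""
    words = [w.strip(".,!?\"'").lower() for w in caption.split()]
    return [w for w in words if w in KNOWN_OBJECTS]


def real_target_loss(p):
    """Binary cross-entropy of probability p against the target 'real'."""
    eps = 1e-12  # avoid log(0)
    return -math.log(max(p, eps))


def generator_loss(object_scores, scene_score, object_weight=1.0):
    """Combine feedback from both discriminators into one generator loss.

    object_scores: one probability per generated object, as a hypothetical
        object-level discriminator might return (realistic and on-description).
    scene_score: one probability for the whole image, as a hypothetical
        scene-level discriminator might return.
    """
    n = max(len(object_scores), 1)
    object_term = sum(real_target_loss(p) for p in object_scores) / n
    scene_term = real_target_loss(scene_score)
    return object_weight * object_term + scene_term


if __name__ == "__main__":
    caption = "A woman in a helmet sits on a horse"
    print(extract_object_words(caption))
    # One poorly drawn object raises the loss even if the scene looks plausible.
    print(generator_loss([0.9, 0.9, 0.2], scene_score=0.8))
```

In this sketch the generator is penalized both when a single object looks wrong and when the overall composition does, which mirrors the division of labor between the two discriminators described above.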

The predecessor of the ObjGAN algorithm was AttnGAN, also developed by Microsoft researchers. It is able to generate images of objects from simpler textual descriptions. The technology for converting text to images can be used to help designers and artists create sketches.

The ObjGAN algorithm is publicly available on GitHub.

More technical details.