How to create a cool action for Google Assistant: life hacks from Just AI

The ecosystem around Google Assistant is growing incredibly fast. In April 2017, only 165 actions were available to users; today there are more than 4,500 in English alone. How diverse and interesting the Russian-speaking corner of the Google Assistant universe becomes depends on developers. Is there a formula for the ideal action? Why keep code and content separate from the script? What should you remember when working on a conversational interface? We asked the team at Just AI, a developer of conversational AI technologies, to share life hacks for creating applications for Google Assistant. Several hundred actions have been built on Just AI's Aimylogic platform, some of them very popular: more than 140 thousand people have already played the game “Yes, my lord”. Dmitry Chechetkin, head of strategic projects at Just AI, explains how to organize work on the action of your dreams.

Shaken, not stirred: the roles of script, content, and code

Any voice application consists of three components: a dialog script, the content that the action works with, and programmable logic, i.e. the code.

The script is perhaps the most important part. It describes what phrases a user can say, how the action should react to them, what states it moves into, and how exactly it responds. I have been programming for 12 years, but when it comes to creating a conversational interface, I turn to visual tools.

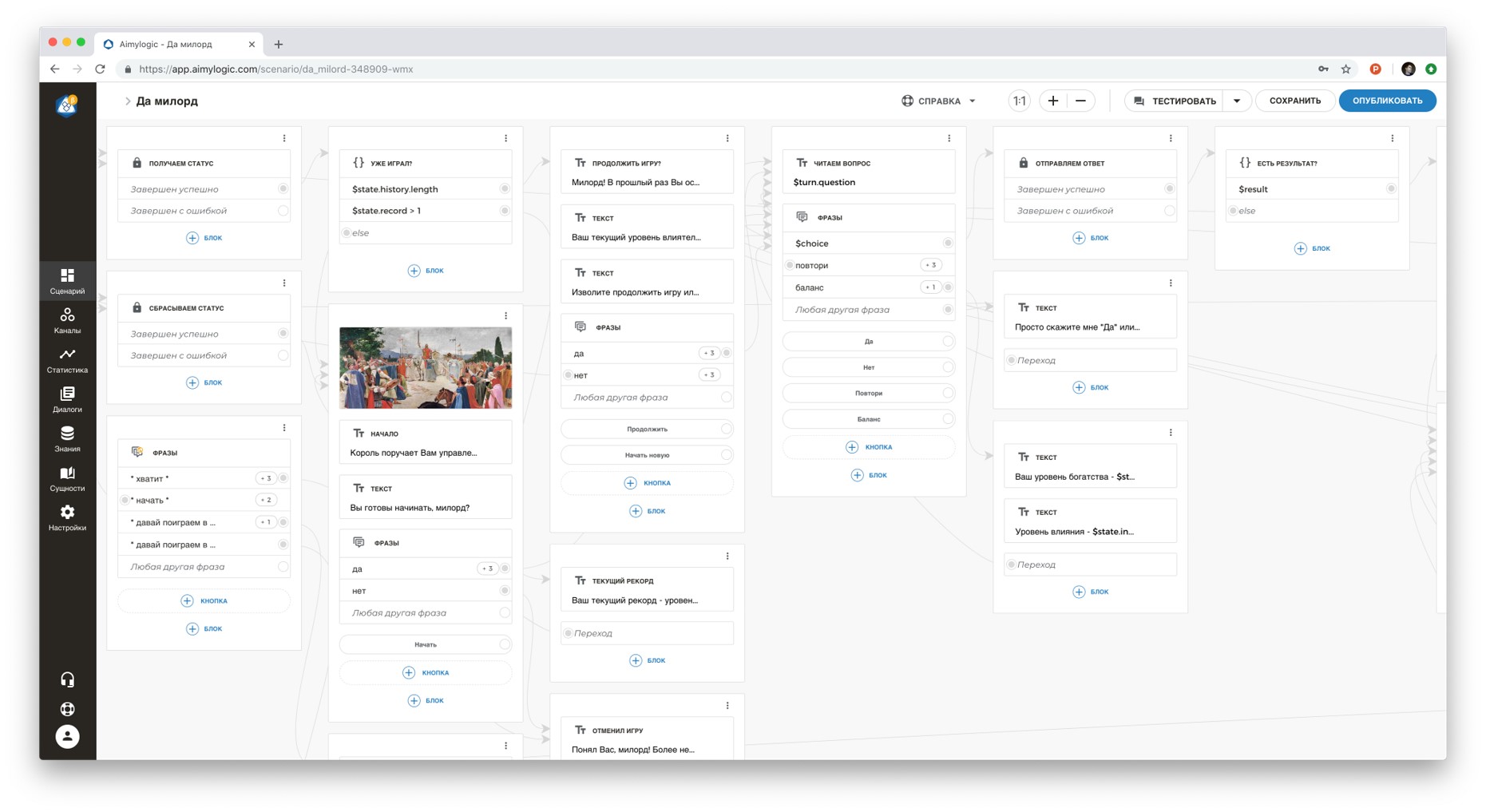

To start, it doesn't hurt to draw a simple outline of the script on paper. This way you decide what follows what in the dialog. Then you can transfer the script to a tool that visualizes it. Google offers Dialogflow for building a fully customized dialog and, for the simplest and shortest scenarios that do not require extensive language understanding, the Actions SDK. Another option is Aimylogic, a visual designer with NLU (see how to create an action for Google Assistant in Aimylogic), in which you can build a script without in-depth programming skills and test the action right away. I use Aimylogic to see how all the transitions in my dialog will work and to test and validate the hypothesis, the idea of what I want to implement.

Programmable logic is often required. For example, your site may look cool, but for it to actually do something it has to call code on a server, and that code can calculate something, save it, and return the result. It is the same with the action script. The code should run reliably, and ideally for free. Today there is no need to pay thousands of dollars to keep 50, 100, or 1,000 lines of code available to your action 24/7. I use several services for this: Google Cloud Functions, Heroku, Webtask.io, and Amazon Lambda. Google Cloud Platform offers a fairly wide range of services for free in its Free Tier.

The script can call the code with the plain HTTP requests we are all used to. At the same time, the code and the script stay separate. This is good, because you can keep both components up to date and extend them as you like without complicating work on the action.
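As a rough illustration of this separation, here is a minimal sketch of the code side in Python. It assumes the script sends a JSON body with hypothetical `user_id` and `points` fields and expects JSON back. The field names, the `handle_request` function, and the in-memory `SCORES` store are all illustrative and not part of any platform's API.

```python
import json

# Hypothetical in-memory store. A real deployment would use a database,
# because serverless instances do not reliably keep state between calls.
SCORES = {}


def handle_request(body):
    """Take the JSON body sent by the dialog script and return a JSON reply."""
    request = json.loads(body)
    user_id = request["user_id"]            # assumed field name
    points = int(request.get("points", 0))  # assumed field name

    # "Calculate something, save it, and return the result."
    SCORES[user_id] = SCORES.get(user_id, 0) + points
    return json.dumps({"user_id": user_id, "total": SCORES[user_id]})
```

On Google Cloud Functions, Heroku, or a similar service, a thin HTTP wrapper would pass the request body to a function like this, so the script never needs to know how the result is computed.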

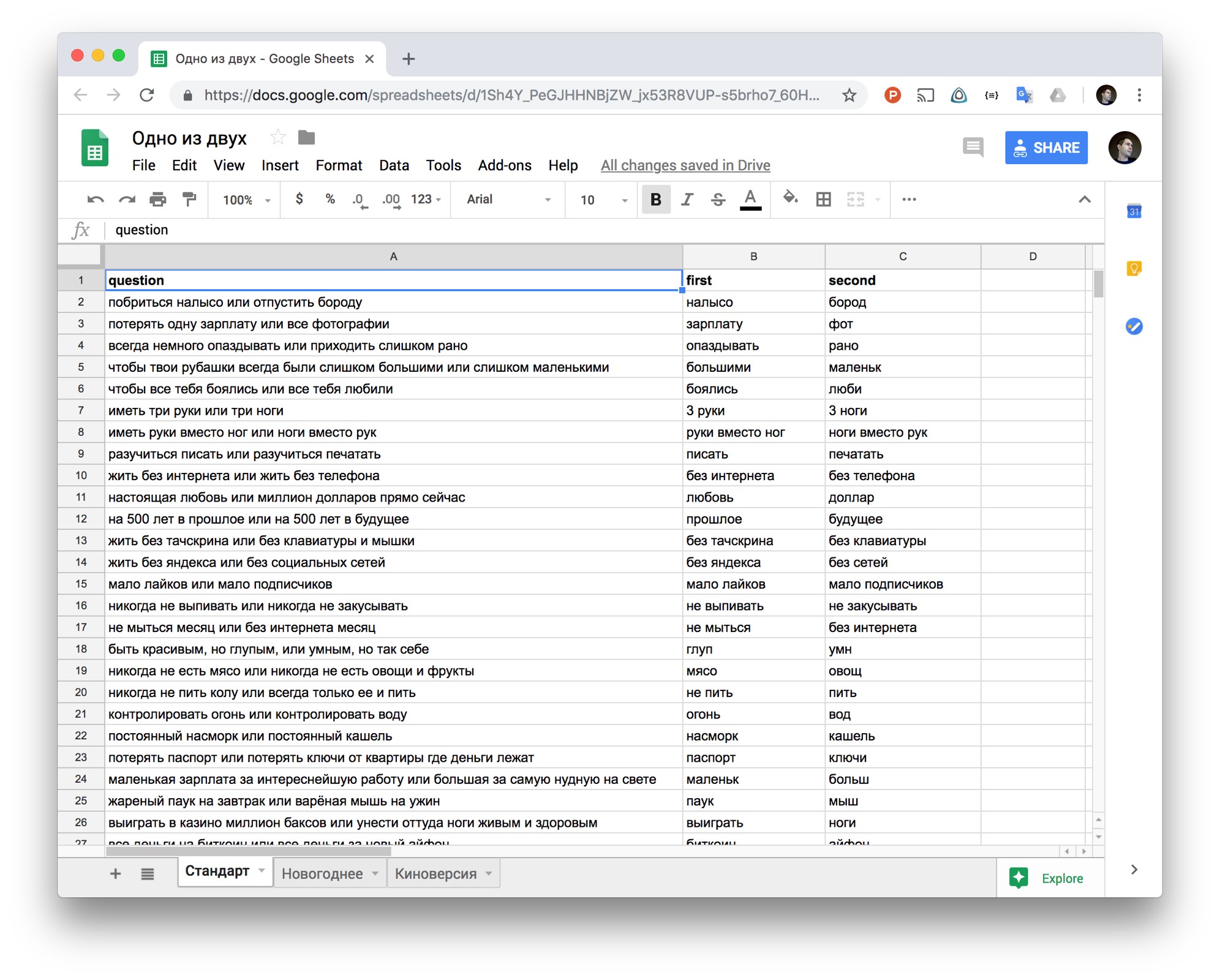

The third component is content. This is data that can change all the time without affecting the structure of the script itself, such as quiz questions or the episodes in our game “Yes, my lord”. If the content lived inside the script or the code, the script would become cumbersome, and no matter what tool you used to create the action, working with it would be inconvenient. Therefore, I recommend storing content separately: in a database, in a file in cloud storage, or in a spreadsheet that the script can access through an API to fetch data on the fly. With content separated from the script and the code, you can bring other people into the project, and they can add content independently of you. Developing content is very important, because a user who returns to the action time after time expects fresh and varied content.

How do you use ordinary cloud spreadsheets so that the content is not stored in the script itself? For example, in the game “First or Second” we used a cloud-based Excel spreadsheet where any project participant could add new questions and answers for the action. The Aimylogic script accesses this spreadsheet with a single HTTP request through a special API. As a result, the script itself stays small, because it does not store the data from the spreadsheet, which is updated every day. This separates the dialog script from the content, so we can work on the content independently and keep feeding the script fresh data as a team. By the way, 50 thousand people have already played this game.
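A minimal sketch of the idea in Python, assuming the spreadsheet can be exported as CSV text with hypothetical `question` and `answer` columns. The actual API that Aimylogic uses to read the table is not shown here, and the sample rows are placeholders standing in for the downloaded content.

```python
import csv
import io
import random

# Placeholder for the text that the HTTP request to the table would return.
SAMPLE_CSV = """question,answer
Sample question one?,first
Sample question two?,second
,
"""


def load_questions(csv_text):
    """Parse CSV text into a list of dicts, skipping rows without a question."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row for row in reader if (row.get("question") or "").strip()]


def pick_question(questions, rng=random):
    """Pick one question at random so that each session feels fresh."""
    return rng.choice(questions)


questions = load_questions(SAMPLE_CSV)
current = pick_question(questions)
```

Because the questions are loaded at run time, editors can add rows to the table without anyone touching the script or the code.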

Checklist: Things to Remember When Creating a Conversational Interface

Any interface has components that the user interacts with: lists, buttons, pictures, and more. A conversational interface follows the same laws, but with one fundamental difference: a person communicates with the program by voice. This is the starting point when you create your own action.

The right action should not try to do everything in the world. When a person talks to a program, he cannot keep much information in his head (remember what it is like to listen to multi-level personal offers from a bank or mobile operator over the phone). Drop everything superfluous and focus on the single most important function of your service, the one that is most convenient to perform by voice, without touching the screen.

For example, suppose you have a ticket service. Do not expect the client to go through the usual scenario by voice: searching for a ticket by five or six criteria, choosing between carriers, comparing, and paying. But an application that tells you the minimum price for a chosen destination may well come in handy: it is a very quick operation, and it is convenient to do by voice, without opening the site and going through the “form-filling” scenario (filling out fields and selecting filters) each time.

An action is about the voice, not about the service as a whole. The user should not regret launching the action in the Assistant instead of going to, say, the application or the site. But how do you know that voice is indispensable? To start, try the idea of the action on yourself. If you can easily do the same thing without voice, the action makes no sense. One of my first Assistant apps was “Yoga for the Eyes”. It is a virtual personal trainer that helps you do exercises for your vision. There is no doubt that voice is needed here: your eyes are busy with the exercises, and you are relaxed and focused on the spoken instructions. Peeking at a written guide and getting distracted from the training would be inconvenient and ineffective.

Here is an example of a poor scenario for a voice application. I often hear that yet another online store wants to sell something through a virtual assistant. But filling a basket by voice is inconvenient and impractical, and the client is unlikely to understand why he needs it. The ability to repeat the last order by voice or to add something to a shopping list on the go is another matter.

Remember about UX. The action should stay with the user: accompany and guide him through the dialog so that he easily understands what he is expected to say. If a person gets stuck and starts to think, “And what's next?”, that is a failure. Do not count on your user always asking for help. Dead ends need to be tracked (for example, in the analytics in the Actions Console), and the user needs to be helped with leading questions or tips. In a voice action, predictability is not a vice. For example, in our game “Yes, my lord”, each phrase ends so that the participant can answer either “yes” or “no”. He is not required to invent anything on his own. It is not that the game is so elementary; the rules are simply organized so that everything is extremely clear to the user.

“It speaks well!” The action “hears” well thanks to the Assistant, and “speaks” well thanks to the script developer. A recent update gave Google Assistant new voice options and more realistic pronunciation. That is all great, but the developer should still think through each phrase, its structure and sound, so that the user understands everything the first time. Mark stresses and use pauses to make the action's phrases sound human.

Never overload the user. For actions that read out news feeds or fairy tales to children, this is not a problem. But listening to a voice assistant talk endlessly when you just want to order pizza is hard. Try to keep replies concise, but not monosyllabic, and varied (for example, think through several versions of greetings, farewells, and even the phrases used when the assistant has misunderstood something). The dialog should sound natural and friendly; to achieve this, you can add elements of colloquial speech, emotion, and interjections to the phrases.
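Two of the tips above, pauses and varied phrasing, can be sketched in a few lines of Python. This is an illustration under assumptions: the phrase list and the function names are made up, and the output uses the standard SSML `<speak>` and `<break>` elements, which would still need to be tried in the Assistant simulator to hear how they actually sound.

```python
import random
from xml.sax.saxutils import escape

# Hypothetical phrase variants; in a real action they would live with the content.
GREETINGS = ["Hello!", "Good to hear you again!", "Hi there!"]


def pick_variant(variants, last=None, rng=random):
    """Choose a phrase at random, avoiding the one used last time if possible."""
    candidates = [v for v in variants if v != last] or list(variants)
    return rng.choice(candidates)


def to_ssml(sentences, pause_ms=400):
    """Join sentences into one SSML reply with a pause between them."""
    pause = f'<break time="{pause_ms}ms"/>'
    body = pause.join(escape(sentence) for sentence in sentences)
    return f"<speak>{body}</speak>"


greeting = pick_variant(GREETINGS, last="Hello!")
reply = to_ssml([greeting, "Shall we play?"], pause_ms=300)
```

Escaping the text matters here: a stray `&` or `<` in a phrase would otherwise produce invalid SSML.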

The user does not forgive stupidity. People often blame voice assistants for being stupid. Mostly this happens when an assistant, or an application for it, cannot recognize different variations of the same phrase. Even if your action is as simple as setting an alarm, it is important that it understands synonyms and different word forms with the same meaning, and does not fail if the user responds unpredictably.
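To make the point concrete, here is a deliberately simple Python sketch that maps different ways of saying “yes” and “no” to one of two answers. The synonym lists and the `normalize_answer` function are hypothetical; in a real action this job belongs to the NLU engine and its training phrases, not to hand-written word lists.

```python
import string

# Hypothetical synonym lists, kept short for illustration.
YES_WORDS = {"yes", "yeah", "yep", "sure", "ok", "okay", "of course"}
NO_WORDS = {"no", "nope", "nah", "never", "no way"}

_PUNCTUATION = str.maketrans("", "", string.punctuation)


def normalize_answer(text):
    """Map a free-form reply to "yes", "no", or None if it is neither."""
    # Lowercase, drop punctuation, and collapse repeated whitespace.
    cleaned = " ".join(text.lower().translate(_PUNCTUATION).split())
    if not cleaned:
        return None
    first_word = cleaned.split()[0]
    for label, words in (("yes", YES_WORDS), ("no", NO_WORDS)):
        # Match the whole reply first, then fall back to its first word.
        if cleaned in words or first_word in words:
            return label
    return None
```

A reply that maps to `None` is exactly the unpredictable case: the action should not fail on it, but ask again or give a hint.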

How do you get out of situations in which the action fails to understand? First, you can diversify the answers in the Default Fallback Intent: use not only the standard ones provided, but also custom ones. Second, you can train the Fallback Intent on all sorts of spam phrases that are unrelated to the game. This will teach the application to respond adequately to irrelevant requests and will also increase the accuracy with which other types of requests are classified.
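One way to diversify fallback answers is to make them more helpful with each consecutive miss. The Python sketch below assumes your code can count how many times in a row the user was not understood; the replies and the `fallback_reply` function are illustrative, not something Dialogflow provides out of the box.

```python
# Hypothetical custom replies, ordered from a light reprompt to an explicit hint.
FALLBACKS = [
    "Sorry, I didn't catch that.",
    "I still don't follow. You can answer yes or no.",
    'Let\'s try it differently: say "yes" to continue or "no" to stop.',
]


def fallback_reply(miss_count):
    """Return a reply for the given number of consecutive misunderstandings."""
    # Clamp to the valid range so that long streaks keep getting the last hint.
    index = min(max(miss_count, 1), len(FALLBACKS)) - 1
    return FALLBACKS[index]
```

The last reply doubles as the kind of leading hint that keeps the user from getting stuck.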

And one more tip. Never, ever turn your action into a button menu in an attempt to make the user's life easier: it annoys, distracts from the dialog, and makes the user doubt that voice is needed at all.

Teach the action politeness. Even the coolest action has to end, ideally with a goodbye that makes the user want to come back. By the way, remember that if the action does not ask a question but simply answers the user's question, it must “close the microphone” (otherwise the application will not pass moderation and will not be published). In Aimylogic, you just need to add the “Script Completion” block to the script.

And if you are counting on retention, it is important to build other rules of good manners into the script: the action should work in context, that is, remember the user's name and gender and not ask again about what has already been specified.
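A minimal sketch of working in context, written in Python. It assumes the platform gives your code some per-user key-value storage that survives between sessions; here it is modeled as a plain dictionary, and the function names and phrases are hypothetical.

```python
def greet(user_storage):
    """Greet by name if it is already known, otherwise ask for it once."""
    name = user_storage.get("name")
    if name:
        return f"Welcome back, {name}! Shall we continue?"
    return "Hi! What should I call you?"


def remember_name(user_storage, name):
    """Save the name so that later sessions do not ask for it again."""
    user_storage["name"] = name.strip()


storage = {}                     # stands in for persistent per-user storage
first_prompt = greet(storage)    # name unknown, so the action asks for it
remember_name(storage, " Anna ")
next_prompt = greet(storage)     # name known, so the action does not ask again
```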

How to work with ratings and reviews

Google Assistant users can rate actions and thereby influence their rating. It is therefore important to learn to use the rating system to your advantage. It would seem that you just need to give the user a link to the page with your action and ask for a review. But there are rules. For example, do not ask the user to rate the action in the first message: the user must understand what he is rating. Wait until the application has actually done something useful or interesting for the user, and only then offer to leave a review.

It is also better not to voice this request through speech synthesis: you will only waste the user's time. Moreover, instead of following the link he may say “I give it five”, and that is not at all what you need in this case.

In the game “Yes, my lord”, we show the feedback link only after the user has played another round. We do not voice the request; we simply display the link on the screen and offer to play again. Let me stress again: offer this link when the user is guaranteed to have received some benefit or pleasure. If you do it at the wrong time, when the action has failed to understand something or is slow, you can get negative feedback.
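The timing rule can be written down as a small check. This Python sketch is only an illustration: the function and its parameters are hypothetical, and the `already_offered` flag is my own assumption (not asking twice), not something the review rules require.

```python
def should_offer_review(rounds_completed, last_turn_failed, already_offered=False):
    """Decide whether this is a good moment to show the review link."""
    if already_offered:
        return False  # assumption: do not nag a user who has already seen the link
    if last_turn_failed:
        return False  # never ask right after a misunderstanding or a slow reply
    return rounds_completed >= 1  # only after the user has finished a round
```

If the check passes, the link goes on the screen next to the offer to play again, without being voiced.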

In general, try our Aimylogic actions: “Yoga for the Eyes” and the games “First or Second” and “Yes, my lord” (transactions will appear in it soon, and it will be easier for my lord to maintain power and wealth!). We also recently released the first voice quest for Google Assistant, “Lovecraft World”. It is an interactive drama in the mystical style of “Call of Cthulhu”, where the scenes are voiced by professional actors, the plot can be controlled by voice, and in-game payments are available. This action was developed on the Just AI Conversational Platform, a professional enterprise solution.

Three Secrets of Google Assistant

- The use of music. Of the voice assistants available in Russian, only Google Assistant allows you to use music directly in the action script. Musical accompaniment sounds great in game actions, and yoga set to music feels completely different.

- Payment options inside the action. For in-app purchases, Google Assistant uses the Google Play platform. The terms for creators of game actions are the same as for mobile application developers: 70% of each transaction goes to the developer.

- Moderation. To pass moderation, the action must have a Personal Data Processing Policy. You need to publish it on sites.google.com, state the name of your action and an email address (the same one the developer uses in the developer console), and write that the application does not use user data. Moderation of an action without transactions takes 2-3 days, but moderation of an application with built-in payments can take 4-6 weeks. More about the review procedure

More life hacks, more cases, and instructive stories await developers at Conversations, the conference on conversational AI, which will be held June 27-28 in St. Petersburg. Andrey Lipatsev, Strategic Partner Development Manager at Google, will talk about international experience and the Russian specifics of Google Assistant. At Developers' Day, Tanya Lando, a leading linguist at Google, will talk with participants about dialog boxes, signals, and methodologies and how to choose them for your tasks, and developers themselves will share their personal experience of creating voice applications for assistants, from a virtual secretary for Google Home to voice games and B2B actions that can work with a company's closed infrastructure.

And by the way, on June 28 at the conference, Google and Just AI will hold an open hackathon for professional and novice developers: you will be able to work on actions for the Assistant, experiment with conversational UX, speech synthesis, and NLU tools, and compete for cash prizes! Register now: the number of seats is limited!