Hardware RAID: Usage Features

Combining several disks into a single storage space is a task that a hardware RAID controller handles easily. Before you start, however, it helps to understand how such a controller is used and managed. That is the subject of today's article.

Drive reliability and speed concern every system administrator. Despite manufacturers' assurances about the quality of their devices, HDDs and SSDs continue to fail at the most inopportune moments, taking precious data with them. In most cases SMART lets you assess a drive's “health”, but it does not guarantee that the drive will keep working.

Drive failure cannot be predicted with 100% accuracy, so it makes sense to design the system so that a failure does not become a problem or stop a service. RAID arrays solve this. There are three main approaches:

- Software RAID is the least expensive option, but also the slowest. The operating system builds the array, and the entire data-processing load falls on the central processor.

- Integrated hardware RAID (often called Fake-RAID) is a chip on the motherboard that takes over part of the functionality of a hardware RAID controller and works in tandem with the central processor. This approach is slightly faster than software RAID, but the reliability of such an array leaves much to be desired.

- Hardware RAID is a separate controller with its own processor and cache memory that takes over all disk operations. It is the most expensive option, but also the fastest and most reliable.

Let's look at hardware RAID in detail.

Appearance

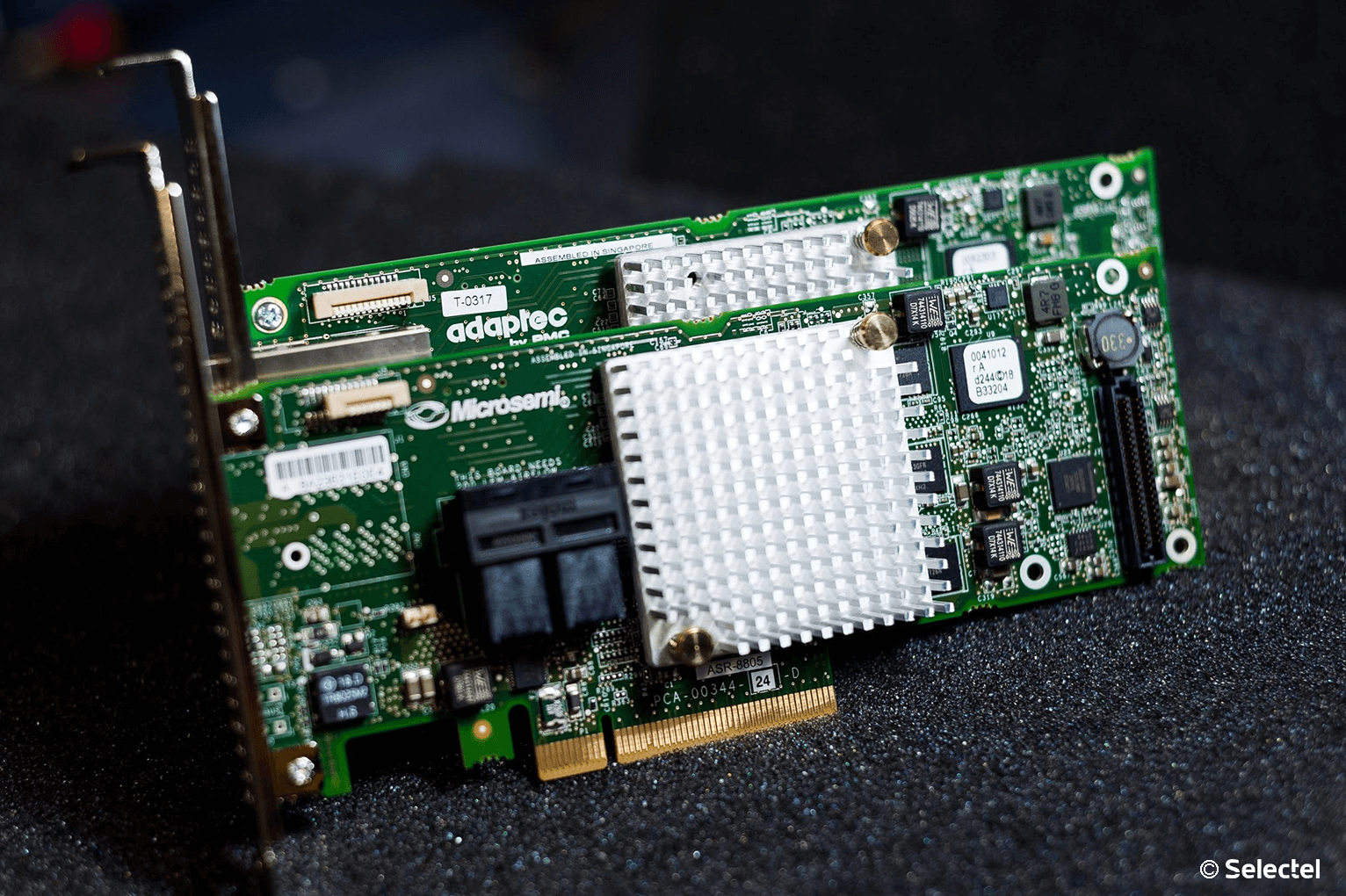

We chose Adaptec solutions from Microsemi. These are RAID controllers with proven usability and high performance. We install them when a client orders a server with a custom or fixed configuration.

Disks are connected with special interface cables. On the controller side, SFF-8643 connectors are used. Each cable connects up to 4 SAS or SATA drives (depending on the model). The interface cable also has an eight-pin SFF-8485 connector for the SGPIO bus, whose purpose we will discuss a bit later.

In addition to the RAID controller itself, there are two additional devices that can increase reliability:

- BBU (Battery Backup Unit) is an expansion module with a lithium-ion battery that keeps the volatile cache chip powered. If the server suddenly loses power, it temporarily preserves the cache contents that have not yet been written to the disks.

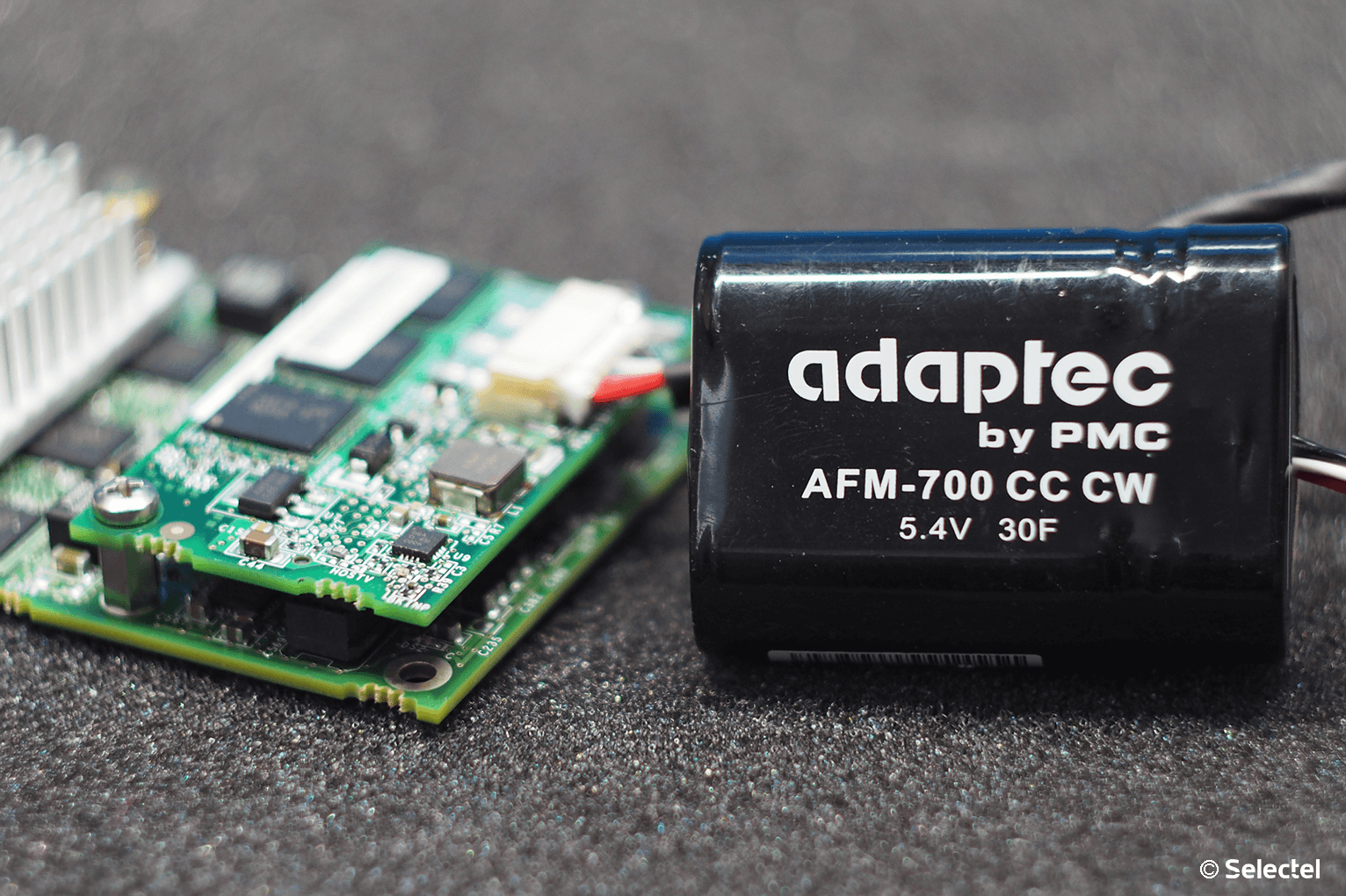

As soon as the server’s power supply is restored, the contents of the cache are written to the disks as usual. According to the manufacturer, a fully charged battery can preserve the cache data for 72 hours.

- ZMCP (Zero-Maintenance Cache Protection) is a special expansion module for the RAID controller with its own non-volatile memory and a supercapacitor. If the server loses power, the supercapacitor supplies the chip with enough energy to write the contents of the volatile cache memory to the ZMCP NAND memory.

After the power of the server is restored, the contents of the cache will automatically be written to disks. It is these modules that are installed on our servers with a hardware RAID controller and Cache Protection.

Cache protection is especially important when write-back caching is enabled. Without it, a power failure means the cache contents never reach the disks, which leads to data loss and can disrupt normal operation of the disk array.

Specifications

Temperature

First, a word about something important: the thermal conditions of Adaptec hardware RAID controllers. All of them carry small passive heatsinks, which can create the false impression that they dissipate little heat.

The manufacturer recommends an airflow of 200 LFM (linear feet per minute), which corresponds to 8.24 liters per second (or 1.02 meters per second). Such controllers are designed exclusively for rackmount chassis, where standard high-speed fans create this airflow.

Most Adaptec RAID controllers have a manufacturer-recommended operating temperature range from 0 °C to 40-55 °C, depending on the installed modules. The maximum operating temperature of the chip is 100 °C, and running the controller above 85 °C may damage it. For convenience, the table below lists the recommended temperatures for the different Adaptec controller series.
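The linear velocity figure is easy to verify: one foot is 0.3048 m and one minute is 60 s. Below is a minimal sketch of that conversion; the helper name `lfm_to_mps` is ours and is not part of any Adaptec tool. The volumetric figure also depends on the cross-section the air passes through, so it is not reproduced here.

```shell
#!/bin/sh
# Convert airflow velocity from linear feet per minute (LFM) to meters per second.
# lfm_to_mps is a hypothetical helper name used only for this illustration.
lfm_to_mps() {
    awk -v lfm="$1" 'BEGIN { printf "%.2f\n", lfm * 0.3048 / 60 }'
}

lfm_to_mps 200   # recommended airflow from the text, prints 1.02
```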

Recommended temperatures

| Controller series | Recommended maximum temperature |
| --- | --- |
| Series 2 (2405, 2045, 2805) and 2405Q | 55 °C without modules |
| Series 5 (5405, 5445, 5085, 5805, 51245, 51645, 52445) | 55 °C without battery module, 40 °C with battery module ABM-800 |
| Series 5Z (5405Z, 5445Z, 5805Z, 5805ZQ) | 50 °C with ZMCP module |
| Series 5Q (5805Q) | 55 °C without battery module, 40 °C with battery module ABM-800 |
| Series 6E (6405E, 6805E) | 55 °C without modules |
| Series 6 / 6T (6405, 6445, 6805, 6405T, 6805T) | 55 °C without ZMCP module, 50 °C with ZMCP module AFM-600 |
| Series 6Q (6805Q, 6805TQ) | 50 °C with ZMCP module AFM-600 |
| Series 7E (71605E) | 55 °C without modules |
| Series 7 (7805, 71605, 71685, 78165, 72405) | 55 °C without ZMCP module, 50 °C with ZMCP module AFM-700 |
| Series 7Q (7805Q, 71605Q) | 50 °C with ZMCP module AFM-700 |
| Series 8E (8405E, 8805E) | 55 °C without modules |
| Series 8 (8405, 8805, 8885) | 55 °C without ZMCP module, 50 °C with ZMCP module AFM-700 |
| Series 8Q (8885Q, 81605Z, 81605ZQ) | 50 °C with ZMCP module AFM-700 |

Our customers do not have to worry about controllers overheating: our data centers maintain a constant temperature, and custom server builds take the requirements of such components into account (as we mentioned in our previous article).

Work speed

In order to demonstrate how the presence of a hardware RAID controller helps to increase the speed of the server, we decided to assemble a test bench with the following configuration:

- Intel Xeon CPU E3-1230v5;

- 16 GB of DDR4-2133 ECC RAM;

- 4 HDDs with a capacity of 1 TB.

CentOS 7 is installed as the operating system, and 1C Bitrix24 serves as the server application. First, we build a software RAID with mdadm and measure performance with the test built into Bitrix24. We deliberately make no changes or additional tuning: the demo configuration is installed with default settings.

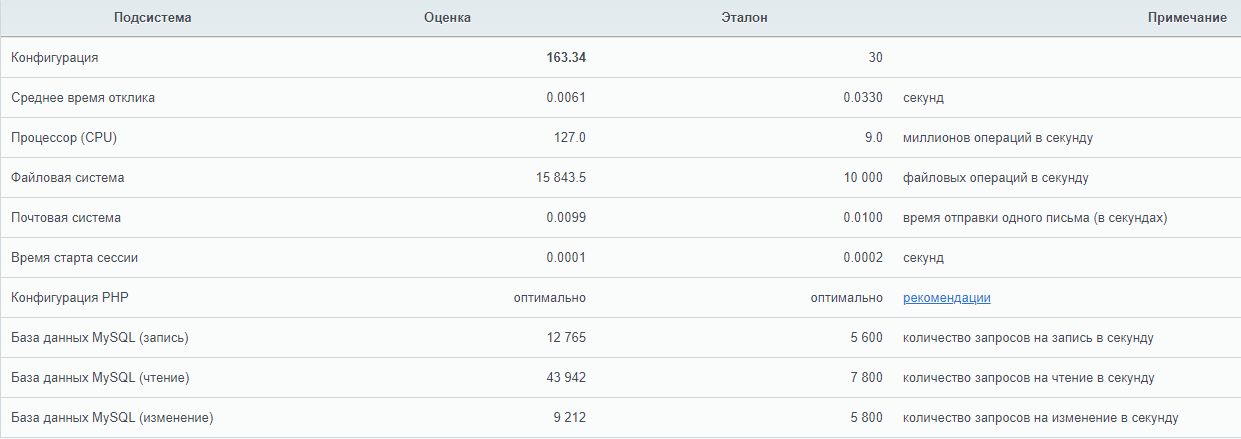

Then we install the Adaptec ASR 7805 RAID controller with the AFM-700 cache protection module in the same test bench, connect the same hard drives to it, and run exactly the same test.
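Both runs use the same four disks in RAID 10, so both arrays offer the same usable capacity. As a rough sketch of the arithmetic (the helper `raid_usable` is hypothetical, and it ignores metadata overhead and the TB/TiB difference):

```shell
#!/bin/sh
# Usable capacity of an array built from equal-sized disks, in the unit of the disk size.
# raid_usable is a hypothetical helper: $1 = RAID level, $2 = number of disks, $3 = size of one disk.
raid_usable() {
    level=$1
    disks=$2
    size=$3
    case "$level" in
        0)  echo $(( $disks * $size )) ;;          # striping only, no redundancy
        1)  echo "$size" ;;                        # every disk holds a full copy
        5)  echo $(( ($disks - 1) * $size )) ;;    # one disk's worth of parity
        6)  echo $(( ($disks - 2) * $size )) ;;    # two disks' worth of parity
        10) echo $(( $disks / 2 * $size )) ;;      # striped mirror pairs, even disk count assumed
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

raid_usable 10 4 1   # the test bench: four 1 TB disks in RAID 10, prints 2
```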

With software RAID

The undoubted advantage of software RAID is ease of use. On Linux, an array is created with the standard mdadm utility. Most installers let you create an array directly during operating system installation. If the installer does not offer this, switch to another console with Ctrl + Alt + F2 (the function key number is the number of the tty being opened).

Creating an array is straightforward. Use fdisk -l to see which disks are present in the system. In our case there are four:

/dev/sda

/dev/sdb

/dev/sdc

/dev/sdd

Check that the disks carry no metadata, for example from a previous array:

mdadm --examine /dev/sda /dev/sdb /dev/sdc /dev/sdd

Each of the four disks should report:

mdadm: No md superblock detected

If one or more disks do contain metadata, remove it as follows (where sdX is the disk in question):

mdadm --zero-superblock /dev/sdX

Create a partition on each disk for the future array using fdisk. The partition type should be fd (Linux RAID autodetect).

fdisk /dev/sdX

Assemble the RAID 10 array from the created partitions:

mdadm --create --verbose /dev/md0 --level=10 --raid-devices=4 /dev/sda1 /dev/sdb1 /dev/sdc1 /dev/sdd1

The /dev/md0 array is created immediately, and the initial synchronization of data across the disks begins. To track its progress, enter:

cat /proc/mdstat

Until the process of rebuilding data is completed, the speed of the disk array will be reduced.
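If you want to poll the progress from a script rather than read the whole file, the percentage can be extracted with grep. This is a minimal sketch: `mdstat_progress` is a hypothetical helper, and the sample below only imitates the usual /proc/mdstat layout with made-up numbers.

```shell
#!/bin/sh
# Print the resync/recovery progress from an mdstat-format file.
# mdstat_progress is a hypothetical helper; $1 defaults to the real /proc/mdstat.
mdstat_progress() {
    grep -oE '(resync|recovery) *= *[0-9.]+%' "${1:-/proc/mdstat}"
}

# Illustrative sample in the usual /proc/mdstat layout (numbers are made up).
sample=$(mktemp)
cat > "$sample" <<'EOF'
Personalities : [raid10]
md0 : active raid10 sdd1[3] sdc1[2] sdb1[1] sda1[0]
      1953260544 blocks super 1.2 512K chunks 2 near-copies [4/4] [UUUU]
      [==>..................]  resync = 12.6% (246312448/1953260544) finish=148.2min speed=191930K/sec

unused devices: <none>
EOF

mdstat_progress "$sample"   # prints: resync = 12.6%
rm -f "$sample"
```

When no synchronization is running, the function prints nothing and returns a non-zero status, which makes it easy to use in a wait loop. For interactive use, `watch -n 5 cat /proc/mdstat` does the same job.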

After installing the operating system and Bitrix24 on the created array, we launched the standard test and got the following results:

With hardware RAID

Before the server can use the single disk space of the RAID array, it is necessary to perform basic configuration of the controller and logical drives. There are two ways to do this:

- using the controller's built-in utility;

- using a utility from the operating system.

The first method is ideal for initial setup. In Legacy mode (the default on our servers), enter the utility by pressing Ctrl + A when the prompt appears during POST.

The utility manages not only controller settings but also logical devices. We initialize the physical disks (initialization destroys all data on them) and create a RAID 10 array in the Create Array section. During creation, the utility asks for the stripe size, that is, the size of the data block for one I/O operation:

- larger stripe size is ideal for working with large files;

- smaller stripe size is suitable for processing a large number of small files.

Important - the stripe size is set only once (when creating the array) and this value cannot be changed in the future.
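To get a feel for the trade-off, it helps to count how many stripe-sized blocks a single request touches. The sketch below is only an illustration: `stripe_blocks` is a hypothetical helper, and it assumes the request starts on a stripe boundary (an unaligned request can touch one more block).

```shell
#!/bin/sh
# Number of stripe-sized blocks that one I/O request of a given size spans.
# stripe_blocks is a hypothetical helper: $1 = request size in KB, $2 = stripe size in KB.
stripe_blocks() {
    echo $(( ($1 + $2 - 1) / $2 ))   # integer ceiling of request / stripe
}

stripe_blocks 1024 256   # 1 MB request, 256 KB stripe: prints 4
stripe_blocks 1024 64    # 1 MB request, 64 KB stripe: prints 16
stripe_blocks 4 256      # 4 KB request, 256 KB stripe: prints 1
```

A large request is served with fewer, larger blocks when the stripe is big, while a small request uses only a fraction of one big stripe block, which is why workloads with many small files tend to favor smaller stripes.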

As with software RAID, the initial build of the array starts as soon as the controller receives the command to create it. The process runs in the background, and the logical drive is immediately visible to the BIOS. Disk subsystem performance is likewise reduced until the process completes. If several arrays have been created, select the boot array with the Ctrl + B key combination.

After the array status changed to Optimal, we installed Bitrix24 and ran exactly the same test. Test result:

It immediately becomes clear that the hardware RAID controller accelerates read and write operations to disk media through the use of a cache, which allows faster processing of mass user requests.

Controller management

From within the operating system, the controller is managed with software available for download from the manufacturer’s website. Versions are available for most operating systems and hypervisors:

- Debian

- Ubuntu

- Red Hat Linux

- Fedora

- SuSE Linux

- FreeBSD

- Solaris

- Microsoft Windows

- Citrix XenServer

- VMware ESXi

Driver source code is also available for other Linux distributions. In addition to the drivers and the ARCCONF console utility, the manufacturer offers maxView Storage Manager, a program with a graphical interface for convenient controller management.

With these utilities you can manage logical and physical disks without interrupting the server. They also offer a useful feature called “disk highlighting”. We already mentioned the fifth connector on the cable, used for SGPIO: it plugs directly into the backplane (the board that server drives connect to) and gives the RAID controller full control over the LED indication of each disk.

Keep in mind that backplanes support not only SGPIO, but also I2C. Switching between these modes is most often carried out using jumpers on the backplane itself.

Each device connected to the Adaptec hardware RAID controller is assigned an identifier consisting of a channel number and a physical disk number. Channel numbers correspond to port numbers on the controller.

Replacing a disk is a routine operation, but it requires unambiguous identification of the disk. A mistake here can cost you data and interrupt the server's operation. With a hardware RAID controller, such mistakes are rare.

The procedure is simple:

- Request the list of drives connected to the controller:

arcconf getconfig 1

- Find the disk that needs to be replaced and note its “coordinates” (the Reported Channel,Device(T:L) parameter).

- “Highlight” the disk with the command:

arcconf identify 1 device 0 0

On Supermicro platforms, for example, a disk in normal operation is lit green or blue, while the “highlighted” disk blinks red. This makes it practically impossible to mix up the disks and helps avoid human error.
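The lookup of the “coordinates” can be scripted. The sketch below finds a disk by serial number in a physical-device listing and prints the matching identify command instead of running it. It is an illustration under assumptions: `identify_cmd` is a hypothetical helper, the `Serial number` label and the exact output layout are assumed, and the sample listing and serial numbers are made up.

```shell
#!/bin/sh
# Build the "arcconf identify" command for the disk with a given serial number.
# identify_cmd is a hypothetical helper:
#   $1 = controller number, $2 = serial number,
#   stdin = physical-device listing (for example from "arcconf getconfig 1 pd").
identify_cmd() {
    awk -v ctrl="$1" -v serial="$2" '
        # Return the text after the first " : " separator on a line.
        function value(line,    pos) {
            pos = index(line, " : ")
            return pos ? substr(line, pos + 3) : ""
        }
        /Reported Channel,Device/ {
            # Value looks like "0,1(1:0)": channel, device, then (T:L).
            split(value($0), part, /[,(]/)
            channel = part[1]
            device = part[2]
        }
        /Serial number/ {
            found = value($0)
            sub(/[ \t]+$/, "", found)
            if (found == serial)
                printf "arcconf identify %s device %s %s\n", ctrl, channel, device
        }
    '
}

# Made-up excerpt in the assumed output layout; serial numbers are fictional.
identify_cmd 1 "SN-BBB222" <<'EOF'
      Device #0
         State                              : Online
         Reported Channel,Device(T:L)       : 0,0(0:0)
         Serial number                      : SN-AAA111
      Device #1
         State                              : Online
         Reported Channel,Device(T:L)       : 0,1(1:0)
         Serial number                      : SN-BBB222
EOF
# prints: arcconf identify 1 device 0 1
```

Printing the command rather than executing it leaves the final check to the administrator, which fits an operation where a wrong disk means lost data.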

Configure Caching

Now a few words about write cache modes. With Write Through, the controller reports a successful write to the operating system only after the data has actually been written to the disks. This makes data storage more reliable but does not improve performance.

For maximum speed, use the Write Back option. In this mode, the controller reports a successful I/O operation to the operating system as soon as the data arrives in the cache.

Important - when using Write Back, a BBU or ZMCP module is strongly recommended, because without one a sudden power outage may cause the loss of data that is still in the cache.
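The rule above can be written down as a small decision helper. This is a sketch, not the manufacturer's procedure: `cache_mode_cmd` is a hypothetical function, and the `setcache` mode keywords `wb` and `wt` are our assumption about the arcconf syntax, so check the built-in help of your arcconf version before using the printed command.

```shell
#!/bin/sh
# Pick a write-cache mode from the cache-protection status and print the command to apply it.
# cache_mode_cmd is a hypothetical helper:
#   $1 = controller number, $2 = logical drive number, $3 = "protected" or "unprotected".
# Assumption: arcconf accepts "wb" (write-back) and "wt" (write-through) as setcache modes.
cache_mode_cmd() {
    case "$3" in
        protected)   mode=wb ;;   # BBU or ZMCP present and healthy
        unprotected) mode=wt ;;   # no protection: acknowledge only after data is on disk
        *) echo "unknown protection status: $3" >&2; return 1 ;;
    esac
    echo "arcconf setcache $1 logicaldrive $2 $mode"
}

cache_mode_cmd 1 0 protected     # prints: arcconf setcache 1 logicaldrive 0 wb
cache_mode_cmd 1 0 unprotected   # prints: arcconf setcache 1 logicaldrive 0 wt
```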

Monitoring setup

Monitoring equipment status and receiving notifications is a pressing issue for any system administrator. To set up Zabbix together with an Adaptec RAID controller, we recommend using the listed solutions.

Often the controller status has to be monitored directly from a hypervisor, for example VMware ESXi. This is solved by installing the CIM provider according to the Microsemi instructions.
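For a home-grown check, the core of such monitoring is usually a script that reduces the controller output to a single number. A minimal sketch, assuming a logical-device listing with `Status of logical device` lines (the label and layout are assumptions, the sample is made up, and `count_degraded` is a hypothetical helper):

```shell
#!/bin/sh
# Count logical devices whose status is anything other than "Optimal".
# count_degraded is a hypothetical helper; stdin = logical-device listing
# (for example from "arcconf getconfig 1 ld"). The status label is an assumption.
count_degraded() {
    awk '
        tolower($0) ~ /status of logical device/ {
            pos = index($0, ":")
            status = substr($0, pos + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", status)
            if (status != "Optimal") bad++
        }
        END { print bad + 0 }
    '
}

# Made-up excerpt in the assumed output layout.
count_degraded <<'EOF'
Logical device number 0
   Logical device name                      : data
   RAID level                               : 10
   Status of logical device                 : Optimal
Logical device number 1
   Logical device name                      : scratch
   RAID level                               : 1
   Status of logical device                 : Degraded
EOF
# prints: 1
```

A value of 0 means every array is healthy; anything else can raise a trigger, for example when the script is exposed to the monitoring agent as a custom item.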

Firmware

The need to update RAID controller firmware most often arises when the manufacturer fixes problems found in the device's operation. Although the firmware is available for self-service updates, this operation should be approached with great care, especially on a production system.

If a client needs a different controller firmware version, creating a ticket in our control panel is enough. Our system engineers will flash the RAID controller to the required version at the agreed time and do it as carefully as possible.

Important - you should not perform flashing yourself, since any error can lead to data loss!

Conclusion

Using a hardware RAID controller is justified in most cases where high speed and reliability of the disk subsystem are required.

Selectel system engineers will perform the basic configuration of the disk array on the hardware RAID controller free of charge when you order a custom configuration server. If additional help with configuration is required, we will be happy to assist as part of our administration service. We have also prepared a short cheat sheet on the commands of the arcconf utility for our readers.

Do you use hardware RAID controllers? See you in the comments.