The new algorithm accelerates 200 times the automatic design of neural networks

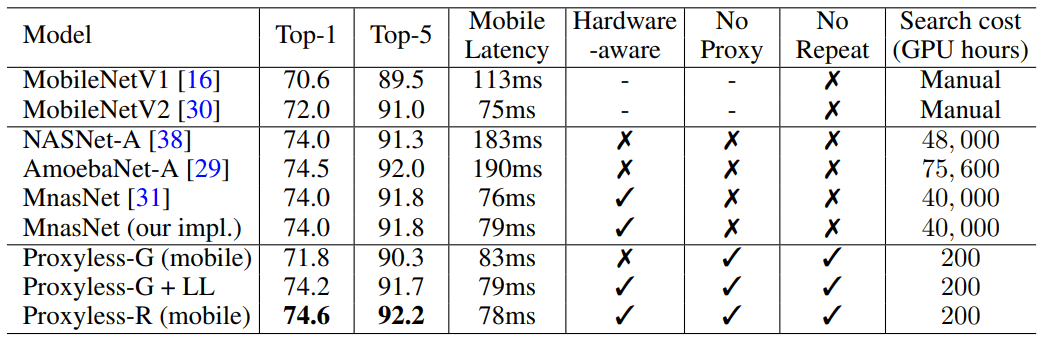

ProxylessNAS directly optimizes the architecture of neural networks for a specific task and equipment, which can significantly increase productivity compared to previous proxy approaches. On an ImageNet dataset, a neural network is designed in 200 GPU hours (200–378 times faster than its counterparts), and the automatically designed CNN model for mobile devices reaches the same level of accuracy as MobileNetV2 1.4, working 1.8 times faster.

Researchers at the Massachusetts Institute of Technology have developed an efficient algorithm for the automatic design of high-performance neural networks for specific hardware, writes the publication MIT News .

Algorithms for the automatic design of machine learning systems are a new field of research in the field of AI. This technique is called neural architecture search (NAS) and is considered a difficult computational task.

Automatically designed neural networks have a more accurate and efficient design than those developed by humans. But the search for neural architecture requires really huge calculations. For example, the modern NASNet-F algorithm, recently developed by Google to run on GPUs, takes 48,000 hours of GPU computing to create one convolutional neural network, which is used to classify and detect images. Of course, Google can run hundreds of GPUs and other specialized hardware in parallel. For example, on a thousand GPUs this calculation will take only two days. But not all researchers have such opportunities, and if you run the algorithm in the Google computing cloud, then it can fly into a pretty penny.

MIT researchers have prepared an article for the International Conference on Learning Representations, ICLR 2019 , which will be held from May 6 to 9, 2019. The article ProxylessNAS: Direct Neural Architecture Search on Target Task and Hardware describes the ProxylessNAS algorithm that can directly develop specialized convolutional neural networks for specific hardware platforms.

When run on a massive set of image data, the algorithm designed the optimal architecture in just 200 hours of GPU operation. This is two orders of magnitude faster than the development of the CNN architecture using other algorithms (see table).

Researchers and companies with limited resources will benefit from the algorithm. A more general goal is to “democratize AI,” says songwriter Song Han, an assistant professor of electrical engineering and computer science at MIT's Microsystems Technology Laboratories.

Khan added that such NAS algorithms will never replace the intellectual work of engineers: "The goal is to offload the repetitive and tedious work that comes with designing and improving the architecture of neural networks."

In their work, researchers found ways to remove unnecessary components of a neural network, reduce computational time, and use only part of the hardware memory to run the NAS algorithm. This ensures that the CNN developed works more efficiently on specific hardware platforms: CPU, GPU and mobile devices.

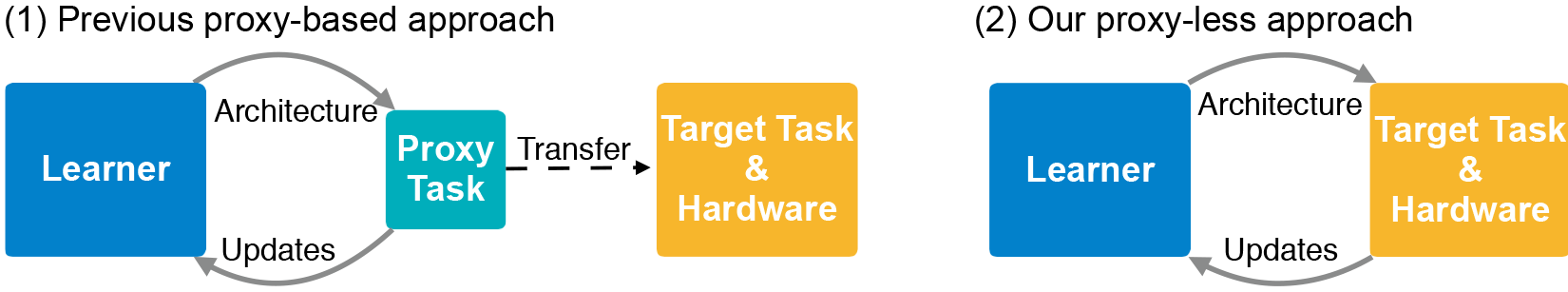

The CNN architecture consists of layers with adjustable parameters called “filters” and possible relationships between them. Filters process image pixels in square grids - such as 3 × 3, 5 × 5 or 7 × 7 - where each filter covers one square. In fact, the filters move around the image and combine the colors of the pixel grid into one pixel. In different layers, filters are of different sizes, which are connected in different ways to exchange data. The CNN output produces a compressed image combined from all filters. Since the number of possible architectures - the so-called "search space" - is very large, the use of NAS to create a neural network on massive sets of image data requires huge resources. Typically, developers run NAS on smaller data sets (proxies) and transfer the resulting CNN architectures to the target. However, this method reduces the accuracy of the model. In addition, the same architecture applies to all hardware platforms, resulting in performance issues.

MIT researchers trained and tested the new algorithm on the task of classifying images directly in the ImageNet dataset, which contains millions of images in a thousand classes. First, they created a search space that contains all the possible “paths” for CNN candidates so that the algorithm finds the optimal architecture among them. To fit the search space into the GPU memory, they used a method called path-level binarization, which saves only one path at a time and saves memory by an order of magnitude. Binarization is combined with path-level pruning, a method that traditionally studies which neurons in a neural network can be safely removed without harming the system. Only instead of removing neurons, the NAS algorithm removes entire paths, completely changing the architecture.

In the end, the algorithm cuts off all unlikely paths and saves only the path with the highest probability - this is the ultimate CNN architecture.

The illustration shows samples of neural networks for classifying images that ProxylessNAS has developed for GPUs, CPUs, and mobile processors (top to bottom, respectively).