Who can be called a “pixel shader boss and a pixel commander”?

Denis Radin works at Evolution Gaming on photorealistic web games built with React and WebGL, and many people know him simply as Pixels Commander.

In December, at our HolyJS conference, he gave a talk on how GLSL can make UI components better than “regular JavaScript” can. We have now prepared a text version of the talk for Habr, so read on below the cut! We are also attaching the video of the talk:

First, a question for the audience: how many languages are well supported on the web? (Voice from the audience: “Not a single one!”)

Well, let's say languages in the browser. Three? Let's say there are four: HTML, CSS, JS, and SVG. SVG can also be considered a separate declarative language; it's still not HTML.

But actually there are even more. There's VRML, but it's dead, so it doesn't count. And there's GLSL (“OpenGL Shading Language”), which is a very special language for the web.

It is special because the others (JS, CSS, HTML) originated on the web and then spread triumphantly from web pages to other platforms (mobile, for example). GLSL, by contrast, was born in the world of computer graphics, in C++, and came to the web from there. What's great about it is that it works wherever OpenGL works, so once you've learned it, you can use it almost anywhere (in Unity, Swift, Java, and so on).

GLSL is what powers the crazy special effects in computer games. I also like that it lets you build interesting and unusual UI components, which we'll talk about later. And it is a technology for parallel computing, which means you can even mine cryptocurrencies with GLSL. Interested?

History

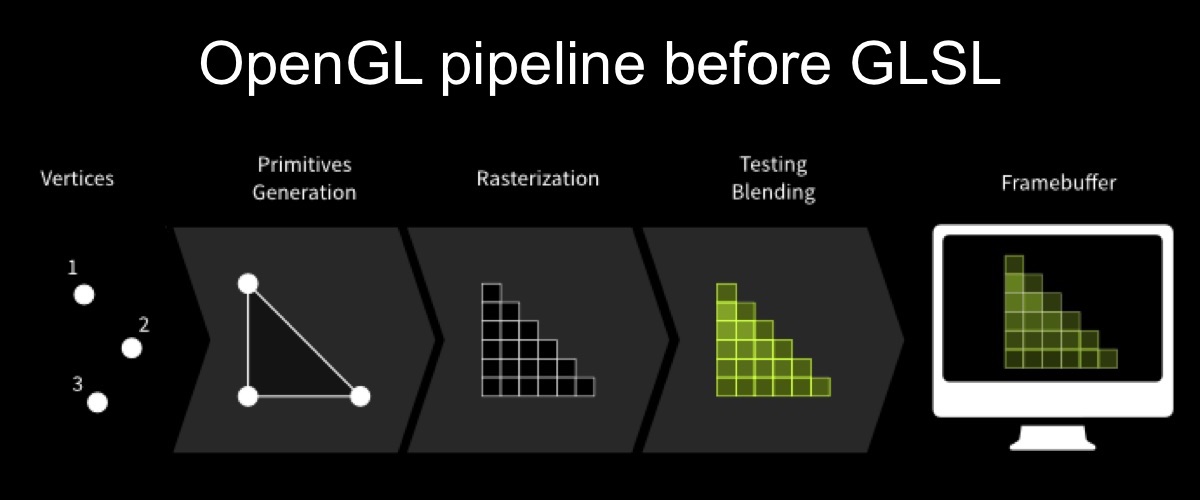

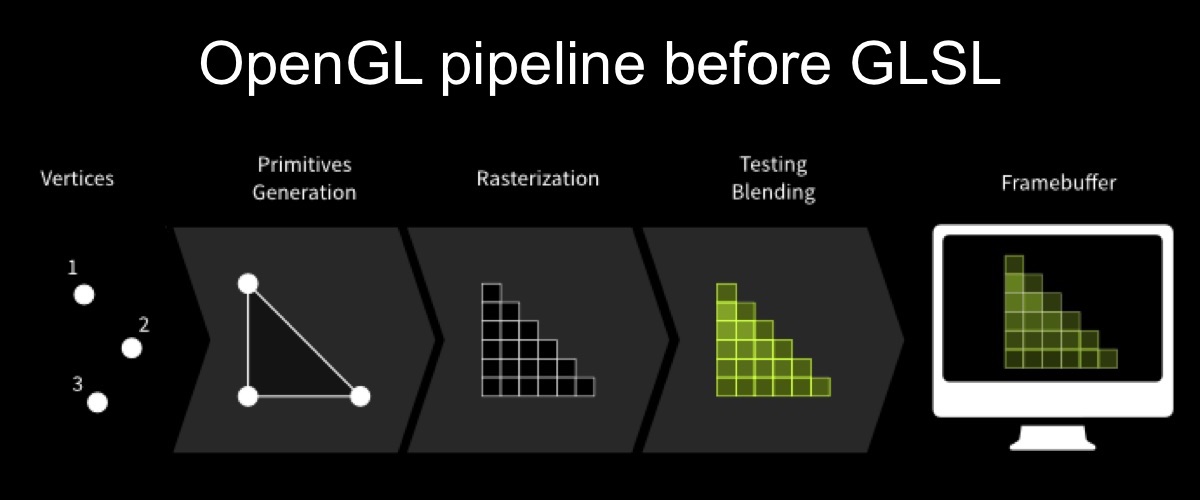

Let's start with the history of GLSL. When and why did it appear? This diagram shows the OpenGL rendering pipeline:

Initially, in the first version of OpenGL, the rendering pipeline looked like this: vertices are fed in, primitives are assembled from the vertices, the primitives are rasterized, the result is clipped, and finally the framebuffer is output.

The problem is that this pipeline is not customizable. It is rigidly defined: you can upload textures into it, but you can't do anything special for a particular need.
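To make that limitation concrete, here is a toy CPU-side model of a fixed-function pipeline in TypeScript. It is an illustration only, not real OpenGL code: `fixedPipeline`, `Texture` and the other names are hypothetical, every primitive is simplified to a single point, and the only thing the caller controls is which texture gets sampled.

```typescript
type Vec2 = { x: number; y: number };
type Color = [number, number, number];

// The single "knob" this toy fixed pipeline exposes: a texture lookup by (u, v).
type Texture = (u: number, v: number) => Color;

// Hypothetical model of a fixed-function pipeline: every stage is hard-wired.
function fixedPipeline(
  vertices: Vec2[],
  texture: Texture,
  width: number,
  height: number,
): Map<string, Color> {
  const framebuffer = new Map<string, Color>();
  // Primitive assembly: in this toy model each vertex simply becomes a point.
  for (const p of vertices) {
    // Rasterization: snap the point to a pixel.
    const x = Math.floor(p.x);
    const y = Math.floor(p.y);
    // Clipping: drop anything that falls outside the framebuffer.
    if (x < 0 || y < 0 || x >= width || y >= height) continue;
    // Texturing is built in: the pipeline samples the texture and that's all.
    // There is no hook for custom per-vertex or per-pixel logic.
    framebuffer.set(`${x},${y}`, texture(x / width, y / height));
  }
  return framebuffer;
}
```

For example, `fixedPipeline([{ x: 1.5, y: 2.2 }], (u, v) => [u, v, 0], 4, 4)` writes one pixel at `"1,2"`. Anything beyond "which texture" (fog, distortion, a custom color formula) has no place to go in this design.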

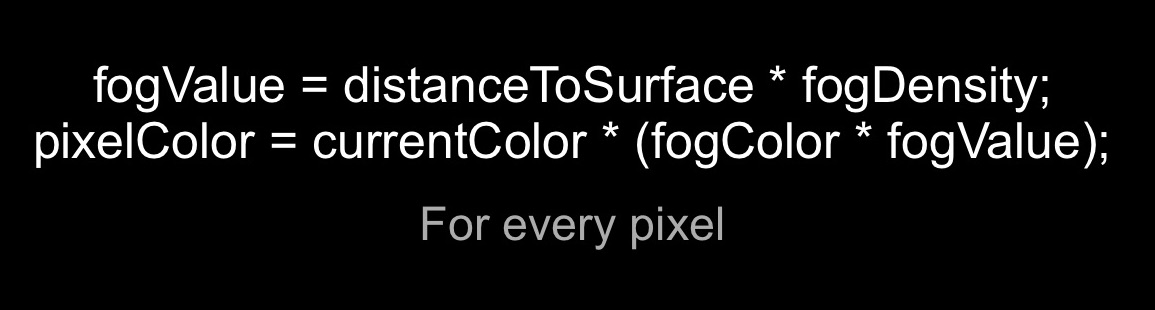

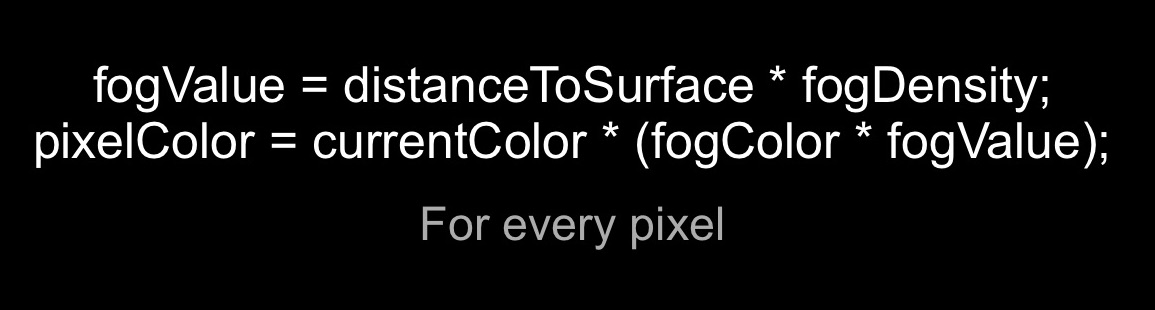

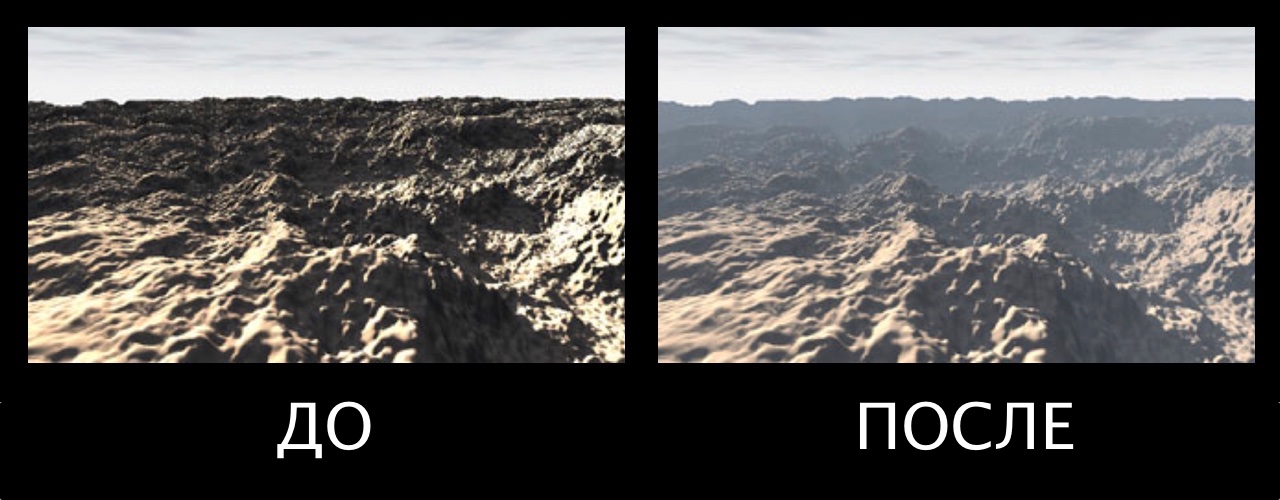

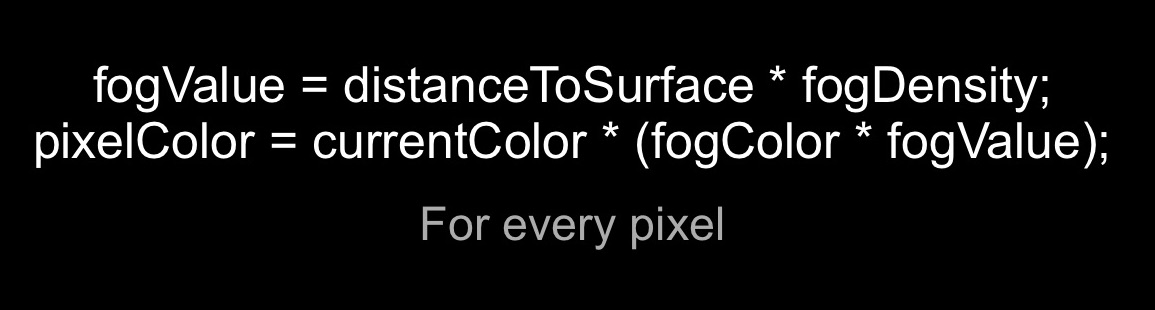

Let's look at the simplest example: drawing fog. Here is a scene. It consists entirely of vertices with textures applied to them. In the first version of OpenGL, it looked like this:

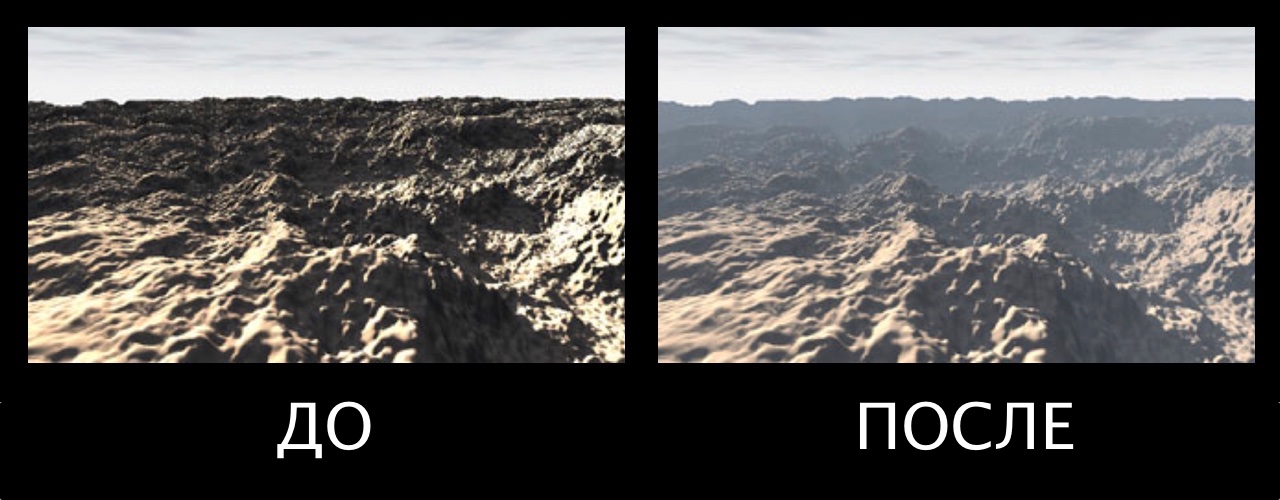

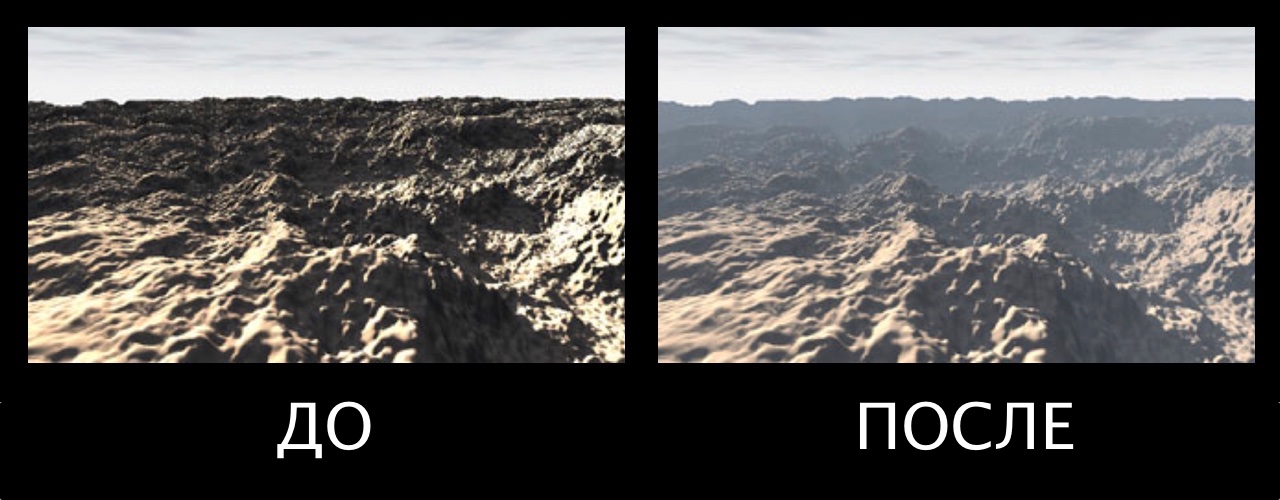

How can fog be made? First we compute the fog value: the distance to the camera multiplied by the fog density. Then the final pixel color is the current pixel color blended with the fog color, weighted by that fog value. If we perform this operation for every pixel on the screen, we get a scene covered in fog.
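The per-pixel fog calculation can be sketched in TypeScript. This is a CPU-side illustration, not code from the talk: `applyFog`, `fogImage`, `mix` and `clamp01` are hypothetical names. The blend is linear, the usual way fog is applied; `mix` follows the definition of GLSL's built-in `mix(x, y, a) = x * (1 - a) + y * a`, and clamping the fog value to [0, 1] is an assumption added so the blend factor stays in range.

```typescript
type RGB = [number, number, number];

// Hypothetical helper with the same definition as GLSL's built-in mix(x, y, a).
function mix(from: RGB, to: RGB, t: number): RGB {
  return [
    from[0] * (1 - t) + to[0] * t,
    from[1] * (1 - t) + to[1] * t,
    from[2] * (1 - t) + to[2] * t,
  ];
}

// Assumption: clamp the fog value to [0, 1] so it is a valid blend factor.
function clamp01(value: number): number {
  return Math.min(1, Math.max(0, value));
}

// Fog for one pixel: fogValue = distance to the camera * fog density,
// then the pixel color is blended toward the fog color by that amount.
function applyFog(pixel: RGB, fogColor: RGB, distance: number, density: number): RGB {
  const fogValue = clamp01(distance * density);
  return mix(pixel, fogColor, fogValue);
}

// The same formula applied to every pixel: the work a fragment shader would do
// in parallel on the GPU, done here sequentially on the CPU.
function fogImage(pixels: RGB[], distances: number[], fogColor: RGB, density: number): RGB[] {
  return pixels.map((pixel, i) => applyFog(pixel, fogColor, distances[i], density));
}
```

With a white fog and density 0.1, a black pixel 5 units from the camera ends up mid-gray (`[0.5, 0.5, 0.5]`), while anything 10 or more units away is fully fogged.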

GLSL shaders appeared in 2004 in OpenGL 2.0, and this was the biggest breakthrough in the history of OpenGL. OpenGL itself dates back to the early 1990s, so this next major version arrived more than a decade later.

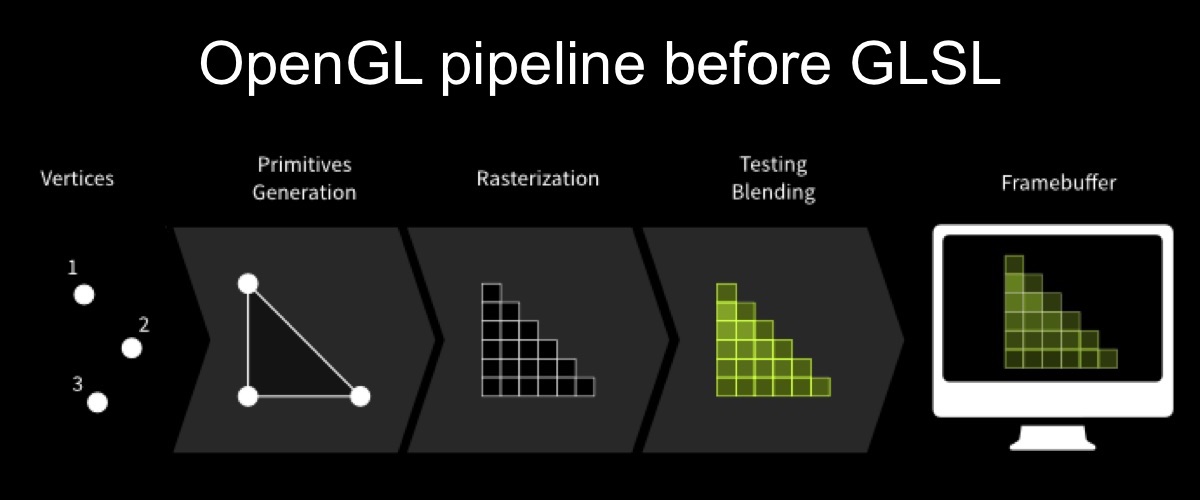

From then on, the rendering pipeline looked like this:

The vertices are fed in, and the vertex shader runs first, which lets you change the geometry of the object. Then the primitives are assembled and rasterized, and then the fragment shader runs (a “fragment” is essentially a pixel, and in English the term “pixel shader” is also common). Finally, the result is clipped and displayed.
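The difference from the fixed pipeline can be shown with a toy CPU-side model in TypeScript where the two programmable stages are plain callbacks. This is a hypothetical illustration, not WebGL: `programmablePipeline`, `VertexShader` and `FragmentShader` are invented names, each primitive is simplified to a point, and real GPUs run these stages in parallel and clip before rasterizing, while this model just drops out-of-bounds pixels.

```typescript
type Vec2 = { x: number; y: number };
type Color = [number, number, number];
interface Fragment { x: number; y: number; u: number; v: number }

// Hypothetical stand-ins for GLSL programs: user code plugged into the pipeline.
type VertexShader = (vertex: Vec2) => Vec2;
type FragmentShader = (fragment: Fragment) => Color;

function programmablePipeline(
  vertices: Vec2[],
  vertexShader: VertexShader,
  fragmentShader: FragmentShader,
  width: number,
  height: number,
): Map<string, Color> {
  const framebuffer = new Map<string, Color>();
  // Vertex stage: user code may move every vertex, i.e. change the geometry.
  const transformed = vertices.map(vertexShader);
  // Primitive assembly: in this toy model each vertex becomes a point.
  for (const p of transformed) {
    // Rasterization: snap the point to a pixel, producing one fragment.
    const x = Math.floor(p.x);
    const y = Math.floor(p.y);
    // Discard fragments that fall outside the framebuffer.
    if (x < 0 || y < 0 || x >= width || y >= height) continue;
    // Fragment stage: user code decides the final color of each pixel.
    framebuffer.set(`${x},${y}`, fragmentShader({ x, y, u: x / width, v: y / height }));
  }
  return framebuffer;
}

// Usage: shift geometry one pixel to the right and color each pixel by its u coordinate.
const demo = programmablePipeline(
  [{ x: 0.5, y: 0.5 }, { x: 3.5, y: 0 }],
  (v) => ({ x: v.x + 1, y: v.y }),
  (f) => [f.u, 0, 0],
  4,
  4,
);
```

In `demo`, the first point lands on pixel `"1,0"` with color `[0.25, 0, 0]`, and the second is pushed off the 4×4 framebuffer and discarded. The fog formula, for instance, would simply become a `fragmentShader` callback.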

Okay, let's talk about some of GLSL's features, because it has a great many things that are unusual and sound strange, at least to JS developers.

Well, first, what matters to us as web developers: GLSL is part of the WebGL specification. The gateway to GLSL will be