Out of the Box Hybrid Storage for Homes and High Availability from Synology

A few years ago, when choosing my first home storage, I looked toward “boxed solutions” because I did not know how to build a storage system from open source software and a regular PC. My choice fell on a 2-disk NAS, the Shuttle KD20. It was compact and quiet. RAID1 provided the necessary reliability, and I had no need for high performance or advanced functionality at that time. This NAS worked for almost 4 years, until one day the fan's power line died. The disks heated up to 60 degrees and miraculously survived. I soldered the fan directly to the motherboard, but started looking for a replacement.

As the second NAS, I chose a 4-disk Synology. The tasks remained the same, so I did not particularly delve into the functionality of DiskStation Manager (DSM). This went on until I decided to install home video surveillance on several channels. Although Synology has its own video surveillance service, I settled on Macroscop because I needed advanced functionality and serious analytics. Fortunately, I discovered a new package in DSM, Virtual Machine Manager: a hypervisor with which I created a virtual machine and installed Windows and Macroscop on it. The system worked fine for recording; the built-in 1.6 GHz Pentium struggled, but coped with the storage and virtual machine tasks. But as soon as any analytics were activated, the service crashed due to processor overload. As a result, I had to start looking for a separate budget Windows device with adequate performance for a video surveillance server, since a Synology of the required level is not cheap.

At that very moment, I once again stumbled on articles about installing DSM on regular hardware, and my XPenology project began... The cost of the necessary components for the new storage was comparable to the cost of the Intel NUC I had been eyeing for the video surveillance server. Therefore, I decided to give the existing Synology to my brother (and use it as a remote backup), and build myself an all-in-one DSM-based system.

As a platform, I chose the well-known Chenbro SR30169 case with 4 hot-swap bays. I selected the motherboard by the number of LAN ports and the form factor, and the only recent model I found was the ASRock Z370M-ITX/ac. It has two network interfaces, support for 8th-generation processors, and most importantly 6 x SATA on board, which means an SSD can also be connected for read cache. The processor is an i3-8100 with 4 cores, with 16 GB of memory (with a margin for virtual machines). The disks are left over from the previous Synology: 4 x 6 TB.

Platform assembly

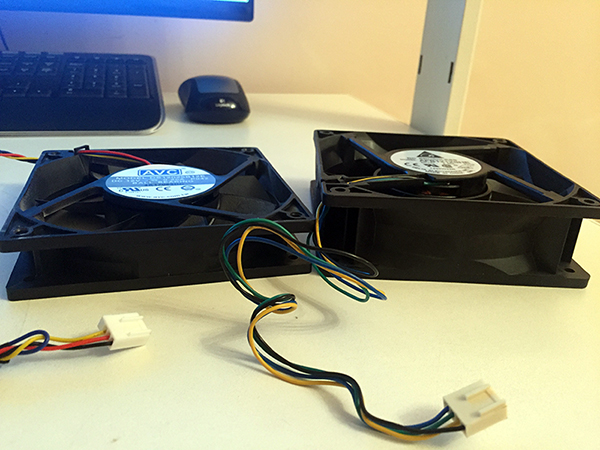

On a desk, this case cools 4 disks in the hot-swap bays well enough. But in a cabinet under load, the disk temperature reached 48-50 degrees. Therefore, I decided to replace the stock 120 mm fan with a more efficient one.

A 1.5 A fan lowered the drive temperature to 36-40 degrees. Once I finish the exhaust from the cabinet, I am sure the temperature will drop significantly further.

I installed one 2.5" SSD for the cache on the standard mount on the side of the drive cage. Its temperature did not exceed 30-32 degrees, even though it is not actively cooled.

As a drive for DSM packages and a fast volume, I installed an M.2 SATA SSD in the slot on the motherboard. The drive heated up to 50 degrees despite direct airflow. I solved the problem by installing several heatsinks on it, and the temperature dropped by 10 degrees.

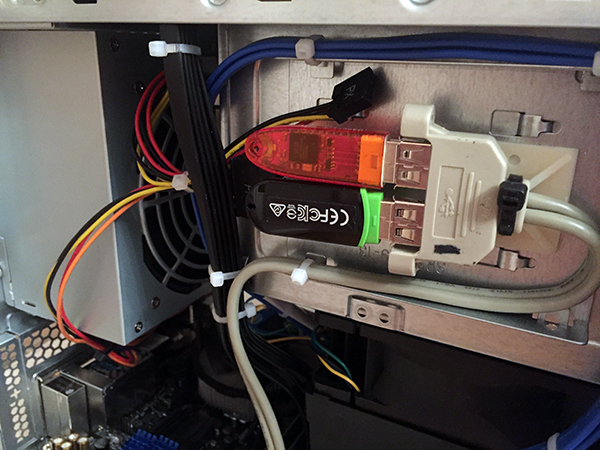

I have 2 constantly active USB devices: the XPenology bootloader and the Macroscop Guardant key. In order not to occupy the external connectors, I attached these devices inside the case.

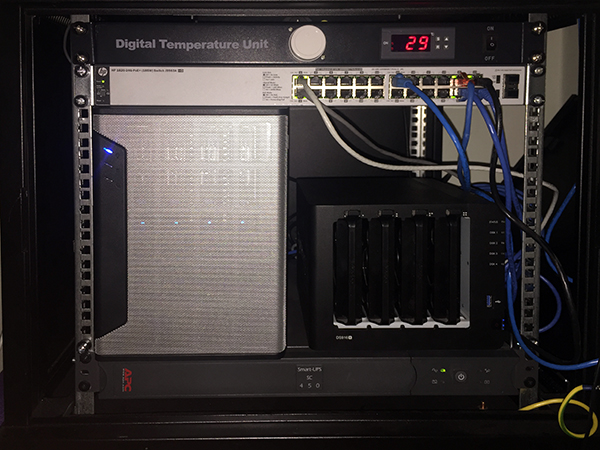

The finished storage, with high processor performance and the smallest possible size, was a tight squeeze, but it fit into the free 6 units.

Bootloader preparation

To install DSM, you need a bootloader that presents the hardware as Synology storage.

There are plenty of instructions on the Internet on this subject, so I won’t go into details, but if you want, I can describe the preparation of a boot device in more detail.

After setting a valid serial / MAC pair and other parameters, the image for the DS3615xs is written to any device you can boot from. You can use a SATA DOM, but since I have only a handful of SATA ports, I settled on the classic option, a USB flash drive.

In the BIOS, you need to remove all boot devices except USB, and enable the HotPlug function in the SATA settings so that new disks are detected “hot”, without waiting for a reboot.

Launch

At the first start, we search for the device using find.synology.com. If that does not work, download Synology Assistant from the official site and scan the network with it.
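If neither tool finds the box, a rough manual check is also possible. The sketch below is only an illustration, not something from the original setup: it assumes the DSM web interface listens on its default HTTP port 5000, and the subnet in the usage note is a made-up example.

```python
import socket
from ipaddress import ip_network

DSM_HTTP_PORT = 5000  # assumed: DSM's default HTTP port for the web interface


def port_open(host: str, port: int, timeout: float = 0.3) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, unreachable, or timed out: treat all of them as "closed".
        return False


def find_candidates(cidr: str, port: int = DSM_HTTP_PORT) -> list[str]:
    """List the hosts in a subnet that accept TCP connections on the port."""
    return [str(ip) for ip in ip_network(cidr).hosts() if port_open(str(ip), port)]
```

Calling `find_candidates("192.168.1.0/24")` (a hypothetical home subnet) returns the addresses worth opening in a browser. An open port 5000 is only a hint, since other services can use that port too.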

After connecting to the web interface at the storage address, the system offers to install DSM. If everything was done correctly at the bootloader preparation step, DSM can be installed automatically straight from the official site rather than from an image file.

The system formats all installed drives and creates a DSM system partition on each of them. Thus, by moving the disks to another Synology or Xpenology storage, you can migrate while preserving all the data and system settings.

Before implementing everything at home, I practiced for a long time on various platforms. The system migrated without problems from a computer based on a Celeron J1900 to a server with 2 x E5-2680V4, and then to an ancient machine based on 2 x E5645. If there are virtual machines, you must of course enable processor compatibility mode before installing the OS in the virtual machine. This probably reduces performance, since the virtual machine sees a generic processor rather than the real one. But in return, migration goes smoothly and without a BSOD.

Customization

Working through the Xpenology bootloader imposes almost no restrictions compared to the original device. One difference is the lack of the QuickConnect function: there is no remote access to the storage through a Synology account. But I have an external IP, so this restriction is not relevant in my case.

The processor model and the number of cores are also displayed incorrectly: this information is hard-coded in the bootloader and will always look like that of the DS3615xs: INTEL Core i3-4130 / 2 cores. The frequency, however, is shown as the actual one. This quirk does not prevent the real number of cores from being detected and used by the hypervisor. But there are limitations: Virtual Machine Manager will see no more than 8 cores in the system. Therefore, installing DSM on configurations with many cores is pointless.

With the amount of RAM everything is in order: the entire amount was detected and used (in practice, up to 48 GB).

The integrated network controllers are detected without problems, but Wi-Fi was not detected. I suppose this can be solved by adding drivers, but unfortunately my knowledge of Linux is not enough to do that. If any reader of this article can describe how to add wireless controller drivers to the build, I will be grateful.

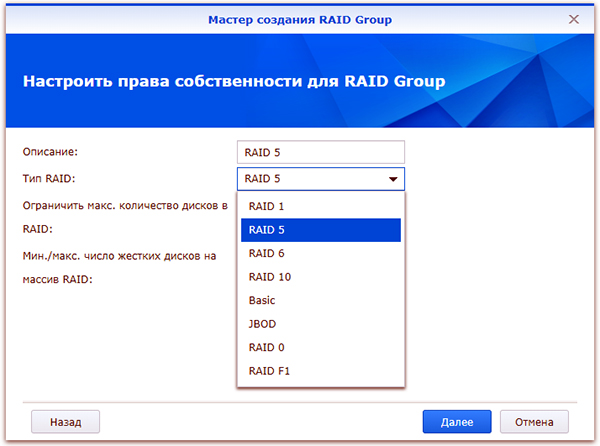

Before you can use the storage, you must create RAID groups. After switching to my first Synology, I kept the “mirror” and set up the 2 additional disks as hot spares. When switching to Xpenology, I chose RAID5 + hot spare, but then added the 4th drive to the array to make it RAID6. It spins and heats up anyway, so it might as well be useful.
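To make the trade-off between these three layouts concrete, here is a small back-of-the-envelope sketch. It assumes the 4 x 6 TB disks mentioned earlier and uses the textbook capacity formulas. It ignores filesystem and DSM system partition overhead, so real figures will be somewhat lower.

```python
def usable_tb(level: str, disks: int, disk_tb: float) -> float:
    """Usable capacity in TB, ignoring filesystem and system overhead."""
    if level == "RAID1":
        return disk_tb                  # all members hold the same data
    if level == "RAID5" and disks >= 3:
        return (disks - 1) * disk_tb    # one disk's worth of parity
    if level == "RAID6" and disks >= 4:
        return (disks - 2) * disk_tb    # two disks' worth of parity
    raise ValueError(f"unsupported layout: {level} on {disks} disks")


def failures_survived(level: str, disks: int) -> int:
    """Simultaneous disk failures the array survives (hot spares not counted)."""
    return {"RAID1": disks - 1, "RAID5": 1, "RAID6": 2}[level]


# The three layouts from the text: (label, level, disks in the array, hot spares)
layouts = [
    ("mirror + 2 hot spares", "RAID1", 2, 2),
    ("RAID5 + hot spare", "RAID5", 3, 1),
    ("RAID6", "RAID6", 4, 0),
]

for label, level, disks, spares in layouts:
    print(f"{label:22} {usable_tb(level, disks, 6.0):5.1f} TB usable, "
          f"survives {failures_survived(level, disks)} simultaneous failure(s), "
          f"{spares} spare(s)")
```

Under these assumptions, RAID5 + hot spare and RAID6 give the same usable space from the same four disks, but RAID6 survives two simultaneous failures instead of one. That is the sense in which the fourth disk is put to use.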

Since DSM provides both file and block access, you need to determine the type of future storage before creating a RAID array.

I immediately created several LUNs for use on my home mini-PC and laptop. A file share is good, but a block-access disk for installing programs is even better.

Next, you create the required number of LUNs and volumes on the RAID groups, shared folders, and so on. There is no point in describing the well-known functionality of Synology. All available packages, with descriptions of their functions, are listed on the official website.

The following packages were relevant for my tasks:

Virtual Machine Manager - the whole Xpenology idea started because of it.

The package has more advanced functionality than I use, so I decided to test how it works on several nodes in High Availability Cluster mode.

But I was soon disappointed. A cluster requires 3 nodes: active, passive, and storage. Automatic migration of virtual machines when the active node fails is supported only for Synology Virtual DSM virtual machines; it will not work with Windows or other operating systems. What the point is of building a cluster of virtual DSMs on top of DSMs, I still don’t understand...

In general, this module turned out to be nothing more for me than a plain hypervisor.

VPN Server - supports PPTP, OpenVPN and L2TP / IPSec

PPTP, as far as I could find out, supports only one connection for free; I use it to communicate with the remote Synology for backup.

I use OpenVPN to connect from my iPhone and work computer, as well as to connect a LUN remotely via iSCSI.

Hyper Backup is a convenient, functional and, at the same time, concise backup service.

You can back up both folders and LUNs. A file backup can be sent to another Synology, to another NAS, or to the cloud. A LUN can only be backed up locally or remotely to a Synology device. Therefore, if you need to back up a LUN to the cloud, as I understand it, you can first back it up to a local folder, and then back that folder up to the cloud.

I use 3 types of backup:

- Backup to the remote Synology - everything is copied there except the backup folder (it contains the full backup of the remote Synology).

- Backup of only the most important data to Yandex.Disk (via WebDAV)

- Backup to Google Drive (it is on the list of supported cloud services)

The possibilities of file backup are quite wide.

After you select a method and enter the authorization data for the remote device, you mark the folders to back up.

Next, configure the schedule and backup parameters.

If you choose encryption, you will need to enter a password to access the backup. After the task is created, a key file is downloaded automatically; it can replace a forgotten password during data recovery.

Client-side encryption, in my opinion, is very useful when backing up to a public cloud. While Google can do anything with an archive of your photos, an encrypted backup of the same photos will be of little use to anyone.

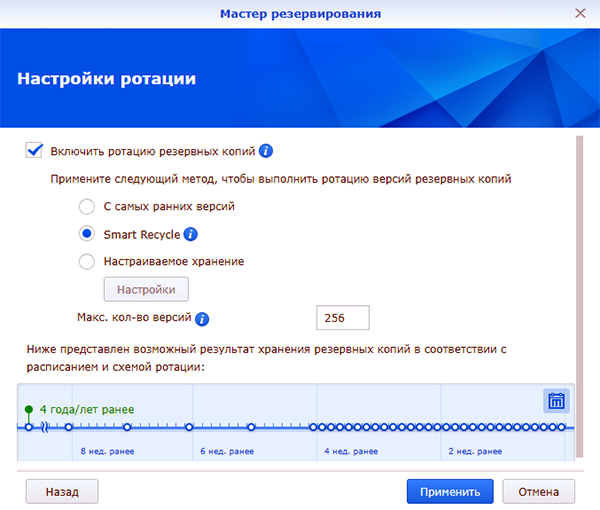

Next, backup rotation is enabled and configured.

I use Smart Recycle mode, but you can set your own rotation schedule for incremental backup copies.

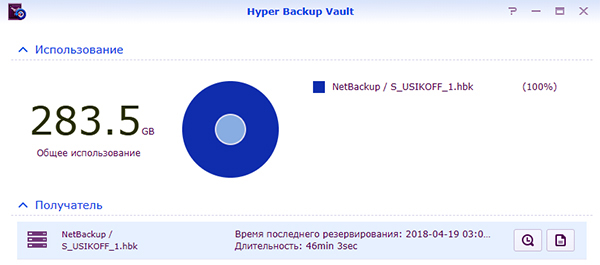
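To give a feel for what such a rotation does, here is a simplified sketch of a generational retention policy. It is not Synology's actual algorithm: the thresholds (hourly versions for the last 24 hours, daily versions for the last 30 days, weekly versions beyond that) and the function name are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta


def versions_to_keep(versions: list[datetime], now: datetime) -> list[datetime]:
    """Pick the versions a simplified hourly/daily/weekly policy would retain."""
    kept: dict[tuple, datetime] = {}
    for ts in sorted(versions):
        age = now - ts
        if age <= timedelta(hours=24):
            bucket = ("hour", ts.year, ts.month, ts.day, ts.hour)
        elif age <= timedelta(days=30):
            bucket = ("day", ts.year, ts.month, ts.day)
        else:
            iso = ts.isocalendar()
            bucket = ("week", iso[0], iso[1])  # ISO year and ISO week number
        # Versions are visited oldest first, so the earliest one in each
        # bucket is the one that stays.
        kept.setdefault(bucket, ts)
    return sorted(kept.values())
```

Every version that is not in the returned list would be a candidate for deletion. The older the backups get, the coarser the grid of versions that remains, which is what keeps the total number of copies bounded.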

The Hyper Backup module works only in tandem with its server-side counterpart, the Hyper Backup Vault module.

This service accepts remote copies and is responsible for their storage.

Data, applications and settings can be restored either on the current system (if the array is damaged, data is lost, etc.) or on a new Synology or Xpenology, whether identical or completely different. To restore, when creating a backup task you must specify that this is not a new task but a connection to an existing one. Hyper Backup will see the backup on the remote machine and offer to select a version by date and time.

At the moment, this is all the functionality that I managed to learn and use.

Home Xpenology continues to work without problems: DSM and packages are updated periodically, there is computing power to spare, and it cost me 1.5 times less than a Synology DS916+.

Synology High Availability Cluster

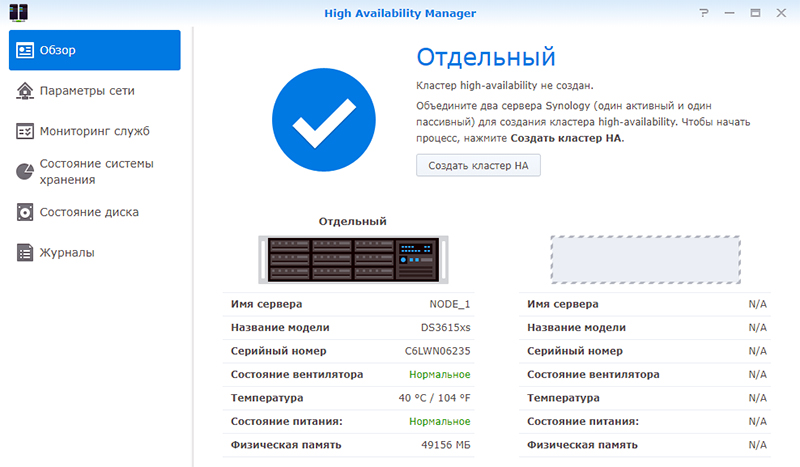

I was interested in the High Availability Manager service, which turned out to be incompatible with the Virtual Machine Manager service, since it also builds a cluster, but in a different way.

For testing, I brought up Xpenology on two servers based on 2 x Xeon E5645. The servers for this cluster must be identical, the IP addresses must be static, and the second port of each server is connected directly to the other server (a switch also works, but a direct link is more efficient).

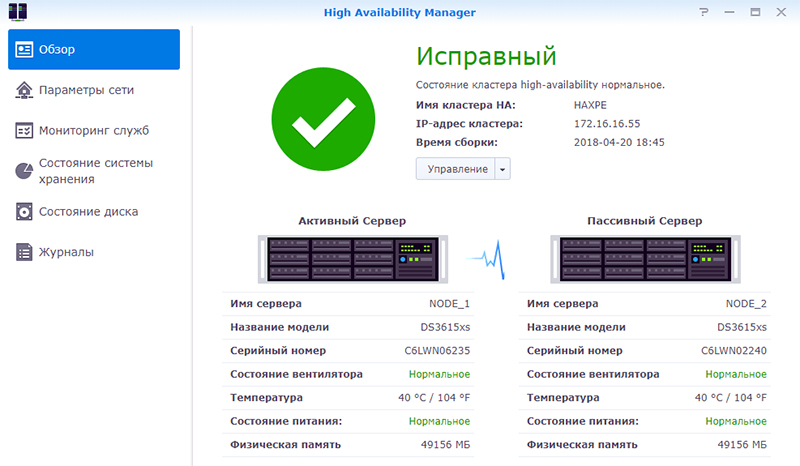

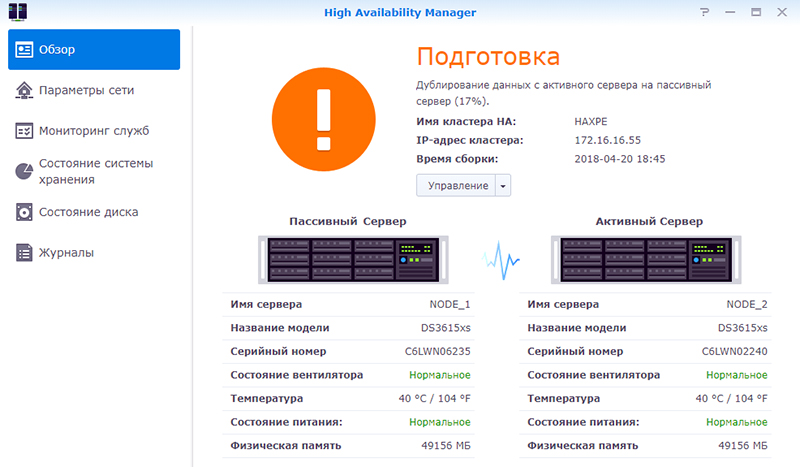

After the second node is connected, the Heartbeat connection is tested. Next, the cluster name and static local address are assigned. While the nodes are being merged, the configuration of the passive node is brought in line with the active one, and applications, storage and data are synchronized. Both nodes become unreachable over the network, and once created, the cluster is available at its new address.

Depending on the volume of existing data, a full synchronization of the arrays can take a long time, but the cluster is available for operation, without fault tolerance, 10 minutes after the start of the merge.

After the second node becomes a full copy of the first, high availability mode is activated.

To test the fault tolerance, I created a LUN, connected it via iSCSI and started a heavy read and write job from my PC, along with video playback.

While this was running, I turned off the main server. The LUN did not drop and the copy process was not interrupted, but it paused for 10-15 seconds - this is how long it took the passive server to take over the active role and start the failed services. Playback also paused for a few seconds. After the short downtime, data copying and video playback continued as normal without having to be restarted. In most cases, such a “failure” will not be noticeable to users, unless video is being played without buffering or other processes that require continuous access to the storage are running.
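I timed the pause by eye, but it could also be measured. The sketch below is a hypothetical helper, not the tool used in the test: it repeats a small synchronous write and reports the longest gap between two successful writes. The probe file path would be any file on the iSCSI-attached volume.

```python
import os
import time
from typing import Callable


def write_probe(path: str) -> Callable[[], None]:
    """Build an operation that appends 4 KiB to `path` and forces it to disk."""
    def probe() -> None:
        with open(path, "ab") as f:
            f.write(b"x" * 4096)
            f.flush()
            os.fsync(f.fileno())
    return probe


def longest_stall(operation: Callable[[], None], duration_s: float,
                  interval_s: float = 0.1) -> float:
    """Run `operation` repeatedly and return the longest gap between successes."""
    start = time.monotonic()
    last_ok = start
    worst = 0.0
    while time.monotonic() - start < duration_s:
        try:
            operation()
        except OSError:
            pass  # storage unavailable right now: keep retrying
        else:
            now = time.monotonic()
            worst = max(worst, now - last_ok)
            last_ok = now
        time.sleep(interval_s)
    # Count a stall that is still in progress when the run ends.
    return max(worst, time.monotonic() - last_ok)
```

Started as `longest_stall(write_probe(path), duration_s=120)` shortly before the active node is powered off, it should return a value close to the observed failover pause, whether the write blocks or fails during the switch.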

After the first node is turned back on, it goes into passive server mode. Background synchronization starts, after which high availability mode is restored.

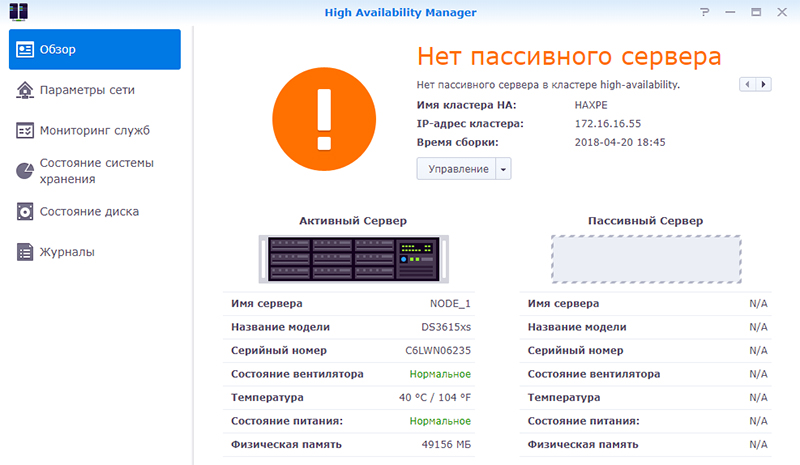

To replace a node after a complete failure, you need to unbind the passive server.

The procedure for binding a new passive server is similar to creating a cluster: first synchronization, then High Availability. The only exception is that the node is added from the cluster interface rather than from the active server.

The downside of this solution is high redundancy, but the upside is genuine fault tolerance.

The main expense is the disks, but for RAID10 lovers this is just the thing! Mirror two nodes with RAID5 or RAID6, and the number of disks will be almost the same. But fault tolerance will increase many times over.
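The “almost the same” can be checked with simple arithmetic. This is a rough sketch under stated assumptions: all disks are the same size, RAID10 is a plain stripe of mirrored pairs, and the layout labels `HA+RAID5` and `HA+RAID6` are names invented here for two identical nodes in a cluster.

```python
import math

# Extra parity disks per array on top of the data disks.
PARITY_DISKS = {"RAID10": 0, "HA+RAID5": 1, "HA+RAID6": 2}


def disks_needed(usable_tb: float, disk_tb: float, layout: str) -> int:
    """Total number of disks required for the given usable capacity."""
    # Disks' worth of data; all three layouts need at least two.
    data = max(2, math.ceil(usable_tb / disk_tb))
    # Everything is doubled: mirror pairs in RAID10, or a second node in HA.
    return 2 * (data + PARITY_DISKS[layout])


for target in (12, 60):
    counts = {name: disks_needed(target, 6.0, name) for name in PARITY_DISKS}
    print(f"{target} TB usable on 6 TB disks: {counts}")
```

In this model, the two-node cluster costs a fixed 2 extra disks with RAID5 and 4 with RAID6 compared to RAID10, regardless of capacity. The relative difference therefore shrinks as the array grows, while the cluster additionally survives the loss of a whole node.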

Clearly, this functionality is not unique, but it works “out of the box” and requires no special experience or knowledge, only a web interface. And considering that Xpenology works on any hardware, it turns out to be a very interesting, productive and fault-tolerant solution for personal use.

Thanks for your attention!

Does it make sense to use Xpenology?

- 24% Of course not! Open source solutions on Linux and FreeBSD are not more difficult to implement and are similar in functionality. 33

- 19.7% Perhaps, but only if, like the author, you don’t have the brains to go with FreeNAS or similar products. 27

- 36.4% Of course, yes! Flexibility in choosing hardware plus DSM functionality make it an indispensable solution for the home. 50

- 19.7% Only original Synology! Trusting Xpenology is dangerous - it’s not clear how it will behave during the next update ... 27