The history of gaming analytics platforms

- Transfer

Since the release of the Dreamcast console and the advent of the modem adapter, game developers have been able to collect data from players about their behavior in their natural habitat. In fact, the history of gaming analytics began with old PC games like EverQuest, released in 1999. Game servers were needed to authorize users and fill the worlds, but at the same time provided the ability to record data about the game process.

Since 1999, the situation with the collection and analysis of data has changed significantly. Instead of storing data locally in the form of log files, modern systems can track actions and apply machine learning in almost real time. I will talk about the four stages of the development of gaming analytics, which I identified during my stay in the gaming industry:

- Regular files: data is stored locally on game servers

- Databases: data is obtained as simple files and loaded into a database

- Data Lakes: Data is saved in Hadoop / S3 and then uploaded to the database

- Serverless stage: for storage and execution of requests services with remote control are used (managed services)

Each of these evolutionary stages supported an increasing amount of data being collected and reduced the delay between data collection and analysis. In this post I will present examples of systems of each of these eras and talk about the pros and cons of each approach.

Game analytics began to gain momentum around 2009. Georg Zoeller of Bioware created a system for collecting game telemetry during the development of games. He introduced this system at GDC 2010 . Shortly after, Electronic Arts began collecting data from games after development to track the behavior of real players. In addition, the scientific interest in the use of analytics in gaming telemetry has constantly increased. Researchers in this field, like Ben Medler, have suggested using game analytics to personalize gameplay.

Although over the past two decades there has been a general evolution of the gameplay analytics pipelines, there is no clear distinction between different eras. Some game teams still use systems from earlier eras, and maybe they are better suited for their purposes. In addition, there are a large number of ready-made game analytics systems, but we will not consider them in this post. I will talk about teams of game developers collecting telemetry and using their own data pipeline for this.

The era of simple files

Components of analytics architecture before the database era

I started playing analytics in Electronic Arts in 2010, before EA even created an organization to work with this data. Although many game companies have already collected huge amounts of gameplay data, most telemetry was stored in the form of log files or other simple file formats stored locally on game servers. It was impossible to query directly to any data, and the calculation of basic metrics, for example, the number of monthly active users (MAU), required considerable effort.

Electronic Arts has integrated the replay feature in Madden NFL 11, providing an unexpected source of gaming telemetry. After each match, a summary of the game in XML format was transmitted to the game server, which listed the game strategies, movements made during the game and the results of downs. The result was millions of files that could be analyzed to learn more about how Madden interacts with players in their natural habitat. After completing an internship at EA in the fall of 2010, I created a regression model that analyzed the functions that most influenced the retention of user interest in the game.

The effect of the winning ratio on player retention in Madden NFL 11 based on your preferred game mode.

About ten years before my internship at EA, Sony Online Entertainment already used game analytics to collect gameplay data using log files stored on servers. These data sets began to be used for analysis and modeling only a few years later, but, nevertheless, they turned out to be one of the first examples of game analytics. Researchers such as Dmitri Williams and Nick Yi have published works based on the EverQuest franchise data analyzed.

Local data storage is without a doubt the easiest approach to collecting gameplay data. For example, I wrote a tutorial on using PHP to save data generated byBy Mario Infinite . But this approach has significant drawbacks. Here is a list of compromises that you have to make with this approach:

Pros

- Simplicity: save any data we need in any format you need.

Cons

- No fault tolerance.

- Data is not stored in a central repository.

- High latency data access.

- Lack of standard tools or ecosystems for analysis.

Regular files are quite suitable if you have only a few servers, but in fact, this approach is not an analytics pipeline if you do not move files to a central repository. While working at EA, I wrote a script to transfer XML files from dozens of servers to a single server, which parsed files and saved game events in the Postgres database. This meant that we could analyze Madden's gameplay, but the data set was incomplete and had significant delays. This system was the forerunner of the next era of gaming analytics.

Another approach that was used in that era was website scraping to collect gameplay data and then analyze it. During my post-graduate research work, I scrapped websites like TeamLiquid and GosuGamers to create a replay dataset of professional players in StarCraft. Then I created a predictive model to determine the construction order . Other examples of analytic projects of that era were website scraping like WoW Armory . A more modern example is SteamSpy .

Database Era

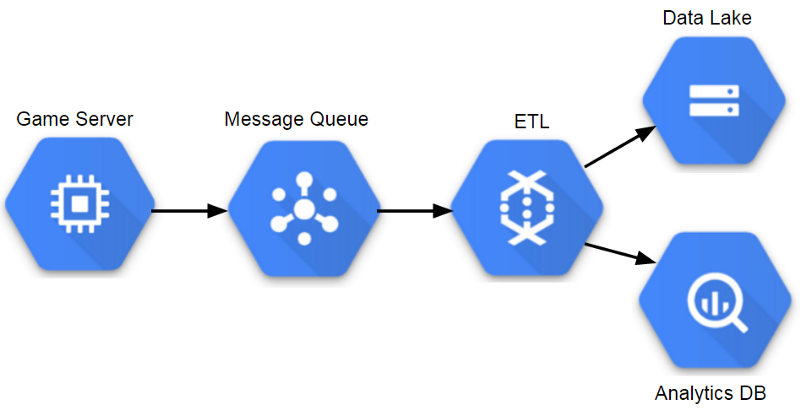

ETL-based analytic architecture components The

convenience of collecting game telemetry in a central repository became apparent around 2010, and many game companies began to store telemetry in databases. Many different approaches have been used to transfer event data to a database that analysts could use.

When I worked at Sony Online Entertainment, we had game servers that saved event files to a central file server every couple of minutes. Then the file server started the ETL process about once per hour, which quickly loaded these event files into the analytic database (at that time it was Vertica). This process had a reasonable delay, about an hour from the moment the game client transmitted the event to the possibility of making requests to our analytical database. In addition, it was scaled to large amounts of data, but at the same time, event data should have an unchanged scheme.

While working at Twitch, we used a similar process for one of our analytic databases. The main difference from the Sony solution was that instead of transferring scp files from game servers to the central repository, we used Amazon Kinesis to stream events from servers to the S3 indexing area. Then we used the ETL process to quickly load data into Redshift for analysis. Since then, Twitch has moved to the data lake system to be able to scale to larger volumes of data and provide various options for querying datasets.

The databases used by Sony and Twitch are extremely valuable for both companies, but with the increase in the volume of stored data, we began to have problems. When we began to collect more detailed information about the gameplay, we could no longer store the full history of events in the tables and we had to trim the data stored for more than a few months. This is normal if you can create summary tables containing the most important details of these events, but this situation is not ideal.

One of the problems with this approach was that the staging server becomes the main point of failure. Bottlenecks may also occur when one game sends too many events, resulting in loss of events for all games. Another problem is the speed of query execution while increasing the number of analysts working with the database. A team of several analysts working on several months of gameplay data can do the job well, but after years of collecting data and increasing the number of analysts, the speed of query execution can become a serious problem, which may take several hours to complete.

Pros

- All data is stored in one place and access to it is possible through SQL queries.

- There is a good toolkit, for example, Tableau and DataGrip.

Cons

- Storing all the data in a database like Vertica or Redshift is expensive.

- Events should have a permanent pattern.

- May require truncation of tables.

Another problem with using the database as the main interface for gameplay data is that it is not possible to effectively use machine learning tools, such as MLlib Spark, since all relevant data must be unloaded from the database before starting work. One way to overcome this limitation is to store gameplay data in a format and storage layer that works well with Big Data tools, for example, save events as Parquet files in S3. This type of configuration became popular in the next era. It eliminated the need for truncating tables and reduced the cost of storing all data.

The era of data lakes

Components of the analytic architecture of the data lake

The most popular data storage pattern during my work as a data analysis specialist in the gaming industry was the data lake pattern. A common pattern is to store semi-structured data in a distributed database and to execute ETL processors to extract the most important data into analytic databases. For a distributed database, you can use many different tools: in Electronic Arts we used Hadoop, in Microsoft Studios - Cosmos, and in Twitch - S3.

This approach allows teams to scale to huge amounts of data, and also provides additional protection against crashes. Its main drawback is that it increases complexity and can lead to the fact that analysts will have access to less data than with the traditional approach to databases, due to a lack of tools or access policies. Most analysts will interact with the data in this model in the same way, using an analytic database populated from the ETL of the data lake.

One of the advantages of this approach is that it supports many different event schemes, and in it you can change the attributes of an event without affecting the analytical database. Another advantage is that analytics teams can use tools like Spark SQL to work directly with the data lake. However, in most places of my work, access to the data lake was limited, which did not allow using most of the advantages of such a model.

Pros

- Scalability to huge amounts of data.

- Support for flexible event schemes.

- Cost queries can be transferred to the data lake.

Cons

- Significant costs when working.

- ETL processes can create significant delays.

- In some data lakes there are not enough full-fledged tools.

The main disadvantage of data lakes is that usually a whole team is required for a system to function. This makes sense for large organizations, but for small companies it can be overkill. One way to take advantage of data lakes without cost overhead is to use remote services.

Serverless era

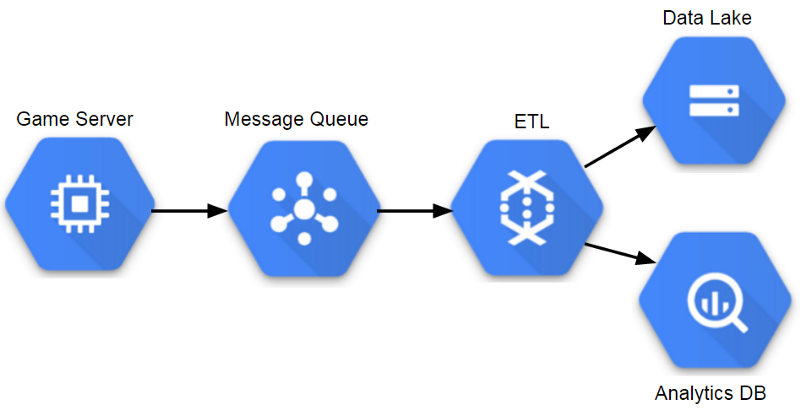

Components of a Managed Analytical Architecture (GCP)

In the current era, gaming analytics platforms use many network management services that allow teams to work with data in almost real time, scale systems if necessary, and reduce server support costs. I did not have to work in this era when I was in the gaming industry, but I saw signs of such a transition. Riot Games uses Spark for ETL and machine learning processes, so she needed the ability to scale infrastructure on demand. Some game teams use adaptive methods for game services, so it’s logical to apply this approach to analytics as well.

After GDC 2018, I decided to try building a sample conveyor. At my current job, I used the Google Cloud Platform, and it looks like she has a good toolkit for a managed data lake and query execution environment. The result has turned into this tutorial , which uses DataFlow to build a scalable pipeline.

Pros

- The same benefits as using a data lake.

- Automatic scaling based on storage needs and requests.

- The minimum cost of work.

Cons

- Remote management services can be expensive.

- Many services are platform-specific and porting may not be possible.

In my career, I achieved the greatest success by working with the approach of the database era, as it gave the analytics team access to all the important data. However, such a scheme cannot continue to scale, and most teams have since switched to environments with data lakes. For a data lake environment to be successful, analytic teams must have access to the underlying data and have ready-made tools to support their workflows. If I were building a conveyor today, I would definitely start with a serverless approach.

Ben Weber is a leading data analysis specialist at Windfall Data , who is creating the most accurate and comprehensive model of net worth.