MIT course "Computer Systems Security". Lecture 22: MIT Information Security, Part 2

Massachusetts Institute of Technology. Course 6.858, “Computer Systems Security.” Nickolai Zeldovich, James Mickens. 2014.

Computer Systems Security is a course on the design and implementation of secure computer systems. Lectures cover threat models, attacks that compromise security, and techniques for achieving security based on recent research. Topics include operating system (OS) security, capabilities, information flow control, language security, network protocols, hardware security, and security in web applications.

Lecture 1: “Introduction: threat models” Part 1 / Part 2 / Part 3

Lecture 2: “Control of hacker attacks” Part 1 / Part 2 / Part 3

Lecture 3: “Buffer overflow: exploits and protection” Part 1 /Part 2 / Part 3

Lecture 4: “Privilege Separation” Part 1 / Part 2 / Part 3

Lecture 5: “Where Security System Errors Come From” Part 1 / Part 2

Lecture 6: “Capabilities” Part 1 / Part 2 / Part 3

Lecture 7: “Native Client Sandbox” Part 1 / Part 2 / Part 3

Lecture 8: “Network Security Model” Part 1 / Part 2 / Part 3

Lecture 9: “Web Application Security” Part 1 / Part 2/ Part 3

Lecture 10: “Symbolic execution” Part 1 / Part 2 / Part 3

Lecture 11: “Ur / Web programming language” Part 1 / Part 2 / Part 3

Lecture 12: “Network security” Part 1 / Part 2 / Part 3

Lecture 13: “Network Protocols” Part 1 / Part 2 / Part 3

Lecture 14: “SSL and HTTPS” Part 1 / Part 2 / Part 3

Lecture 15: “Medical Software” Part 1 / Part 2/ Part 3

Lecture 16: “Attacks through a side channel” Part 1 / Part 2 / Part 3

Lecture 17: “User authentication” Part 1 / Part 2 / Part 3

Lecture 18: “Private Internet viewing” Part 1 / Part 2 / Part 3

Lecture 19: “Anonymous Networks” Part 1 / Part 2 / Part 3

Lecture 20: “Mobile Phone Security” Part 1 / Part 2 / Part 3

Lecture 21: “Data Tracking” Part 1 /Part 2 / Part 3

Lecture 22: MIT Information Security Part 1 / Part 2 / Part 3

All of this was a preface to the fact that we are a team of just four people at a very large institution with a huge number of devices. That is why the federation Mark talked about is really necessary if we are to have any chance of securing a network of this size.

We work with people on campus and help them. Our portfolio consists of consulting services, services that we offer to the community, and the tools we use. The services we provide are quite diverse.

Abuse reporting: we handle reports of network abuse. Usually these are responses to complaints from the outside world, the vast majority of which concern Tor nodes on the institute’s network. We have them, what else can I say? (audience laughter)

Endpoint protection: we have some tools and products that we install on "big" computers, and you can use them for your work if you wish. If you are part of the MIT domain, which is mostly the administrative side, these products are installed on your computer automatically.

Network protection is a set of tools located both throughout the mit.net network and at its borders. They detect anomalies or collect traffic flow data for analysis. Data analytics helps us correlate all of these things and try to extract useful information.

Forensics is computer forensics; we will talk about it in a second.

Risk identification is mainly network scanning and assessment tools such as Nessus. It also includes tools that look for PII, personally identifiable information, because as an institution in Massachusetts we have to comply with the state regulation 201 CMR 17.00. It requires agencies or companies that store or use personal information about Massachusetts residents to develop a plan for protecting that information and to review its effectiveness regularly. Therefore, we need to determine where on our network users' personal information is located.

Outreach / Awareness / Training is education and training; I just talked about this.

Compliance needs are primarily about PCI DSS: PCI is the Payment Card Industry, and DSS is its Data Security Standard. Believe it or not, the Massachusetts Institute of Technology accepts credit cards. We have several merchants on campus that take card payments, and we need to be able to ensure that their infrastructure is PCI DSS compliant. So security management and compliance for these needs are also part of our team’s work.

PCI 3.0, which is the sixth major update of the standard, takes effect on January 1, so we are in the process of bringing our entire infrastructure into compliance.

Reporting / Metrics / Alerting: reporting, producing metrics, and issuing alerts are also part of our work.

A number of products are used to protect the endpoints, as shown on the next slide. At the top is what looks like an eagle. That is a tool called CrowdStrike, which is currently being tested in our IS&T department. Basically, it tracks anomalous behavior from a system call point of view.

For example, if you are using MS Word and the program suddenly starts doing something it should not, such as trying to read the system's account database or a bunch of passwords, CrowdStrike warns about it and raises an alarm. It is a cloud-based tool, which we'll talk about later. All of this data is sent to a central console, and if computers try to do something that looks malicious according to behavioral heuristics, they get a red flag.

GPO, Group Policy Objects, is a system for enforcing group security policies. The S stands for Sophos, whose programs protect against malware: antivirus and all the other things you expect to get when you buy an endpoint protection product.

PGP encrypts the hard disk for campus systems that contain sensitive data.

Some of these tools are being replaced with more advanced ones. The industry is increasingly moving toward encryption built into the operating system, such as BitLocker for Windows or FileVault for Mac, so we are exploring these options. Casper mainly manages the security policies of computers running Mac OS.

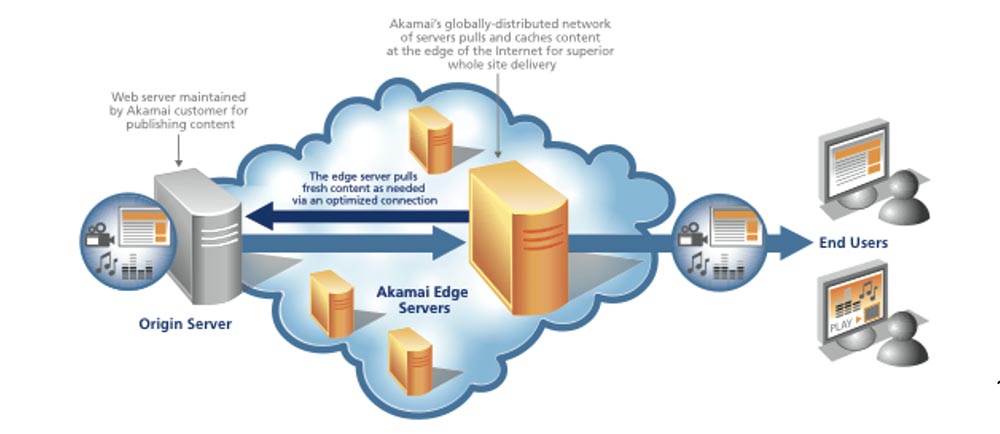

As for network protection, we work in this area with Akamai, a company that actually came out of MIT; many of our graduates work there. They provide very good service, so we use many of the services they offer.

Later we will talk about them in sufficient detail.

TippingPoint is an intrusion detection system. As I said, some of these tools are constantly being improved, and TippingPoint is an example. Thanks to it, we have an intrusion prevention system at our border. In fact, we do not prevent intrusions with it, we simply detect them. We block almost nothing at the MIT network border, apart from some very simple and widespread anti-spoofing rules.

StealthWatch is a tool that generates NetFlow data or, more correctly, collects NetFlow data. We use Cisco devices, but all network devices export some details, metadata about the flows they carry: source port, destination port, source IP address, destination IP address, protocol, and so on. StealthWatch collects this data, performs basic security analysis on it, and also provides an API that we can use to do smarter things.
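To make the idea of flow metadata concrete, here is a minimal Python sketch of the kind of basic analysis that can be run on flow records. The `FlowRecord` fields and the `top_talkers` helper are illustrative assumptions, not the NetFlow export format or the StealthWatch API, and the sample addresses are made up.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowRecord:
    """One flow summary. Field names are illustrative, not a real NetFlow schema."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: str
    byte_count: int


def top_talkers(flows, n=3):
    """Return the n source addresses that sent the most bytes, largest first."""
    totals = Counter()
    for flow in flows:
        totals[flow.src_ip] += flow.byte_count
    return totals.most_common(n)


# Made-up sample flows using documentation address ranges.
sample_flows = [
    FlowRecord("192.0.2.10", "198.51.100.5", 123, 40000, "udp", 9000),
    FlowRecord("192.0.2.10", "198.51.100.5", 123, 40001, "udp", 12000),
    FlowRecord("192.0.2.22", "203.0.113.9", 51000, 443, "tcp", 4000),
]

print(top_talkers(sample_flows, n=2))
```

A host that suddenly tops such a list with UDP traffic from port 123 would be a natural candidate for a closer look.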

RSA Security Analytics is another tool that acts in many ways as an IDS “on steroids.” It performs full packet capture, so you can see the contents of traffic that has been flagged.

In the area of risk identification, Nessus is the de facto standard vulnerability assessment tool. We usually use it on demand; we do not run it across the whole 18.0.0.0/8 network. But if we get some DLC add-ons for the campus software, then we use Nessus to evaluate their vulnerabilities.

Shodan is called a computer search engine. Basically, it scans the Internet as a whole and provides a lot of useful data about its security. We have a subscription to this “engine”, so that we can use this information.

Identity Finder is a tool that we use in places where there is PII, personally identifiable information, in order to comply with the data protection regulations and simply to make sure that we know where the important data is.

Computer forensics is something we deal with from time to time ... I can’t find the right words, because we don’t do it regularly. Sometimes such investigations take a lot of time, sometimes not so much. We have a set of tools for this as well.

EnCase is a tool that allows us to capture disk images and view HDD content with their help.

FTK, or Forensic Toolkit, is a set of tools for conducting computer forensics investigations. We are often called in when disk images need to be taken in intellectual property disputes or in other cases handled by OGC, the Office of the General Counsel. We have all the necessary tools for this. Honestly, this is not constant work; it comes up periodically.

So how do we collect all this data together? Mark mentioned correlation. To manage the system, we process the system logs. You can see that we have NetFlow logs, some DHCP logs, ID logs and Touchstone logs.

Splunk is the tool that does most of the correlation work. It takes data that is not necessarily normalized, normalizes it, and lets us compare data from different sources in order to get more “intelligence.” For example, you can build a page that lists all of your logins and overlay GeoIP data on it to show where each login came from.
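The login-plus-GeoIP example can be sketched in a few lines of Python. This is only an illustration of normalizing raw log lines and enriching them with a location lookup; the log format, the `GEO_TABLE` contents, and the function names are assumptions and have nothing to do with how Splunk is actually configured.

```python
import ipaddress

# Hypothetical GeoIP table mapping networks to a location label.
GEO_TABLE = {
    ipaddress.ip_network("192.0.2.0/24"): "Cambridge, US",
    ipaddress.ip_network("198.51.100.0/24"): "Berlin, DE",
}


def locate(ip):
    """Look up an address in the hypothetical GeoIP table."""
    address = ipaddress.ip_address(ip)
    for network, place in GEO_TABLE.items():
        if address in network:
            return place
    return "unknown"


def normalize(raw_line):
    """Parse an assumed 'timestamp user ip' line into a structured event."""
    timestamp, user, ip = raw_line.split()
    return {"time": timestamp, "user": user, "ip": ip, "place": locate(ip)}


def logins_by_user(raw_lines):
    """Group normalized login events by user as (time, place) pairs."""
    result = {}
    for line in raw_lines:
        event = normalize(line)
        result.setdefault(event["user"], []).append((event["time"], event["place"]))
    return result


raw_log = [
    "2014-11-01T09:00:00 alice 192.0.2.15",
    "2014-11-01T09:05:00 alice 198.51.100.7",
    "2014-11-01T10:00:00 bob 203.0.113.4",
]
print(logins_by_user(raw_log))
```

In this made-up data, two logins for the same user five minutes apart from different countries are exactly the kind of pattern that correlation makes visible.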

Let's talk about attacks, which are more interesting than the software we use. First, we’ll talk about denial of service attacks, the most common kind in recent years. We will also talk about the specific attacks that followed the Aaron Swartz tragedy several years ago; they were also distributed DoS attacks.

Now I will show you a DoS primer. A DoS attack is aimed at the letter A of the computer security triad, CIA. CIA stands for Confidentiality, Integrity, and Availability. So a DoS attack is aimed primarily at availability: the attacker wants to disable a resource so that legitimate users cannot use it.

This may be defacement of a page. It's very simple, isn't it? Digital graffiti just spoils the page so that no one can see the original. It can also be massive resource consumption, where the attack "eats" all of the system's computing power or all of the network bandwidth. Such an attack can be carried out by one person, but it is likely that the attacker will invite friends and throw a whole DDoS party, that is, a distributed denial of service attack.

Current DDoS trends are listed in the Arbor Networks report. One is the expansion of the attack surface. Hacktivism is the most common motivation, accounting for up to 40% of such attacks; the motivation for another 39% remains unknown. The intensity of attacks, at least last year, reached 100 Gbps. In 2012, there was an attack on the organization Spamhaus that reached 300 Gbps.

DDoS attacks are getting longer. For example, Operation Ababil, directed against the US financial sector, lasted several months, periodically ramping up to 65 Gbps. I have heard, and you can find confirmation on Google, that this attack went on almost continuously and could not be stopped. Later we will talk about how they did it.

But frankly, whether it is 65 Gbps or 100 Gbps makes little difference to the victim, because very few systems in the world can withstand a prolonged attack of that magnitude; it is simply impossible to stop. Recently there has been a shift toward reflection and amplification attacks. These are attacks in which you take a small input signal and turn it into a large output signal. This is nothing new. It goes back to the ICMP Smurf attack, where you ping a network's broadcast address and every machine on that network replies to the apparent sender of the packet, whose address is, of course, spoofed. For example, I pretend to be Mark and send a packet to the broadcast address of this classroom. All of you start replying to Mark, thinking that he sent it. Meanwhile, I sit in the corner and laugh. So this is nothing new.

So, the next kind of DDoS attack is UDP amplification. UDP is a “fire and forget” user datagram protocol, right? It is not TCP: it is unreliable and connectionless, so it is very easy to spoof. What we have seen in the last year is exploitation of three protocols for amplification. The first is DNS, UDP port 53. If you send a 64-byte ANY query to a misconfigured server, it generates a 512-byte response that is returned to the victim of the attack. That is an 8-fold gain, which is not bad for mounting a DoS attack.

When attacks of this type started to grow, we saw them here on the mit.net network before they were used on a massive scale against victims in the commercial sector. We saw a 12 Gbps DNS amplification attack that significantly affected our outbound bandwidth. We have fairly high bandwidth, but adding that volume of data to the legitimate traffic caused a problem, and Mark and I were forced to deal with it.

SNMP, which runs over UDP port 161, is a very useful management protocol. It lets you read and modify data on a device remotely using get / set operations. Many devices, such as network printers, allow get access without any authentication. If you send a 64-byte GetBulkRequest to a misconfigured device, it sends the victim a response that can be 1,000 times larger than the request. So this is even better than the previous one.
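The arithmetic behind these figures is simple enough to write down. The following Python sketch uses only the numbers quoted in the lecture (a 64-byte request, a 512-byte DNS response, and a roughly 1,000-fold SNMP response); the function names are illustrative.

```python
def amplification_factor(request_bytes, response_bytes):
    """Ratio of reflected response size to spoofed request size."""
    return response_bytes / request_bytes


def attacker_bandwidth_needed(target_bps, factor):
    """Spoofed-request bandwidth needed to deliver target_bps to the victim."""
    return target_bps / factor


dns_factor = amplification_factor(64, 512)   # figures quoted for DNS ANY queries
snmp_factor = 1000                           # "1,000 times" quoted for GetBulkRequest

# A 12 Gbps flood reflected through DNS at this ratio needs about 1.5 Gbps of queries.
print(dns_factor, attacker_bandwidth_needed(12e9, dns_factor))
# The same flood reflected through SNMP needs roughly 12 Mbps of requests.
print(snmp_factor, attacker_bandwidth_needed(12e9, snmp_factor))
```

The point of the comparison is that the higher the ratio, the less bandwidth the attacker has to own in order to saturate a victim's links.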

As an attacker, you naturally pick such targets, so we saw massive abuse of printers on our campus network. The attacker sent packets to a printer with an open SNMP agent, and the response, a thousand times larger, was sent out onto the Internet.

Next comes the Network Time Protocol, NTP. A misconfigured NTP server will respond to a “MONLIST” request. The amplification here comes from the monlist response, which is a list of up to the last 600 NTP clients. Thus a small spoofed request sends a large amount of UDP traffic to the victim.

This is a very popular type of attack, and we got into a big mess because of misconfigured NTP monlist. In the end, we did a few things to mitigate these attacks. On the NTP side, we disabled the monlist command on NTP servers, which stopped the attack in its tracks. But since we are an open institution, there are machines that we have no authority to touch, so we turned off monlist wherever our authority allowed. Finally, we simply rate-limited NTP at the MIT network border to reduce the impact of the systems that were still responding, and we have now been living with that for a year without any negative consequences. A rate limit of a few megabits is certainly better than the gigabits that we were previously sending to the Internet. So this is a solvable problem.
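Rate limiting of this kind is commonly modeled as a token bucket. The Python sketch below illustrates the general technique only; it is not a description of how MIT's border routers were configured, and the class name and the numbers are assumptions chosen to keep the example small.

```python
class TokenBucket:
    """Generic token-bucket limiter: tokens refill at a fixed rate up to a cap."""

    def __init__(self, rate_bytes_per_sec, burst_bytes):
        self.rate = rate_bytes_per_sec
        self.capacity = burst_bytes
        self.tokens = burst_bytes      # start with a full bucket
        self.last_time = 0.0

    def allow(self, packet_bytes, now):
        """Return True if the packet fits in the current budget, else drop it."""
        elapsed = now - self.last_time
        self.last_time = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False


# Illustrative numbers: 1000 bytes per second sustained, 1500-byte burst.
bucket = TokenBucket(rate_bytes_per_sec=1000, burst_bytes=1500)
decisions = [bucket.allow(500, now=t) for t in (0.0, 0.0, 0.0, 0.0, 1.0)]
print(decisions)  # [True, True, True, False, True]
```

Legitimate NTP traffic is small and infrequent, so it fits comfortably under such a cap, while a reflected flood is clipped to the configured rate.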

Taking care of DNS was a little more difficult. We started using an Akamai service called eDNS. Akamai provides it as a hosted zone service, so we used their eDNS and split our DNS, our domain name space, into two views. We placed the external view with Akamai and kept the internal view on the servers that have always served MIT. We restricted access so that only MIT clients could reach our internal servers, and the rest of the world was left in Akamai's care.

The advantage of Akamai's eDNS is that it is distributed around the world: a geographically distributed network infrastructure that answers from Asia, Europe, and North America, east and west. Attackers cannot take Akamai down, so we don’t need to worry that our DNS will ever go down. This is how we solved this problem.
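The two-view split can be illustrated with a small Python sketch that picks a view based on the client's address. The 18.0.0.0/8 range corresponds to the "18/8" network mentioned earlier in the lecture; the host name and the record values are hypothetical, and in the real setup the two views live on different servers rather than in one table.

```python
import ipaddress

# Internal clients are assumed to come from the campus range mentioned in the lecture.
INTERNAL_NET = ipaddress.ip_network("18.0.0.0/8")

# Hypothetical records for the two views of the same name.
VIEWS = {
    "internal": {"web.example.edu": "18.0.2.10"},
    "external": {"web.example.edu": "203.0.113.80"},
}


def select_view(client_ip):
    """Internal clients get the internal view; everyone else gets the external one."""
    if ipaddress.ip_address(client_ip) in INTERNAL_NET:
        return "internal"
    return "external"


def resolve(name, client_ip):
    """Answer a query from the view that matches the client's address."""
    return VIEWS[select_view(client_ip)].get(name)


print(resolve("web.example.edu", "18.7.7.7"))       # internal answer
print(resolve("web.example.edu", "198.51.100.1"))   # external answer
```

Because outside clients only ever talk to the externally hosted view, a flood of outside queries never reaches the internal servers.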

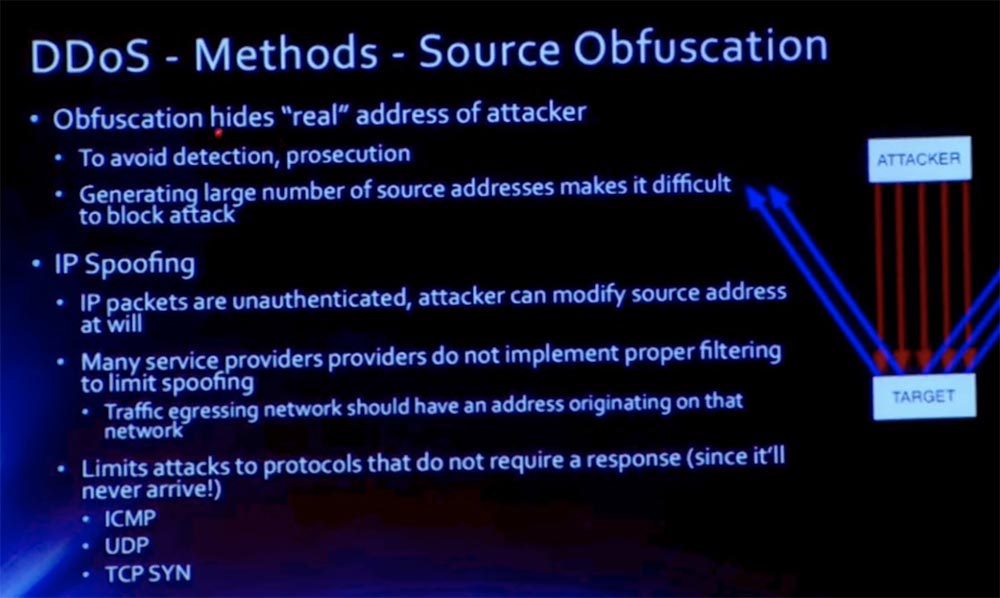

The following presentation slides list the methods for performing DDoS – attacks. The first, Source Obfuscation, is to hide the attacker's real address. This is done in order to avoid detection and prosecution. I will not go into details and skip this slide.

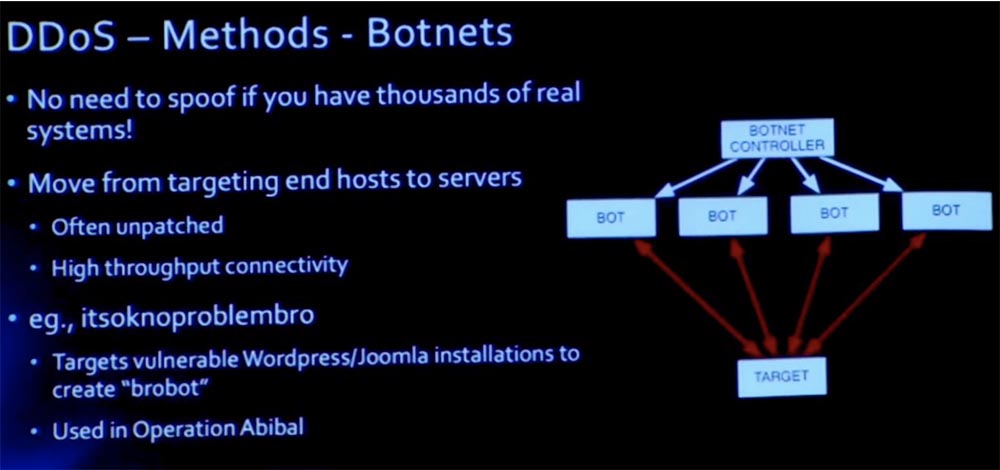

The next method of carrying out DDoS attacks is a botnet. The idea is to take down the victim's network using bots.

Botnets are huge now. The bot "itsoknoproblembro" (it's OK, no problem, bro) was used in Operation Ababil, which targeted the US financial sector. To build the "brobot," the attackers exploited vulnerabilities in WordPress and Joomla installations.

In this case, instead of spoofing a bunch of packets from a single host, the attacker uses a botnet of legitimate systems whose addresses do not need to be spoofed. That is, the attacker does not need to hide or forge his real address; he simply uses thousands of other people's computers. Since these are real hosts, they respond to a TCP SYN-ACK and complete the handshake, so application-layer attacks become possible, such as flooding an HTTP server with a mass of GET and POST requests.

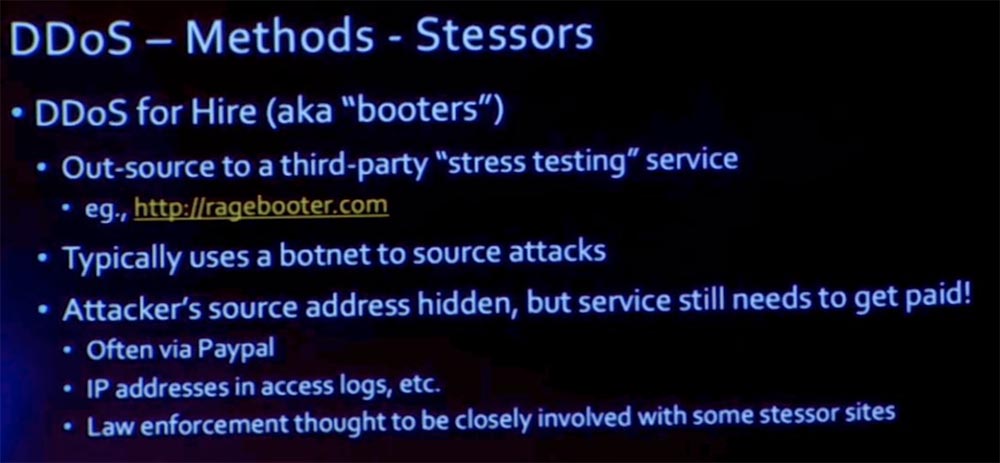

The next method of carrying out DDoS attacks involves stressors. A stressor is essentially an outsourced botnet: you hire it to perform load testing on a network.

Without a doubt there are legitimate stressor companies, for example Ragebooter. But there are also illegitimate ones that carry out DDoS attacks using someone else's botnet. The attacker's address remains hidden, but it is a paid service, and payment is usually made through PayPal.

Consider strategies for mitigating the effects of DDoS attacks. We can use the target DNS server to mitigate the attacks, as we did with Akamai. In the picture you see the scheme of such protection.

We had such an attack on our web server, so I’ll tell you about it briefly. One of the attacks that followed the Swartz tragedy was against MIT, and it simply took down our web server. To prevent such incidents in the future, we decided to use split, or two-tier, DNS. Our origin server serves internal clients on the internal MIT network. To mirror the MIT site, we used Akamai's content delivery network, and we then used the external tier of our DNS to point external clients to Akamai.

Thus, when an Internet user wants to reach the MIT site, he actually contacts an Akamai CDN server that serves our content. If for some reason the content cannot be served to the external client directly from the cache, for example because it is dynamic content, the MIT origin server passes the content to Akamai, and the Akamai server delivers it to the user, after which it can potentially be cached for some period of time. I will talk about the attack itself in a minute; for now I will just note that putting an intermediate web server in Akamai's geographically distributed content network was our defense against such attacks.
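The cache-then-origin behavior described here can be sketched as a tiny edge cache in Python. This is a generic illustration of the technique, not Akamai's implementation; the `EdgeCache` class, the `origin` function, and the 60-second lifetime are assumptions.

```python
class EdgeCache:
    """Minimal edge cache: serve from cache while fresh, otherwise ask the origin."""

    def __init__(self, fetch_from_origin, ttl_seconds):
        self.fetch_from_origin = fetch_from_origin
        self.ttl = ttl_seconds
        self.store = {}          # path -> (body, expiry time)
        self.origin_hits = 0     # how many requests actually reached the origin

    def get(self, path, now):
        cached = self.store.get(path)
        if cached is not None and cached[1] > now:
            return cached[0]                       # fresh copy, origin untouched
        body = self.fetch_from_origin(path)        # miss or expired: go to origin
        self.origin_hits += 1
        self.store[path] = (body, now + self.ttl)
        return body


def origin(path):
    """Stand-in for the origin web server."""
    return f"<html>content for {path}</html>"


edge = EdgeCache(origin, ttl_seconds=60)
edge.get("/index.html", now=0)     # miss, fetched from origin
edge.get("/index.html", now=30)    # served from cache
edge.get("/index.html", now=90)    # expired, fetched again
print(edge.origin_hits)  # 2
```

However many clients request a cached page, the origin sees only the occasional refresh, which is why a flood aimed at the site lands on the CDN instead.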

I have mentioned several attacks, including the NTP attack, and I am going to mention a few more. In essence, these were brute-force attacks that simply tried to exhaust our bandwidth. And although we have a great deal of bandwidth, even we have trouble handling tens of gigabits of extra traffic. So in this case your protection options are really limited, aren't they?

If this is spoofed traffic, how are you going to install a filter at your border to block it? By the time it reaches your border, where you intend to filter it, it has already flooded your links. So how can you protect against this? You have to push the filtering back into the cloud, out on the Internet, and block the traffic there.

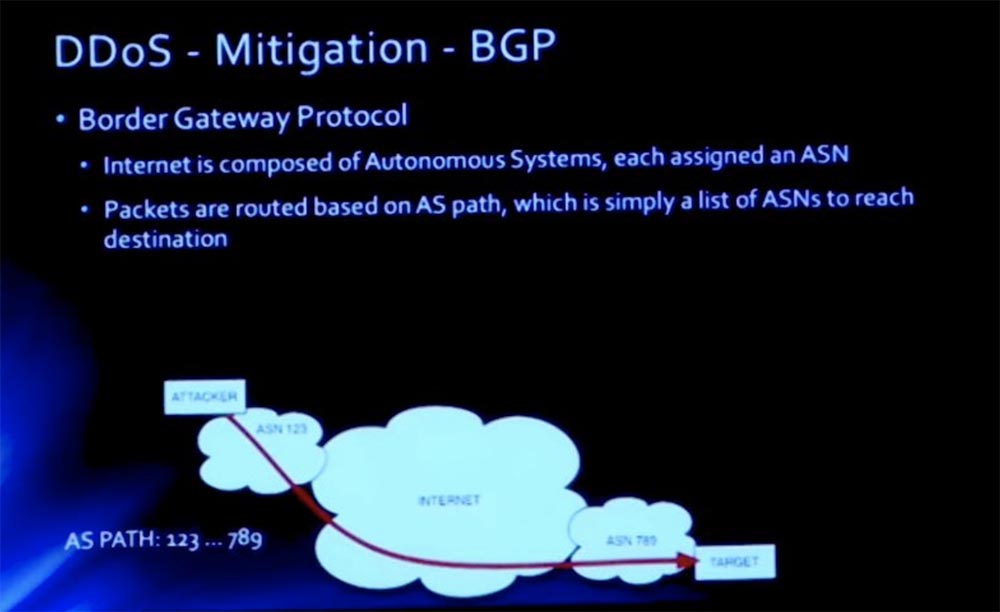

To mitigate this, we decided to use BGP, the Border Gateway Protocol. If you are familiar with BGP, you know that it is a path-vector protocol that handles routing on the Internet. It is used to exchange routing information between autonomous systems, each identified by its own autonomous system number, or ASN. Each multihomed organization on the Internet has its own ASN, and BGP uses these numbers to build paths across the Internet, so there can be different paths to reach a particular ASN.

In this case I use the number 123 as an example, because I created this slide for another organization. Our actual ASN is 3, because we got one early; Harvard's is 11, they were a little slower.

So, we have a path that begins at ASN123 and ends at ASN789. Between them is a sequence of autonomous systems through which a packet must pass.

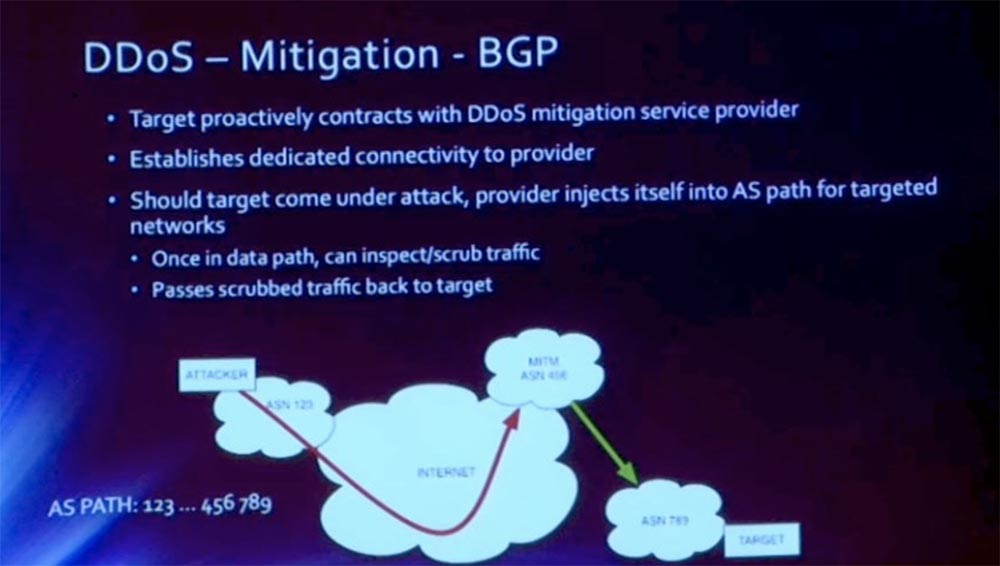

To mitigate an attack with BGP, we simply bring in another ASN to handle MIT's traffic. In this case, it is ASN456. This system becomes our legitimate "man in the middle," and we allow it to announce our prefixes. For example, when a prefix on our network such as 18.1.2.0/24, a small block of about 255 MIT addresses, comes under attack, we allow ASN456 to announce that prefix on our behalf.

As soon as this change propagates across the Internet, all of that traffic starts flowing into their cloud. In our case, the autonomous system is Akamai. They have a series of scrubbers and can provide far more bandwidth than we are able to.

Behind this arrangement there is a private connection between Akamai and us, over which they deliver the scrubbed traffic intended for us. Thanks to this, we can survive potentially crippling attacks of this kind that would otherwise take us down.
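The reason traffic follows the new announcement is that routers prefer the most specific matching prefix. The Python sketch below models only that longest-prefix-match step, not BGP itself; the /24 is an illustrative prefix, and the AS labels are placeholders in the spirit of the slide's example numbers.

```python
import ipaddress


def best_route(routes, destination):
    """Pick the matching route with the longest prefix (most specific wins)."""
    address = ipaddress.ip_address(destination)
    matches = [route for route in routes if address in route[0]]
    if not matches:
        return None
    return max(matches, key=lambda route: route[0].prefixlen)


# The victim normally announces a large covering prefix.
covering = (ipaddress.ip_network("18.0.0.0/8"), "victim AS")
# During an attack, the scrubbing provider announces the attacked /24.
more_specific = (ipaddress.ip_network("18.1.2.0/24"), "AS456 (scrubbing)")

before = best_route([covering], "18.1.2.77")
after = best_route([covering, more_specific], "18.1.2.77")
unaffected = best_route([covering, more_specific], "18.9.9.9")

print(before[1], after[1], unaffected[1], sep=" | ")
```

Only the attacked block is diverted through the scrubbing provider; addresses outside the /24 keep taking the usual path.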

Before we continue, I want to know if you have any questions about the above?

Audience: You mentioned the boundaries of the MIT network, so I want to understand what they are.

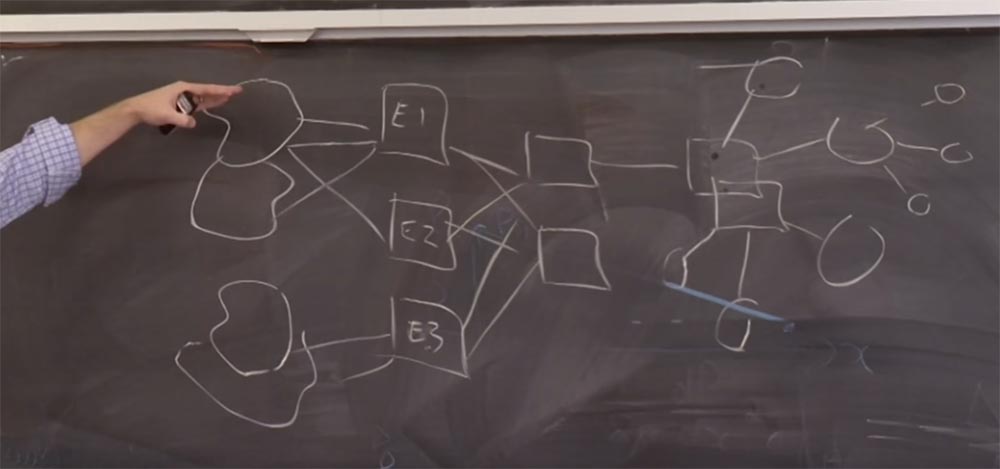

David Laporte: I will try to draw a diagram on the board. MIT has three border routers: External 1, External 2, and External 3. On the right is the standard star topology of the MIT network. We have core switches connected to the distribution layer, which then goes to the access layers and so on. So our network has three borders, E1, E2, and E3, behind which sit several Internet providers. For example, our commercial providers will soon connect to both of our border routers. The third, External 3, which is not its actual name, is intended mainly for research peering. That is, we distinguish between commercial peering and research peering.

Thus, everything that concerns BGP occurs between our external border and the providers. Two rectangles to the right of border routers are simply routers that communicate between the border and the core of the network.

So, in response to the Swartz tragedy, which I think happened 2 years ago, some hacktivists decided to attack MIT, and we lived through three attacks. I will describe all three, because they were separate attacks of different types.

The first attack was directed against our infrastructure. Our institute has always adhered to the policy of openness in the field of knowledge sharing. We have a very open network, especially compared to other educational institutions. This is a curse for security, but we are open to the world.

In this case, our border routers, these E1,2,3 squares that we just drew, were running with an outdated version of software vulnerable to a specific denial of service attack.

The attackers sent a very low-bandwidth stream, 100 Kbps, which was completely unnoticeable; in terms of volume it should not have affected the devices at all. But they sent it to the management interface of these devices, and the devices simply “fell off” the network. They did not die, but their processors were loaded to the maximum. As a result, they stopped routing mit.net packets, and our network was offline.

This was the first attack we experienced. I think it was while the Patriots were in the playoffs. Sometimes I think these attacks are deliberately timed to coincide with some big event that distracts the technical staff from monitoring the network. We immediately upgraded the software to a fixed version, so we were able to deal with the situation quite quickly and restore the availability part of our security triad.

In the longer term, we needed to fix the fact that outsiders on the Internet could reach our management interfaces at all, right? Very few people should be able to access those interfaces. In the end, we set up access restrictions so that only the IP addresses of our staff on the VPN could reach them. In addition, we no longer use cleartext management protocols. This was all fixed before the second attack took place.
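The two fixes described here, restricting who can reach the management interface and dropping cleartext protocols, amount to a simple allowlist check. The Python sketch below is purely illustrative: the VPN address pool and the protocol names are assumptions, not MIT's actual configuration.

```python
import ipaddress

# Hypothetical address pool handed out to staff on the VPN.
MGMT_ALLOWED_NETWORKS = [ipaddress.ip_network("10.8.0.0/24")]

# Only encrypted management protocols are accepted in this sketch.
ENCRYPTED_PROTOCOLS = {"ssh", "https"}


def may_manage(src_ip, protocol):
    """Allow management access only from the VPN pool and only over encrypted protocols."""
    address = ipaddress.ip_address(src_ip)
    from_vpn = any(address in network for network in MGMT_ALLOWED_NETWORKS)
    return from_vpn and protocol in ENCRYPTED_PROTOCOLS


print(may_manage("10.8.0.5", "ssh"))        # staff on VPN, encrypted: allowed
print(may_manage("198.51.100.9", "ssh"))    # outsider: denied
print(may_manage("10.8.0.5", "telnet"))     # cleartext protocol: denied
```

With such a rule in place, a low-rate stream aimed at the management interface from the open Internet is discarded before it can load the router's processor.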

Audience: Is it right to assume that this was a zero-day attack against the service provider?

David Laporte: I think it would be fair to say that this was not a zero-day attack. The second attack was against web.mit.edu itself, and it is what I was referring to when I described mitigation with DNS. The web.mit.edu infrastructure was located in our data center behind a firewall. The attacker sent us a flood of HTTP GET or POST requests; I can't say for sure which. They did not really kill the web server; they simply knocked over the firewall. A stateless router access list is very simple and very fast, but you lose a lot of the detail you could otherwise filter on, because you are forwarding packet by packet and the filtering criteria, such as port and IP address, are applied to each packet individually. We were sitting behind a firewall that worked well under normal conditions, but when it came under a huge load, it failed.

The fix for this attack was that we moved to stateless router access lists. We would have preferred not to, but the attack forced us. The longer-term mitigation was our move to the Akamai CDN. You may notice that if you are outside the MIT network and look up web.mit, the 18.9.22 address is no longer used. You are directed to a CNAME, which in turn points you to an Akamai IP address.

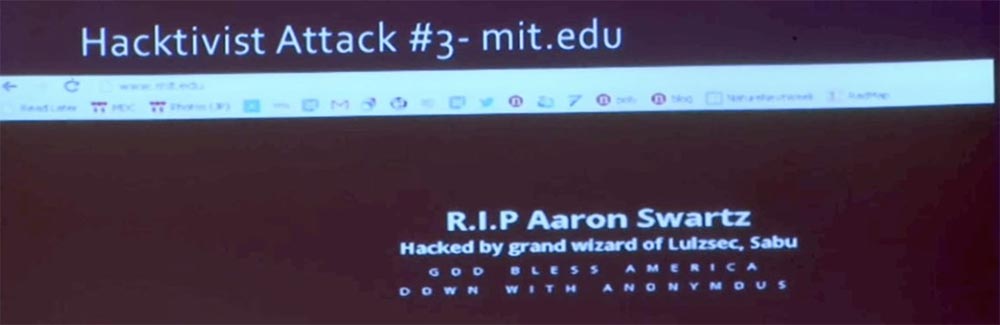

The third attack was actually not on the mit.net side but on the side of our registrar. Here is what we saw on the home page of www.mit and web.mit: the content had been replaced by this image. We quickly ran diagnostics on the web server. Everything looked fine; our server had not been hacked. That is, the page had been defaced some other way.

In the end, we found that the WHOIS information for our name and our DNS had simply been changed. On this slide you see the changes the attacker made in the “Administrative Contact” block: “I Owned This - Massachusetts Institute of Technology,” and the “destroyed” address for network operations: DESTROYED, MA 02139-4307.

The attackers were targeting us specifically, and all of this had been delegated to two servers hosted at the cloud provider CloudFlare.

58:30 min.

Course MIT "Computer Systems Security". Lecture 22: MIT Information Security, Part 3
