A chatbot that is “like Siri, only cooler”, built on a naive Bayes classifier

Hello! It is no secret that interest in artificial intelligence has recently surged around the world, and I was not immune to the trend either.

It all started when on the plane I watched a typical, at first glance, American comedy - “Why, he?” (Eng. Why him? 2016) . There, a voice assistant was installed in one of the key characters in the house, who immodestly positioned himself “like Siri, only cooler”. By the way, the bot from the film was able not only to defiantly talk with guests, sometimes cursing, but also to control the entire house and the surrounding area - from central heating to flushing the toilet. After watching the movie, I got the idea to implement something similar and I started writing code.

Figure 1 - Frame from the same film. Voice assistant on the ceiling.

The first stage was easy - the Google Speech API was connected for speech recognition and synthesis. The text received from the Speech API was processed through manually written regular expression patterns , which, when matched, determined the intent of the person talking to the chat bot. Based on the intent defined by regexp, one phrase was randomly selected from the corresponding list of answers. If a sentence spoken by a person did not fall under any pattern, then the bot said pre-prepared general phrases, like: “I like to think that I'm not just a computer” and so on.

Obviously, manually registering a lot of regular expressions for each intent is a laborious task, therefore, as a result of the searches, I came across the so-called “ naive Bayes classifier ”. It is called naive because when it is used, it is understood that the words in the analyzed text are not related to each other. Despite this, this classifier shows good results, which we will talk about below.

Just so sticking a line into the classifier will not work. The input string is processed according to the following scheme:

Figure 2 - Input text processing

flowchart I will explain each stage in more detail. With tokenization, everything is simple. Trite is a breakdown of text into words. After that, the so-called stop words are deleted from the received tokens (an array of words) . The final stage is rather difficult. Stamming is getting the word base for a given source word. Moreover, the basis of the word is not always its root. I used Stemmer Porter for the Russian language (link below). Let's move on to the mathematical part. The formula with which it all begins is as follows:

- this is the probability of assigning any intent to a given input line in other words to the phrase that the person told us.

- this is the probability of assigning any intent to a given input line in other words to the phrase that the person told us.  - probability of intent, which is determined by the ratio of the number of documents belonging to intent to the total number of documents in the training set. Document Probability -

- probability of intent, which is determined by the ratio of the number of documents belonging to intent to the total number of documents in the training set. Document Probability - therefore we discard it.

therefore we discard it.  - the probability of the relationship of the document to intent. She signs as follows:

- the probability of the relationship of the document to intent. She signs as follows:

Where - the corresponding token (word) in the document

- the corresponding token (word) in the document

We will write in more detail:

Where:

- how many times the token has been assigned to this intent

- how many times the token has been assigned to this intent

- anti-zeroing anti-aliasing

- anti-zeroing anti-aliasing

- number of words related to intent in the training data

- number of words related to intent in the training data

- the number of unique words in the training data.

- the number of unique words in the training data.

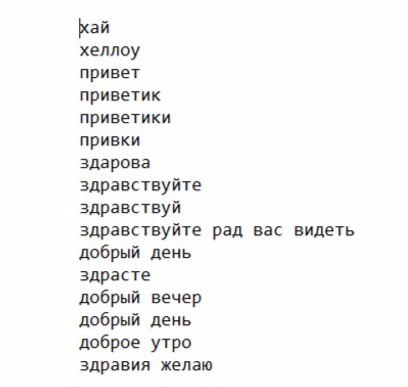

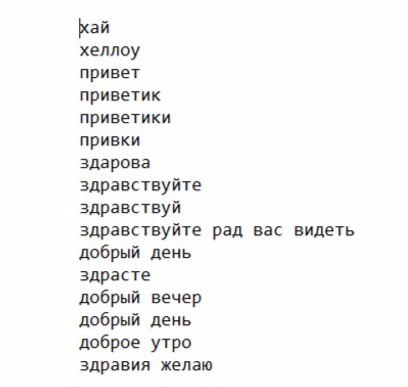

For training, I created several text files with the symbolic names “hello”, “howareyou”, “whatareyoudoing”, “weather” etc. For example, I’ll give the contents of the hello file:

Figure 3 - An example of the contents of the text file “hello.txt”

I won’t describe the learning process in detail, because all Java code is available on Github . I will give only a diagram of the use of this classifier:

Figure 4 - Scheme of the classifier.

After we trained our model, we proceed to the classification. Since, in the training data, we have identified several intent'ov, then the obtained probabilities there will be several.

there will be several.

So which one to choose? Choose the maximum!

And now the most interesting, the classification results:

The first results were a little disappointing, but in them I saw suspicious patterns:

This problem was solved by reducing the smoothing parameter ( ) from 0.5 to 0.1, after which the following results are obtained:

) from 0.5 to 0.1, after which the following results are obtained:

I consider the results successful, and given my previous experience with regular expressions, I can say that the naive Bayes classifier is a much more convenient and universal solution, especially when it comes to scaling training data.

The next step in this project will be the development of a module for defining named entities in the text ( Named Entity Recognition ), as well as improving current capabilities.

Thank you for your attention, to be continued!

Wikipedia

Stop Words

Stemmer Porter

Background

It all started on a plane, where I watched what at first glance seemed to be a typical American comedy, “Why Him?” (2016). One of the main characters has a voice assistant installed in his house, which immodestly bills itself as “like Siri, only cooler”. The bot in the film could not only banter cheekily with guests, occasionally swearing, but also control the entire house and grounds, from the central heating to flushing the toilet. After watching the movie, I got the idea to build something similar, and I started writing code.

Figure 1 - A still from the film: the voice assistant on the ceiling.

Getting started

The first stage was easy: I connected the Google Speech API for speech recognition and synthesis. The text returned by the Speech API was checked against hand-written regular expression patterns, and a matching pattern determined the intent of the person talking to the chatbot. Based on the intent detected by the regexp, a phrase was picked at random from the corresponding list of answers. If a sentence did not match any pattern, the bot replied with one of several prepared general phrases, such as “I like to think that I'm not just a computer”.
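A minimal Java sketch of this regex stage is shown below. The class name, intents, patterns and replies are hypothetical, and the speech recognition and synthesis calls are left out; only the text matching and the random reply selection are illustrated.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Random;
import java.util.regex.Pattern;

// Sketch of a regex-based intent matcher with canned replies.
// Intent names, patterns and replies are hypothetical examples.
final class RegexIntentBot {
    private final Map<String, Pattern> patterns = new LinkedHashMap<>();
    private final Map<String, List<String>> replies = new LinkedHashMap<>();
    private final List<String> fallbackReplies;
    private final Random random;

    RegexIntentBot(List<String> fallbackReplies, Random random) {
        this.fallbackReplies = fallbackReplies;
        this.random = random;
    }

    // Registers an intent with a case-insensitive pattern and its possible replies.
    void addIntent(String intent, String regex, List<String> answers) {
        patterns.put(intent, Pattern.compile(regex, Pattern.CASE_INSENSITIVE | Pattern.UNICODE_CASE));
        replies.put(intent, answers);
    }

    // Returns the first registered intent whose pattern occurs in the text, or null if none matches.
    String detectIntent(String text) {
        for (Map.Entry<String, Pattern> e : patterns.entrySet()) {
            if (e.getValue().matcher(text).find()) {
                return e.getKey();
            }
        }
        return null;
    }

    // Picks a random reply for the detected intent, or a random general phrase if nothing matched.
    String reply(String text) {
        String intent = detectIntent(text);
        List<String> pool = intent == null ? fallbackReplies : replies.get(intent);
        return pool.get(random.nextInt(pool.size()));
    }
}
```

Patterns are tried in the order they were registered, and the first match wins, so every new intent means another pattern to write and keep consistent with the others.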

Writing many regular expressions by hand for every intent is obviously laborious, so I went looking for alternatives and came across the so-called “naive Bayes classifier”. It is called naive because it assumes that the words in the analyzed text are independent of each other. Despite this simplification, the classifier performs well, as the results below show.

Writing a classifier

Simply feeding a raw string into the classifier will not work. The input string is first processed according to the following scheme:

Figure 2 - Input text processing
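The pipeline in Figure 2 can be sketched in Java roughly as follows. This is an illustration only: the class name and the stop-word list are hypothetical, and `crudeStem` is a deliberately naive suffix stripper standing in for a real stemmer. It is not the Porter stemmer for Russian mentioned below, and it works on English text to match the translated example phrases.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Set;

// Sketch of the preprocessing pipeline: tokenize, drop stop words, stem.
final class Preprocessor {
    // Hypothetical, tiny stop-word list; real lists contain hundreds of words.
    private static final Set<String> STOP_WORDS = Set.of("a", "an", "the", "is", "to", "you", "i");

    // Hypothetical suffixes for the toy stemmer, checked in this order.
    private static final String[] SUFFIXES = {"ing", "ed", "es", "s"};

    // Tokenization: lower-case the text and split on anything that is not a letter or digit.
    static List<String> tokenize(String text) {
        List<String> tokens = new ArrayList<>();
        for (String t : text.toLowerCase(Locale.ROOT).split("[^\\p{L}\\p{Nd}]+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    // Toy stand-in for a stemmer: strips the first matching suffix if at least 3 letters remain.
    // A real stemmer (e.g. Porter's) applies far more careful, language-specific rules.
    static String crudeStem(String word) {
        for (String suffix : SUFFIXES) {
            if (word.endsWith(suffix) && word.length() - suffix.length() >= 3) {
                return word.substring(0, word.length() - suffix.length());
            }
        }
        return word;
    }

    // Full pipeline: tokens without stop words, each reduced to its (toy) stem.
    static List<String> process(String text) {
        List<String> result = new ArrayList<>();
        for (String token : tokenize(text)) {
            if (!STOP_WORDS.contains(token)) {
                result.add(crudeStem(token));
            }
        }
        return result;
    }
}
```

For example, `process("Glad to welcome you, friend!")` drops the stop words “to” and “you” and returns `[glad, welcome, friend]`.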

I will explain each stage in more detail. Tokenization is simple: it just splits the text into words. Next, the so-called stop words are removed from the resulting tokens (an array of words). The final stage, stemming, is the most involved: it finds the stem of a given word. Note that the stem of a word is not always its root. I used the Porter stemmer for Russian (link below).

Let's move on to the mathematical part. Everything starts with Bayes' theorem:

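$$P(c \mid d) = \frac{P(d \mid c)\,P(c)}{P(d)}$$

where:

- $P(c \mid d)$ is the probability that intent $c$ applies to the input line $d$, in other words, to the phrase the person said to us;
- $P(c) = \dfrac{D_c}{D}$ is the probability of the intent: the number of documents $D_c$ belonging to intent $c$ divided by the total number of documents $D$ in the training set;
- $P(d)$ is the probability of the document; it is the same for every intent, so it does not affect which intent wins, and we discard it;
- $P(d \mid c)$ is the probability of the document given the intent. It is computed as

$$P(d \mid c) = \prod_{i=1}^{n} P(w_i \mid c),$$

where $w_i$ is the $i$-th token (word) of the document and $n$ is the number of tokens.

Writing this out in more detail:

$$P(w_i \mid c) = \frac{W_{ic} + \alpha}{L_c + \alpha\,|V|}$$

where:

- $W_{ic}$ is how many times token $w_i$ has been assigned to intent $c$;
- $\alpha$ is the smoothing parameter, which keeps the probability of a token never seen with this intent from dropping to zero;
- $L_c$ is the number of words belonging to intent $c$ in the training data;
- $|V|$ is the number of unique words in the training data.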
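As a worked illustration, the naive Bayes formulas can be transcribed almost literally into Java: the intent's prior is its share of the training documents, and each token probability is the token's count in the intent plus α, divided by the intent's word count plus α times the vocabulary size. The class and method names are hypothetical, and this is not necessarily how the project's own code computes it.

```java
import java.util.List;
import java.util.Map;

// Direct transcription of the naive Bayes formulas with additive smoothing.
// Plain multiplication is used here; very long phrases could underflow to 0.
final class BayesFormulas {
    // Prior of an intent: documents of this intent divided by all training documents.
    static double prior(int docsInIntent, int totalDocs) {
        return (double) docsInIntent / totalDocs;
    }

    // Smoothed token probability:
    // (occurrences of the token in the intent + alpha) / (words in the intent + alpha * vocabulary size).
    static double tokenProbability(int tokenCountInIntent, int wordsInIntent,
                                   int vocabularySize, double alpha) {
        return (tokenCountInIntent + alpha) / (wordsInIntent + alpha * vocabularySize);
    }

    // Prior times the product of token probabilities: the score compared across intents
    // once the document probability is dropped. Tokens missing from tokenCounts count as 0.
    static double unnormalizedScore(List<String> tokens, Map<String, Integer> tokenCounts,
                                    int docsInIntent, int totalDocs,
                                    int wordsInIntent, int vocabularySize, double alpha) {
        double score = prior(docsInIntent, totalDocs);
        for (String token : tokens) {
            int count = tokenCounts.getOrDefault(token, 0);
            score *= tokenProbability(count, wordsInIntent, vocabularySize, alpha);
        }
        return score;
    }
}
```

For instance, with hypothetical counts of 3 occurrences of a token, 20 words in the intent, 50 unique words and α = 0.5, `tokenProbability(3, 20, 50, 0.5)` gives 3.5 / 45 ≈ 0.078, while a token never seen with that intent still gets 0.5 / 45 ≈ 0.011 instead of zero.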

For training, I created several text files with descriptive names: “hello”, “howareyou”, “whatareyoudoing”, “weather”, and so on. As an example, here are the contents of the hello file:

Figure 3 - An example of the contents of the text file “hello.txt”
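Loading such files could look like the sketch below. The file layout is an assumption, since the contents of Figure 3 are not reproduced here: the intent name is taken from the file name without `.txt`, and every non-blank line is treated as one training phrase. The class name is hypothetical.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Sketch: load training phrases from a directory of *.txt files.
// Assumption: file name without ".txt" = intent, each non-blank line = one phrase.
final class TrainingDataLoader {
    static Map<String, List<String>> load(Path directory) throws IOException {
        Map<String, List<String>> data = new TreeMap<>();
        // The glob keeps only files ending in .txt.
        try (DirectoryStream<Path> files = Files.newDirectoryStream(directory, "*.txt")) {
            for (Path file : files) {
                String name = file.getFileName().toString();
                String intent = name.substring(0, name.length() - ".txt".length());
                List<String> phrases = new ArrayList<>();
                for (String line : Files.readAllLines(file, StandardCharsets.UTF_8)) {
                    String phrase = line.strip();
                    if (!phrase.isEmpty()) {
                        phrases.add(phrase);
                    }
                }
                data.put(intent, phrases);
            }
        }
        return data;
    }
}
```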

I won't describe the training process in detail, because all the Java code is available on GitHub. Here is just a diagram of how the classifier is used:

Figure 4 - Scheme of the classifier.
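Training a naive Bayes model essentially amounts to counting. The hypothetical sketch below collects everything the formulas need from already preprocessed phrases: the number of documents per intent and in total, the number of words per intent, how often each token occurs in each intent, and the vocabulary of unique words. It is an illustration, not the project's actual training code.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Sketch of training: gather the counts used by the naive Bayes formulas.
// Input: intent -> list of preprocessed phrases, each phrase being a list of tokens.
final class NaiveBayesCounts {
    final Map<String, Integer> docsPerIntent = new HashMap<>();            // documents per intent
    final Map<String, Integer> wordsPerIntent = new HashMap<>();           // words per intent
    final Map<String, Map<String, Integer>> tokenCounts = new HashMap<>(); // token counts per intent
    final Set<String> vocabulary = new HashSet<>();                        // unique words overall
    int totalDocs;                                                         // all documents

    static NaiveBayesCounts train(Map<String, List<List<String>>> data) {
        NaiveBayesCounts m = new NaiveBayesCounts();
        for (Map.Entry<String, List<List<String>>> entry : data.entrySet()) {
            String intent = entry.getKey();
            Map<String, Integer> counts = m.tokenCounts.computeIfAbsent(intent, k -> new HashMap<>());
            for (List<String> phrase : entry.getValue()) {
                m.totalDocs++;
                m.docsPerIntent.merge(intent, 1, Integer::sum);
                for (String token : phrase) {
                    counts.merge(token, 1, Integer::sum);
                    m.wordsPerIntent.merge(intent, 1, Integer::sum);
                    m.vocabulary.add(token);
                }
            }
        }
        return m;
    }
}
```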

Once the model is trained, we move on to classification. Since the training data contains several intents, we get several probabilities, one for each intent.

So which one do we choose? The maximum, of course!
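Choosing the maximum can be sketched as below. The method takes the training counts as plain maps (hypothetical parameter names) and applies the smoothed formula with the smoothing parameter α. As a choice made for this sketch rather than something stated in the article, it sums logarithms instead of multiplying probabilities: the logarithm is monotonic, so the winning intent is the same, while long products of small numbers no longer risk underflowing to zero.

```java
import java.util.List;
import java.util.Map;

// Sketch of classification: argmax over intents of log P(c) + sum of log P(w_i | c).
final class NaiveBayesClassifier {
    static String classify(List<String> tokens,
                           Map<String, Integer> docsPerIntent,            // documents per intent
                           Map<String, Integer> wordsPerIntent,           // words per intent
                           Map<String, Map<String, Integer>> tokenCounts, // token counts per intent
                           int vocabularySize,                            // unique words overall
                           double alpha) {                                // smoothing parameter
        int totalDocs = 0;
        for (int n : docsPerIntent.values()) {
            totalDocs += n;
        }
        String best = null;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (Map.Entry<String, Integer> entry : docsPerIntent.entrySet()) {
            String intent = entry.getKey();
            // Log of the prior: share of training documents belonging to this intent.
            double score = Math.log((double) entry.getValue() / totalDocs);
            int wordsInIntent = wordsPerIntent.getOrDefault(intent, 0);
            Map<String, Integer> counts = tokenCounts.getOrDefault(intent, Map.of());
            for (String token : tokens) {
                int count = counts.getOrDefault(token, 0);
                // Log of the smoothed token probability.
                score += Math.log((count + alpha) / (wordsInIntent + alpha * vocabularySize));
            }
            if (score > bestScore) {
                bestScore = score;
                best = intent;
            }
        }
        return best;
    }
}
```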

And now for the most interesting part, the classification results:

| No. | Input phrase | Detected intent | Correct? |
|---|---|---|---|
| 1 | Hello, how do you do? | Howareyou | Yes |
| 2 | Glad to welcome you friend | Whatdoyoulike | No |
| 3 | How was yesterday | Howareyou | Yes |
| 4 | What is the weather outside? | Weather | Yes |
| 5 | What weather is promised for tomorrow? | Whatdoyoulike | No |
| 6 | I'm sorry, I need to go away | Whatdoyoulike | No |
| 7 | Have a good day | Bye | Yes |
| 8 | Let's get acquainted? | Name | Yes |
| 9 | Hi | Hello | Yes |
| 10 | Glad to welcome you | Hello | Yes |

The first results were a little disappointing, but I noticed some suspicious patterns in them:

- Phrases No. 2 and No. 10 differ by just one word, yet give different results.
- All misclassified phrases were assigned the whatdoyoulike intent.

This problem was solved by reducing the smoothing parameter ($\alpha$) from 0.5 to 0.1, after which the results were as follows:

| No. | Input phrase | Detected intent | Correct? |
|---|---|---|---|
| 1 | Hello, how do you do? | Howareyou | Yes |
| 2 | Glad to welcome you friend | Hello | Yes |
| 3 | How was yesterday | Howareyou | Yes |
| 4 | What is the weather outside? | Weather | Yes |
| 5 | What weather is promised for tomorrow? | Weather | Yes |
| 6 | I'm sorry, I need to go away | Bye | Yes |
| 7 | Have a good day | Bye | Yes |
| 8 | Let's get acquainted? | Name | Yes |
| 9 | Hi | Hello | Yes |
| 10 | Glad to welcome you | Hello | Yes |
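The effect of lowering the smoothing parameter α from 0.5 to 0.1 can be illustrated with the smoothed formula, (token count in the intent + α) / (words in the intent + α × vocabulary size). The sketch below uses purely hypothetical counts, not the author's data: intent A saw a word twice among its 10 training words, intent B never saw it among 100 words, and the vocabulary holds 200 unique words. It compares how strongly that one word favors A.

```java
// Sketch: how the smoothing parameter alpha changes the weight of an observed word.
// All counts are hypothetical: intent A saw the word twice among 10 words,
// intent B never saw it among 100 words, vocabulary size is 200.
final class SmoothingEffect {
    // Smoothed token probability: (count + alpha) / (wordsInIntent + alpha * vocabularySize).
    static double tokenProbability(int count, int wordsInIntent, int vocabularySize, double alpha) {
        return (count + alpha) / (wordsInIntent + alpha * vocabularySize);
    }

    // Ratio P(w | A) / P(w | B): how many times more this word supports intent A than intent B.
    static double likelihoodRatio(double alpha) {
        double pA = tokenProbability(2, 10, 200, alpha);
        double pB = tokenProbability(0, 100, 200, alpha);
        return pA / pB;
    }
}
```

With these numbers the ratio is about 9.1 at α = 0.5 and 84 at α = 0.1: a larger α pulls all token probabilities toward the same value, whereas a smaller α lets the words actually seen in an intent's training phrases weigh much more heavily in the decision.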

I consider these results a success. Compared with my earlier regular-expression approach, the naive Bayes classifier is a far more convenient and versatile solution, especially when it comes to scaling up the training data.

The next step in this project will be a module for recognizing named entities in text (Named Entity Recognition), along with improvements to the existing features.

Thank you for your attention. To be continued!

References

- Wikipedia
- Stop words
- Porter stemmer