[Java Archeology] Context-sensitive tracing inlining in Java

- Transfer

Briefly about the article

Method inlining is one of the most important optimizations in JIT compilers (which are called "method-based" or "block" due to it). This optimization broadens the scope of compilation, allowing you to optimize several methods as a whole, which improves application performance. However, if you use method inlining too often, the compilation time will become unnecessarily long, and too much machine code will be generated. And this will affect productivity already negatively.

Tracing JIT compilers do not collect everything in a row, but only frequently executed paths, the so-called traces. With this, you can get faster compilation, reduce the amount of generated machine code, and improve its quality. In our previous works, we implemented the infrastructure for recording traces and the tracing Java compiler, modifying the Java code of HotSpot VM. Based on this work, we calculated what effect inlining of traces has on productivity and the amount of generated code.

Compared to inlining methods, inlining traces provides several important advantages. Firstly, inline tracing is more selective because only frequently executed paths inline. Secondly, recorded traces can contain information about virtual calls, which simplifies inlining. The third advantage is that the tracing information is context-sensitive, so that the various parts of the method may inline depending on the particular location of the call. These advantages make it more aggressive to inline when the amount of generated code is still within reasonable limits.

We tested several inlining heuristics using the DaCapo 9.12 Bach, SPECjbb2005, SPECjvm2008 benchmarks, and showed that our tracing compiler allows us to achieve 51% more peak performance than the block client compiler from HotSpot. Moreover, we showed that the expansion of the compilation area in our tracer compiler had a positive effect on other optimizations, such as folding constants and eliminating null checks.

Translation Notes

License: CC BY 3.0 (Creative Commons Attribution 3.0 Unported) The

words “trace” and “inlining” were left without translation, because these words entered the Russian spoken language too firmly. When you try to discuss with your colleague "building tracks", you may find yourself misunderstood.

If one code calls another, then the calling party is called the sender (caller, sender), and the called party is called the receiver (callee, receiver). These words are far-fetched, but they are short and general, and, thanks to this, do not clutter up the text.

Method-based compilers are referred to here as “block compilers”. This neologism was also introduced in order to reduce the clutter of the text and eliminate ambiguities in the Russian version.

Pictures and diagrams are not redrawn, and contain inscriptions in English. Sorry.

The translation is kept close to the text even where it causes unreasonable pain to the translator. In particular, endless self-repetitions, “introductions”, exceeding the content of the article in volume, a hundred times complex sentences - a frequent companion of various Java research papers. If you already have a desire to fill someone’s face, then contact the authors with these desires.

Translation Notes: Target Audience

The other day we were soaring in a bathhouse, and in the children of the revelry the idea sounded that someday the history of software would be studied in the same way as now - the history of Russia. Special software archaeologists will dig out wonders from the depths of ancient repositories, carefully brush off dust and try to collect.

Having returned home, I found that half of the documents from the “must read” daddy consist of documents 5+ years ago, which in our times is already “a long, long time ago, in a distant, distant galaxy”. That is, excavations can be started right now, why wait.

Therefore, it is difficult to give any recommendation whether it is worth reading this article and the following.

Sometimes you can dig a diamond in the mud, and sometimes rubbish is just rubbish.

On average, the text is intended for enthusiasts in the development of virtual machines.

Brief information about the authors of the text and the translator is given at the very end of the article.

And now we are transported to 2013, spring, April, birds are singing, grandfathers Christian Häubl, Christian Wimmer and Hanspeter Mössenböck are sitting on a bench and tell us about context-sensitive inlining of traces.

1. Introduction

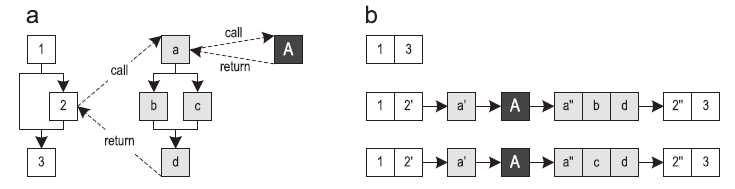

JIT compilers based on method inlining (hereinafter referred to as “block compilers”) translate the whole methods, turning them into optimized machine code. Tracer compilers use frequently executed paths as a compilation unit [1]. This improves peak performance while reducing the amount of machine code generated. Figure 1 shows a control flow graph (CFG) for three methods and three paths along them. The beginning of the trace is called the anchor (trace anchor), which is represented by block 1 for all the traces in this example. Which blocks will become anchors depends heavily on the specific implementation of the trace recording system.

Figure 1. Traces going through three methods: (a) control flow graph (b) possible traces

In a virtual machine, traces can be recorded by instrumenting the execution of bytecode. Then these traces are compiled into optimized machine code. If the part of the method that has not yet been compiled is executed, the system usually returns to interpreter mode.

Most current trace recording implementations allow traces to cross method boundaries. [1, 2, 10, 12, 18]. This can lead to large traces that must be compiled together.

In the previous work [14, 15], we implemented a tracing JIT compiler based on the client compiler from Oracle Java HotSpot. Our previous conference article [15] focused on inlining traces and included the following:

- Description of how to do inlining traces and discuss its advantages over inlining methods

- Description of several inlining features implemented on a native tracer JIT compiler

- Evaluation of the influence of heuristics of trace inlining on compilation time, peak performance, and the amount of generated machine code for the DaCapo 9.12 Bach benchmark [3].

This article is an extended version of the document described above, and adds the following new aspects:

- A more detailed description of recording and inlining traces

- A description of why compiler intrinsics for native methods benefit from expanding the compilation area resulting from trace inlining

- Evaluation of our specific heuristics on benchmarks SPECjbb2005 [23] and SPECjvm2008 [24].

Moreover, we compare the peak performance of our best heuristics with the HotSpot server compiler. - A study of which high-level optimizations in the compiler benefit from trace inlining as a result of expanding the compilation area.

The rest of this article is organized as follows:

- overview part 2 gives a short introduction to our tracer virtual machine

- part 3 will show the system of recording traces,

- in part 4 we will tell you how to do inlining

- Part 5 introduces the reader to various heuristics

- part 6 discusses benchmark results

- in part 7 we discuss related work

- and part 8 concludes this article.

2. A quick look

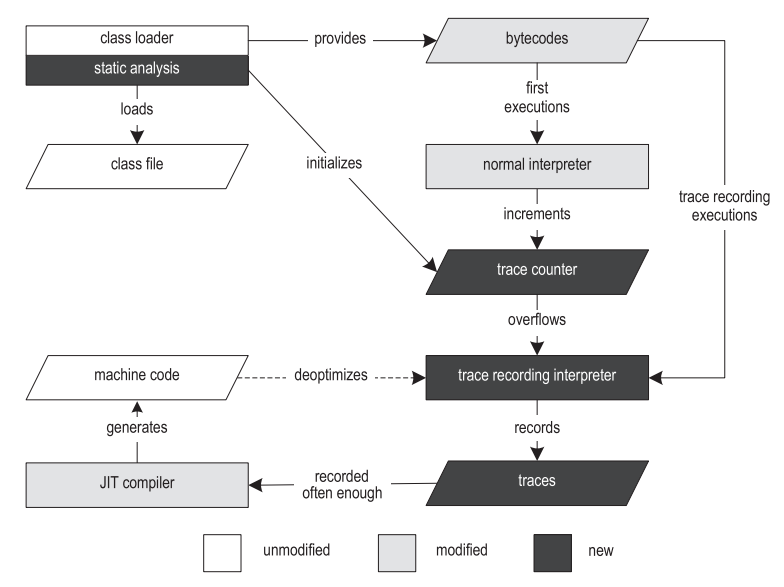

In our previous work, we implemented an infrastructure for recording traces and a tracing JIT compiler for Java [14,15]. Figure 2 shows the structure of our VM.

Figure 2. The structure of the tracer virtual machine.

Execution begins with the class loader, which loads, parses and verifies class files. The class loader provides runtime data structures, such as the Constant Pool and Method Object, which reference other parts of the VM. After the class is loaded, the bytecode preprocessing step is performed, it allows you to find loops and creates data structures specific to the tracing procedure.

To record the traces, we duplicated the Template Interpreter from HotSpot [13], and instrumented it. As a result, we got both an ordinary interpreter and an interpreter with recording traces. A conventional interpreter executes bytecode at approximately the same speed as an unmodified VM, and is used for initial executions. When a conventional interpreter comes across an anchor of a trace, it takes the call counter of that anchor and increments it by one. When the counter overflows, the anchor is marked as “hot”, and execution switches to the interpreter with the recording of traces (hereinafter referred to as the “recording interpreter”). The current implementation supports two different types of traces: cycle traces with an anchor on the loop header, and method traces with an anchor on the method entry.

HotSpot provides two different JIT compilers that share the majority of the VM infrastructure. The client compiler (client compiler) was created to ensure high startup performance and implements the most basic optimizations, however, it is quite sufficient to achieve decent performance [19]. At compile time, the compiler generates a high level intermediate representation (HIR) cast to SSA (static single assignment) [7], which is a control flow graph (CFG). Both during and after the construction of the HIR, such optimizations as convolution of constants, elimination of checks for null and inlining of methods are applied. The optimized HIR translates into a low-level intermediate representation (LIR), which is very close to machine code, but still mostly platform independent. Then, LIR is used for register allocation by linear scanning [27] and code generation.

The server compiler (server compiler) performs significantly more optimizations than the client, and produces highly efficient code that can produce the highest possible peak performance [21]. It is intended for long-lived server applications, such that the initial JIT compilation introduces only a small overhead compared to the total execution time. The server compiler goes through the following compilation phases: parsing independent of the optimization platform, instruction selection, global code movements and scheduling, allocation of registers for graph painting, peephole optimization and code generation. The server compiler can apply some more additional optimizations, such as loop-invariant code motion, loop unrolling, and escape analysis.

Our own tracer JIT compiler is based on the client compiler from HotSpot. Despite the fact that our techniques are generalized enough to be applicable also to the server compiler, its complex structure is much less suitable for modifications that are needed for tracing compilation, especially in the context of a research project. Therefore, we decided to use the client compiler as the basis.

When the traces begin to be created often enough, our compiler first merges them into the traces graph. This structure is a hybrid between the control flow graph (CFG) and the tree of traces [10], and even if there are merging points in it, some paths can be duplicated if this gives some advantage. At this level, we do general and trace-specific optimizations, such as constant folding, aggressive trace inlining, and explicit duplication of the control flow. The machine code generated in this case is subsequently directly called by either the interpreter or other compiled traces.

If the precondition of aggressive optimization was violated during execution, our system is deoptimized [18] to a recording interpreter. Deoptimization first saves all values that are alive in the current compiled frame, and then replaces this compiled frame with one or more interpreted frames. The exact number of interpreted frames created depends on the inlining depth of the instruction currently being executed. Then the interpreted frames are filled with previously saved values, and execution continues in the recording interpreter.

When a recording interpreter enters the code, instead of using the anchor of the main trace, it can write a partial trace that starts directly from the point of deoptimization. To notice too frequent deoptimizations of the compiled code, the counter is incremented every time deoptimization occurs. After reaching the threshold, the compiled machine code is discarded, and a new compilation is launched, which uses both the originally recorded traces and all partial traces. This allows you to do something that will reduce the frequency of deoptimization - for example, you can increase the coverage of methods or turn off some specific aggressive optimizations.

3. Recording Traces

Our approach to recording traces limits the trajectory to the boundaries of one method [14]. At the moment when we found that the anchor began to be executed quite often, execution switches from the usual to the recording interpreter. To record a trace, each thread carries a stack of traces that stores all the traces that are currently being written. Information about instructions that can modify the control graph is stored in the topmost trace on the stack, and the stack is modified accordingly to support this rule.

When a method call is made during a trace recording, the call is recorded in the call source trace. To call virtual methods, in addition to this, a receiving class is also written. Upon entering the called method, a new method trace is pushed onto the stack, and recording continues.

When the called method returns, the corresponding trace is taken from the stack and stored in the trace repository. Then the calling and called tracks are connected by saving the pointer to the called in the calling track, and further recording goes to the calling track. This relationship saves context-sensitive information about the call across the boundaries of the method, which leads to the appearance of a data structure similar to a dynamic graph of calls.

When the already saved trace is recorded again, instead of recording it completely in a new way, a simple increase in the counter occurs. We believe that traces are different if they went in different ways, or if they invoke different traces inside the called methods. Therefore, linking traces allows us to obtain accurate call information for each completed path that passes through any part of the application. To reduce the number of recorded traces to a reasonable value, we do not associate traces of cycles and recursive methods with the parent trace. Immediately after the trace recording is performed a certain number of times for the same anchor, it is assumed that all important traces for this anchor are already recorded, and they are compiled into optimized machine code.

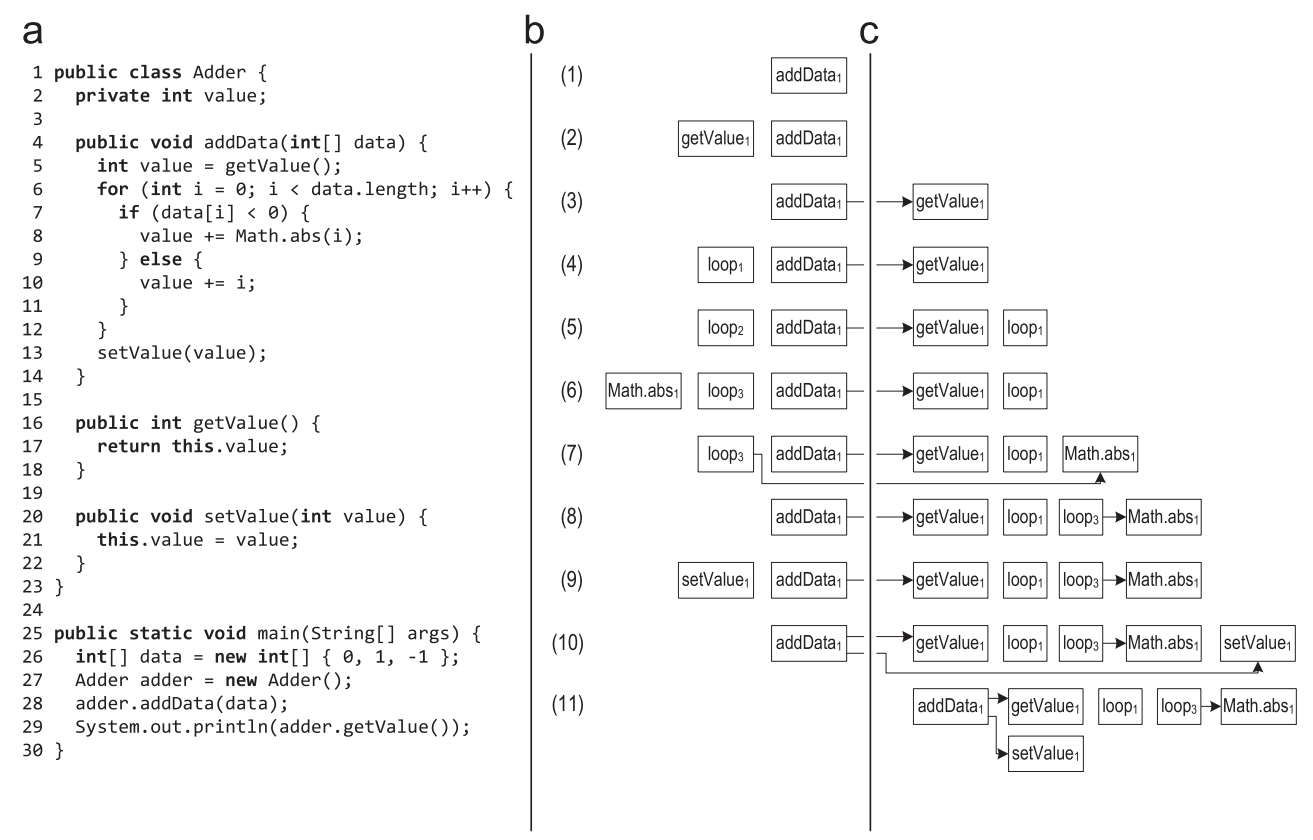

Figure 3 shows an example of a trace record in which the record began for the addData method.

Figure 3. The stack of traces during the recording of the trace: (a) the source code (b) the stack of traces (c) the traces stored in the repository

- When the anchor of the addData () method is marked as hot, execution switches to the recording interpreter, and the method trace is placed on the trace stack. The method is executed from the very beginning until the virtual getValue () method is called. When making a virtual call, the calling class and the receiving class are stored in the calling trace.

- When you enter the getValue () method, the new trace of the method is put on the stack of traces, and the recording continues in it.

- When getValue () returns, the corresponding trace is taken from the stack and stored in the trace store. After that, the traces are connected by saving the pointer to the getValue () trace in the getData () trace. Execution and writing continues for addData (), and reaches the loop header.

- To record a cycle, a new cycle trace is pushed onto the stack.

- After the first iteration of the loop, when the execution returns to the loop header, the trace of this loop is taken from the stack and saved. For the next iteration of the loop, a new loop trace is pushed onto the stack. The second iteration is performed in the same way as the first, so the system understands that such a trace has already been recorded, and does not save it in a new one, but only increases the counter in the old saved trace.

- The third iteration of the loop goes a different way, the Math.abs () method is called, and for it a new method trace is put on the stack.

- When Math.abs () returns, the corresponding trace is saved and associated with the calling trace.

- After that, execution reaches the loop header, and the loop ends. The cycle trace is taken from the stack and saved.

- After exiting the loop, the virtual setValue () method is called. The calling and receiving classes are stored in the calling trace, and the new method trace is pushed onto the stack when it enters setValue ().

- When setValue () returns, the corresponding trace is taken from the stack, saved, and associated with the calling trace.

- In the end, the addData () method returns, it also gets from the stack and is saved.

- After that, the stack is empty and execution switches back to the regular interpreter.

In this example, we meant that for the called methods and the loop, the traces did not compile. If the traces for the getValue () method were precompiled, then calling getValue () would lead to the execution of the compiled machine code instead of interpreting the method. A recording interpreter would not be able to put a new method trace on the stack, and would not be able to write a control flow for called methods. In this case, our approach to recording the trace could not ensure the preservation of specific information about the control flow when crossing the boundaries of the methods. It would be possible not to call the compiled code, and instead, at least once, force the execution of this code in the recording interpreter, having received all the necessary information. However, this would drastically reduce startup performance, because the application would be interpreted much longer.

For best recording performance, all frequently performed operations (such as recording information about specific instructions) are directly implemented in the recording interpreter in the form of assembler templates. More complex operations, such as saving recorded traces, are implemented in the interpreter runtime written in C. Our infrastructure for recording traces also supports efficient multithreading, so any thread in Java can independently switch between a regular and a recording interpreter. Each thread uses a thread-local buffer to record the trace in order to achieve the best write performance.

During recording, several threads can simultaneously work on data structures that store recorded traces. We noticed that for most anchors a very small number of traces are recorded, so saving a new trace is rarely required, but increasing the execution counter is required constantly. Therefore, we store saved traces in data structures that use a minimum number of locks and atomic instructions when reading. When it seems to the system that it will find a new trace, we block this data structure for other recording threads, and under blocking, we double-check whether this trace is really new, and only then add it to the repository. In such a simple way, for the most common case, we avoid synchronization and atomic machine instructions,

4. Inlaying traces

Method inlining replaces method calls with copies of real executable code. Inlining heuristics can be divided into static and dynamic.

The HotSpot client compiler uses a simple static method inlining heuristic, in which the method size is compared with a fixed limit. Virtual methods are inline using static class hierarchy analysis (CHA) [8]. This analysis will determine if the method has been overridden by any of the loaded subclasses, in which case it can be optimistically inlined. If then another subclass is loaded that overrides the optimistic inline method, the generated machine code is thrown away. In contrast, dynamic inlining heuristics use information from the profile, and on the basis of it they decide whether the method is inline.

Our tracer JIT compiler supports both types of heuristics simultaneously, using recorded information about traces. As with method inlining, tracing inlining replaces calls with copies of real executable code. This increases the compilation area and can improve performance.

4.1. Benefits of Trailing Inlining

Tracing inlining has several advantages over inlining methods:

- Trace inlining only processes frequently executed traces, not the entire methods. Block compilers try to use profile information to avoid compiling rarely used parts of methods [9,25,26]. This allows you to achieve an effect similar to inlining traces, but it is just an additional secondary approach.

- The recorded traces contain context-sensitive information about which parts of the methods are used, and by whom exactly. This information is stored when crossing the boundaries of the methods and can be used in order not to inline those parts of the methods that are generally used very often, but which are not needed right now by a specific sender.

- Traces contain information about the recipient of the virtual call, and as a result of linking the traces, this information is also context-sensitive. It may turn out that a certain place of a call calls only methods that have a very specific type of recipient. This information can be used for aggressive inlining of virtual methods. Block compilers also use profile information for aggressive inlining of virtual calls, but in most compilers this information is not context-sensitive.

4.2. Implementation

Inlining begins with calculating the maximum size of the trace that needs to be inline at the current call site. This mainly depends on the relevance to this place of the call (see part 5.1). Then a heuristic is used that decides whether it is worth inlining the called traces at this point in the call. To a large extent, it all depends on the size of these traces, because too many traces lead to bloat code.

Tracing inlining is similar to inlining methods except that traces usually do not cover the entire bytecode of the recipient. We take the traces that need to be inlined, build a graph from them, and replace the method calls with the contents of this graph. Then, all return operations located inside the inline bytecode are replaced by direct jumps with an instruction following the call instruction, and the exception throwing instructions are associated with exception handlers located in the sender's trace.

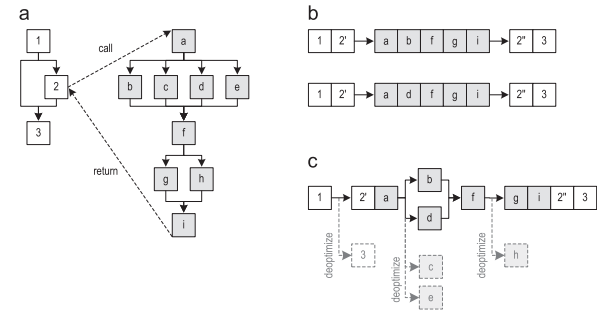

Figure 4 (a) shows the control flow graph for the two methods. Two traces passing through these methods are shown in Figure 4 (b). After the recording of the trace began to be performed quite often, the recorded traces are sent for compilation. The resulting graph obtained after inlining the traces (but without explicit duplication of the control graph) is shown in Figure 4 (c). Then the trace graph is compiled into optimized machine code. If one of the deleted blocks still needs to be executed in the future, the compiled code is deoptimized to the interpreter.

Figure 4. In-line tracing of a method: (a) a graph of a control flow (b) recorded traces (c) a graph of traces after inlining

It is also interesting that we removed the edge going from block 1 to block 3, even despite the fact that the trace graph does not contain block 3. This is a great idea, because it allows us to avoid merging control flows. If merging were done, this would limit possible compiler optimizations. Removing non-executable edges leads to a more optimized machine code.

In most cases, we inline only those traces that are called by the current sender. However, if the recipient’s trails were compiled before the trace record was enabled for the sender, this sender does not know what compiled traces he needs. In these cases, we conservatively believe that all the recipient's traces are suitable for inlining, except for those for which we can prove that they cannot be called by the current sender, due to the use of certain parameters that the sender passes to the recipient. Behind this is a technology similar to dead code elimination (DCE) in block compilers, but it allows you to delete entire traces instead of base blocks. To further reduce the number of inline traces, we also filter out rarely executed traces (see part 4.

To call virtual methods, information about the recorded trace is combined with CHA from the HotSpot client compiler, which allows you to determine the specific recipient class for the current call location. If the CHA defines one specific target method, the traces of the called method are inlined in the same way as the client compiler inlines the methods. If the CHA finds several possible target methods, we try to use the recorded sender classes to aggressively inline the traces of the methods. To do this, we add a runtime check, which compares the actual recipient type with the expected type and deoptimizes the interpreter if the types do not match. By combining CHA and context-sensitive trace information, we can inline virtual methods more often than most block compilers,

In addition to inlining traces of methods, we began to support inlining of cyclic traces. Figure 5 (a) shows the graph of traces constructed for the traces of the method that called the cyclic trace. Looping trails are not yet inlined, so the loop is presented as a black box that is still unknown to the compiler. In the next step, a separate graph of traces is constructed, only for cyclic traces - Figure 5 (b). Further inlining replaces the black box in the sender graph with the loop graph, and associates all exits from the loop with their correct continuation blocks using jump instructions. In this example, block b is connected to block e, and block c is connected to block d, which leads to the trace graph shown in Figure 5 (c).

Figure 5. Cycle trace inlining: (a) method trace graph (b) cycle trace graph (c) state after loop

inlining During loop tracing inlining, we consider that all traces recorded for a particular cycle are candidates for inlining. This is necessary because cyclic traces are never directly associated with their calling trace, and therefore no context-specific call information is available. However, we use information about the parameters and local variables that are included in this cycle, and thanks to them we delete those traces for which it is possible to prove that they cannot be called by the current sender. Moreover, we also delete traces that are not executed often enough.

A more complicated case is when an inline loop has an output, the continuation of which does not exist in the sender graph. In Figure 5 (a), block d may disappear, for example, because it has never been recorded. Despite this, both exits from the cycle can be represented in recorded cyclic traces, as shown in Figure 5 (b). One of the ways this can happen: loop traces were compiled before the method trace was recorded. Initially [15], we circumvented this problem by explicitly adding deoptimization points to all exits from loops that could not be associated with a continuation, and execution was deoptimized every time the loop terminated along this path. Now we just delete the cyclic traces that end with the exit from the cycle, which is unknown to the current sender.

4.3. Context dependent

Our recording infrastructure limits the size of a trace to a fixed depth of 1 method, so the tracing compiler relies heavily on aggressive inlining of traces [15]. The mechanism for recording traces saves context-sensitive information when crossing the border of the method, so each sender knows which parts of the recipient he should inline. This helps the compiler to avoid inlining those parts of the method that are generally executed quite often, but not relevant to the current sender. This reduces the amount of machine code generated and reduces the number of merge points, which generally has a positive effect on peak performance.

Block compilers also use profile information to remove code that never executes. However, the information in their profiles is usually not context-sensitive, which is why they cannot decide which parts of the method exactly which sender needs. Context-sensitive information in a profile can, in principle, be written for a block compiler as well, but we believe that trace writing and a tracing compiler greatly simplify this process.

Figure 6 shows the indexOf () method for the ArrayList class from the JDK.

Figure 6. ArrayList.indexOf () method

The first part of the method handles the rare case of null search, the second part searches for a list of non-null objects. Most senders only need the second part of the method. However, if at least one sender appears in the application that executes the first part of the method, the information in the block compiler profile will show that the first part has also been executed. Therefore, every time a block compiler inlines the indexOf () method, it also inlines this rarely executed piece of code. We have context-sensitive information, and our tracing compiler can avoid the above problem when the sender does not need any part of the method.

Since inlining is more legible in that it is going to inline, our tracing compiler can use a more aggressive inlining policy, for example, it can inline traces that follow methods that are too large in volume to be completely inline. This increases the compilation area without having to inline more Java bytecode than the block compiler does. In particular, for complex applications, this leads to a more optimized machine code, and has a positive effect on peak performance.

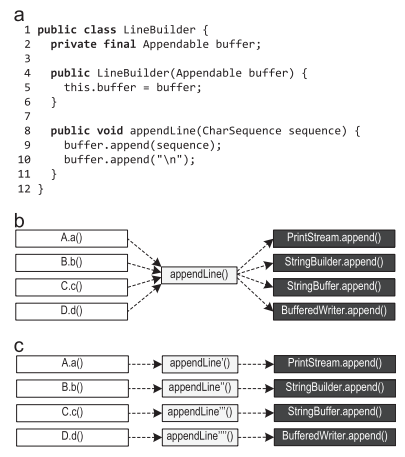

Figure 7 (a) shows the LineBuilder class, which wraps the Appendable and provides the appendLine () method.

Figure 7. Context-sensitive information: (a) pattern (b) possible method calls (c) preferred inlining

If multiple LineBuilder objects are used to wrap instances of various classes, such as PrintStream, StringBuilder, StringBuffer, and BufferedWriter, then the append () calls on lines 9 and 10 will become polymorphic calls that aren’t so easy to inline, as shown in Figure 7 (b).

If dispatching in appendLine () depends on the place of the call, for example, because different LineBuilder objects are used at different places of the call, inlining is preferable, as shown in Figure 7 (c). Our context-sensitive information about the trace also stores the recipient of virtual calls. Therefore, our tracer compiler can do exactly this preferred inlining from Figure 7 (c), using the available context-sensitive information for aggressive inlining of virtual calls. If the compiler does not write context-sensitive information to the profile, but simply collects all detected types (such as PrintStream, StringBuilder, StringBuffer and BufferedReader with buffer.append ()), it will not find enough information to inline such virtual calls.

In the previous version of our tracer compiler, in those cases when the trace record showed that the called method always belongs to the same recipient type, we used only the type information. Such inline traces are protected by a “type guard”, which compares the actual recipient type with the expected type, and deoptimizes to the interpreter in case the types do not match. In this article, we have expanded inlining of traces in the following ways:

- If the same method is always called from the place of the call, but it is executed on different types of the recipient, then it was previously impossible to inline such a method. However, in reality this situation occurs quite often, for example, if the abstract base class implements a method that does not overlap (override) in the subclass. We were able to create this kind of inlining using the so-called method guard, which refers to the virtual table of methods of the real recipient and compares the called method with the expected method. If the methods do not match, we will deoptimize to the interpreter.

- If the same method is always called from the place of the call, but does it on the receiver of the interface type, we extend the "type guard" to a structure similar to switch, so that it can check several types of recipients at once. This is cheaper than searching through interfaces, and allows us to inline calls to interface methods in a fairly decent number of cases. If the actual recipient type does not match any of the expected types, we will deoptimize to the interpreter.

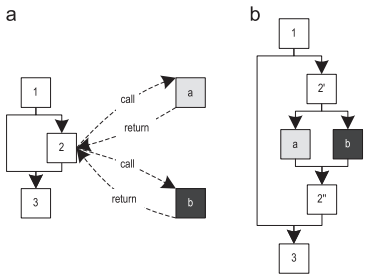

- Another improvement is inlining polymorphic calls. Figure 8 (a) shows a method in which virtual calls can call two different methods. Since these methods are small enough, it makes sense to inline both. This leads to the control flow shown in Figure 8 (b), in which block 2 'throws / dispatches the flow to one of the inline methods, depending on the actual type of receiver. Here we also use switch-like semantics, so that several types can be thrown to the same inline method at once. If the actual recipient type does not match any of the expected types, we will deoptimize to the interpreter.

Figure 8. Polymorphic inlining: (a) polymorphic challenge (b) polymorphic inlining

The HotSpot server compiler also inlines polymorphic calls, but limits the number of methods being called to a maximum of two, since a large number of them can easily lead to code bloat. Our tracer compiler inlines method chunks more selectively, because it has context-sensitive information about recorded traces. Therefore, we can avoid inlining those pieces of the method that, on the whole, were often executed, but not needed by the current sender. Moreover, the recorded type information is also context-sensitive, which reduces the number of candidates for inlining. Therefore, our tracer compiler does not have a limit on the number of inline methods, but instead limits the total number of all inline methods, depending on the frequency of execution of a particular call location.

4.4. Native methods

Java code can call native methods using the Java Native Interface (JNI). This mechanism is mainly used to implement platform-specific functions that cannot be expressed in Java in any other way. Since no Java code is executed for these methods, writing their traces is not possible.

HotSpot uses compiler intrinsics for the most important platform-specific methods, so the JIT compiler can inline such methods. If our tracer JIT compiler compiles a trace graph that contains a call to a native method implemented as an intrinsic of the compiler, we do exactly the same inlining as the block compiler. However, our tracer compiler still has one advantage: traces are smaller than methods, so our tracer compiler can inline traces more aggressively than the block compiler inline Java methods. This extends the scope of compilation, and the code that calls the native method has an accurate knowledge of the parameters that go into this native method. The JIT compiler can use this information to aggressively optimize inline compiler intrinsics.

Figure 9 (a) shows the pseudo-code for implementing the native System.arraycopy () method, which is used to copy primitive arrays.

Figure 9. Pseudocode of the System.arraycopy () method for copying primitive arrays: (a) non-optimized code (b) optimized code

Depending on what information about the parameters passed to System.arraycopy () is known to the compiler, it can optimize intrinsics. Figure 9 (b) shows an optimized version of the method where the compiler can optimally exploit parameter values. The necessary information about the parameters, for this specific example, becomes available in the place where the source array and the target array are located in the same compilation area in which System.arraycopy () is inline. That is, increasing the compilation area can help us increase the performance of inline compiler intrinsics.

4.5 Trace Filtering

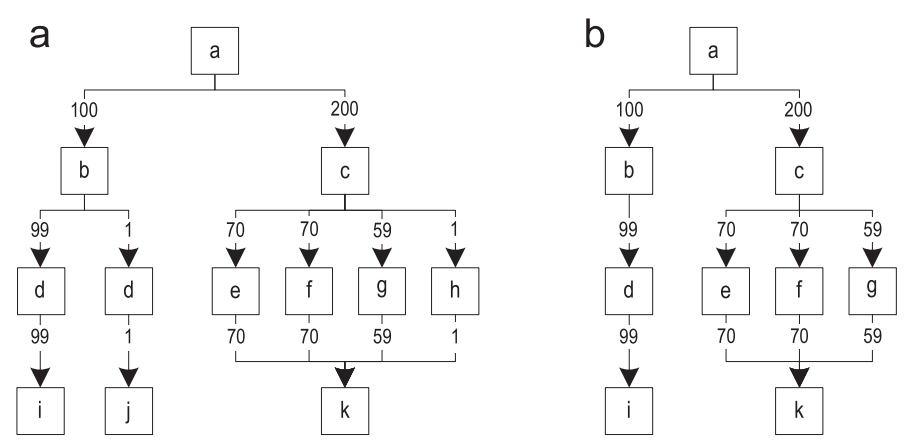

When a trace has already been recorded, there is a good chance that this trace is quite important, and will often be performed. However, sometimes it happens that recorded tracks are rarely performed. Throwing away these traces, we can guarantee that only important paths will be compiled. Figure 10 (a) shows the graph of traces after merging all recorded traces. The edges of the graph are annotated by the frequency of execution.

Figure 10. Filtering rarely performed traces: (a) the original trace graph (b) the trace graph after filtering

For each block, we determine the most often executed outgoing edge, and compare this frequency with all other outgoing edges of the same block. Then, remove the edges with a frequency that is one hundred times less. After processing all the blocks, we remove the no longer available blocks from the graph. Figure 10 (b) shows the resulting graph after filtering.

The recorded information about the traces saves the behavior of the program, which was observed only during a specific time window. At later stages of execution, rarely executed (and therefore remote) paths can become important again, because program behavior changes over time. This leads to frequent deoptimizations, since paths not compiled in advance begin to run. If unnecessarily frequent deoptimization is detected, the old compiled machine code is discarded and a new compilation starts. This compilation avoids filtering traces for those cases that led to frequent deoptimization.

Filtering traces has several difficult cases that you should take care of separately:

- For most loops, the loop body is performed much more frequently than exiting the loop (see Figure 5 (c)). Therefore, the frequency of exits from the cycle should be compared with the frequency of entry into the cycle, but not with the frequency of the previous brunch. Otherwise, the edges of the exits from the cycle will be thrown out, and upon exiting the cycle, deoptimization is required. This will increase the frequency of deoptimizations and reduce the compilation area.

- Aggressive inlining of traces can inline rarely performed traces. These traces do not necessarily reflect normal behavior, so filtering can throw away important traces too. This again leads to frequent deoptimizations, so filtering should be avoided for methods with not enough traces, and for loops.

5. Trail inlining heuristics

All inlining heuristics presented in this part are similar in that they first calculate the relevance of the call location, and then use the relevance value to calculate the size to be inlined. The specific decision about the need for inlining is a simple comparison of the maximum size with the actual size of the traces that we decided to inline. For evaluation, we used a combination of several heuristics with various relevance calculation algorithms.

5.1. Relevance of the call site

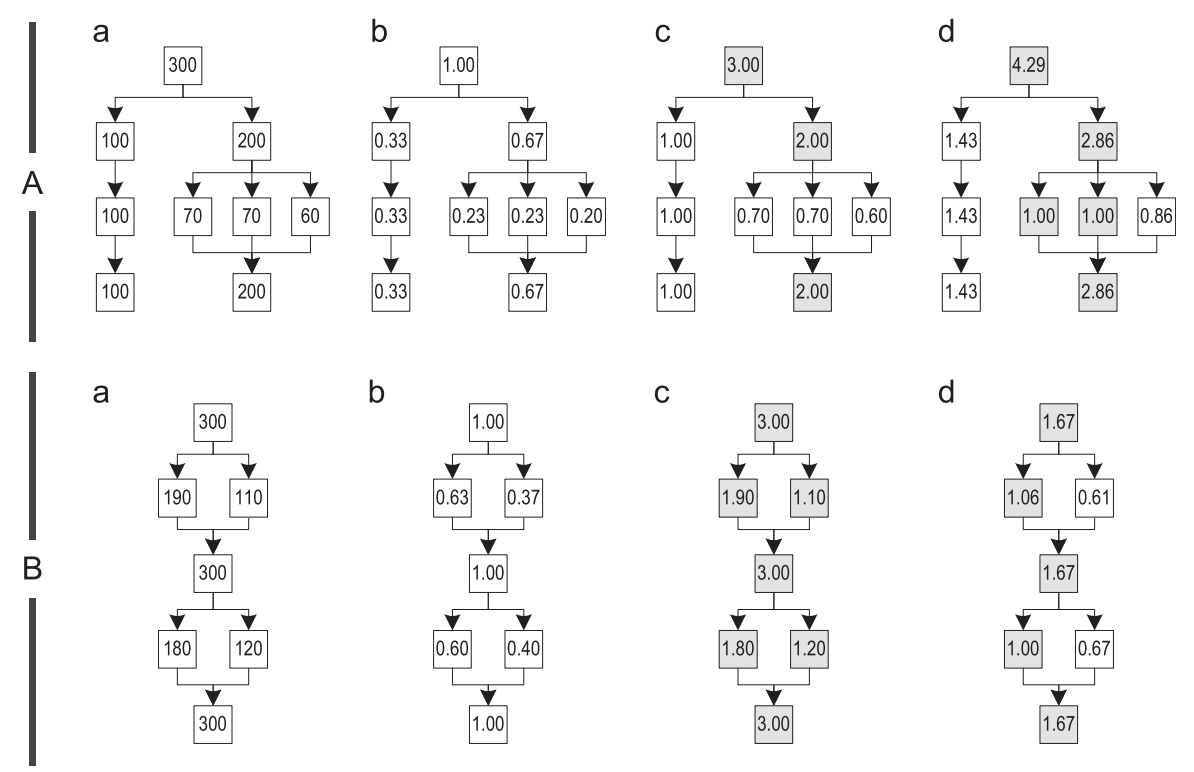

The relevance of the place of the call is determined by the relevance of the block in the trace graph in which this place of the call is located. We tested three different relevance calculation algorithms and illustrated their behavior using two examples of trace graphs, A and B, shown in Figure 11.

Figure 11. Different relevance calculation algorithms: (a) counting the number of executions (b) simple (c) most frequent trace (d) path-based

Example A is built from four different traces that are unlikely to have any identical blocks. Example B represents a graph built of four traces, but each block in them is common for at least one more trace.

To calculate the relevance of the blocks, we first determine how often the recorded traces each block performs. Figure 11 (a) shows the graphs in which each block is annotated with the corresponding execution frequency. Then, we calculate the relevance of each block by dividing its execution frequency by some initial value. Depending on this value, relevance scales differently. One of the following algorithms is used to select the initial value:

- Simple: Простейший способ вычисления релевантности блока – это поделить частоту выполнений на общую частоту выполнения всех трейсов, объединенных в один общий граф. Результирующее значение находится в диапазоне ]0,1], а высокая релевантность выставляется для тех блоков, в которых инлайнинг хорошо влияет на большинстве выполнений, как показано на рисунке 11(b).

- Most frequent trace: Другой способ заключается в том, чтобы поделить частоту выполнения блока на частоту наиболее часто исполняемого трейса, когда-либо вливавшегося в граф. Поскольку трейсы слиты, все блоки, которые принадлежат сразу нескольким трейсам, получают большее число исполнений, чем они имели до слияния. Таким образом, эта метрика устанавливает высокую релевантность для тех мест вызова, которые относятся к этим общим блокам, и ставит в соответствие значение в промежутке ]0,1] для мест вызова, которые содержатся только принадлежат лишь одному трейсу. На рисунке 11( c ), закрашенные блоки относятся сразу к нескольким графам, и иногда так получается, что каждый блок графе может иметь релевантность больше 1, как в примере B.

- Path-based: Наш третий подход вычисляет варианты наиболее часто выполняющихся путей по графу трейсов. Начинаем с корневого блока графа и определяем наиболее часто выполняемое продолжение. Затем мы отмечаем этот блок как «посещенный» и продолжаем работать с этим блоком рекурсивно, до тех пор по либо не найдем блок без продолжений, либо не вернемся к заголовку цикла. Все посещенные блоки согласно этому алгоритму раскрашиваются (см. рисунок 11(d)). Затем мы используем наименьшую частоту выполнений из всех посещенных блоков, чтобы вычислить релевантность всех остальных блоков в графе. Это дает нам некое преимущество в том, что важные места вызова, например, те, которые находятся на этом пути, и на часто выполняемых точках слияния и разделения, имеют значения в промежутке [1; ∞], в то время как менее важные вызовы имеют значение в промежутке ]0,1[.

5.2. Configurations

We started with 15 inlining heuristics, both static and dynamic. For each heuristic, we performed a systematic search for good parameters. During this evaluation, all dynamic heuristics outperformed all static heuristics, therefore, in this article we will not give detailed results for static heuristics. Moreover, we describe only those dynamic heuristic options that showed good peak performance or a small amount of generated machine code.

- Minimum code: Эта эвристика устанавливает размер инлайнинга в 35 байткодов, и этот размер изменяется в зависимости от релевантности месту вызова. Релевантность ниже 1 уменьшает размер инлайнинга, а релевантность больше 1 – увеличивает. Если использовать эту эвристику вместе с алгоритмом вычисления релевантности «path-based», получается довольно неплохая пиковая производительность одновременно с небольшим количеством машинного кода. Мы попробовали комбинировать её с алгоритмом релевантности «simple», однако, это оказало значительный негативный эффект на пиковую производительность, хотя и сгенерировало немного меньше машинного кода. Поэтому мы опускаем детализированные результаты по второму варианту.

- Balanced: Эта эвристика увеличивает размер инлайнинга до 40 байткодов, и этот размер изменяется, основываясь на релевантности месту вызова. Релевантность ниже 1 не изменяет размер инлайнинга, а релевантность больше 1 увеличивает размер. Так что, уменьшение предварительно заданного размера явным образом запрещено, что делает более вероятным инлайнинг важных мест вызова. Мы использовали эту эвристику с «path-based» алгоритмом релевантности, что привело к достижению баланса между пиковой производительностью и количеством сгенерированного машинного кода.

- Performance: Эта эвристика использует большой размер инлайнинга – 150 байткодов, и уменьшает его для тех мест вызова, релевантность которых меньше 1. Увеличение размера инлайнинга дальше предопределенного значения явным образом запрещено. Для вычисления релевантности этих мест вызова, мы снова используем алгоритм «path-based». Эта эвристика оптимизирована для получения максимальной пиковой производительности, тем не менее, удерживая количество сгенерированного машинного кода в приемлемых пределах.

- Greedy: Так же как в предыдущей конфигурации, эта эвристика использует очень большой размер инлайнинга – 250 байткодов, и уменьшает его только для мест вызова с релевантностью ниже 1. Чтобы гарантировать, что вызванные трейсы действительно инлайнятся жадным образом, мы скомбинировали эту эвристику с алгоритмом вычисления релевантности «most frequent trace». Вследствие наличия предопределенного максимального значения, даже эта эвристика избегает инлайнинга слишком больших трейсов. Эта эвристика хорошо показывает, какие из бенчмарков, описанных в части 6, могут использовать преимущества очень агрессивного инлайнинга трейсов. Мы экспериментировали и с более агрессивными эвристиками инлайнинга, но они не смогли еще сильнее улучшить пиковую производительность, при этом генерировуя существенно больше машинного кода.

As with block compilers, all of our heuristics verify that small methods, such as getter-setters, always inline in general. This makes sense, since calling small traces may require more machine code than inlining itself. Another strategy used by the HotSpot server compiler is to avoid inlining methods if the receiver is already separately compiled, or if compilation will result in a lot of generated machine code. This implies that a sufficiently wide compilation area already has enough information for good compiler optimizations, so increasing the compilation area beyond certain boundaries is not advisable. We use this technique for all our inlining heuristics,

To reduce the likelihood of inlining nested traces, we check that inline traces inherit relevance from the parent call location. To do this, we multiply the relevance of each called block by the relevance of the calling block. However, we limit the maximum inherited relevance to 1, because otherwise the relevance may rise with the level of inlining. Again, the inheritance of relevancy reduces the amount of generated machine code without a measurable negative impact on performance, which is why it is used by all our heuristics.

6. Testing and evaluation

We implemented our tracer JIT compiler for the IA-32 architecture for HotSpot VM, using an early access version of b12 under development by JDK8 [20] (it happens in 2013 - approx. Translator). For testing inlining heuristics, we chose SPECjbb2005 [23], SPECjvm2008 [24], and DaCapo 9.12 Bach [3], because they cover a large number of different checks. The benchmark on which the benchmarks were launched has the following configuration: Intel Core-i5 processor with 4 cores at a frequency of 2.66 GHz, 4n256 kb L2 cache, 8 MB shared L3 cache, 8 GB main memory, and Windows 7 Professional instead of the operating system.

The results are presented relative to the unmodified block client compiler HotSpot, which is taken as 100%. For a tracer JIT compiler, the amount of machine code generated also includes data specific to tracer compilation, such as additional information about the deoptimizations needed to fail in the interpreter. Each of the above benchmarks was performed 10 times - the results indicate the average of these results with a 95% confidence interval.

6.1. SPECjbb2005

This benchmark simulates a client-server business application in which all operations are performed on an in-memory database, which is divided into so-called warehouses. The benchmark is executed with a different number of warehouses, and each warehouse is processed in a separate thread. We used a stand with 4 cores, so the official throughput SPECjbb2005, measured in business operations per second (business operations per second, bops), and is defined as the geometric mean of warehouse performance 4-8. A hip of 1200MB size was used for all measurements.

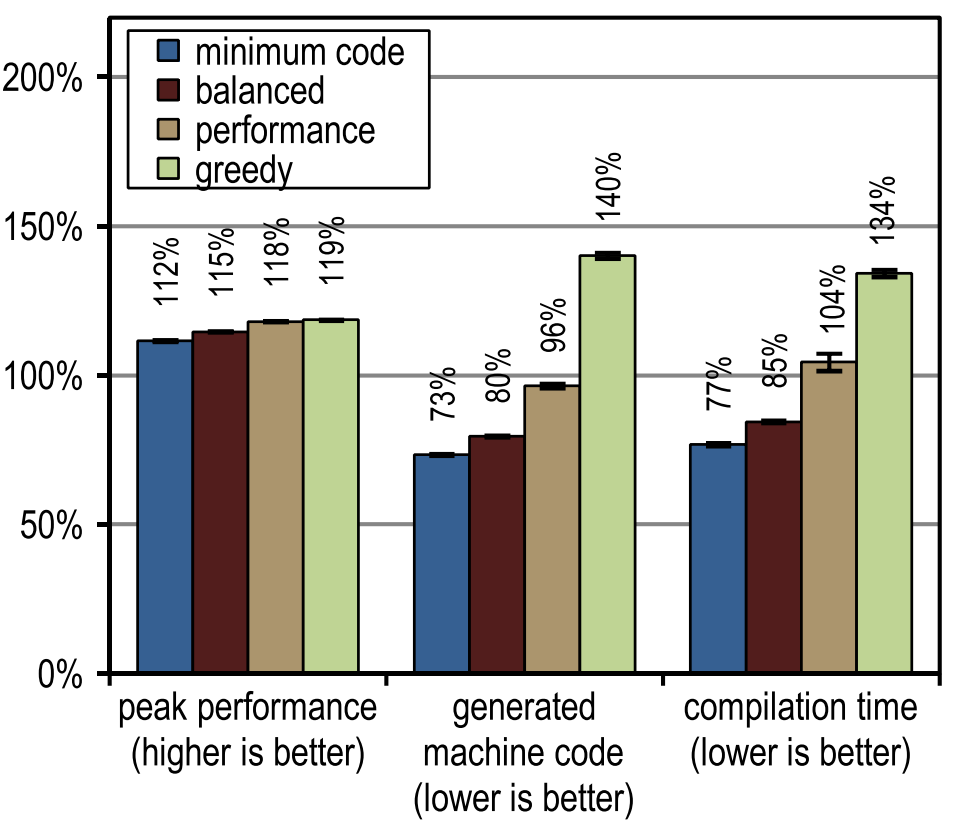

Figure 12 shows the peak performance, generated machine code, and compilation time for SPECjbb2005. All our tracing compiler options turned out to be significantly faster than the client compiler in terms of peak speed. More aggressive tracing inlining led to greater peak performance, but it also led to an increase in the amount of generated machine code and took longer to compile, as this resulted in very large compilation blocks. The peak performance of SPECjbb2005 is clearly improved from the “performance” configuration due to the increased aggressiveness of trace inlining. The greedy configuration raised peak performance, but only marginally, generating much more machine code. In terms of compilation time and the amount of machine code generated,

Figure 12. Results of SPECjbb2005

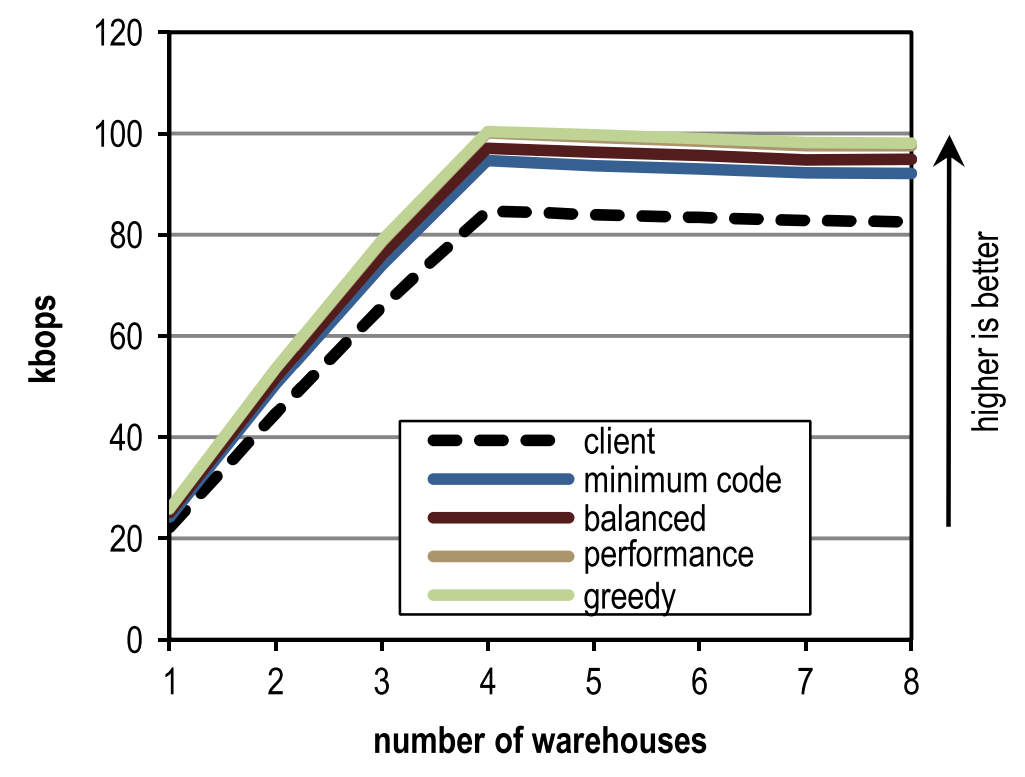

Figure 13 shows the peak performance of SPECjbb2005 for a different number of warehouses and different inlining heuristics. Maximum peak performance was achieved in 4 warehouses, since each warehouse is processed by a separate thread, and our stand has just 4 cores. The figure shows that our configuration overtakes the block client compiler regardless of the number of warehouses.

Figure 13. Peak performance for a different number of warehouses in SPECjbb2005

6.2. SPECjvm2008

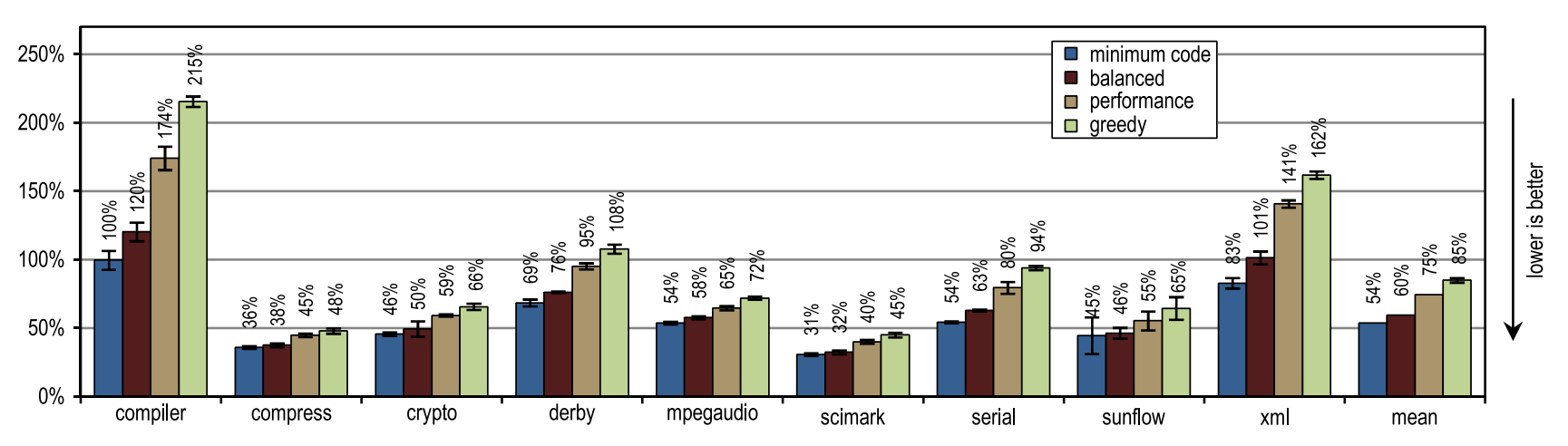

SPECjvm2008 consists of 9 categories of tests that measure peak performance. Immediately after the individual test results, the geometric mean of all the results is shown. A hip of 1024 MB was used for all tests.

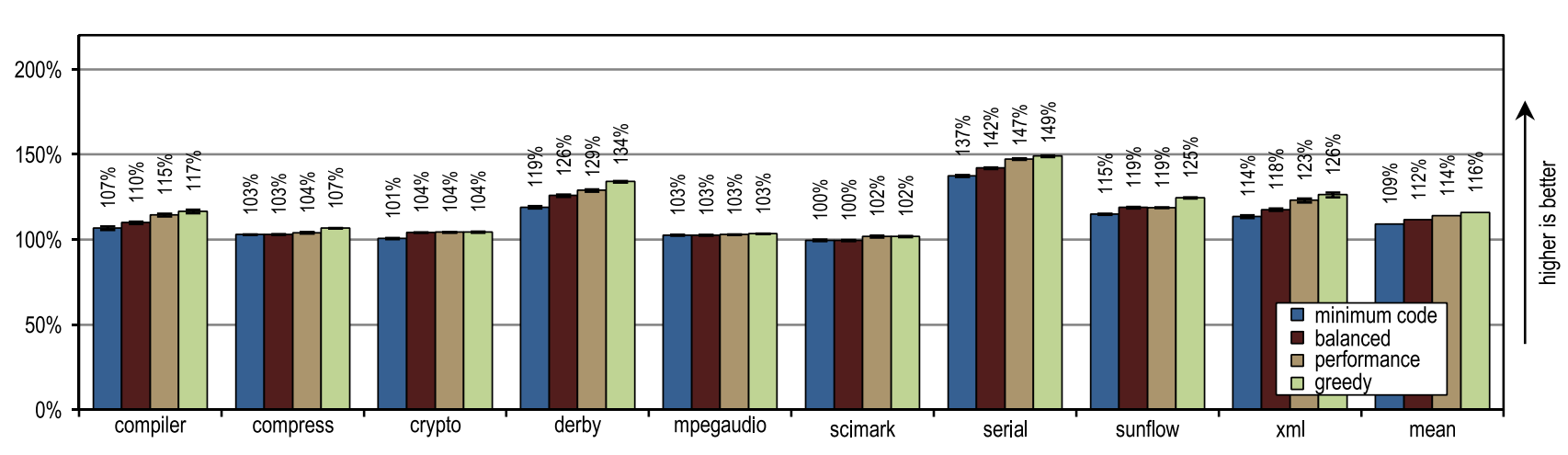

Figure 14. Peak performance of SPECjvm2008

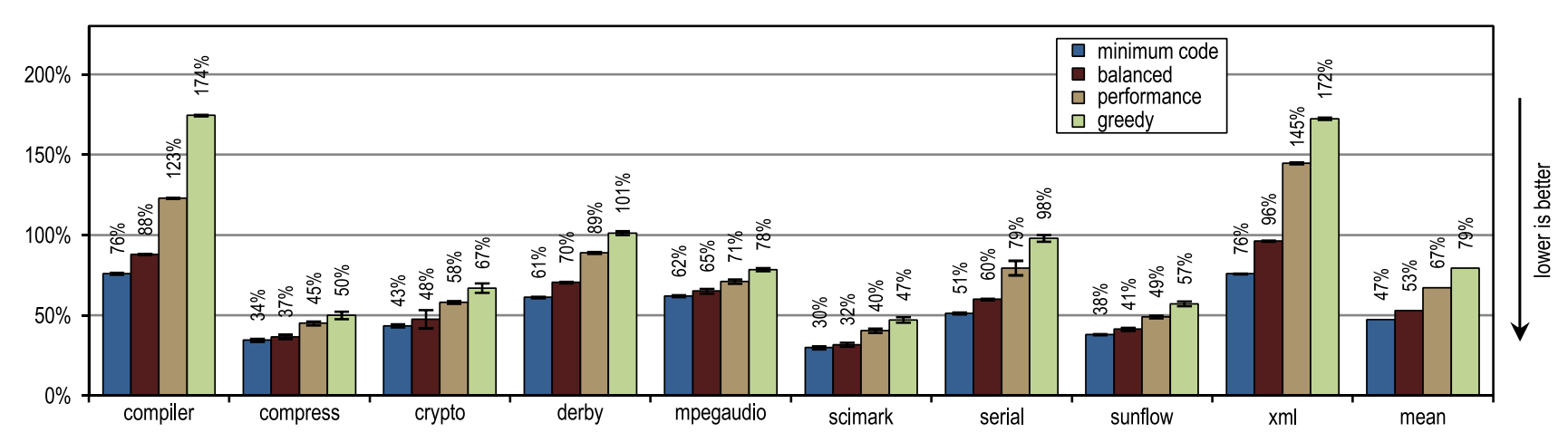

Figure 14 shows that all of our configurations overtook the HotSpot block client compiler. These configurations showed maximum acceleration on derby and serial tests. The inlining of traces on them allowed to reach a larger compilation area than the inlining of methods used in the HotSpot client compiler. A very aggressive trail inlining policy, such as in the greedy configuration, did not lead to an increase in peak performance, especially for the derby and sunflow benchmarks. Moreover, aggressive inlining of traces has increased both the amount of generated machine code and the time of JIT compilation (see figures 15 and 16).

Figure 15. The amount of machine code generated in SPECjvm2008

Figure 16. Compilation time in SPECjvm2008

Small tests with heavy use of crypto, mpegaudio and scimark loops showed almost no increased peak performance, since the HotSpot block client compiler was also able to inline all calls in performance-critical loops. Due to the small size of these tests, our tracer compiler was able to achieve only a comparable compilation area. However, our tracer compiler inline traces instead of the entire contents of the methods, which led to the creation of significantly less generated machine code and reduced compilation time (see Figures 15 and 16).

Figure 15 shows that the amount of machine code generated depends on the inlining size, so that too much code can be suddenly generated. Nevertheless, small and intensively using loop benchmarks do not show excessive code growth, even when our most aggressive inlining heuristics are used. On such benchmarks, inlining heuristics should be very conservative, and should never act too aggressively.

6.3. Dacapo

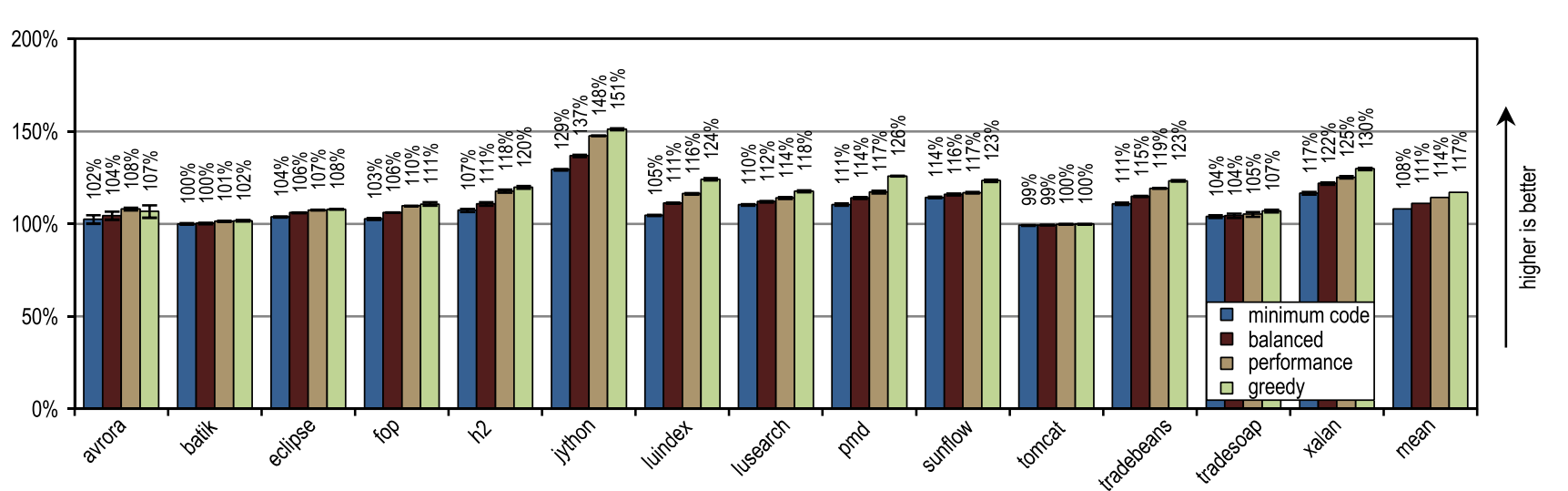

DaCapo 9.12 Bach consists of 14 applications written in Java. Using the default data size and heap size of 1024 MB, each test ran for 20 iterations, so the execution time converges. We show only the fastest start for each of the tests, and the geometric average of all results. A hip size of 1024 MB was used for all measurements.

Figure 17 illustrates the peak performance of DaCapo 9.12 Bach, and Figure 18 shows the amount of machine code generated. The greedy inlining heuristic showed the best peak performance, but generated more machine code than the unmodified HotSpot client compiler. In particular, luindex, pmd and sunflow benefited from using greedy because it provides the maximum inlining size. However, in some tests this heuristic is so aggressive that an excessive amount of machine code is generated without significant changes in peak performance.

Figure 17. Peak performance in DaCapo 9.12 Bach

Figure 18. Number of machine code generated in DaCapo 9.12 Bach

Our configurations got the most advantage on the jython test, which makes a huge number of virtual calls. Our tracer compiler uses recorded trace information for aggressive inlining of virtual methods. Here he got an increased compilation area, which led to high peak performance.

On average, all inlining heuristics except “greedy” generated less machine code than the HotSpot block client compiler, while showing higher average peak performance. One of the static heuristics, which compared the size of traces with a fixed maximum value, reached almost the same peak performance as the “minimum code” configuration, but generated more machine code. This result, very good for static heuristics, was achieved because compiled traces contained only frequently used paths, by definition. But for methods without a large flow, it is enough to simply compare the size of the trace with a fixed threshold value. As long as the maximum inlining size continues to be low,

In terms of the time required for the JIT compilation, Figure 19 shows that our tracer compiler is so highly efficient that even the most aggressive greedy configuration requires on average only the same compilation time that the HotSpot block client compiler requires.

Figure 19. Compilation time in DaCapo 9.12 Bach

6.4. High level optimizations

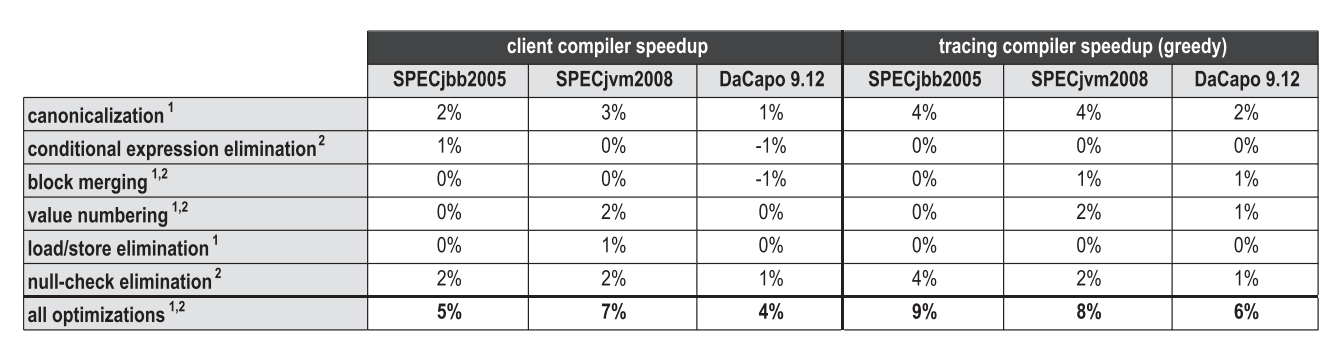

Just like inlining methods, inlining traces is an optimization that has a positive effect on other compiler optimizations, as it increases the compilation area. Figure 20 compares the contribution to peak performance of various high-level optimizations that are used both in the HotSpot block client compiler and in our tracer compiler. Optimizations marked as 1 are local optimizations that are applied directly during bytecode parsing, and optimizations marked as 2 are global optimizations that are performed in a separate phase after graph construction.

Figure 20. Impact of high-level optimizations on peak performance

In general, aggressive inlining of traces increases the compilation area, which positively affects many optimizations. However, depending on benchmarks and specific optimizations, completely different sides of the issue are revealed. For SPECjbb2005, our inlining of traces especially increased the efficiency of the canonicalization test, as well as when testing the performance of simple optimizations, such as folding constants or deleting dead code. But SPECjvm2008 already contained too many small and intensively using test loops in which our tracer compiler could not reach a much larger compilation area. Therefore, it is unlikely that high-level optimizations got at least some advantage there. On DaCapo 9.12 Bach, performance was smeared with a thin layer for all of these optimizations.

6.5. Server compiler

HotSpot contains two different JIT compilers that reuse most of the virtual machine infrastructure together. Our tracing compiler is built on the client compiler, which was created for the best start time of the application, and implements only the most basic optimizations, nevertheless yielding decent peak performance. Server compiler (server compiler) is designed for long-lived server applications, and performs much more optimizations, resulting in highly efficient code. The server compiler can apply some more additional optimizations, such as removing checks for array bounds, loop-invariant code motion (pulling out of a loop), loop unwrapping, and escape analysis. Therefore, the code generated by it has the best peak performance, but it compiles 6-31 times longer (on average 13) on the benchmarks SPECjbb2005, SPECjvm2008 and DaCapo 9.12 Bach. The server runs mostly long-lived applications, so a long compilation introduces only a small overhead compared to the total execution time.

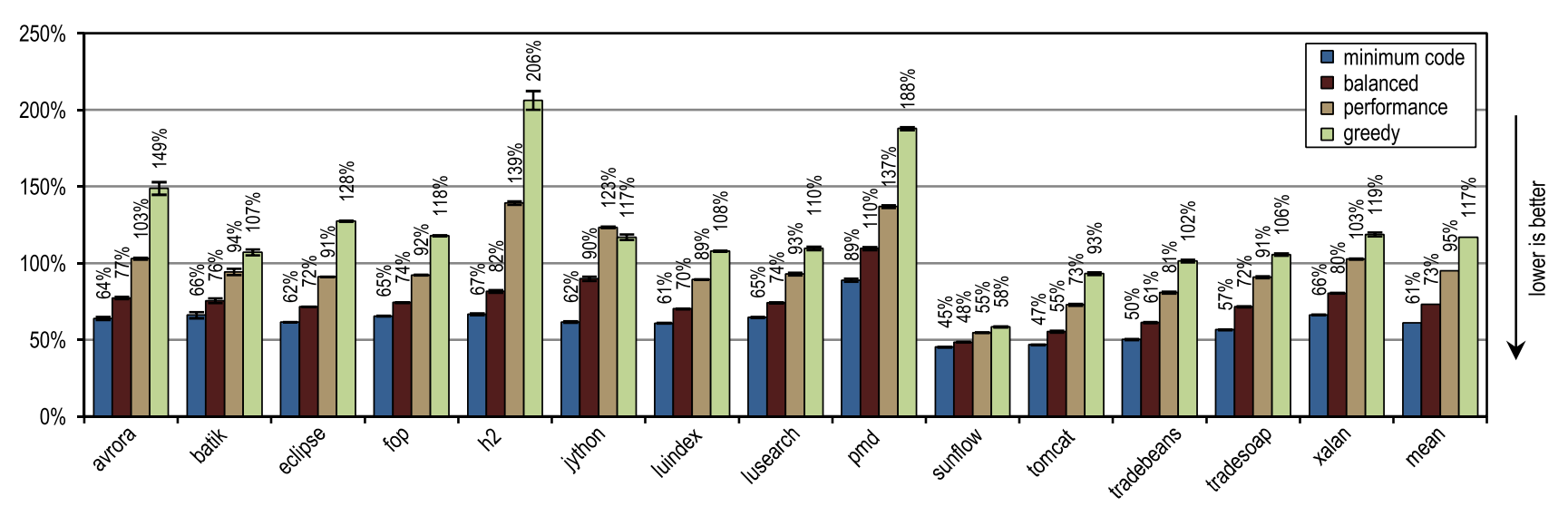

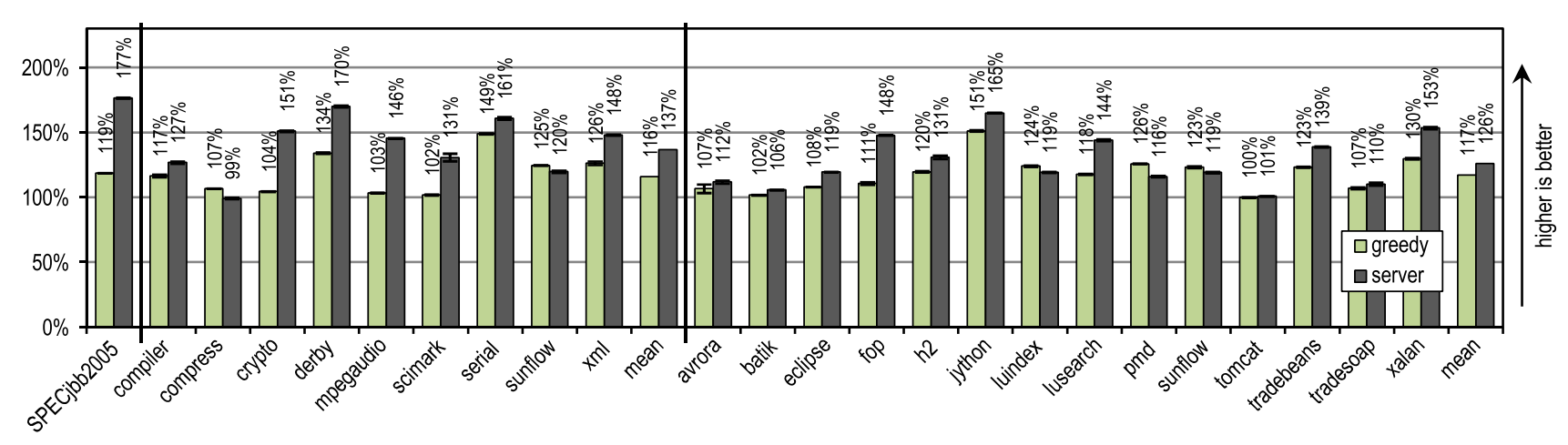

We compared the best peak performance of the greedy configuration with the HotSpot server compiler, and the results are shown in Figure 21.

Figure 21. Peak performance in SPECjbb2005, SPECjvm2008 and DaCapo 9.12 Bach

SPECjbb2005 gained a strong advantage from some optimizations that take a long time to complete and which knows how to produce a server compiler, so that our tracer compiler achieved only 67% of the performance with respect to it.

For SPECjvm2008, our greedy configuration achieved an average of 85% server compiler performance. This benchmark contains many texts that heavily use loops, such as crypto, mpegaudio and scimark, in which the server compiler shows significantly higher peak performance, because it uses the removal of checks on the boundaries of the array and uses smart loop optimizations. Nevertheless, as a result of aggressive inlining of traces, our tracer compiler overtook the server compiler on benchmarks compress and sunflow.

On DaCapo 9.12 Bach, which contains significantly more complex tests using much fewer loops [14], our tracer compiler achieved an average of 93% peak server compiler performance. Despite the fact that our tracer compiler performed only the most basic optimizations, it exceeded the peak performance of the server compiler on the luindex, pmd and sunflow tests. This is made possible by aggressive and context-sensitive inlining.

6.6. Launch speed

Both the HotSpot client compiler, and the server compiler, and our tracer JIT compiler - all of them were developed taking into account multi-threaded compilation in the background. We tested startup performance in the following scenarios:

- В первом сценарии выполнялся 1 поток приложения, в то время как VM использовала вплоть до 4 потоков JIT-компилятора. Поэтому на нашем 4-ядерном стенде, вплоть до 3 ядер были эксклюзивно заняты под JIT-компиляцию.

- Во втором сценарии, 4 потока приложения выполнялись одновременно с 4 потоками JIT-компилятора. На нашем 4-ядерном стенде, потоки JIT-компилятора начали конкурировать с потоками приложения. HotSpot VM назначает более высокий приоритет потокам с JIT-компилятором, потому что JIT-компиляция оказывает позитивное воздействие на скорость запуска.

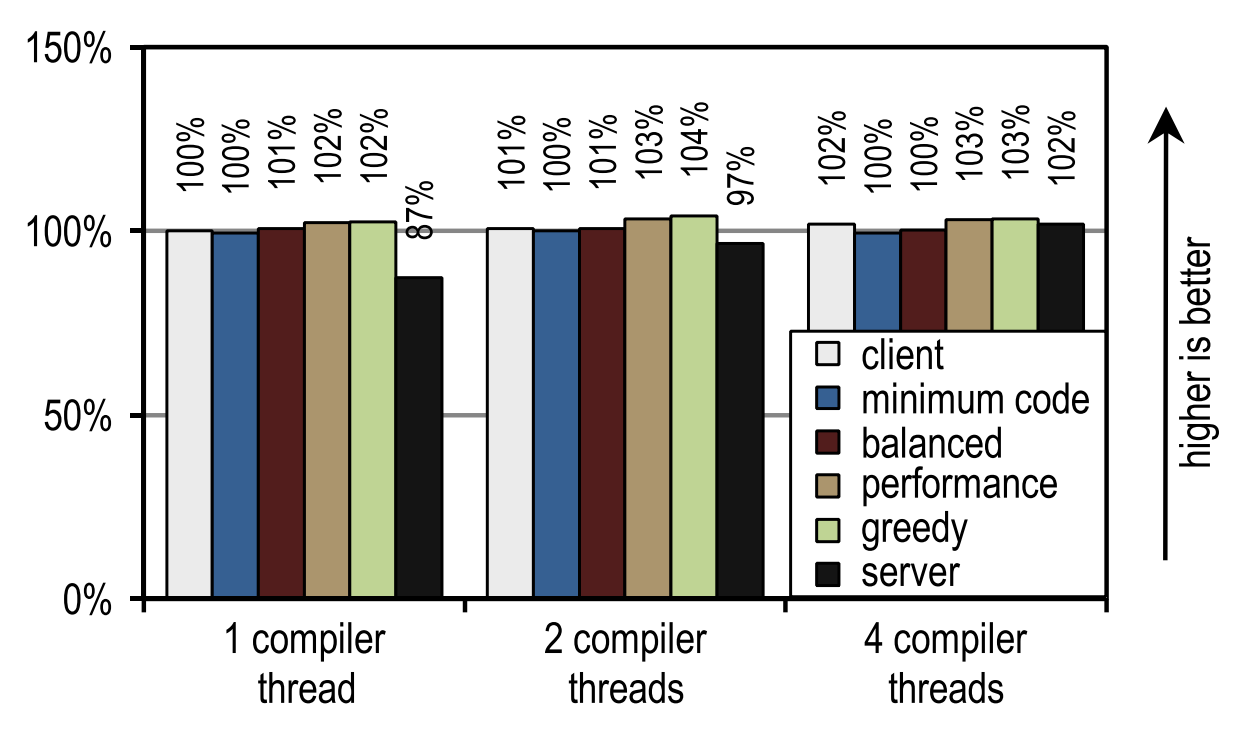

All the presented results are normalized by the performance of the “client” configuration with a single JIT compilation stream. We did not show any launch speed results for SPECjbb2005, as This benchmark is designed to measure peak performance, showing the first results after only 30 seconds, after which all configurations approach their peak performance.

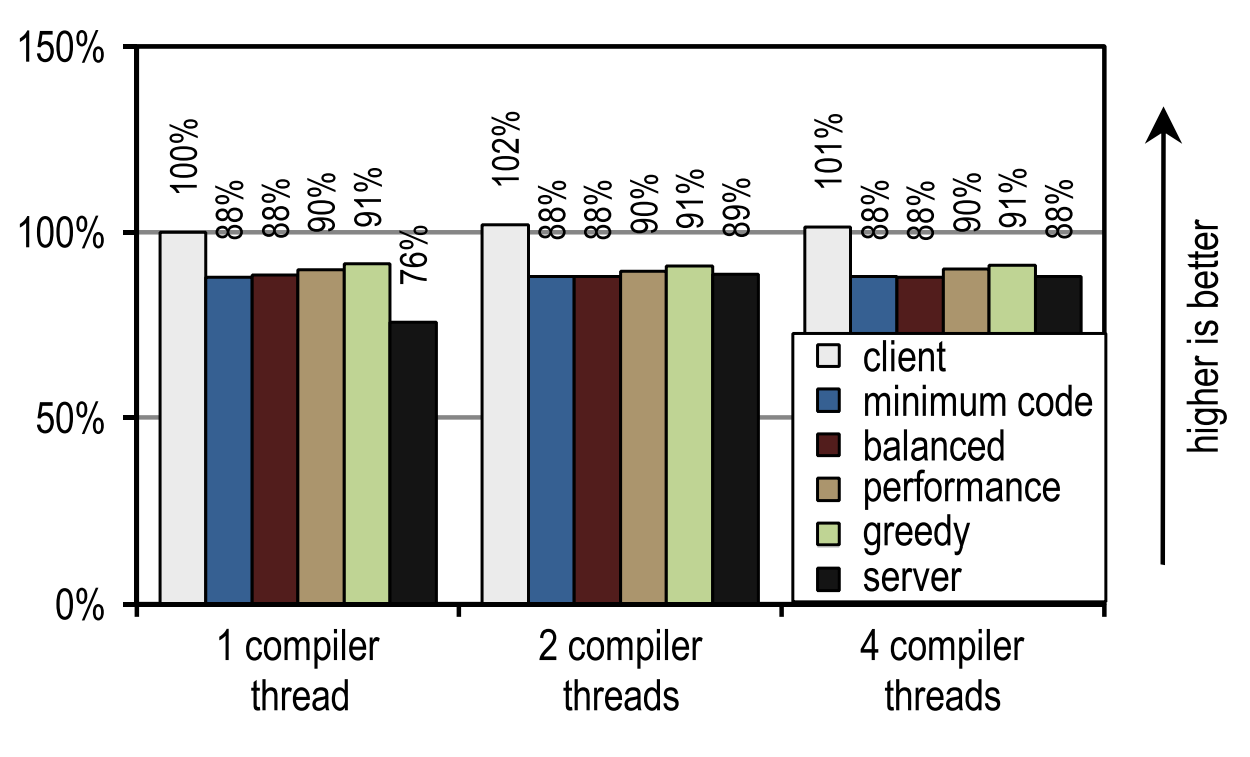

For SPECjvm2008, we measured the startup speed by performing one operation for each test. Figure 23 shows that both the HotSpot client compiler and our tracer compiler achieve good results in those scenarios where application threads compete with compiler threads, i.e. when compilation time is of the essence.

Figure 23. SPECjvm2008 startup time on 4 application threads

Figure 22 shows another scenario in which kernels that would otherwise be idle can be used for JIT compilation.

Figure 22. SPECjvm2008 startup time on 1 application thread

In this case, the HotSpot server compiler shows the best startup speed, since SPECjvm2008 contains several small tests in which you need to compile very little and which reach their peak performance immediately after compiling the deepest loop. This is an ideal case for a server compiler that optimizes loops especially well, and therefore has an advantage in peak performance, which affects startup speed if there are idle kernels to which you can shift the compilation.

This image also shows that the number of compiler threads does not affect either the client compiler or our tracer compiler, since both JIT compilers require a relatively short compilation time. The server compiler requires much more time, and therefore has a strong advantage when there is more than one compilation stream, especially if there are idle kernels that can be used for these purposes.

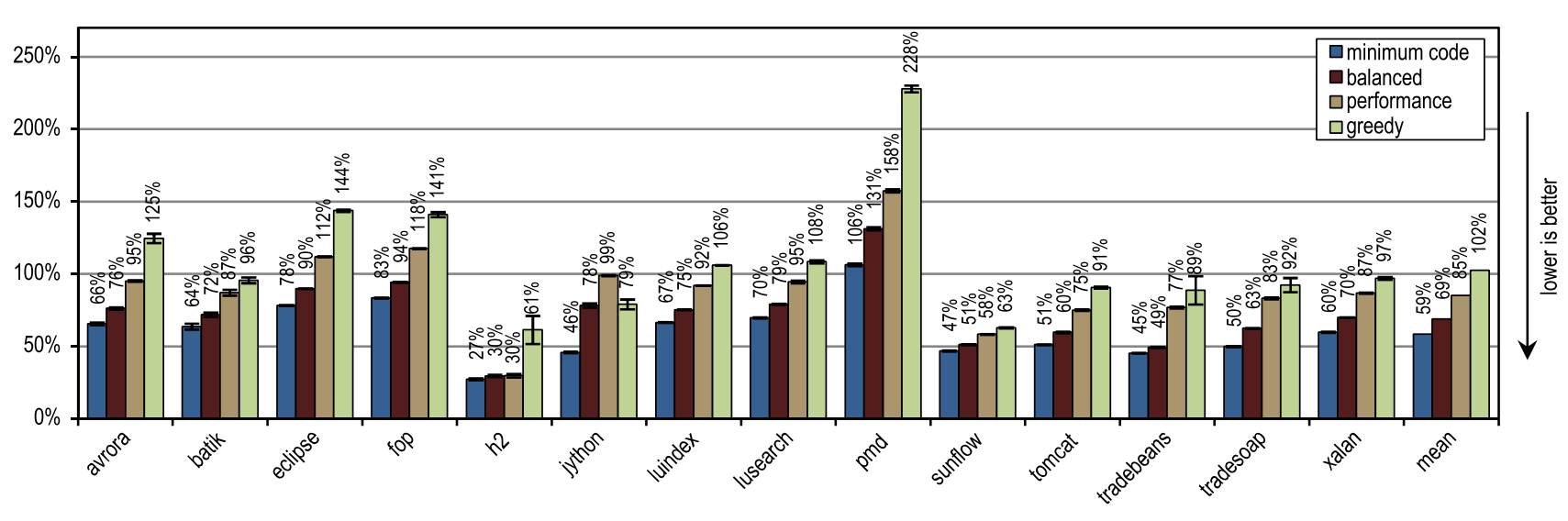

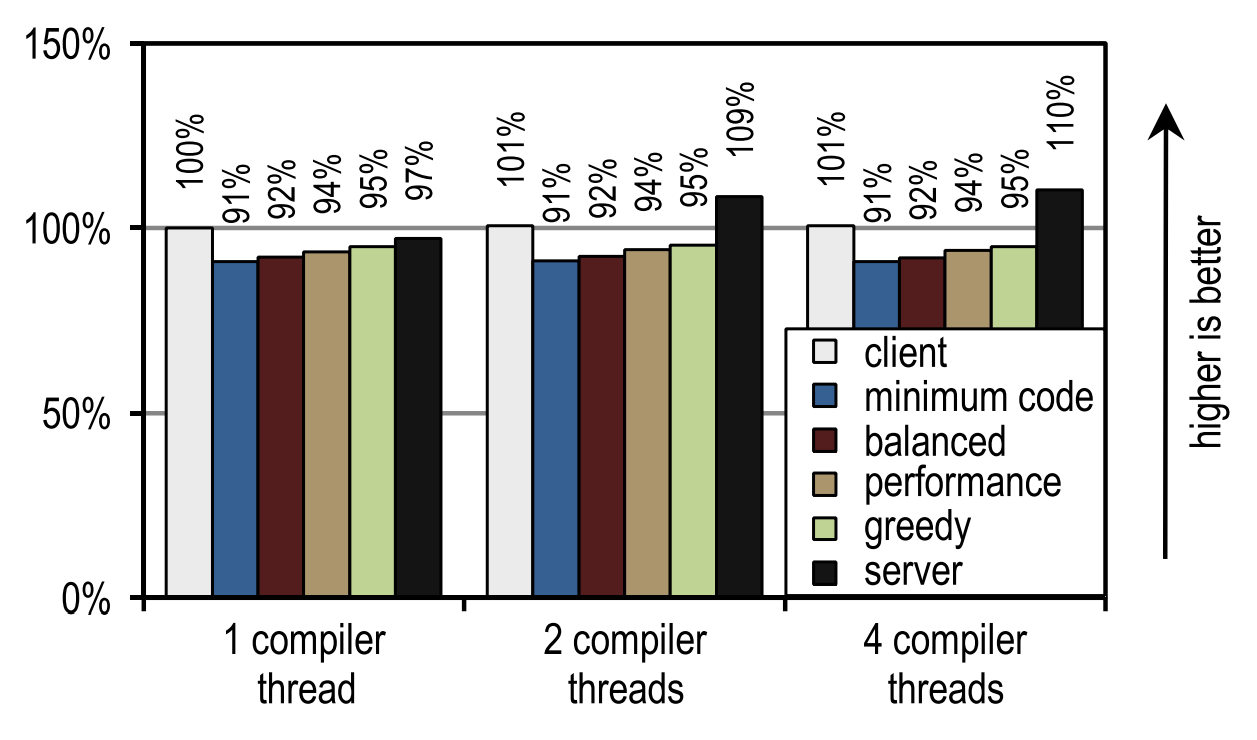

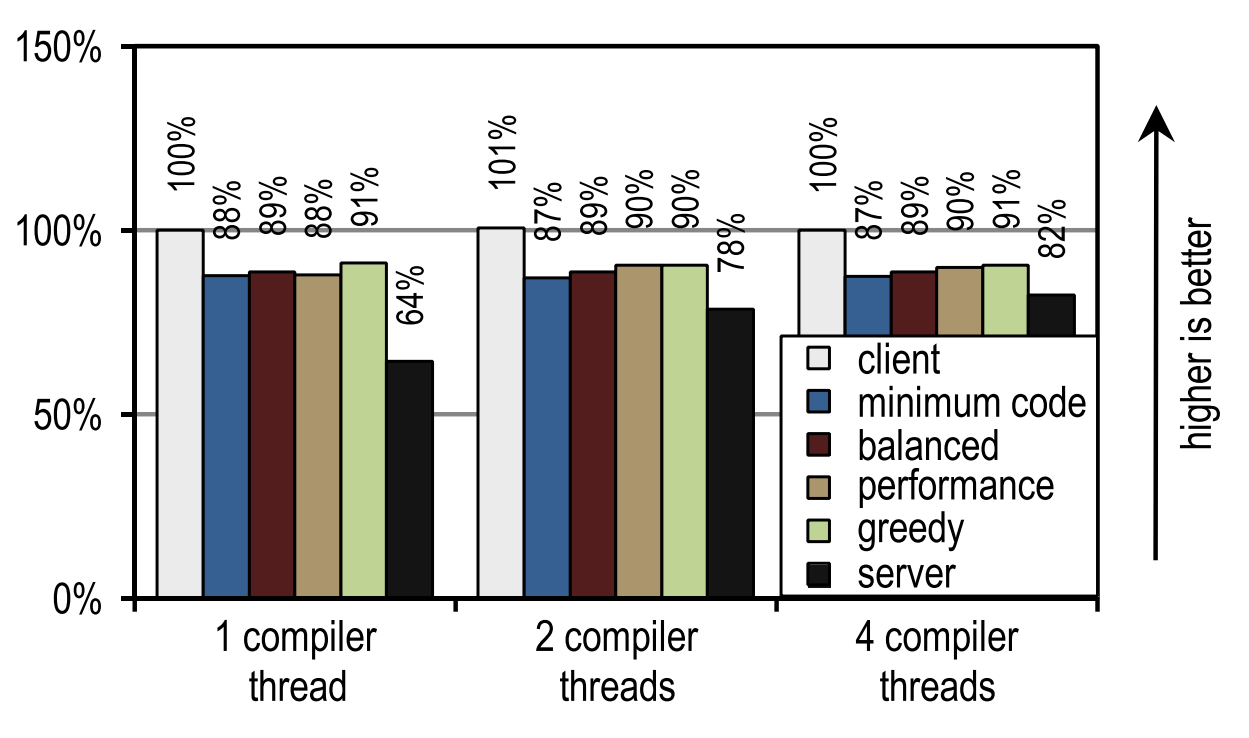

For DaCapo 9.12 Bach, we measured the launch speed by running one iteration for each test. The test results are shown in Figures 24 and 25.

Figure 24. DaCapo 9.12 Bach start time on 1 application thread

Figure 25. DaCapo 9.12 Bach start time on 4 application threads

And again, the number of compilation threads did not affect either the client compiler or our tracer compiler, since they compile quickly. In contrast, the server compiler takes advantage when more than one thread can be used for JIT compilation. However, several of our configurations are always faster than the server compiler, even if it uses all the idle kernels for JIT compilation. This is because DaCapo 9.12 Bach is significantly more complex than the tests in SPECjvm2008 [14], so JIT compilation speed is the dominant factor for application startup speed.

On tests from DaCapo 9.12 Bach, our tracer configurations show about 10% slower startup than on the HotSpot client compiler. Despite the fact that our tracing compiler usually requires even less time for JIT compilation than the HotSpot client compiler, in this case it produces a lower startup speed because it introduces an additional overhead to record traces and deoptimizations that usually occur at the moment of launch . However, this drawback is outweighed by significantly better peak performance. Unlike the HotSpot client compiler, our tracer compiler does not need idle kernels to achieve a good launch speed.

7. Related works

Bala et al. [1] implemented tracer compilation for their Dynamo system to optimize native instruction flows. Hot instruction sequences are identified by running a binary application under the interpreter. Then, these traces are compiled, and the machine code generated by them is executed directly. The overhead from the interpretation decreases with an increase in the number of compiled traces, which sooner or later leads to acceleration. In contrast to them, we identify suitable trace anchors in bytecode, and for them we use static analysis during class loading. We limit individual traces to a depth of one method, linking recorded traces to save context-sensitive information when crossing the method border. This allows us to defer the decision about the need for inlining until compilation time,

Rogers [22] implemented such a JIT compiler for Java in which frequently executed base blocks are marked and compiled. When related blocks that can be stretched to several methods are executed quite often, they are grouped and optimized as one entity. Compared to block compilation, less bytecode is compiled, up to 18%. Our system, however, records and compiles traces, and uses context-sensitive trace inlining to increase peak performance, while generating a very modest amount of machine code.

The following approach is implemented in many tracing compilation options for Java [2,11,12,18]. Nevertheless, all these approaches have in common - traces can be stretched to several methods, and therefore inlining must be done while recording traces. In contrast, we assume that one method is the maximum possible area for capturing a trace, and linking is used to save information between traces. This allows you to postpone the decision about the need for inlining until the compilation time, that is, until much more useful information is available. Therefore, our inlining is more legible, and uses simple heuristics that lead to increased peak performance and fewer generated machine code.

Gal et al. [11,12] implemented a tracer compiler on hardware limited in resources. Traces run on frequently executed targets for reverse brunches, and on the side exits of existing traces. Any trace can be stretched to several methods, so inlining occurs during recording. After recording the trace, it is compiled into machine code and linked to the other compiled traces, forming a tree-like structure. This simple structure of compiled traces simplifies many optimizations, but can lead to excessive tail duplication and bloat code. A similar approach is used in Dalvik VM [4.6] in Android. In contrast, we merge individual traces into the trace graph before compilation, and thus avoid unnecessary duplication.

Bebenita et al. [2] implemented tracer compilation for Maxine VM. Maxine uses a basic JIT compiler instead of an interpreter for initial execution. This basic JIT compiler is modified to generate instrumentation used to record traces. Even before the start of the JIT compilation, the recorded traces merge into “trace regions”, which have an explicit control flow at the merge points. This avoids excessive tail duplication. As a result of all kinds of loop optimizations, the JIT compiler achieves excellent acceleration on tests that use loops heavily. However, it works much worse on compiling methods in tests where loops are used less often. Our work is complementary to their approach, as it focuses on complex applications that rarely use loops, such as DaCapo 9. 12 Bach jython. In our tracer compiler, we achieved excellent acceleration for these applications, but at the same time, all tests with loops showed very little acceleration, because our compiler does not yet perform any smart loop optimizations.

Inoue et al. [18] added a tracer JIT compiler to the IBM J9 / TR JVM, modifying the block JIT compiler. They record linear and cyclic traces without any external merging points, and compile them into optimized machine code. In terms of peak performance, their tracer compiler achieves the performance of a similarly optimized block JIT compiler. Wu et al. [28] expanded this work, avoiding short-lived traces and their unnecessary duplication. Although this does not affect peak performance, it reduces both the amount of machine code generated and compilation time. We also base our work on the existing JVM of industrial quality, but we restrict individual traces to a depth of one method. Therefore, we can defer the decision on inlining until compilation time,

Bradel and Abdelrahman [5] used traces to inline methods in Jikes RVM. Tracks are recorded using an offline system taking into account feedback. Then, frequently executed call points are identified in already recorded traces, and this information is used for inlining methods. They tested on the SPECjvm98 and Java Grande benchmarks, which showed not only a 10% increase in productivity, but also an increase in the amount of generated machine code by 47%. Our system records the traces during execution on the interpreter, after which it compiles and inlines only those parts of the methods through which the traces passed. This increases peak performance and can reduce the amount of machine code generated.

Hazelwood and Grove [16] implemented context-sensitive inlining in a block compiler. Timer sampling and recording of call information is used to decide on inlining at compile time. The amount of generated code and compilation time fell by 10% without changes in performance. In our system, recorded traces contain even more detailed context-sensitive information. Depending on the inlining heuristic, this increases either peak performance or reduces the amount of machine code generated.

Inlining methods is a well-researched issue that is often explored in the relevant literature. Accordingly, the rest of the work concentrates on inlining techniques for individual sections of methods instead of inlining them entirely, and this is close to our work. However, these methods are complementary to compiling traces, as parts of the methods are explicitly excluded from them, and in our country the amount of generated machine code is achieved using the trace record.

Partial compilation of methods [9, 26] uses information from the profile to detect rarely executed parts of methods in order to discard them from compilation. This reduces compilation time, increases startup speed, and can also have a positive effect on peak performance. Our approach is even more selective, as we record and compile only frequently executed traces. Moreover, we use resources saved on compilation to provide more aggressive and context-sensitive inlining, which increases peak performance.

Suganuma et al. [25] implemented compilation with regions, in which rarely executed parts of the method are thrown out of compilation using heuristics and profile information. Then, using heavy inlining methods, often executed code is grouped into one compilation unit. This reduces compilation time by more than 20% and increases peak performance by 5% on average in the benchmarks SPECjvm98 and SPECjbb2000. We only compile frequently executed parts of the methods through which traces pass, and to increase productivity we use inlining of these traces.

8. Conclusion

In this article, we introduced a Java tracer compiler that inlays during a JIT compilation, instead of doing it as usual when recording a trace. Traces are better suited here because they capture only the really executable parts of the methods. Postponing the decision on inlining until the moment of JIT compilation allows for more selective inlining of traces, since at that moment much more information is available. Moreover, all of our recorded traces are context-sensitive, so we inline the various parts of the methods based on the specific places of the call. This allows you to do more aggressive inlining of traces, while generating a reasonable amount of machine code. Additionally, we suggest deleting rarely executed traces before compilation, to ensure

Testing on the benchmarks SPECjbb2005, SPECjvm2008 and DaCapo 9.12 Bach showed that proper inlining of traces can increase peak performance, while generating less machine code than inlining methods. In addition, we also showed that inlining traces expands the compilation area, increasing the efficiency of the main compiler optimizations, and eventually leading to increased peak performance.

Acknowledgments

In creating this work, the authors supported the Austrian Science Fund (FWF): project number P 22493-N18. Oracle and Java are registered trademarks of Oracle and / or its affiliates. All other names may also be registered trademarks of their respective owners.

This translation would never have been done without the spiritual uplift that a person has from attending conferences such as Joker and JPoint , which are organized by the JUG.RU team . One day you return home from a conference, and you realize that you also have to write something.

References

- Bala V, Duesterwald E, Banerjia S. Dynamo: a transparent dynamic optimization system. In: Proceedings of the ACM SIGPLAN conference on programming language design and implementation. ACM Press; 2000. p. 1–12.

- Bebenita M, Chang M, Wagner G, Gal A, Wimmer C, Franz M. Trace-based compilation in execution environments without interpreters. In: Proceedings of the international conference on the principles and practice of programming in Java. ACM Press; 2010. p. 59–68.

- Blackburn SM, Garner R, Hoffman C, Khan AM, McKinley KS, Bentzur R, et al. The DaCapo benchmarks: Java benchmarking development and analysis. In: Proceedings of the ACM SIGPLAN conference on object-oriented programming systems, languages, and applications. ACM Press; 2006. p. 169–90.

- Bornstein D. Dalvik VM Internals. Presented at the Google I/O developer conference, 2008. 〈http://sites.google.com/site/io/dalvik-vm-internals〉.

- Bradel BJ, Abdelrahman TS. The use of traces for inlining in Java programs. In: Proceedings of the international workshop on languages and compilers for parallel computing, 2004. p. 179–93.

- Cheng B, Buzbee B. A JIT compiler for Android's Dalvik VM. Presented at the Google I/O developer conference, 2010. 〈http://www.google.com/events/ io/2010/sessions/jit-compiler-androids-dalvik-vm.html〉.

- Cytron R, Ferrante J, Rosen BK, Wegman MN, Zadeck FK. Efficiently computing static single assignment form and the control dependence graph. ACM Transactions on Programming Languages and Systems 1991;13(4):451–90.

- Dean J, Grove D, Chambers C. Optimization of object-oriented programs using static class hierarchy analysis. In: Proceedings of the European conference on object-oriented programming. Springer-Verlag; 1995. p. 77–101.

- Fink S, Qian F. Design, implementation and evaluation of adaptive recompilation with on-stack replacement. In: Proceedings of the international symposium on code generation and optimization. IEEE Computer Society; 2003. p. 241–52.

- Gal A, Eich B, Shaver M, Anderson D, Mandelin D, Haghighat MR, et al. Trace-based just-in-time type specialization for dynamic languages. In: Proceedings of the ACM SIGPLAN conference on programming language design and implementation. ACM Press; 2009. p. 465–78

- Gal A, Franz M. Incremental dynamic code generation with trace trees. Technical Report. Irvine, USA: Donald Bren School of Information and Computer Science, University of California; 2006

- Gal A, Probst CW, Franz M. HotpathVM: an effective JIT compiler for resource-constrained devices. In: Proceedings of the international conference on virtual execution environments. ACM Press; 2006. p. 144–53.

- R. Griesemer, Generation of virtual machine code at startup. In: OOPSLA workshop on simplicity, performance, and portability in virtual machine design. Sun Microsystems, Inc.; 1999

- Häubl C, Mössenböck H. Trace-based compilation for the Java HotSpot virtual machine. In: Proceedings of the international conference on the principles and practice of programming in Java. ACM Press; 2011. p. 129–38.

- Häubl C, Wimmer C, Mössenböck H. Evaluation of trace inlining heuristics for Java. In: Proceedings of the ACM symposium on applied computing. ACM Press; 2012. p. 1871–6.

- Hazelwood K, Grove D. Adaptive online context-sensitive inlining. In: Proceedings of the international symposium on code generation and optimization. IEEE Computer Society; 2003. p. 253–64.

- Hölzle U, Chambers C, Ungar D. Debugging optimized code with dynamic deoptimization. In: Proceedings of the ACM SIGPLAN conference on programming language design and implementation. ACM Press; 1992. p. 32–43

- Inoue H, Hayashizaki H, Wu P, Nakatani T. A trace-based Java JIT compiler retrofitted from a method-based compiler. In: Proceedings of the international symposium on code generation and optimization. IEEE Computer Society; 2011. p. 246–56

- Kotzmann T, Wimmer C, Mössenböck H, Rodriguez T, Russell K, Cox D. Design of the Java HotSpot client compiler for Java 6. ACM Transactions on Architecture and Code Optimization 2008;5(1):7.

- Oracle corporation. Java platform, standard edition 8 developer preview releases; 2011 〈http://jdk8.java.net/download.html〉

- Paleczny M, Vick C, Click C. The Java HotSpot server compiler. In: Proceedings of the Java virtual machine research and technology symposium. USENIX, 2001. p. 1–12.

- Rogers I. Optimising Java programs through basic block dynamic compilation. PhD thesis. Department of Computer Science, University of Manchester; 2002.

- Standard Performance Evaluation Corporation. The SPECjbb2005 Benchmark; 2005 〈http://www.spec.org/jbb2005/〉.

- Standard Performance Evaluation Corporation. The SPECjvm2008 benchmarks; 2008 〈http://www.spec.org/jvm2008/〉.

- Suganuma T, Yasue T, Nakatani T. A region-based compilation technique for dynamic compilers. ACM Transactions on Programming Languages and Systems 2006;28:134–74.

- Whaley J. Partial method compilation using dynamic profile information. SIGPLAN Notices 2001;36:166–79

- Wimmer C, Mössenböck H. Optimized interval splitting in a linear scan register allocator. In: Proceedings of the ACM/USENIX international conference on virtual execution environments. ACM Press; 2005. p. 132–41.

- Wu P, Hayashizaki H, Inoue H, Nakatani T. Reducing trace selection footprint for large-scale java applications without performance loss. In: Proceedings of the ACM international conference on object oriented programming systems languages and applications. ACM Press; 2011. p. 789–804.

Авторы

Christian Häubl is a PhD student at Johannes Kepler University Linz in Austria, working on tracer Java compilers. He has a Computer Science and Networks and Security degree, both from Johannes Kepler University Linz. He is interested in areas related to compilation and virtual machines, but sometimes switches to security and web applications.

Christian wimmer- Researcher at Oracle Labs, worked on MaxineVM, on the Graal compiler, and on other projects related to dynamic compilation and optimizations. His research interests are related to compilers, virtual machines, and secure systems for component software architectures. He received his doctorate from Computer Science (supervisor: Professor Hanspeter Mössenböck) at Johannes Kepler University Linz. Prior to joining Oracle, he was a doctoral student at the Department of Computer Science at the University of California. He worked with Professor Michael Franz at Secure Systems and Software Laboratory on compiler optimizations, dynamic programming languages, and language security.

Hanspeter mössenböck- Professor of Computer Science at Johannes Kepler University Linz and Head of Christian Doppler Laboratory for Automated Software Engineering. He received PhD CS from the University of Linz in 1987, and was an assistant professor at ETH Zurich from 1988 to 1994, working with Professor Nicklaus Wirth on the Oberon system. Current research interests include programming languages and compilers, as well as object-oriented and component programming. He is the author of several books on teaching programming.

Translators

Oleg Chirukhin - at the time of writing this text, works as an architect at Sberbank-Technology, is engaged in the development of an architecture for automated business process management systems. Prior to joining Sberbank Technologies, he took part in the development of several state information systems, including State services and Electronic Medical Records, as well as in the development of online games. Current research interests include virtual machines, compilers, and programming languages.