What to do if your site is hit by Google sanctions

Filters are an evergreen and often painful topic for SEO specialists. The lion's share of articles, recommendations and other SEO primers on the Web is devoted to the art of building relationships with search engines. The more fortunate write and read about how to avoid sanctions; the less fortunate, about how to get out from under them with minimal losses. This article, as you have already guessed, is not one of those that tell success stories or issue warnings. I would like to share my own unsuccessful experience with search engines: to describe how the situation looks from the inside, what questions and doubts arise and, of course, what steps it makes sense to take. In short, I invite everyone to learn from our mistakes!

I see no need to describe Google's filters and what they want from us in detail: that information is well known and publicly available. For the sake of order, though, I will briefly characterize the ones that come up repeatedly in this story:

- Panda - evaluates the site's content for quality (depth of text, uniqueness) and usefulness to the user;

- Penguin - monitors external links and weeds out sites involved in all kinds of commercial and non-commercial link schemes;

- Hummingbird - checks the site's semantic core against its actual content and identifies inconsistencies.

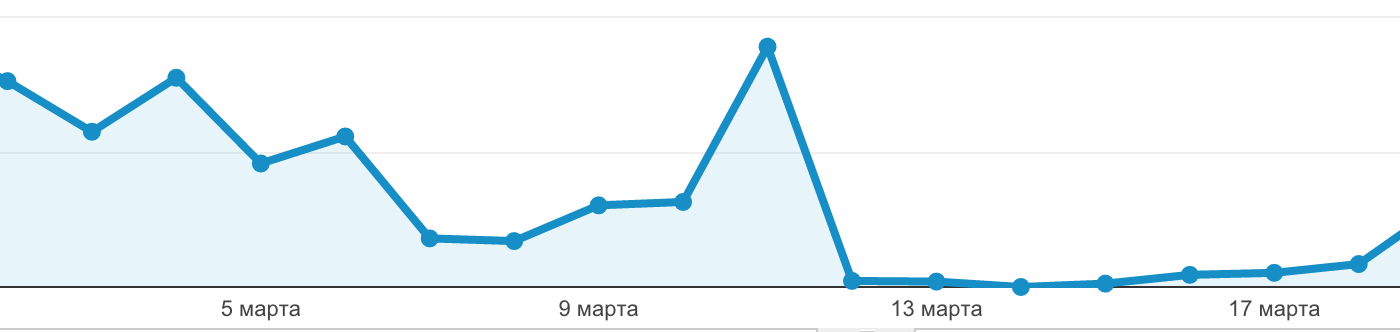

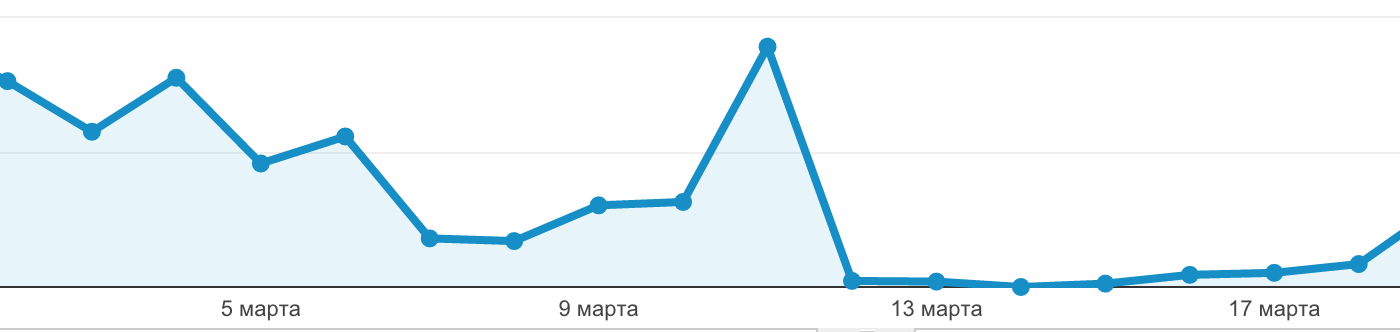

Now that we have refreshed our memory, on to the story. It all started with a sharp, inexplicable drop in our metrics in mid-March. As luck would have it, we had reached peak traffic right before the sanctions hit, so the graph turned out especially dramatic.

The site we were running was very young at the time and its pages had only recently started to be indexed, so we had plenty of theories, each more pessimistic than the last. First, of course, we scrutinized every technical aspect: we checked the analytics tracking codes and tested every page of the site. Once we were sure that everything worked as it should, our thoughts inevitably turned to sanctions. One point confused me, and it is rarely mentioned when people talk about filters: we had not received any notification by email, and we were not sure what conclusion to draw from that. The obvious one was that the site had not fallen into the paws of Penguin, whose "chain letters" are widely known. About the rest of Google's fauna, though, it was hard to say anything definite.

To clarify the situation, we then dug into our traffic sources.

The clinical picture was straight out of a textbook: visits from search queries fell to almost zero, while referral ("external") traffic was practically unaffected. What did surprise us was the simultaneous drop in direct visits. It seems obvious that traffic sources are interconnected, but we did not expect to see the effect so quickly. One way or another, the conclusion was clear: we really had been "sunk" for violations.
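The comparison described above can be sketched in a few lines of Python. This is only an illustration, not the tooling we actually used: the function name `channel_change`, the channel names and all the visit counts are made up for the example, and in practice the numbers would come from an export of your analytics system.

```python
from typing import Dict


def channel_change(before: Dict[str, int], after: Dict[str, int]) -> Dict[str, float]:
    """Return the percentage change in visits per traffic channel."""
    changes = {}
    for channel, old in before.items():
        if old == 0:
            continue  # no baseline, so a percentage change is undefined
        new = after.get(channel, 0)
        changes[channel] = round((new - old) / old * 100, 1)
    return changes


# Hypothetical visit counts for the week before and the week after the drop.
before = {"search": 1000, "referral": 200, "direct": 300}
after = {"search": 50, "referral": 190, "direct": 150}

# Print the channels from the hardest hit to the least affected.
for channel, pct in sorted(channel_change(before, after).items(), key=lambda kv: kv[1]):
    print(f"{channel:10s} {pct:+.1f}%")
```

A collapse concentrated in the search channel, with referral traffic roughly flat, is the pattern that pointed us toward sanctions rather than a technical fault.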

There is little joy in this, but the situation has its bright sides too: for example, an incentive to analyze your own site critically and in detail for compliance with generally accepted criteria, and to identify risk areas. To find out which of the silent filters had dealt with us, and on what grounds, we had a whole investigation ahead of us. On reflection, we ruled out Hummingbird: even to the pickiest eye, our keywords matched the content of the site. Panda seemed the most likely candidate, but we could vouch for the uniqueness of our texts, and updates that were perhaps not regular enough still did not seem like sufficient grounds for such punitive measures.

The reason, as always, was in the details. A few days earlier, the design of the site's main page had been changed; the existing text fit poorly into the new layout, and we decided to remove it temporarily. Through an oversight, however, the text was at first hidden rather than deleted. We discovered and fixed the error within literally a quarter of an hour, but that was enough for a vigilant robot. Noting the discrepancy between the content "for the user" and the content "for the search engine", Panda decided that it had caught us in deceit and duplicity, and the rest is clear.
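A mistake like this can be caught before release with a simple check. The sketch below is an illustration of the idea, not a description of how Google detects hidden text. It uses Python's standard `html.parser` and assumes reasonably well-formed markup. It only notices text hidden through an inline `style` attribute or the `hidden` attribute, so it will miss anything hidden through CSS classes, external stylesheets or JavaScript. The names `HiddenTextFinder` and `find_hidden_text` and the sample HTML are invented for the example.

```python
import re
from html.parser import HTMLParser

# Inline styles that hide an element from the visitor.
HIDDEN_STYLE = re.compile(r"display\s*:\s*none|visibility\s*:\s*hidden", re.I)
# Elements that never have a closing tag, so they must not go on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class HiddenTextFinder(HTMLParser):
    """Collects text found inside elements hidden via inline attributes."""

    def __init__(self):
        super().__init__()
        self._stack = []        # one flag per open element: is it hidden itself?
        self.hidden_text = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        attr_map = dict(attrs)
        style = attr_map.get("style") or ""
        self._stack.append("hidden" in attr_map or bool(HIDDEN_STYLE.search(style)))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._stack:
            self._stack.pop()

    def handle_data(self, data):
        text = data.strip()
        # Text is hidden if any enclosing element is hidden.
        if text and any(self._stack):
            self.hidden_text.append(text)


def find_hidden_text(html):
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text


# Hypothetical main-page fragment with the old text hidden instead of deleted.
sample = ('<div><h1>Welcome</h1>'
          '<div style="display: none"><p>Old home page text</p></div></div>')
print(find_hidden_text(sample))
```

Any non-empty result is worth a manual look before the page goes live.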

This was the second important lesson we learned from this saga: an SEO specialist is a bit like a sapper, and even the smallest and silliest mistakes in our profession can have devastating consequences.

Be that as it may, the life of the site went on, and we had to get it back to its former position somehow. To begin with, we focused on what had let us down: the long-suffering text on the main page. We worked out a length that would satisfy Google without hurting the site's usability (remember the conflict with the design?), selected entirely new keywords and, finally, rewrote everything from scratch on the basis of a new semantic core. With that, the work on our mistakes was formally complete, but apart from a clear conscience it did little for us.

Earlier, I said that falling under a filter stimulates reflection, but in fact it goes much further: the results of that reflection force you into a global overhaul. Fixing a single violation in isolation achieves nothing, even if, as in our case, you are confident that it was the root of the problem. Sanctions drop the site back to its starting position and make it an object of particularly close attention from the search filters. So, to show that you really are making the most of your second chance, you have to show your best side. That means eliminating even the slightest pretext for nit-picking, at least at first.

Our site was, on the whole, clean: from the very beginning, content had been created with Google's rules in mind, and we had no interest in buying links or other risky undertakings. At first glance, it was not clear what there was to fix. But over time (and time was one thing Google gave us plenty of) it turned out that the longer you look, the more shortcomings you find. Besides reworking the problematic text, over the next couple of months we also:

- deleted all blank pages;

- reworked the internal linking;

- checked all the texts for uniqueness and found out that some of them had been copied by other sites;

- finalized the design;

- replaced some of the old keywords that had lost their positions.
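The uniqueness check from the list above can be approximated with word shingles and Jaccard similarity. This is a minimal sketch of one common technique, not the service we actually used. The function names, the shingle size of three words and the sample sentences are all assumptions made for the example.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in a text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of two texts: 0.0 is unrelated, 1.0 is identical."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical example: our page text versus a suspected copy on another site.
ours = "the quick brown fox jumps"
theirs = "the quick brown fox sleeps"
print(similarity(ours, theirs))
```

A score close to 1.0 for a page on someone else's site suggests the text has been copied. Which threshold counts as "too similar" is a judgment call and depends on the length and type of the text.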

I don’t know whether any of this changed Google’s attitude, but I would like to believe that these measures sped things up. Unlike the fall, the recovery was very, very gradual, but the metrics did begin to grow steadily. Some sources advise contacting Google directly to request a review; in my opinion, this is unnecessary trouble with little effect. Judging by the speed of the reaction, the robot visits penalized pages very rarely, so be patient and focus on polishing the site until it shines. The main danger at this stage is rushing, not missing the moment. That is the third lesson.

We are approaching a happy ending. In early August, some 4-5 months after the disaster, the metrics returned to their previous values. That time frame is fairly standard; you will see it in almost any article on the subject. Despite all our efforts, we did not manage to rehabilitate ourselves ahead of schedule, so I would advise you to prepare for the long haul from the start.

All in all, I can say that the experience, although negative, proved very valuable for our team in the long run. While working on our mistakes, we learned to look at the site through the eyes of a search engine, picked up some interesting details about the rules, and developed the habit of not panicking in an emergency. I hope our observations will be useful to readers purely as a theoretical guide, but if you are unlucky enough to be sanctioned, do not despair and try to take the situation philosophically. After all, as the saying goes, one beaten man is worth two unbeaten.
