IDA Pro Upgrade. Learning to write loaders in Python

- Tutorial

Hello everyone,

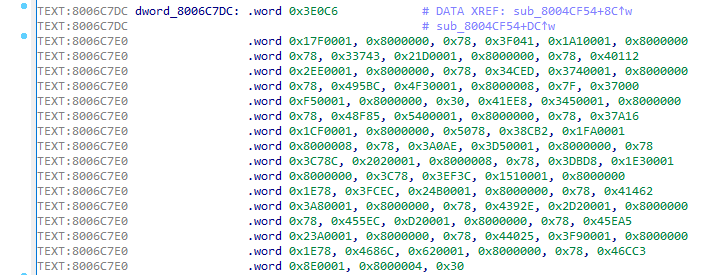

The series of articles on writing useful add-ons for IDA Pro continues. Last time we fixed a processor module. Today we will write a loader module for a vintage operating system, namely AmigaOS, and we will write it in Python. I will also try to explain some of the subtleties of working with relocations (aka relocs), which occur in many executable formats (PE, ELF, MS-DOS, etc.).

Introduction

Some readers have worked with the Amiga Hunk format before (in AmigaOS, this is the name of the object files that contain executable code: executables, libraries, etc.). If you have loaded at least one such file into IDA, you have probably noticed that a loader for it already exists. Its source code even ships with the IDA SDK.

Yes, it is all indeed written already, but... it is implemented so poorly that working with any reasonably normal executable is simply impossible.

Current implementation issues

So here is a list of problems:

- Relocations. They are common practice in Amiga Hunk files, and the existing implementation does apply them when loading a file. However, it does not always do so correctly: the resulting reference may be calculated incorrectly. In addition, "Rebase program..." does not work, because the loader does not implement it.

- The file is loaded at base address 0x00000000. This is definitely wrong, since various system libraries are loaded at zero offset. As a result, references to these libraries end up inside the address space of the loaded file.

- The loader sets various flags that prevent (or, conversely, allow) IDA from performing certain "recognition" actions: detecting pointers, arrays and assembly instructions. For anything other than x86/x64/ARM, the listing right after loading a file often looks so bad that you want to close IDA at first glance. You may also want to delete the file under investigation and go learn radare2. This is caused by the default loader flags.

Writing a loader template

Writing a loader is actually easy. There are three callbacks to implement:

1) accept_file(li, filename)

IDA uses this function to decide whether this loader can load the file named filename.

```python
def accept_file(li, filename):
    li.seek(0)
    tag = li.read(4)
    if tag == 'TAG1':  # check if this file can be loaded
        return {'format': 'Super executable', 'processor': '68000'}
    else:
        return 0
```

2) load_file(li, neflags, format)

This is where the file contents are loaded into the database. It is also where segments, structures and types are created, relocations are applied, and so on.

```python
def load_file(li, neflags, format):
    # set processor type
    idaapi.set_processor_type('68000', ida_idp.SETPROC_LOADER)

    # set some flags
    idaapi.cvar.inf.af = idaapi.AF_CODE | idaapi.AF_JUMPTBL | idaapi.AF_USED | idaapi.AF_UNK | \
        idaapi.AF_PROC | idaapi.AF_LVAR | idaapi.AF_STKARG | idaapi.AF_REGARG | \
        idaapi.AF_TRACE | idaapi.AF_VERSP | idaapi.AF_ANORET | idaapi.AF_MEMFUNC | \
        idaapi.AF_TRFUNC | idaapi.AF_FIXUP | idaapi.AF_JFUNC | idaapi.AF_NULLSUB | \
        idaapi.AF_NULLSUB | idaapi.AF_IMMOFF | idaapi.AF_STRLIT

    FILE_OFFSET = 0x40  # real code starts here
    li.seek(FILE_OFFSET)
    data = li.read(li.size() - FILE_OFFSET)  # read all data except header

    IMAGE_BASE = 0x400000  # segment base (where to load)

    # load code into database
    idaapi.mem2base(data, IMAGE_BASE, FILE_OFFSET)

    # create code segment
    idaapi.add_segm(0, IMAGE_BASE, IMAGE_BASE + len(data), 'SEG01', 'CODE')

    return 1
```

3) move_segm(frm, to, sz, fileformatname)

If the loaded file contains relocations, you cannot simply change the base address. Every relocation has to be recalculated and the references it points to patched. This code is generally the same every time: we walk through all previously created relocs, add the delta to each of them and patch the bytes of the loaded file.
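Stripped of the IDA API, the rebase step is simple arithmetic. The following is a standalone sketch, not IDA code, and it rests on assumptions of my own. The fixups are a hypothetical list of (offset, width) pairs over a bytearray, and values are big-endian as on the 68k. Each fixup site holds an absolute address. Rebasing adds the delta and keeps the result within the field width, mirroring the 8/16/32-bit cases that move_segm handles through the fixup API.

```python
import struct

# Pack formats for big-endian (68k) fields of 1, 2 and 4 bytes.
_FORMATS = {1: ">B", 2: ">H", 4: ">I"}


def rebase_fixups(image, fixups, delta):
    """Add `delta` to every fixup site in `image` (a bytearray), in place.

    `fixups` is a hypothetical list of (offset, width) pairs, where width
    is 1, 2 or 4 bytes, similar to FIXUP_OFF8/OFF16/OFF32.
    """
    for offset, width in fixups:
        fmt = _FORMATS[width]
        (value,) = struct.unpack_from(fmt, image, offset)
        mask = (1 << (8 * width)) - 1  # keep the result inside the field
        struct.pack_into(fmt, image, offset, (value + delta) & mask)
    return image


# A 32-bit pointer to 0x0021F010, image moved from 0x21F000 to 0x400000.
img = bytearray(struct.pack(">I", 0x0021F010))
rebase_fixups(img, [(0, 4)], 0x400000 - 0x21F000)
print(hex(struct.unpack(">I", bytes(img))[0]))  # 0x400010
```

A negative delta (moving the image down) works the same way, because the mask wraps the result back into the field.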

```python
def move_segm(frm, to, sz, fileformatname):
    delta = to
    xEA = ida_fixup.get_first_fixup_ea()
    while xEA != idaapi.BADADDR:
        fd = ida_fixup.fixup_data_t(idaapi.FIXUP_OFF32)
        ida_fixup.get_fixup(xEA, fd)
        fd.off += delta

        if fd.get_type() == ida_fixup.FIXUP_OFF8:
            idaapi.put_byte(xEA, fd.off)
        elif fd.get_type() == ida_fixup.FIXUP_OFF16:
            idaapi.put_word(xEA, fd.off)
        elif fd.get_type() == ida_fixup.FIXUP_OFF32:
            idaapi.put_long(xEA, fd.off)

        fd.set(xEA)
        xEA = ida_fixup.get_next_fixup_ea(xEA)

    idaapi.cvar.inf.baseaddr = idaapi.cvar.inf.baseaddr + delta
    return 1
```

The complete template file:

```python
import idaapi
import ida_idp
import ida_fixup


def accept_file(li, filename):
    li.seek(0)
    tag = li.read(4)
    if tag == 'TAG1':  # check if this file can be loaded
        return {'format': 'Super executable', 'processor': '68000'}
    else:
        return 0


def load_file(li, neflags, format):
    # set processor type
    idaapi.set_processor_type('68000', ida_idp.SETPROC_LOADER)

    # set some flags
    idaapi.cvar.inf.af = idaapi.AF_CODE | idaapi.AF_JUMPTBL | idaapi.AF_USED | idaapi.AF_UNK | \
        idaapi.AF_PROC | idaapi.AF_LVAR | idaapi.AF_STKARG | idaapi.AF_REGARG | \
        idaapi.AF_TRACE | idaapi.AF_VERSP | idaapi.AF_ANORET | idaapi.AF_MEMFUNC | \
        idaapi.AF_TRFUNC | idaapi.AF_FIXUP | idaapi.AF_JFUNC | idaapi.AF_NULLSUB | \
        idaapi.AF_NULLSUB | idaapi.AF_IMMOFF | idaapi.AF_STRLIT

    FILE_OFFSET = 0x40  # real code starts here
    li.seek(FILE_OFFSET)
    data = li.read(li.size() - FILE_OFFSET)  # read all data except header

    IMAGE_BASE = 0x400000  # segment base (where to load)

    # load code into database
    idaapi.mem2base(data, IMAGE_BASE, FILE_OFFSET)

    # create code segment
    idaapi.add_segm(0, IMAGE_BASE, IMAGE_BASE + len(data), 'SEG01', 'CODE')

    return 1


def move_segm(frm, to, sz, fileformatname):
    delta = to
    xEA = ida_fixup.get_first_fixup_ea()
    while xEA != idaapi.BADADDR:
        fd = ida_fixup.fixup_data_t(idaapi.FIXUP_OFF32)
        ida_fixup.get_fixup(xEA, fd)
        fd.off += delta

        if fd.get_type() == ida_fixup.FIXUP_OFF8:
            idaapi.put_byte(xEA, fd.off)
        elif fd.get_type() == ida_fixup.FIXUP_OFF16:
            idaapi.put_word(xEA, fd.off)
        elif fd.get_type() == ida_fixup.FIXUP_OFF32:
            idaapi.put_long(xEA, fd.off)

        fd.set(xEA)
        xEA = ida_fixup.get_next_fixup_ea(xEA)

    idaapi.cvar.inf.baseaddr = idaapi.cvar.inf.baseaddr + delta
    return 1
```

Writing the main loader code

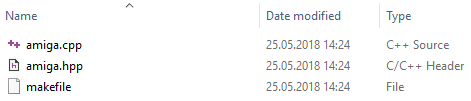

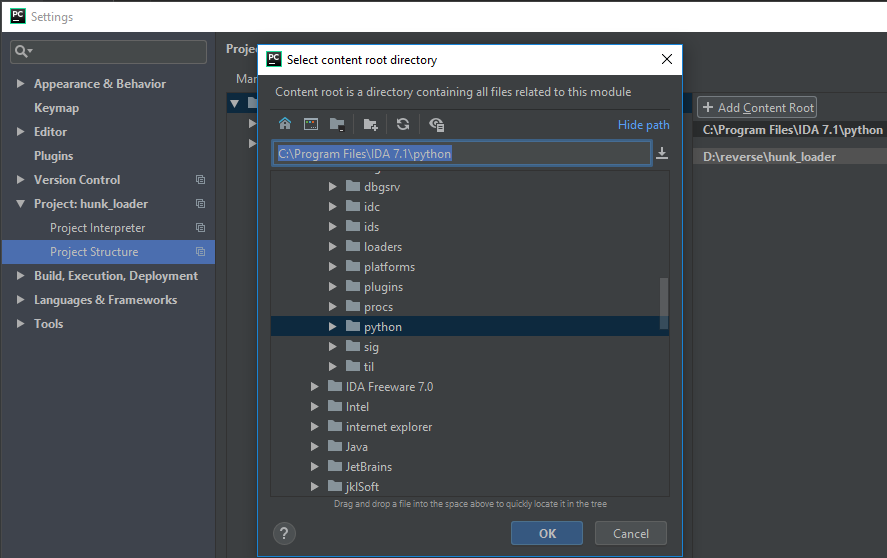

So much for the basics. Now let's set up a "workplace". I don't know what others prefer for writing Python, but I love PyCharm. Create a new project and add the Python directory from the IDA installation to the import search path (see the screenshots above).

Anyone who has already dealt with emulating AmigaOS executables has probably heard of the amitools project. It contains an almost complete set of tools for working with Amiga Hunk files, both for emulation and for plain parsing. I propose building our loader on top of it. The project's license allows this, and our loader will be non-commercial.

After a brief search, I found the file BinFmtHunk.py in amitools. It implements file parsing, segment definitions, relocations and much more. The actual application of relocations is handled by Relocate.py.
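For orientation, here is what applying a 32-bit hunk relocation amounts to. This is a simplified standalone sketch, not the code from Relocate.py. It assumes the relocations of one hunk are given as (target_hunk, [offsets]) tuples, the same shape HunkRelocLongBlock stores. It also assumes a hypothetical bases list that holds the load address chosen for every hunk. Each offset points at a big-endian longword inside the current hunk, and that longword holds an offset into the target hunk. Relocating adds the target hunk's base address to it.

```python
import struct


def apply_reloc32(data, relocs, bases):
    """Apply HUNK_RELOC32-style relocations to one hunk's data, in place.

    data   -- bytearray with the hunk contents
    relocs -- list of (target_hunk, [offsets]) tuples
    bases  -- load address of every hunk, indexed by hunk number
    """
    for target_hunk, offsets in relocs:
        base = bases[target_hunk]
        for off in offsets:
            (value,) = struct.unpack_from(">I", data, off)
            # value is an offset inside the target hunk: make it absolute
            struct.pack_into(">I", data, off, (value + base) & 0xFFFFFFFF)
    return data


# Two 68k NOPs, then a longword referring to offset 0x10 inside hunk 1,
# which we pretend is loaded at 0x21F100.
code = bytearray(b"\x4e\x71\x4e\x71" + struct.pack(">I", 0x10))
apply_reloc32(code, [(1, [4])], [0x21F000, 0x21F100])
print(hex(struct.unpack_from(">I", code, 4)[0]))  # 0x21f110
```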

Now for the hardest part. From the entire amitools file tree, we need to pull into our loader file everything that BinFmtHunk.py and Relocate.py reference, making minor corrections here and there.

One more thing I want to add: for each segment, record the position in the file from which its data was loaded. This is done by adding a data_offset attribute to two classes, HunkSegmentBlock and HunkOverlayBlock. The result is the following code:

```python
class HunkSegmentBlock(HunkBlock):
    """HUNK_CODE, HUNK_DATA, HUNK_BSS"""

    def __init__(self, blk_id=None, data=None, data_offset=0, size_longs=0):
        HunkBlock.__init__(self)
        if blk_id is not None:
            self.blk_id = blk_id
        self.data = data
        self.data_offset = data_offset
        self.size_longs = size_longs

    def parse(self, f):
        size = self._read_long(f)
        self.size_longs = size
        if self.blk_id != HUNK_BSS:
            size *= 4
            self.data_offset = f.tell()
            self.data = f.read(size)


class HunkOverlayBlock(HunkBlock):
    """HUNK_OVERLAY"""
    blk_id = HUNK_OVERLAY

    def __init__(self):
        HunkBlock.__init__(self)
        self.data_offset = 0
        self.data = None

    def parse(self, f):
        num_longs = self._read_long(f)
        self.data_offset = f.tell()
        self.data = f.read(num_longs * 4)
```

Now we need to add this attribute to the Segment class, which is created later from the hunk blocks:

```python
class Segment:
    def __init__(self, seg_type, size, data=None, data_offset=0, flags=0):
        self.seg_type = seg_type
        self.size = size
        self.data_offset = data_offset
        self.data = data
        self.flags = flags
        self.relocs = {}
        self.symtab = None
        self.id = None
        self.file_data = None
        self.debug_line = None
```

Next, make the BinFmtHunk class use data_offset when it creates segments. This happens in the create_image_from_load_seg_file method, in the loop over the segment blocks:

```python
segs = lsf.get_segments()
for seg in segs:
    # what type of segment do we have?
    blk_id = seg.seg_blk.blk_id
    size = seg.size_longs * 4
    data_offset = seg.seg_blk.data_offset
    data = seg.seg_blk.data
    if blk_id == HUNK_CODE:
        seg_type = SEGMENT_TYPE_CODE
    elif blk_id == HUNK_DATA:
        seg_type = SEGMENT_TYPE_DATA
    elif blk_id == HUNK_BSS:
        seg_type = SEGMENT_TYPE_BSS
    else:
        raise HunkParseError("Unknown Segment Type for BinImage: %d" % blk_id)

    # create seg
    bs = Segment(seg_type, size, data, data_offset)
    bs.set_file_data(seg)
    bi.add_segment(bs)
```

Writing the code for the IDA callbacks

Now that we have everything we need, let's write the callbacks. The first one is accept_file:

```python
def accept_file(li, filename):
    li.seek(0)
    bf = BinFmtHunk()
    tag = li.read(4)
    tagf = StringIO.StringIO(tag)

    if bf.is_image_fobj(tagf):
        return {'format': 'Amiga Hunk executable', 'processor': '68040'}
    else:
        return 0
```

It's simple. We read the first four bytes, wrap them in a virtual file (StringIO) and pass it to is_image_fobj, which returns True if the file has a suitable format. In that case we return a dictionary with two fields. The first is format, a text description of the format being loaded. The second is processor, the platform the executable code is written for.
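Outside IDA, the same check can be sketched without amitools. An Amiga load file (executable) starts with the longword HUNK_HEADER (1011, i.e. 0x000003F3) in big-endian order. The helper name looks_like_hunk_exe is hypothetical. As a simplification, it recognizes only load files, not HUNK_UNIT object files or HUNK_LIB libraries.

```python
import struct

HUNK_HEADER = 1011  # 0x000003F3, first longword of a load file


def looks_like_hunk_exe(head):
    """Return True if `head` (the first bytes of a file) starts with HUNK_HEADER."""
    if len(head) < 4:
        return False
    (tag,) = struct.unpack(">I", head[:4])
    return tag == HUNK_HEADER


print(looks_like_hunk_exe(b"\x00\x00\x03\xf3"))  # True
print(looks_like_hunk_exe(b"MZ\x90\x00"))        # False
```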

Next, the file has to be loaded into the IDB, which is more involved. The first step is to force the processor type to the one we need, Motorola 68040:

```python
idaapi.set_processor_type('68040', ida_idp.SETPROC_LOADER)
```

Set the loader flags so that IDA does not recognize all sorts of junk and does not turn everything into arrays (the flags are described in the IDA SDK documentation):

```python
idaapi.cvar.inf.af = idaapi.AF_CODE | idaapi.AF_JUMPTBL | idaapi.AF_USED | idaapi.AF_UNK | \
    idaapi.AF_PROC | idaapi.AF_LVAR | idaapi.AF_STKARG | idaapi.AF_REGARG | \
    idaapi.AF_TRACE | idaapi.AF_VERSP | idaapi.AF_ANORET | idaapi.AF_MEMFUNC | \
    idaapi.AF_TRFUNC | idaapi.AF_FIXUP | idaapi.AF_JFUNC | idaapi.AF_NULLSUB | \
    idaapi.AF_NULLSUB | idaapi.AF_IMMOFF | idaapi.AF_STRLIT
```

Now let's hand the contents of the loaded file to BinFmtHunk for parsing and the rest:

```python
li.seek(0)
data = li.read(li.size())

bf = BinFmtHunk()
fobj = StringIO.StringIO(data)
bi = bf.load_image_fobj(fobj)
```

Instead of loading at address zero, I suggest choosing a different ImageBase. AmigaOS executables are loaded wherever free memory is available, since the system has no virtual addresses. I chose 0x21F000: it looks nice and is unlikely to coincide with any constant. Let's apply it:

```python
rel = Relocate(bi)
# new segment addresses are in this list
addrs = rel.get_seq_addrs(0x21F000)
# new segment data with applied relocations are in this list
datas = rel.relocate(addrs)
```

Add the entry point from which the program starts:

```python
# addrs[0] points to the first segment's entry point
# 1 means that the entry point contains some executable code
idaapi.add_entry(addrs[0], addrs[0], "start", 1)
```

Now load the segments into the database and create the relocs (in IDA terminology, reloc == fixup):

```python
for seg in bi.get_segments():
    offset = addrs[seg.id]
    size = seg.size

    to_segs = seg.get_reloc_to_segs()
    for to_seg in to_segs:
        reloc = seg.get_reloc(to_seg)
        for r in reloc.get_relocs():
            offset2 = r.get_offset()
            rel_off = Relocate.read_long(datas[seg.id], offset2)
            addr = offset + rel_off + r.addend

            fd = idaapi.fixup_data_t(idaapi.FIXUP_OFF32)
            fd.off = addr
            fd.set(offset + offset2)

    idaapi.mem2base(str(datas[seg.id]), offset, seg.data_offset)
    idaapi.add_segm(0, offset, offset + size, 'SEG_%02d' % seg.id, seg.get_type_name())
```

The finishing touch for load_file:

```python
return 1
```

For move_segm, simply reuse the template code unchanged.
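To recap the address arithmetic in load_file: every segment gets a start address, and each fixup is recorded at that start plus the reloc's offset. The sketch below mimics this outside IDA. seq_addrs is a hypothetical stand-in for Relocate.get_seq_addrs. It assumes segments are placed back to back with each start rounded up to a 4-byte boundary; the real amitools helper may pad differently. fixup_eas is likewise an illustrative helper, not part of amitools.

```python
def seq_addrs(base, sizes, align=4):
    """Hypothetical layout: place segments back to back starting at `base`."""
    addrs = []
    addr = base
    for size in sizes:
        addr = (addr + align - 1) & ~(align - 1)  # round up to `align`
        addrs.append(addr)
        addr += size
    return addrs


def fixup_eas(addrs, relocs_per_seg):
    """Absolute fixup addresses: segment start + reloc offset inside it."""
    return [addrs[seg_id] + off
            for seg_id, offsets in relocs_per_seg.items()
            for off in offsets]


addrs = seq_addrs(0x21F000, [0x102, 0x40, 0x200])
print([hex(a) for a in addrs])  # ['0x21f000', '0x21f104', '0x21f144']
print([hex(ea) for ea in fixup_eas(addrs, {0: [4, 0x20]})])  # ['0x21f004', '0x21f020']
```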

Final loader code and conclusions

```python
import idaapi
import ida_idp
import ida_fixup
import StringIO
import struct

HUNK_UNIT = 999
HUNK_NAME = 1000
HUNK_CODE = 1001
HUNK_DATA = 1002
HUNK_BSS = 1003
HUNK_ABSRELOC32 = 1004
HUNK_RELRELOC16 = 1005
HUNK_RELRELOC8 = 1006
HUNK_EXT = 1007
HUNK_SYMBOL = 1008
HUNK_DEBUG = 1009
HUNK_END = 1010
HUNK_HEADER = 1011
HUNK_OVERLAY = 1013
HUNK_BREAK = 1014
HUNK_DREL32 = 1015
HUNK_DREL16 = 1016
HUNK_DREL8 = 1017
HUNK_LIB = 1018
HUNK_INDEX = 1019
HUNK_RELOC32SHORT = 1020
HUNK_RELRELOC32 = 1021
HUNK_ABSRELOC16 = 1022
HUNK_PPC_CODE = 1257
HUNK_RELRELOC26 = 1260

hunk_names = {
    HUNK_UNIT: "HUNK_UNIT",
    HUNK_NAME: "HUNK_NAME",
    HUNK_CODE: "HUNK_CODE",
    HUNK_DATA: "HUNK_DATA",
    HUNK_BSS: "HUNK_BSS",
    HUNK_ABSRELOC32: "HUNK_ABSRELOC32",
    HUNK_RELRELOC16: "HUNK_RELRELOC16",
    HUNK_RELRELOC8: "HUNK_RELRELOC8",
    HUNK_EXT: "HUNK_EXT",
    HUNK_SYMBOL: "HUNK_SYMBOL",
    HUNK_DEBUG: "HUNK_DEBUG",
    HUNK_END: "HUNK_END",
    HUNK_HEADER: "HUNK_HEADER",
    HUNK_OVERLAY: "HUNK_OVERLAY",
    HUNK_BREAK: "HUNK_BREAK",
    HUNK_DREL32: "HUNK_DREL32",
    HUNK_DREL16: "HUNK_DREL16",
    HUNK_DREL8: "HUNK_DREL8",
    HUNK_LIB: "HUNK_LIB",
    HUNK_INDEX: "HUNK_INDEX",
    HUNK_RELOC32SHORT: "HUNK_RELOC32SHORT",
    HUNK_RELRELOC32: "HUNK_RELRELOC32",
    HUNK_ABSRELOC16: "HUNK_ABSRELOC16",
    HUNK_PPC_CODE: "HUNK_PPC_CODE",
    HUNK_RELRELOC26: "HUNK_RELRELOC26",
}

EXT_SYMB = 0
EXT_DEF = 1
EXT_ABS = 2
EXT_RES = 3
EXT_ABSREF32 = 129
EXT_ABSCOMMON = 130
EXT_RELREF16 = 131
EXT_RELREF8 = 132
EXT_DEXT32 = 133
EXT_DEXT16 = 134
EXT_DEXT8 = 135
EXT_RELREF32 = 136
EXT_RELCOMMON = 137
EXT_ABSREF16 = 138
EXT_ABSREF8 = 139
EXT_RELREF26 = 229

TYPE_UNKNOWN = 0
TYPE_LOADSEG = 1
TYPE_UNIT = 2
TYPE_LIB = 3

HUNK_TYPE_MASK = 0xffff

SEGMENT_TYPE_CODE = 0
SEGMENT_TYPE_DATA = 1
SEGMENT_TYPE_BSS = 2

BIN_IMAGE_TYPE_HUNK = 0

segment_type_names = [
    "CODE", "DATA", "BSS"
]

loadseg_valid_begin_hunks = [
    HUNK_CODE,
    HUNK_DATA,
    HUNK_BSS,
    HUNK_PPC_CODE
]

loadseg_valid_extra_hunks = [
    HUNK_ABSRELOC32,
    HUNK_RELOC32SHORT,
    HUNK_DEBUG,
    HUNK_SYMBOL,
    HUNK_NAME
]
```

```python
class HunkParseError(Exception):
    def __init__(self, msg):
        self.msg = msg

    def __str__(self):
        return self.msg


class HunkBlock:
    """Base class for all hunk block types"""

    def __init__(self):
        pass

    blk_id = 0xdeadbeef
    sub_offset = None  # used inside LIB

    @staticmethod
    def _read_long(f):
        """read a 4 byte long"""
        data = f.read(4)
        if len(data) != 4:
            raise HunkParseError("read_long failed")
        return struct.unpack(">I", data)[0]

    @staticmethod
    def _read_word(f):
        """read a 2 byte word"""
        data = f.read(2)
        if len(data) != 2:
            raise HunkParseError("read_word failed")
        return struct.unpack(">H", data)[0]

    def _read_name(self, f):
        """read name stored in longs
        return size, string
        """
        num_longs = self._read_long(f)
        if num_longs == 0:
            return 0, ""
        else:
            return self._read_name_size(f, num_longs)

    @staticmethod
    def _read_name_size(f, num_longs):
        size = (num_longs & 0xffffff) * 4
        data = f.read(size)
        if len(data) < size:
            return -1, None
        endpos = data.find('\0')
        if endpos == -1:
            return size, data
        elif endpos == 0:
            return 0, ""
        else:
            return size, data[:endpos]

    @staticmethod
    def _write_long(f, v):
        data = struct.pack(">I", v)
        f.write(data)

    @staticmethod
    def _write_word(f, v):
        data = struct.pack(">H", v)
        f.write(data)

    def _write_name(self, f, s, tag=None):
        n = len(s)
        num_longs = int((n + 3) / 4)
        b = bytearray(num_longs * 4)
        if n > 0:
            b[0:n] = s
        if tag is not None:
            num_longs |= tag << 24
        self._write_long(f, num_longs)
        f.write(b)
```

```python
class HunkHeaderBlock(HunkBlock):
    """HUNK_HEADER - header block of Load Modules"""
    blk_id = HUNK_HEADER

    def __init__(self):
        HunkBlock.__init__(self)
        self.reslib_names = []
        self.table_size = 0
        self.first_hunk = 0
        self.last_hunk = 0
        self.hunk_table = []

    def setup(self, hunk_sizes):
        # easy setup for given number of hunks
        n = len(hunk_sizes)
        if n == 0:
            raise HunkParseError("No hunks for HUNK_HEADER given")
        self.table_size = n
        self.first_hunk = 0
        self.last_hunk = n - 1
        self.hunk_table = hunk_sizes

    def parse(self, f):
        # parse resident library names (AOS 1.x only)
        while True:
            l, s = self._read_name(f)
            if l < 0:
                raise HunkParseError("Error parsing HUNK_HEADER names")
            elif l == 0:
                break
            self.reslib_names.append(s)

        # table size and hunk range
        self.table_size = self._read_long(f)
        self.first_hunk = self._read_long(f)
        self.last_hunk = self._read_long(f)
        if self.table_size < 0 or self.first_hunk < 0 or self.last_hunk < 0:
            raise HunkParseError("HUNK_HEADER invalid table_size or first_hunk or last_hunk")

        # determine number of hunks in size table
        num_hunks = self.last_hunk - self.first_hunk + 1
        for a in xrange(num_hunks):
            hunk_size = self._read_long(f)
            if hunk_size < 0:
                raise HunkParseError("HUNK_HEADER contains invalid hunk_size")
            # note that the upper bits are the target memory type. We only have FAST,
            # so let's forget about them for a moment.
            self.hunk_table.append(hunk_size & 0x3fffffff)

    def write(self, f):
        # write residents
        for reslib in self.reslib_names:
            self._write_name(f, reslib)
        self._write_long(f, 0)
        # table size and hunk range
        self._write_long(f, self.table_size)
        self._write_long(f, self.first_hunk)
        self._write_long(f, self.last_hunk)
        # sizes
        for hunk_size in self.hunk_table:
            self._write_long(f, hunk_size)


class HunkSegmentBlock(HunkBlock):
    """HUNK_CODE, HUNK_DATA, HUNK_BSS"""

    def __init__(self, blk_id=None, data=None, data_offset=0, size_longs=0):
        HunkBlock.__init__(self)
        if blk_id is not None:
            self.blk_id = blk_id
        self.data = data
        self.data_offset = data_offset
        self.size_longs = size_longs

    def parse(self, f):
        size = self._read_long(f)
        self.size_longs = size
        if self.blk_id != HUNK_BSS:
            size *= 4
            self.data_offset = f.tell()
            self.data = f.read(size)

    def write(self, f):
        self._write_long(f, self.size_longs)
        if self.data is not None:
            f.write(self.data)
```

```python
class HunkRelocLongBlock(HunkBlock):
    """HUNK_ABSRELOC32 - relocations stored in longs"""

    def __init__(self, blk_id=None, relocs=None):
        HunkBlock.__init__(self)
        if blk_id is not None:
            self.blk_id = blk_id
        # map hunk number to list of relocations (i.e. byte offsets in long)
        if relocs is None:
            self.relocs = []
        else:
            self.relocs = relocs

    def parse(self, f):
        while True:
            num = self._read_long(f)
            if num == 0:
                break
            hunk_num = self._read_long(f)
            offsets = []
            for i in xrange(num):
                off = self._read_long(f)
                offsets.append(off)
            self.relocs.append((hunk_num, offsets))

    def write(self, f):
        for reloc in self.relocs:
            hunk_num, offsets = reloc
            self._write_long(f, len(offsets))
            self._write_long(f, hunk_num)
            for off in offsets:
                self._write_long(f, off)
        self._write_long(f, 0)


class HunkRelocWordBlock(HunkBlock):
    """HUNK_RELOC32SHORT - relocations stored in words"""

    def __init__(self, blk_id=None, relocs=None):
        HunkBlock.__init__(self)
        if blk_id is not None:
            self.blk_id = blk_id
        # list of tuples (hunk_no, [offsets])
        if relocs is None:
            self.relocs = []
        else:
            self.relocs = relocs

    def parse(self, f):
        num_words = 0
        while True:
            num_offs = self._read_word(f)
            num_words += 1
            if num_offs == 0:
                break
            hunk_num = self._read_word(f)
            num_words += num_offs + 1
            offsets = []
            for i in xrange(num_offs):
                off = self._read_word(f)
                offsets.append(off)
            self.relocs.append((hunk_num, offsets))
        # pad to long
        if num_words % 2 == 1:
            self._read_word(f)

    def write(self, f):
        num_words = 0
        for hunk_num, offsets in self.relocs:
            num_offs = len(offsets)
            self._write_word(f, num_offs)
            self._write_word(f, hunk_num)
            for i in xrange(num_offs):
                self._write_word(f, offsets[i])
            num_words += 2 + num_offs
        # end
        self._write_word(f, 0)
        num_words += 1
        # padding?
        if num_words % 2 == 1:
            self._write_word(f, 0)
```

```python
class HunkEndBlock(HunkBlock):
    """HUNK_END"""
    blk_id = HUNK_END

    def parse(self, f):
        pass

    def write(self, f):
        pass


class HunkOverlayBlock(HunkBlock):
    """HUNK_OVERLAY"""
    blk_id = HUNK_OVERLAY

    def __init__(self):
        HunkBlock.__init__(self)
        self.data_offset = 0
        self.data = None

    def parse(self, f):
        num_longs = self._read_long(f)
        self.data_offset = f.tell()
        self.data = f.read(num_longs * 4)

    def write(self, f):
        # the size is stored in longs: data length in bytes divided by 4
        self._write_long(f, len(self.data) // 4)
        f.write(self.data)


class HunkBreakBlock(HunkBlock):
    """HUNK_BREAK"""
    blk_id = HUNK_BREAK

    def parse(self, f):
        pass

    def write(self, f):
        pass


class HunkDebugBlock(HunkBlock):
    """HUNK_DEBUG"""
    blk_id = HUNK_DEBUG

    def __init__(self, debug_data=None):
        HunkBlock.__init__(self)
        self.debug_data = debug_data

    def parse(self, f):
        num_longs = self._read_long(f)
        num_bytes = num_longs * 4
        self.debug_data = f.read(num_bytes)

    def write(self, f):
        num_longs = int(len(self.debug_data) / 4)
        self._write_long(f, num_longs)
        f.write(self.debug_data)


class HunkSymbolBlock(HunkBlock):
    """HUNK_SYMBOL"""
    blk_id = HUNK_SYMBOL

    def __init__(self, symbols=None):
        HunkBlock.__init__(self)
        if symbols is None:
            self.symbols = []
        else:
            self.symbols = symbols

    def parse(self, f):
        while True:
            s, n = self._read_name(f)
            if s == 0:
                break
            off = self._read_long(f)
            self.symbols.append((n, off))

    def write(self, f):
        for sym, off in self.symbols:
            self._write_name(f, sym)
            self._write_long(f, off)
        self._write_long(f, 0)


class HunkUnitBlock(HunkBlock):
    """HUNK_UNIT"""
    blk_id = HUNK_UNIT

    def __init__(self):
        HunkBlock.__init__(self)
        self.name = None

    def parse(self, f):
        _, self.name = self._read_name(f)

    def write(self, f):
        self._write_name(f, self.name)


class HunkNameBlock(HunkBlock):
    """HUNK_NAME"""
    blk_id = HUNK_NAME

    def __init__(self):
        HunkBlock.__init__(self)
        self.name = None

    def parse(self, f):
        _, self.name = self._read_name(f)

    def write(self, f):
        self._write_name(f, self.name)
```

```python
class HunkExtEntry:
    """helper class for HUNK_EXT entries"""

    def __init__(self, name, ext_type, value, bss_size, offsets):
        self.name = name
        self.ext_type = ext_type
        self.def_value = value  # defs only
        self.bss_size = bss_size  # ABSCOMMON only
        self.ref_offsets = offsets  # refs only: list of offsets


class HunkExtBlock(HunkBlock):
    """HUNK_EXT"""
    blk_id = HUNK_EXT

    def __init__(self):
        HunkBlock.__init__(self)
        self.entries = []

    def parse(self, f):
        while True:
            tag = self._read_long(f)
            if tag == 0:
                break
            ext_type = tag >> 24
            name_len = tag & 0xffffff
            _, name = self._read_name_size(f, name_len)
            # add on for type
            bss_size = None
            offsets = None
            value = None
            # ABSCOMMON -> bss size
            if ext_type == EXT_ABSCOMMON:
                bss_size = self._read_long(f)
            # is a reference
            elif ext_type >= 0x80:
                num_refs = self._read_long(f)
                offsets = []
                for i in xrange(num_refs):
                    off = self._read_long(f)
                    offsets.append(off)
            # is a definition
            else:
                value = self._read_long(f)
            e = HunkExtEntry(name, ext_type, value, bss_size, offsets)
            self.entries.append(e)

    def write(self, f):
        for entry in self.entries:
            ext_type = entry.ext_type
            self._write_name(f, entry.name, tag=ext_type)
            # ABSCOMMON
            if ext_type == EXT_ABSCOMMON:
                self._write_long(f, entry.bss_size)
            # is a reference
            elif ext_type >= 0x80:
                num_offsets = len(entry.ref_offsets)
                self._write_long(f, num_offsets)
                for off in entry.ref_offsets:
                    self._write_long(f, off)
            # is a definition
            else:
                self._write_long(f, entry.def_value)
        self._write_long(f, 0)


class HunkLibBlock(HunkBlock):
    """HUNK_LIB"""
    blk_id = HUNK_LIB

    def __init__(self):
        HunkBlock.__init__(self)
        self.blocks = []
        self.offsets = []

    def parse(self, f, is_load_seg=False):
        num_longs = self._read_long(f)
        pos = f.tell()
        end_pos = pos + num_longs * 4
        # first read block id
        while pos < end_pos:
            tag = f.read(4)
            # EOF
            if len(tag) == 0:
                break
            elif len(tag) != 4:
                raise HunkParseError("Hunk block tag too short!")
            blk_id = struct.unpack(">I", tag)[0]
            # mask out mem flags
            blk_id = blk_id & HUNK_TYPE_MASK
            # look up block type
            if blk_id in hunk_block_type_map:
                blk_type = hunk_block_type_map[blk_id]
                # create block and parse
                block = blk_type()
                block.blk_id = blk_id
                block.parse(f)
                self.offsets.append(pos)
                self.blocks.append(block)
            else:
                raise HunkParseError("Unsupported hunk type: %04d" % blk_id)
            pos = f.tell()

    def write(self, f):
        # write dummy length (fill in later)
        pos = f.tell()
        start = pos
        self._write_long(f, 0)
        self.offsets = []
        # write blocks
        for block in self.blocks:
            block_id = block.blk_id
            block_id_raw = struct.pack(">I", block_id)
            f.write(block_id_raw)
            # write block itself
            block.write(f)
            # update offsets
            self.offsets.append(pos)
            pos = f.tell()
        # fill in size
        end = f.tell()
        size = end - start - 4
        num_longs = size / 4
        f.seek(start, 0)
        self._write_long(f, num_longs)
        f.seek(end, 0)
```

```python
class HunkIndexUnitEntry:
    def __init__(self, name_off, first_hunk_long_off):
        self.name_off = name_off
        self.first_hunk_long_off = first_hunk_long_off
        self.index_hunks = []


class HunkIndexHunkEntry:
    def __init__(self, name_off, hunk_longs, hunk_ctype):
        self.name_off = name_off
        self.hunk_longs = hunk_longs
        self.hunk_ctype = hunk_ctype
        self.sym_refs = []
        self.sym_defs = []


class HunkIndexSymbolRef:
    def __init__(self, name_off):
        self.name_off = name_off


class HunkIndexSymbolDef:
    def __init__(self, name_off, value, sym_ctype):
        self.name_off = name_off
        self.value = value
        self.sym_ctype = sym_ctype


class HunkIndexBlock(HunkBlock):
    """HUNK_INDEX"""
    blk_id = HUNK_INDEX

    def __init__(self):
        HunkBlock.__init__(self)
        self.strtab = None
        self.units = []

    def parse(self, f):
        num_longs = self._read_long(f)
        num_words = num_longs * 2
        # string table size
        strtab_size = self._read_word(f)
        self.strtab = f.read(strtab_size)
        num_words = num_words - (strtab_size / 2) - 1
        # read index unit blocks
        while num_words > 1:
            # unit description
            name_off = self._read_word(f)
            first_hunk_long_off = self._read_word(f)
            num_hunks = self._read_word(f)
            num_words -= 3
            unit_entry = HunkIndexUnitEntry(name_off, first_hunk_long_off)
            self.units.append(unit_entry)
            for i in xrange(num_hunks):
                # hunk description
                name_off = self._read_word(f)
                hunk_longs = self._read_word(f)
                hunk_ctype = self._read_word(f)
                hunk_entry = HunkIndexHunkEntry(name_off, hunk_longs, hunk_ctype)
                unit_entry.index_hunks.append(hunk_entry)
                # refs
                num_refs = self._read_word(f)
                for j in xrange(num_refs):
                    name_off = self._read_word(f)
                    hunk_entry.sym_refs.append(HunkIndexSymbolRef(name_off))
                # defs
                num_defs = self._read_word(f)
                for j in xrange(num_defs):
                    name_off = self._read_word(f)
                    value = self._read_word(f)
                    stype = self._read_word(f)
                    hunk_entry.sym_defs.append(HunkIndexSymbolDef(name_off, value, stype))
                # calc word size
                num_words = num_words - (5 + num_refs + num_defs * 3)
        # alignment word?
        if num_words == 1:
            self._read_word(f)

    def write(self, f):
        # write dummy size
        num_longs_pos = f.tell()
        self._write_long(f, 0)
        num_words = 0
        # write string table
        size_strtab = len(self.strtab)
        self._write_word(f, size_strtab)
        f.write(self.strtab)
        num_words += size_strtab / 2 + 1
        # write unit blocks
        for unit in self.units:
            self._write_word(f, unit.name_off)
            self._write_word(f, unit.first_hunk_long_off)
            self._write_word(f, len(unit.index_hunks))
            num_words += 3
            for index in unit.index_hunks:
                self._write_word(f, index.name_off)
                self._write_word(f, index.hunk_longs)
                self._write_word(f, index.hunk_ctype)
                # refs
                num_refs = len(index.sym_refs)
                self._write_word(f, num_refs)
                for sym_ref in index.sym_refs:
                    self._write_word(f, sym_ref.name_off)
                # defs
                num_defs = len(index.sym_defs)
                self._write_word(f, num_defs)
                for sym_def in index.sym_defs:
                    self._write_word(f, sym_def.name_off)
                    self._write_word(f, sym_def.value)
                    self._write_word(f, sym_def.sym_ctype)
                # count words
                num_words += 5 + num_refs + num_defs * 3
        # alignment word?
        if num_words % 2 == 1:
            num_words += 1
            self._write_word(f, 0)
        # fill in real size
        pos = f.tell()
        f.seek(num_longs_pos, 0)
        self._write_long(f, num_words / 2)
        f.seek(pos, 0)
```

```python
# map the hunk types to the block classes
hunk_block_type_map = {
    # Load Module
    HUNK_HEADER: HunkHeaderBlock,
    HUNK_CODE: HunkSegmentBlock,
    HUNK_DATA: HunkSegmentBlock,
    HUNK_BSS: HunkSegmentBlock,
    HUNK_ABSRELOC32: HunkRelocLongBlock,
    HUNK_RELOC32SHORT: HunkRelocWordBlock,
    HUNK_END: HunkEndBlock,
    HUNK_DEBUG: HunkDebugBlock,
    HUNK_SYMBOL: HunkSymbolBlock,
    # Overlays
    HUNK_OVERLAY: HunkOverlayBlock,
    HUNK_BREAK: HunkBreakBlock,
    # Object Module
    HUNK_UNIT: HunkUnitBlock,
    HUNK_NAME: HunkNameBlock,
    HUNK_RELRELOC16: HunkRelocLongBlock,
    HUNK_RELRELOC8: HunkRelocLongBlock,
    HUNK_DREL32: HunkRelocLongBlock,
    HUNK_DREL16: HunkRelocLongBlock,
    HUNK_DREL8: HunkRelocLongBlock,
    HUNK_EXT: HunkExtBlock,
    # New Library
    HUNK_LIB: HunkLibBlock,
    HUNK_INDEX: HunkIndexBlock
}


class HunkBlockFile:
    """The HunkBlockFile holds the list of blocks found in a hunk file"""

    def __init__(self, blocks=None):
        if blocks is None:
            self.blocks = []
        else:
            self.blocks = blocks

    def get_blocks(self):
        return self.blocks

    def set_blocks(self, blocks):
        self.blocks = blocks

    def read_path(self, path_name, is_load_seg=False):
        f = open(path_name, "rb")
        self.read(f, is_load_seg)
        f.close()

    def read(self, f, is_load_seg=False):
        """read a hunk file and fill block list"""
        while True:
            # first read block id
            tag = f.read(4)
            # EOF
            if len(tag) == 0:
                break
            elif len(tag) != 4:
                raise HunkParseError("Hunk block tag too short!")
            blk_id = struct.unpack(">I", tag)[0]
            # mask out mem flags
            blk_id = blk_id & HUNK_TYPE_MASK
            # look up block type
            if blk_id in hunk_block_type_map:
                # v37 special case: 1015 is 1020 (HUNK_RELOC32SHORT)
                # we do this only in LoadSeg() files
                if is_load_seg and blk_id == HUNK_DREL32:
                    blk_id = HUNK_RELOC32SHORT
                blk_type = hunk_block_type_map[blk_id]
                # create block and parse
                block = blk_type()
                block.blk_id = blk_id
                block.parse(f)
                self.blocks.append(block)
            else:
                raise HunkParseError("Unsupported hunk type: %04d" % blk_id)

    def write_path(self, path_name):
        f = open(path_name, "wb")
        self.write(f)
        f.close()

    def write(self, f, is_load_seg=False):
        """write a hunk file back to file object"""
        for block in self.blocks:
            # write block id
            block_id = block.blk_id
            # convert id
            if is_load_seg and block_id == HUNK_RELOC32SHORT:
                block_id = HUNK_DREL32
            block_id_raw = struct.pack(">I", block_id)
            f.write(block_id_raw)
            # write block itself
            block.write(f)

    def detect_type(self):
        """look at blocks and try to deduce the type of hunk file"""
        if len(self.blocks) == 0:
            return TYPE_UNKNOWN
        first_block = self.blocks[0]
        blk_id = first_block.blk_id
        return self._map_blkid_to_type(blk_id)

    def peek_type(self, f):
        """look into given file obj stream to determine file format.
        stream is read and later on seek'ed back."""
        pos = f.tell()
        tag = f.read(4)
        # EOF
        if len(tag) == 0:
            return TYPE_UNKNOWN
        elif len(tag) != 4:
            f.seek(pos, 0)
            return TYPE_UNKNOWN
        else:
            blk_id = struct.unpack(">I", tag)[0]
            f.seek(pos, 0)
            return self._map_blkid_to_type(blk_id)

    @staticmethod
    def _map_blkid_to_type(blk_id):
        if blk_id == HUNK_HEADER:
            return TYPE_LOADSEG
        elif blk_id == HUNK_UNIT:
            return TYPE_UNIT
        elif blk_id == HUNK_LIB:
            return TYPE_LIB
        else:
            return TYPE_UNKNOWN

    def get_block_type_names(self):
        """return a string array with the names of all block types"""
        res = []
        for blk in self.blocks:
            blk_id = blk.blk_id
            name = hunk_names[blk_id]
            res.append(name)
        return res
```

classDebugLineEntry:def__init__(self, offset, src_line, flags=0):

self.offset = offset

self.src_line = src_line

self.flags = flags

self.file_ = Nonedefget_offset(self):return self.offset

defget_src_line(self):return self.src_line

defget_flags(self):return self.flags

defget_file(self):return self.file_

class DebugLineFile:
    def __init__(self, src_file, dir_name=None, base_offset=0):
        self.src_file = src_file
        self.dir_name = dir_name
        self.base_offset = base_offset
        self.entries = []

    def get_src_file(self):
        return self.src_file

    def get_dir_name(self):
        return self.dir_name

    def get_entries(self):
        return self.entries

    def get_base_offset(self):
        return self.base_offset

    def add_entry(self, e):
        self.entries.append(e)
        e.file_ = self

class DebugLine:
    def __init__(self):
        self.files = []

    def add_file(self, src_file):
        self.files.append(src_file)

    def get_files(self):
        return self.files

class Symbol:
    def __init__(self, offset, name, file_name=None):
        self.offset = offset
        self.name = name
        self.file_name = file_name

    def get_offset(self):
        return self.offset

    def get_name(self):
        return self.name

    def get_file_name(self):
        return self.file_name

class SymbolTable:
    def __init__(self):
        self.symbols = []

    def add_symbol(self, symbol):
        self.symbols.append(symbol)

    def get_symbols(self):
        return self.symbols

class Reloc:
    def __init__(self, offset, width=2, addend=0):
        self.offset = offset
        self.width = width
        self.addend = addend

    def get_offset(self):
        return self.offset

    def get_width(self):
        return self.width

    def get_addend(self):
        return self.addend

class Relocations:
    def __init__(self, to_seg):
        self.to_seg = to_seg
        self.entries = []

    def add_reloc(self, reloc):
        self.entries.append(reloc)

    def get_relocs(self):
        return self.entries
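A `Relocations` object only records *where* to patch (offsets in the current segment) and *which* segment the values refer to (`to_seg`). Applying a 32-bit absolute relocation works as follows. The longword at each offset initially holds an offset into the target hunk, and the loader adds the target hunk's load address to it. The helper `apply_reloc32` below is a hypothetical Python 3 sketch of that step. It is not part of the listing and assumes the segment data is available as a mutable `bytearray`.

```python
import struct


def apply_reloc32(data, offsets, target_base):
    """Patch 32-bit big-endian absolute relocations in place.

    data        -- bytearray with the contents of the hunk being relocated
    offsets     -- offsets (e.g. from Reloc.get_offset()) of longwords to patch
    target_base -- address at which the *target* hunk was loaded
    """
    for off in offsets:
        # The stored value is an offset into the target hunk; adding the
        # target's load address turns it into an absolute address.
        (value,) = struct.unpack_from(">I", data, off)
        struct.pack_into(">I", data, off, (value + target_base) & 0xFFFFFFFF)
    return data
```

For example, a longword holding `0x10` becomes `0x21010` when the target hunk is loaded at `0x21000`. The `& 0xFFFFFFFF` mask keeps the result in 32 bits, as on the 68k.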

class Segment:
    def __init__(self, seg_type, size, data=None, data_offset=0, flags=0):
        self.seg_type = seg_type
        self.size = size
        self.data_offset = data_offset
        self.data = data
        self.flags = flags
        self.relocs = {}
        self.symtab = None
        self.id = None
        self.file_data = None
        self.debug_line = None

    def __str__(self):
        # relocs
        relocs = []
        for to_seg in self.relocs:
            r = self.relocs[to_seg]
            relocs.append("(#%d:size=%d)" % (to_seg.id, len(r.entries)))
        # symtab
        if self.symtab is not None:
            symtab = "symtab=#%d" % len(self.symtab.symbols)
        else:
            symtab = ""
        # debug_line
        if self.debug_line is not None:
            dl_files = self.debug_line.get_files()
            file_info = []
            for dl_file in dl_files:
                n = len(dl_file.entries)
                file_info.append("(%s:#%d)" % (dl_file.src_file, n))
            debug_line = "debug_line=" + ",".join(file_info)
        else:
            debug_line = ""
        # summary
        return "[#%d:%s:size=%d,flags=%d,%s,%s,%s]" % (
            self.id, segment_type_names[self.seg_type], self.size, self.flags,
            ",".join(relocs), symtab, debug_line)

    def get_type(self):
        return self.seg_type

    def get_type_name(self):
        return segment_type_names[self.seg_type]

    def get_size(self):
        return self.size

    def get_data(self):
        return self.data

    def add_reloc(self, to_seg, relocs):
        self.relocs[to_seg] = relocs

    def get_reloc_to_segs(self):
        keys = self.relocs.keys()
        return sorted(keys, key=lambda x: x.id)

    def get_reloc(self, to_seg):
        if to_seg in self.relocs:
            return self.relocs[to_seg]
        else:
            return None

    def set_symtab(self, symtab):
        self.symtab = symtab

    def get_symtab(self):
        return self.symtab

    def set_debug_line(self, debug_line):
        self.debug_line = debug_line

    def get_debug_line(self):
        return self.debug_line

    def set_file_data(self, file_data):
        """set associated loaded binary file"""
        self.file_data = file_data

    def get_file_data(self):
        """get associated loaded binary file"""
        return self.file_data

    def find_symbol(self, offset):
        symtab = self.get_symtab()
        if symtab is None:
            return None
        for symbol in symtab.get_symbols():
            off = symbol.get_offset()
            if off == offset:
                return symbol.get_name()
        return None

    def find_reloc(self, offset, size):
        to_segs = self.get_reloc_to_segs()
        for to_seg in to_segs:
            reloc = self.get_reloc(to_seg)
            for r in reloc.get_relocs():
                off = r.get_offset()
                if offset <= off <= (offset + size):
                    return r, to_seg, off
        return None

    def find_debug_line(self, offset):
        debug_line = self.debug_line
        if debug_line is None:
            return None
        for df in debug_line.get_files():
            for e in df.get_entries():
                if e.get_offset() == offset:
                    return e
        return None


class BinImage:
    """A binary image contains all the segments of a program's binary image."""

    def __init__(self, file_type):
        self.segments = []
        self.file_data = None
        self.file_type = file_type

    def __str__(self):
        return "<%s>" % ",".join(map(str, self.segments))

    def get_size(self):
        total_size = 0
        for seg in self.segments:
            total_size += seg.get_size()
        return total_size

    def add_segment(self, seg):
        seg.id = len(self.segments)
        self.segments.append(seg)

    def get_segments(self):
        return self.segments

    def set_file_data(self, file_data):
        """set associated loaded binary file"""
        self.file_data = file_data

    def get_file_data(self):
        """get associated loaded binary file"""
        return self.file_data

    def get_segment_names(self):
        names = []
        for seg in self.segments:
            names.append(seg.get_type_name())
        return names
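`BinImage` records only the segment sizes and not their addresses. Before relocations can be applied, a loader must still pick a load address for every segment. The sketch below packs segments one after another from a chosen image base. The function name `layout_segments`, the 4-byte alignment, and the base value in the example are all hypothetical choices. AmigaOS itself allocates each hunk separately, so contiguous packing is only one possible design for a disassembler's address space.

```python
def layout_segments(sizes, image_base, align=4):
    """Assign a load address to each segment, packed one after another.

    sizes      -- segment sizes in bytes, in segment-id order
    image_base -- address of the first segment (hypothetical choice)
    align      -- power-of-two alignment applied to every segment start
    """
    addrs = []
    addr = image_base
    for size in sizes:
        addr = (addr + align - 1) & ~(align - 1)  # round up to the alignment
        addrs.append(addr)
        addr += size
    return addrs


starts = layout_segments([6, 16, 8], 0x21000)
```

With those inputs the segments start at `0x21000`, `0x21008` and `0x21018`: the 6-byte first segment ends at `0x21006`, which is rounded up to the next 4-byte boundary. These start addresses are what a relocation step would use as each target hunk's base.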

class HunkDebugLineEntry:
    def __init__(self, offset, src_line):
        self.offset = offset
        self.src_line = src_line

    def __str__(self):
        return "[+%08x: %d]" % (self.offset, self.src_line)

    def get_offset(self):
        return self.offset

    def get_src_line(self):
        return self.src_line

class HunkDebugLine:
    """structure to hold source line info"""

    def __init__(self, src_file, base_offset):
        self.tag = 'LINE'
        self.src_file = src_file
        self.base_offset = base_offset
        self.entries = []

    def add_entry(self, offset, src_line):
        self.entries.append(HunkDebugLineEntry(offset, src_line))

    def __str__(self):
        prefix = "{%s,%s,@%08x:" % (self.tag, self.src_file, self.base_offset)
        return prefix + ",".join(map(str, self.entries)) + "}"

    def get_src_file(self):
        return self.src_file

    def get_base_offset(self):
        return self.base_offset

    def get_entries(self):
        return self.entries

class HunkDebugAny:
    def __init__(self, tag, data, base_offset):
        self.tag = tag
        self.data = data
        self.base_offset = base_offset

    def __str__(self):
        return "{%s,%d,%s}" % (self.tag, self.base_offset, self.data)

class HunkDebug:
    def encode(self, debug_info):
        """encode a debug info and return a debug_data chunk"""
        out = StringIO.StringIO()
        # +0: base offset
        self._write_long(out, debug_info.base_offset)
        # +4: type tag
        tag = debug_info.tag
        out.write(tag)
        if tag == 'LINE':
            # file name
            self._write_string(out, debug_info.src_file)
            # entries
            for e in debug_info.entries:
                self._write_long(out, e.src_line)
                self._write_long(out, e.offset)
        elif tag == 'HEAD':
            out.write("DBGV01\0\0")
            out.write(debug_info.data)
        else:  # any
            out.write(debug_info.data)
        # retrieve result
        res = out.getvalue()
        out.close()
        return res

    def decode(self, debug_data):
        """decode a data block from a debug hunk"""
        if len(debug_data) < 12:
            return None
        # +0: base_offset for file
        base_offset = self._read_long(debug_data, 0)
        # +4: tag
        tag = debug_data[4:8]
        if tag == 'LINE':  # SAS/C source line info
            # +8: string file name (length in longwords, then padded name)
            src_file, src_size = self._read_string(debug_data, 8)
            dl = HunkDebugLine(src_file, base_offset)
            off = 12 + src_size
            # each entry is two longwords (src_line, offset) = 8 bytes;
            # floor division keeps num an int on Python 2 and 3 alike
            num = (len(debug_data) - off) // 8
            for i in range(num):
                src_line = self._read_long(debug_data, off)
                offset = self._read_long(debug_data, off + 4)
                off += 8
                dl.add_entry(offset, src_line)
            return dl
        elif tag == 'HEAD':
            tag2 = debug_data[8:16]
            assert tag2 == "DBGV01\0\0"
            data = debug_data[16:]
            return HunkDebugAny(tag, data, base_offset)
        else:
            data = debug_data[8:]
            return HunkDebugAny(tag, data, base_offset)

    def _read_string(self, buf, pos):
        size = self._read_long(buf, pos) * 4
        off = pos + 4
        data = buf[off:off + size]
        pos = data.find('\0')
        if pos == 0:
            return "", size
        elif pos != -1:
            return data[:pos], size
        else:
            return data, size

    def _write_string(self, f, s):
        n = len(s)
        num_longs = int((n + 3) / 4)
        self._write_long(f, num_longs)
        add = num_longs * 4 - n
        if add > 0:
            s += '\0' * add
        f.write(s)

    def _read_long(self, buf, pos):
        return struct.unpack_from(">I", buf, pos)[0]

    def _write_long(self, f, v):
        data = struct.pack(">I", v)
        f.write(data)
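The `LINE` chunk handled by `HunkDebug.encode()` and `decode()` has a simple layout, which looks like this:

1. `+0`: the base offset.
2. `+4`: the 4-byte tag `LINE`.
3. `+8`: the file-name length in longwords.
4. The name itself, NUL-padded to a longword boundary.
5. `(src_line, offset)` pairs of longwords.

The following Python 3 round-trip sketch uses `bytes` instead of the listing's Python 2 `str`/`StringIO`. Both function names are hypothetical and do not appear in the listing.

```python
import struct


def encode_line_debug(base_offset, src_file, entries):
    """Build a 'LINE' debug chunk with the layout used by HunkDebug.encode().

    entries: iterable of (src_line, offset) pairs.
    """
    name = src_file.encode("latin-1")
    num_longs = (len(name) + 3) // 4
    name += b"\0" * (num_longs * 4 - len(name))  # pad name to whole longwords
    out = struct.pack(">I", base_offset) + b"LINE"
    out += struct.pack(">I", num_longs) + name
    for src_line, offset in entries:
        out += struct.pack(">II", src_line, offset)
    return out


def decode_line_debug(chunk):
    """Inverse of encode_line_debug(); mirrors the 'LINE' branch of decode()."""
    base_offset, tag, num_longs = struct.unpack_from(">I4sI", chunk, 0)
    if tag != b"LINE":
        raise ValueError("not a LINE chunk")
    name_end = 12 + num_longs * 4
    src_file = chunk[12:name_end].split(b"\0", 1)[0].decode("latin-1")
    # every remaining 8 bytes form one (src_line, offset) pair
    entries = [struct.unpack_from(">II", chunk, off)
               for off in range(name_end, len(chunk) - 7, 8)]
    return base_offset, src_file, entries
```

For `"main.c"` the name occupies two longwords (six characters plus two NUL bytes of padding). This is why `decode()` starts reading entries at `12 + src_size` rather than right after the visible name.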

class HunkSegment:
    """holds a code, data, or bss hunk/segment"""

    def __init__(self):
        self.blocks = None
        self.seg_blk = None
        self.symbol_blk = None
        self.reloc_blks = None
        self.debug_blks = None
        self.debug_infos = None

    def __repr__(self):
        return "[seg=%s,symbol=%s,reloc=%s,debug=%s,debug_info=%s]" % \
            (self._blk_str(self.seg_blk),
             self._blk_str(self.symbol_blk),
             self._blk_str_list(self.reloc_blks),
             self._blk_str_list(self.debug_blks),
             self._debug_infos_str())

    def setup_code(self, data):
        data, size_longs = self._pad_data(data)
        self.seg_blk = HunkSegmentBlock(HUNK_CODE, data, size_longs)

    def setup_data(self, data):
        data, size_longs = self._pad_data(data)
        self.seg_blk = HunkSegmentBlock(HUNK_DATA, data, size_longs)

    @staticmethod
    def _pad_data(data):
        size_bytes = len(data)
        bytes_mod = size_bytes % 4
        if bytes_mod > 0:
            add = 4 - bytes_mod
            data = data + '\0' * add
        size_long = int((size_bytes + 3) / 4)
        return data, size_long

    def setup_bss(self, size_bytes):
        size_longs = int((size_bytes + 3) / 4)
        self.seg_blk = HunkSegmentBlock(HUNK_BSS, None, size_longs)

    def setup_relocs(self, relocs, force_long=False):
        """relocs: ((hunk_num, (off1, off2, ...)), ...)"""
        if force_long:
            use_short = False
        else:
            use_short = self._are_relocs_short(relocs)
        if use_short:
            self.reloc_blks = [HunkRelocWordBlock(HUNK_RELOC32SHORT, relocs)]
        else:
            self.reloc_blks = [HunkRelocLongBlock(HUNK_ABSRELOC32, relocs)]

    def setup_symbols(self, symbols):
        """symbols: ((name, off), ...)"""
        self.symbol_blk = HunkSymbolBlock(symbols)

    def setup_debug(self, debug_info):
        if self.debug_infos is None:
            self.debug_infos = []
        self.debug_infos.append(debug_info)
        hd = HunkDebug()
        debug_data = hd.encode(debug_info)
        blk = HunkDebugBlock(debug_data)
        if self.debug_blks is None:
            self.debug_blks = []
        self.debug_blks.append(blk)

    @staticmethod
    def _are_relocs_short(relocs):
        # HUNK_RELOC32SHORT stores offsets as 16-bit words
        for hunk_num, offsets in relocs:
            for off in offsets:
                if off > 65535:
                    return False
        return True

    def _debug_infos_str(self):
        if self.debug_infos is None:
            return "n/a"
        else:
            return ",".join(map(str, self.debug_infos))

    @staticmethod
    def _blk_str(blk):
        if blk is None:
            return "n/a"
        else:
            return hunk_names[blk.blk_id]

    @staticmethod
    def _blk_str_list(blk_list):
        res = []
        if blk_list is None:
            return "n/a"
        for blk in blk_list:
            res.append(hunk_names[blk.blk_id])
        return ",".join(res)

    def parse(self, blocks):
        hd = HunkDebug()
        self.blocks = blocks
        for blk in blocks:
            blk_id = blk.blk_id
            if blk_id in loadseg_valid_begin_hunks:
                self.seg_blk = blk
            elif blk_id == HUNK_SYMBOL:
                if self.symbol_blk is None:
                    self.symbol_blk = blk
                else:
                    raise Exception("duplicate symbols in hunk")
            elif blk_id == HUNK_DEBUG:
                if self.debug_blks is None:
                    self.debug_blks = []
                self.debug_blks.append(blk)
                # decode hunk debug info
                debug_info = hd.decode(blk.debug_data)
                if debug_info is not None:
                    if self.debug_infos is None:
                        self.debug_infos = []
                    self.debug_infos.append(debug_info)
            elif blk_id in (HUNK_ABSRELOC32, HUNK_RELOC32SHORT):
                if self.reloc_blks is None:
                    self.reloc_blks = []
                self.reloc_blks.append(blk)
            else:
                raise HunkParseError("invalid hunk block")

    def create(self, blocks):
        # already has blocks?
        if self.blocks is not None:
            blocks += self.blocks
            return self.seg_blk.size_longs
        # start with segment block
        if self.seg_blk is None:
            raise HunkParseError(