Study: how users actually work with touch screens

- Translation

I have been researching how users interact with touch screens for many years, and I can say that the professional community’s understanding of the topic is only beginning to take shape. Touch devices are still a fairly new phenomenon, and the patterns of interaction with them are still evolving.

Over a year ago, I published a study on how people actually hold and touch their mobile phones. The next logical step is to understand users’ motivations and to identify the relationships between people’s actions and the context in which they occur.

Research methodology

Together with colleagues from ZIPPGUN, I developed a mobile application that simulates various scenarios of mobile phone use. We ran tests with 31 participants using this application (about two thirds of them used smartphones, and the rest used tablets).

All interactions were recorded on video so that we could later reconstruct how each person held the device, how they touched the screen, and so on. We also recorded the direction and “length” of scroll gestures, the point on the screen that the user tapped, and the accuracy with which various menu items were selected. The video was captured with special video glasses.
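
How the test application computed these values is not described, so the following is only a minimal sketch of one way to derive the direction and length of a scroll gesture from its start and end points. The `Touch` type and the `scroll_metrics` function are hypothetical names, and coordinates are assumed to be screen pixels with the origin in the top-left corner.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Touch:
    """A single touch sample in screen pixels (origin at top-left; an assumption)."""
    x: float
    y: float


def scroll_metrics(start: Touch, end: Touch) -> tuple[str, float]:
    """Return (direction, length) of a drag from start to end.

    Direction describes the dominant axis of finger movement,
    not the direction in which the content moves.
    """
    dx = end.x - start.x
    dy = end.y - start.y
    length = math.hypot(dx, dy)  # straight-line distance in pixels
    if length == 0:
        return "none", 0.0
    if abs(dy) >= abs(dx):
        # y grows downward in screen coordinates
        direction = "down" if dy > 0 else "up"
    else:
        direction = "right" if dx > 0 else "left"
    return direction, length
```

For example, a finger that moves from `Touch(100, 400)` to `Touch(100, 100)` yields `("up", 300.0)`. A real recorder would probably sample the whole path rather than only its endpoints.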

The number of participants was not very large, but even with so few people, the study turned out to be very laborious. Recording the 31 sessions alone produced almost 100 hours of video.

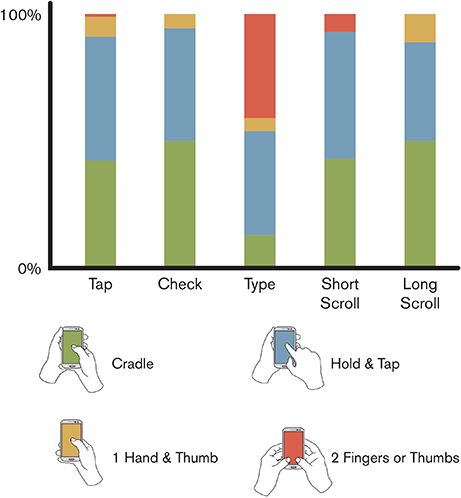

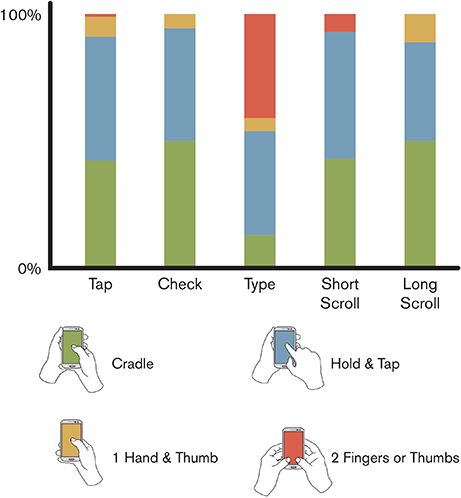

Switching

In the first stage of the study, we decided to focus on identifying the connections between context and the way people hold the phone and touch the screen. During the tests, everything looked fairly ordinary on the surface: for example, many people held the phone with one hand. But when it came to analyzing the actual data, the results were unexpected. As you can see in the picture below, there are practically no scenarios of purely one-handed smartphone use:

People can hold the phone with one hand and scroll pages with the thumb of the same hand, but as soon as they need to perform a more demanding task, they immediately switch to holding the device with one hand and using the other to work with the menu. Most tasks are performed this way. In a little under 41% of cases, users typed on the virtual keyboard with both thumbs, and some participants always use a smartphone this way. Tablet users were excluded from the results of this experiment, since those devices are used in different situations.

Centering

Various studies have shown that users are most likely to interact with elements located in the center of a phone or tablet screen. The center attracts people’s attention best; the edges and corners of the screen interest them much less. Neither environmental conditions nor previous experience with touch screens changes this. People involuntarily tend to tap in the center of the screen. The data collected in the current study confirmed this for 7-, 8-, and 10-inch tablets as well.

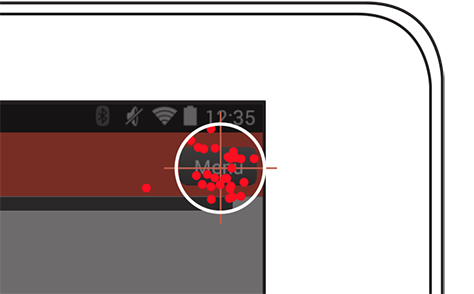

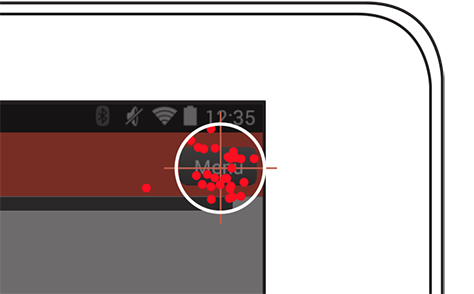

This is what a visualization of people’s attempts to hit a menu button located in the corner of a tablet’s screen looks like (each point is a touch on the screen):

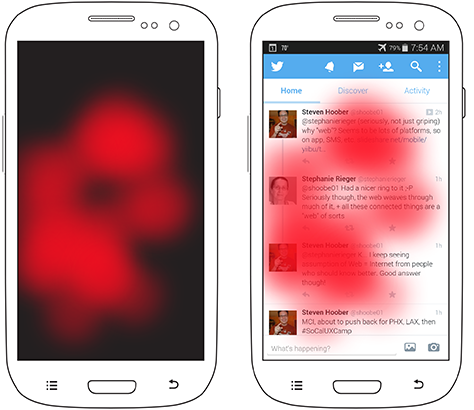

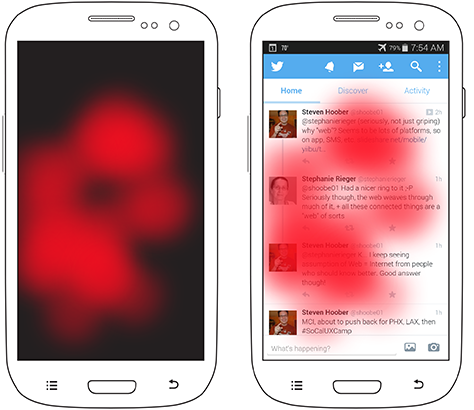

Heat maps confirm that people prefer to interact with the central part of the screen. The figure below shows such a map for a scenario in which users had to select an item on a scrollable, full-screen page:

In most cases, they scrolled the item to the center of the screen and only then tapped it. When users are free to choose where on the screen to touch, they almost always choose the central two-thirds of the space. The data for this experiment included both smartphone and tablet users: the preference for the center of the screen does not depend on the type of device.
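
The study’s own analysis tooling is not described. As a rough illustration of how a touch heat map and the share of touches in the central two-thirds could be computed, here is a minimal sketch. The function names, the grid size, and the reading of “central two-thirds” as the middle two-thirds of the screen height are all assumptions made for this example.

```python
def touch_heatmap(touches, width, height, cols=3, rows=6):
    """Count touches per grid cell; touches are (x, y) pixel pairs."""
    grid = [[0] * cols for _ in range(rows)]
    for x, y in touches:
        if not (0 <= x < width and 0 <= y < height):
            continue  # ignore samples that fall outside the screen
        row = min(int(y * rows / height), rows - 1)
        col = min(int(x * cols / width), cols - 1)
        grid[row][col] += 1
    return grid


def central_share(grid):
    """Fraction of touches in the middle two-thirds of the rows.

    Assumes the row count is a multiple of 6, so that the top and
    bottom sixths of the screen map onto whole rows.
    """
    rows = len(grid)
    if rows == 0 or rows % 6 != 0:
        raise ValueError("row count must be a positive multiple of 6")
    skip = rows // 6
    total = sum(sum(row) for row in grid)
    central = sum(sum(row) for row in grid[skip:rows - skip])
    return central / total if total else 0.0
```

With the default six rows, `central_share` sums rows 1 to 4 and divides by the total number of touches, which gives a single figure to compare across devices or scenarios.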

Even if reaching the center of the screen takes more effort (stretching a finger or moving the phone to the other hand), users will most often do it, not because anyone forces them to, but because it is more convenient for them. This means that content and primary interface elements should always be placed in the central part of the screen, while secondary elements can be moved to its upper or lower part.
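
One way to apply this recommendation during design review is a simple layout check. The sketch below is a hypothetical helper, not part of the study: it flags primary elements whose vertical extent falls outside a central band of the screen, assuming the band covers the middle two-thirds of the screen height.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Vertical extent of a UI element in pixels (hypothetical type)."""
    name: str
    top: float
    bottom: float


def outside_central_zone(elements, screen_height, zone=2 / 3):
    """Return names of elements that extend beyond the central zone.

    zone is the fraction of the screen height treated as central;
    the remainder is split evenly between the top and bottom.
    """
    margin = screen_height * (1 - zone) / 2
    lowest_top, highest_bottom = margin, screen_height - margin
    return [
        e.name
        for e in elements
        if e.top < lowest_top or e.bottom > highest_bottom
    ]
```

Running it over the primary controls of a screen gives a quick list of candidates to move toward the center; secondary elements can simply be left out of the input.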

If you look at the data a little more closely, you can notice a certain tendency for users to touch the left-center part of the screen. The effect is not large, but it is definitely present. It seems to me that the reason is that European languages are read from left to right, so text is aligned to the left.

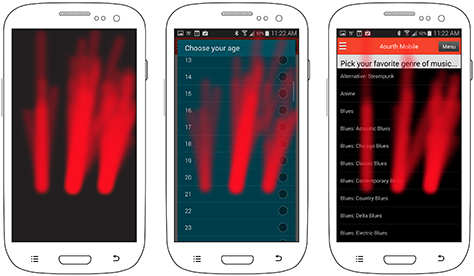

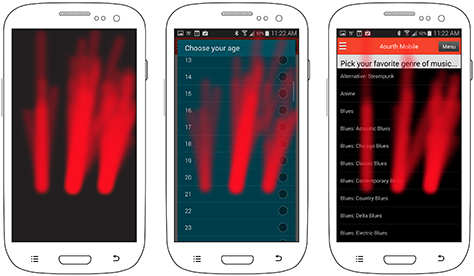

Gestures

It is useful for mobile application developers to know which specific gestures users make when working with their products. One of the most common gestures (after the tap) is scrolling. The image below shows a heat map of how people typically scroll.

The three images show three distinct areas. Why? It depends on the type of content on the screen. The picture on the left shows how people scroll short content (usually in a dialog box); the images in the center and on the right correspond to scrolling long, full-screen content. In the central image, the items in the answer selection list contain little information, which leaves significant empty space on the screen, and people still prefer to touch the center of the screen, although there is nothing there. In the picture on the right, long list items occupy a significant part of the screen, and users prefer to scroll on the right, where there is no content. Even users operating the device with their left hand chose not to touch the content.

After a conference presentation in which I shared these findings, some colleagues told me about their experience building applications in Hebrew and Arabic. Everything turned out to be exactly the same there, only mirrored. In these languages, people read from right to left and, accordingly, scroll on the left side of the page so as not to touch the content.

In addition, users do not always feel free to make scroll gestures where the page contains various elements: they are afraid of accidentally activating a menu item when they just want to view the content. If the page is completely filled, with no free space on it, native speakers of European languages scroll it by touching the right side of the screen. This behavior may depend on the type of device: a tablet screen is larger, so content may take up a smaller share of the page, leaving more free space to touch.

Summary

Creating a high-quality interface for touch screens is impossible without understanding how users work with such devices. Here are the key findings that I have drawn from my research:

- Users often change the way they hold a smartphone. The fact that a device is convenient to hold with one hand does not mean that typing messages or scrolling a page in that grip is equally convenient. Developers should create interfaces that are equally convenient to use no matter which hand holds the smartphone. Test the interface on different devices and in different contexts to cover all of the users’ hand positions.

- People are subconsciously inclined to touch the center of the smartphone screen and do so even when they have other options. Key information and controls should be placed in the central two-thirds of the screen, while minor elements can be moved to its upper and lower parts.

- Users tend to scroll by touching the screen where there is no content. Free space on the page can therefore be very useful: people will use it for scroll and swipe gestures.

References:

- Hoober, Steven. “Common Misconceptions About Touch.” (Russian translation on Habr)

- Hoober, Steven. “How Do Users Really Hold Mobile Devices?”

- Hoober, Steven, and Patti Shank. “Making mLearning Usable: How We Use Mobile Devices.”

- Hoober, Steven. “Design for Fingers and Thumbs Instead of Touch.”

P.S. If you notice a typo, mistake, or inaccuracy in the translation, send me a private message and I will fix it promptly.