Search Preview - extension for Chrome

About extension

This extension provides the ability to view the site of a search result on Google, which significantly reduces the time it takes to search and process information.

Background

After Google closed the Instant Preview project, the search for the necessary information began to take much more time, open tabs and nerves.

After which I decided to correct this situation and write a small extension that makes my life easier.

Development

Due to the fact that Google Chrome supported HTML5 technology (namely, iframe sandbox), it was not difficult to implement the extension and a prototype was written in one evening. I was certainly happy about this, but it was sad for other people who were also victims of the closure of Instant Preview. Therefore, I decided to post the extension in open access.

The extension itself was based on the ability to load any page in an iframe, while the sandbox attribute allowed you to disable javascript and redirect the main window i.e. it was impossible to execute the following code from iframe:

top.location = ;

And everything was fine until on some sites the header of the ban on displaying in the iframe “X-Frame-Options” was not met, it was still half the trouble, but when Google Chrome in the new version forbade loading unprotected content on the protected site, i.e. it was impossible to embed data on the https site, including iframe http. As a result, most sites were not displayed in the iframe. Users began to complain, the extension did not cope with its task, again had to go straight to the site from the search results, everything returned to the beginning.

Search for a solution

But this did not stop me, it was interesting to solve this problem. The first thing that came to mind to use web proxy, i.e. in iframe, uploading data not directly from the site, but through the web proxy using the https secure protocol, thereby solving the problem with insecure content, and the web proxy could cut the X-Frame-Options header.

I didn’t want to waste time developing my web proxy; the problem needed to be solved quickly. A little google I came across this google-proxy scriptIs a web proxy for appengine written in python. After installing it and rolling out a new version of the extension, I did not forget about the problem for long. But the problem came again in the form of a lack of a free appengine quota; I had to make several mirrors for the web proxy. Having made four mirrors it seemed that everything was fine, but in the reviews of the extension they wrote to me that again there was an error of a lack of quotas. In appengine, the quota is reset every 24 hours, i.e. while I am using the expansion of the quota, there is still enough, but for residents of another part of the world, the quota has already been exhausted.

Own web proxy

After thinking a bit, I decided to spend time writing a web proxy and chose the Go language. It took a little time to learn Go, as before that I wrote small scripts on it for educational purposes. But I did not come across the development of a web server on Go. After reading the documentation and choosing gorillatoolkit as a web tool , namely the gorilla / mux router. Writing a router is pretty easy:

r := mux.NewRouter()

r.HandleFunc("/env", environ)

r.HandleFunc("/web_proxy", web_proxy.WebProxyHandler)

r.PathPrefix("/").Handler(http.StripPrefix("/", http.FileServer(http.Dir(staticDir))))

r.Methods("GET")

Further, for replacing links and addresses, cleaning html chose the gokogiri library this is the shell for libxml. It was necessary to get all the addresses (url) on my web proxy script. Then I disabled some tags by completely removing them from the DOM, including the script tag. Interactive preview site is not needed. CSS has been processed too since there are url pictures, fonts, etc.

It turns out such a mapping of tags when bypassing the DOM, the function corresponding to the tag is called.

var (

nodeElements = map[string]func(*context, xml.Node){

// proxy URL

"a": proxyURL("href"),

"img": proxyURL("src"),

"link": proxyURL("href"),

"iframe": proxyURL("src"),

// normalize URL

"object": normalizeURL("src"),

"embed": normalizeURL("src"),

"audio": normalizeURL("src"),

"video": normalizeURL("src"),

// remove Node

"script": removeNode,

"base": removeNode,

// style css

"style": proxyCSSURL,

}

xpathElements string = createXPath()

includeScripts = []string{

"/js/analytics.js",

}

cssURLPattern = regexp.MustCompile(`url\s*\(\s*(?:["']?)([^\)]+?)(?:["']?)\s*\)`)

)

Html filtering

func filterHTML(t *WebTransport, body, encoding []byte, baseURL *url.URL) ([]byte, error) {

if encoding == nil {

encoding = html.DefaultEncodingBytes

}

doc, err := html.Parse(body, encoding, []byte(baseURL.String()),

html.DefaultParseOption, encoding)

if err != nil {

return []byte(""), err

}

defer doc.Free()

c := &context{t: t, baseURL: baseURL}

nodes, err := doc.Root().Search(xpathElements)

if err == nil {

for _, node := range(nodes) {

name := strings.ToLower(node.Name())

nodeElements[name](c, node)

}

}

// add scripts

addScripts(doc)

return []byte(doc.String()), nil

}

CSS Filtering:

func filterCSS(t *WebTransport, body, encoding []byte, baseURL *url.URL) ([]byte, error) {

cssReplaceURL := func (m []byte) []byte {

cssURL := string(cssURLPattern.FindSubmatch(m)[1])

srcURL, err := replaceURL(t, baseURL, cssURL)

if err == nil {

return []byte("url(" + srcURL.String() + ")")

}

return m

}

return cssURLPattern.ReplaceAllFunc(body, cssReplaceURL), nil

}

They also filtered out the cookie, the X-Frame-Options header, and set the cache for 24 hours.

It was decided to install the web proxy on heroku, also thought about openshift, but as far as I know there is a limit on the number of connections.

The solution on heroku + Go completely suited me and there are no problems with quotas, no one complains (compared to appengine), since March, as they say, the flight is normal.

Expansion

The extension works well, added zoom buttons. There was still that story with zooming, I immediately went the wrong way and applied the CSS transform scale to the iframe, every time I had to change the zoom or the main window, calculate the iframe size according to the new one. But then a fairly simple solution came up:

zoom: %;

This zoom applies to the web proxy page to document.documentElement. But I did not stop there, I did not have enough highlight in the preview of what I was looking for. The keyword highlighting algorithm was chosen simple - search for a string in a substring. This worked well for simple cases, but when words with different endings appear in the text, the search did not work or even worse, the short substrings were found in the wrong words, well, you yourself understand how such a search works.

Fulltext search highlight

The solution was found in the form of a Porter Stemmer, namely its implementation in the form of a snowball , the xregexp library and the Unicode addon were also used to create a token that was transmitted to the Stemmer. I did not write an algorithm for determining the language for the stemmer, but applied the stemmer for different languages to the token and, if successful, the stemmer returned true.

Instruments

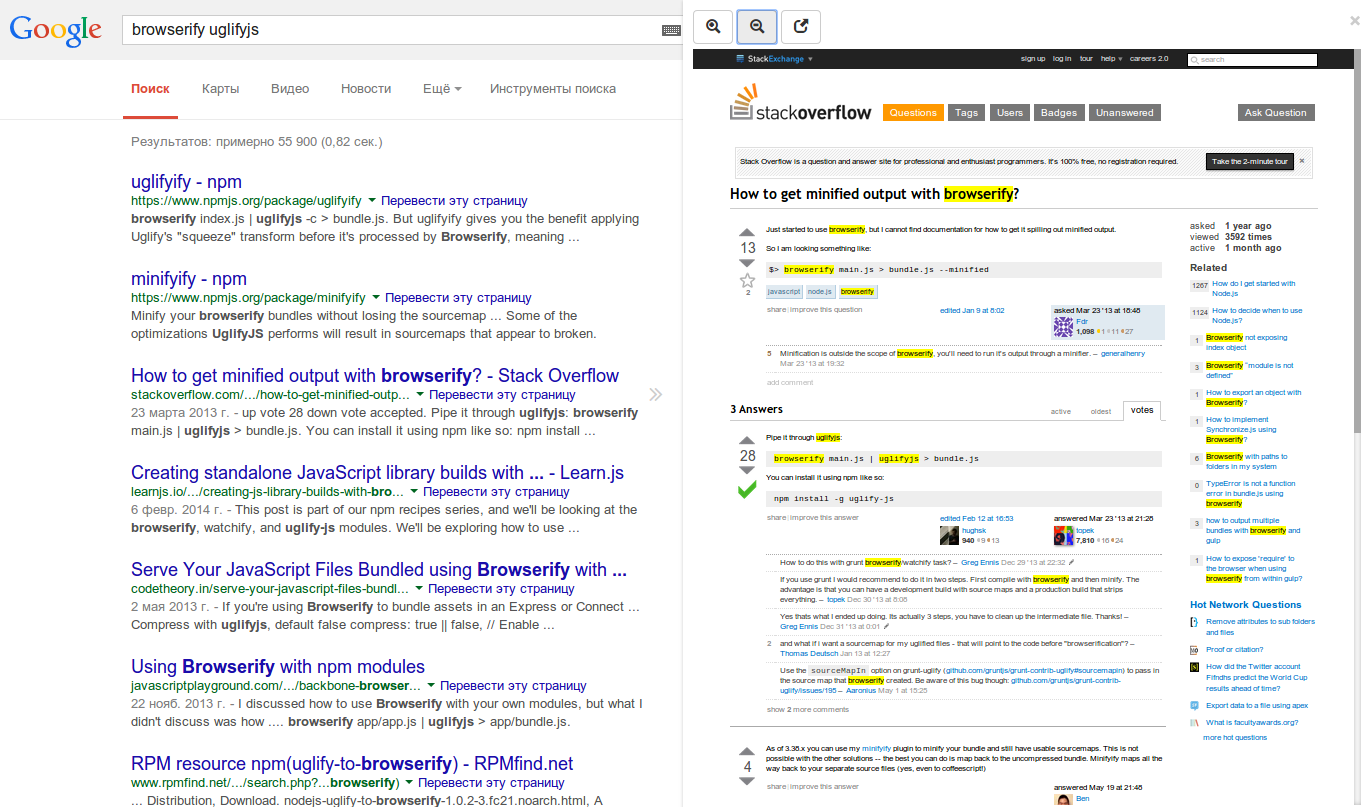

I chose coffeescript as the language, I like it like python, but it has its drawbacks. Gulp is responsible for building the dev and production versions. Very simple builder, a large number of plugins. Not a small part of the time I spent studying and finishing gulpfile.coffee, but this is justified by the speed of assembly. Also involved in the assembly browserify.

PS ah yes! I almost forgot about the extension link .

Questions, suggestions?