How the “magic” of error correction codes, already more than 50 years old, can speed up flash memory

An Error Correction Code (ECC) is added to the transmitted signal and makes it possible not only to detect transmission errors but also to correct them (as the name suggests), without re-requesting the data from the transmitter. This approach allows data to be transmitted at a constant rate, which matters in many cases. For example, when you watch digital television, staring at a frozen picture while the receiver waits for repeated data requests would be no fun at all.

In 1948, Claude Shannon published his famous work on the transmission of information, which, among other things, formulated a theorem on transmitting information over a noisy channel. After its publication, many error correction algorithms were developed, all at the cost of some increase in the amount of transmitted data. One of the most common families of algorithms is based on the low-density parity-check code (LDPC code), which has now become widespread thanks to its ease of implementation.

Claude Shannon

LDPC was first introduced to the world at MIT by Robert Gray Gallager, an outstanding communications specialist. That was in 1960, and LDPC was ahead of its time. The vacuum-tube computers common at the time rarely had enough power to work effectively with LDPC. A computer capable of processing such data in real time occupied almost 200 square meters in those years, which automatically made every LDPC-based algorithm uneconomical. So for almost 40 years simpler codes were used, and LDPC remained more of an elegant theoretical construction.

Robert Gallager

In the mid-90s, engineers working on algorithms for satellite digital television transmission “dusted off” LDPC and began to use it, since computers by then had become both more powerful and smaller. By the early 2000s LDPC was becoming widespread, because it corrects errors very efficiently in high-speed data transmission under heavy noise (for example, strong electromagnetic interference). The spread was also helped by the emergence of specialized systems on chips (SoCs) used in WiFi equipment, hard drives, SCSI controllers, and so on; such SoCs are optimized for their tasks, and LDPC calculations pose no problem for them at all. In 2003, LDPC supplanted turbo codes and became part of the DVB-S2 standard for digital satellite data transmission. A similar replacement took place in the DVB-T2 standard for digital terrestrial television.

It is worth noting that very different solutions are built on LDPC; there is no single “correct” reference implementation. LDPC-based solutions are often incompatible with one another, and code developed for, say, satellite television cannot simply be ported to hard drives. Combining the efforts of engineers from different fields usually brings many advantages, and LDPC “in general” is not a patented technology, but assorted know-how, proprietary technologies, and corporate interests get in the way. Most often, such cooperation is possible only within a single company. One example is LSI's HDD read channel solution called TrueStore®, which the company has been offering for 3 years now. Following the acquisition of SandForce,

Here, of course, most readers will ask: how do LDPC algorithms differ from one another? Most LDPC solutions start out as hard-decision decoders: the decoder works with a strictly limited set of values (most often 0 and 1) and applies the error correction code at the slightest deviation from the norm. This approach does, of course, detect and correct errors in the transmitted data effectively, but when the error level is high, which sometimes happens with SSDs, such algorithms can no longer cope. As you remember from our previous articles, any flash memory accumulates more and more errors as it is used. This inevitable process has to be taken into account when developing error correction algorithms for SSDs. So what should be done as the number of errors grows?
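To make the hard-decision idea more concrete, here is a minimal sketch of a bit-flipping decoder in Python. It is only an illustration under stated assumptions, not the decoder of any real product: the 4×6 parity-check matrix `H` is a toy example invented for this sketch (real LDPC matrices have thousands of columns), and the names `syndrome` and `bit_flip_decode` are hypothetical. The decoder sees only 0s and 1s, counts how many failed parity checks each bit takes part in, and flips the most suspicious bits.

```python
# Toy parity-check matrix (an assumption for illustration): each column is a
# bit, each row is a parity check. Every bit takes part in exactly two checks,
# and no two bits share both of their checks.
H = [
    [1, 1, 1, 0, 0, 0],
    [1, 0, 0, 1, 1, 0],
    [0, 1, 0, 1, 0, 1],
    [0, 0, 1, 0, 1, 1],
]

def syndrome(H, word):
    """Return one parity bit per check; all zeros means every check passes."""
    return [sum(h & b for h, b in zip(row, word)) % 2 for row in H]

def bit_flip_decode(H, word, max_iters=10):
    """Hard-decision bit flipping: the input is plain 0/1 values only."""
    word = list(word)
    for _ in range(max_iters):
        s = syndrome(H, word)
        if not any(s):
            return word, True  # all parity checks satisfied
        # For every bit, count the failed checks it participates in.
        votes = [sum(s[r] for r in range(len(H)) if H[r][c])
                 for c in range(len(word))]
        worst = max(votes)
        # Flip the bit(s) involved in the largest number of failed checks.
        word = [b ^ 1 if v == worst else b for b, v in zip(word, votes)]
    return word, not any(syndrome(H, word))

codeword = [1, 1, 0, 1, 0, 0]   # satisfies all four checks
received = [1, 1, 0, 0, 0, 0]   # the same word with bit 3 flipped
decoded, ok = bit_flip_decode(H, received)
print(decoded, ok)
```

With this toy matrix any single flipped bit is repaired. With two or more wrong bits the decoder may fail or settle on a different valid codeword, which is the kind of limit hard-decision decoding runs into as the error level grows.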

This is where soft-decision LDPC comes in, which is essentially “more analog”. Such algorithms “look” deeper than hard-decision ones and have a wider range of capabilities. The simplest example is an attempt to read the data again using a different voltage, just as we often ask the person we are talking to to repeat a phrase louder. To continue the human-conversation metaphor, here is an example of a more complex correction algorithm. Imagine that you are speaking English with someone who has a strong accent. Here the accent acts as the interference. The other person says a long phrase that you do not understand. Soft-decision LDPC then plays the role of several short leading questions that you ask to clarify the meaning of the whole phrase you did not understand at first. Such soft-decision solutions often use sophisticated statistical algorithms to eliminate false positives. In general, as you have already gathered, they are noticeably harder to implement, but they usually show much better results than hard-decision ones.
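The soft-decision idea can be sketched in a few lines of Python as well. Everything specific here is an assumption made for illustration: the same toy 4×6 matrix `H`, three reads of the same cells at a low, nominal, and high reference voltage, and a made-up linear mapping from "how many reads returned 1" to a confidence value (a log-likelihood ratio, LLR, where a positive value means "probably 0"). Real controllers use calibrated tables and far larger codes. The decoder is a basic min-sum message-passing loop, one common way to do soft-decision LDPC decoding, not necessarily the one used in any particular product.

```python
H = [  # toy parity-check matrix, assumed for illustration only
    [1, 1, 1, 0, 0, 0],
    [1, 0, 0, 1, 1, 0],
    [0, 1, 0, 1, 0, 1],
    [0, 0, 1, 0, 1, 1],
]

def llr_from_reads(reads, scale=6.0):
    """Turn several 0/1 reads of each cell into a confidence per bit.
    Positive = probably 0, negative = probably 1 (hypothetical mapping)."""
    n = len(reads)
    return [scale * (n - 2 * sum(bits)) / n for bits in zip(*reads)]

def min_sum_decode(H, llr, max_iters=20):
    rows, cols = len(H), len(llr)
    check_bits = [[c for c in range(cols) if H[r][c]] for r in range(rows)]
    # Messages from checks to bits, initially neutral.
    c2b = {(r, c): 0.0 for r in range(rows) for c in check_bits[r]}
    hard = [1 if v < 0 else 0 for v in llr]
    for _ in range(max_iters):
        # Current belief per bit: channel value plus all incoming messages.
        total = [llr[c] + sum(c2b[(r, c)] for r in range(rows) if H[r][c])
                 for c in range(cols)]
        hard = [1 if t < 0 else 0 for t in total]
        if all(sum(hard[c] for c in bits) % 2 == 0 for bits in check_bits):
            return hard, True
        new = {}
        for r, bits in enumerate(check_bits):
            for c in bits:
                # What the other bits of this check say, excluding its own
                # previous message to them.
                others = [total[o] - c2b[(r, o)] for o in bits if o != c]
                sign = -1.0 if sum(v < 0 for v in others) % 2 else 1.0
                new[(r, c)] = sign * min(abs(v) for v in others)
        c2b = new
    return hard, False

stored = [1, 1, 0, 1, 0, 0]
reads = [
    [0, 1, 0, 1, 0, 0],  # low reference voltage
    [0, 1, 0, 1, 0, 1],  # nominal reference voltage (the hard-decision read)
    [1, 1, 0, 1, 0, 1],  # high reference voltage
]
llr = llr_from_reads(reads)
decoded, ok = min_sum_decode(H, llr)
print(llr)
print(decoded, ok)
```

In this example the nominal read alone has two wrong bits (the first and the last). The extra reads show that exactly those two bits are unreliable, and that information lets the soft decoder recover the stored word.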

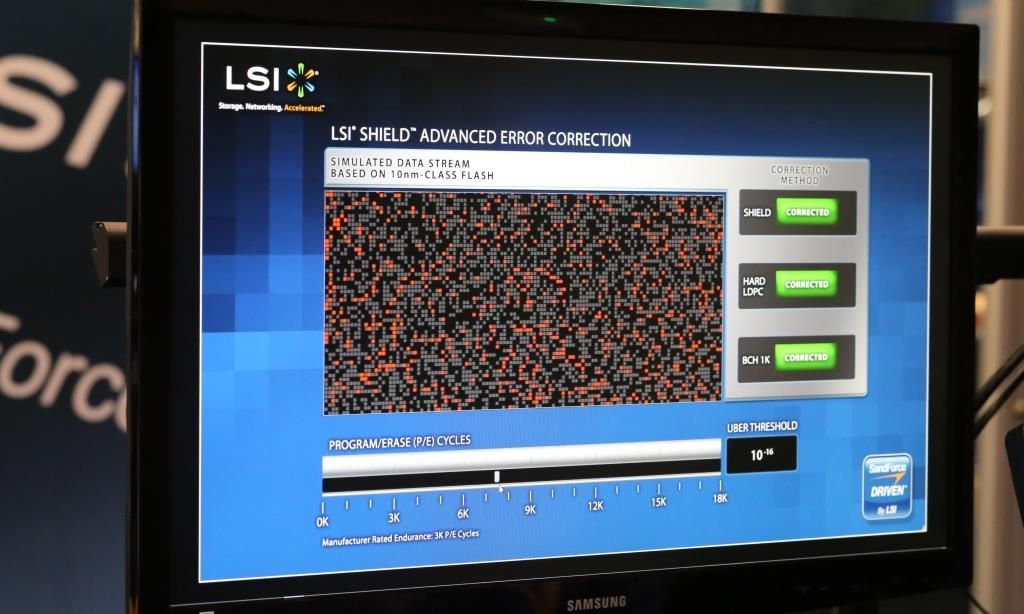

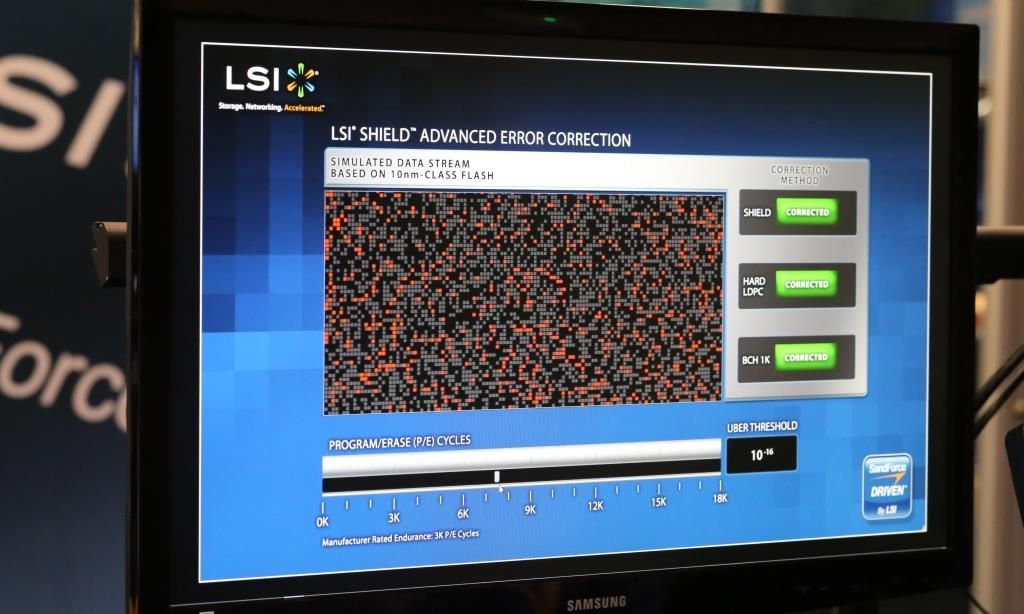

At the 2013 Flash Memory Summit in Santa Clara, California, LSI introduced its SHIELD advanced error correction technology. Combining soft-decision and hard-decision approaches, DSP SHIELD offers a number of unique optimizations for future flash technologies. For example, the Adaptive Code Rate technology changes the amount of space allocated for ECC so that it takes up as little as possible initially and grows dynamically as the number of errors inevitably increases, as is typical for SSDs.
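The general idea of an adaptive code rate can be illustrated with a small Python sketch. This is not LSI's implementation: the tier table, the 1024-byte data size, and the names `pick_parity_bytes` and `code_rate` are all invented for this example. The code rate is the share of a codeword that carries user data, so a fresh drive can run at a high rate with little parity, and the parity share grows as the observed raw bit error rate goes up.

```python
DATA_BYTES = 1024  # user data per codeword (assumed value)

# (highest raw bit error rate the tier is meant for, parity bytes per codeword)
# All numbers below are made up for illustration.
TIERS = [
    (1e-4, 64),
    (1e-3, 128),
    (5e-3, 256),
]

def pick_parity_bytes(raw_bit_error_rate):
    """Choose the smallest parity size whose tier covers the observed error rate."""
    for limit, parity in TIERS:
        if raw_bit_error_rate <= limit:
            return parity
    return TIERS[-1][1]  # beyond the table: use the strongest tier available

def code_rate(parity_bytes):
    """Share of the codeword that is user data: k / n."""
    return DATA_BYTES / (DATA_BYTES + parity_bytes)

for rber in (5e-5, 5e-4, 2e-3):
    parity = pick_parity_bytes(rber)
    print(f"error rate {rber:g}: {parity} parity bytes, code rate {code_rate(parity):.3f}")
```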

As you can see, different LDPC solutions work very differently and offer different functions and capabilities, on which the quality of the final product largely depends.
