About ratings: who's who in the digital analytics market?

Web development companies like to compare how successful they are. Besides the primary indicators, such as the number of well-known clients and the size of the portfolio, secondary ones have been invented: ratings.

Over time it came down to counting followers and likes, a joke that many took too seriously. There are, however, also traditional ratings based on completed projects, which everyone in the industry knows about.

By the way, Tagline, one of the largest Russian ratings of web development studios and Internet agencies, is launching a vote today.

Since the topic is timely, here is a little research. In order:

Which ratings exist on the Runet?

Offhand I recall:

- Tagline. It has been held since

- Runet Rating (CMS Magazine). It has been held since

- 1C-Bitrix. Internal, for Bitrix partners; no comment.

- UMI.CMS. Likewise, for UMI partners.

- NetCat. Likewise, for NetCat partners.

- Rating of SEO companies (CMS Magazine).

- SEO News rating.

These are only the nationwide ones. There are also regional ratings, and many more of them; you can find them in the search results.

- Ruward. A self-declared "rating of ratings" that combines 52 others.

The study leaves out the internal partner ratings of commercial CMS vendors. It also leaves out overly specialized ratings (for example, for developers of Flash sites) and ratings with only about 20 participants, which are not representative.

So let's go.

Study

We narrow the field to ratings of web developers. The final list:

- Tagline;

- Runet Rating;

- Rating of the East European Guild of Web Developers;

- Wwwrating rating;

- BestWebDevs rating.

At the heart of any rating are its criteria. Clearly, the criteria for a web studio should differ from those for an SEO agency. This, by the way, is the first dig at the "composite ratings", more on which later.

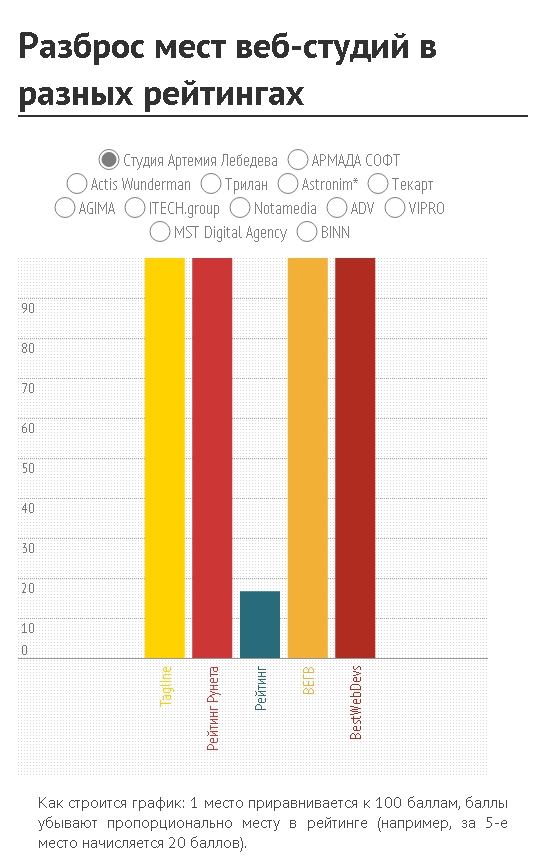

Hypothesis: success scores for companies in the same field should be very close across different ratings. The spread of their places should therefore be small.

We take the top twenty of the Runet Rating as a basis. The first piece of news (and, on the whole, an expected one): only 15 of the 20 companies appear in more than one rating. This distorts the picture somewhat, but the most interesting part is still to come. Click on the chart.

The effect is as if a grenade had been thrown into the Runet rating system: the opinions vary far too much. What a customer choosing a contractor for the first time is supposed to do is not at all clear.
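To make "spread of places" concrete, here is a minimal Python sketch of how such a comparison could be computed. The studio names and all the numbers are invented for illustration; they are not the data behind the chart, and the helper `place_spread` is a hypothetical name, not part of any rating's methodology.

```python
from statistics import mean

# Hypothetical places of three made-up studios in three ratings.
# None means the studio does not appear in that rating.
places = {
    "Studio A": {"Tagline": 1, "Runet Rating": 2, "Wwwrating": 40},
    "Studio B": {"Tagline": 12, "Runet Rating": 3, "Wwwrating": None},
    "Studio C": {"Tagline": None, "Runet Rating": 5, "Wwwrating": None},
}


def place_spread(ranks):
    """Return (best, worst, spread) over the ratings a studio appears in,
    or None if it appears in fewer than two ratings."""
    present = [r for r in ranks.values() if r is not None]
    if len(present) < 2:
        return None  # nothing to compare against
    return min(present), max(present), max(present) - min(present)


spreads = {name: place_spread(ranks) for name, ranks in places.items()}

# Only studios present in at least two ratings can be compared.
comparable = {name: s for name, s in spreads.items() if s is not None}
mean_spread = mean(s[2] for s in comparable.values())

for name, s in spreads.items():
    print(name, s if s is not None else "appears in fewer than two ratings")
print("mean spread:", mean_spread)
```

If the hypothesis held, the per-studio spread would stay within a few places. A studio that appears in only one rating, like the made-up "Studio C", cannot be compared at all, which is the same gap the 15-out-of-20 figure points to.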

Let's get to the reasons. That is, to the evaluation criteria.

First, three of the five ratings above use a closed evaluation system. It is physically impossible to criticize them for anything except that very opacity.

Second, the "transparent" evaluation systems of Tagline and the Runet Rating are not actually that transparent. A detailed methodology is available, for example, on the site of the former.

Analysis

But opacity is not the worst problem. Suppose we can trust the analytics departments of the rating compilers, and assume that the "equalizing" coefficients provide the necessary objectivity.

Reason number two why ratings fail: there are too many of them. While some announce themselves loudly in the media and attract as many participants as possible through their channels, others doze at the bottom of the sea like Great Cthulhu and then suddenly publish their results. It is the "first come, first served" principle in action. A studio physically cannot (and does not want to) keep up with all of them. Meanwhile, the spread grows.

Voluntary participation in ratings leads to incomplete information. Incompleteness leads to an unreliable overall picture. Unreliability leads to customer confusion. Confusion leads to the dark side.

Another reason is the desire to cover everything. Narrowly targeted ratings are often published quietly, while most of the attention (and PR effort) goes into promoting "general composite ratings", and it is over places in these that the battle later breaks out. In fact, such a rating is a hodgepodge of contractors with completely different specializations. The client naturally goes to the most popular rating and gets a distorted picture.

The fanaticism of the participants may be yet another reason. Everyone understands that, once you know the criteria, you can "optimize the metric" well enough to reach the top. But what is the point? All you get is an inflated ego and a larger stream of potential customers, who may be sobered by the level of the company's portfolio: it did not grow while you were busy optimizing metrics.

Simple conclusions

- The probability that a project is completed successfully depends only weakly on the contractor's place in a rating.

- It makes sense to use only narrowly targeted ratings, to draw up a list of contractors you could entrust with development, and then choose among them. Aggregate ratings that lump together web studios, online agencies, and design studios are worthless.

- The most reliable selection criteria are still recommendations from colleagues and the quality of the studio's work. The Runet Rating itself once wrote about this, by the way, backing it up with its own research.

- The more ratings there are, the less valuable it is to participate in them. The fewer participants there are, the less reliable the ratings themselves become.

Those are the conclusions. Let's discuss them in the comments.