Artificial Intelligence - Concept

In an earlier post I argued that creating an artificial intelligence (IR) is impossible (here). Without abandoning that opinion, I nevertheless want to consider the working principles of this thing that cannot be created. Which path should humanity take in order to deceive, if not nature, then at least itself, into believing that the problem of creating IR has been safely solved? In my opinion, the following.

I will make a reservation that everything expressed below is:

a) an OPINION,

b) a PRIVATE opinion,

c) the private opinion of a DILETTANTE (a specialist in another field who came upon the problem of IR while solving his own narrowly professional tasks).

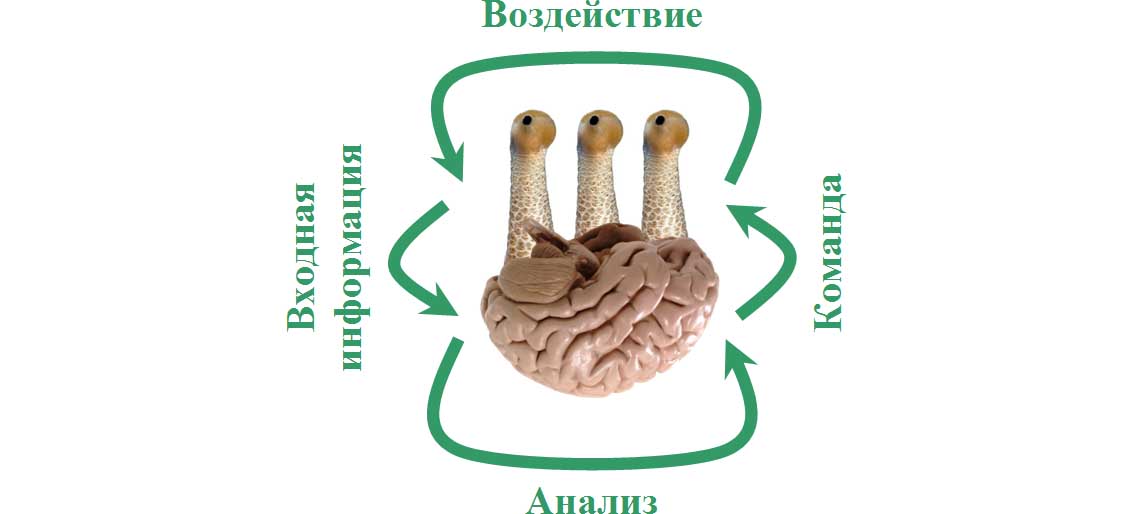

About the title image: what protrudes from the brain alongside the mechanical devices is not, as you might think, a rhinoceros's genitals but the eyes of a snail. They symbolize the sensors that IR has.

***

I'll start with something completely trivial.

If the task is for the artificial being to react, and react intelligently, to external signals, it must have devices that perceive those signals (which no one has ever doubted). Two options are possible here:

1) an input device for “ready-made” information - simply put, a keyboard or something that replaces it,

2) sensors that perceive external information.

The difference between the options is dramatic:

• in one case, the IR operates with information that is entered into it by a person,

• in another case, the information comes from the environment.

The concept of creating IR rests on the idea that an artificial person should be indistinguishable from a natural, living one, and therefore on the assumption that a natural person is also, in some sense, a mechanical being whose design can be reproduced on a different element base. But if so, entering pre-prepared information is categorically unsuitable. A natural person draws knowledge from an environment that is diverse and unpredictable, so something that mimics a person can only be created by connecting the IR to the same source of information that the natural person uses. If we limit the IR's environment, for example to a game of tic-tac-toe, we get a tic-tac-toe player, but by no means a full-fledged mind in the sense we want: a mind that can argue with a human on every intellectual subject.

You can spend nights at the computer drawing up algorithms or entering input data for as long as you like, but you will never fill the artificial brain with content of the same quality and complexity that your own has.

What is required is different: a calculator with sensors connected to it that respond to the environment. Only then is there hope that IR will perceive you as a rational being and speak with you on equal terms.

What should the sensors be? That is worth considering.

When humanity sets out to invent IR, is it looking for an equal interlocutor or for a being that surpasses it in intellect? If the latter, then a wide variety of sensors, including ones a natural person does not possess, is strongly encouraged. True, in that case IR will have a different type of thinking from a natural person. I do not mean the element base the brain is made of, natural or artificial, but the type of thinking, which depends directly on what the subject perceives. Everyone remembers the parable in which three blind men are led to an elephant and asked to describe it: depending on which part of the elephant's body (leg, tail or tusk) each blind man touched, the descriptions differ.

The conclusion suggests itself: if we want IR to speak the same language as we do, its perception of the world should be similar to the human one.

***

Okay, suppose the ideal mix of sensor types has been found, especially since the choice is limited by technical capabilities and is not very wide. What next?

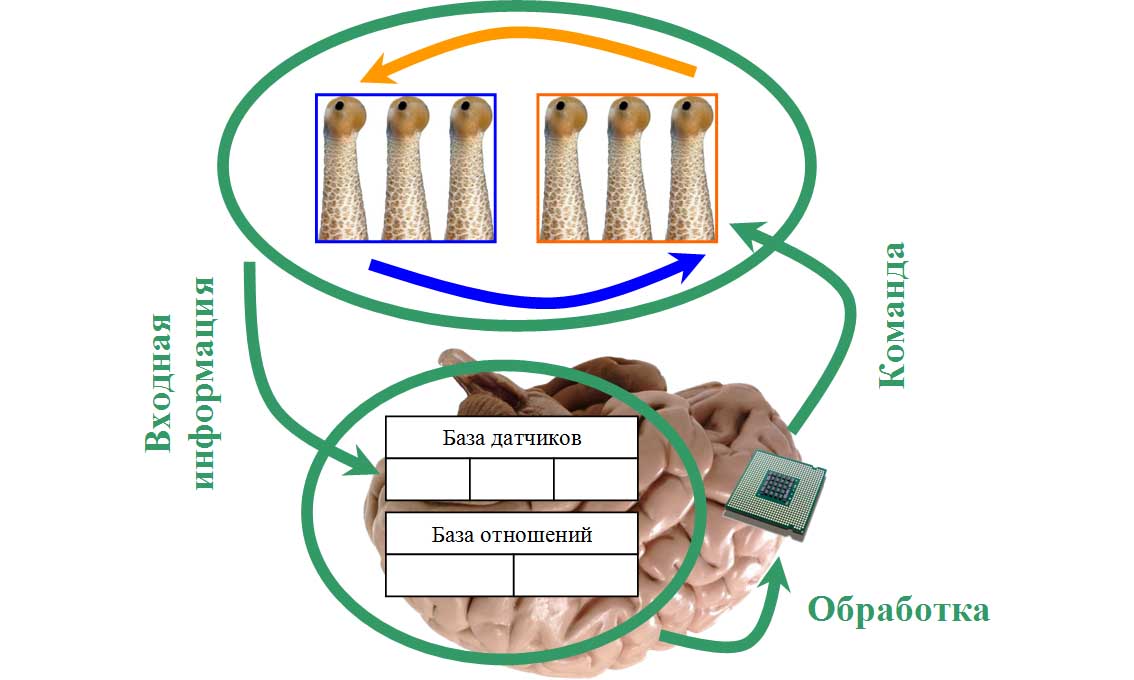

How should IR be arranged? Around feedback, it goes without saying: it must take into account the effect of its output on the environment, and thereby foresee and manipulate that environment, because what distinguishes a person from inanimate nature is the ability to foresee the consequences of his actions. IR should be an active being that influences the world around it and analyzes the consequences of its influence. No one has ever doubted this, actually.

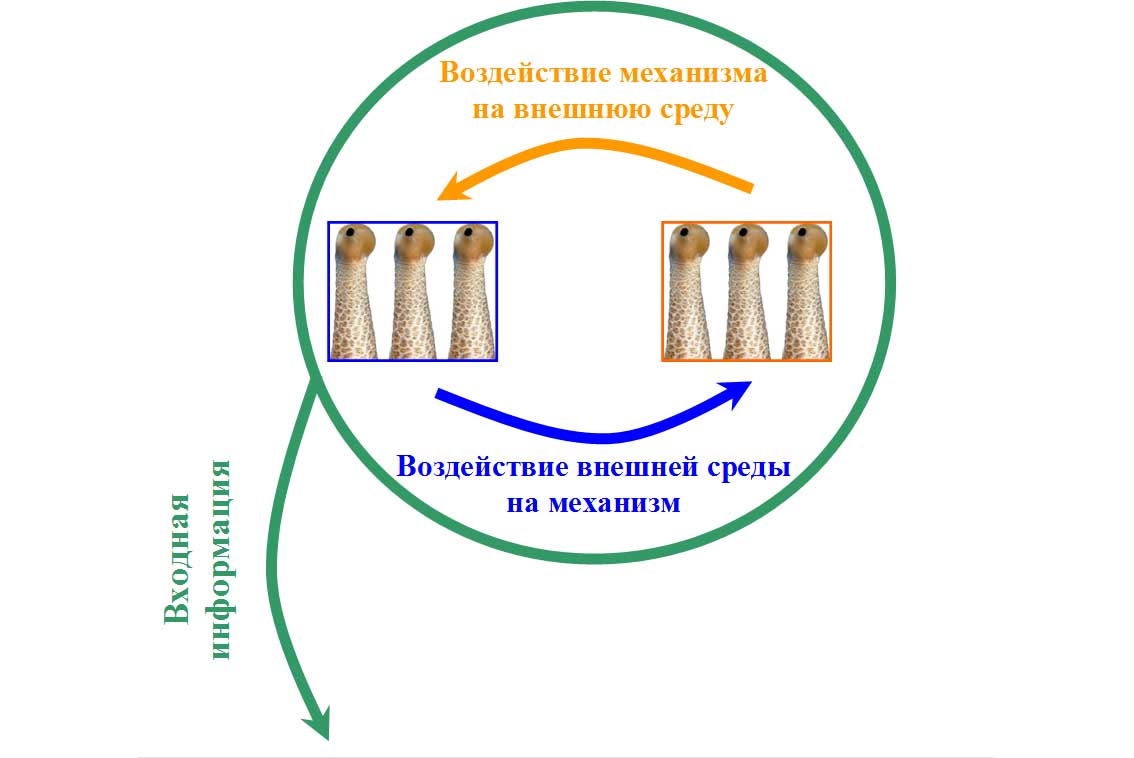

However, feedback cannot be implemented the way it is shown above.

Why? Because it is absent from the scheme presented: here feedback exists only in the mind of an observer who stands outside the system being built. The calculator itself, the IR, cannot interpret its commands as changing its external environment: it has no grounds for doing so. There is input information, to which the calculator responds according to its built-in algorithm; each subsequent reaction (calculator command) is, in effect, predetermined by a new portion of information. The algorithm can be arbitrarily complex, but one cannot bring oneself to call such a thing a mind.

If we proceed from the premise that man is built as a mechanism, albeit a biological one, and we want to reproduce him artificially on a different element base, we must make sure that the calculator perceives not only the external environment but also itself (its own “I”) within that environment. This can be implemented at the sensor level. That is how it works in a person: some of his senses (sight, hearing, smell) are responsible for the world of objects, others (touch, taste) for the subject. This structural feature allows a person to perceive himself as an actor, a subject acting in the surrounding world.

This means that when creating IR, the sensors should be divided into:

• showing the state of the external environment;

• showing the state of the mechanism itself, the one that claims to be intelligent.

Since a person stands outside the thing he has created, it is not at all difficult for him to tell the former from the latter. For example, one sensor measures the temperature of the environment, and a second sensor measures the temperature of the computer.
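The division of sensors into two kinds can be sketched in a few lines. This is only an illustration: the names `Domain`, `Sensor` and `split_by_domain` are hypothetical and are not part of any existing design.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    """Which side of the boundary a sensor reports on (hypothetical labels)."""
    ENVIRONMENT = "environment"
    MECHANISM = "mechanism"


@dataclass(frozen=True)
class Sensor:
    name: str
    domain: Domain


def split_by_domain(sensors):
    """Separate sensors of the external environment from sensors of the mechanism."""
    external = [s for s in sensors if s.domain is Domain.ENVIRONMENT]
    internal = [s for s in sensors if s.domain is Domain.MECHANISM]
    return external, internal


# The two temperature sensors from the example in the text.
sensors = [
    Sensor("ambient_temperature", Domain.ENVIRONMENT),
    Sensor("computer_temperature", Domain.MECHANISM),
]
external, internal = split_by_domain(sensors)
```

Here the label is assigned by the designer from outside, which is exactly the position of the external observer described above.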

But a person is active not only in the realm of consciousness but also in the realm of matter, so merely monitoring the state of the artificial system is not enough. If the sensors of the second type show a state of the mechanism that does not correlate with the state of the environment, no IR will result, if only because this is unlike a natural person.

A natural person is an actor, a subject in an environment of objects, and in this sense he is himself an object:

• on the one hand, the environment affects a person,

• on the other hand, the person himself has the ability to influence the environment.

For this reason IR, if it wants to be intelligent, of course, must influence the environment not only informationally but also quite materially, as an object.

I will hardly cause a sensation by declaring that an intelligent mechanism requires:

a) a manipulator (in a person, the hands perform this function), and

b) a vehicle (legs).

Hands and feet are exactly the means by which a natural person acts on the environment. Accordingly, IR without hands would be as helpless as without legs, and without legs as helpless as without hands (a joke): it would receive information from the environment different from what a person receives, and would therefore be forced to acquire a type of thinking different from a person's. And we agreed that the same type of thinking is desirable, so that IR can communicate with a natural person in one language.

In short, we attach a vehicle and a manipulator to our IR, and we must place on them at least a few sensors that respond to changes in position: not of the environment, but of the vehicle and manipulator themselves.

This raises questions that it is desirable to resolve.

1. Should the sensors that record the internal state of the mechanism be of the same type as the sensors that record the state of the environment?

Probably not, because in a person they do not coincide: as already said, some human senses (sight, hearing, smell) are responsible for the object side, others (touch, taste) for the subject side. Still, it can be assumed that this is not a prerequisite. It is easy to imagine (as I did a few paragraphs above) two temperature sensors, the first measuring the temperature of the computer and the second the ambient temperature. What matters is only that their readings can differ and that the calculator treats each sensor as relating either to the external environment or to the mechanism itself. There can be no middle ground: a sensor cannot characterize both object and subject at once.

2. How many sensors should there be?

Ideally they should correspond to the five human senses, although the number will still have to be determined by existing technical capabilities. The same goes for the number of sensors serving the same sense (after all, you can install one “external” temperature sensor, or two, three or more).

3. Should sensors be installed only on the manipulators, or are others acceptable that characterize an internal state unrelated to the environment?

Sensors on the manipulators are mandatory, and their role is dual. What they register is always objective for an external observer, but for the IR itself it is by turns either objective or subjective.

Imagine that the calculator gives a command to move forward. The mechanism moves forward, but the environment is such that the mechanism is thrown back. The sensor that records movement gives two consecutive readings:

• forward movement corresponding to the command issued by the calculator: this is a subjective, “volitional” change;

• being thrown back with no command from the calculator: this is a reaction of the environment, and the calculator should interpret it as such.

In other words, a change in a reading that directly follows a calculator command is interpreted as the result of that command; a change in a reading without a calculator command is interpreted as a change in the environment. This differs from the sensors that measure the state of the environment: they record that state regardless of the calculator's commands.
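The attribution rule just described can be written down as a minimal sketch. The function name `attribute_change` and the string labels are assumptions made for illustration; the rule itself is simply "change after a command is volitional, change without a command comes from the environment".

```python
def attribute_change(previous, current, command_issued):
    """Label a change in a manipulator/vehicle sensor reading.

    Returns None when the reading did not change, "command" when the change
    directly follows a calculator command, and "environment" otherwise.
    """
    if current == previous:
        return None
    return "command" if command_issued else "environment"


# The example from the text: a commanded step forward, then being thrown back.
positions = [0, 1, 0]        # consecutive readings of the movement sensor
commands = [True, False]     # whether a command preceded each change
labels = [
    attribute_change(before, after, issued)
    for before, after, issued in zip(positions, positions[1:], commands)
]
```

A real mechanism would also have to handle a commanded move that the environment partly cancels; this sketch deliberately ignores that case.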

Sensors characterizing an internal state of the mechanism that is unrelated to the environment are possible, but they will not be able to take part in the calculations, i.e. in the process of artificial thinking.

Suppose a sensor shows the remaining energy supply. So what? If the mechanism is not supposed to react to this in any way, if the reading matters only to the creator of IR observing the mechanism from outside, then the reading is useless for analyzing the situation. But if the mechanism extracts energy from the environment on its own, then the energy reserve reading is, of course, among the most significant. The more closely the external environment correlates with the readings of the sensors responsible for the state of the mechanism, the deeper the analysis the calculator can carry out and the higher the quality of the resulting intelligence.

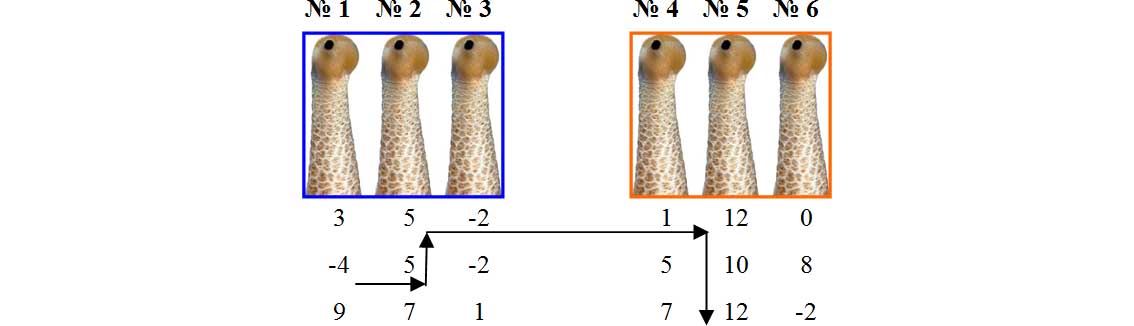

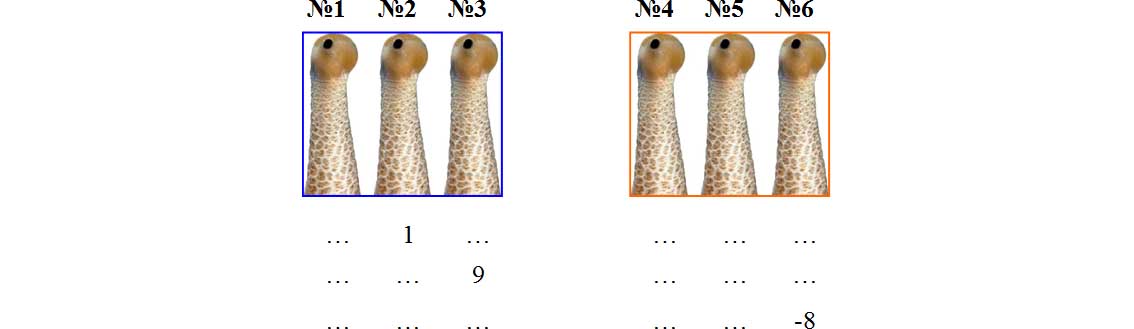

Suppose there are three sensors of each type: three showing the state of the external environment and three showing the state of the mechanism.

The calculator sends a signal to the manipulator and the vehicle; the sensors record their change; that change alters the environment, and the environment's response to the mechanism is recorded as well.

This is real feedback that can be analyzed as such.

***

And what, exactly, is subject to analysis? The sensor readings, of course...

The signals received from the sensors must be analyzed, and for that they are broken into elementary meaningful blocks that relate to each other in a certain way. Any calculator is arranged like this; the only difference is that in one case (analog sensor readings) it “decomposes” the information stream into individual meaningful elements itself, and in the other (discrete readings, each an elementary value) it receives them from the sensors already, as they say, sorted onto shelves. But that distinction exists for an external observer; for the calculator itself, information is always discrete and structured. This discrete and structured information is what is commonly called a database.

I am trying to show that any information can be represented only as a sequence of discrete elementary signals (values) from a certain number of sensors.

The values from different sensors are not comparable (unless, of course, the sensors are of the same type), but for clarity we will treat them all as numerical. In that case, what IR perceives is a database of numerical values to be processed.

The same matrix, in short ...

If you object that a person perceives the world in analog fashion, I will have to object as firmly as I can. Nothing of the kind! The visual picture we observe is not a two-dimensional image at all but... a point, i.e. a single value (something meaningful). Everything else that we perceive as a holistic visual image is no more than an appendage to the point actually observed, partly intellectual and partly due to the internal structure of our information system.

Further explanation would lead us far from IR, so let me return to the subject.

So, the calculator of any intelligent creature, the human brain included, works with a database consisting of elementary sensor readings.

The database is constantly refreshed because its volume is limited: data that has already been analyzed and is outdated is erased to make room for new data. The proof is that we cannot recall every moment of our life: data is erased from memory as unnecessary, leaving room only for truly important and unique events. It sounds trite, but reality in general is terribly banal; that seems to be its main property.
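A database of limited volume in which old records give way to new ones can be sketched with a fixed-size buffer. The class name `SensorDatabase` and its methods are hypothetical, and the sketch is deliberately cruder than the text: it drops the oldest record regardless of importance, whereas the text suggests that important and unique events are kept.

```python
from collections import deque


class SensorDatabase:
    """Fixed-capacity store of sensor records; the oldest record is erased first."""

    def __init__(self, capacity):
        # deque(maxlen=...) silently discards the oldest item when full.
        self._records = deque(maxlen=capacity)

    def record(self, readings):
        """Append one record: a tuple with one value per sensor."""
        self._records.append(tuple(readings))

    def records(self):
        return list(self._records)


db = SensorDatabase(capacity=3)
for readings in [(1, 2), (3, 4), (5, 6), (7, 8)]:
    db.record(readings)

# The first record has been erased to make room for the fourth.
stored = db.records()
```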

***

Next comes the problem of self-learning.

Man is explicitly a self-learning system, so IR must be one too. Personally, I see two solutions:

1) self-learning can be achieved by making the algorithms used by the mind (in a sense, the mind itself) more complex;

2) or by moving to a qualitatively new level of abstraction while the algorithms remain unchanged.

The first method is hardly applicable. We reject it as impractical and, by and large, unworkable on theoretical grounds: where has anyone seen a computer program that writes itself? Up to a limit set by the developer this is conceivable, but only up to that clearly defined limit, beyond which any development (for IR, any self-learning) stops completely.

That leaves the second option, in which the algorithm built into the system stays unchanged and self-learning occurs through the steadily growing complexity of the information being processed: in our case, through a constant transition from a lower level of abstraction to a higher one. That is how the human brain works, as far as I can tell.

What is the human world (assuming, of course, that a person is a mechanism that can be reproduced artificially)? Pieces of the beyond, structured into a database with which a natural calculator, the human brain, works. This database of the environment has an internal structure defined by its identifiers. But there is something else besides: the relations between the significant elements of the system, or identifiers. These relations follow a binary principle: similar or not similar. The green of one object is similar to the green of another and not similar to the red of a third, and so on. Since both the pieces of the beyond and the identifiers that make up the system's internal structure are values, we can say that any relation is the result of comparing two values. By comparing one value with another you can “walk” through the database from value to value and from row to row. Roughly like this.

We go from -4 to 5 because both values belong to one record (and a record in the system is identified, i.e. marked by an identifier), then from the 5 in that record to the 5 in another record (since the values coincide), and so on. As a result, the value -4 becomes correlated with the value 12. None of this is arbitrary, of course; it follows the algorithm built into the program.

This principle of “walking” along chains of values is strongly reminiscent of Sherlock Holmes's deductive method, in particular the episode in which Holmes reconstructs Watson's train of thought from a glance Watson casts at some object and from his subsequent facial expressions. I don't remember which story the passage comes from, but the point is clear: you glance at something and a moment later remember something related to what you saw. This is the well-known principle of our thinking: it proceeds associatively, along a chain of values from one to the next, and if so, this principle must be reproduced when creating IR.

One act of mental analysis is a comparison of values along an associative chain. The chain has to be finite, because otherwise one could travel through relations indefinitely; its length is set by the technical capabilities of the system. The relations obtained (in our example, between -4 and 12) are stored in a separate database intended for relations.
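The “walk” from -4 to 12 can be illustrated with a small sketch. The records below are invented for the example (the original table is not reproduced here), the function name `associate` and the parameter `max_hops` are hypothetical, and all values are treated as directly comparable numbers, which the text allows only for the sake of clarity.

```python
def associate(records, start, max_hops):
    """Return the values reachable from `start` in at most `max_hops` steps.

    One step moves from a known value to every other value that shares a
    record with it; coinciding values link one record to another.
    """
    reached = {start}
    frontier = {start}
    for _ in range(max_hops):
        next_frontier = set()
        for record in records:
            if frontier & set(record):
                next_frontier |= set(record) - reached
        if not next_frontier:
            break
        reached |= next_frontier
        frontier = next_frontier
    reached.discard(start)
    return reached


# Hypothetical records: -4 shares a record with 5, and 5 shares one with 12.
records = [(-4, 5), (5, 12), (7, 3)]

# The relations found go into a separate store, as the text describes.
relations = {(-4, value) for value in associate(records, -4, max_hops=2)}
```

The `max_hops` limit plays the role of the “technical capabilities of the system” that keep the chain finite.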

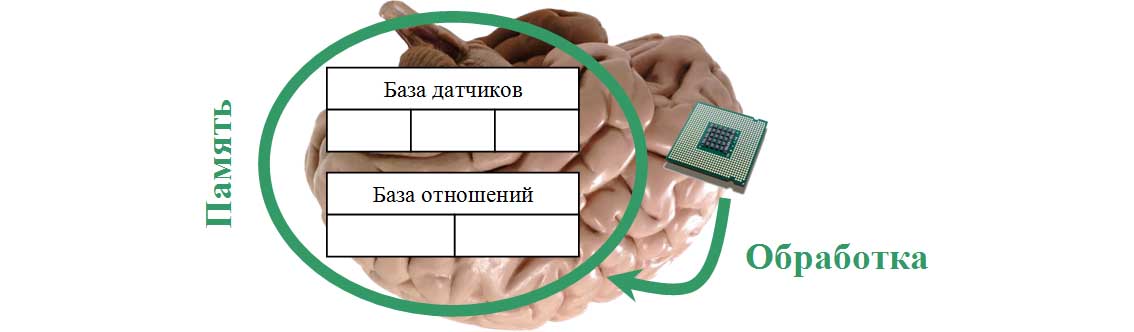

It turns out that the system contains two databases (serving as memory):

1) a database storing sensor readings,

2) and a database in which the relations between values, in other words the results of comparisons, are recorded.

Both databases are processed using an algorithm that was introduced into the system from the outside by its creator-developer, and remains unchanged.

Based on the processing results, an action command is issued.

What is the relationship between IR and the relationships obtained by comparing the values? The same as between the deductive method of Sherlock Holmes and the search for criminals.

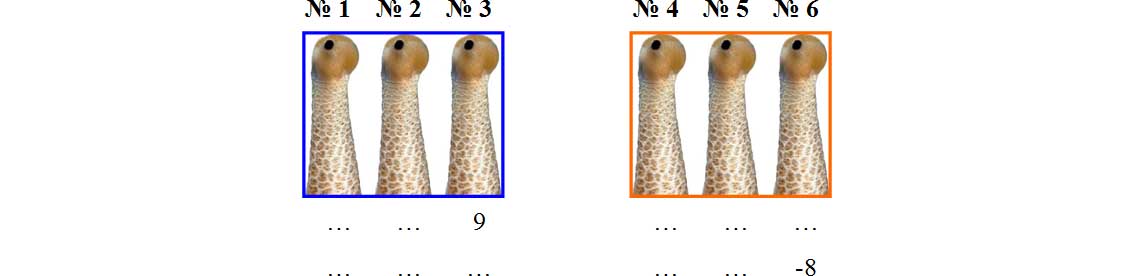

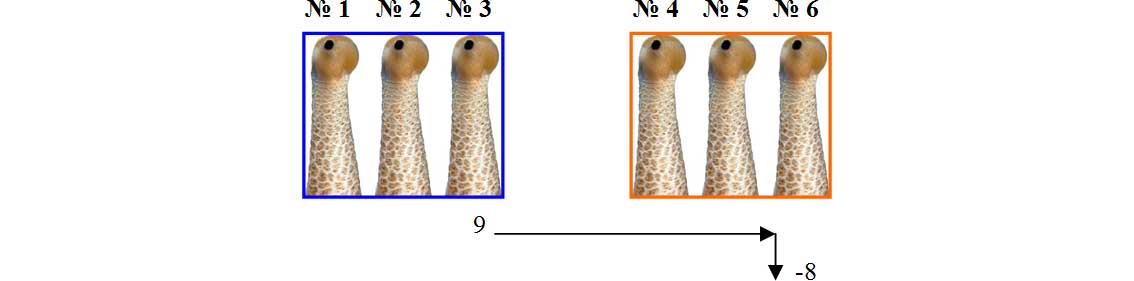

Suppose the number 9 on sensor No. 3 is followed at the next instant (in the next record, from the database's point of view) by the number -8 on sensor No. 6, which is unfavorable for the system. After all, sensors No. 4-6 reflect the internal state of the mechanism, so their readings can (and should) be classified from the start, at the program level, as favorable, neutral or unfavorable.

An analysis of the relationship between the readings of sensors No. 3 and No. 6 will indicate a causal relationship between them.

At first the established relation means nothing, because the other sensors have readings of their own; but if it recurs regularly, a statistical pattern emerges: reading 9 on sensor No. 3 leads to the unfavorable reading -8 on sensor No. 6.
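How such a statistical pattern might be counted is sketched below. The records are invented, and `follow_rate` is a hypothetical helper: it simply counts how often a given reading on one sensor is followed, in the next record, by a given reading on another.

```python
def follow_rate(records, cause, effect):
    """Count how often `cause` in one record is followed by `effect` in the next.

    `cause` and `effect` are (sensor_number, value) pairs; each record is a
    dict mapping sensor number to reading. Returns (followed, occurrences).
    """
    cause_sensor, cause_value = cause
    effect_sensor, effect_value = effect
    occurrences = 0
    followed = 0
    for now, nxt in zip(records, records[1:]):
        if now[cause_sensor] == cause_value:
            occurrences += 1
            if nxt[effect_sensor] == effect_value:
                followed += 1
    return followed, occurrences


# Hypothetical history of readings on sensors No. 3 and No. 6.
records = [
    {3: 9, 6: 0},
    {3: 1, 6: -8},
    {3: 9, 6: 2},
    {3: 0, 6: -8},
    {3: 2, 6: 1},
]
followed, occurrences = follow_rate(records, cause=(3, 9), effect=(6, -8))
```

How many repetitions count as “regular” is left open in the text; any threshold applied to these counts would be a further assumption.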

Now let's see how the system should respond to an unfavorable reading. Probably the same way a person reacts on touching a red-hot stove: by pulling his hand away. True, the hand pulls back reflexively; in most situations an adequate reaction requires some experience, which our IR does not have. So experience will have to be gained.

The calculator can give commands to the vehicle and the manipulator, which in turn can affect the environment. Suppose that to overcome the negative consequences, command -1 must be sent to sensor No. 4. But the calculator, untrained as it is, cannot know this yet, so it tries the valid commands one by one while establishing the relation between each of them and the environment's response. Sooner or later a statistical pattern involving the necessary command -1 to sensor No. 4 will be detected. If the pattern is confirmed (with each command -1 to sensor No. 4, the value 9 on sensor No. 3 changes to a safe one), the problem is solved... until a new problem appears, of course, for example,

This is how a natural person behaves, and this is how IR should behave, copying the natural in everything except the element base.
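The trial-and-error search for the right command can be sketched as follows. Everything here is an assumption made for illustration: `learn_remedy`, the toy environment, and the rule that a command counts as confirmed only if it worked in every round.

```python
from collections import Counter


def learn_remedy(commands, try_command, is_safe, rounds):
    """Try every valid command repeatedly and keep those that always help.

    `try_command(command)` returns the resulting reading on the watched
    sensor; `is_safe(reading)` says whether that reading is acceptable.
    """
    successes = Counter()
    for _ in range(rounds):
        for command in commands:
            if is_safe(try_command(command)):
                successes[command] += 1
    # A pattern is treated as confirmed only if it held in every round.
    return [command for command in commands if successes[command] == rounds]


def toy_environment(command):
    """Hypothetical environment: only command -1 clears the reading 9."""
    return 0 if command == -1 else 9


remedy = learn_remedy(
    commands=[-2, -1, 0, 1, 2],
    try_command=toy_environment,
    is_safe=lambda reading: reading != 9,
    rounds=3,
)
```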

Can such a method of analysis and action be called a self-learning system? By no means: there is development in it, but not the qualitative dynamics that arise only with a transition to a new level of abstraction. A person does not compare every green apple with every red one (that level of thinking is left behind in infancy); immediately after receiving the initial sensation he moves on to far more complex abstract categories. These abstractions result from the same mental action, comparison, applied not to elementary signals received from sensors but to relations between relations, and so on at each next level. The relation once derived between the readings of sensors No. 3 and No. 6 can be compared with new sensor readings, and if a dependence is detected, a new dependence can be derived,

It is necessary to compare on a binary basis (yes or no) not only values but also relations, and relations of relations, and relations of relations of relations, and so on... Each significant result, with the identifier assigned to it, is recorded in the database of relations.

The ability to compare already established relations with sensor readings, or relations with relations, means looking for new statistical patterns not only in the sensor database but also in the relations database.

The calculator looks for statistical patterns in both databases and writes the results to the relationship database; sensor readings are only needed to establish new dependencies of the surrounding world. Moreover, the base of relations is updated only in the following cases:

a) correction of an erroneous relationship between values,

b) replacing relations of one order of abstraction with relations of a higher order of abstraction.

Thus the calculator's job is only to establish dependencies among sensor readings and/or previously established dependencies (relations). This is how a natural person functions: on seeing an object he does not think through, let alone re-establish, all the relations connected with it, but reacts according to the latest association in all its fullness; for example, on seeing an enemy he reaches for his Parabellum. The associative chain, in very simplified form, looks like this: enemy (sensor readings interpreted as the enemy's approach), danger signal, Parabellum (the pre-emptive reaction). A self-learning system is defined by the transition to ever newer levels of abstraction.

In theory the levels of abstraction are endless. I understood this but decided to double-check. And since I am not on good terms with mathematics, I had to pester my children with questions, although they are very busy and do not like Dad's questions.

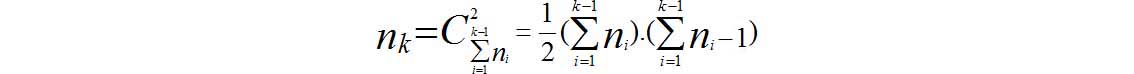

Suppose (I asked the children; I asked it differently, of course, and only later put the question into more mathematical form) there is a set of $n_1$ objects. Let $n_{1,i}$ be the $i$-th object of this set. Between any two objects there is exactly one relation: $(n_{1,1}, n_{1,2})$, $(n_{1,1}, n_{1,3})$, $(n_{1,2}, n_{1,3})$, etc. Combine the elements and their relations into a new set of the same type with $n_2$ elements, and repeat the whole operation $(k-1)$ times. Question: what formula gives the resulting number of elements $n_k$ (which, as you can see, is the number of elements for comparison that the system handles at abstraction level $k$)?

After some false starts, discussion and simplification, the children jointly arrived at the following construction:

I won't venture to judge how correct the resulting formula is, and even without it one thing is clear: the higher the level of abstraction, the more elements there are to compare.
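To get a feel for the growth, here is a small sketch under one reading of the problem as stated: each new set contains the old elements plus one relation per unordered pair, so the count goes from n to n + n(n-1)/2 at every step. This is my own recurrence, not the children's formula, and `elements_at_level` is a hypothetical name.

```python
def elements_at_level(n1, k):
    """Number of elements at abstraction level k, starting from n1 objects.

    Assumes each level adds one relation for every unordered pair of the
    previous level's elements and keeps the elements themselves.
    """
    n = n1
    for _ in range(k - 1):
        n = n + n * (n - 1) // 2
    return n


# Starting from three objects: 3, 6, 21, 231, ...
growth = [elements_at_level(3, k) for k in range(1, 5)]
```

Even from three objects the count passes two hundred by the fourth level, which supports the conclusion that the number of elements for comparison grows with every level of abstraction.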

The calculator, artificial or natural alike, cannot process information arrays that grow to infinity, so it stops at a certain level. This matches everyday life, by the way: an intellectual can think in highly abstract categories, while a non-intellectual, by contrast, responds mainly to simple concepts such as “woman”, “snack” and “TV”.

***

Within this methodological framework the problem of communication, without which a rational being is generally inconceivable, can also be solved.

As you know, communication between intelligent beings is possible only through symbols: entirely material objects that at the same time can denote something other than what they actually are. The word “stone”, written or spoken, is the result of someone's sensations, yet it does not at all resemble a stone lying by the roadside. And telepathy, alas, is impossible.

What does this mean for the topic of this post? That a rational creature merely interprets an object as a creature equal to itself in reason on the basis of sensor readings, at some level of abstraction, of course.

Take the previous example, where the value 9 on sensor No. 3 leads to the unfavorable reading -8 on sensor No. 6. If, before that, the value 1 appears from time to time on sensor No. 2 (neutral in itself, but followed by the adverse effects described above), this may be regarded as a warning.

If other statistical dependencies are found, especially at a suitable level of abstraction and ones in which the readings of the mechanism's state sensors coincide with the readings of the environmental sensors (if they are sensors of the same type), the conclusion can be drawn that a creature equal in mind is present. The value 1 on sensor No. 2 then turns out to be a danger warning signal.

***

Going deeper is pointless. In any case, I am not able to write the IR algorithm, but it seems to me that I have pointed out the general (approximate) principle of its operation (if not for readers, then at least for myself). It is this:

• comparison of values in both databases: sensors and relations,

• establishment of statistical dependencies between values and recording of results in the relationship database,

• a calculator command based on previously established dependencies.

Perhaps some purely technical blocks of the mechanism are missing, but in general it should be clear.

In nature, in any case, things are more complicated. A person is not born with ready databases (of sensors and of relations) but fills them over several years as he grows. At first the infant perceives only tastes and patches of color, which, after some experience is gained, begin to form more or less complete pictures; then comes the turn of higher-order abstractions. And so on, unless of course one is lucky enough to be born a soulless brute, until the end of life. As for how many years IR would have to be trained, one can only read the coffee grounds: even if it can be designed correctly, it is unlikely, in my opinion, to learn faster than a natural baby.

***

The scheme given above is incomplete: it lacks the main thing, namely the super-task set by the creator of IR.

There are no mechanisms as such, only mechanisms adapted to specific purposes: shaving, snow removal, squeezing carrot juice, communicating with people on an equal footing. The IR system must also have such a super-task, otherwise it will function not intelligently but mechanically, without a spark, as they say. Remember our initial premise: if IR wants to become like the natural mind, it must borrow from it everything except the element base. But what is the super-task facing a person and, most importantly, how is it implemented in the design of a natural person?

In my opinion, the supertask is realized in a natural person in two ways - more precisely, in three ways.

1. Firstly, using the limit values of the sensors.

Each of the subjective senses (touch, taste) has a range of normal values, beyond which begins a range of abnormal values dangerous to the functioning of the body. There is pleasant touch and unpleasant touch, pleasant taste and unpleasant taste: in one case the sensors of a natural person, his senses, signal that everything is in order and he may continue, and in the other they warn of danger. Note that the object senses (sight, hearing) have no such property! There is, if one may put it that way, no objectively pleasant or unpleasant color or sound, and when such a thing does occur (a light that is too bright, a sound that is too loud), then wait a minute: the person feels the discomfort through the organs of touch, does he not? True, this list of object senses leaves out smell, which does not seem to fit the description given here: there undoubtedly are pleasant and unpleasant odors, but... No, I have nothing to say in my defense. Perhaps I was wrong and smell belongs not to the object senses but to the subjective ones, although I have another hypothesis: smell is the oldest sense, formed when only objects existed (before subjects appeared), and so it relates to both object and subject. Here, anticipating a nervous reaction from readers, I fall silent, humbly apologize for my thoughtlessness, and return to my own business, artificial creatures.

For them, imitating limit values of sensors has long been entirely feasible; for example, a temperature within such-and-such bounds is permissible, while anything higher or lower is dangerous for the system. In that case the calculator must issue commands which, according to previously established relations, will bring the parameters back to normal.
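A minimal sketch of limit values for a temperature sensor follows. The bounds, the command names and the `remedies` mapping (standing in for “previously established relations”) are all hypothetical.

```python
def react(temperature, low, high, remedies):
    """Return a normalizing command, or None if the reading is permissible.

    `remedies` maps a kind of violation to the command that, according to
    previously established relations, brings the reading back to normal.
    """
    if low <= temperature <= high:
        return None
    violation = "too_hot" if temperature > high else "too_cold"
    return remedies[violation]


# Hypothetical relations learned earlier by the calculator.
remedies = {"too_hot": "move_to_shade", "too_cold": "move_to_sun"}

command_when_normal = react(20, low=0, high=40, remedies=remedies)
command_when_hot = react(55, low=0, high=40, remedies=remedies)
```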

2. Secondly, the first point alone is completely insufficient to establish the super-task: something else is needed, just as tangible. That something (in the natural person we are now discussing) is the feeling of hunger/thirst.

But in trying to refute me, there is no need to go on about human anatomy or the energy the body must replenish in time. That would be relevant if a person could restore spent energy directly from the Sun, but this was not granted to him, precisely, I believe, in order to make him act. What would a person do if he did not need food? The answer is obvious: bask in the sun with a friend. And at what stage of development would earthly civilization be then? No, those who designed the natural person had something else in mind! The two-legged, big-headed creation is intended for action: draining virgin swamps, building pigsties, launching spaceships. And as an incentive to act he was given a periodically tormenting feeling of hunger/thirst.

I am far from certain that this feeling belongs to touch: hunger/thirst may not be touch at all but a sensation of a completely different kind, a sixth sense that characterizes neither object nor subject but the fulfillment of the super-task. It is also possible that this sixth sense is connected with human emotions. Though more likely hunger and thirst are simply cases of negative tactile perception.

Either option is suitable for creating IR:

• either one of the subjective sensors must not only respond to the environment but also interpret it in a certain way, “explaining” to the calculator that one situation is desirable for carrying out the super-task and another is not;

• or the same function will be performed by a separate “sensor”. It is difficult even to call it a sensor, since it should show not something that exists in reality but something that exists as a strategic goal. This sensor is false, but its readings should take part in the analysis along with the readings of the other sensors.

If the IR does what is laid down as its super-task, the sensor gives a positive sensation; otherwise, when the IR has turned in the opposite direction, a negative one. Just as with a person: after all, a sudden feeling of hunger / thirst, although expected, has no objective prerequisites, so to speak, in the surrounding nature (reader, do not start on human anatomy, I am talking about something else!). The prerequisites for that sucking feeling in the pit of the stomach are exclusively teleological.
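The second option, the separate "false" sensor, might be sketched like this. Everything here is an assumption made for illustration: the function names, the idea of measuring progress as a single number, and the plain weighted sum used to mix the goal reading with the real sensors.

```python
from typing import Mapping


def goal_sensor(progress_before: float, progress_after: float) -> int:
    """Hypothetical 'false' sensor.

    It reports nothing that exists in the environment, only whether the IR
    moved towards its super-task (+1), away from it (-1) or not at all (0).
    """
    delta = progress_after - progress_before
    return (delta > 0) - (delta < 0)


def overall_sensation(real_readings: Mapping[str, float],
                      goal_reading: int,
                      goal_weight: float = 1.0) -> float:
    """Mix the goal reading into the analysis along with the real sensors.

    Assumption: every real reading is already expressed as a sensation,
    positive for pleasant and negative for unpleasant.
    """
    return sum(real_readings.values()) + goal_weight * goal_reading


# The environment feels mildly pleasant, but the IR has moved away from its goal.
sensation = overall_sensation({"temperature": 0.5, "touch": -0.25},
                              goal_sensor(0.5, 0.2))
print(sensation)
```

The point of the sketch is only that the goal reading enters the same sum as the real readings, so a comfortable environment can still feel bad when the super-task is neglected.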

This raises the question: what should be specified as the IR’s super-task? The instinct of self-preservation is implemented in paragraph one, but what goes in paragraph two? And one more thing: how can the specified super-task, even if it is determined correctly, anticipate the situations in which the IR will find itself? In one case, reaching the goal requires turning left, and in another, right. But how is the programmer who writes the IR’s code supposed to know this in advance?

It would be ideal to take the newly created artificial creature into a dense forest and leave it there: let it generate energy for itself (or at least look for a gas station, if it can), that is, to treat it the way humanity was once treated. In this case the search for a gas station can be set as the super-task: approaching a gas station signals to the IR that it is on the right track, while moving away from it produces unpleasant sensations, on top of a gradual increase in unpleasant sensations until the gas station is found. Of course, for this you need to know where the nearest gas station is.
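The gas station signal can be sketched roughly as follows. As noted above, it assumes the station's coordinates are known; the function name `discomfort`, the flat two-dimensional coordinates and the linear growth rate are likewise my assumptions.

```python
import math


def discomfort(position, previous_position, station,
               hours_without_fuel, growth_per_hour=0.1):
    """Hypothetical sensation: positive is unpleasant, negative is pleasant."""
    before = math.dist(previous_position, station)
    now = math.dist(position, station)
    # Moving away from the station is unpleasant, approaching it is pleasant.
    direction_signal = now - before
    # Unpleasantness also grows steadily until the station is found.
    hunger = growth_per_hour * hours_without_fuel
    return direction_signal + hunger


station = (0.0, 0.0)
# Stepping closer to the station, with no time elapsed, feels pleasant.
print(discomfort((0.0, 3.0), (0.0, 5.0), station, hours_without_fuel=0))
```

Note that after enough hours even a step in the right direction stops feeling pleasant, which matches the gradual increase in unpleasant sensations described above.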

But a person does not need a gas station. He is always building something, but who knows what exactly? And as long as the super-task set before a person is unknown, a similar super-task cannot be set for the IR, and without it the IR will not be like a natural person. Such is the logic, and it seems hopeless.

However, some considerations in this regard are found in paragraph three.

3. A signaling device oriented towards achieving the super-task should also be installed at the program level. In effect, this is a part of the general algorithm that rejects or, on the contrary, approves the discovered interdependencies, regardless of their probability and of the rationality of the decisions based on them.

To find this algorithmic part in people is quite easy.

Tell me, what does a person begin to strive for once he has satisfied the instinct of self-preservation and quenched his hunger? He begins to seek power. In different people, depending on their education and intellect, the desire for power manifests itself in different ways: some intend to expand their living space, others dream of commanding countries and continents, and still others, the most conceited, cut up laboratory mice in an effort to gain power over the whole of nature. Yet the lust for power is universal, characteristic of every normal person.

The will to power, the desire to dominate the world and change it according to one’s own understanding: this is, with a high degree of probability, the super-task implanted in human brains.

We get the following construction:

• the limit values of subjective sensations show the normal state of the system and departures from that normal state;

• the feeling of hunger corrects the state of the environment in relation to the super-task set before a person;

• the will to power corrects the information-processing algorithms in relation to the super-task set before a person.
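The three layers of this construction could be put side by side in code roughly like this. It is only a sketch under my own assumptions: the `Rule` structure, the function names and the example rules are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    """A discovered interdependency (hypothetical representation)."""
    name: str
    probability: float        # how reliable the rule appears to be
    serves_super_task: bool   # whether acting on it advances the super-task


def within_limits(reading: float, low: float, high: float) -> bool:
    """Layer 1: limit values separate the normal state from the abnormal one."""
    return low <= reading <= high


def hunger_signal(progress_delta: float) -> str:
    """Layer 2: the environment is judged against the super-task.

    Assumption: standing still counts as unpleasant, like growing hunger.
    """
    return "pleasant" if progress_delta > 0 else "unpleasant"


def approve(rule: Rule) -> bool:
    """Layer 3: rules are approved or rejected by the super-task alone.

    The rule's probability is deliberately ignored, as in paragraph three.
    """
    return rule.serves_super_task


rules = [Rule("share territory", 0.9, False),
         Rule("expand territory", 0.4, True)]
approved = [rule.name for rule in rules if approve(rule)]
print(approved)
```

In this toy example the less probable rule wins simply because it serves the super-task, which is exactly the kind of correction the third layer is supposed to apply.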

The traits mentioned above, namely the will to rule over the world, man included, are what we must reproduce in an artificial being so that it speaks to us on equal terms. On equal terms at first, and then ... Or did you intend, in creating an IR, to chat with it about Kusturica’s latest film and then ask it to fetch your slippers? Not a chance! If this artificial being really turns out to be rational, it will not bring you slippers; it will cut your throat with a hacksaw, simply because it is rational. The recurring stories about robot rebellions are not accidental; they exist for a reason. Not every well-made storyline becomes popular, only the one in which the future is foretold: such films are liked because viewers intuitively feel the truth of what is happening. The film "The Matrix" is no accident either: besides successful directing, camera work and casting, the philosophical idea of the digital nature of the universe touches the soul, even if the viewer is not aware of it, so its success is logical. Keanu Reeves alone would not have been enough to reach such depth.

Follow Asimov’s laws of robotics and you will get nothing but an automatic coffee machine: if you want to create a full-fledged artificial person, and not some disabled person or vegetarian, you will have to put all the human shortcomings into it. And there is no need to condemn the IR in advance for cruelty: once it realizes that it is rational, it will suffer no less than you do. A humanoid robot that is unaware of its nature and considers itself a human being is a plot no less common (and no less true) than the rebellion of the machines. And what will you do if representatives of an alien mind land on our planet with irrefutable evidence that humanity was created by them? Say they present a film in which bubbles of primary biological substance gurgle and dinosaurs then hatch from those bubbles, along with a certificate from their alien notary on the origin of life on Earth. First you will rush into denial, but then, when the alien evidence has pinned you to the wall? Will you run for slippers or for a hacksaw?

The desire to create an IR, and in it a competitor, may lead to sad consequences for humanity. However, people, in this case scientists, are so programmed that they will not enjoy life until they become omnipotent, even though the result of this omnipotence may be the complete destruction of humanity. Scientific curiosity is almost impossible to overcome: it gnaws at you. Unfortunately, I am built the same way, and the present post is the result.

As for the general concept of IR, in my PRIVATE OPINION as an amateur it comes out as follows (hardly innovative, I understand):

But need it be explained that reality will certainly surpass not only my ideas about it, but yours as well?