Kinect for Windows SDK. Part 3. Functionality

- Kinect for Windows SDK. Part 1. Sensor

- Kinect for Windows SDK. Part 2. Data Streams

- [Kinect for Windows SDK. Part 3. Functionality]

- We play cubes with Kinect

- Program, aport!

Tracking a human figure

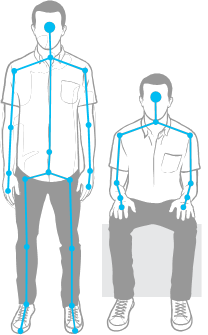

Thanks to this remarkable feature, Kinect can recognize a person's figure and movements. And not just one person, but as many as six! More precisely, the sensor can detect up to six people in its field of view, but it collects detailed information for only two of them. Take a look at the picture:

Personally, my first question was: “Why did it [Kinect] build a full 20-point skeleton for these two guys and show only the navels of the others?” There is no discrimination here; this is simply how the sensor works by default: a detailed skeleton is built for the first two recognized figures, and the rest have to be content with having been noticed at all. MSDN even has an example of how to change this behavior, for instance to build detailed skeletons for the people closest to the sensor.
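As a rough sketch of that idea (not the MSDN sample itself), an application can take over the choice of figures through the SkeletonStream.AppChoosesSkeletons property and the ChooseSkeletons method. The ClosestSkeletons class and its method names are made up for illustration, and the skeletons array is assumed to have been copied out of a skeleton frame in the SkeletonFrameReady handler discussed below.

```csharp
using System.Linq;
using Microsoft.Kinect;

// Hypothetical helper, for illustration only.
static class ClosestSkeletons
{
    // Call once after enabling the skeleton stream:
    // from now on the application, not the sensor, picks the figures to track in detail.
    public static void TakeControl(KinectSensor sensor)
    {
        sensor.SkeletonStream.AppChoosesSkeletons = true;
    }

    // Call for every skeleton frame with the array filled by CopySkeletonDataTo.
    public static void TrackTwoClosest(KinectSensor sensor, Skeleton[] skeletons)
    {
        // Position.Z is the distance from the sensor, so sort by it and keep two figures.
        int[] ids = skeletons
            .Where(s => s.TrackingState != SkeletonTrackingState.NotTracked)
            .OrderBy(s => s.Position.Z)
            .Take(2)
            .Select(s => s.TrackingId)
            .ToArray();

        if (ids.Length == 2)
        {
            sensor.SkeletonStream.ChooseSkeletons(ids[0], ids[1]);
        }
        else if (ids.Length == 1)
        {
            sensor.SkeletonStream.ChooseSkeletons(ids[0]);
        }
    }
}
```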

The points of the constructed skeleton are called joints (the Joint type). I will call them nodes, which seems a more fitting word to me: calling the head a joint somehow does not sound right.

So, the first thing you need to do in the application to get information about the figures in the frame is to enable the desired stream:

// subscribe to the event that delivers the coordinates of the figures found in the frame

sensor.SkeletonFrameReady += SkeletonsReady;

// enable the stream

sensor.SkeletonStream.Enable();

The second step is to handle the SkeletonFrameReady event. All that remains is to extract information about the figures of interest from the frame. Each figure is represented by one object of the Skeleton class. The object stores the tracking state in the TrackingState property (whether a full skeleton has been built or only the location of the figure is known) and the data about the figure's nodes in the Joints property. The latter is essentially a dictionary whose keys are the values of the JointType enumeration. For example, suppose you want to get the location of the left knee. Nothing could be easier!

Joint kneeLeft = skeleton.Joints[JointType.KneeLeft];

// Position is a SkeletonPoint; its coordinates are floats, in meters

float x = kneeLeft.Position.X;

float y = kneeLeft.Position.Y;

float z = kneeLeft.Position.Z;

The values of the JointType enumeration are shown, rather inventively, on a figure of the Vitruvian Man.
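Putting the pieces together, a minimal sketch of the SkeletonsReady handler might look like the following. The SkeletonReader class name and the console output are assumptions made for illustration; the sensor is assumed to have been started and subscribed as shown earlier.

```csharp
using System;
using Microsoft.Kinect;

// Hypothetical class, for illustration only.
class SkeletonReader
{
    private Skeleton[] skeletons = new Skeleton[0];

    public void SkeletonsReady(object sender, SkeletonFrameReadyEventArgs e)
    {
        using (SkeletonFrame frame = e.OpenSkeletonFrame())
        {
            // The frame may be null if it was dropped before we got to it.
            if (frame == null)
            {
                return;
            }

            if (skeletons.Length != frame.SkeletonArrayLength)
            {
                skeletons = new Skeleton[frame.SkeletonArrayLength];
            }

            frame.CopySkeletonDataTo(skeletons);
        }

        foreach (Skeleton skeleton in skeletons)
        {
            // Skip figures that were only noticed (PositionOnly) or not found at all.
            if (skeleton.TrackingState != SkeletonTrackingState.Tracked)
            {
                continue;
            }

            Joint head = skeleton.Joints[JointType.Head];
            if (head.TrackingState == JointTrackingState.NotTracked)
            {
                continue;
            }

            Console.WriteLine(
                "Figure {0}: head at ({1:F2}, {2:F2}, {3:F2}) m",
                skeleton.TrackingId,
                head.Position.X,
                head.Position.Y,
                head.Position.Z);
        }
    }
}
```

An instance of this class would be wired up with sensor.SkeletonFrameReady += reader.SkeletonsReady; in place of the bare method name used above.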

Above I wrote about a 20-node skeleton, but building one is not always possible. That is why a mode called seated skeletal tracking was introduced. In this mode, the sensor builds a reduced, 10-node skeleton of the upper body.

To make Kinect recognize figures in this mode, it is enough to set the TrackingMode property of the SkeletonStream object when initializing the stream:

sensor.SkeletonStream.TrackingMode = SkeletonTrackingMode.Seated;

In seated mode, the sensor can still recognize up to six figures and track two of them. There are some peculiarities, though. For example, for the sensor to “notice” you, you need to move or wave your hands, whereas in full skeleton mode it is enough to stand in front of the sensor. Tracking a seated figure is also more resource-intensive, so be prepared for a lower frame rate.

Another article in the series, We play cubes with Kinect, is entirely devoted to tracking a human figure.

Speech recognition

Strictly speaking, speech recognition is not a built-in feature of Kinect: it relies on an additional SDK, and the sensor merely acts as the audio source. Developing speech recognition applications therefore requires installing the Microsoft Speech Platform. You can optionally install additional language packs, and there is a separate package for client machines (the Speech Platform Runtime).

In general, the Speech Platform is used as follows:

- select a recognition engine for the required language from those available in the system;

- create a vocabulary and pass it to the selected engine. In plain terms, you decide which words your application should recognize and pass them to the engine as strings (there is no need to create audio files with the sound of each word);

- set the audio source for the engine. It can be Kinect, a microphone or an audio file;

- tell the engine to start recognition.

After that, all that remains is to handle the event raised whenever the engine recognizes a word. An example of working with this platform can be found in the article Program, aport!
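The four steps above can be sketched roughly as follows. This is an illustration under several assumptions: the VoiceCommands class, the en-US language pack and the words "start" and "stop" are chosen only for the example, the project references Microsoft.Speech.dll, and the sensor has already been started. The audio format (16 kHz, 16-bit, mono PCM) is the one the Kinect audio stream delivers.

```csharp
using System;
using System.Linq;
using Microsoft.Kinect;
using Microsoft.Speech.AudioFormat;
using Microsoft.Speech.Recognition;

// Hypothetical helper, for illustration only.
static class VoiceCommands
{
    public static SpeechRecognitionEngine Start(KinectSensor sensor)
    {
        // 1. Select an installed recognition engine for the required language.
        RecognizerInfo recognizer = SpeechRecognitionEngine.InstalledRecognizers()
            .FirstOrDefault(r => r.Culture.Name == "en-US");
        if (recognizer == null)
        {
            throw new InvalidOperationException("No en-US recognizer is installed.");
        }

        var engine = new SpeechRecognitionEngine(recognizer.Id);

        // 2. Describe the vocabulary as plain strings and load it into the engine.
        var words = new Choices("start", "stop");
        var builder = new GrammarBuilder { Culture = recognizer.Culture };
        builder.Append(words);
        engine.LoadGrammar(new Grammar(builder));

        // Handle the event raised whenever a word is recognized.
        engine.SpeechRecognized += (s, e) =>
            Console.WriteLine("{0} (confidence {1:P0})", e.Result.Text, e.Result.Confidence);

        // 3. Use the Kinect microphone array as the audio source.
        engine.SetInputToAudioStream(
            sensor.AudioSource.Start(),
            new SpeechAudioFormatInfo(EncodingFormat.Pcm, 16000, 16, 1, 32000, 2, null));

        // 4. Start continuous recognition.
        engine.RecognizeAsync(RecognizeMode.Multiple);
        return engine;
    }
}
```

To listen through an ordinary microphone instead, step 3 could be replaced with a call to engine.SetInputToDefaultAudioDevice().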

Face tracking

Unlike figure tracking, face tracking is implemented entirely in software, based on data from the video stream (color stream) and the rangefinder stream (depth stream). The speed of tracking therefore depends on the resources of the client computer.

It is worth noting that face tracking is not the same as face recognition. Amusingly, I have come across articles claiming that Kinect has a face recognition feature. So what is face tracking, and where can it be useful?

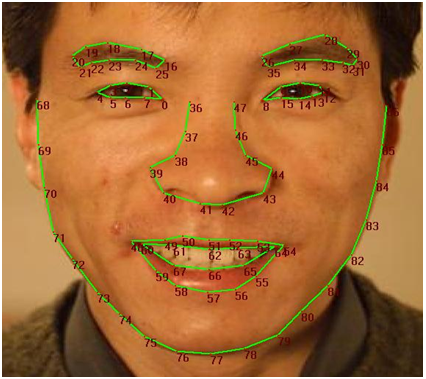

Face tracking means following a person's face in the frame and building an 87-node outline of it. MSDN says that several faces can be tracked but does not state an upper limit; it is probably two (the number of people for whom the sensor can build a full N-node skeleton). This functionality can be useful in games, so that your character (avatar) can convey the whole range of emotions shown on your face; in applications that adapt to your mood (tearful or playful); and finally in applications that recognize faces or even emotions (Dr. Lightman?).

So, the outline. Here it is (the outline of my dreams):

In addition to these 87 nodes, you can get the coordinates for 13 more: the centers of the eyes, nose, corners of the lips and the borders of the head. The SDK can even build a 3D face mask, as shown in the following figure:

Now, armed with a general understanding of what the Face Tracking SDK does, it is time to get to know it better. The Face Tracking SDK is a COM library (FaceTrackLib.dll) that ships with the Developer Toolkit. The toolkit also contains a wrapper project, Microsoft.Kinect.Toolkit.FaceTracking, which can safely be used in managed projects. Unfortunately, I could not find any description of the wrapper apart from the link provided (I suppose that while active development is under way and the Face Tracking SDK is not part of the Kinect SDK, we can only wait for documentation to appear on MSDN).

I will focus on only a few classes. The centerpiece, unsurprisingly, is the FaceTracker class. Its job is to initialize the tracking engine and to follow a person's face in the frame. The overloaded Track method searches for a face using data from the video camera and the rangefinder. One of the overloads takes a human figure, a Skeleton, which improves both the speed and the quality of the search. The FaceModel class helps build 3D models and transform them into the camera coordinate system. Besides the wrapper classes, the Microsoft.Kinect.Toolkit.FaceTracking project contains simpler but no less useful types. For example, the FeaturePoint enumeration describes all the nodes of the face outline (see the figure with 87 points above).

In general, the algorithm for using tracking can look like this:

- select a sensor and enable the video stream (color stream), the rangefinder stream (depth stream) and the figure tracking stream (skeleton stream);

- add a handler for the sensor's AllFramesReady event, which is raised when the frames of all streams are ready for use;

- in the event handler, initialize FaceTracker (if this has not been done yet), go through the figures found in the frame and collect the face outlines built for them;

- process the face outlines (for example, show the constructed 3D mask or determine the emotions of the people in the frame).

I should note that I deliberately give no code examples here: a meaningful example would not fit in a couple of lines, and I do not want to overload the article with giant multi-line listings.

Remember that the quality of face tracking depends both on the distance to the head and on its orientation (tilt). The head tilts the sensor considers acceptable are ±20° up and down, ±45° left and right, and ±45° sideways. The optimal ranges are ±10°, ±30° and ±45° for up-down, left-right and sideways tilts, respectively (see 3D Head Pose).

Kinect Studio

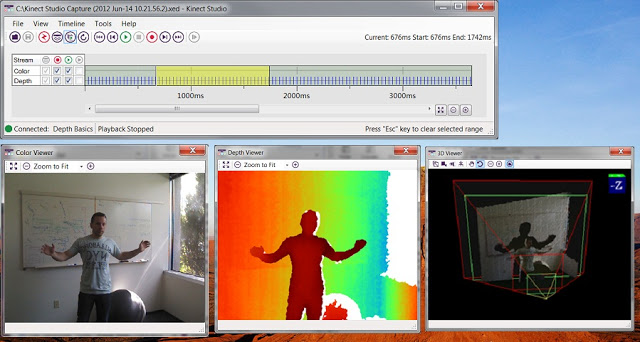

The first time you try to write something for Kinect, the feeling that something is missing never leaves you. And when you set breakpoints for the hundredth time so that they fire on one particular gesture, and perform that gesture in front of the camera for the hundredth time, you realize what is really missing: a simple emulator, so that you can record the necessary gestures once and then sit down and debug in peace. Oddly enough, the good vibes sent by developers around the world who have suffered through Kinect development reached their target. The Developer Toolkit includes a tool called Kinect Studio.

Kinect Studio can be thought of as a debugger or an emulator. Its role is extremely simple: it helps you record the data received from the sensor and feed it to your application. You can play the recorded data back again and again and, if you wish, save it to a file and return to debugging later.

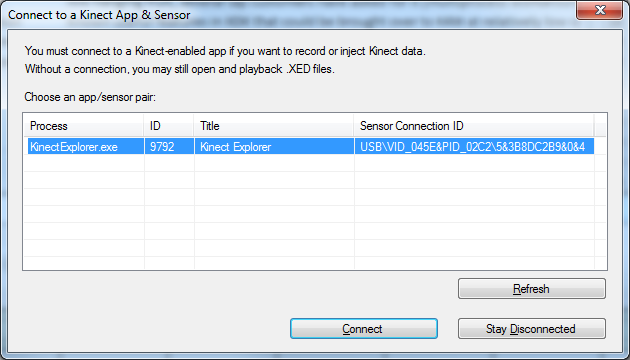

To start working with Kinect Studio, connect it to your application and select a sensor. You are then ready to record sensor data or inject previously saved data.

Afterword

This review article, which I had not even planned to write, went through more than one revision along the way and ended up split into three parts. In them I tried to gather the material I found across the World Wide Web, although MSDN has been and remains the main source of knowledge. Right now Kinect is more like a kitten that is only learning to crawl than a product that can be taken as more than just for fun. I myself regard it with a certain skepticism. But who knows what tomorrow will bring. Kinect already shows good results in the gaming industry, and Kinect for Windows opens up room for creativity to developers around the world.