Speech recognition and synthesis in any iOS application in an hour

Introduction:

The toolkit itself is called NDEV. To get the necessary code (there is not much of it) and documentation (there is a lot of it), you need to register on the site in the “cooperation program”. Website:

dragonmobile.nuancemobiledeveloper.com/public/index.php

All of this is free if your application has fewer than half a million users and they use the services fewer than 20 times a day. Immediately after registration you receive a Silver membership, which allows you to use these services for free.

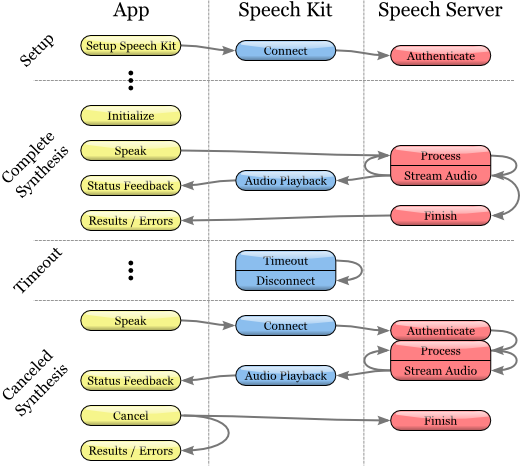

Developers are offered step-by-step instructions for introducing speech recognition and synthesis services into their iOS application: The

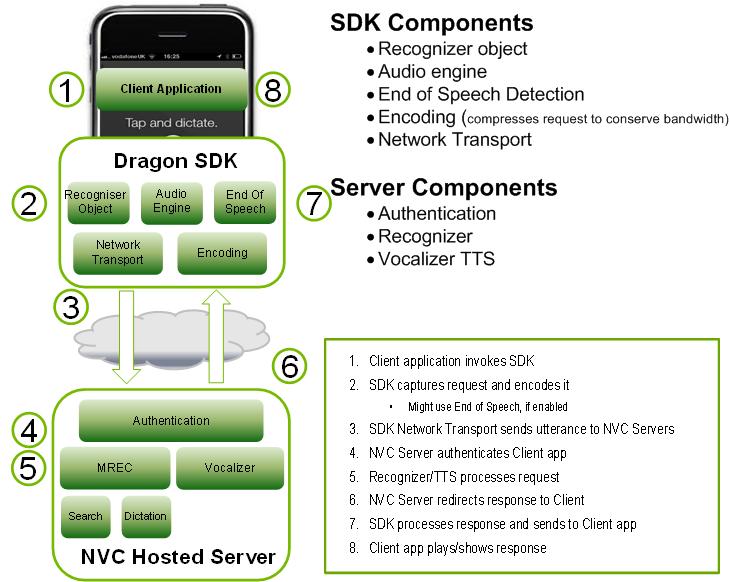

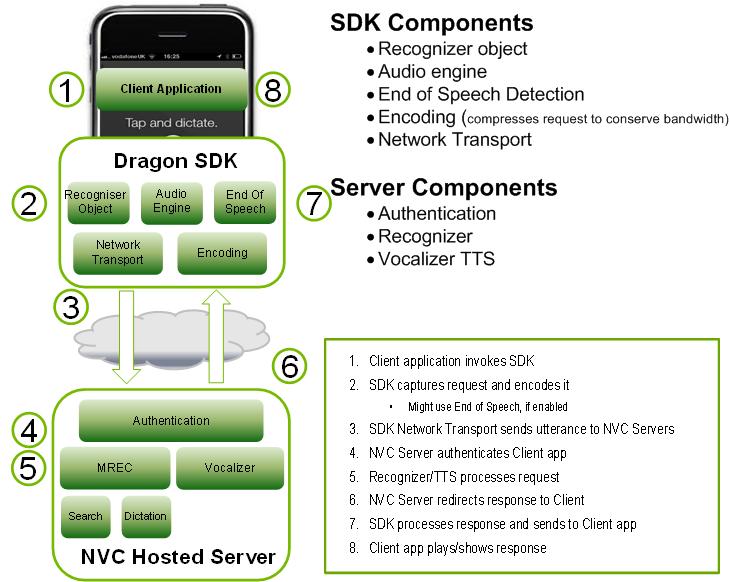

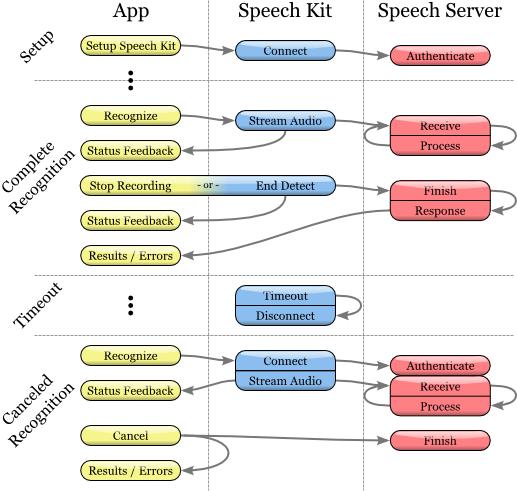

Toolkit (SDK) contains both the client and server components. The diagram illustrates their interaction at the top level:

The Dragon Mobile SDK consists of various code samples and project templates, documentation, as well as a software platform (framework) that simplifies the integration of voice services into any application.

The Speech Kit framework lets you quickly and easily add speech recognition and speech synthesis (TTS, text-to-speech) services to applications. It also provides access to the speech processing components located on the server through clean asynchronous network APIs, minimizing overhead and resource consumption.

The Speech Kit platform is a full-featured high-level “framework” that automatically manages all low-level services.

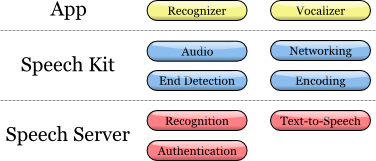

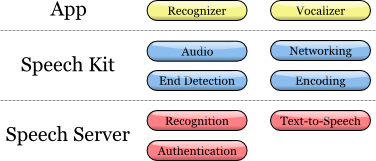

Speech Kit architecture

Main part

At the application level, the developer has two main services available: speech recognition and speech synthesis from text.

The platform performs several coordinated processes:

The audio component fully controls the audio system for recording and playback.

The network component manages the connections to the server and automatically re-establishes a connection that has timed out whenever a new request is made.

The end-of-speech detector determines when the user has finished speaking and, if necessary, automatically stops recording.

The encoding component compresses and decompresses the audio stream, reducing bandwidth requirements and mean latency.

The server system is responsible for most of the operations in the speech processing cycle. Speech recognition and synthesis are performed entirely on the server, which processes or synthesizes the audio stream. In addition, the server performs authentication according to the developer's configuration.

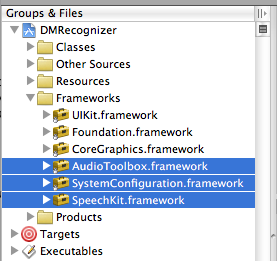

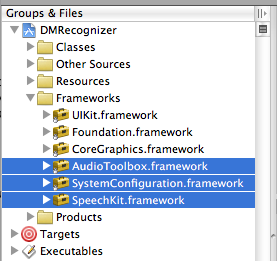

This article focuses on iOS development. The Speech Kit framework can be used just like any standard iPhone framework, such as Foundation or UIKit. The only difference is that Speech Kit is a static framework and is entirely contained in your compiled application. Speech Kit depends on several core iPhone OS frameworks, which must be added to the application as dependencies so that they are available at run time. In addition to Foundation, you need to add the System Configuration and Audio Toolbox frameworks to the Xcode project:

1. Start by selecting the Frameworks group within your project.

2. Then right-click “Frameworks” and choose Add ‣ Existing Frameworks... from the menu that appears.

3. Finally, select the required frameworks and click the Add button. The selected frameworks appear in the Frameworks folder (see figure above).

To start using the Speech Kit framework, add it to your new or existing project:

1. Open your project and select the group in which you want the Speech Kit framework to be located, for example Frameworks.

2. From the menu, select Project ‣ Add to Project...

3. Next, locate SpeechKit.framework in the folder where you unpacked the Dragon Mobile SDK and select Add.

4. To make sure that Speech Kit is copied into your project and does not refer to the original location, select Copy items... and then Add.

5. The Speech Kit framework is now part of your project, and you can expand it to access the public headers.

Frameworks required by Speech Kit

The Speech Kit framework provides one top-level header that gives access to the complete application programming interface (API), including all classes and constants. Import the Speech Kit header into every source file in which you use Speech Kit services:

#import <SpeechKit/SpeechKit.h>

Now you can start using the speech recognition and text-to-speech (speech synthesis) services.

The Speech Kit framework is a network service and needs some basic setup before the speech recognition or speech synthesis classes can be used.

This setup performs two basic operations:

First, it identifies and authorizes your application.

Second, it establishes a connection with the speech server, which allows voice requests to be processed quickly and therefore improves the user experience.

Note

This network connection requires the credentials and server settings assigned to the developer. They are provided through the Dragon Mobile SDK portal: dragonmobile.nuancemobiledeveloper.com .

Speech Kit setup

The SpeechKitApplicationKey application key is required by the framework and must be set by the developer. The key acts as your application's password for the speech processing servers and must be kept secret to prevent misuse.

Your unique credentials, including the application key, are provided through the developer portal and take only a few lines of code to set, so the process comes down to copying and pasting those lines into your source file. You must set the application key before initializing the Speech Kit system. For example, you can define the application key as follows:

const unsigned char SpeechKitApplicationKey[] = {0x12, 0x34, ..., 0x89};

The setup method, setupWithID:host:port:, takes three parameters:

Application ID

Server Address

Port

The ID parameter identifies your application and is used together with your application key to authorize access to the speech servers.

The host and port parameters identify the speech server, which can vary from application to application. Therefore, always use the values supplied with your credentials.

The framework is configured as in the following example:

[SpeechKit setupWithID:@"NMDPTRIAL_Acme20100604154233_aaea77bc5b900dc4005faae89f60a029214d450b"

host:@"10.0.0.100"

port:443];

Note

The setupWithID:host:port: method is a class method and does not create an object (instance). It is intended to be called once while the application is running, and it sets up the main network connection. It is an asynchronous method that runs in the background, establishes a connection, and performs authorization. The method itself does not report connection or authorization errors; the success or failure of the setup becomes known through the SKRecognizer and SKVocalizer classes.

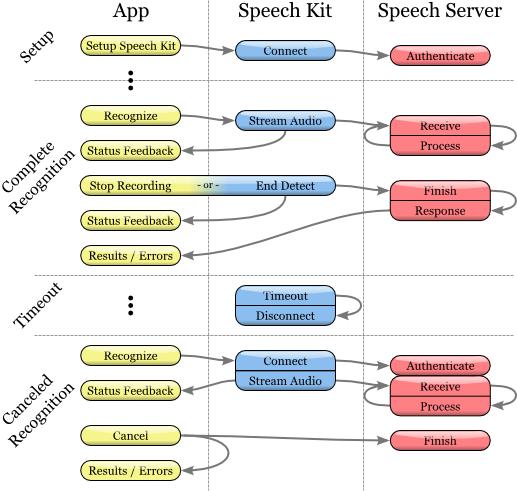

At this point, the speech server settings are fully configured, and the framework begins to establish a connection. This connection remains open for some time, so that subsequent voice requests are processed promptly as long as the voice services are actively used. If the connection times out, it is closed, but it is re-established automatically with the next voice request.

The application is configured and ready to recognize and synthesize speech.

Speech recognition

Recognition technology allows users to dictate instead of typing where text input is usually required. The speech recognizer provides a list of text results. It is not tied to any user interface (UI) object in any way, therefore the selection of the most suitable result and the selection of alternative results remains at the discretion of the user interface of each application.

Speech Recognition Process

Initiating the Speech Recognition Process

1. Before using the speech recognition service, make sure that the Speech Kit framework has been set up with the setupWithID:host:port: method.

2. Then create and initialize the SKRecognizer object:

recognizer = [[SKRecognizer alloc] initWithType:SKSearchRecognizerType

detection:SKShortEndOfSpeechDetection

language:@"en_US"

delegate:self];

3. The initWithType:detection:language:delegate: method initializes the recognizer and starts the speech recognition process.

The type parameter is an NSString *, one of the recognition type constants defined by the Speech Kit framework and available in the SKRecognizer.h header. Nuance may provide you with other values for your particular recognition needs, in which case you pass that value as a plain NSString.

The detection parameter defines the end-of-speech detection model and must be one of the SKEndOfSpeechDetection types.

The language parameter defines the spoken language as a string consisting of the ISO 639 language code, an underscore “_”, and the ISO 3166-1 country code.

Note

For example, English as spoken in the United States is en_US. An updated list of supported recognition languages is available in the FAQ: dragonmobile.nuancemobiledeveloper.com/faq.php .

4. The delegate receives a recognition result or an error message, as described below.
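The language code format described above can be checked before a string is passed to the recognizer. The following is a minimal sketch in plain C (which also compiles inside an Objective-C source file) and is not part of Speech Kit: the function name looks_like_language_code is hypothetical, and it assumes the two-letter form of both codes, as in en_US. It validates only the shape of the string, not whether the speech server supports the language.

```c
#include <ctype.h>
#include <stdbool.h>
#include <string.h>

/* Returns true when `code` has the shape "ll_CC": two lowercase letters
 * (ISO 639 language code), an underscore, and two uppercase letters
 * (ISO 3166-1 country code). Assumption: only two-letter codes are used.
 * This does not check whether the language is actually supported. */
bool looks_like_language_code(const char *code)
{
    if (code == NULL || strlen(code) != 5) {
        return false;
    }
    return islower((unsigned char)code[0]) &&
           islower((unsigned char)code[1]) &&
           code[2] == '_' &&
           isupper((unsigned char)code[3]) &&
           isupper((unsigned char)code[4]);
}
```

A check like this catches common slips such as en-US or EN_us early, before a network request is made.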

Obtaining recognition results

To obtain recognition results, implement the delegate method recognizer:didFinishWithResults:

- (void)recognizer:(SKRecognizer *)recognizer didFinishWithResults:(SKRecognition *)results {

[recognizer autorelease];

// perform any actions on the results

}

This delegate method is called only when the process completes successfully, and the list of results contains zero or more results. The first result can always be retrieved with the firstResult method. Even when there is no error, the recognition results object may contain a suggestion from the speech server. Such a suggestion should be presented to the user.

Error handling

To get information about recognition errors, implement the delegate method recognizer:didFinishWithError:suggestion:. If an error occurs, only this method is called; if recognition succeeds, this method is not called. As mentioned in the previous section, a suggestion may or may not be present in addition to the error.

- (void)recognizer:(SKRecognizer *)recognizer didFinishWithError:(NSError *)error suggestion:(NSString *)suggestion {

[recognizer autorelease];

// inform the user about the error and the suggestion

}

Managing the recording stages

If you want to know when the recognizer starts or stops recording audio, implement the delegate methods recognizerDidBeginRecording: and recognizerDidFinishRecording:. There may be a delay between the initialization of recognition and the actual start of recording, so the recognizerDidBeginRecording: message can be used to signal to the user that the system is ready to listen.

- (void)recognizerDidBeginRecording:(SKRecognizer *)recognizer {

// Update the UI to indicate that the system is recording

}

The recognizerDidFinishRecording: message is sent before the speech server has finished receiving and processing the audio, and therefore before the result is available.

- (void)recognizerDidFinishRecording:(SKRecognizer *)recognizer {

// Update the UI to indicate that recording has stopped and speech is still being processed

}

This message is sent whether or not an end-of-speech detection model is in use. It is sent in the same way whether recording ends because the stopRecording method was called or because the end of speech was detected.

Setting earcons (“sound icons”)

In addition, earcons can be used to play audio cues before and after recording, and after a recording session is canceled. Create an SKEarcon object and pass it to the Speech Kit setEarcon:forType: method. The following example shows how earcons are set in a sample application:

- (void)setEarcons {

// Set the earcons to play

SKEarcon *earconStart = [SKEarcon earconWithName:@"earcon_listening.wav"];

SKEarcon *earconStop = [SKEarcon earconWithName:@"earcon_done_listening.wav"];

SKEarcon *earconCancel = [SKEarcon earconWithName:@"earcon_cancel.wav"];

[SpeechKit setEarcon:earconStart forType:SKStartRecordingEarconType];

[SpeechKit setEarcon:earconStop forType:SKStopRecordingEarconType];

[SpeechKit setEarcon:earconCancel forType:SKCancelRecordingEarconType];

}

When the code block above is called (after you have set up the Speech Kit framework with the setupWithID:host:port: method), the earcon_listening.wav audio file is played before recording starts, and the earcon_done_listening.wav audio file is played when recording is complete. If the recording session is canceled, the earcon_cancel.wav audio file is played for the user. The earconWithName: method works only with audio files in formats that the device supports.

Sound level display

In some cases, especially during prolonged dictation, it is convenient to give users a visual indication of the sound power of their speech. The recording interface supports this through the audioLevel property, which returns the relative power level of the recorded audio in decibels. The value is a floating-point number between 0.0 and -90.0 dB, where 0.0 is the highest power level and -90.0 is the lower limit of sound power. Read this property during recording, that is, between the recognizerDidBeginRecording: and recognizerDidFinishRecording: messages. In general, you will need a timer mechanism, such as performSelector:withObject:afterDelay:, to update the displayed power level regularly.
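To drive a level meter, the decibel value has to be converted into something a UI control can display. Here is a minimal sketch in plain C (valid in an Objective-C source file as well), assuming the -90.0 to 0.0 dB range described above. The function name meter_fraction_from_db and the linear mapping are illustrative assumptions and are not part of Speech Kit.

```c
/* Bounds of the reported level in dB, as described in the text. */
#define AUDIO_LEVEL_MIN_DB (-90.0f)
#define AUDIO_LEVEL_MAX_DB (0.0f)

/* Maps a level in dB to a meter fraction in [0.0, 1.0].
 * Values outside the documented range are clamped.
 * Assumption: a linear mapping is good enough for a simple meter. */
float meter_fraction_from_db(float level_db)
{
    if (level_db <= AUDIO_LEVEL_MIN_DB) {
        return 0.0f;
    }
    if (level_db >= AUDIO_LEVEL_MAX_DB) {
        return 1.0f;
    }
    return (level_db - AUDIO_LEVEL_MIN_DB) /
           (AUDIO_LEVEL_MAX_DB - AUDIO_LEVEL_MIN_DB);
}
```

The returned fraction could, for example, be assigned to a progress-style control each time the timer fires.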

Text to Speech

The SKVocalizer class provides developers with a network interface for speech synthesis.

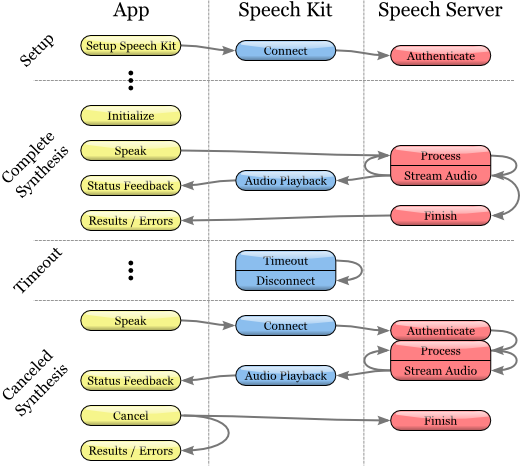

Speech synthesis process

Initializing the speech synthesis process

1. Before using the speech synthesis service, make sure that the Speech Kit framework has been set up with the setupWithID:host:port: method.

2. Then create and initialize an SKVocalizer object to convert text to speech:

vocalizer = [[SKVocalizer alloc] initWithLanguage:@"en_US"

delegate:self];

3. The initWithLanguage:delegate: method initializes the speech synthesis service for the specified language.

The language parameter is an NSString * that defines the language as an ISO 639 language code, an underscore “_”, and an ISO 3166-1 country code. For example, English as used in the USA is en_US. Each supported language has one or more unique voices, male or female.

Note

An updated list of supported languages for speech synthesis is available at dragonmobile.nuancemobiledeveloper.com/faq.php . The list is updated whenever new languages are supported. New languages do not necessarily require an update of the existing Dragon Mobile SDK.

The delegate parameter defines the object that receives status and error messages from the speech synthesizer.

4. The initWithLanguage:delegate: method uses the default voice selected by Nuance. To select a different voice, use the initWithVoice:delegate: method instead.

The voice parameter is an NSString * that names the voice model. For example, Samantha is a US English voice.

Note

An updated list of supported voices, mapped to the supported languages, is available at dragonmobile.nuancemobiledeveloper.com/faq.php .

5. To begin converting text to speech, use the speakString: or speakMarkupString: method. These methods send the requested string to the speech server and start streaming and playing the audio on the device.

[vocalizer speakString:@"Hello world."];

Note

The speakMarkupString: method is used in exactly the same way as the speakString: method, except that the NSString * contains speech synthesis markup that describes the speech to be synthesized. A detailed discussion of speech synthesis markup languages is outside the scope of this document; more information on the topic is available from the W3C at www.w3.org/TR/speech-synthesis .

Speech synthesis is a network service, so the methods mentioned above are asynchronous and, in general, errors are not reported immediately. Any errors are delivered as messages to the delegate.

Handling speech synthesis feedback

Synthesized speech does not start playing instantly. There is usually a short delay while the request is sent to the speech service and processed. The optional delegate method vocalizer:willBeginSpeakingString: helps coordinate the user interface by indicating when the audio is about to start playing.

- (void)vocalizer:(SKVocalizer *)vocalizer willBeginSpeakingString:(NSString *)text {

// Update the user interface to indicate that speech has started playing

}

The NSString * in the message is a reference to the original string passed to speakString: or speakMarkupString:, and can be used to track playback when several text-to-speech requests are made one after another.

When speech ends, a vocalizer:didFinishSpeakingString:withError: message is sent. This message is always sent, both when playback completes successfully and when an error occurs. On success, the error is nil.

- (void)vocalizer:(SKVocalizer *)vocalizer didFinishSpeakingString:(NSString *)text withError:(NSError *)error {

if (error) {

// Display an error dialog for the user

} else {

// Update the user interface to indicate that playback has completed

}

}
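The two callbacks above can be combined to keep track of which requests are still pending when several strings are submitted one after another. The sketch below is plain C (usable from Objective-C) and is not part of Speech Kit: the SpeechQueue type and its functions are hypothetical, and it assumes that completion messages arrive in the same order in which the strings were submitted.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define MAX_PENDING 8

/* Hypothetical FIFO of strings that were sent for synthesis and have not
 * finished playing yet. It stores pointers only, so the caller must keep
 * the strings alive while they are queued. */
typedef struct {
    const char *items[MAX_PENDING];
    size_t head;
    size_t count;
} SpeechQueue;

void speech_queue_init(SpeechQueue *q)
{
    q->head = 0;
    q->count = 0;
}

/* Call when a string is submitted for synthesis.
 * Returns false if the queue is full or the text is NULL. */
bool speech_queue_push(SpeechQueue *q, const char *text)
{
    if (text == NULL || q->count == MAX_PENDING) {
        return false;
    }
    q->items[(q->head + q->count) % MAX_PENDING] = text;
    q->count++;
    return true;
}

/* Call from the completion callback. Removes the oldest pending string
 * if it equals `text` and returns true; otherwise leaves the queue
 * unchanged and returns false. */
bool speech_queue_finish(SpeechQueue *q, const char *text)
{
    if (text == NULL || q->count == 0 ||
        strcmp(q->items[q->head], text) != 0) {
        return false;
    }
    q->head = (q->head + 1) % MAX_PENDING;
    q->count--;
    return true;
}
```

In this arrangement, speech_queue_push would be called next to speakString:, and speech_queue_finish from vocalizer:didFinishSpeakingString:withError: with the UTF-8 bytes of the reported string. A count of zero then means that nothing is waiting to be spoken.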

After these steps, all that remains is to debug the service in your application and start using it.

The toolkit itself is called NDEV. In order to get the necessary code (it is not enough) and documentation (there are a lot of it), you need to register on the site in the “cooperation program”. Website:

dragonmobile.nuancemobiledeveloper.com/public/index.php

This is all “hemorrhoids” if the clients of your application are less than half a million and they use the services less than 20 times a day. Immediately after registration you will receive a Silver membership, which will allow you to use these services for free.

Developers are offered step-by-step instructions for introducing speech recognition and synthesis services into their iOS application: The

Toolkit (SDK) contains both the client and server components. The diagram illustrates their interaction at the top level:

The Dragon Mobile SDK consists of various code samples and project templates, documentation, as well as a software platform (framework) that simplifies the integration of voice services into any application.

The Speech Kit framework allows you to easily and quickly add speech recognition and synthesis (TTS, Text-to-Speech) services to applications. This platform also provides access to speech processing components located on the server through asynchronous “clean” network APIs, minimizing overhead and resource consumption.

The Speech Kit platform is a full-featured high-level “framework” that automatically manages all low-level services.

Architecture Speech Kit

main part

At the application level, the developer has two main services available: speech recognition and speech synthesis from text.

The platform performs several coordinated processes:

It fully controls the audio system for recording and playback

The network component manages the connections to the server and automatically reconnects with the elapsed timeout with each new request

The end of speech detector detects when the user has finished speaking and automatically if necessary stops recording

coding component compresses and decompresses audio stream, reducing the bandwidth requirements & Reduction I mean latency.

The server system is responsible for most of the operations involved in the speech processing cycle. The process of speech recognition or synthesis is performed entirely on the server, processing or synthesizing the audio stream. In addition, the server authenticates according to the configuration of the developer.

In this specific article, we will focus on iOS development. The Speech Kit framework can be used just like any standard iPhone software platform, such as Foundation or UIKit. The only difference is that the Speech Kit is a static framework, and is entirely contained in the compilation of your application. The Speech Kit is directly related to some of the key operating components of the iPhone OS, which must be included as interdependent in the application so that they are available while the application is running. In addition to Foundation, you need to add the System Configuration and Audio Toolbox components to the Xcode project:

1. Start by choosing the software group framework within your project

2. Then right-click or “Frameworks” and click on the menu that appears: Add (Add) ‣ Existing frameworks ...

3. Finally, select the required frameworks and click the Add button. The selected platforms are displayed in the Frameworks folder (see figure above).

To start using the SpeechKit software platform, add it to your new or existing project:

1. Open your project and select the group in which you want the Speech Kit platform to be located, for example: file: Frameworks.

2. From the menu, select Project ‣ Add to Project ...

3. Next, find the SpeechKit.framework framework into which you unpacked the Dragon Mobile SDK tools and select Add.

4. To make sure that the Speech Kit is in your project and does not refer to the original location, select Copy items ... and then Add.

5. As you can see, the Speech Kit platform has been added to your project, which you can expand to access Public Headers.

Platforms Needed for the Speech Kit

The Speech Kit framework provides one top-level header that provides access to the complete application programming interface (API), up to and including classes and constants. You need to import the Speech Kit headers into all the source files where you are going to use the Speech Kit services:

#import

Now you can start using the services of recognition and conversion of text to speech (speech synthesis).

The Speech Kit platform is a network service and needs some basic settings before using speech recognition or speech synthesis classes.

This installation performs two basic operations:

First, it defines and authorizes your application.

Secondly, - establishes a connection with the voice server, - this allows you to make quick requests for voice processing and, therefore, improves the quality of user service.

Note

The specified network connection requires authorization of credentials and server settings specified by the developer. The necessary permissions are provided through the Dragon Mobile SDK portal:dragonmobile.nuancemobiledeveloper.com .

Installing Kit Setup The

SpeechKitApplicationKey application key is requested by the software platform and must be installed by the developer. The key acts as the password for your application for speech processing servers and must be kept secret to prevent misuse.

Your unique credentials, including the Application Key, which is provided through the developer portal, involve several additional lines of code to set these rights. Thus, the process boils down to copying and pasting lines in the source file. You need to install your application key before initializing the Speech Kit system. For example, you can configure the application key as follows:

const unsigned char [] SpeechKitApplicationKey = {0x12, 0x34, ..., 0x89};

The installation method, setupWithID: host: port, contains 3 parameters:

Application ID

Server Address

Port

The ID parameter identifies your application and is used in conjunction with your application key, providing authorization for access to voice servers.

The host and port parameters are set by the speech server, which can vary from application to application. Therefore, you should always use the values specified by the authentication parameters.

The framework is configured using the following example:

[SpeechKit setupWithID: @ "NMDPTRIAL_Acme20100604154233_aaea77bc5b900dc4005faae89f60a029214d450b"

host: @ "10.0.0.100"

port: 443];

Note

The setupWithID: host: port method is a class method and does not generate an object (instance). This method is intended for a one-time call while the application is running, it sets up the main network connection. This is an asynchronous method that runs in the background, establishes a connection, and performs authorization. The method does not report a connection / authorization error. The success or failure of this installation becomes known using the SKRecognizer and SKVocalizer classes.

At this point, the voice server is fully configured, and the platform begins to establish a connection. This connection will remain open for some time, guaranteeing that subsequent voice requests are processed promptly until voice services are actively used. If the connection times out, it is interrupted, but will be restored automatically at the same time as the next voice request.

The application is configured and ready to recognize and synthesize speech.

Speech recognition

Recognition technology allows users to dictate instead of typing where text input is usually required. The speech recognizer provides a list of text results. It is not tied to any user interface (UI) object in any way, therefore the selection of the most suitable result and the selection of alternative results remains at the discretion of the user interface of each application.

Speech Recognition Process

Initiating the Speech Recognition Process

1. Before using the Speech Recognition service, make sure that the original Speech Kit platform is configured by using the setupWithID: host: port method.

2. Then create and initialize the SKRecognizer object:

3. recognizer = [[SKRecognizer alloc] initWithType: SKSearchRecognizerType

4. detection: SKShortEndOfSpeechDetection

5. language: @ "en_US"

6. delegate: self];

7. The initWithType: detection: language: delegate method initializes the recognizer and starts the speech recognition process.

The typical parameter is NSString *, this is one of the typical recognition constants defined by the Speech Kit platform and accessible through the SKRecognizer.h header. Nuance may provide you with other values for your unique recognition needs, in which case you will need to add the NSString extension.

The detection parameter defines the “speech ending detection” model and must match one of the SKEndOfSpeechDetection types.

The language parameter defines the language of speech as a string in the format of the ISO 639 language code, followed by the underscore “_”, followed by the country code in the ISO 3166-1 format.

Note

For example, English that is spoken in the United States is en_US. An updated list of supported recognition languages is available on the FAQ: dragonmobile.nuancemobiledeveloper.com/faq.php .

8. The delegate receives a recognition result or error message as described below.

Obtaining recognition results

To obtain recognition results, refer to the delegizer delegation method: didFinishWithResults:

- (void) recognizer: (SKRecognizer *) recognizer didFinishWithResults: (SKRecognition *) results {

[recognizer autorelease];

// perform any actions on the results

}

The delegation method will be applied only upon successful completion of the process, the list of results will contain zero or more results. The first result can always be found using the firstResult method. Even in the absence of an error, a tip (suggestion) from the speech server may be present in the object of recognition results. Such advice (suggestion) should be presented to the user.

Error processing

To get information about any recognition errors, use the delegation method recognizer: didFinishWithError: suggestion :. In case of an error, only this method will be called; on the contrary, if successful, this method will not be called. In addition to the error, as mentioned in the previous section, advice may or may not be present as a result.

- (void) recognizer: (SKRecognizer *) recognizer didFinishWithError: (NSError *) error suggestion: (NSString *) suggestion {

[recognizer autorelease];

// informing the user about the error and advice

}

Managing the recording stages

If you want to receive information about when the recognizer starts or stops recording audio, use the delegation methods recognizerDidBeginRecording: and recognizerDidFinishRecording :. So, there may be a delay between the initialization of recognition and the actual start of recording, and the message recognizerDidBeginRecording: may signal to the user that the system is ready to listen.

- (void) recognizerDidBeginRecording: (SKRecognizer *) recognizer {

// Update the UI to indicate that the system is recording

}

The recognizerDidFinishRecording: message is sent before the speech server finishes receiving and processing the audio file, and therefore before it becomes result available.

- (void) recognizerDidFinishRecording: (SKRecognizer *) recognizer {

// Update UI to indicate that the recording has stopped and speech is still being processed

}

This message is sent regardless of whether there is a model for detecting the end of the recording. The message is sent the same way when the stopRecording method is called, and by a signal to detect the end of recording.

Setting “sound icons” (signals)

In addition, “sound icons” can be used to play sound signals before and after recording, and also after canceling a recording session. You need to create an SKEarcon object and set the setEarcon: forType: method of the Speech Kit platform for it. The following example demonstrates how to set “sound icons” in an example application

- (void) setEarcons {

// Play “sound icons”

SKEarcon * earconStart = [SKEarcon earconWithName: @ "earcon_listening.wav"];

SKEarcon * earconStop = [SKEarcon earconWithName: @ "earcon_done_listening.wav"];

SKEarcon * earconCancel = [SKEarcon earconWithName: @ "earcon_cancel.wav"];

[SpeechKit setEarcon: earconStart forType: SKStartRecordingEarconType];

[SpeechKit setEarcon: earconStop forType: SKStopRecordingEarconType];

[SpeechKit setEarcon: earconCancel forType: SKCancelRecordingEarconType];

}

When a code block of a higher level is called (after you have configured the Speech Kit main software platform using the setupWithID: host: port method), the earcon_listening.wav audio file is played before the recording process starts, and the earcon_done_listening.wav audio file is played when the recording is complete. If the recording session is canceled, the earcon_cancel.wav audio file is played for the user. The method ``earconWithName: works only for audio files that are supported by the device.

Sound level display

In some cases, especially during prolonged dictation, it is convenient to provide the user with a visual display of the sound power of his speech. The sound recording interface supports this feature by using the audioLevel attribute, which returns the relative power level of recorded sound in decibels. The range of this value is characterized by a floating point and lies between 0.0 and -90.0 dB where 0.0 is the highest power level and -90.0 is the lower limit of sound power. This attribute must be available during recording, in particular, between the receipt of messages recognizerDidBeginRecording: and recognizerDidFinishRecording :. In general, you will need to use a timer method, such as performSelector: withObject: afterDelay: to regularly display the power level.

Text to Speech

The SKVocalizer class provides developers with a network interface for speech synthesis.

Speech synthesis process

Initializing the speech synthesis process

1. Before using the speech synthesis service, make sure that the Speech Kit framework has been configured using the setupWithID:host:port: method.

2. Then create and initialize an SKVocalizer object to convert text to speech:

    vocalizer = [[SKVocalizer alloc] initWithLanguage:@"en_US"
                                             delegate:self];

The initWithLanguage:delegate: method initializes the speech synthesis service for the specified language.

The language parameter is an NSString * that specifies the language as an ISO 639 language code, an underscore “_”, and an ISO 3166-1 country code. For example, English as used in the USA is en_US. Each supported language has one or more unique voices, male or female.

Note

An updated list of supported languages for speech synthesis is available at dragonmobile.nuancemobiledeveloper.com/faq.php . The list is updated whenever support for new languages is added. New languages do not necessarily require an update to the existing Dragon Mobile SDK.

The delegate parameter specifies the object that receives status and error messages from the speech synthesizer.

The initWithLanguage:delegate: method uses the default voice selected by Nuance. To select a different voice, use the initWithVoice:delegate: method instead.

The voice parameter is an NSString * that specifies the voice model. For example, the default voice for British English is Samantha.

Note

An updated list of supported voices, mapped to the supported languages, is available at dragonmobile.nuancemobiledeveloper.com/faq.php .

3. To start converting text to speech, use the speakString: or speakMarkupString: method. These methods send the given string to the speech server and start streaming and playing the audio on the device:

    [vocalizer speakString:@"Hello world."];

Note

The speakMarkupString: method is used in exactly the same way as speakString:, the only difference being that its NSString * argument contains text written in a speech synthesis markup language, which describes how the speech should be synthesized. A detailed discussion of speech synthesis markup languages is outside the scope of this document; however, you can find more information on this topic from the W3C at www.w3.org/TR/speech-synthesis .

Speech synthesis is a network service, so the methods mentioned above are asynchronous: in general, errors are not reported immediately. Any errors are delivered as messages to the delegate.

Speech synthesis system feedback control

Synthesized speech does not start playing instantly. There is usually a short delay while the request is sent to the speech service and the audio is streamed back. The optional delegate method vocalizer:willBeginSpeakingString: lets you coordinate the user interface by indicating when the audio is about to start playing.

- (void)vocalizer:(SKVocalizer *)vocalizer willBeginSpeakingString:(NSString *)text {
    // Refresh the user interface to indicate that speech has started playing
}

The NSString * passed in this message is a reference to the original string given to speakString: or speakMarkupString:. It can be used to keep track of playback when several text-to-speech requests are made one after another.

When the speech finishes, a vocalizer:didFinishSpeakingString:withError: message is sent. This message is always sent, both when playback completes successfully and when an error occurs. On success, the error is nil.

- (void)vocalizer:(SKVocalizer *)vocalizer didFinishSpeakingString:(NSString *)text withError:(NSError *)error {
    if (error) {
        // Display an error dialog for the user
    } else {
        // Update the user interface to indicate that playback has completed
    }
}

After these steps, all that remains is to debug the corresponding service and start using it.