Rewriting Internet Content

Hello, dear Habr readers!

This article continues the introductory piece on personalizing the Internet. It briefly describes the technology behind the company’s Internet personalization products.

Avvea has developed a technology for rewriting the content of dynamic web pages. The approach itself is not new and is known as a reverse proxy. Examples of high-quality commercial reverse proxy servers are the products of F5 and Juniper. Each company's reverse proxy technology has been in development for over a decade and is aimed at supporting a limited number of complex applications for corporate clients.

An example of an amateur-level reverse proxy is the freeware Glype. There are many such servers; the most popular among them are the so-called anonymizers.

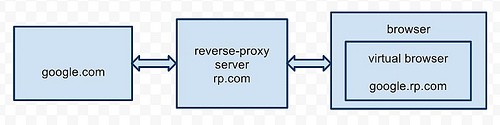

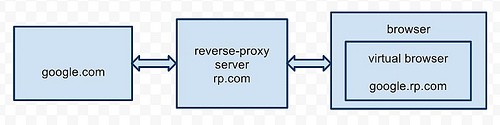

Let's consider some technical features. The main task of a reverse proxy server is to create a virtual layer between the browser interface and the client program code. The better this task is solved, the better the resulting reverse proxy server.

Our technology involves intercepting and rewriting all interface properties and methods of all active elements of a web page (HTML, JavaScript, Adobe Flash, Java and others). Thus, a kind of "virtual browser" is created inside the real browser.

HTML is the simple case: even free anonymizers do a good job of rewriting static HTML. With JavaScript, for example, things are much more complicated. Until now, there has been no unified approach to this problem. Our development has changed the situation.
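To make the contrast concrete, here is a minimal sketch of the "simple" static HTML case: link attributes are rewritten so that every request goes back through the proxy. This is not Avvea's code. The proxy prefix `https://proxy.example/fetch?url=` and the function name `rewrite_html` are assumptions made for illustration, and a regular expression is used only to keep the example short.

```python
import re
from urllib.parse import quote, urljoin

# Hypothetical proxy entry point; not taken from the article.
PROXY_PREFIX = "https://proxy.example/fetch?url="

# Matches quoted href/src/action attributes (a simplification of real HTML).
ATTR_RE = re.compile(
    r"""(?P<attr>\b(?:href|src|action)\s*=\s*)(?P<q>["'])(?P<url>.*?)(?P=q)""",
    re.IGNORECASE,
)


def rewrite_html(html: str, base_url: str) -> str:
    """Point every matched URL attribute at the proxy."""

    def repl(match: re.Match) -> str:
        # Resolve relative links against the page URL, then encode the result
        # so it can travel as a single query parameter.
        absolute = urljoin(base_url, match.group("url"))
        proxied = PROXY_PREFIX + quote(absolute, safe="")
        return f'{match.group("attr")}{match.group("q")}{proxied}{match.group("q")}'

    return ATTR_RE.sub(repl, html)
```

This works only because the URLs are visible in the markup. A URL assembled at run time by a script never appears in the HTML, which is why JavaScript needs a different treatment.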

We will illustrate the main idea of the new approach using the JavaScript rewriting engine of our reverse proxy server. Schematically, the engine consists of three parts: a lexer, a parser and a patcher, each of which is an independent element of the system.

The lexer is the syntactic foundation of the engine; it is responsible for recognizing language elements in a stream of characters. The parser, building on the lexer, breaks the input stream into components: variables, functions, operations, constructs of the language itself, and so on. The patcher applies patches to the interface properties and methods that were found.
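The division of labour described above can be sketched in a few lines. This is a toy illustration, not the actual engine: the token grammar is heavily simplified, the "parser" is reduced to remembering the previous significant token, and the `__vb` object (standing in for the "virtual browser") and the `VIRTUAL` set are assumptions.

```python
import re
from typing import Iterator, NamedTuple, Optional


class Token(NamedTuple):
    kind: str
    text: str


# Lexer: recognizes language elements in a stream of characters.
TOKEN_RE = re.compile(
    r"""
    (?P<ws>\s+)
  | (?P<string>"(?:\\.|[^"\\])*"|'(?:\\.|[^'\\])*')
  | (?P<number>\d+(?:\.\d+)?)
  | (?P<ident>[A-Za-z_$][\w$]*)
  | (?P<punct>.)
    """,
    re.VERBOSE | re.DOTALL,
)

# Hypothetical set of browser interface objects to intercept.
VIRTUAL = {"window", "document", "location"}


def lex(source: str) -> Iterator[Token]:
    for match in TOKEN_RE.finditer(source):
        yield Token(match.lastgroup, match.group())


def patch(tokens: Iterator[Token]) -> Iterator[str]:
    """Redirect bare references to intercepted objects to __vb."""
    prev: Optional[Token] = None  # previous non-whitespace token
    for tok in tokens:
        is_property = prev is not None and prev.text == "."
        if tok.kind == "ident" and tok.text in VIRTUAL and not is_property:
            yield "__vb." + tok.text
        else:
            yield tok.text
        if tok.kind != "ws":
            prev = tok


def rewrite(source: str) -> str:
    return "".join(patch(lex(source)))
```

For example, `rewrite('var u = document.location.href;')` yields `var u = __vb.document.location.href;`, while the string literal in `s = "location"` is left alone because the lexer classifies it as a string. A sketch this small gets cases such as object literal keys (`{location: 1}`) wrong, which is exactly the kind of context a real parser has to supply.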

A few words about how this works. Input streams are processed expression by expression, where an expression is an elementary construction of user program code that the parser treats as indivisible. The parser uses a specialized two-stack disassembly/assembly method: one stack holds operands, the other holds operations with their priorities. One stack-filling cycle corresponds to one expression. Thanks to this, the system is single-pass and streaming, which means that code is delivered to the browser as it downloads. That is, expressions found at the beginning are processed as soon as they appear in full in the patcher buffer, without waiting for the whole code to load. All this had a positive effect on the performance and “lightness” of the system. In combination with reasonable caching of patched code, you can sometimes observe the effect when a site rewritten by a reverse proxy server
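The two-stack idea can be sketched as follows. This is an illustration under strong assumptions rather than the real parser: the grammar is limited to identifiers, integers and the left-associative operators `+ - * / .`, expressions end at `;`, and the names `StreamingPatcher`, `INTERCEPTED` and `__vb.get` are hypothetical.

```python
import re
from typing import Iterator, List

# Operator priorities; member access binds tightest.
PRIORITY = {"+": 1, "-": 1, "*": 2, "/": 2, ".": 3}
# Hypothetical interface properties the patcher intercepts.
INTERCEPTED = {"cookie", "href"}
TOKEN_RE = re.compile(r"\s*([A-Za-z_$][\w$]*|\d+|[-+*/.])")


class StreamingPatcher:
    def __init__(self) -> None:
        self.buffer = ""               # text received but not yet processed
        self.operands: List[str] = []  # stack 1: operands
        self.operators: List[str] = [] # stack 2: pending operations

    def _reduce(self) -> None:
        """Assemble one operation from the tops of both stacks."""
        op = self.operators.pop()
        right = self.operands.pop()
        left = self.operands.pop()
        if op == "." and right in INTERCEPTED:
            self.operands.append(f'__vb.get({left}, "{right}")')  # patched access
        elif op == ".":
            self.operands.append(f"{left}.{right}")
        else:
            self.operands.append(f"{left} {op} {right}")

    def _process(self, expr: str) -> str:
        """One stack-filling cycle: disassemble and reassemble one expression."""
        for tok in TOKEN_RE.findall(expr):
            if tok in PRIORITY:
                # Reduce everything that binds at least as tightly first.
                while self.operators and PRIORITY[self.operators[-1]] >= PRIORITY[tok]:
                    self._reduce()
                self.operators.append(tok)
            else:
                self.operands.append(tok)
        while self.operators:
            self._reduce()
        return self.operands.pop() if self.operands else ""

    def feed(self, chunk: str) -> Iterator[str]:
        """Accept a downloaded chunk; yield each expression once it is complete."""
        self.buffer += chunk
        while ";" in self.buffer:
            expr, self.buffer = self.buffer.split(";", 1)
            yield self._process(expr) + ";"
```

Feeding the chunks `"a + document.coo"`, `"kie * 2; b.hr"` and `"ef;"` in turn produces nothing for the first chunk, then `a + __vb.get(document, "cookie") * 2;`, then `__vb.get(b, "href");`. Each expression is emitted as soon as its terminator arrives, regardless of how much code is still to come.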

Thus, for the first time, a reverse proxy server has been created that works correctly with sites of the real Internet rather than with a limited set of browser applications. This means the technology can already be used successfully.

We will describe how to use our technology to solve specific problems in more detail in the following articles.
