Memory on demand

Memory on demand - automatic allocation of memory to a virtual machine as necessary.

I wrote a little earlier about this idea: Managing the memory of a guest machine in the cloud . Then it was a theory and some sketches with evidence that this idea would work.

Now that this technology has gained practical implementation, and the client and server parts are ready and (seemingly) debugged, we can talk not about the idea, but about how it works. Both from the user and server part. At the same time, we’ll talk about what didn’t turn out as perfect as we would like.

The essence of memory on demand technologyis to provide the guest with the amount of memory that he needs at any given moment in time. Changing the memory volume occurs automatically (without the need to change something in the control panel), on the go (without rebooting) and in a very short time (of the order of a second or less). To be precise, the amount of memory allocated by the applications and the kernel of the guest OS is allocated, plus a small amount of cache.

Technically, for Xen Cloud Platform this is organized very simply: in the guest machine we have an agent (self-written, because regular utilities are too gluttonous and inconvenient), written in C. I chose what to write on - shell, python or C. The shell said simplicity of implementation (5 lines), for python - the reliability and beauty of the code. But C won (about 150 lines of code) for two reasons: information about the state of the machine needs to be sent often - and it would be dishonest to "eat" someone else's machine time and someone else's memory in a convenient and beautiful code (instead of not very elegant, but very fast code on Si).

Against the python, among other things, it was also said that it was not in the minimal installation of Debian.

The server part (actually, deciding how much memory is needed and allocating it) is still in python - there are not very many mathematics, but there are a lot of boring operations for C related to converting from strings to numbers, working with lists, dictionaries, etc. In addition, this machine consumes office resources and does not affect the costs of customers.

The server part receives data from the guest system and changes the memory size of the guests according to the memory management policy. The policy is determined by the user (from the control panel or through the guest API).

There are no perfect things. Memory on demand also has its own problems - and I think that talking about them in advance is better than dumbing the client post-factum.

Scripts that regulate memory do not monitor requests to the OS, they only monitor performance in the OS itself. In other words, if someone asks the OS for a couple of gigabytes of memory at a time, then they may be denied. But if he asks at intervals of 10 times 200, then they will give it completely. As tests have shown, this is exactly the case in a server environment - memory consumption increases with the fork of daemons and load growth, and grows at a completely finite speed (so that the mod-server manages to fill up memory before the next major request).

Another insurance against this is a swap. Those who are used to working on openVZ-based VDSs will probably be surprised. Those who are used to Xen or to ordinary machines will not even pay attention to it. Yes, there is a swap in virtual machines. And it is even used! After some time, the machine (in real conditions, and not in the laboratory doing nothing) in the swap is several hundred megabytes of data.

Fortunately, Linux is very, very neat with a swap, and throws only unused data there (and you can control this behavior using vm.swapiness).

So, the main task of a swap in conditions of Memory on demand is to insure against too fast / thick requests. Such requests will be successfully processed without oom_killer, albeit at the cost of some brakes. The user also has the opportunity to influence this behavior through policies.

If aperson program in the guest system asked for a lot of memory at a time and some of the underutilized code was thrown into a swap, then the mod-server (MOD = Memory On Demand) is triggered, which throws memory. Enough to clear the swap, but Linux is a lazy creature, and unloading unused data from the swap is in no hurry. Due to this, a large amount of memory is given under the disk cache (increased performance). If Linux needs something from a swap, then the memory is ready to accept this data.

The second drawback is more fundamental. Dynamic memory management requires ... memory. Yes, and quite a lot. For 256 MB, this is about 12 MB of overhead, for 512 - about 20, for 2 GB - about 38, for 6 GB - about 60 MB of overhead. The overhead is "eaten up" either by the hypervisor, or by the core of the guest system ... It does not even appear in 'free' in TotalMem.

You might think that this overhead is not very big. However, if you have a 5GB supply, and the actual consumption is 200, then you will have an overhead of 50MB (i.e., pay for + 25% of the memory for the right to grow up to 5GB). If you lower the ceiling to 2 GB, then the overhead will decrease to 10% of memory at 256 bases.

Thus, an overhead is a payment for the willingness to receive a lot of memory from the hypervisor. For our part (mercantile, self-serving, etc.) this is a small insurance that a person will not just reserve 64 GB of memory for himself (he will have to have an overhead of about 6 GB, which is quite expensive for a machine with 200 MB consumption). But to make a typewriter with an interval of 300-2 GB is just that. The overshot is small, there is a margin of memory.

Another drawback is that you can change the upper memory limit only with a reboot.

Anlim (any amount of memory at the first request - emphasis on the words "any") is not and never will be. There are several reasons.

Firstly, we do not physically have 500 GB of memory right for you, right here and now for a single virtual machine. Even if you ask. So many memory slots in the server does not fit. Secondly, the technology itself requires (at the moment) the presence of a ceiling, and, preferably, not much higher than average consumption (no more than one and a half orders of magnitude, with large numbers, the overhead grows strongly, there was more about it, the stock up to 500 GB will gobble up you a gigabyte of 30 memory "nowhere" - an expensive pleasure is obtained).

They promised anlim. But they didn’t. More precisely, formally it can be done within the capabilities of the cloud host, but such ranges (128-48 GB) are not economically feasible.

Alas, a beautiful picture with a complete absence of the upper memory bar failed. But it was possible to implement the technology of payment for consumption. If you (your VM) consume little memory, then little money is paid. And there is a reserve for the case of "crazy habr effect" with swelling Apaches.

A special conversation with the cache. We cannot set the memory capacity of a virtual machine strictly by consumption, because cache is still needed. Despite the fact that before physical screws there are two levels of caching and tens of gigabytes of memory with cache (general purpose). Its local cache allows you to reduce the number of disk operations (and they, by the way, are paid separately).

Thus, we can reserve a small amount of memory in the machine, which, on the one hand, serves requests for new memory inside the virtual machine (i.e., reduces the number of memory quota operations on the machine), and on the other hand, is used as a disk cache. Since we monitor the amount of memory in the guest, there is almost always memory for the cache, except for the moments of sharp peak memory consumption (this is visible on the graph).

Will not work. Modern cores want a lot of memory, so even a 64MB digit won't suit them. As the test showed, it makes sense to talk about numbers from 96MB, or, with a slight correction for cache, from 128MB. If you try to trim the memory below this value (we don’t provide this opportunity for users, but I tried it in the laboratory), it turns out very badly - the kernel starts to panic, start to do stupid things. Thus, the reasonable limit fixed in our interface is a conscious decision after the tests, and not a ban on saving.

Another potential problem may be applications whose strategy is to use the entire available amount of memory. In this situation, bad recursion is enabled:

This recursion will end at the moment when the MOD server cannot increase the amount of memory (due to the upper limit).

I know that this is how Exchange 2007 and higher behave, some versions of SQL servers. What to do in such a situation?

I do not know which approach will be better, practice will show.

Well, there’s no special secret.

At the moment, memory management is carried out according to a very clumsy algorithm with three modes (init / run / stop) and simple hysteresis, in the near future, writing an algorithm with an eye on the statistics of previous requests and adjusting the level of optimism for memory allocation.

PS It was planned to add asys .. to the graphs of consumption and memory allocation, however, making a stand where it was not quite synthetic was more difficult than I thought, so the schedules will be in a few days.

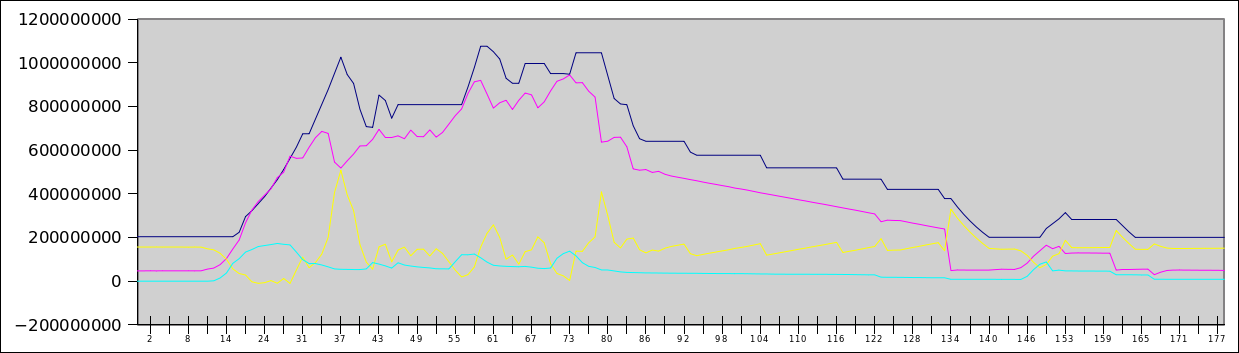

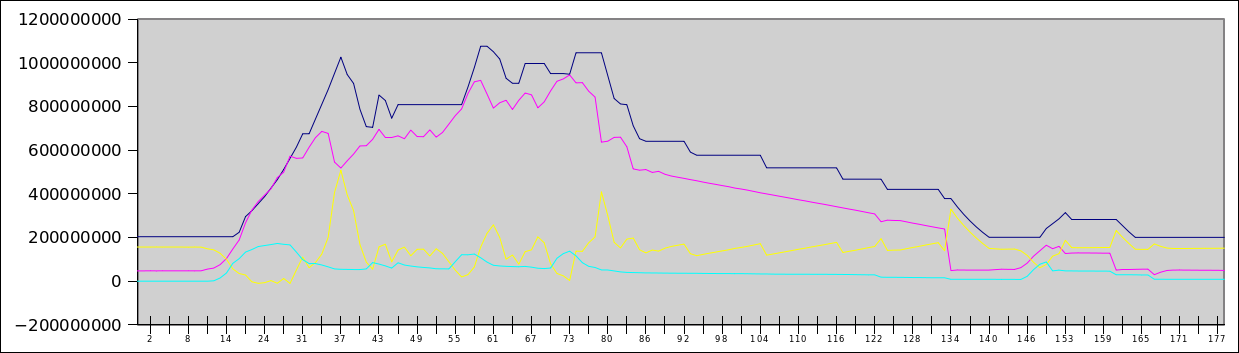

PPS As a teaser - a graph of the habra effect for 140 users who are simultaneously walking around the site. The blue line is the allocated memory, the red is the occupied memory, the yellow is free, the cyan is the swap file. By Y - bytes (i.e. top - 1.2 GB), by X - seconds from the moment the test starts.

I wrote a little earlier about this idea: Managing the memory of a guest machine in the cloud . Then it was a theory and some sketches with evidence that this idea would work.

Now that this technology has gained practical implementation, and the client and server parts are ready and (seemingly) debugged, we can talk not about the idea, but about how it works. Both from the user and server part. At the same time, we’ll talk about what didn’t turn out as perfect as we would like.

The essence of memory on demand technologyis to provide the guest with the amount of memory that he needs at any given moment in time. Changing the memory volume occurs automatically (without the need to change something in the control panel), on the go (without rebooting) and in a very short time (of the order of a second or less). To be precise, the amount of memory allocated by the applications and the kernel of the guest OS is allocated, plus a small amount of cache.

Technically, for Xen Cloud Platform this is organized very simply: in the guest machine we have an agent (self-written, because regular utilities are too gluttonous and inconvenient), written in C. I chose what to write on - shell, python or C. The shell said simplicity of implementation (5 lines), for python - the reliability and beauty of the code. But C won (about 150 lines of code) for two reasons: information about the state of the machine needs to be sent often - and it would be dishonest to "eat" someone else's machine time and someone else's memory in a convenient and beautiful code (instead of not very elegant, but very fast code on Si).

Against the python, among other things, it was also said that it was not in the minimal installation of Debian.

The server part (actually, deciding how much memory is needed and allocating it) is still in python - there are not very many mathematics, but there are a lot of boring operations for C related to converting from strings to numbers, working with lists, dictionaries, etc. In addition, this machine consumes office resources and does not affect the costs of customers.

The server part receives data from the guest system and changes the memory size of the guests according to the memory management policy. The policy is determined by the user (from the control panel or through the guest API).

There are no perfect things. Memory on demand also has its own problems - and I think that talking about them in advance is better than dumbing the client post-factum.

disadvantages

Asynchronous memory allocation

Scripts that regulate memory do not monitor requests to the OS, they only monitor performance in the OS itself. In other words, if someone asks the OS for a couple of gigabytes of memory at a time, then they may be denied. But if he asks at intervals of 10 times 200, then they will give it completely. As tests have shown, this is exactly the case in a server environment - memory consumption increases with the fork of daemons and load growth, and grows at a completely finite speed (so that the mod-server manages to fill up memory before the next major request).

Another insurance against this is a swap. Those who are used to working on openVZ-based VDSs will probably be surprised. Those who are used to Xen or to ordinary machines will not even pay attention to it. Yes, there is a swap in virtual machines. And it is even used! After some time, the machine (in real conditions, and not in the laboratory doing nothing) in the swap is several hundred megabytes of data.

Fortunately, Linux is very, very neat with a swap, and throws only unused data there (and you can control this behavior using vm.swapiness).

So, the main task of a swap in conditions of Memory on demand is to insure against too fast / thick requests. Such requests will be successfully processed without oom_killer, albeit at the cost of some brakes. The user also has the opportunity to influence this behavior through policies.

If a

Overhead

The second drawback is more fundamental. Dynamic memory management requires ... memory. Yes, and quite a lot. For 256 MB, this is about 12 MB of overhead, for 512 - about 20, for 2 GB - about 38, for 6 GB - about 60 MB of overhead. The overhead is "eaten up" either by the hypervisor, or by the core of the guest system ... It does not even appear in 'free' in TotalMem.

You might think that this overhead is not very big. However, if you have a 5GB supply, and the actual consumption is 200, then you will have an overhead of 50MB (i.e., pay for + 25% of the memory for the right to grow up to 5GB). If you lower the ceiling to 2 GB, then the overhead will decrease to 10% of memory at 256 bases.

Thus, an overhead is a payment for the willingness to receive a lot of memory from the hypervisor. For our part (mercantile, self-serving, etc.) this is a small insurance that a person will not just reserve 64 GB of memory for himself (he will have to have an overhead of about 6 GB, which is quite expensive for a machine with 200 MB consumption). But to make a typewriter with an interval of 300-2 GB is just that. The overshot is small, there is a margin of memory.

Another drawback is that you can change the upper memory limit only with a reboot.

Anlim

Anlim (any amount of memory at the first request - emphasis on the words "any") is not and never will be. There are several reasons.

Firstly, we do not physically have 500 GB of memory right for you, right here and now for a single virtual machine. Even if you ask. So many memory slots in the server does not fit. Secondly, the technology itself requires (at the moment) the presence of a ceiling, and, preferably, not much higher than average consumption (no more than one and a half orders of magnitude, with large numbers, the overhead grows strongly, there was more about it, the stock up to 500 GB will gobble up you a gigabyte of 30 memory "nowhere" - an expensive pleasure is obtained).

They promised anlim. But they didn’t. More precisely, formally it can be done within the capabilities of the cloud host, but such ranges (128-48 GB) are not economically feasible.

Alas, a beautiful picture with a complete absence of the upper memory bar failed. But it was possible to implement the technology of payment for consumption. If you (your VM) consume little memory, then little money is paid. And there is a reserve for the case of "crazy habr effect" with swelling Apaches.

Disk cache

A special conversation with the cache. We cannot set the memory capacity of a virtual machine strictly by consumption, because cache is still needed. Despite the fact that before physical screws there are two levels of caching and tens of gigabytes of memory with cache (general purpose). Its local cache allows you to reduce the number of disk operations (and they, by the way, are paid separately).

Thus, we can reserve a small amount of memory in the machine, which, on the one hand, serves requests for new memory inside the virtual machine (i.e., reduces the number of memory quota operations on the machine), and on the other hand, is used as a disk cache. Since we monitor the amount of memory in the guest, there is almost always memory for the cache, except for the moments of sharp peak memory consumption (this is visible on the graph).

Make me a server with 8MB of RAM

Will not work. Modern cores want a lot of memory, so even a 64MB digit won't suit them. As the test showed, it makes sense to talk about numbers from 96MB, or, with a slight correction for cache, from 128MB. If you try to trim the memory below this value (we don’t provide this opportunity for users, but I tried it in the laboratory), it turns out very badly - the kernel starts to panic, start to do stupid things. Thus, the reasonable limit fixed in our interface is a conscious decision after the tests, and not a ban on saving.

I looked back to see if you looked back, stack overflow

Another potential problem may be applications whose strategy is to use the entire available amount of memory. In this situation, bad recursion is enabled:

- The program sees that 32 MB is free

- The program asks the OS for 30 MB

- The MOD agent informs the server that the OS has 2 MB free memory left

- The MOD server throws another 64MB of memory on the guest OS

- The program sees that another 66 MB of memory is free

- The program asks the OS for another 64MB of memory

This recursion will end at the moment when the MOD server cannot increase the amount of memory (due to the upper limit).

I know that this is how Exchange 2007 and higher behave, some versions of SQL servers. What to do in such a situation?

- Disable the ability to allocate memory. As much memory as they put in the socket. Boring decision

- Disable automatic memory allocation, switch to API (need memory, requested). The main problem is that this approach contradicts the idea of memory on demand - automatic memory allocation

- Change program settings (all similar programs allow you to change behavior)

- Change the setting of the memory allocation policy (for example, to make the memory allocation happen when the swap starts to be used).

I do not know which approach will be better, practice will show.

How is this implemented?

Well, there’s no special secret.

xe vm-memory-dyniamic-range-set max=XXX min=YYY uuid=...- and it's in the hat. The very possibility of changing the memory of a virtual machine on the go has been present in Xena a long time ago. However, the existing implementation (xenballoond) was too optimistic (i.e., it reserved a lot of memory for the virtual machine more than necessary) and slow - it did not work out bursts and consumption peaks. In addition, she relied heavily on swaps, which is not a good idea for paid disk operations. Not to mention that the demon himself was written on the shell.Prospects

At the moment, memory management is carried out according to a very clumsy algorithm with three modes (init / run / stop) and simple hysteresis, in the near future, writing an algorithm with an eye on the statistics of previous requests and adjusting the level of optimism for memory allocation.

PS It was planned to add a

PPS As a teaser - a graph of the habra effect for 140 users who are simultaneously walking around the site. The blue line is the allocated memory, the red is the occupied memory, the yellow is free, the cyan is the swap file. By Y - bytes (i.e. top - 1.2 GB), by X - seconds from the moment the test starts.