Google open-sources its robots.txt parser

Today, Google announced a draft RFC for the Robots Exclusion Protocol (REP) standard and, at the same time, made its robots.txt parser available under the Apache License 2.0. Until now there has been no official standard for the Robots Exclusion Protocol and robots.txt (the closest thing to one was an informal document), which left developers and users free to interpret it in their own way. The company's initiative aims to reduce the differences between implementations.

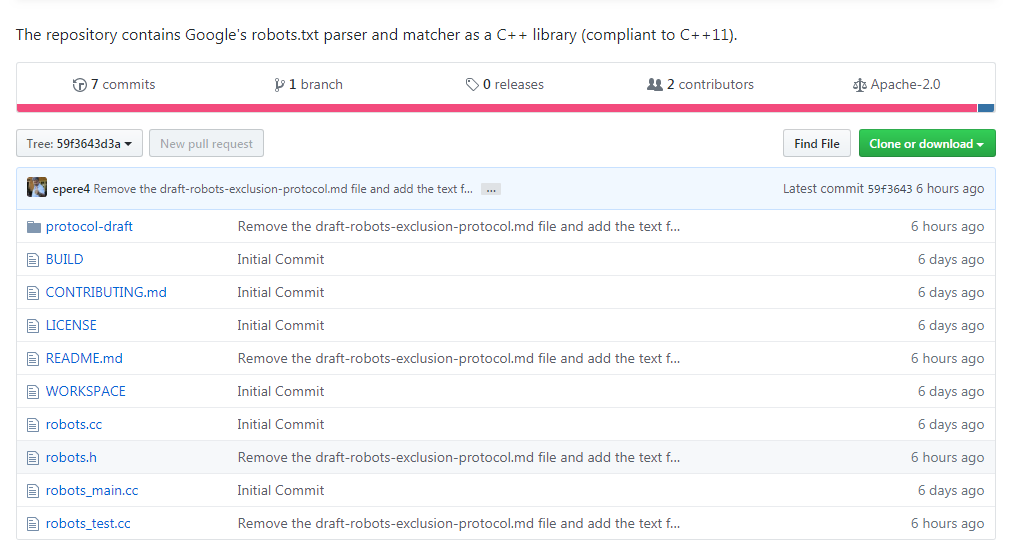

A draft of the new standard is available on the IETF website, and the parser repository is on GitHub at https://github.com/google/robotstxt.

The parser is the same source code Google uses in its production systems (apart from minor edits, such as cleaned-up header files that are only used inside the company), so robots.txt files are parsed exactly the way Googlebot parses them, including how it handles Unicode characters in patterns. The parser is written in C++ and essentially consists of two files. It needs a C++11-compatible compiler, although the library code dates back to the 1990s, so you will find “raw” pointers and `strpbrk` in it. Bazel is the recommended build tool (CMake support is planned for the near future).
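To give a feel for what "parsed exactly like Googlebot" involves, here is a minimal sketch of two such steps. It is not code from the google/robotstxt repository, and `ParseLine` and `EscapeNonAscii` are hypothetical names. It assumes a line has the form `Key: value # comment`, that keys are case-insensitive, and that non-ASCII (UTF-8) bytes in a path pattern are percent-encoded so the pattern can be compared with an encoded URL. The real parser handles many more edge cases than this.

```cpp
#include <cassert>
#include <cctype>
#include <string>

// Hypothetical helper: trims ASCII whitespace from both ends of a string.
static std::string Trim(const std::string& s) {
  size_t begin = 0, end = s.size();
  while (begin < end && std::isspace(static_cast<unsigned char>(s[begin]))) ++begin;
  while (end > begin && std::isspace(static_cast<unsigned char>(s[end - 1]))) --end;
  return s.substr(begin, end - begin);
}

// Hypothetical: splits "Key: value # comment" into a lowercase key and a value.
// Returns false for blank or comment-only lines and for lines without ':'.
// (Assumption: this sketch requires a colon; real parsers may be more lenient.)
bool ParseLine(const std::string& raw, std::string* key, std::string* value) {
  std::string line = raw.substr(0, raw.find('#'));  // drop everything after '#'
  size_t colon = line.find(':');
  if (colon == std::string::npos) return false;
  *key = Trim(line.substr(0, colon));
  *value = Trim(line.substr(colon + 1));
  if (key->empty()) return false;
  for (char& c : *key) {
    c = static_cast<char>(std::tolower(static_cast<unsigned char>(c)));
  }
  return true;
}

// Hypothetical: percent-encodes every byte >= 0x80 (i.e. UTF-8 sequences)
// as %XX with uppercase hex digits; ASCII bytes are copied unchanged.
std::string EscapeNonAscii(const std::string& pattern) {
  static const char kHex[] = "0123456789ABCDEF";
  std::string out;
  for (unsigned char c : pattern) {
    if (c >= 0x80) {
      out += '%';
      out += kHex[c >> 4];
      out += kHex[c & 0x0F];
    } else {
      out += static_cast<char>(c);
    }
  }
  return out;
}
```

For example, `ParseLine("Disallow: /private/ # secret", &k, &v)` yields `k == "disallow"` and `v == "/private/"`, and `EscapeNonAscii` turns the UTF-8 bytes of `é` in `/café` into `%C3%A9`.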

The very idea of robots.txt and the standard belongs to Martijn Koster, who created it in 1994. According to legend, the trigger was a crawler written by Charles Stross that brought down Koster's server with what amounted to a DoS attack. His idea was picked up by others and quickly became the de facto standard for anyone building search engines. Developers who wanted to parse robots.txt the way Google does sometimes had to reverse-engineer Googlebot's behavior; Blekko, for example, wrote its own robots.txt parser in Perl for its search engine.

The code has its amusing moments too: take a look, for example, at how much work goes into handling `disallow`.
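Part of the difficulty is that a single URL path can match several `Allow` and `Disallow` patterns at once. Assuming the resolution rule described in the draft (the most specific, i.e. longest, matching rule wins, and `Allow` is preferred on a tie), a minimal sketch might look like the code below. It is a simplified illustration, not Google's implementation: `Rule`, `Matches` and `IsAllowed` are hypothetical names, `*` matches any run of characters, a trailing `$` anchors the end of the path, an empty pattern (as in a bare `Disallow:`) is treated as matching nothing, and user-agent groups and percent-encoding normalization are ignored.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Hypothetical rule type: one Allow or Disallow line.
struct Rule {
  bool allow;
  std::string pattern;
};

// Returns true if `path` matches `pattern`. Without a trailing '$' the
// pattern is a prefix match. `pos` holds every path index (kept in ascending
// order) at which the pattern characters consumed so far can end.
bool Matches(const std::string& path, const std::string& pattern) {
  std::vector<size_t> pos = {0};
  for (size_t i = 0; i < pattern.size(); ++i) {
    char p = pattern[i];
    if (p == '$' && i + 1 == pattern.size()) {
      // End anchor: some candidate must have consumed the whole path.
      return pos.back() == path.size();
    }
    std::vector<size_t> next;
    if (p == '*') {
      // '*' can stretch from the smallest candidate to the end of the path.
      for (size_t j = pos.front(); j <= path.size(); ++j) next.push_back(j);
    } else {
      for (size_t j : pos) {
        if (j < path.size() && path[j] == p) next.push_back(j + 1);
      }
    }
    if (next.empty()) return false;
    pos = std::move(next);
  }
  return true;  // prefix match: anything may follow
}

// Longest matching pattern wins; on equal length Allow wins.
// A path that matches no rule is allowed.
bool IsAllowed(const std::string& path, const std::vector<Rule>& rules) {
  int best_len = -1;
  bool allowed = true;
  for (const Rule& r : rules) {
    if (r.pattern.empty()) continue;  // a bare "Disallow:" blocks nothing
    if (!Matches(path, r.pattern)) continue;
    int len = static_cast<int>(r.pattern.size());
    if (len > best_len || (len == best_len && r.allow)) {
      best_len = len;
      allowed = r.allow;
    }
  }
  return allowed;
}
```

With `Disallow: /private/` and `Allow: /private/public/`, a request for `/private/public/page.html` is allowed because the longer `Allow` pattern wins, while `/private/secret.html` stays blocked. Tracking a set of candidate positions in `Matches` keeps wildcard matching to roughly pattern length times path length, without the backtracking a naive recursive matcher needs.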