How A Plague Tale: Innocence Renders a Frame


Foreword

As in my other breakdowns, let's start with a short introduction. Today we look at the latest game from the French developer Asobo Studio. I first saw footage of this game last year, when a colleague shared a 16-minute gameplay trailer with me. The “rats versus light” mechanic caught my attention, but I didn’t really want to play the game. However, after its release, many people started saying that it looked like an Unreal Engine game, which it is not. I was curious to see how its rendering works and how much the developers had been inspired by Unreal. I was also interested in how the swarm of rats is rendered, because it looked very convincing in the game and, moreover, it is one of the key elements of the gameplay.

When I started trying to capture the game, I thought I would have to give up, because nothing worked. Although the game uses DX11, which almost all analysis tools now support, I could not get any of them to work. When I tried RenderDoc, the game crashed at startup, and the same thing happened with PIX. I still don’t know why, but fortunately I managed to take several captures with Nsight Graphics. As usual, I set all graphics settings to maximum and started looking for frames worth analyzing.

Frame Breakdown

After making a couple of captures, I decided to analyze one from the very beginning of the game. There is not much difference between the captures, and this way I can avoid spoilers.

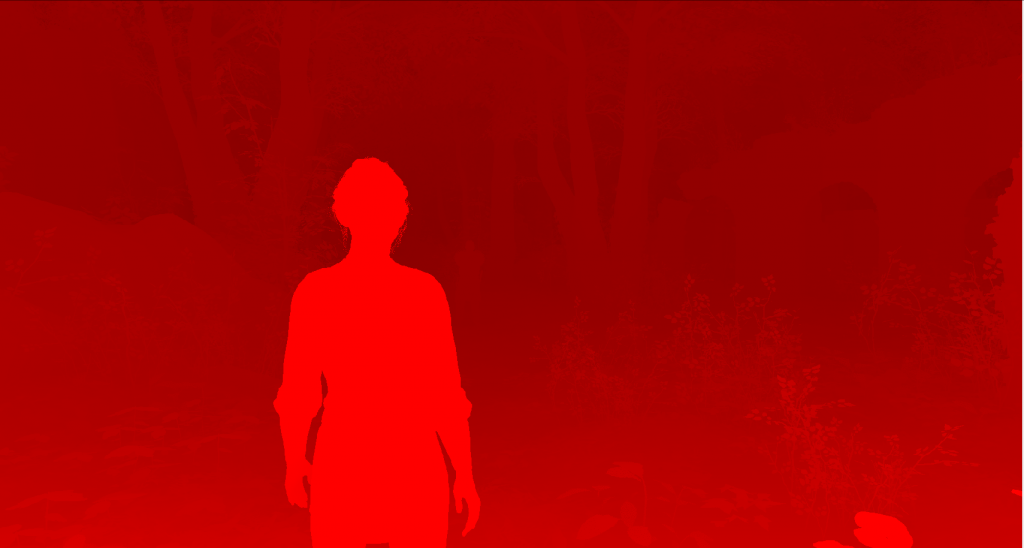

As usual, let's start with the final frame:

The first thing I noticed was that the balance of rendering events in this game is completely different from what I had seen in other games. There are many draw calls, which is normal, but surprisingly few events are spent on post-processing. In other games, once the scene color is rendered, the frame goes through many more stages before reaching its final form, but in A Plague Tale: Innocence the post-processing stack is very small and optimized down to just a few draw/compute events.

The game begins the frame by rendering a GBuffer with six render targets. Interestingly, all of these render targets except one use a 32-bit unsigned integer format instead of RGBA8 or other formats typical for this kind of data. This made the analysis difficult, because I had to decode each channel manually with Nsight’s custom shader feature. I spent a lot of time figuring out which values are encoded in the 32-bit targets, but I may still have missed something.
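Reading such a target back is mostly bit manipulation. Below is a minimal Python sketch of what a custom visualization shader has to do for a uint32 target that actually holds four 8-bit channels. The byte order (red in the lowest byte) is an assumption, not something confirmed by the capture.

```python
from typing import Tuple


def unpack_rgba8(packed: int) -> Tuple[float, float, float, float]:
    """Split a 32-bit unsigned integer into four 8-bit channels in [0, 1].

    Assumption: red is stored in the lowest byte and alpha in the highest.
    """
    if not 0 <= packed <= 0xFFFFFFFF:
        raise ValueError("expected a 32-bit unsigned value")
    r = packed & 0xFF
    g = (packed >> 8) & 0xFF
    b = (packed >> 16) & 0xFF
    a = (packed >> 24) & 0xFF
    # Normalize each byte the same way an UNORM8 channel would be read.
    return (r / 255.0, g / 255.0, b / 255.0, a / 255.0)
```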

GBuffer 0

The first target stores some shading values in 24 bits and some other hair-related values in the remaining 8 bits.
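Splitting such a value takes only a mask and a shift. A minimal sketch, assuming (hypothetically) that the shading data occupies the low 24 bits as a single normalized value and the hair data the high 8 bits; the real layout may differ, and the 24 bits could just as well hold several smaller fields.

```python
from typing import Tuple


def split_24_8(packed: int) -> Tuple[float, float]:
    """Split a uint32 into a 24-bit field and an 8-bit field, both normalized.

    Hypothetical layout: low 24 bits = shading value, high 8 bits = hair value.
    """
    low24 = packed & 0xFFFFFF
    high8 = (packed >> 24) & 0xFF
    return low24 / float(0xFFFFFF), high8 / 255.0
```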

GBuffer 1

The second target looks like a traditional RGBA8 target with a different material parameter in each channel. As far as I can tell, the red channel is metalness (though it’s not clear why some leaves are marked with it), the green channel looks like roughness, and the blue channel is a mask for the main character. The alpha channel was not used in any of my captures.

GBuffer 2

The third target also looks like RGBA8, with albedo in the RGB channels. The alpha channel was completely white in every capture I made, so I don’t know what it is used for.

GBuffer 3

The fourth target is curious, because it is almost completely black in all my captures. Its values look like a mask for part of the vegetation and all hair/fur. Perhaps this has something to do with translucency.

GBuffer 4

The fifth target probably holds encoded normals: I haven’t seen normals stored anywhere else, and the shader appears to sample normal maps and write the result to this target. Even so, I have not figured out how to visualize them properly.
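The encoding here remains unknown. Purely to illustrate how a unit normal can fit into a single 32-bit integer, here is octahedral encoding with 16 bits per coordinate, a technique commonly used for GBuffer normals. Nothing in the capture confirms that the game uses it; it is only one plausible candidate to try in a custom visualization shader.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sign_not_zero(v: float) -> float:
    return 1.0 if v >= 0.0 else -1.0


def oct_encode(n: Vec3) -> int:
    """Pack a unit normal into a uint32 as two 16-bit octahedral coordinates."""
    x, y, z = n
    l1 = abs(x) + abs(y) + abs(z)
    u, v = x / l1, y / l1
    if z < 0.0:
        # Fold the lower hemisphere over the diagonals of the square.
        u, v = (1.0 - abs(v)) * _sign_not_zero(u), (1.0 - abs(u)) * _sign_not_zero(v)
    qu = int(round((u * 0.5 + 0.5) * 65535))
    qv = int(round((v * 0.5 + 0.5) * 65535))
    return qu | (qv << 16)


def oct_decode(packed: int) -> Vec3:
    """Inverse of oct_encode: unfold the square back onto the unit sphere."""
    u = (packed & 0xFFFF) / 65535.0 * 2.0 - 1.0
    v = ((packed >> 16) & 0xFFFF) / 65535.0 * 2.0 - 1.0
    z = 1.0 - abs(u) - abs(v)
    x, y = u, v
    if z < 0.0:
        x, y = (1.0 - abs(v)) * _sign_not_zero(u), (1.0 - abs(u)) * _sign_not_zero(v)
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)
```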

Depth from GBuffer 5

Mask from GBuffer 5

The last target is the exception: it uses a 32-bit floating-point format because it contains the linear depth of the scene, while the sign bit encodes another mask, again covering the hair and part of the vegetation.
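Packing a flag into the sign bit works because linear depth is never negative. A minimal sketch of the encode/decode pair, assuming depth >= 0 (the function names are hypothetical). Decoding through the raw bits rather than checking `value < 0` also handles a masked depth of exactly zero, which is stored as -0.0.

```python
import struct
from typing import Tuple


def encode_depth_and_mask(depth: float, mask: bool) -> float:
    """Store a flag in the sign bit of a non-negative linear depth value."""
    return -depth if mask else depth


def decode_depth_and_mask(value: float) -> Tuple[float, bool]:
    """Read the sign bit as the mask and clear it to recover the depth (as float32)."""
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    mask = bool(bits >> 31)
    # Clearing bit 31 yields the absolute value, including for -0.0.
    depth = struct.unpack("<f", struct.pack("<I", bits & 0x7FFFFFFF))[0]
    return depth, mask
```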

Once the GBuffer is complete, the depth buffer is downsampled in a compute shader, and then the shadow maps are rendered: cascaded shadow maps for the directional sunlight and cube depth maps for point lights.
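The capture doesn't show which reduction the compute shader applies. A minimal sketch of a 2x2 depth downsample, assuming a max reduction (farthest depth) by default; min and averaging are also common, depending on what the half-resolution depth is used for.

```python
from typing import Callable, List

Grid = List[List[float]]


def downsample_depth(depth: Grid, reduce: Callable[..., float] = max) -> Grid:
    """Halve the resolution by reducing each 2x2 block to one value.

    An odd last row or column is dropped for simplicity.
    """
    h, w = len(depth), len(depth[0])
    out: Grid = []
    for y in range(0, h - 1, 2):
        row = []
        for x in range(0, w - 1, 2):
            row.append(reduce(depth[y][x], depth[y][x + 1],
                              depth[y + 1][x], depth[y + 1][x + 1]))
        out.append(row)
    return out
```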

Crepuscular rays

With the shadow maps done, lighting can be calculated, but first the crepuscular rays (god rays) are rendered into a separate target.
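The capture only shows that the rays go into their own target. One well-known way to produce them is a screen-space radial blur: every pixel marches toward the sun's screen position and accumulates an occlusion mask with a decaying weight. A minimal CPU sketch of that technique, not necessarily the game's method; the parameter names and defaults are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def radial_blur(mask: Grid, light_x: float, light_y: float,
                samples: int = 16, decay: float = 0.95) -> Grid:
    """Screen-space light shafts: march from each pixel toward the light.

    `mask` holds how much unoccluded sky/sun each pixel sees (0 = occluded).
    Samples further along the march toward the light get a smaller weight.
    """
    h, w = len(mask), len(mask[0])
    weight = 1.0 / samples
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = (light_x - x) / samples
            dy = (light_y - y) / samples
            sx, sy = float(x), float(y)
            falloff = 1.0
            total = 0.0
            for _ in range(samples):
                sx += dx
                sy += dy
                # Clamp to the image so samples never read out of bounds.
                ix = min(max(int(round(sx)), 0), w - 1)
                iy = min(max(int(round(sy)), 0), h - 1)
                total += mask[iy][ix] * falloff * weight
                falloff *= decay
            out[y][x] = total
    return out
```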

SSAO

As part of the lighting stage, a compute shader calculates SSAO.
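The SSAO algorithm the game uses is not visible from the capture. As a deliberately crude illustration of the screen-space idea, the sketch below works only on linear depth: a pixel counts as occluded by each neighbor that is closer to the camera by more than a small bias, with a range check to avoid halos from distant foreground geometry. Real implementations also use normals and randomized sample kernels; the parameters here are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def depth_ssao(depth: Grid, radius: int = 2, bias: float = 0.05,
               max_range: float = 1.0) -> Grid:
    """Return an ambient-occlusion factor per pixel (1 = open, 0 = fully occluded)."""
    h, w = len(depth), len(depth[0])
    ao = [[1.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            center = depth[y][x]
            occluded = 0
            count = 0
            for oy in range(-radius, radius + 1):
                for ox in range(-radius, radius + 1):
                    sx, sy = x + ox, y + oy
                    if (ox == 0 and oy == 0) or not (0 <= sx < w and 0 <= sy < h):
                        continue
                    count += 1
                    # Positive difference: the neighbor is closer to the camera.
                    diff = center - depth[sy][sx]
                    # Range check: neighbors far in front do not occlude.
                    if bias < diff < max_range:
                        occluded += 1
            if count:
                ao[y][x] = 1.0 - occluded / count
    return ao
```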

Lit opaque geometry

Lighting is added from cubemaps and local light sources. All of these light sources, combined with the targets rendered above, produce the lit HDR image.
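As a rough illustration of how such an accumulation pass can combine the GBuffer data, here is a per-pixel sketch with Lambert diffuse from point lights plus an ambient term from a cubemap probe, darkened by SSAO. The attenuation curve, the `PointLight` type and the omission of specular are assumptions made for brevity, not the game's shading model.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PointLight:
    position: Vec3
    color: Vec3
    radius: float  # hypothetical: the light has no effect beyond this distance


def shade_pixel(albedo: Vec3, normal: Vec3, position: Vec3,
                probe_irradiance: Vec3, ao: float,
                lights: Sequence[PointLight], shadows: Sequence[float]) -> Vec3:
    """Accumulate diffuse lighting for one GBuffer pixel into an HDR value."""
    # Indirect light from a cubemap probe, darkened by SSAO.
    r, g, b = (a * p * ao for a, p in zip(albedo, probe_irradiance))
    for light, shadow in zip(lights, shadows):
        to_light = [lp - pp for lp, pp in zip(light.position, position)]
        dist = math.sqrt(sum(c * c for c in to_light))
        if dist == 0.0 or dist >= light.radius:
            continue
        n_dot_l = max(0.0, sum(n * c / dist for n, c in zip(normal, to_light)))
        falloff = (1.0 - dist / light.radius) ** 2  # hypothetical attenuation curve
        scale = n_dot_l * falloff * shadow  # shadow: 0 = fully shadowed, 1 = lit
        r += albedo[0] * light.color[0] * scale
        g += albedo[1] * light.color[1] * scale
        b += albedo[2] * light.color[2] * scale
    return (r, g, b)
```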

Forward-rendered elements

Forward-rendered elements are drawn on top of the lit opaque geometry, but in this scene they are not particularly noticeable.

Once all the color has been accumulated, we are almost done: only a few post-processing operations and the UI remain.

The color buffer is downsampled in a compute shader and then upsampled again to create a very nice, soft bloom effect.
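A minimal sketch of a downsample/upsample bloom chain on a single-channel image: a bright-pass keeps the energy above a threshold, a pyramid is built with 2x2 box filtering, and the levels are added back together on the way up. The box and nearest-neighbor filters are simplifications (production bloom typically uses wider, smoother kernels), and the threshold and level count are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def downsample(img: Grid) -> Grid:
    """Halve the resolution with a 2x2 box filter."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [[(img[2 * y][2 * x] + img[2 * y][2 * x + 1]
              + img[2 * y + 1][2 * x] + img[2 * y + 1][2 * x + 1]) / 4.0
             for x in range(w)] for y in range(h)]


def upsample(img: Grid, h: int, w: int) -> Grid:
    """Nearest-neighbor upscale to (h, w)."""
    sh, sw = len(img), len(img[0])
    return [[img[min(y * sh // h, sh - 1)][min(x * sw // w, sw - 1)]
             for x in range(w)] for y in range(h)]


def bloom(img: Grid, levels: int = 3, threshold: float = 1.0) -> Grid:
    """Bright-pass, build a downsampled pyramid, then add the levels back up."""
    # Keep only the energy above the threshold.
    bright = [[max(0.0, v - threshold) for v in row] for row in img]
    pyramid = [bright]
    for _ in range(levels):
        top = pyramid[-1]
        if len(top) < 2 or len(top[0]) < 2:
            break
        pyramid.append(downsample(top))
    # Walk back up: upsample the coarser result and add it to the finer level.
    result = pyramid[-1]
    for finer in reversed(pyramid[:-1]):
        up = upsample(result, len(finer), len(finer[0]))
        result = [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(finer, up)]
    return result
```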

After compositing all the previous results and applying lens dirt, color grading, and finally tone mapping, we get the final scene colors. Overlaying the UI gives us the image from the beginning of the article.
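The order of these operations can be sketched per pixel as follows. The bloom and dirt strengths, the per-channel gain standing in for color grading, and the Reinhard operator are all placeholders; the game's actual grading and tone mapping curve are unknown.

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def composite_and_tonemap(hdr: RGB, bloom: RGB, dirt: RGB,
                          bloom_strength: float = 0.05,
                          dirt_strength: float = 0.5,
                          grade: RGB = (1.0, 1.0, 1.0)) -> RGB:
    """Combine scene color, bloom and lens dirt, grade, then tone map one pixel."""
    out = []
    for c, b, d, g in zip(hdr, bloom, dirt, grade):
        # Bloom on top of the scene; lens dirt shows up only where there is bloom.
        x = c + b * bloom_strength + b * d * dirt_strength
        # Per-channel gain as a stand-in for color grading (often a LUT in practice).
        x *= g
        # Reinhard operator as a placeholder: maps [0, inf) into [0, 1).
        out.append(x / (1.0 + x))
    return (out[0], out[1], out[2])
```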

A few other interesting things about the rendering are worth mentioning:

- Instancing is used only for certain meshes (seemingly only vegetation). All other objects are rendered with separate draw calls.

- Objects appear to be sorted roughly front to back, with a few exceptions.

- The developers do not seem to have made any effort to group draw calls by material parameters.
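The two orderings mentioned in the list differ only in their sort key. A minimal sketch with a hypothetical `DrawCall` record: front-to-back sorting helps early depth rejection, while grouping by material first reduces state changes between draws.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DrawCall:
    name: str
    view_depth: float  # distance from the camera along the view axis
    material_id: int


def sort_front_to_back(draws: List[DrawCall]) -> List[DrawCall]:
    """Nearest first, so the depth test can reject hidden pixels early."""
    return sorted(draws, key=lambda d: d.view_depth)


def sort_by_material(draws: List[DrawCall]) -> List[DrawCall]:
    """Group by material first (fewer state changes), then front to back."""
    return sorted(draws, key=lambda d: (d.material_id, d.view_depth))
```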

Rats

As I said at the beginning of the article, one of the reasons I wanted to explore this game was the way it renders the swarm of rats. The solution disappointed me somewhat: it seems to be done by brute force. Here I use screenshots from another scene in the game, but I hope they contain no spoilers.

As with other objects, rats do not seem to use instancing, except once they reach the distance at which they switch to the last level of detail (LOD). Let's see how it works.

LOD0

LOD1

LOD2

LOD3

Rats have four LOD levels. Interestingly, at the third level the tail is curled against the body, while at the fourth it is not. This probably means that animations are only active for the first two levels. Unfortunately, Nsight Graphics does not seem to provide the tools to verify this.

Rats without instancing.

Rats with instancing.

In the scene shown above, the following numbers of rats were rendered:

- LOD0 - 200

- LOD1 - 200

- LOD2 - 1258

- LOD3 - 3500 (instanced)

This suggests there is a strict limit on the number of rats that can be rendered at the first two LODs.
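One simple scheme consistent with these numbers is a fixed per-LOD budget filled in whatever order the rats are stored, with the overflow drawn instanced at the last LOD. The sketch below is hypothetical: the caps are the counts from this single frame rather than known engine constants, and the instanced LOD is assumed to take a single draw call. Filling budgets in storage order would also mean that LOD choice need not correlate with distance.

```python
from typing import List, Sequence

# Hypothetical caps for LOD0..LOD2, copied from the counts in one captured frame.
OBSERVED_CAPS = (200, 200, 1258)


def assign_lods(rat_count: int, caps: Sequence[int] = OBSERVED_CAPS) -> List[int]:
    """Assign LODs by filling each budget in storage order.

    Rats left over once every cap is full get the last LOD, index len(caps).
    """
    remaining = list(caps)
    lod = 0
    lods = []
    for _ in range(rat_count):
        # Skip LODs whose budget is already used up.
        while lod < len(caps) and remaining[lod] == 0:
            lod += 1
        if lod < len(caps):
            remaining[lod] -= 1
        lods.append(lod)
    return lods


def count_draw_calls(lods: Sequence[int], instanced_lod: int) -> int:
    """One draw per non-instanced rat, plus one call for all instanced rats."""
    individual = sum(1 for lod in lods if lod != instanced_lod)
    instanced = 1 if instanced_lod in lods else 0
    return individual + instanced
```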

In my capture, I was unable to identify any logic that assigns rats to particular LODs. Sometimes rats close to the camera have low detail, while some barely visible rats have high detail.

Conclusion

A Plague Tale: Innocence is very interesting from a rendering perspective. The results certainly impressed me, and they serve the gameplay very well. As with any proprietary engine, it would be great to hear a more detailed breakdown from the developers themselves, especially since I was unable to confirm some of my theories. I hope this article someday reaches someone at Asobo Studio and shows them that people are interested in this.