Mathematicians have discovered the perfect way to multiply numbers

- Transfer

By breaking large numbers into small numbers, the researchers exceeded the fundamental mathematical speed limit

Four thousand years ago, the inhabitants of Babylonia invented multiplication. And in March of this year, mathematicians improved it.

On March 18, 2019, two researchers described the fastest known method of multiplying two very large numbers. The work marks the culmination of a long-standing search for the most efficient procedure for performing one of the basic operations of mathematics.

“Everyone thinks that the method of multiplication that they taught at school is the best, but in fact, active research is underway in this area,” says Joris van der Hoeven , a mathematician at the French National Center for Scientific Research, one of the co-authors of the work.

The complexity of many computational problems, from counting new digits of the number π to finding large primes, reduces to the rate of multiplication. Van der Hoeven describes their result as an assignment of a kind of mathematical speed limit for solving many other problems.

“In physics, there are important constants such as the speed of light that allow you to describe all kinds of phenomena,” said van der Hoeven. “If you want to know how quickly computers can solve certain mathematical problems, then the multiplication of integers arises in the form of some basic building block, with respect to which you can express such a speed.”

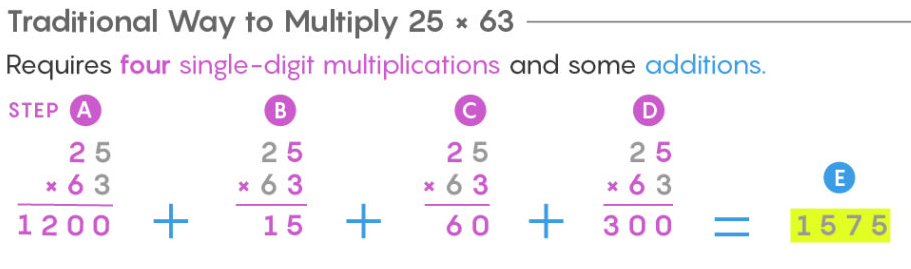

Almost everyone learns to multiply numbers the same way. We write the numbers in a column, multiply the top number by each digit of the bottom (taking into account the digits) and add the result. When you multiply two two-digit numbers, you have to do four smaller multiplications to get the final result.

The school method of " transfer " requires n 2 steps, where n is the number of digits in each of the multiplied numbers. Calculations with three-digit numbers require nine multiplications, and with single-digit ones - 10 000.

The transfer method works fine with numbers consisting of several digits, but it starts to slip when multiplying numbers consisting of millions or billions of digits (which computers do when accurately calculating π or in a worldwide search for largeprime numbers). To multiply two numbers with a billion digits, you will need to produce a billion squared, or 10 18 , multiplications - this will take about 30 years for a modern computer.

For several millennia, it was believed that numbers could not be multiplied faster. Then in 1960, 23-year-old Soviet and Russian mathematician Anatoly Alekseevich Karatsuba attended a seminar conducted by Andrei Nikolayevich Kolmogorov , a Soviet mathematician, one of the greatest mathematicians of the 20th century. Kolmogorov stated that there is no generalized method of multiplication requiring less than n 2 operations. Karatsuba decided that there was such a method - and after a week of searching he discovered it.

Anatoly Alekseevich Karatsuba

Multiplication of Karatsubaconsists in breaking up the digits of a number and repeating their combination in a new way, which allows instead of a large number of multiplications to carry out fewer additions and subtractions. The method saves time, since addition takes only 2n steps instead of n 2 .

The traditional method of multiplication 25x63 requires four multiplications by a single digit and several additions.

Multiplication of Karatsuba 25x63 requires three multiplications by a single digit and several additions and subtractions.

a) divide the numbers

b) multiply the tens

c) multiply the units

d) add the numbers

e) multiply these sums

f) consider e - b - c

g) collect the total sum from b, c and f

As the number of characters in numbers increases, the Karatsuba method can be used recursively.

The traditional method of multiplying 2531x1467 requires 16 multiplications by a single digit.

Multiplication of Karatsuba 2531x1467 requires 9 multiplications.

“Addition at school takes place a year earlier, because it is much simpler, it runs in linear time, with the speed of reading numbers from left to right,” said Martin Fuhrer , a mathematician from Pennsylvania State University, who created the fastest multiplication algorithm in 2007.

When dealing with large numbers, the multiplication of Karatsuba can be repeated recursively, breaking the original numbers into almost as many parts as there are signs in them. And with each partition, you change the multiplication, which requires many steps, to addition and subtraction, which require much less steps.

“Several multiplications can be turned into additions, given that computers will be able to do this faster,” said David Harvey , a mathematician at the University of New South Wales and co-author of the new work.

Karatsuba's method made it possible to multiply numbers using only n 1.58multiplications by a single number. Then, in 1971, Arnold Schönhage and Volker Strassen published a method for multiplying large numbers by n × log n × log (log n) small multiplications. To multiply two numbers from a billion characters, each Karatsuba method will require 165 trillion steps.

Joris van der Hoeven, mathematician at the French National Center for Scientific Research, the

Schönhage-Strassen method is used by computers to multiply large numbers, and has led to two other important consequences. First, he introduced a technique from the field of signal processing called Fast Fourier Transform . Since then, this technique has been the basis of all fast multiplication algorithms.

Secondly, in the same work, Schönhage and Strassen suggested the possibility of an even faster algorithm — a method requiring only n × log n multiplications by one sign — and that such an algorithm would be the fastest possible. This assumption was based on the feeling that for such a fundamental operation as multiplication, the restriction of operations should be written somehow more elegantly than n × log n × log (log n).

“Most generally agreed that multiplication is such an important basic operation that, from a purely aesthetic point of view, it needs a beautiful restriction in complexity,” said the Führer. “From experience, we know that the mathematics of basic things always end up being elegant.”

The awkward restriction of Schönhage and Strassen, n × log n × log (log n), lasted 36 years. In 2007The Führer broke this record, and everything spun. Over the past decade, mathematicians have found ever-faster multiplication algorithms, each of which gradually crawled to the n × log n mark, not quite reaching it. Then in March of this year, Harvey and van der Hoeven reached it.

Their method is an improvement on the great work done before them. He breaks numbers into signs, uses an improved version of the fast Fourier transform, and takes advantage of other breakthroughs made over the past 40 years. “We use the fast Fourier transform much more roughly, use it several times, not just one, and replace even more multiplications with addition and subtraction,” van der Hoeven said.

Harvey and van der Hooven's algorithm proves that multiplication can be done in n × log n steps. However, he does not prove the absence of a faster method. It will be much more difficult to establish that their approach is as fast as possible. At the end of February, a team of computer scientists from Aarhus University published a paper claiming that if one of the unproven theorems turns out to be true, then this method will indeed be the fastest way to multiply.

And although in theory this new algorithm is very important, in practice it will not change much, since it only slightly beats the already used algorithms. “All we can hope for is threefold acceleration,” said van der Hoeven. “Nothing beyond.”

In addition, computer equipment circuits have changed. Twenty years ago, computers performed addition much faster than multiplication. The gap in the rates of multiplication and addition has since seriously decreased, as a result of which, on some chips, multiplication can even overtake addition. Using certain types of equipment, “you can speed up the addition by forcing the computer to multiply numbers, and this is some kind of madness,” said Harvey.

Equipment changes over time, but the best algorithms of its class last forever. No matter how computers look in the future, the Harvey and van der Hooven algorithm will still be the most efficient way to multiply numbers.