WebRTC and video surveillance: how we beat camera video latency

From the first days of working on our cloud-based video surveillance system, we faced a problem that could have put an end to Ivideon if left unsolved. It was our Everest: the climb took a lot of effort, but we have finally planted an ice axe at the summit of this cross-platform puzzle.

A system for transmitting sound and video over the Internet should not depend on equipment, web clients or the standards they support, and it must also work correctly behind Network Address Translators (NAT) and firewalls. A cloud video surveillance user wants access to the service even with analog cameras, and wants to watch live video on the most modern device.

It is very important that the user can watch video with minimal delay. Practically the only way to show low-latency video in a browser is WebRTC (Web Real-Time Communication). WebRTC is a set of technologies for peer-to-peer video and audio transmission in browsers, designed from the start to deliver and play a stream with low latency. Among other things, it uses UDP for this.

Before describing what the new engine gives users, let us recall why we support HLS and why we decided to move on.

HLS engine: pros and cons

HLS (HTTP Live Streaming) was developed by Apple, so it is not surprising that support for it first appeared on Apple devices. Today almost all TV set-top boxes and many Android devices can also play HLS streams.

The HLS engine streams video with the well-known H.264 codec, combined with AAC or MP3 audio. The audio and video are packaged in an MPEG-TS transport container. For transmission over HTTP, the stream is split into segments that are described in m3u8 playlists, and only then are the segments, along with the playlists, sent over HTTP. Splitting the stream into segments automatically means a delay measured in seconds; this is inherent to delivering the MPEG-TS container in segments.
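To make the segmentation step concrete, here is a minimal Python sketch that builds a live media playlist for a sliding window of MPEG-TS segments. The segment file names, the example durations and the `build_live_playlist` helper are assumptions made for illustration; this is not Ivideon's actual playlist format.

```python
import math


def build_live_playlist(first_sequence, durations, name_pattern="segment{}.ts"):
    """Return the text of a live HLS media playlist.

    first_sequence: media sequence number of the first listed segment.
    durations: duration in seconds of each listed segment, oldest first.
    name_pattern: hypothetical file naming scheme for the segments.
    """
    # The target duration is an integer that no segment may exceed.
    target = max(math.ceil(d) for d in durations)
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_sequence}",
    ]
    for offset, duration in enumerate(durations):
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(name_pattern.format(first_sequence + offset))
    # No EXT-X-ENDLIST tag: its absence tells the player the stream is live.
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_live_playlist(120, [10.0, 10.0, 9.6]), end="")
```

A player re-downloads such a playlist periodically and fetches each newly listed segment, which is why a segment has to be complete before anyone can watch it.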

The HLS engine also supports multi-bitrate streams, in both Live and VOD modes.

The main advantages of HLS:

- support in all major browsers, either natively or through Media Source Extensions;

- ease of implementation (when compared with WebRTC);

- broadcasts to a large audience are convenient and efficient to organize, because segments can be uploaded once to a CDN.

Despite the simplicity of the engine, not everything is as smooth as it seems. The main problem is that developers of third-party players have departed from Apple's recommendations, for example regarding supported formats. In particular, many of them added support for other popular stream types: MPEG-2 video, MPEG-2 audio and so on. As a result, we had to create different playlist formats for different players.

But one of the biggest problems with the HLS engine is the high latency in data transfer.

Where the lag comes from

The main reason for the high delay in HLS is that the engine was designed to deliver the highest possible picture quality. As a result, its keyframe interval and playback buffer size are simply not suited to live video broadcasts. This produces a rather high delay in video delivery, which can reach 5-7 seconds.
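The relationship between segment duration, buffering and delay can be estimated with simple arithmetic. The sketch below is a rough model, assuming the player starts playback a whole number of segments behind the live edge; the function name, parameters and example values are illustrative and not measurements from our service.

```python
def estimate_hls_latency(segment_seconds, segments_behind_live, overhead_seconds=0.0):
    """Rough estimate of HLS delay in seconds.

    segment_seconds: duration of one media segment.
    segments_behind_live: how many segments behind the live edge playback starts.
    overhead_seconds: assumed encoding and network time (hypothetical value).
    """
    # A segment is published only after it has been recorded completely,
    # so the player is always at least one segment behind.
    effective_segments = max(1, segments_behind_live)
    return segment_seconds * effective_segments + overhead_seconds


if __name__ == "__main__":
    for seg, behind in [(2, 1), (2, 3), (10, 3)]:
        delay = estimate_hls_latency(seg, behind)
        print(f"{seg}s segments, {behind} behind live: about {delay}s")
```

With 2-second segments and a three-segment buffer, the model gives about 6 seconds, which is in the same range as the 5-7 seconds mentioned above.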

On the one hand, this is not much, for example, for someone watching a movie from a video hosting server. But for video surveillance systems, a delay in delivering footage can matter a great deal.

If you are watching an office where employees look away from their monitors once an hour, a delay of 5 seconds does not matter. But people began to complain: during a football broadcast, for example, "GOOOOOAL" is already in the chat while the goal has not yet appeared on the video :). We already have a number of customer cases in which Ivideon is expected to almost replace Skype.

Is it possible to defeat the delay in HLS? The answer sounds like an experienced rat exterminator lecturing novice pest controllers: “Rats cannot be exterminated, but their numbers can be reduced to a reasonable minimum.” The same goes for HLS: the delay cannot be brought down to zero, but there are solutions on the market that reduce it significantly.

Small slices

Another disadvantage of the engine is that it transfers data as small files. What could be wrong with that?

Anyone who has tried to copy a large number of small files from one medium to another has probably noticed that writing such a set is much slower than writing one large file of the same total size. Hard drive access also becomes much more intensive, which hurts the performance of the whole computer. Transmitting video as small 10-second fragments therefore also adds to the engine's delay.

Let us briefly summarize the pros and cons of HLS.

Advantages of HLS:

- Ability to work with any device. You can watch videos on any modern device, whether it is a smartphone, tablet, laptop or desktop PC. The main thing is that the web browser is up-to-date and compatible with HTML5 and Media Source Extensions.

- Great picture quality. Adaptive streaming dynamically changes the quality of the transmitted video according to the bandwidth of the Internet connection, while the algorithm tries to keep quality as high as possible.

- There is no need for complex configuration of user equipment.
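The adaptive quality selection mentioned in the list above can be sketched as a simple rule: pick the best variant that fits the measured bandwidth. The bitrate ladder, the safety factor and the `pick_variant` name are hypothetical; real players use more elaborate heuristics.

```python
# Hypothetical bitrate ladder: (label, required bandwidth in kbit/s).
VARIANTS = [("240p", 400), ("480p", 1000), ("720p", 2500), ("1080p", 5000)]


def pick_variant(measured_kbps, variants=VARIANTS, safety_factor=0.8):
    """Pick the highest-quality variant that fits the measured bandwidth."""
    # Leave some headroom so that small bandwidth dips do not stall playback.
    budget = measured_kbps * safety_factor
    ordered = sorted(variants, key=lambda v: v[1])
    chosen = ordered[0]  # fall back to the lowest quality if nothing fits
    for variant in ordered:
        if variant[1] <= budget:
            chosen = variant
    return chosen[0]


if __name__ == "__main__":
    for kbps in (300, 1300, 3500, 10000):
        print(kbps, "kbit/s ->", pick_variant(kbps))
```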

Disadvantages:

- Limited support for working with the engine on some devices.

- High delays in image transmission.

- Strong increase in overhead and harder optimization because of the many small files. Due to the nature of the container, the delay can never be less than the duration of one segment.

For us, the disadvantages of HLS outweighed its advantages and made us look for alternatives.

What is WebRTC?

The WebRTC platform was developed by Google in 2011 to stream video and audio between browsers and mobile applications with minimal delay. It does this with the standard UDP protocol and special flow control algorithms. Today it is an open source project that Google actively supports and continues to develop.

WebRTC is a set of technologies for peer-to-peer transmission of video and sound. That is, for example, user browsers using WebRTC can transfer data to each other directly, without using remote servers to store and process data. All information is also processed by browsers and mobile applications of end users.

The convenience and great capabilities of this technology were appreciated by the developers of all popular browsers. Today WebRTC support is implemented in Mozilla Firefox, Opera, Google Chrome (and all Chromium-based browsers), as well as in mobile applications for Android and iOS.

With all its undoubted advantages, WebRTC has several significant disadvantages.

Difficulties of choice

WebRTC is much more complex from a networking standpoint because it is built around P2P. It is hard to debug and test, and it can behave unpredictably. We also have to traverse NAT and firewalls and keep working in networks where UDP is blocked.

Google's WebRTC implementation is very difficult to use; there is even a whole company that offers SDK build services. On top of that, it was very hard to integrate Google's implementation with our system without transcoding all the video.

However, we had long wanted to give users a truly live video feed and to minimize how far the picture on screen lags behind the events themselves. We also wanted to make PTZ cameras, where delay is critical, more comfortable to use.

Given that other approaches to fighting lag still have limited functionality and work noticeably worse, we decided to use WebRTC.

What have we done

Implementing WebRTC properly is not an easy task. Any miscalculation or inaccuracy can mean that video delays do not decrease compared to other platforms but actually increase.

For WebRTC to work correctly, the stack for working with web video first had to be upgraded, which is what we did.

First, we implemented a WebRTC signaling server on top of WebSocket and deployed a WebRTC peer server in the cloud, based on the webrtc.org SDK. Its task is to distribute video streams to client WebRTC peers in H.264 + Opus / G.711 format without transcoding the video.

We chose WebSocket as the signaling transport because it is already well supported in all popular web browsers. This cuts development overhead and, compared to AJAX, avoids spending time and resources on repeated TCP and TLS handshakes.

The point is that WebRTC itself does not define the signaling protocol needed to set up, maintain and tear down real-time video sessions between the source and client applications.

To implement signaling ourselves, we had to develop our own signaling server with support for several web protocols (WebSocket, WebRTC), along with secure real-time management of sessions and notifications, video control and many other parameters.
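To give a feel for what a signaling server has to track, here is a minimal sketch of per-viewer session logic for JSON messages arriving as WebSocket text frames. The message names (`offer`, `answer`, `candidate`, `bye`), the assumption that the client sends the offer, and the `SignalingSession` class are all hypothetical; the real protocol is not described in this article, and the network layer is left out on purpose.

```python
import json


class SignalingSession:
    """Tracks one viewer's negotiation; message names are hypothetical."""

    def __init__(self):
        self.state = "new"
        self.remote_candidates = []

    def handle(self, raw_message):
        """Process one JSON text frame and return the JSON reply, or None."""
        message = json.loads(raw_message)
        kind = message.get("type")
        if kind == "offer" and self.state == "new":
            self.state = "negotiating"
            # A real server would hand the SDP to its WebRTC peer here
            # and reply with the answer that the peer generates.
            return json.dumps({"type": "answer", "sdp": "<answer SDP placeholder>"})
        if kind == "candidate" and self.state == "negotiating":
            # ICE candidates trickle in while the connection is being set up.
            self.remote_candidates.append(message["candidate"])
            return None
        if kind == "bye":
            self.state = "closed"
            return json.dumps({"type": "bye"})
        reason = f"unexpected {kind!r} in state {self.state}"
        return json.dumps({"type": "error", "reason": reason})


if __name__ == "__main__":
    session = SignalingSession()
    print(session.handle(json.dumps({"type": "offer", "sdp": "v=0"})))
    print(session.handle(json.dumps({"type": "bye"})))
```

Keeping the session logic separate from the transport makes it possible to test setup, maintenance and teardown without opening a socket.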

We worked around the limitations of P2P because our latency gain comes not from P2P itself but from UDP and from flow control tuned for low delay. Both are built into WebRTC, since its main use case is P2P conversations in the browser.

In the mobile client, we implemented the player with the webrtc.org SDK, since it is the only one in which flow control is implemented correctly, all known Forward Error Correction (FEC) schemes are available, and packet retransmission works correctly with all browsers. It is also important that the webrtc.org SDK is actively developed by Google.
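As a rough illustration of the general idea behind forward error correction, the sketch below protects a group of packets with a single XOR parity packet, so that one lost packet can be rebuilt without retransmission. This is a deliberately simplified scheme that assumes equal-length packets; it is not one of the FEC schemes implemented in the webrtc.org SDK, and the function names are made up for the example.

```python
def xor_parity(packets):
    """Build one parity packet as the byte-wise XOR of equal-length packets."""
    parity = bytearray(len(packets[0]))
    for packet in packets:
        for i, byte in enumerate(packet):
            parity[i] ^= byte
    return bytes(parity)


def recover_missing(received, parity):
    """Rebuild the single lost packet (marked None) from the rest plus parity."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) != 1:
        raise ValueError("XOR parity can repair exactly one lost packet per group")
    present = [p for p in received if p is not None]
    # XOR of the parity with all surviving packets leaves the lost packet.
    rebuilt = xor_parity(present + [parity])
    repaired = list(received)
    repaired[missing[0]] = rebuilt
    return repaired


if __name__ == "__main__":
    group = [b"AAAA", b"BBBB", b"CCCC"]
    parity = xor_parity(group)
    damaged = [group[0], None, group[2]]  # the second packet was lost
    print(recover_missing(damaged, parity) == group)
```

The cost is one extra packet per group; the benefit is that a single loss is repaired immediately instead of waiting a round trip for a resend.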

What is the result of implementing WebRTC?

To watch live video from cameras, we added a new optimized WebRTC-based player to the personal account. It delivers video with low latency and completely eliminates the accumulation of delay as viewing time increases.

After implementing WebRTC support in the Ivideon cloud service, we can confidently say that our customers can now watch truly live video. The broadcast delay no longer exceeds one second! For comparison, the previous HLS engine delivered video with a delay of 5-7 seconds. The difference is very significant, and users will notice it as soon as they start working with our video service.

As we expected, the new player improved the responsiveness of PTZ control and of voice communication with the camera.

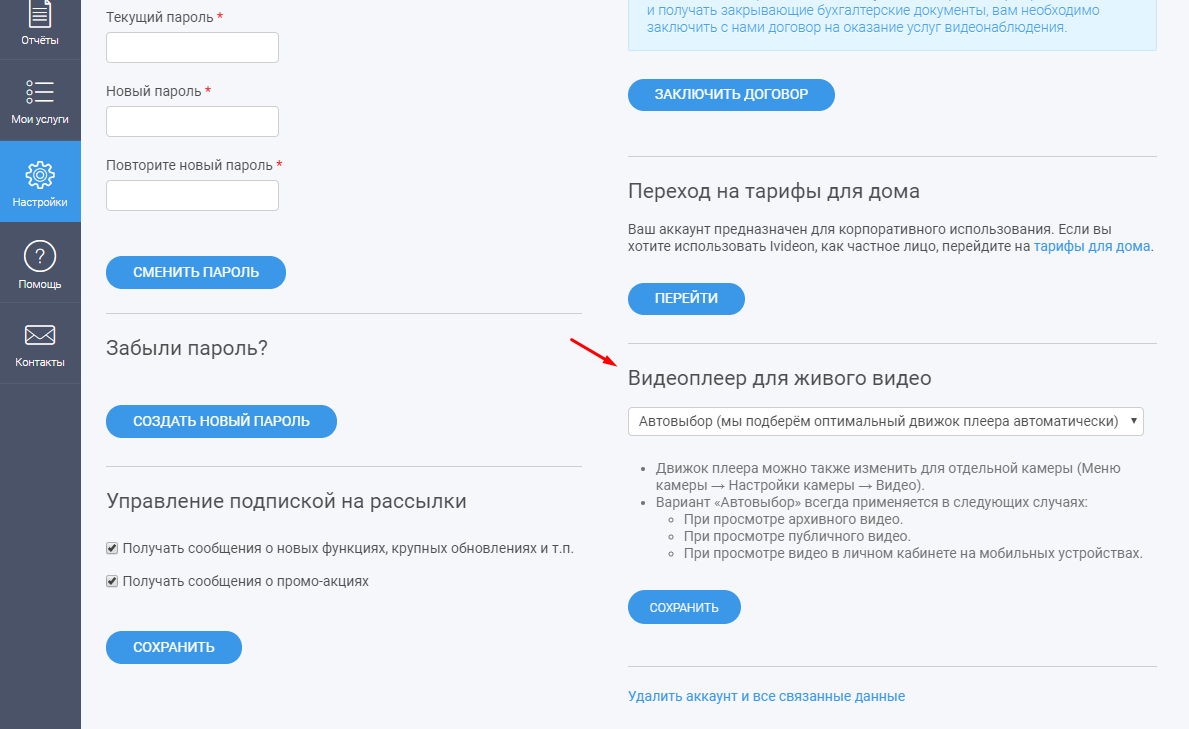

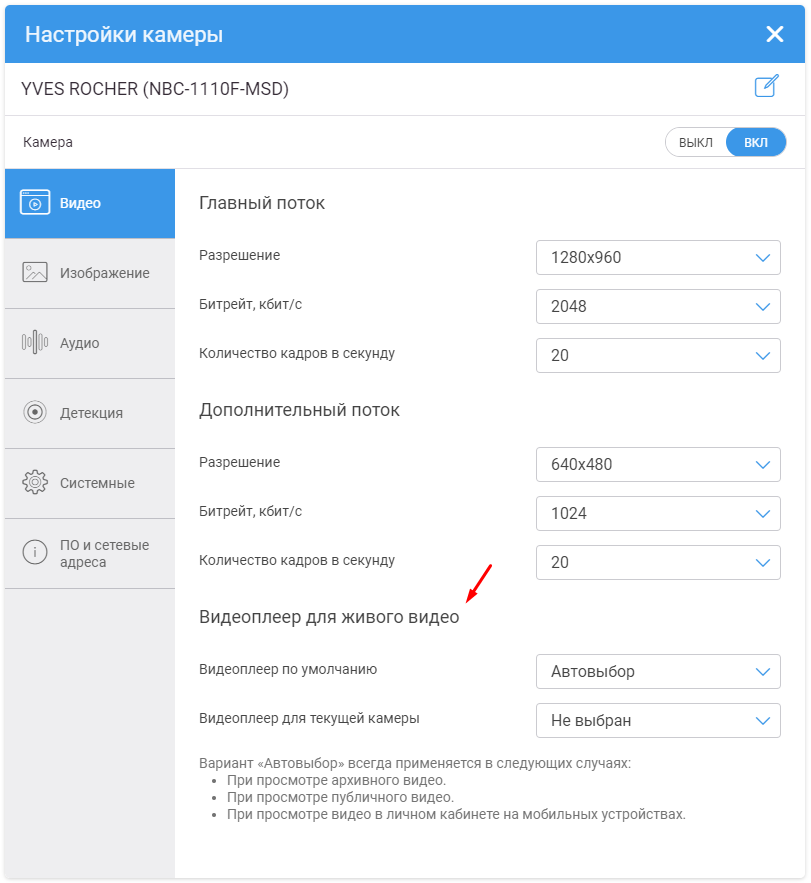

There is one subtle point we want to draw attention to: the new WebRTC player is still in test mode, which is why we do not enable it for all customers by default. You can activate it yourself by enabling the corresponding option in the camera settings (to do this, go to your personal account).

Features of WebRTC implementation in Ivideon service

WebRTC is still an experimental technology. Support for it is not yet implemented correctly in all browsers, user devices or cameras.

This is why we have not yet made the WebRTC player the default for all users.

For now, we recommend using WebRTC only in Google Chrome. Recent versions of Firefox and Safari also support the technology but, unfortunately, are still unstable with it.

We have not yet implemented WebRTC support for browsers on mobile devices. If you log in from a mobile browser and activate WebRTC, this mode will not work. However, WebRTC is available in our mobile applications for Android and iOS.

And concluding the story about the features of WebRTC implementation in our service, we note two more subtle points.

Firstly, the technology is focused on broadcasting live video in real time. Therefore, if your connection does not have enough bandwidth for the video, you will see dropped frames, but the video will still play in real time. With HLS, by contrast, you would see the video stall and the delay grow, while no frames would be dropped.
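The trade-off between dropping frames and accumulating delay can be shown with a toy model. It assumes constant frame rates and a link that delivers fewer frames per second than the camera produces; the function name and the numbers are illustrative and do not describe how either player is actually implemented.

```python
def simulate(frames_produced_per_s, frames_delivered_per_s, seconds):
    """Compare a buffering player with a real-time player on a slow link.

    Returns (buffered_delay_s, dropped_frames):
    - buffered_delay_s: seconds of video a player that never drops frames
      has fallen behind by the end of the run;
    - dropped_frames: frames a real-time player discards to stay live.
    """
    shortfall = max(0, frames_produced_per_s - frames_delivered_per_s)
    backlog = 0  # frames waiting in the buffering player's queue
    dropped = 0  # frames skipped by the real-time player
    for _ in range(seconds):
        backlog += shortfall  # buffering player keeps everything, falls behind
        dropped += shortfall  # real-time player discards what does not fit
    buffered_delay_s = backlog / frames_produced_per_s
    return buffered_delay_s, dropped


if __name__ == "__main__":
    delay, dropped = simulate(25, 20, 60)
    print(f"buffering player lags by {delay}s, real-time player dropped {dropped} frames")
```

In this model, one minute on a link that carries 20 of 25 frames per second leaves the buffering player 12 seconds behind, while the real-time player stays live at the cost of 300 dropped frames.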

Secondly, since the technology is designed to work with live video in real time, we do not use it to work with archived video data.

Other service changes

Flash no longer takes part in automatic engine selection. You can still use the Flash player, but you have to select it manually in the account or camera settings. This is not a whim: according to our service statistics, there are practically no users left who work with Flash, and trying to detect whether the user's browser supports it costs about 2 seconds of precious time.

That was a brief summary of the changes that await you in our cloud-based video surveillance system and personal account. Stay tuned!